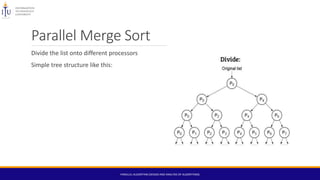

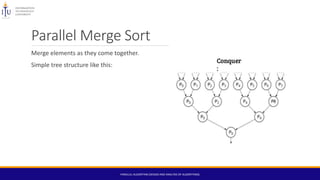

The document discusses parallel algorithms, which are designed to run on multiprocessor computers but can also run on a single processor. It covers their benefits, such as improved throughput and reduced latency, as well as challenges like data dependency, resource requirements, and scalability. Key examples include odd-even transposition sort and parallel merge sort, along with their methods and complexities.

![Computing the sum of a sequence. PARALLEL ALGORITHM (DESIGN AND ANALYSIS OF ALGORITHMS)](https://image.slidesharecdn.com/parallelalgorithms-161227144525/85/Parallel-algorithms-21-320.jpg)

To compute the sum of a sequence, consider a sequence $A$ of $n$ elements and devise an algorithm that performs many operations in parallel.

In each step, every element of $A$ with an even index is paired with the next element of $A$, and all pairs are summed at once: $A[0]$ is paired with $A[1]$, $A[2]$ with $A[3]$, and so on. The result is a new sequence of $\lceil n/2 \rceil$ numbers. When $n$ is odd, the last element has no partner and is carried over unchanged.

Repeating this pairing-and-summing step produces, after $\lceil \log_2 n \rceil$ steps, a sequence consisting of a single value, and this value equals the final sum.

Computed sequentially, the sum takes $O(n)$ time. With this technique, and enough processors to sum all pairs of a step simultaneously (at most $\lceil n/2 \rceil$), each step takes constant time, so the time complexity drops to $O(\log n)$.
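The pairwise summation can be sketched in C as follows. This is a minimal sketch that assumes the input array may be overwritten. The function name `pairwise_sum` and its `rounds` out-parameter are hypothetical.

Instead of copying the $\lceil n/2 \rceil$ sums into a new array at each step, the sketch keeps each partial sum in place at the lower index of its pair. After a step with stride $s$, the current sequence is therefore the elements at indices $0, 2s, 4s, \dots$. The steps run one after another here. Within a step, however, the pairs touch disjoint elements, and that independence is what would let a parallel runtime (for example, an OpenMP `parallel for`) distribute them.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: sums a[0..n-1] by repeated pairwise addition.
 * Overwrites a[]; the total ends up in a[0].
 * If rounds is non-NULL, it receives the number of pairing steps. */
long pairwise_sum(long *a, size_t n, unsigned *rounds)
{
    unsigned r = 0;

    assert(a != NULL || n == 0);
    if (n == 0) {
        if (rounds)
            *rounds = 0;
        return 0;
    }

    /* Each value of stride is one step: pair (i, i + stride) for every
     * i that is a multiple of 2 * stride. Pairs within a step read and
     * write disjoint elements, so they could all run in parallel. */
    for (size_t stride = 1; stride < n; stride *= 2) {
        for (size_t i = 0; i + stride < n; i += 2 * stride)
            a[i] += a[i + stride];   /* an unpaired last element is left as-is */
        r++;
    }

    if (rounds)
        *rounds = r;
    return a[0];
}
```

For `a = {1, 2, 3, 4, 5}`, the function performs three steps ($\lceil \log_2 5 \rceil = 3$) and leaves the total, 15, in `a[0]`.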

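![Data Dependency](https://image.slidesharecdn.com/parallelalgorithms-161227144525/85/Parallel-algorithms-23-320.jpg)

A data dependency results from multiple uses of the same storage location(s) by different tasks. For example, in the loop

    for (int i = 1; i < 100; i++)      /* start at 1 so array[i-1] stays in bounds */
        array[i] = array[i-1] * 20;    /* reads the value written by iteration i-1 */

each iteration reads `array[i-1]`, which the previous iteration has just written. The iterations therefore cannot simply be executed in parallel.

On shared-memory architectures, read and write operations on shared locations must be synchronized between tasks.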
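The following C sketch makes the dependency concrete with two hypothetical helpers. `chain` implements the loop above, and `scale` is a loop with no dependency between iterations. The `reverse` flag runs the iterations in the opposite order. As an assumption, this stands in for one of the orders that a parallel schedule might produce. Only the dependent loop changes its result when the order changes.

```c
#include <assert.h>
#include <stddef.h>

/* Dependent loop: iteration i reads array[i-1], which iteration i-1
 * writes. reverse == 0 runs i = 1 .. n-1; otherwise i = n-1 down to 1. */
void chain(long *array, size_t n, int reverse)
{
    if (n < 2)
        return;
    assert(array != NULL);
    if (!reverse) {
        for (size_t i = 1; i < n; i++)
            array[i] = array[i - 1] * 20;
    } else {
        for (size_t i = n - 1; i >= 1; i--)
            array[i] = array[i - 1] * 20;   /* reads a not-yet-updated value */
    }
}

/* Independent loop: iteration i reads only in[i] and writes only out[i],
 * so every execution order (including a parallel one) gives the same result. */
void scale(const long *in, long *out, size_t n, int reverse)
{
    if (!reverse) {
        for (size_t i = 0; i < n; i++)
            out[i] = in[i] * 20;
    } else {
        for (size_t i = n; i-- > 0;)
            out[i] = in[i] * 20;
    }
}
```

Starting from `{1, 0, 0, 0}`, `chain` in forward order yields `{1, 20, 400, 8000}`. In reverse order it yields `{1, 20, 0, 0}`, because iterations 3 and 2 read `array[i-1]` before it has been updated. `scale` produces the same output in both orders.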