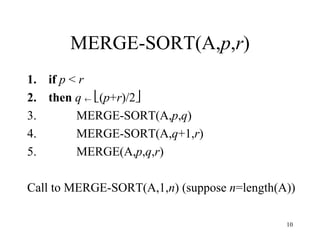

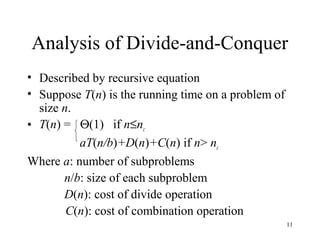

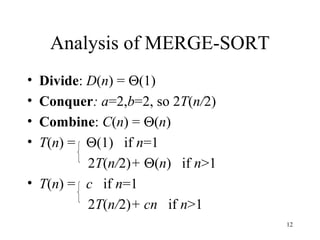

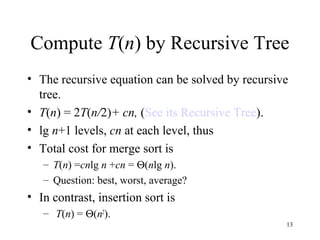

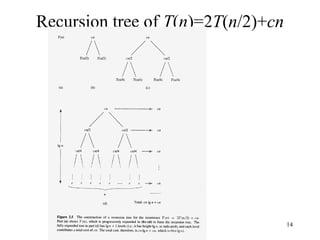

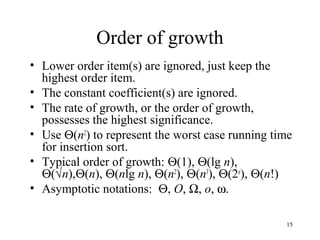

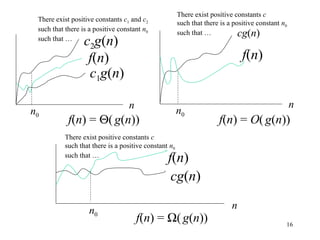

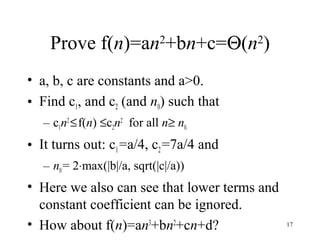

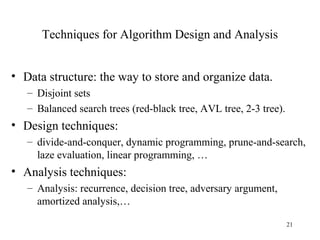

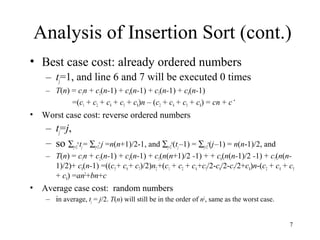

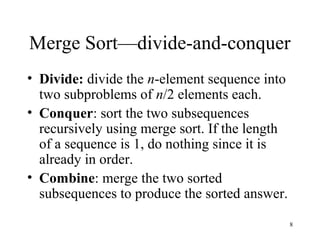

This document discusses algorithm design and analysis. It introduces sorting as an example problem and compares the insertion sort and merge sort algorithms. Insertion sort runs in O(n²) time in the worst case, while merge sort runs in O(n log n) time. It provides pseudocode for insertion sort and merge sort and analyzes their time complexities. It also covers algorithm analysis techniques such as recursion trees and asymptotic notation.

![Slide 4](https://image.slidesharecdn.com/introduction-130920031122-phpapp01/85/Introduction-4-320.jpg)

Insertion Sort Algorithm (cont.)

    INSERTION-SORT(A)
    1. for j = 2 to length[A]
    2.     do key ← A[j]
    3.        // insert A[j] into the sorted sequence A[1..j-1]
    4.        i ← j-1
    5.        while i > 0 and A[i] > key
    6.            do A[i+1] ← A[i]   // move A[i] one position right
    7.               i ← i-1
    8.        A[i+1] ← key
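For readers who want to run the procedure, here is a minimal Python sketch of the INSERTION-SORT pseudocode. It assumes 0-based list indexing, whereas the slide uses 1-based arrays, so the loop bounds shift by one. The function name `insertion_sort` is illustrative and does not come from the slides.

```python
def insertion_sort(a):
    """Sort the list `a` in place and return it (0-based INSERTION-SORT)."""
    for j in range(1, len(a)):        # slide: for j = 2 to length[A]
        key = a[j]
        i = j - 1
        # Shift elements of the sorted prefix a[0..j-1] that exceed key
        # one slot to the right.
        while i >= 0 and a[i] > key:  # slide: while i > 0 and A[i] > key
            a[i + 1] = a[i]
            i -= 1
        a[i + 1] = key                # drop key into the gap that opened up
    return a


print(insertion_sort([5, 2, 4, 6, 1, 3]))  # prints [1, 2, 3, 4, 5, 6]
```

The sort is in place, so no second list is allocated. Returning `a` is only a convenience for chaining and printing.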

![Slide 5](https://image.slidesharecdn.com/introduction-130920031122-phpapp01/85/Introduction-5-320.jpg)

Correctness of Insertion Sort Algorithm

• Loop invariant

  – At the start of each iteration of the for loop, the subarray A[1..j-1] contains the elements originally in A[1..j-1], but in sorted order.

• Proof:

  – Initialization: j = 2, so A[1..j-1] = A[1..1] = A[1], a single element, which is trivially sorted.

  – Maintenance: the while loop shifts every element of A[1..j-1] that is greater than key one position right and places key in the gap, so A[1..j] is sorted before j is incremented.

  – Termination: the loop ends with j = n+1, so A[1..j-1] = A[1..n] is in sorted order.
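The loop invariant can also be checked mechanically. The sketch below is one way to do that in Python, assuming 0-based indexing (so the invariant reads "a[0..j-1] holds the original first j elements in sorted order"). The function name `insertion_sort_checked` and the `original` snapshot are illustrative additions, not part of the slides.

```python
def insertion_sort_checked(a):
    """Insertion sort (0-based) that asserts the loop invariant each iteration."""
    original = list(a)  # snapshot of the input, used only for the checks
    for j in range(1, len(a)):
        # Invariant: a[0..j-1] holds the original first j elements, sorted.
        assert a[:j] == sorted(original[:j])
        key = a[j]
        i = j - 1
        while i >= 0 and a[i] > key:
            a[i + 1] = a[i]
            i -= 1
        a[i + 1] = key
    # Termination: the invariant with j = len(a) covers the whole list.
    assert a == sorted(original)
    return a


print(insertion_sort_checked([31, 41, 59, 26, 41, 58]))
```

The assertions call `sorted` and copy slices, so this version is far slower than the plain algorithm. It is meant for testing the argument, not for use as a sort.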

![Slide 6](https://image.slidesharecdn.com/introduction-130920031122-phpapp01/85/Introduction-6-320.jpg)

Analysis of Insertion Sort

| Line | INSERTION-SORT(A) | cost | times |
|---|---|---|---|
| 1 | for j = 2 to length[A] | $c_1$ | $n$ |
| 2 | do key ← A[j] | $c_2$ | $n-1$ |
| 3 | // insert A[j] into the sorted sequence A[1..j-1] | 0 | $n-1$ |
| 4 | i ← j-1 | $c_4$ | $n-1$ |
| 5 | while i > 0 and A[i] > key | $c_5$ | $\sum_{j=2}^{n} t_j$ |
| 6 | do A[i+1] ← A[i] | $c_6$ | $\sum_{j=2}^{n} (t_j - 1)$ |
| 7 | i ← i-1 | $c_7$ | $\sum_{j=2}^{n} (t_j - 1)$ |
| 8 | A[i+1] ← key | $c_8$ | $n-1$ |

($t_j$ is the number of times the while loop test in line 5 is executed for that value of $j$.)

The total time cost $T(n)$ is the sum of cost × times over all lines:

$$T(n) = c_1 n + c_2(n-1) + c_4(n-1) + c_5\sum_{j=2}^{n} t_j + c_6\sum_{j=2}^{n}(t_j - 1) + c_7\sum_{j=2}^{n}(t_j - 1) + c_8(n-1)$$
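To make t_j concrete, the following Python sketch instruments insertion sort to record how often the while-loop test runs for each j. It assumes 0-based indexing, and the helper name `while_test_counts` is hypothetical. On an already sorted input every t_j is 1, which makes T(n) linear in n. On a reverse-sorted input t_j = j, so the sum of t_j over j = 2..n is n(n+1)/2 − 1 and T(n) is quadratic.

```python
def while_test_counts(a):
    """Run insertion sort on a copy of `a` and return [t_2, ..., t_n].

    t_j counts evaluations of the while-loop test (line 5 of the
    pseudocode) for the j-th element, including the final failing test.
    """
    a = list(a)
    counts = []
    for j in range(1, len(a)):
        key = a[j]
        i = j - 1
        t = 1                      # the evaluation that ends the loop
        while i >= 0 and a[i] > key:
            a[i + 1] = a[i]
            i -= 1
            t += 1                 # one more evaluation after each shift
        a[i + 1] = key
        counts.append(t)
    return counts


n = 6
best = while_test_counts(list(range(1, n + 1)))   # already sorted: t_j = 1
worst = while_test_counts(list(range(n, 0, -1)))  # reverse sorted: t_j = j
print(sum(best), sum(worst))  # prints 5 20
```

For n = 6 the worst-case total of 20 matches n(n+1)/2 − 1 = 21 − 1.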

![Slide 9](https://image.slidesharecdn.com/introduction-130920031122-phpapp01/85/Introduction-9-320.jpg)

Merge Sort – merge function

• Merge is the key operation in merge sort.

• Suppose the (sub)sequences are stored in the array A, and that A[p..q] and A[q+1..r] are two sorted subsequences.

• MERGE(A,p,q,r) merges the two subsequences into a single sorted sequence A[p..r].

  – MERGE(A,p,q,r) takes Θ(r-p+1) time.
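The slide states what MERGE does but does not give its body. The sketch below is one common way to write it in Python, not necessarily the version the slides have in mind. It assumes 0-based, inclusive indices p ≤ q < r and copies the two runs into temporary lists named `left` and `right` (names chosen here for illustration).

```python
def merge(a, p, q, r):
    """Merge sorted runs a[p..q] and a[q+1..r] (inclusive, 0-based) in place."""
    left = a[p:q + 1]
    right = a[q + 1:r + 1]
    i = j = 0
    k = p
    # Repeatedly copy the smaller front element back into a.
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:   # <= keeps equal elements in original order
            a[k] = left[i]
            i += 1
        else:
            a[k] = right[j]
            j += 1
        k += 1
    # At most one run still has elements; copy the remainder over.
    a[k:r + 1] = left[i:] + right[j:]
    return a


data = [2, 4, 5, 7, 1, 2, 3, 6]
print(merge(data, 0, 3, 7))  # prints [1, 2, 2, 3, 4, 5, 6, 7]
```

Each of the r − p + 1 elements is copied out once and written back once, which is where the Θ(r − p + 1) bound comes from.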