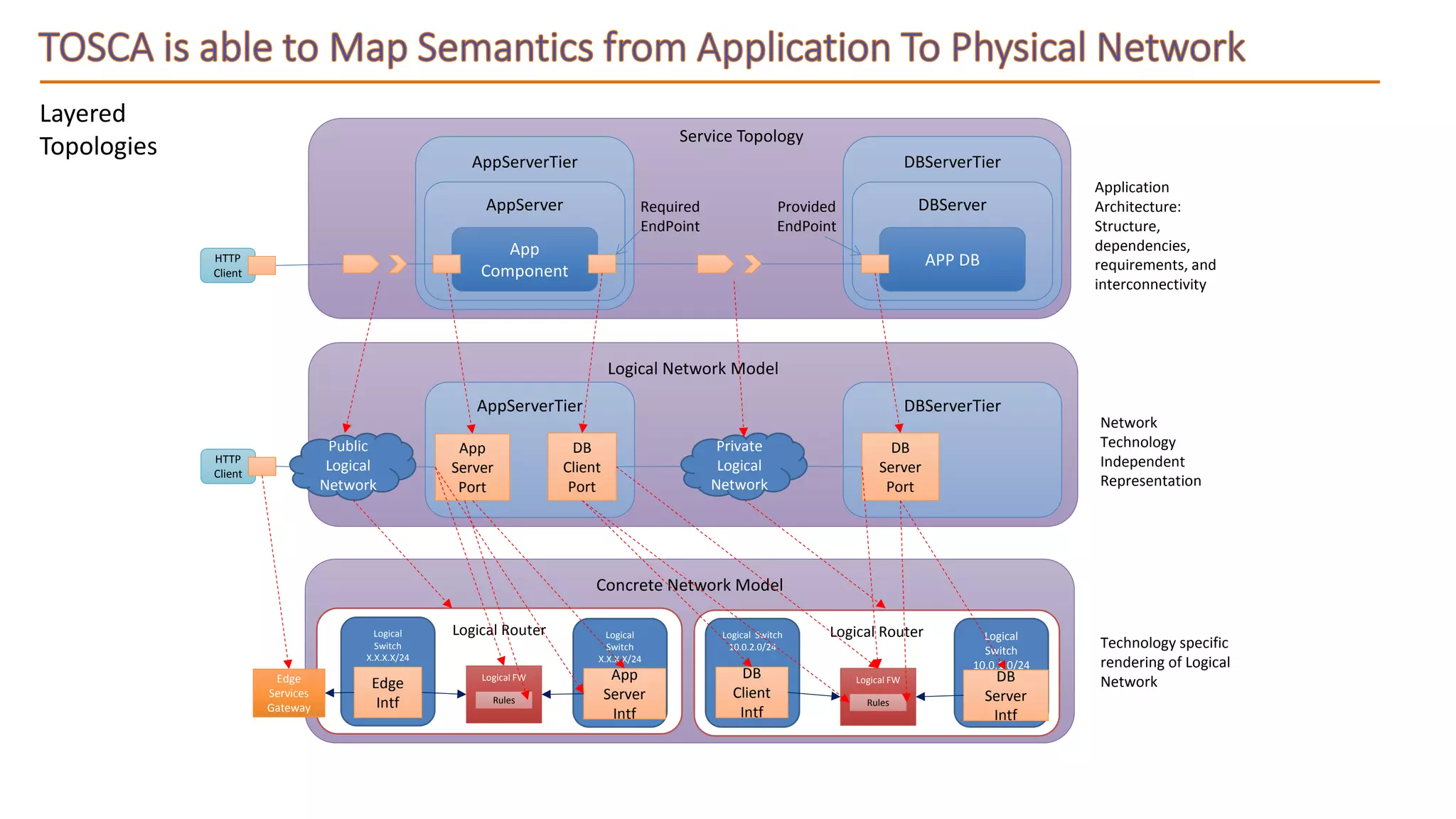

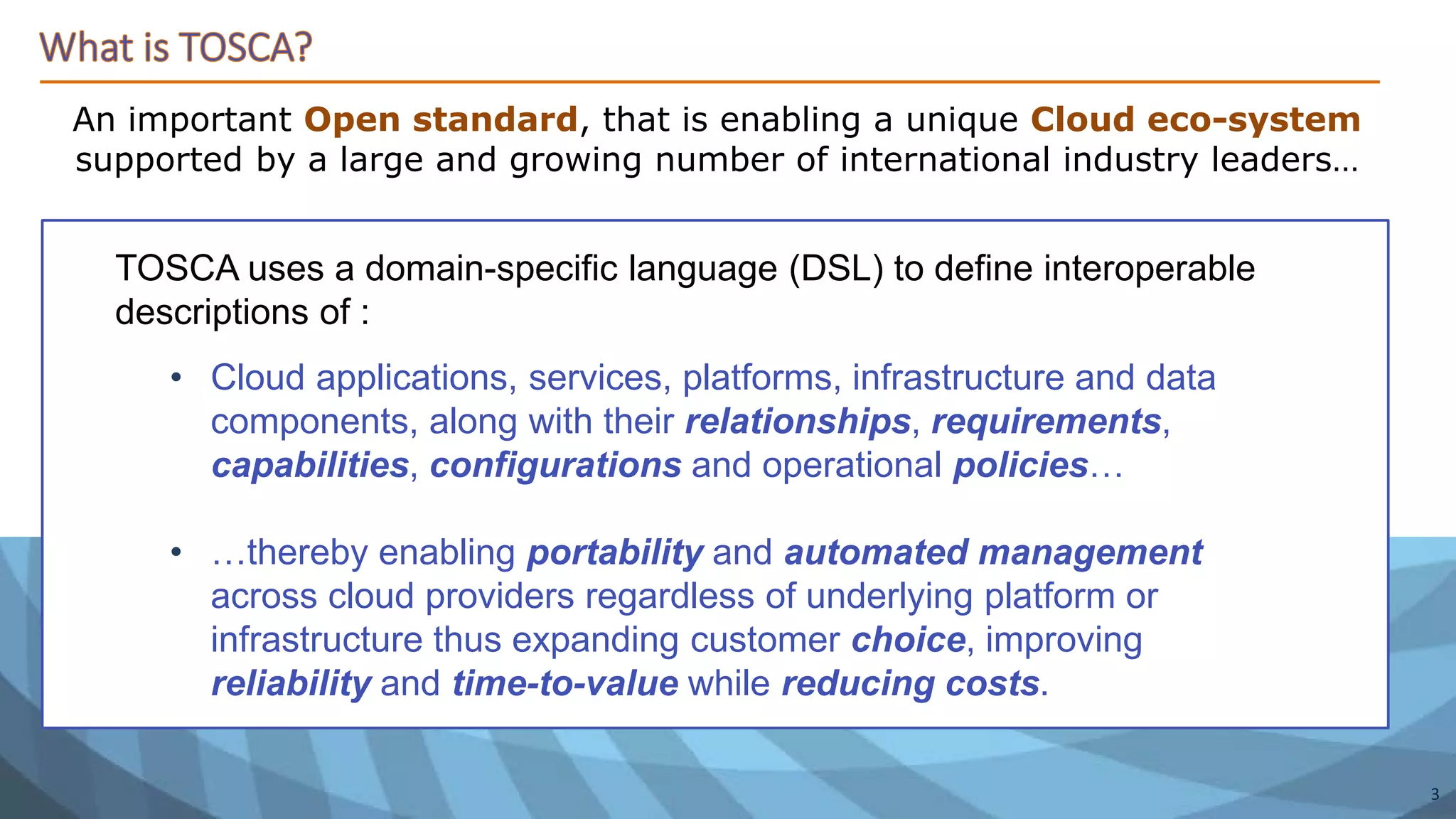

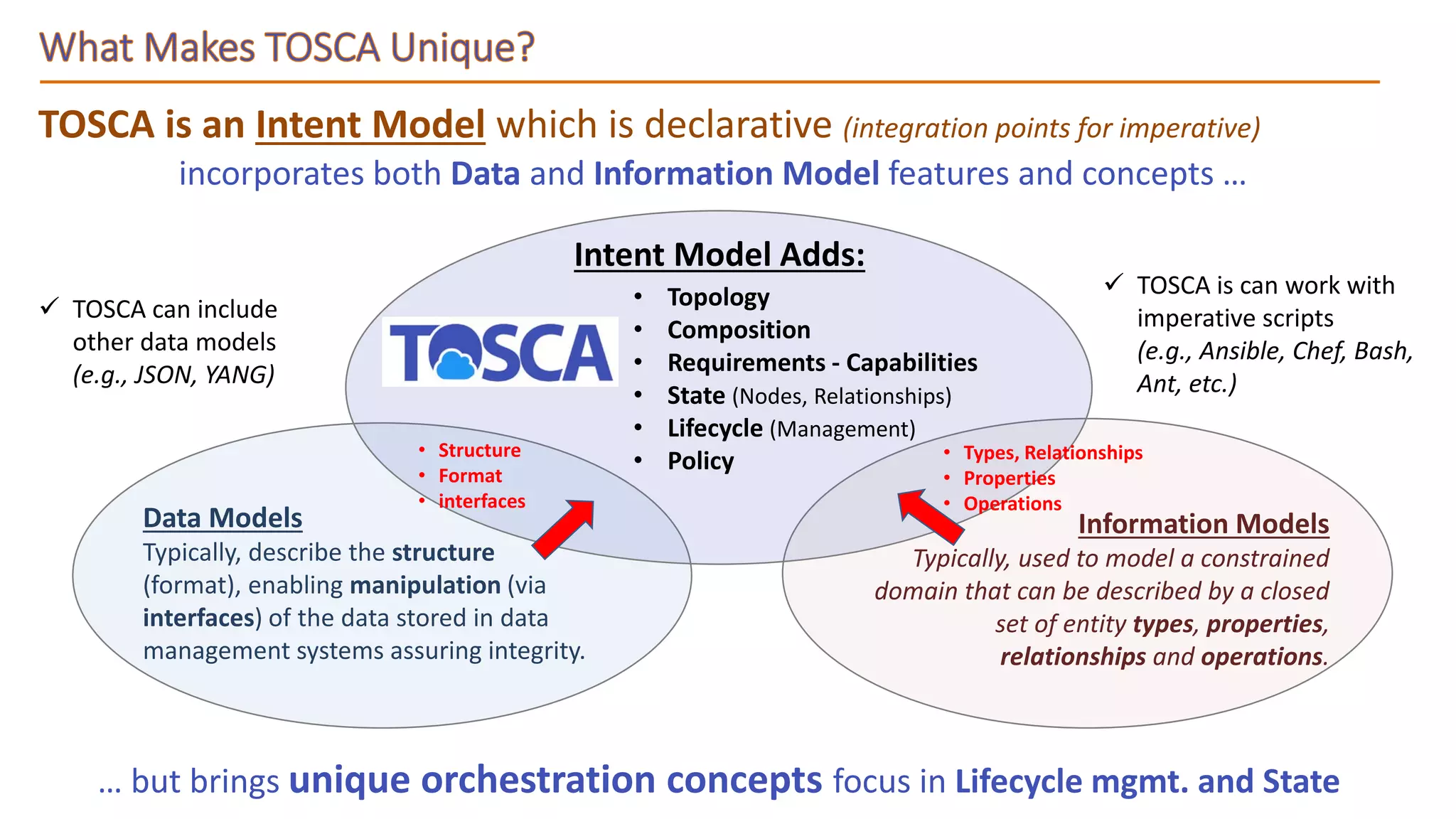

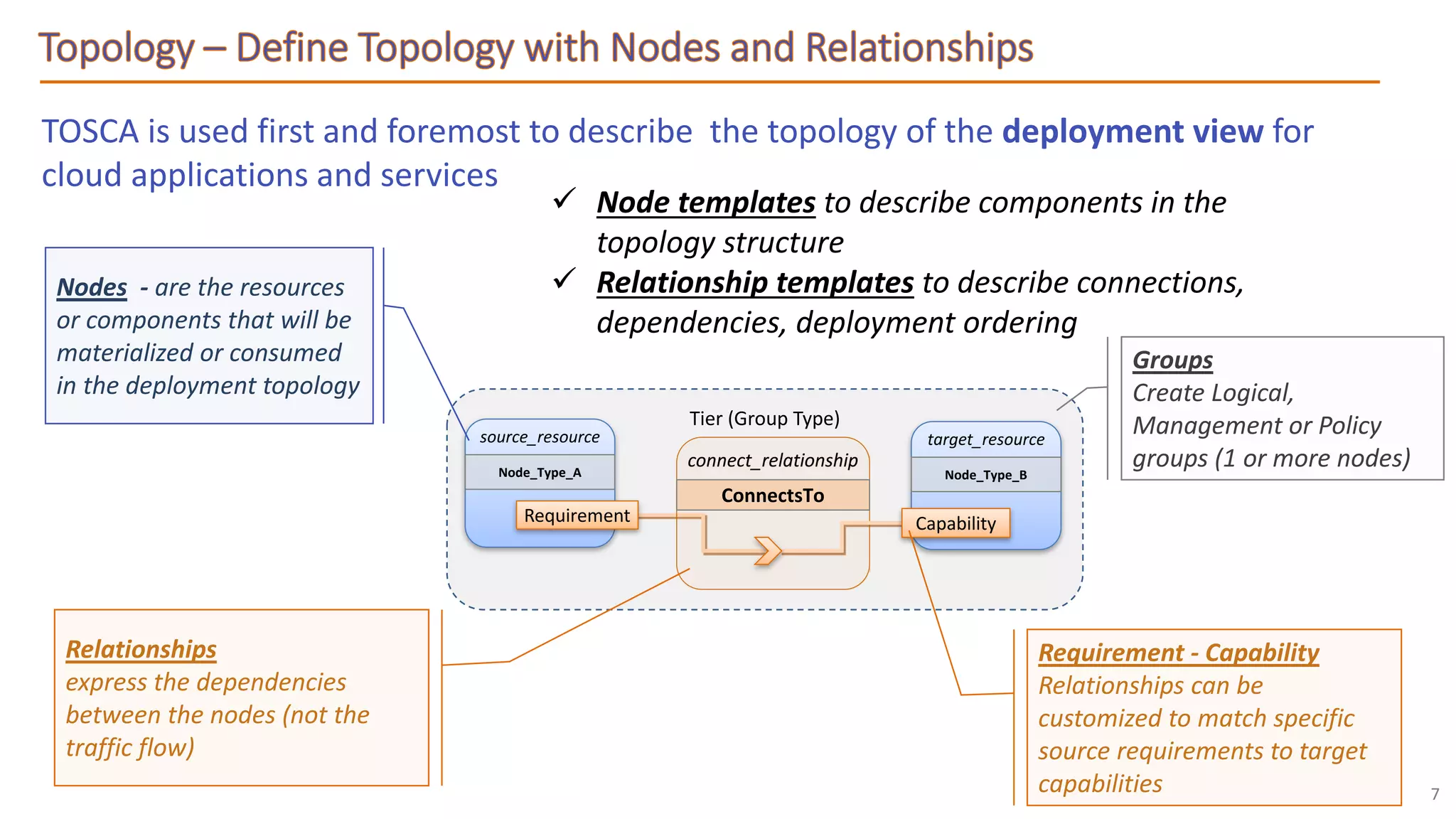

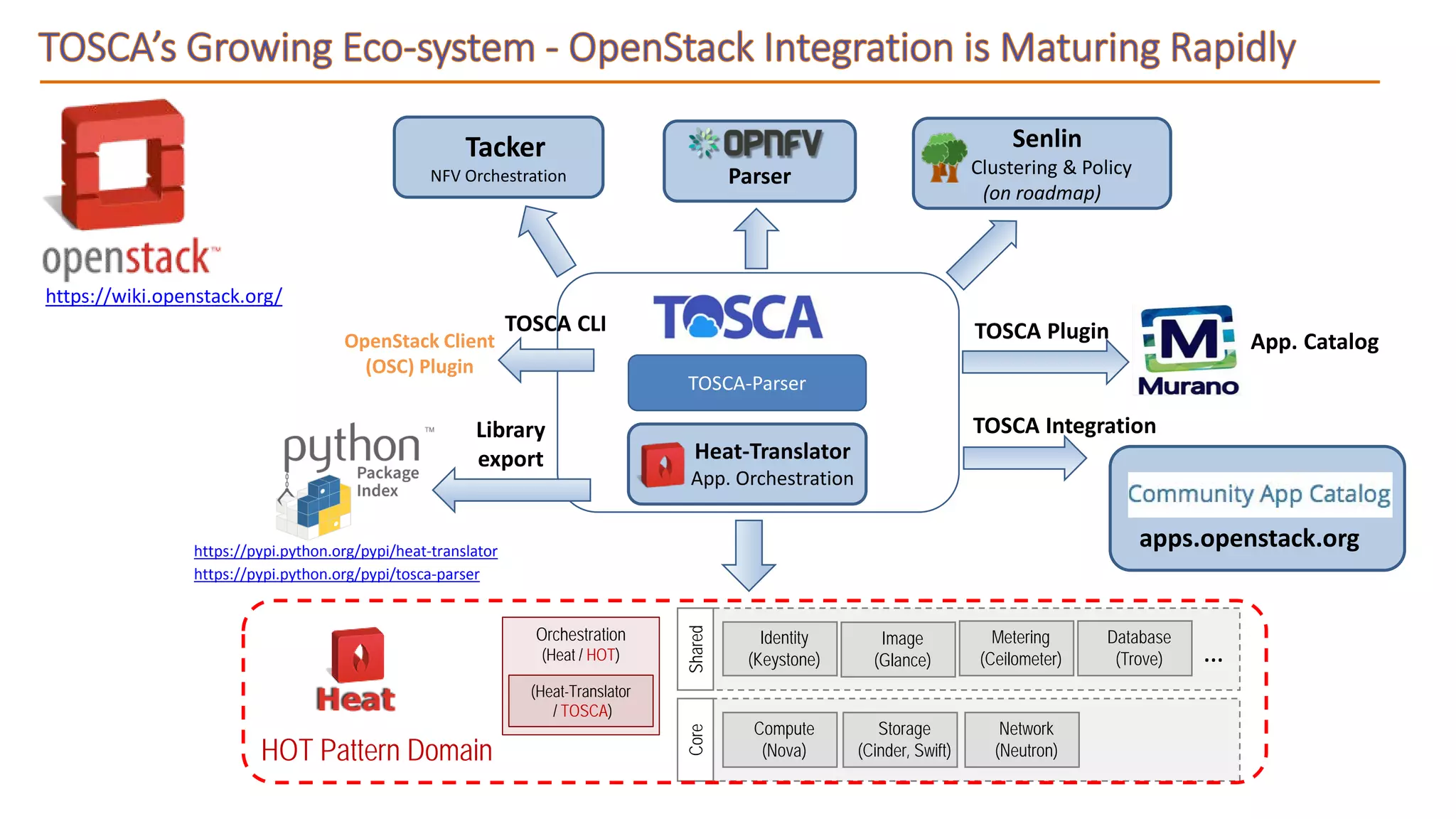

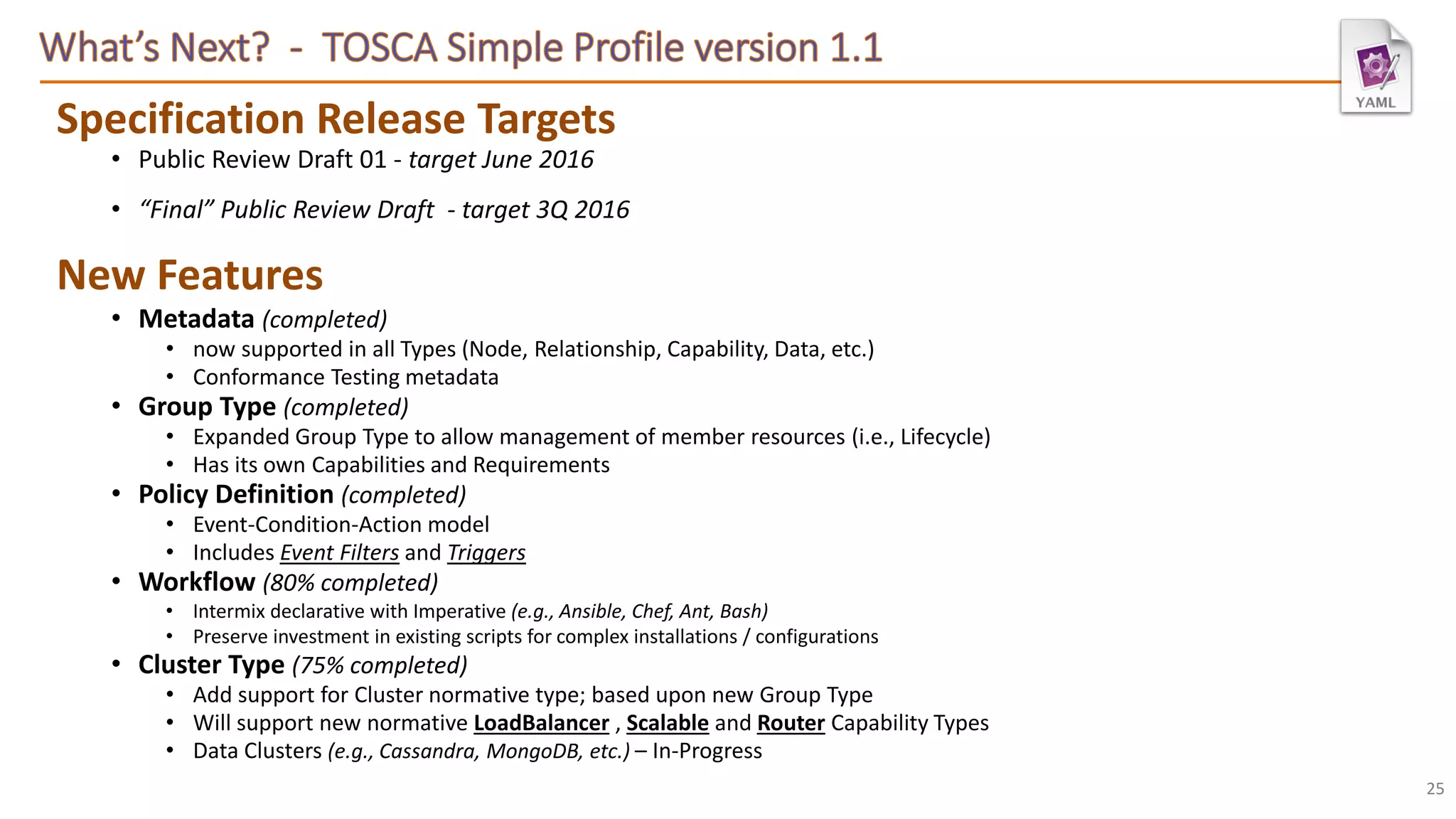

The document elaborates on the Topology and Orchestration Specification for Cloud Applications (TOSCA), which enables the definition of portable descriptions for cloud applications, services, and infrastructure components using a domain-specific language. TOSCA facilitates improved interoperability, lifecycle management, and policy definition across cloud providers, promoting customer flexibility and cost reduction. The document outlines the specifications, milestones, and expanding ecosystem associated with TOSCA, highlighting its importance in enabling automated management and orchestration of multi-cloud environments.

![TOSCA Policy Definition slide](https://image.slidesharecdn.com/cscc-webinar-oasis-tosca-cloud-portability-and-lifecycle-management-5-18-16-160518182746/75/OASIS-TOSCA-Cloud-Portability-and-Lifecycle-Management-20-2048.jpg)

TOSCA Policy Definition (e.g., Placement, Scaling, Performance):

    <policy_name>:
      type: <policy_type_name>
      description: <policy_description>
      properties: <property_definitions>
      # allowed targets for policy association
      targets: [ <list_of_valid_target_resources> ]
      triggers:
        <trigger_symbolic_name_1>:
          event: <event_type_name>
          target_filter:
            node: <node_template_name> | <node_type>
            # (optional) reference to a related node
            # via a requirement
            requirement: <requirement_name>
            # (optional) Capability within node to monitor
            capability: <capability_name>
          # Describes an attribute-relative test that
          # causes the trigger’s action to be invoked.
          condition: <constraint_clause>
          action:
            # implementation-specific operation name
            <operation_name>:
              description: <optional description>
              inputs: <list_of_parameters>
              implementation: <script> | <service_name>
        ...

1..N triggers can be declared.

Event:

- Name of a normative TOSCA Event Type
- That describes an event based upon a resource "state" change
- Or a change in one or more of the resource's attribute values

Condition identifies:

- The resource (Node) in the TOSCA model to monitor
- Optionally, a Capability of the identified node
- The attribute (state) of the resource to evaluate (condition)

Action describes:

- An Operation (name) to invoke when the condition is met, within the declared Implementation
- Optionally, Input parameters to pass to the operation, along with any well-defined strategy values
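The event, condition, action flow described in the policy definition above can be sketched in a few lines of Python. This is only an illustrative model, not how any TOSCA orchestrator is implemented: the `Trigger` class, the `dispatch` function, the event name `cpu_load_changed`, and the `cpu_load` attribute are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Trigger:
    """Hypothetical in-memory model of one entry under `triggers:`."""
    event: str                                      # event type the trigger listens for
    node: str                                       # target_filter.node
    condition: Callable[[Dict[str, Any]], bool]     # attribute-relative test
    action: Callable[[str, Dict[str, Any]], None]   # operation to invoke
    capability: Optional[str] = None                # target_filter.capability (optional)
    inputs: Dict[str, Any] = field(default_factory=dict)


def dispatch(triggers: List[Trigger], event: str, node: str,
             attributes: Dict[str, Any],
             capability: Optional[str] = None) -> int:
    """Run the action of every trigger that matches the event and node
    and whose condition holds. Returns the number of actions invoked."""
    fired = 0
    for trigger in triggers:
        if trigger.event != event or trigger.node != node:
            continue
        # A trigger without a capability filter matches any capability.
        if trigger.capability is not None and trigger.capability != capability:
            continue
        if trigger.condition(attributes):
            trigger.action(node, trigger.inputs)
            fired += 1
    return fired


# Example: a scaling-style trigger (event and attribute names are assumptions).
log: List[tuple] = []
scale_out = Trigger(
    event="cpu_load_changed",
    node="my_server",
    condition=lambda attrs: attrs["cpu_load"] > 80,
    action=lambda node, inputs: log.append((node, inputs["increment"])),
    inputs={"increment": 1},
)
```

Calling `dispatch([scale_out], "cpu_load_changed", "my_server", {"cpu_load": 95})` invokes the action once, because the event, the node, and the condition all match. The same call with a load of 10 invokes nothing.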

![TOSCA single server template slide](https://image.slidesharecdn.com/cscc-webinar-oasis-tosca-cloud-portability-and-lifecycle-management-5-18-16-160518182746/75/OASIS-TOSCA-Cloud-Portability-and-Lifecycle-Management-28-2048.jpg)

Complete source: https://github.com/openstack/heat-translator/blob/master/translator/toscalib/tests/data/tosca_single_server.yaml

    tosca_definitions_version: tosca_simple_yaml_1_0

    description: >
      Template for deploying a single server with predefined properties and an input parameter

    topology_template:
      inputs:
        cpus:
          type: integer
          description: Number of CPUs for the server.
          constraints:
            - valid_values: [ 1, 2, 4, 8 ]

      node_templates:
        my_server:
          type: tosca.nodes.Compute
          capabilities:
            host:
              properties:
                num_cpus: { get_input: cpus }
                disk_size: 10 GB
                mem_size: 512 MB
            os:
              properties:
                architecture: x86_64
                type: linux
                distribution: rhel

      outputs:
        server_address:
          description: IP address of server instance.
          value: { get_attribute: [my_server, private_address] }
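To make the role of the `cpus` input concrete, the following Python sketch shows one way a tool might check a supplied value against the `valid_values` constraint and then substitute it wherever `get_input` appears. It is a simplified illustration under stated assumptions, not the heat-translator or toscalib implementation: the function names `validate_inputs`, `resolve`, and `instantiate_host` are hypothetical, and the relevant parts of the template are written as Python dicts so that no YAML parser is needed.

```python
from typing import Any, Dict

# Input definition and host properties taken from the template above,
# expressed as plain dicts for the purpose of this sketch.
INPUT_DEFS: Dict[str, Any] = {
    "cpus": {
        "type": "integer",
        "constraints": [{"valid_values": [1, 2, 4, 8]}],
    }
}
HOST_PROPERTIES: Dict[str, Any] = {
    "num_cpus": {"get_input": "cpus"},
    "disk_size": "10 GB",
    "mem_size": "512 MB",
}


def validate_inputs(defs: Dict[str, Any], values: Dict[str, Any]) -> None:
    """Raise ValueError if an input is missing, has the wrong type,
    or violates a valid_values constraint."""
    for name, definition in defs.items():
        if name not in values:
            raise ValueError(f"missing input: {name}")
        value = values[name]
        if definition.get("type") == "integer":
            # bool is a subclass of int in Python, so reject it explicitly.
            if isinstance(value, bool) or not isinstance(value, int):
                raise ValueError(f"input {name} must be an integer")
        for constraint in definition.get("constraints", []):
            allowed = constraint.get("valid_values")
            if allowed is not None and value not in allowed:
                raise ValueError(f"input {name}={value!r} not in {allowed}")


def resolve(value: Any, inputs: Dict[str, Any]) -> Any:
    """Recursively replace {"get_input": name} with the supplied value."""
    if isinstance(value, dict):
        if set(value) == {"get_input"}:
            return inputs[value["get_input"]]
        return {key: resolve(item, inputs) for key, item in value.items()}
    if isinstance(value, list):
        return [resolve(item, inputs) for item in value]
    return value


def instantiate_host(values: Dict[str, Any]) -> Dict[str, Any]:
    """Validate the inputs, then return the host properties with inputs filled in."""
    validate_inputs(INPUT_DEFS, values)
    return resolve(HOST_PROPERTIES, values)
```

With this sketch, `instantiate_host({"cpus": 4})` yields host properties with `num_cpus` set to 4, while `instantiate_host({"cpus": 3})` raises `ValueError` because 3 is not among the allowed values.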