Motif finding plays a vital role in identifying transcription factor binding sites (TFBSs), which in turn helps explain how gene expression is regulated. The document discusses several approaches to motif finding:

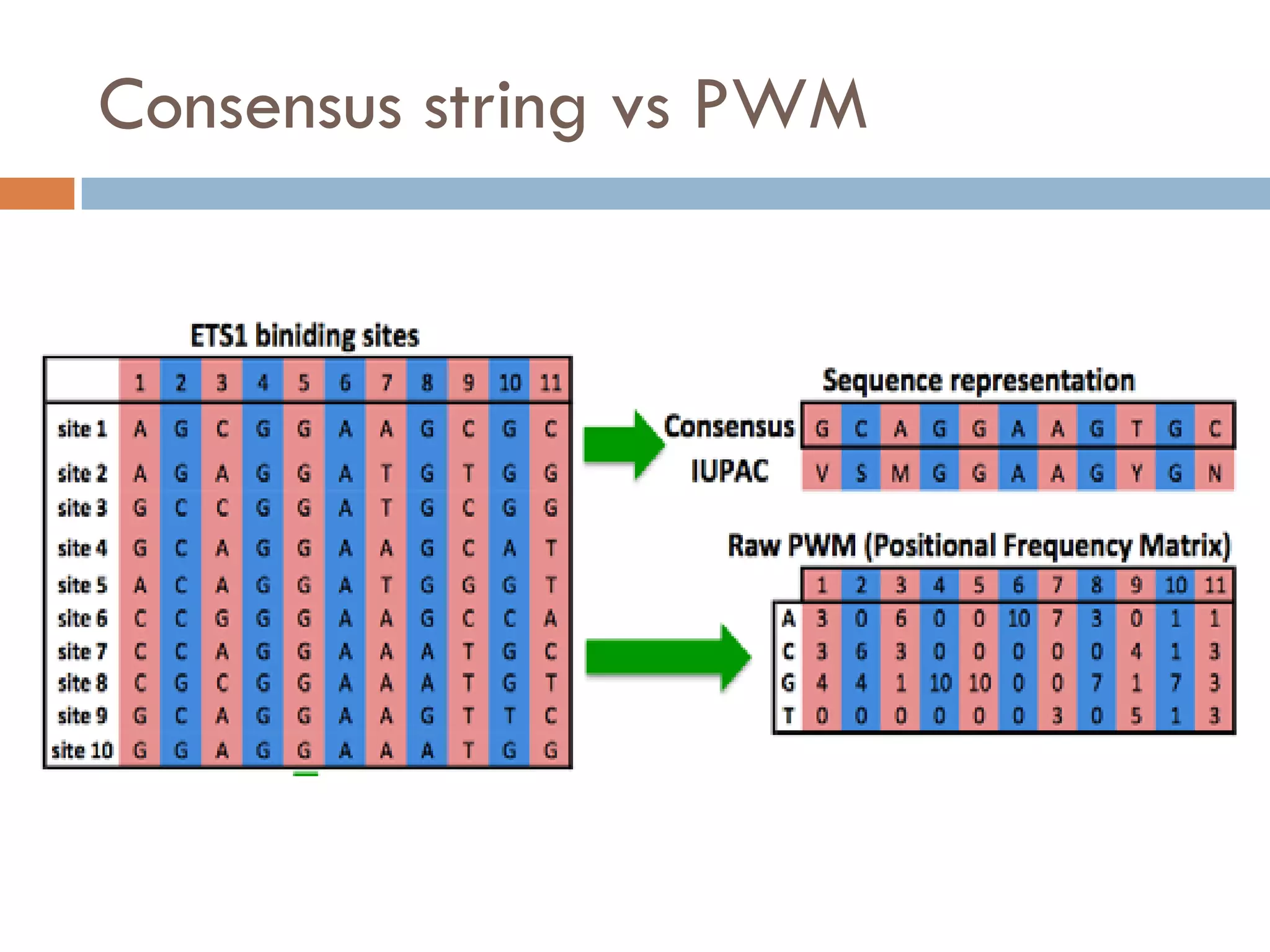

1. Enumerative approaches, which search for consensus sequences, such as YMF, DREME, CisFinder, Weeder, FMotif, and MCES.

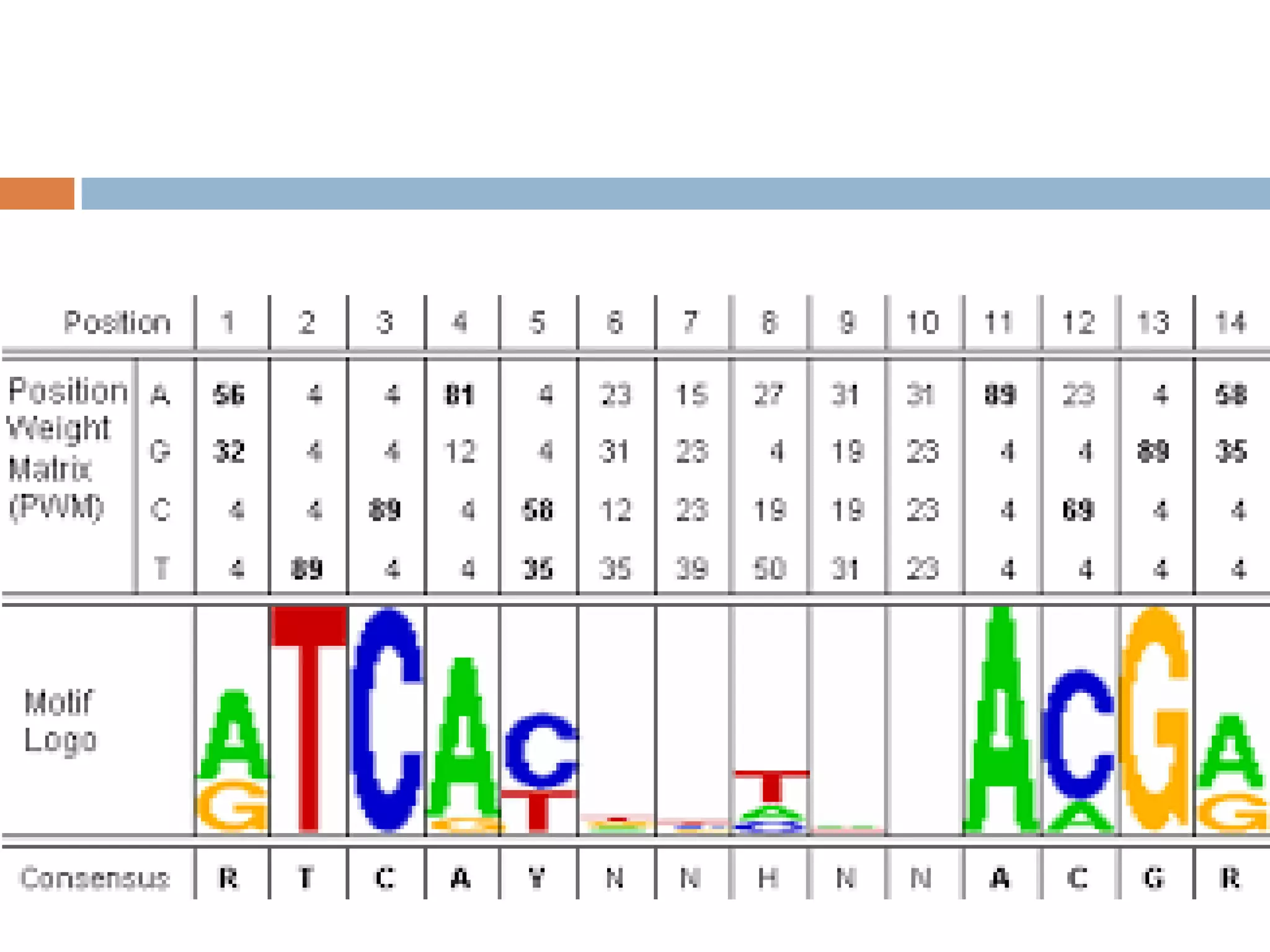

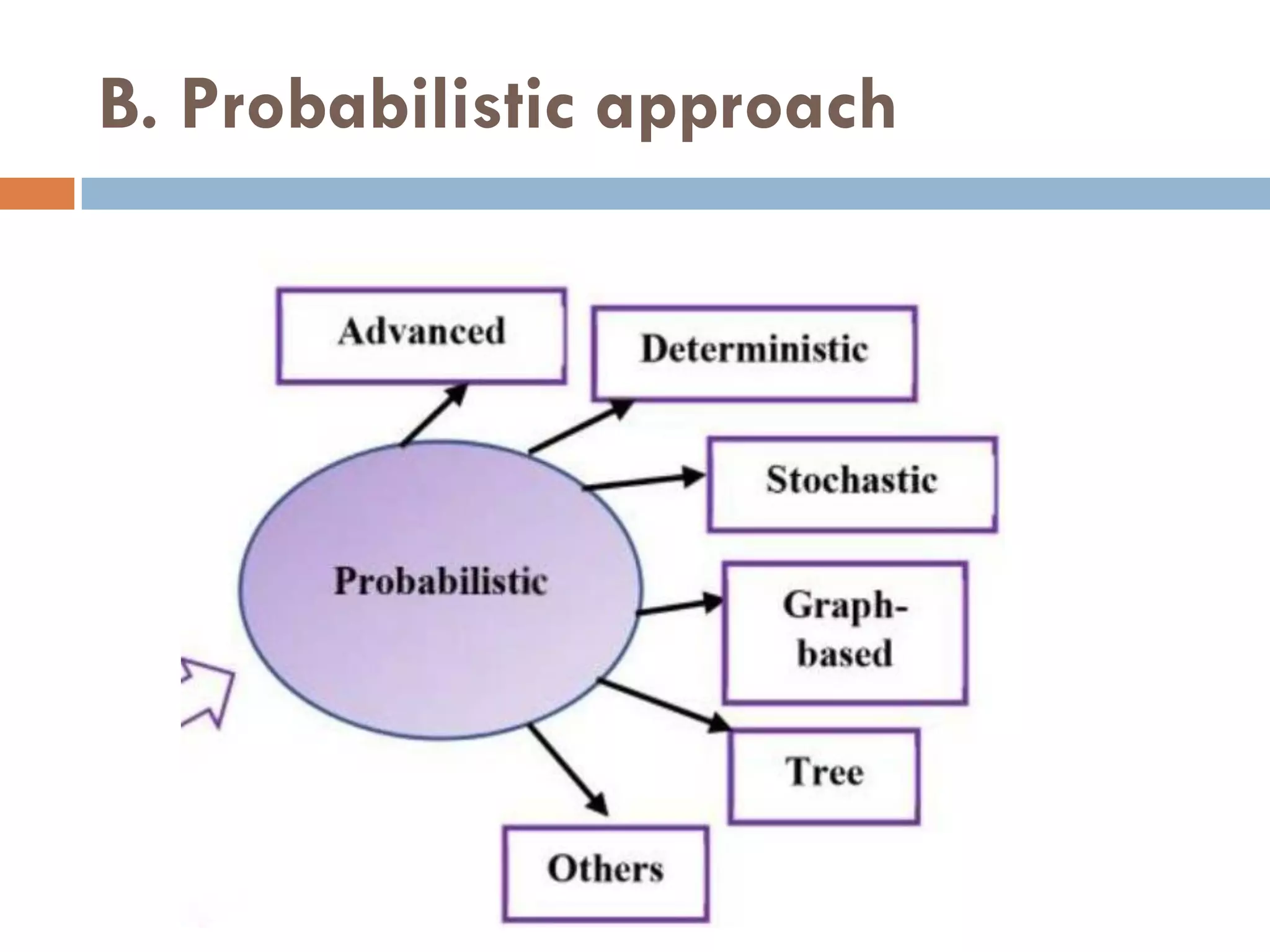

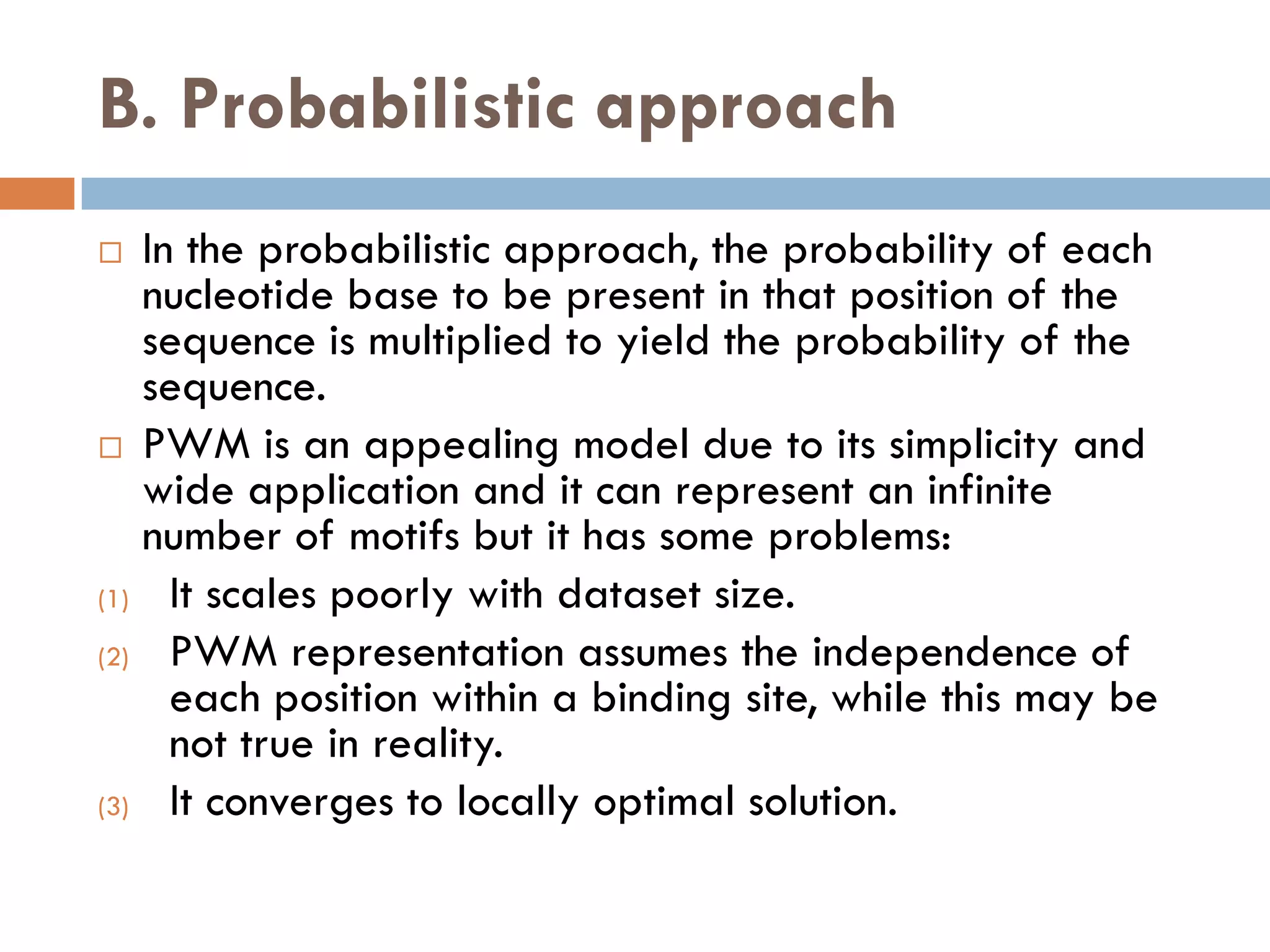

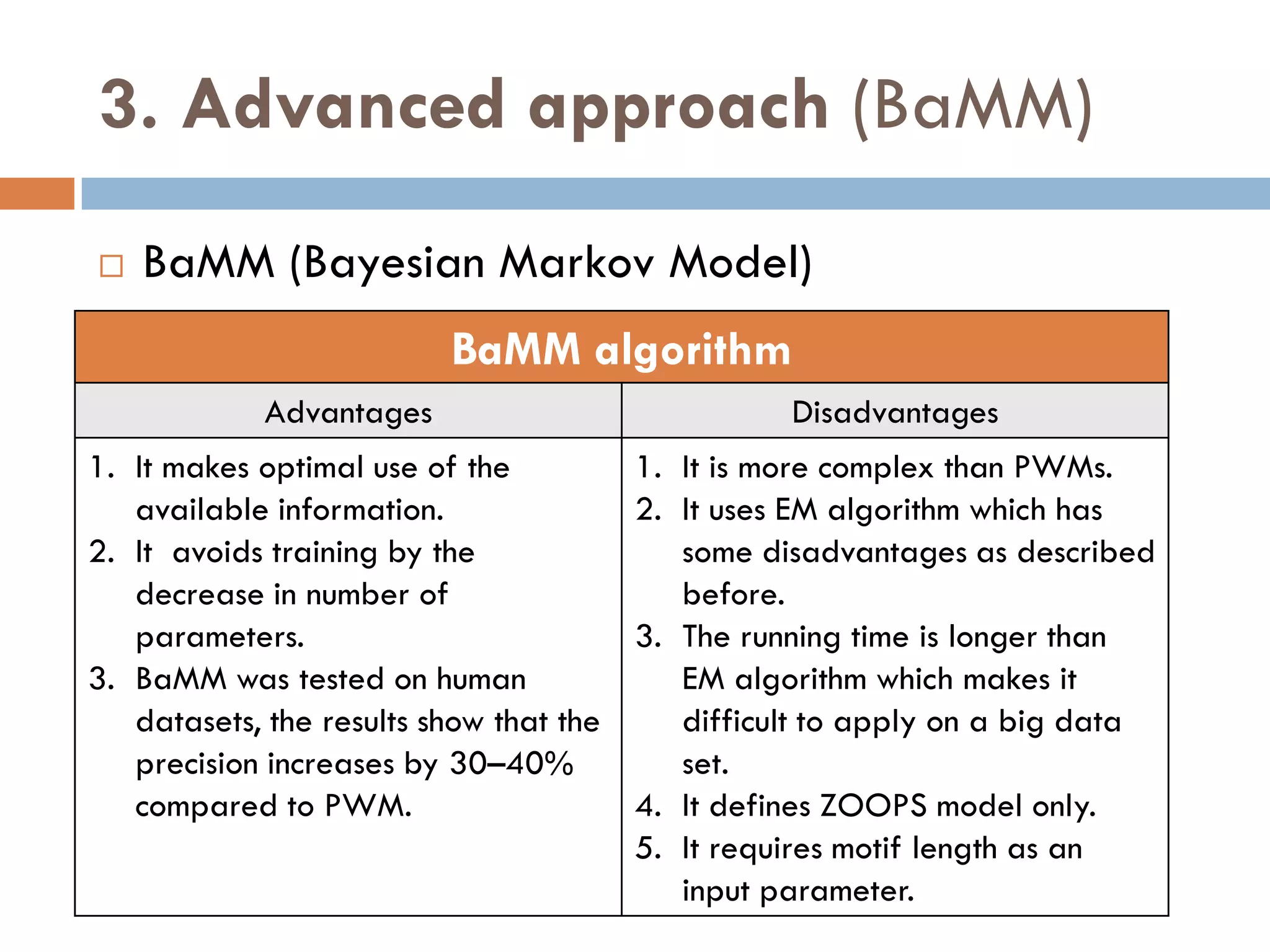

2. Probabilistic approaches, which use position weight matrices (PWMs), with algorithms such as EM, MEME, STEME, EXTREME, Gibbs sampling, AlignACE, and BioProspector.

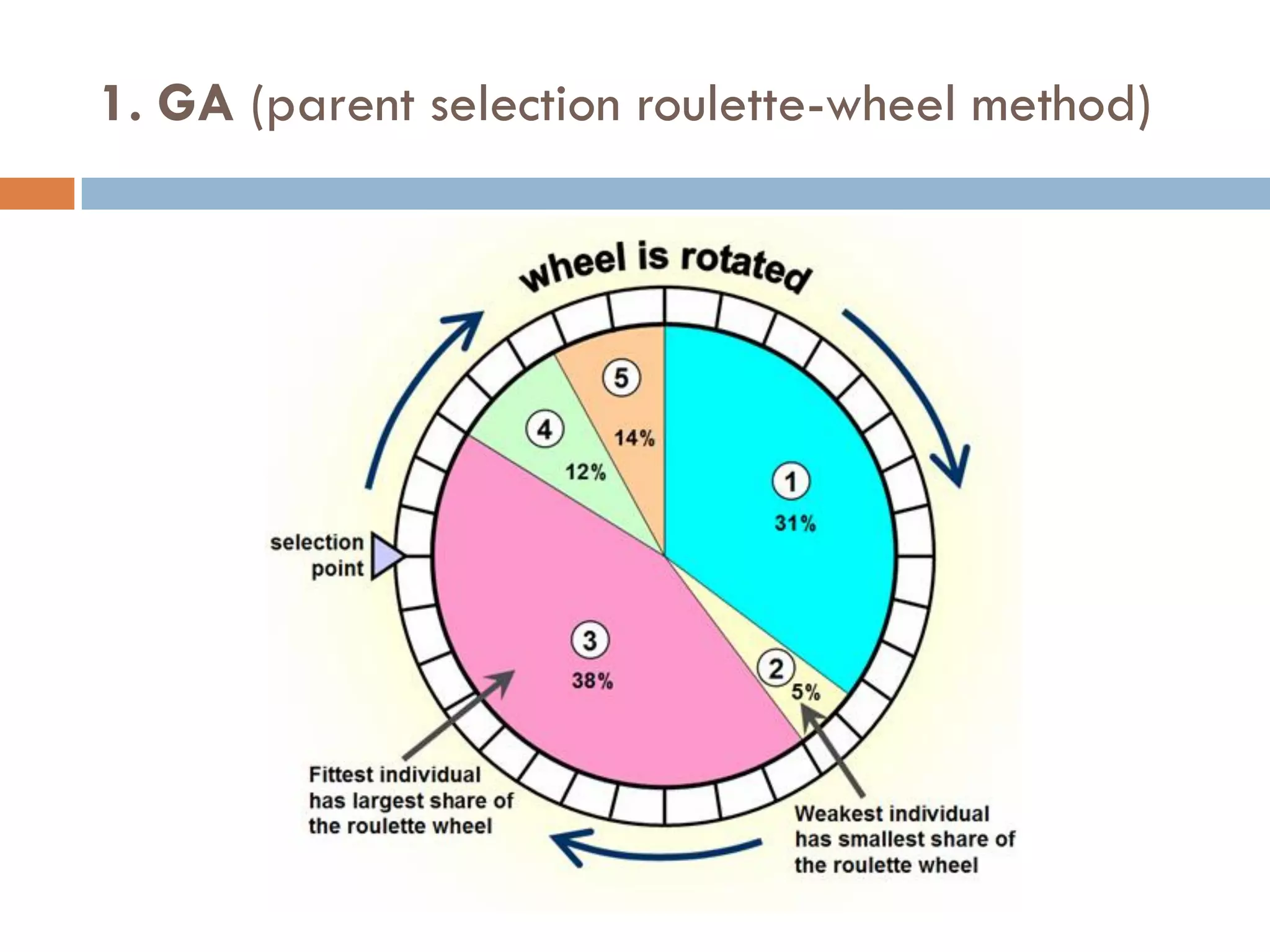

3. Other approaches, such as nature-inspired algorithms, combinatorial methods, and the LOGOS algorithm, which is based on Bayesian modeling.

The document provides details on the methodology,

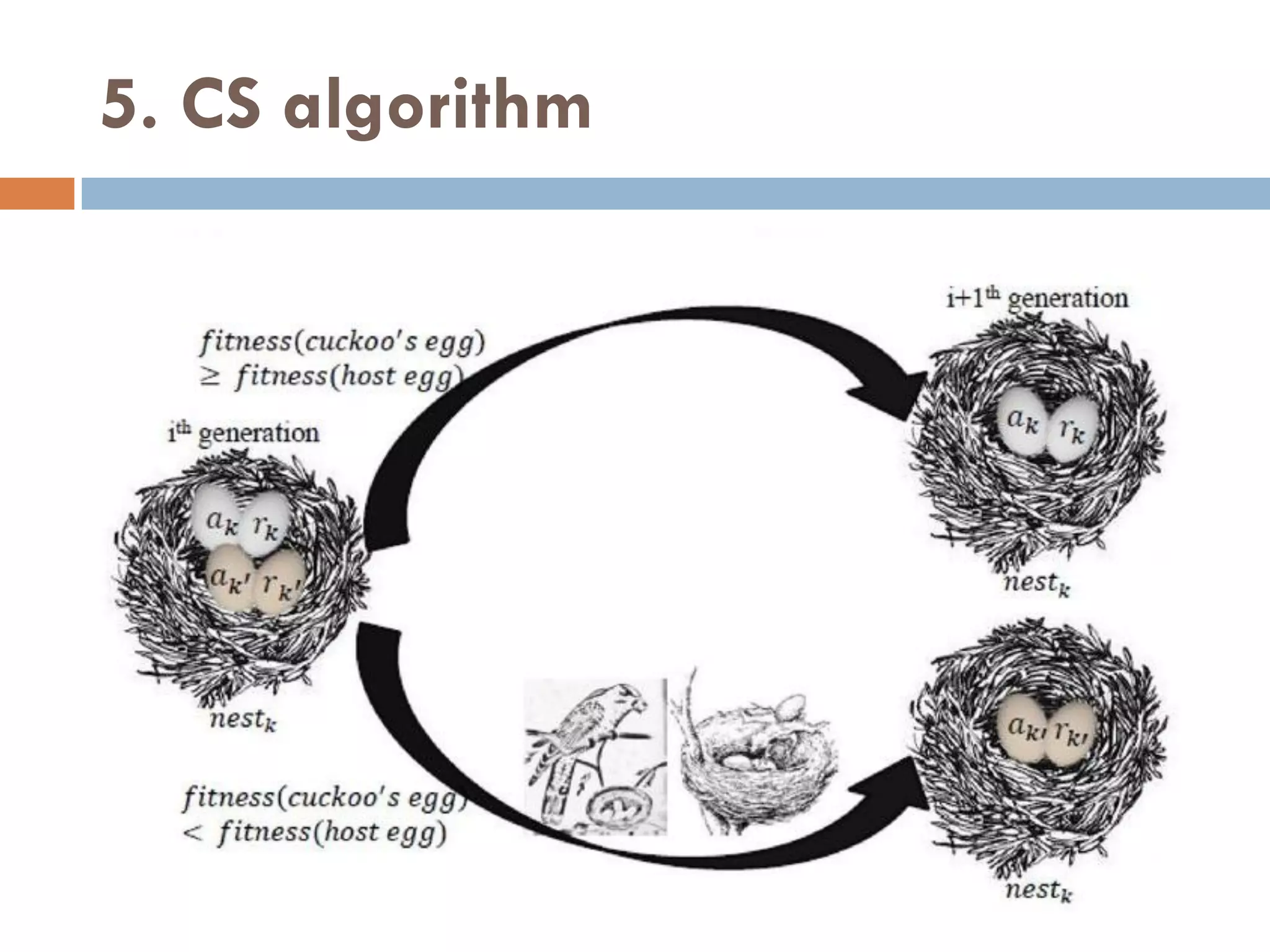

![5. CS algorithm](https://image.slidesharecdn.com/motiffinding-221031104130-3b7420f8/75/Motif-Finding-pdf-77-2048.jpg)

Cuckoo search (CS) is a new, simple heuristic search algorithm that is more efficient than the genetic algorithm (GA) and particle swarm optimization (PSO). CS is inspired by the brood-parasitic reproduction behavior of some cuckoo species, combined with Lévy flight behavior. The cuckoos lay their eggs in the nests of other birds. They are able to select nests in which eggs were recently laid and to remove existing eggs, which increases the hatching probability of their own eggs. If host birds discover these eggs, they either throw them away or abandon the nest and build a new one.

CS is characterized by the following rules:

1. Each cuckoo lays one egg at a time and deposits it in a randomly selected nest.
2. The nests with high-quality eggs (solutions) are the best and carry over to the following generations.
3. The number of available host nests is fixed, and a host bird can discover a parasitic egg with probability Pa ∈ [0, 1].
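The three CS rules can be made concrete with a small sketch. The code below is a minimal, illustrative cuckoo search for a generic minimization problem, not the slides' own implementation. Several details are assumptions that do not come from the source: the function and parameter names (`cuckoo_search`, `levy_step`, `n_nests`, `pa`, `step`), the use of Mantegna's algorithm to draw Lévy steps, the box bounds, and the `sphere` test function, which stands in for a motif-scoring objective that the slide does not specify.

```python
import math
import random


def levy_step(rng, beta=1.5):
    """Draw one Levy-distributed step length (Mantegna's algorithm, assumed here)."""
    sigma = (
        math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
        / (math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2))
    ) ** (1 / beta)
    u = rng.gauss(0, sigma)
    v = rng.gauss(0, 1)
    return u / abs(v) ** (1 / beta)


def cuckoo_search(cost, dim, bounds, n_nests=15, pa=0.25, step=0.01,
                  iterations=500, seed=0):
    """Minimize `cost` over the box [lo, hi]^dim; returns (best_nest, best_cost)."""
    rng = random.Random(seed)
    lo, hi = bounds

    def clip(x):
        return [min(max(xi, lo), hi) for xi in x]

    def random_nest():
        return [rng.uniform(lo, hi) for _ in range(dim)]

    # Rule 3 (first half): the number of host nests is fixed at n_nests.
    nests = [random_nest() for _ in range(n_nests)]
    costs = [cost(n) for n in nests]

    for _ in range(iterations):
        # Rule 1: each cuckoo lays one egg (a new candidate produced by a
        # Levy flight) and drops it in a randomly selected nest.
        for i in range(n_nests):
            egg = clip([xi + step * (hi - lo) * levy_step(rng) for xi in nests[i]])
            egg_cost = cost(egg)
            j = rng.randrange(n_nests)
            if egg_cost < costs[j]:  # the egg survives only if it is better
                nests[j], costs[j] = egg, egg_cost

        # Rule 2: the best nest always carries over to the next generation.
        best_idx = costs.index(min(costs))

        # Rule 3 (second half): every other nest is discovered with
        # probability pa, abandoned, and rebuilt at a random location.
        for j in range(n_nests):
            if j != best_idx and rng.random() < pa:
                nests[j] = random_nest()
                costs[j] = cost(nests[j])

    best_idx = costs.index(min(costs))
    return nests[best_idx], costs[best_idx]


def sphere(x):
    """Toy objective (an assumption): sum of squares, minimized at the origin."""
    return sum(xi * xi for xi in x)


best, best_cost = cuckoo_search(sphere, dim=2, bounds=(-5.0, 5.0))
print(best, best_cost)
```

To apply a search like this to motif finding, a nest would have to encode a candidate motif (for example, one start position per sequence) and `cost` would have to score the resulting alignment. Both choices are left open here because the slide does not define them.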