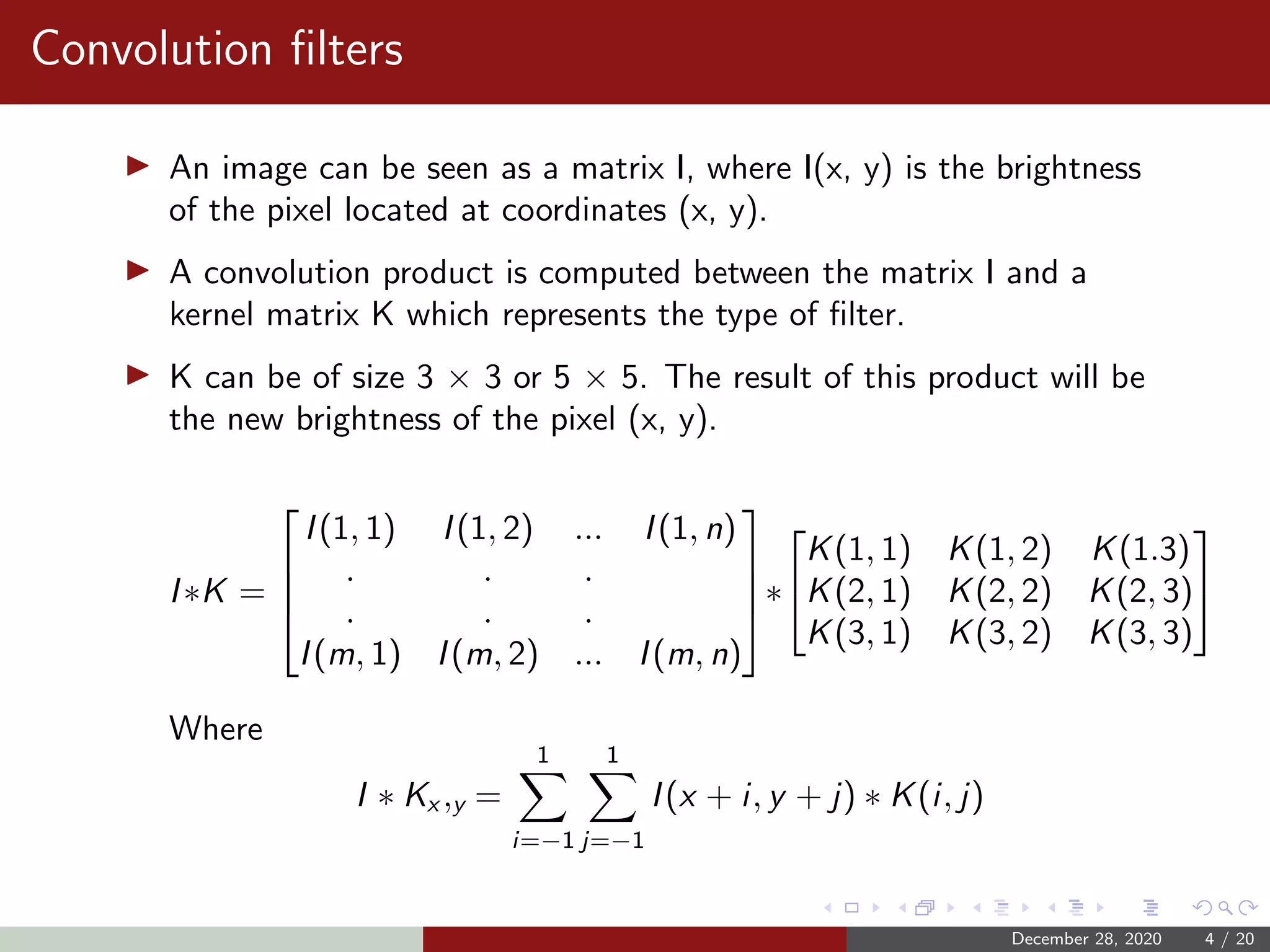

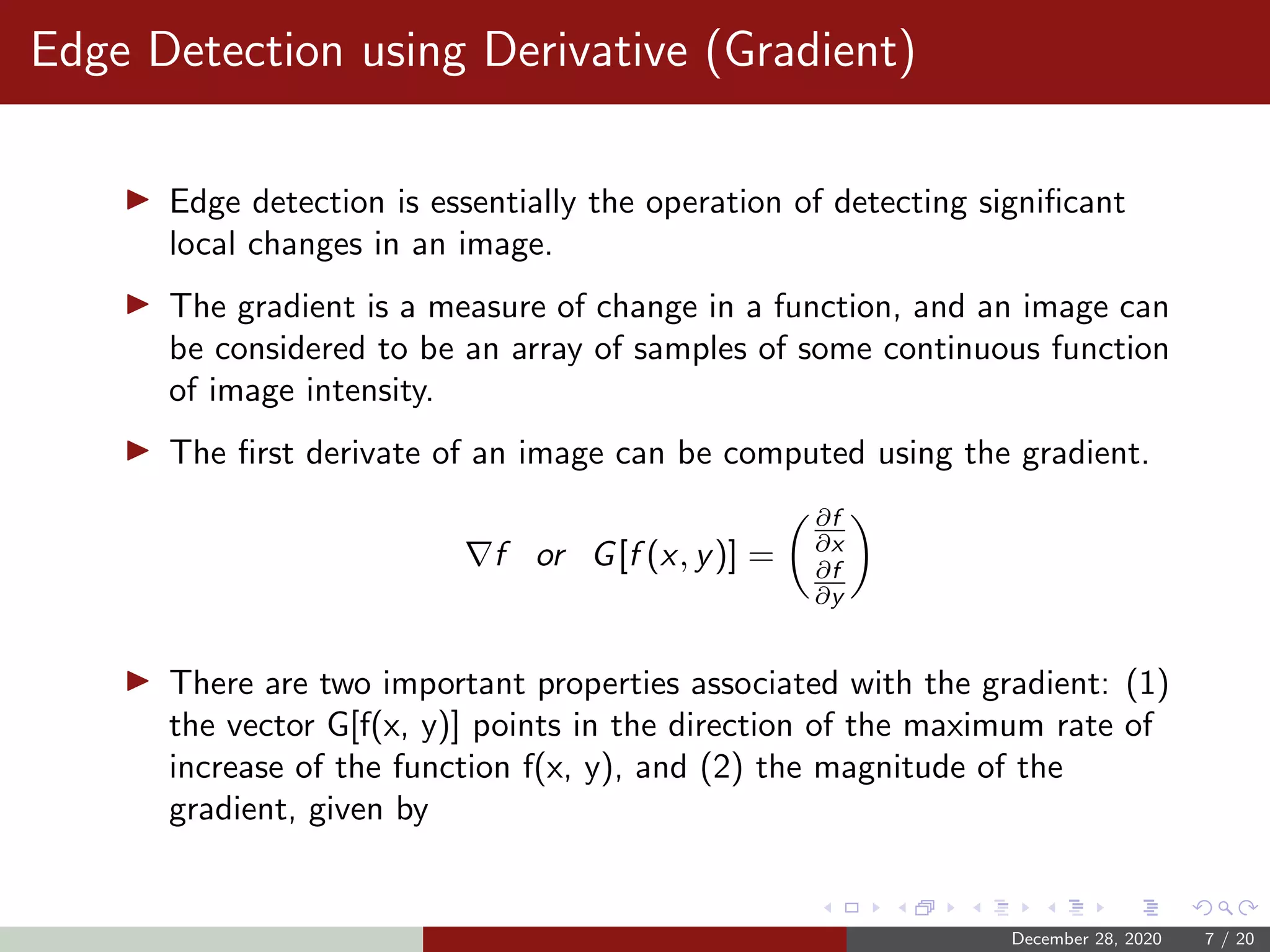

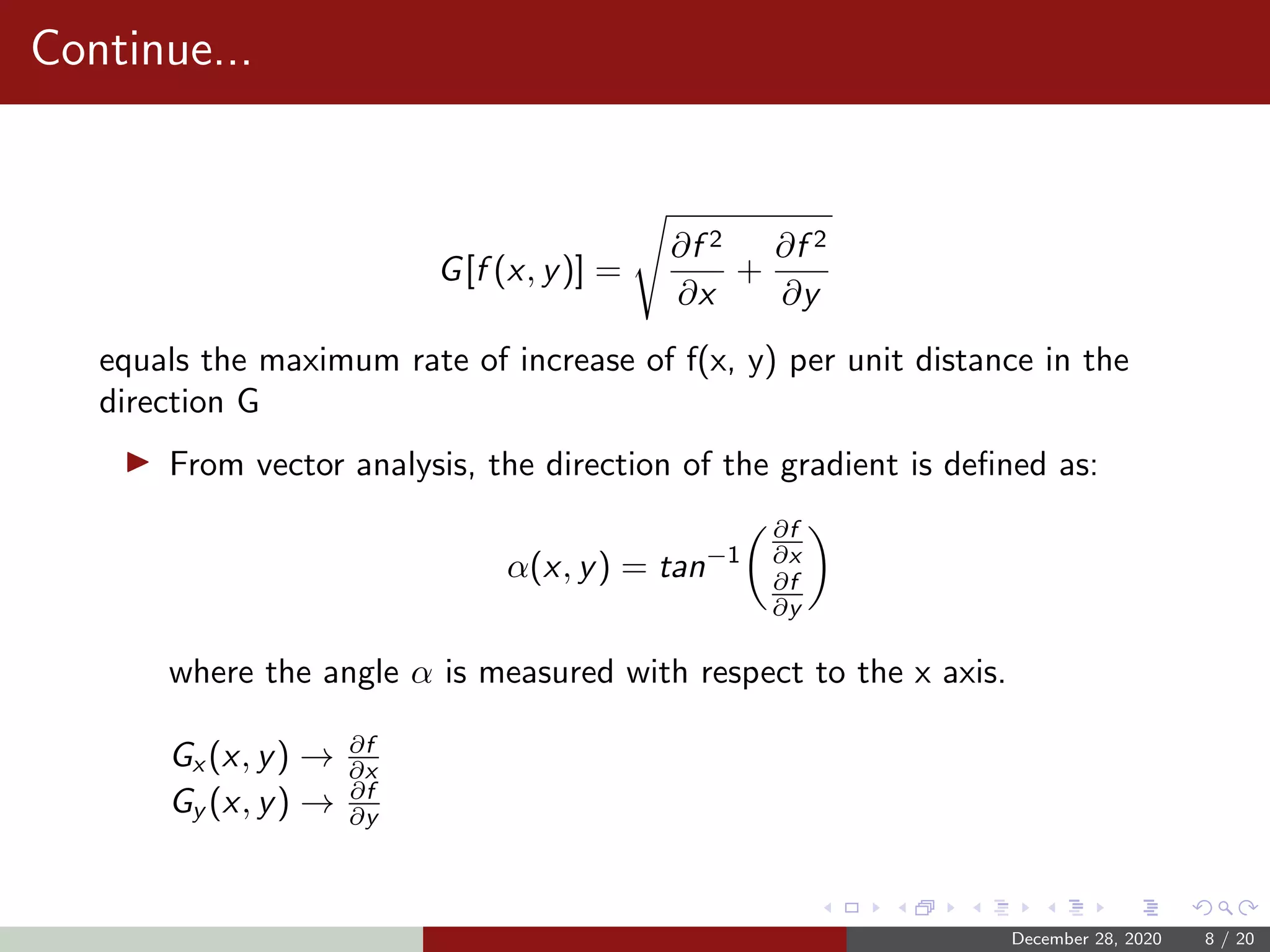

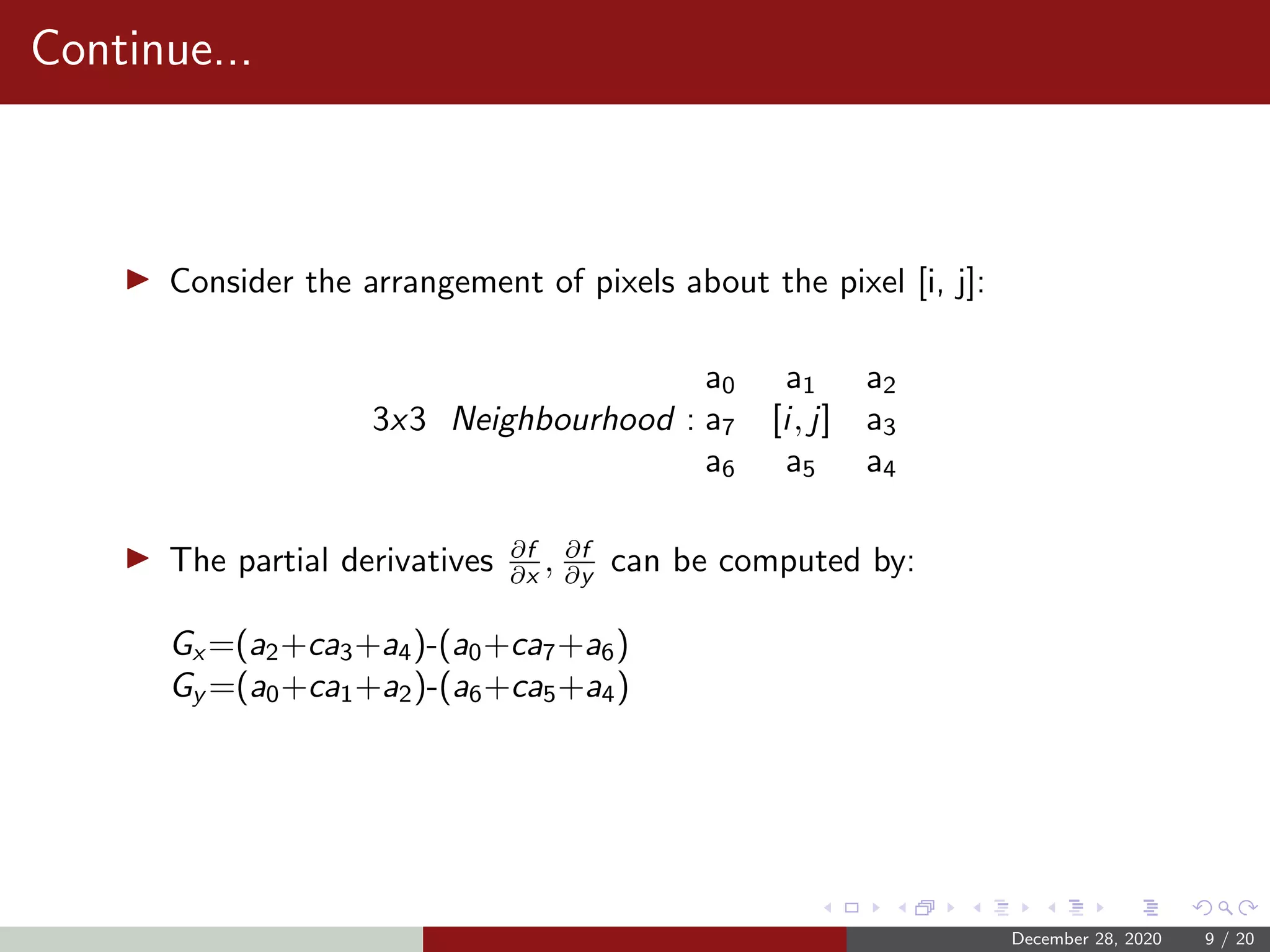

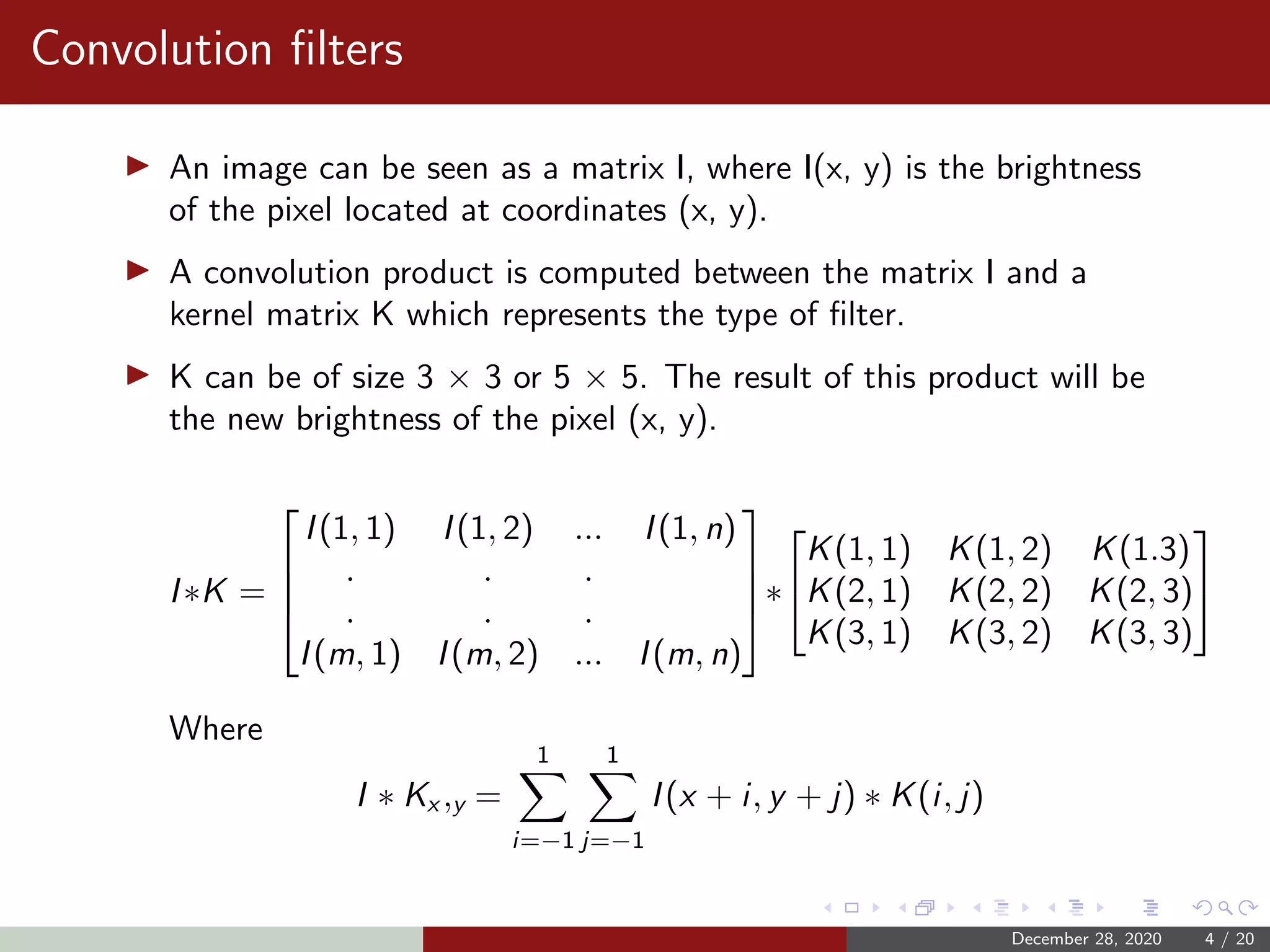

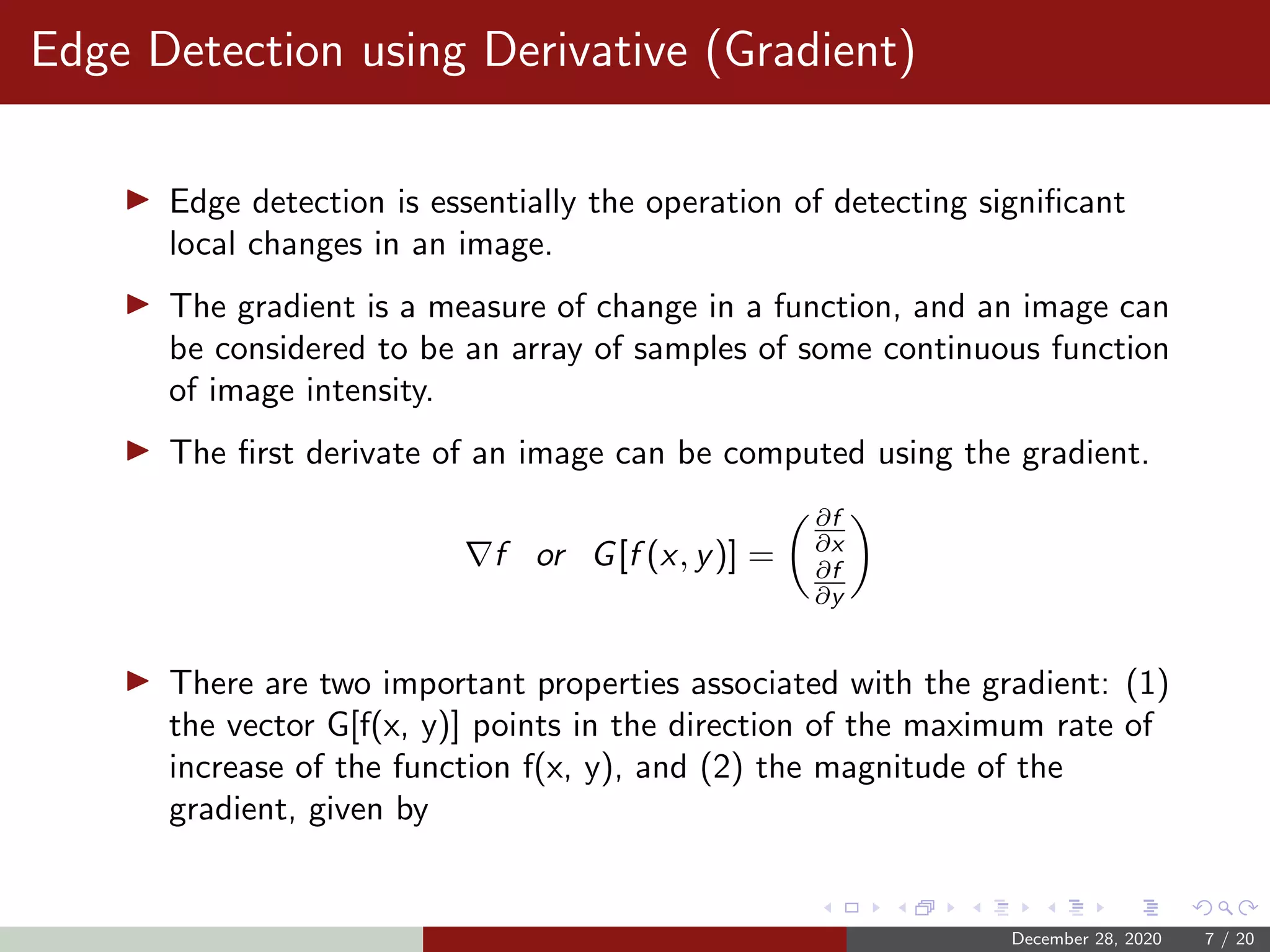

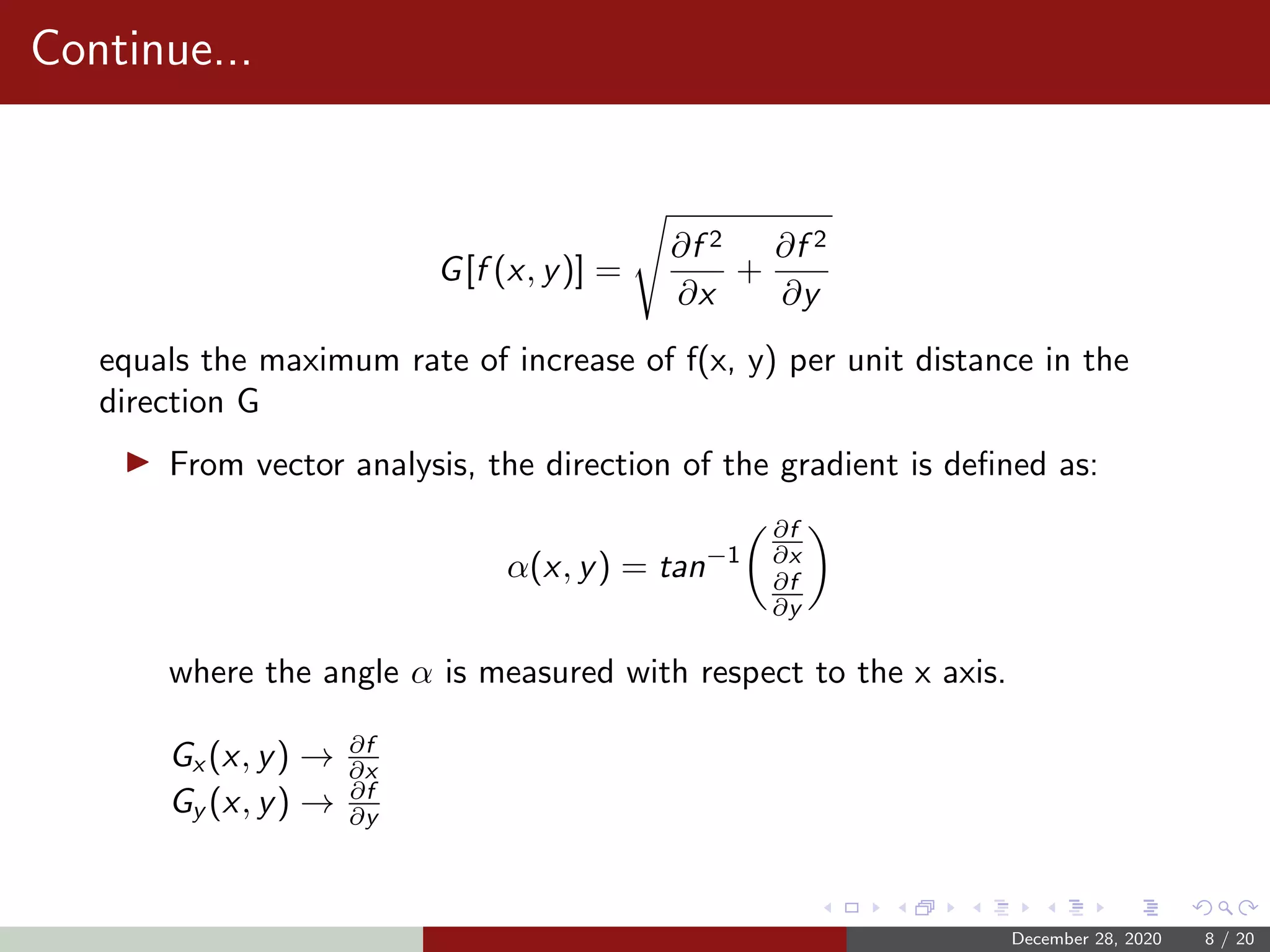

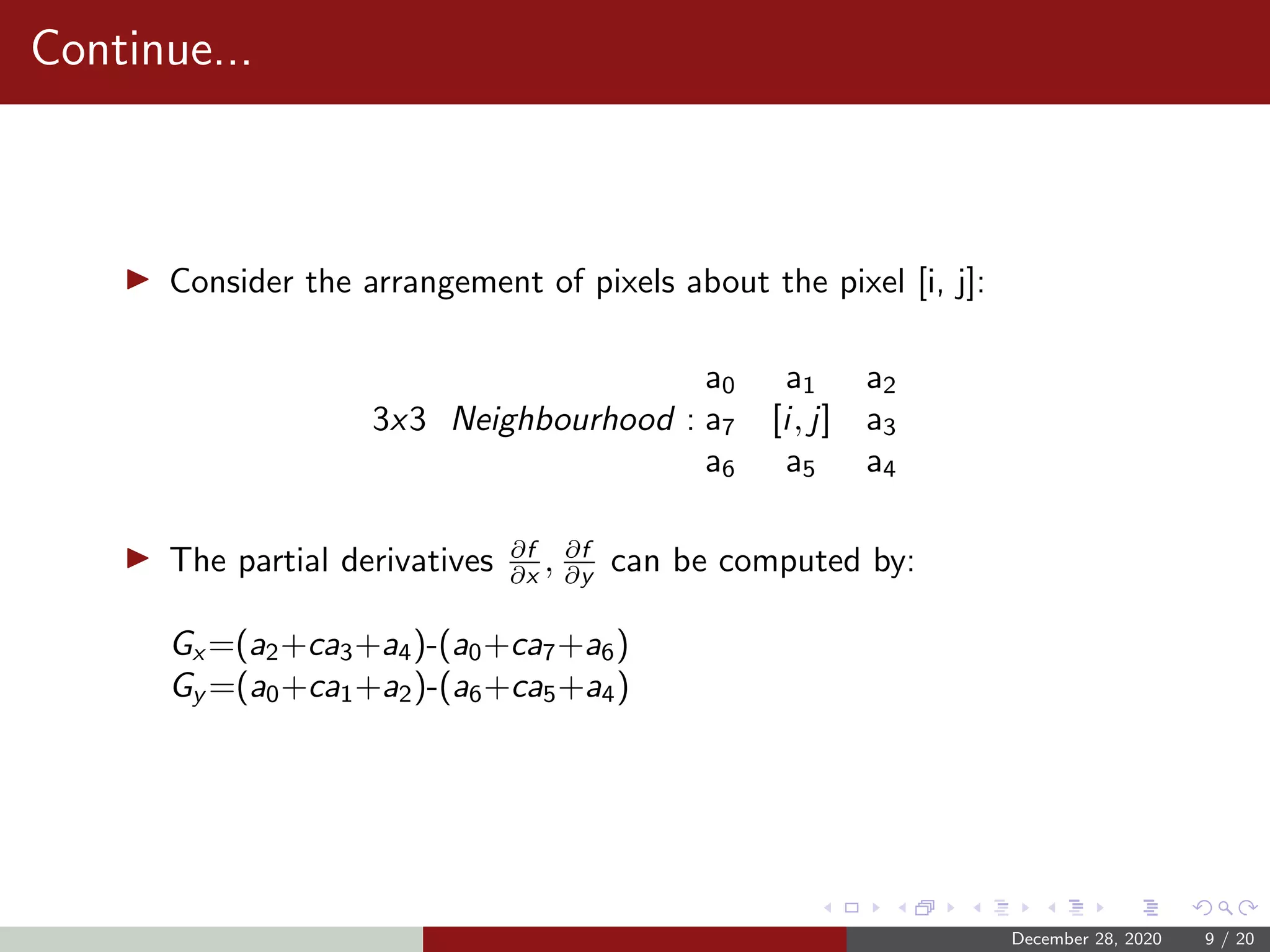

This presentation covers the mathematics of the kernels used in convolution, particularly in image processing and machine learning. Topics include convolution filters, the calculation of Gaussian convolution kernels, and edge detection with the Sobel and Prewitt operators. Throughout, it emphasizes that the design of the kernel determines which features are extracted from an image.
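
The topics named above can be illustrated with a minimal sketch in plain Python. It is not taken from the slides: the function names `gaussian_kernel` and `convolve2d` are hypothetical, the Sobel and Prewitt matrices are the commonly cited 3x3 horizontal-gradient forms (the slides may use a different sign or orientation), and the convolution assumes an odd kernel size with no padding.

```python
import math

# Commonly cited 3x3 horizontal-gradient kernels (assumed forms, not from the slides).
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
PREWITT_X = [[-1, 0, 1],
             [-1, 0, 1],
             [-1, 0, 1]]


def gaussian_kernel(size, sigma):
    """Sample exp(-(x^2 + y^2) / (2 * sigma^2)) on a size x size grid
    centred on zero, then normalise so the weights sum to 1.
    Assumes size is odd."""
    half = size // 2
    raw = [[math.exp(-(x * x + y * y) / (2.0 * sigma * sigma))
            for x in range(-half, half + 1)]
           for y in range(-half, half + 1)]
    total = sum(sum(row) for row in raw)
    return [[v / total for v in row] for row in raw]


def convolve2d(image, kernel):
    """'Valid' 2D convolution: the kernel is flipped in both axes and
    slid over the image without padding, so the output is smaller
    than the input."""
    kh, kw = len(kernel), len(kernel[0])
    ih, iw = len(image), len(image[0])
    flipped = [row[::-1] for row in kernel[::-1]]
    out = []
    for i in range(ih - kh + 1):
        out_row = []
        for j in range(iw - kw + 1):
            acc = 0
            for a in range(kh):
                for b in range(kw):
                    acc += image[i + a][j + b] * flipped[a][b]
            out_row.append(acc)
        out.append(out_row)
    return out


# A tiny test image with a vertical step edge between columns 2 and 3.
step_image = [[0, 0, 0, 10, 10, 10] for _ in range(5)]
sobel_response = convolve2d(step_image, SOBEL_X)
prewitt_response = convolve2d(step_image, PREWITT_X)
blur_kernel = gaussian_kernel(3, 1.0)
```

On the step image, both edge kernels respond only at window positions that straddle the step and give zero over the flat regions. Sobel weights the centre row twice as heavily as Prewitt, so its response is larger in magnitude. The Gaussian kernel, by contrast, has all-positive weights that sum to 1, which is why it smooths rather than differentiates.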