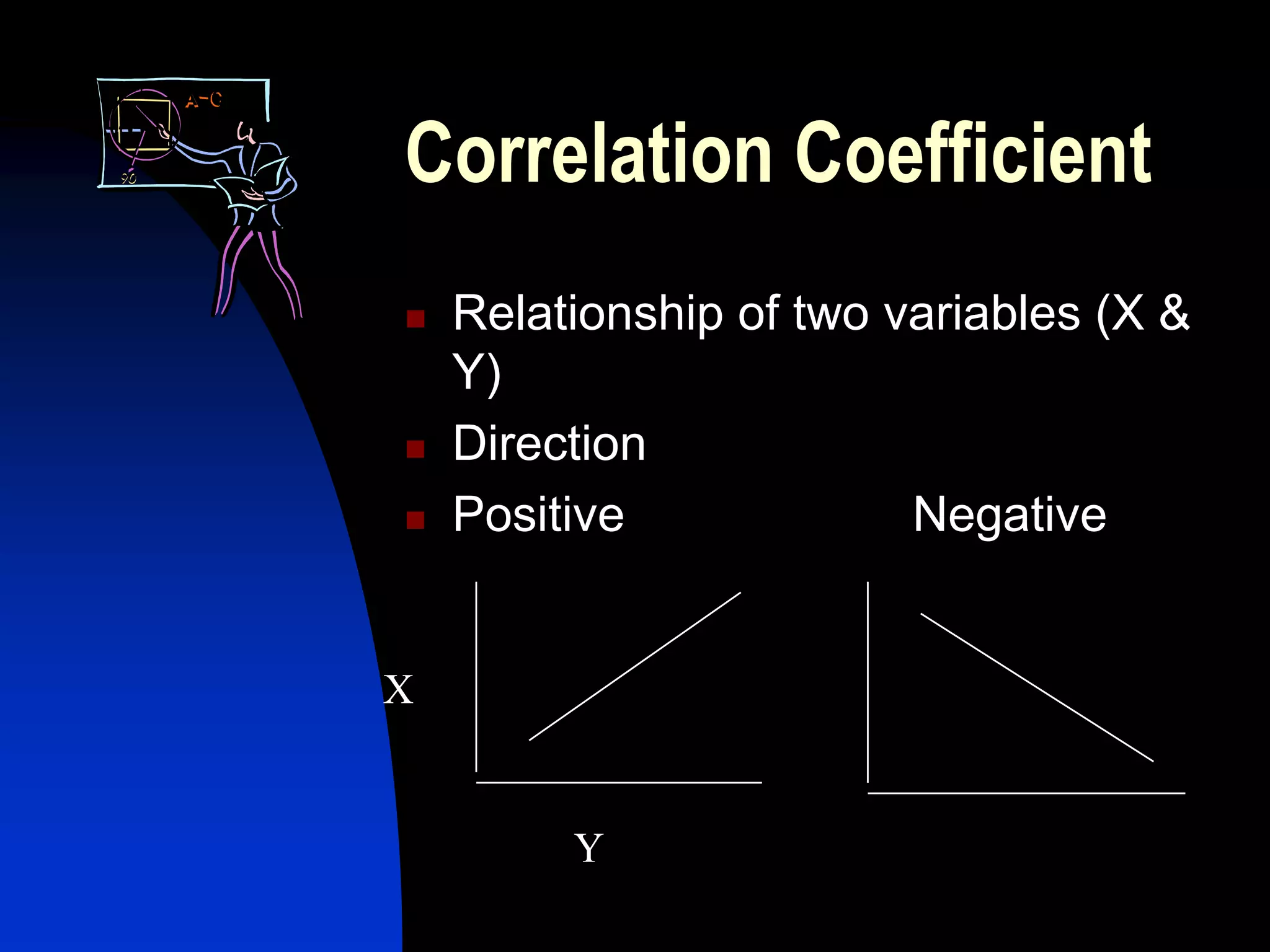

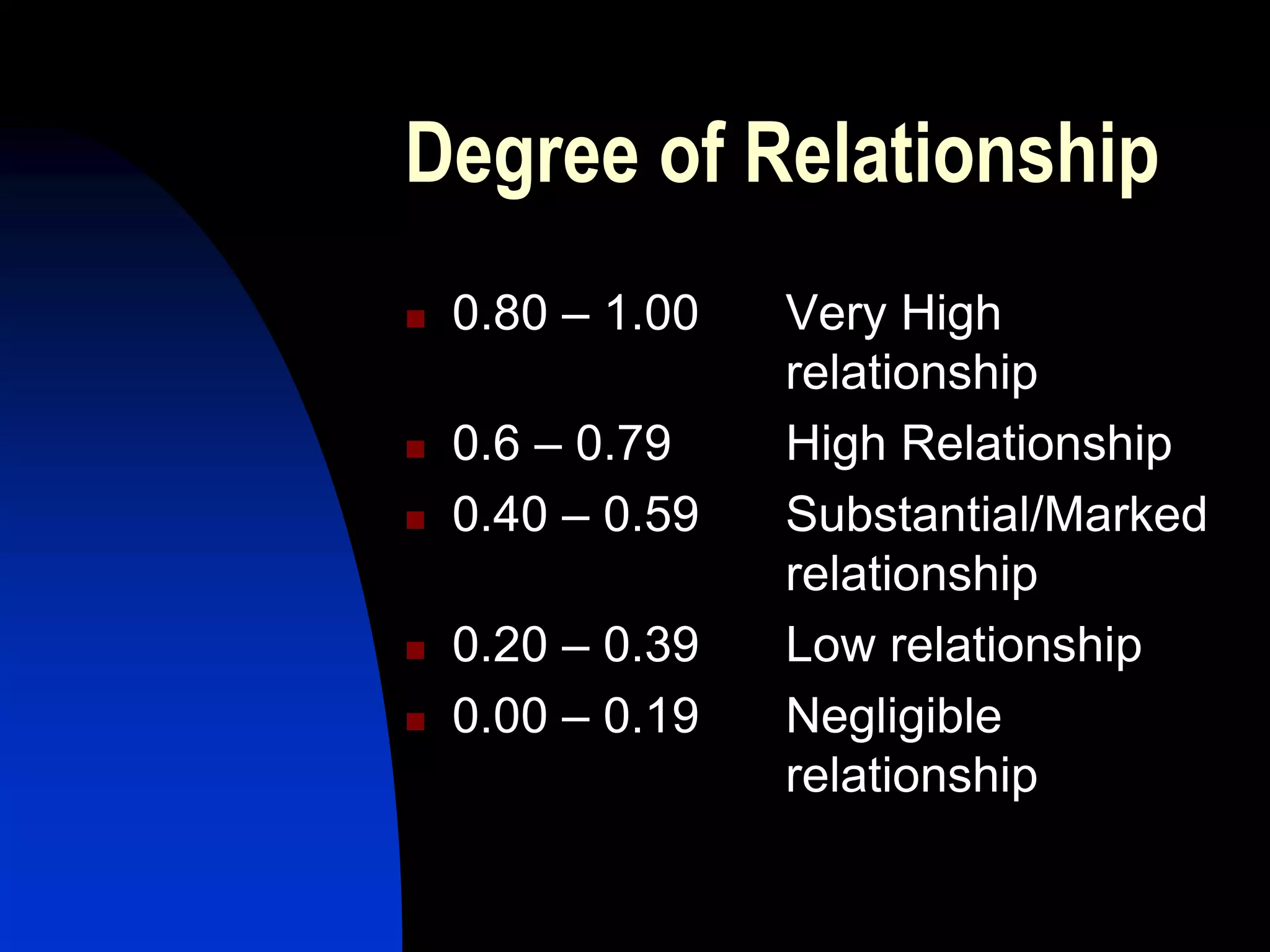

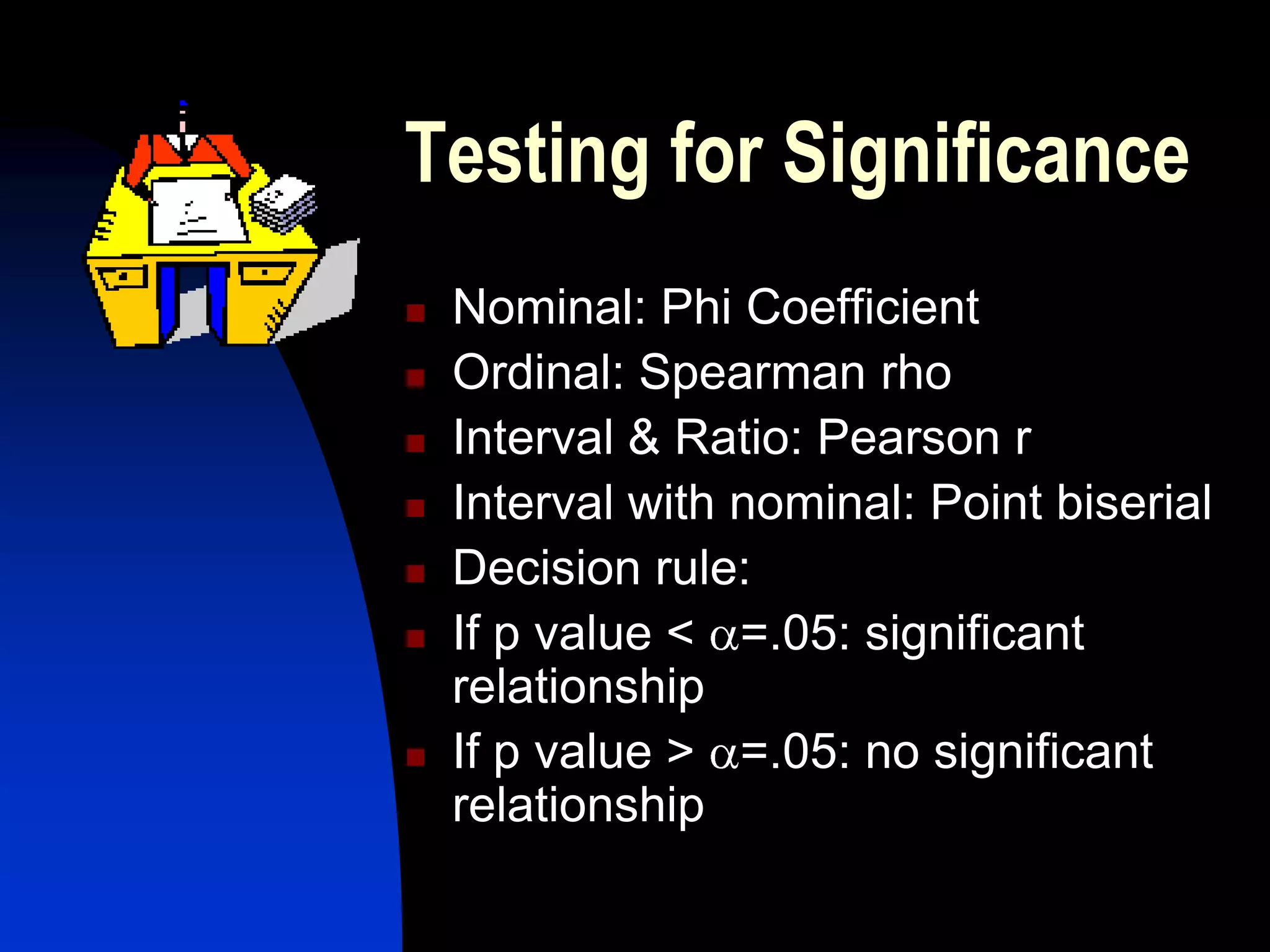

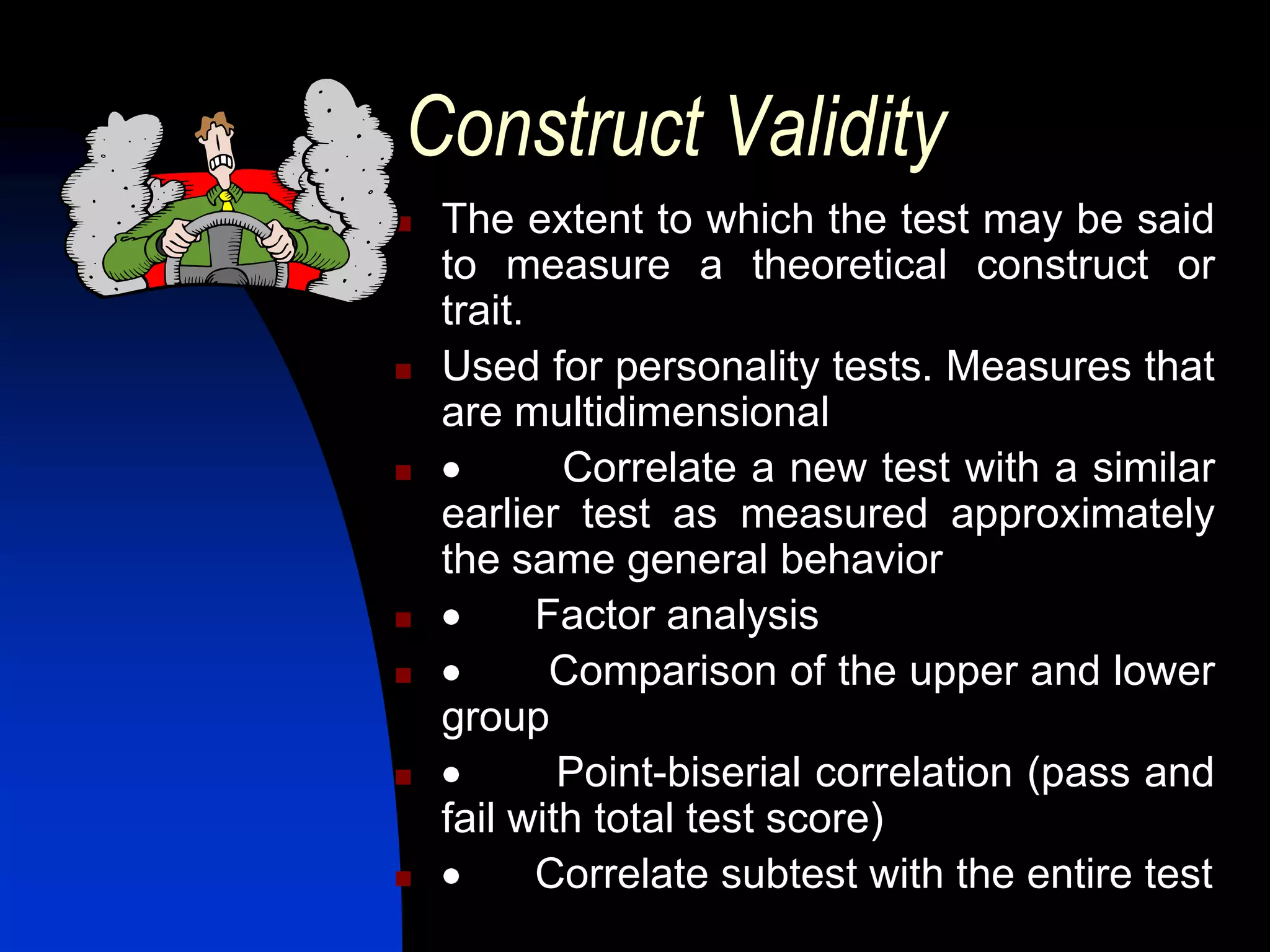

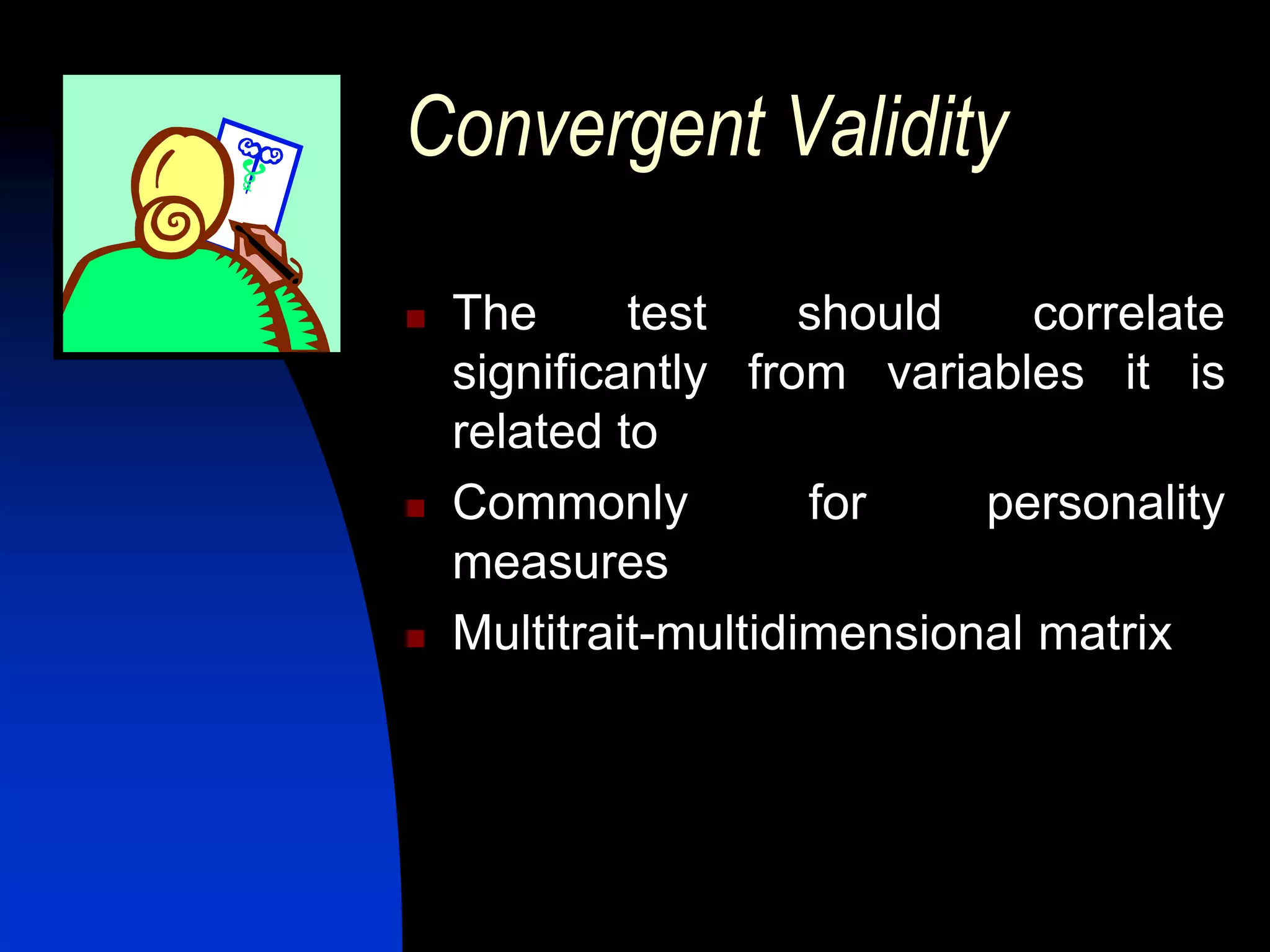

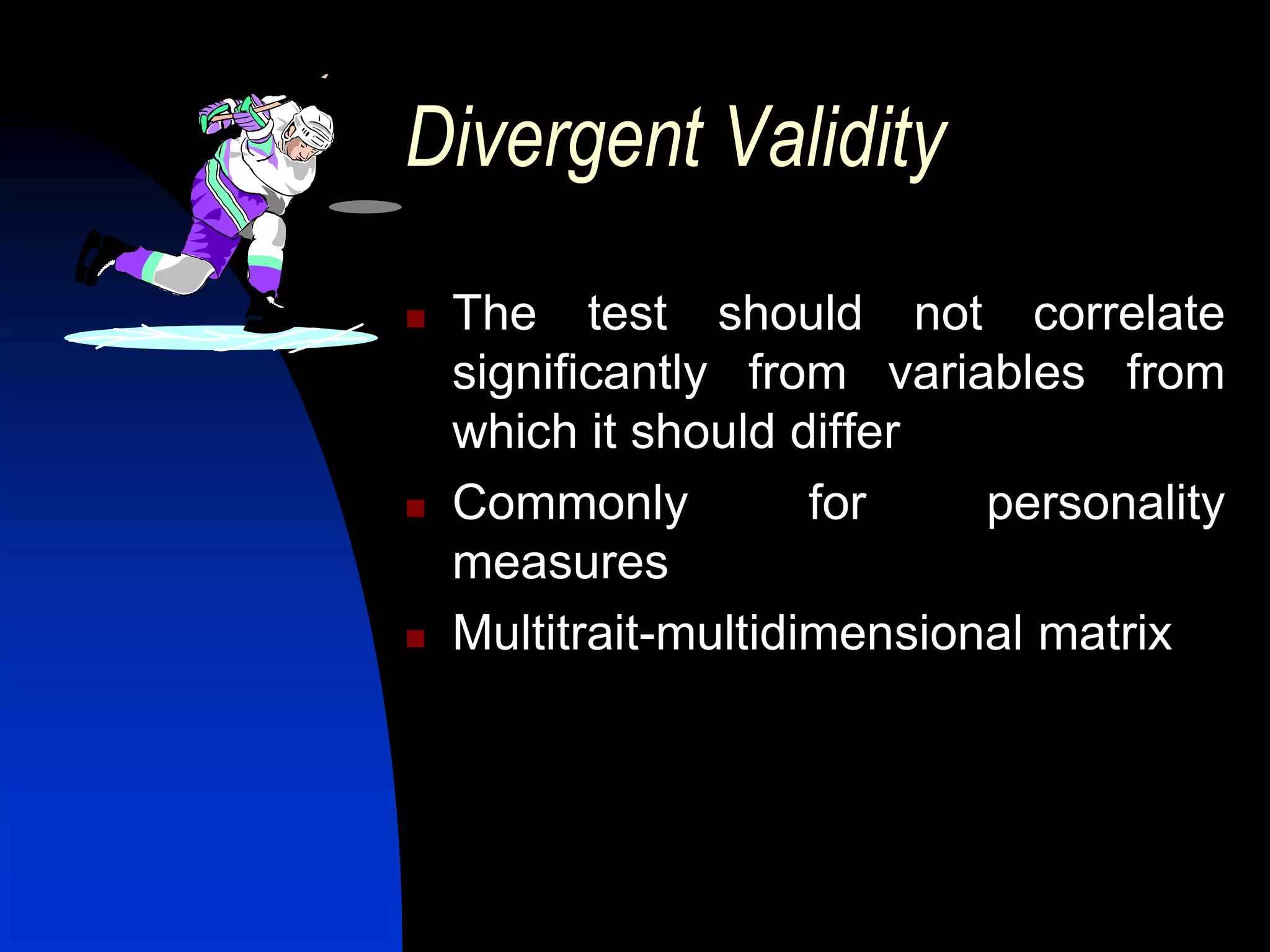

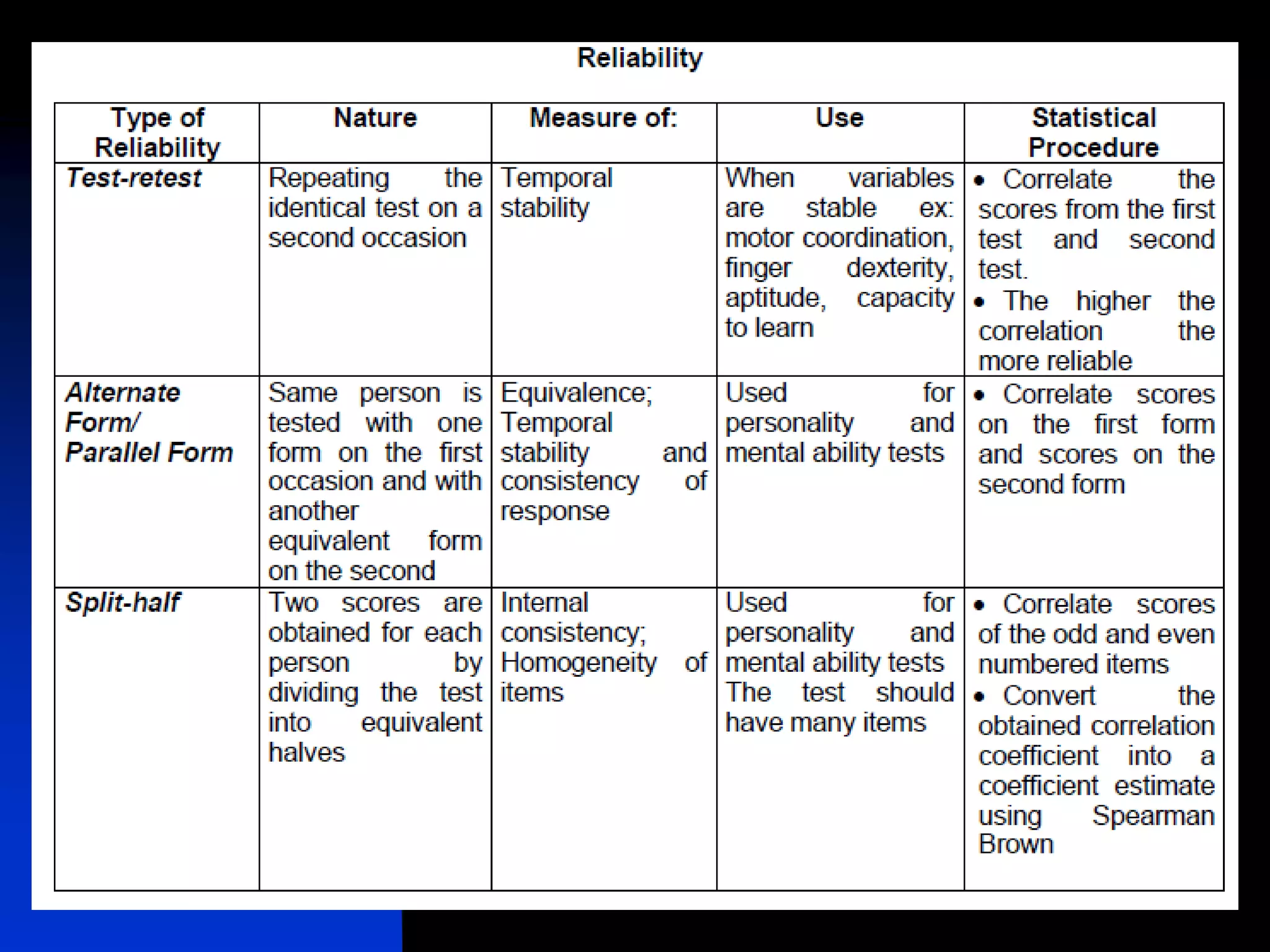

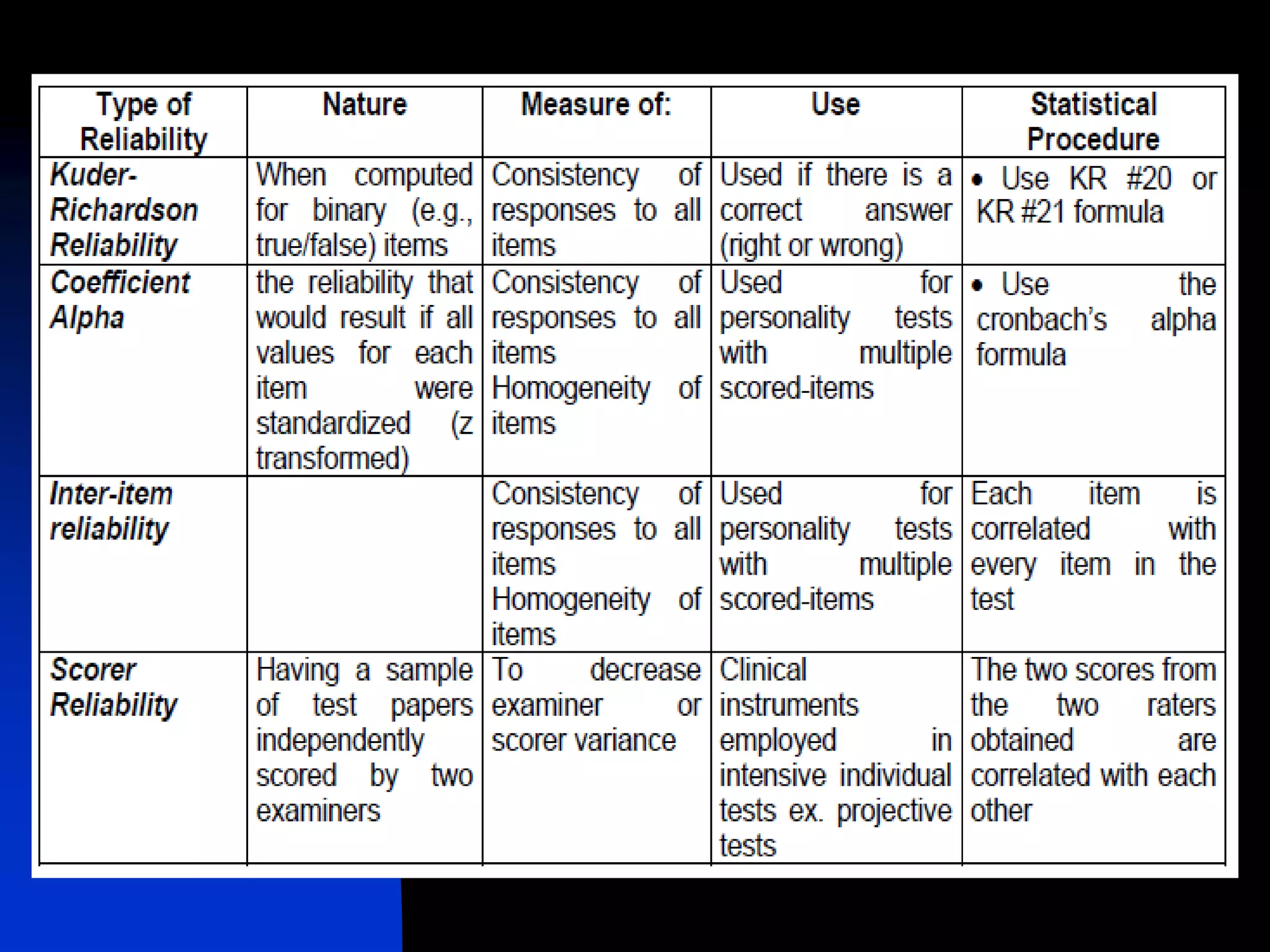

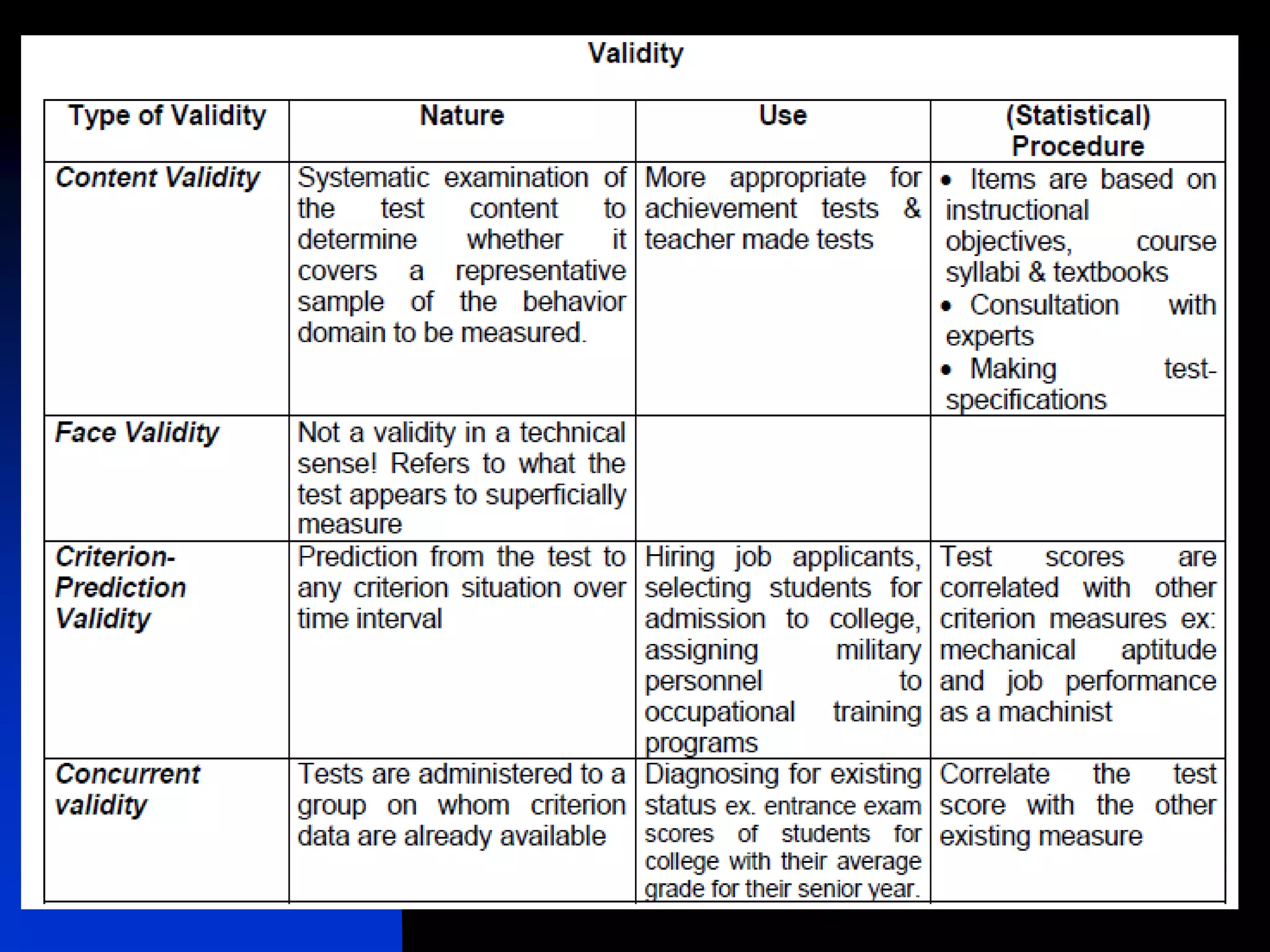

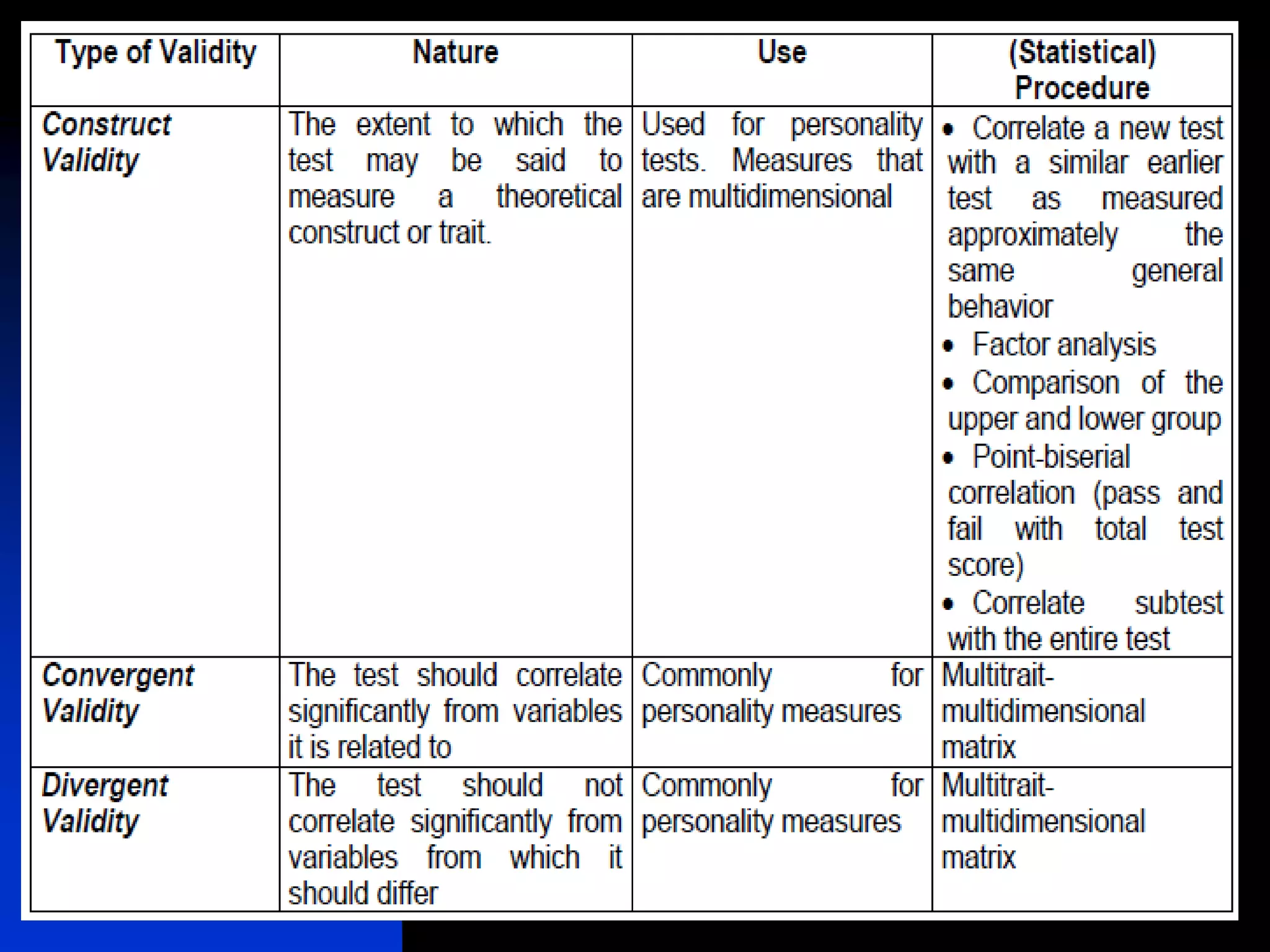

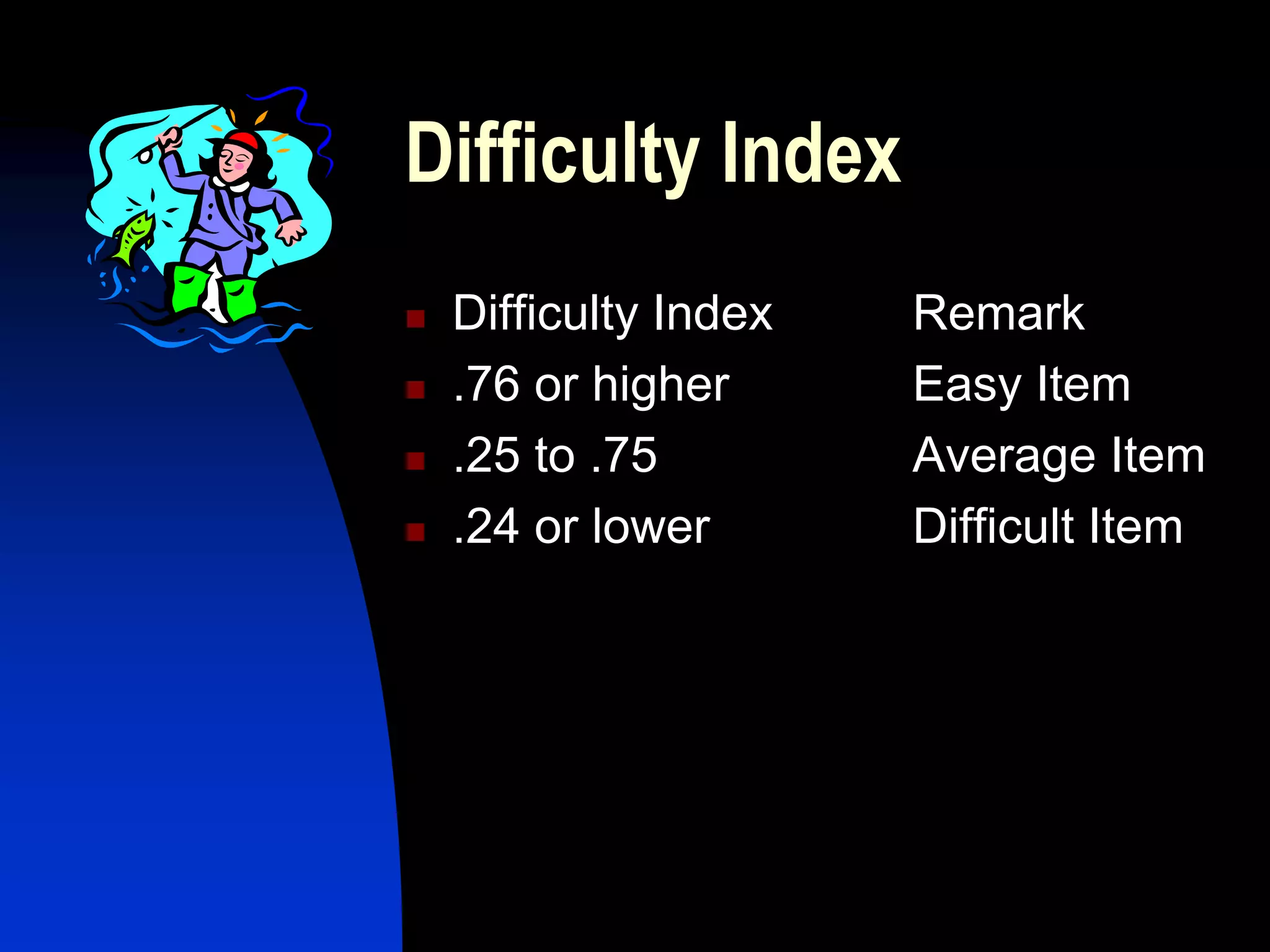

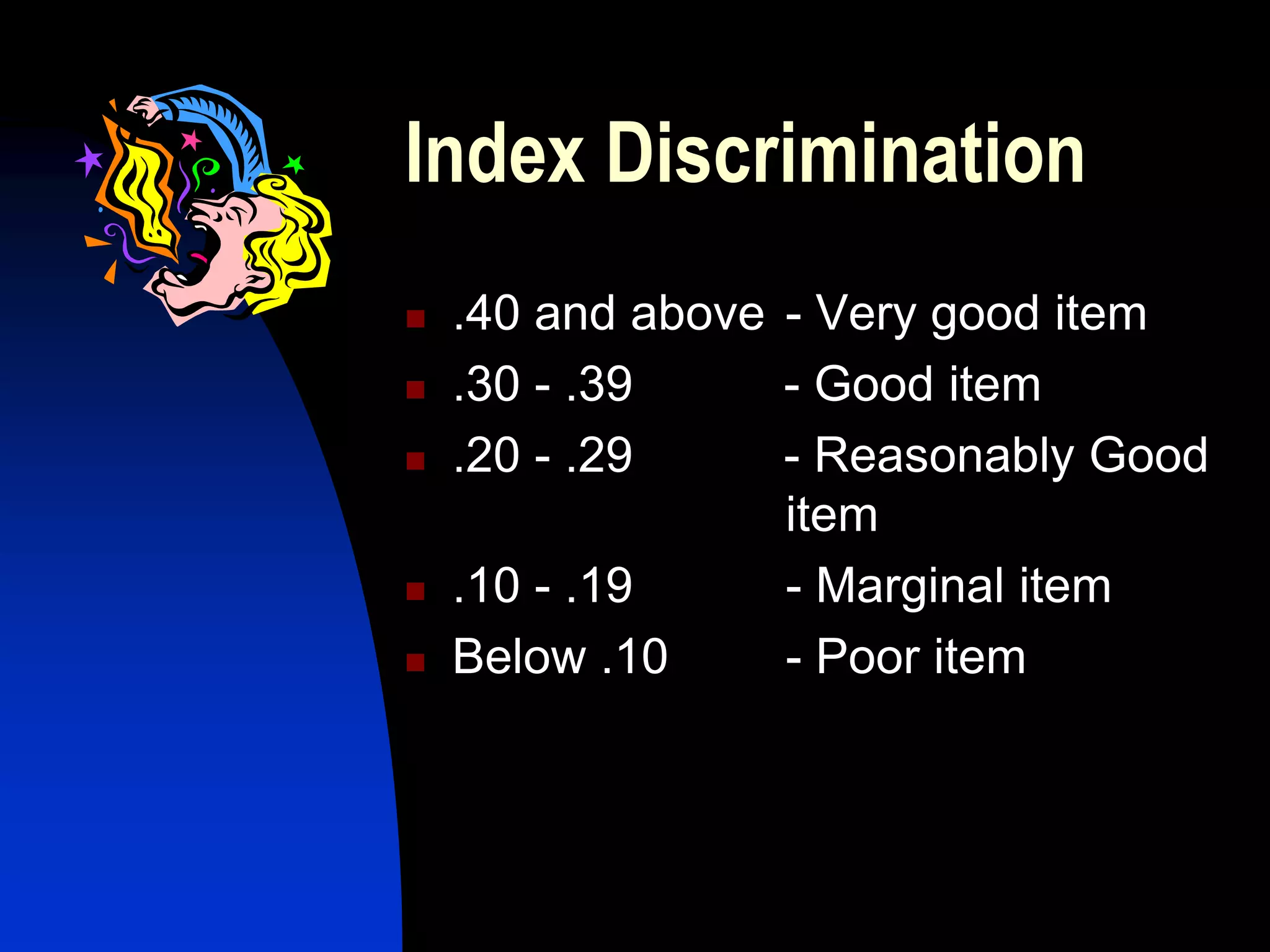

The document discusses methods for establishing the validity and reliability of assessment tools. Topics include correlation coefficients, levels of measurement, reliability measures such as test-retest reliability and internal consistency, and validity measures such as content, criterion, and construct validity. It also covers item analysis, including how to calculate item difficulty and discrimination indices. The overall aim is to evaluate how well an assessment tool measures what it is intended to measure and whether it produces consistent results.
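As a rough illustration of the item analysis and internal consistency measures mentioned above, the following is a minimal sketch. It is not taken from the document. It assumes dichotomous (0/1) item scores, uses the common upper/lower 27% group convention for the discrimination index, and uses Cronbach's alpha as one possible internal consistency measure. The function names and the `responses` data are hypothetical.

```python
from statistics import pvariance


def item_difficulty(item_scores):
    """Proportion of examinees who answered the item correctly (0/1 scores)."""
    return sum(item_scores) / len(item_scores)


def discrimination_index(item_scores, total_scores, fraction=0.27):
    """Proportion correct in the top-scoring group minus the bottom-scoring group.

    The 27% group size is an assumed convention, not something the source states.
    """
    n = len(total_scores)
    k = max(1, round(n * fraction))  # size of each comparison group
    order = sorted(range(n), key=lambda i: total_scores[i])  # ascending by total
    lower, upper = order[:k], order[-k:]
    p_upper = sum(item_scores[i] for i in upper) / k
    p_lower = sum(item_scores[i] for i in lower) / k
    return p_upper - p_lower


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of examinee rows, each a list of item scores."""
    k = len(responses[0])  # number of items
    item_vars = [pvariance([row[j] for row in responses]) for j in range(k)]
    total_var = pvariance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical data: 6 examinees (rows) by 4 items (columns), 1 = correct.
responses = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 1, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]
totals = [sum(row) for row in responses]
item2 = [row[1] for row in responses]  # scores on the second item

difficulty = item_difficulty(item2)                # 0.5: half answered correctly
discrimination = discrimination_index(item2, totals)  # 1.0: top group all correct, bottom none
alpha = cronbach_alpha(responses)                  # about 0.71 for this toy data
```

In this sketch, a difficulty near 0.5 and a high positive discrimination value suggest an item that separates stronger from weaker examinees, while a negative discrimination value would flag an item worth reviewing. These interpretations are general conventions and may differ from the thresholds used in the document.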