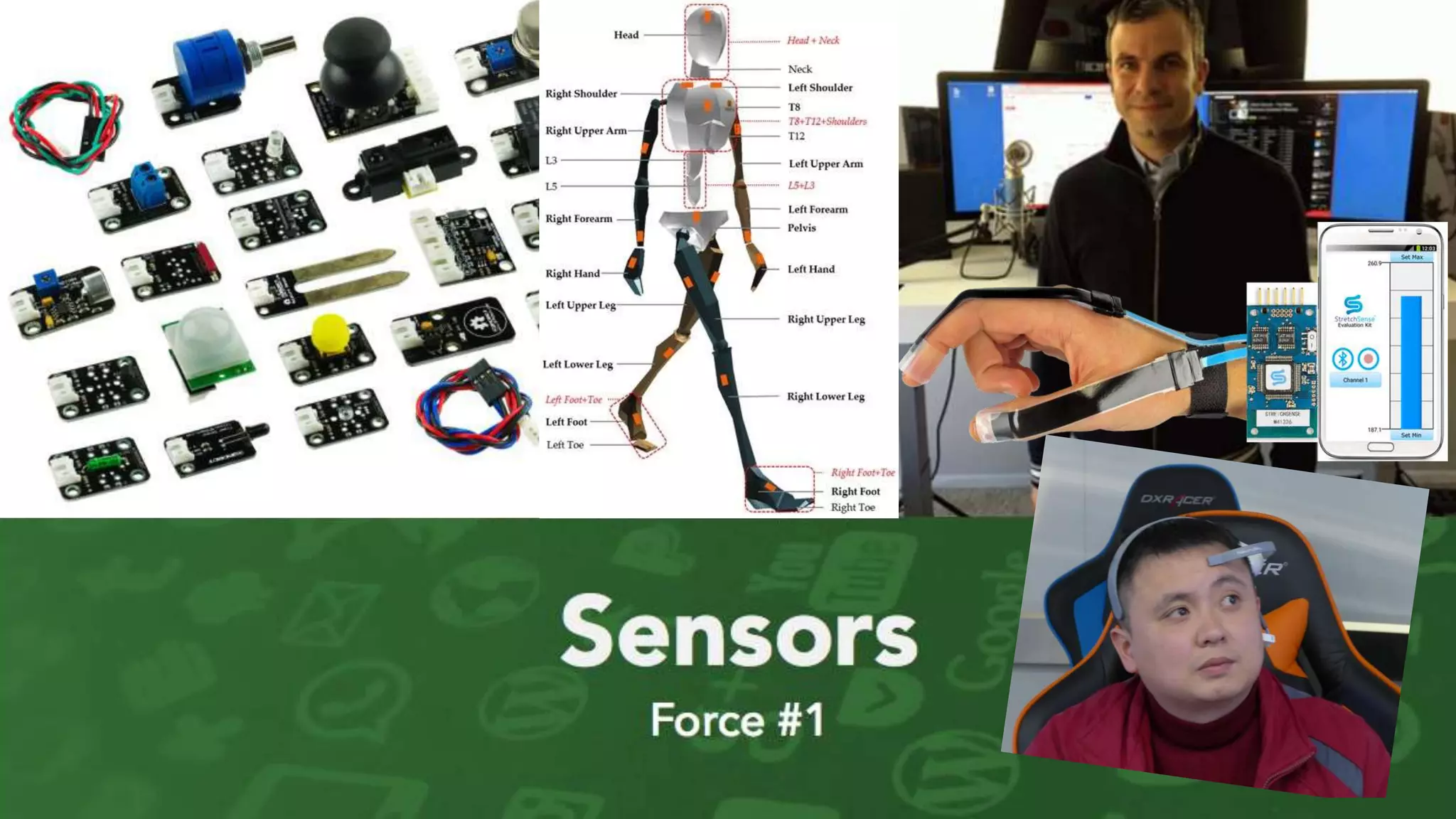

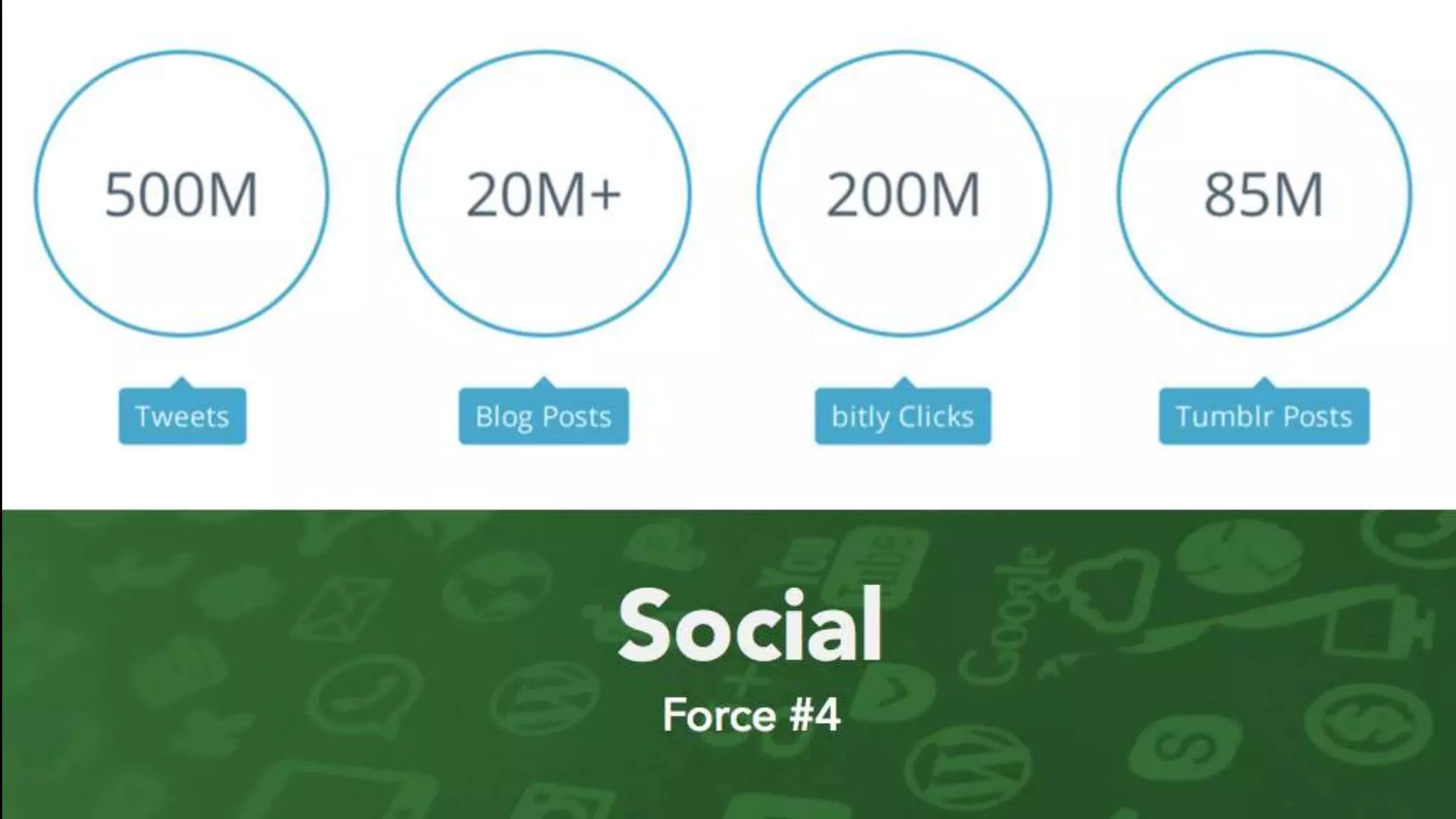

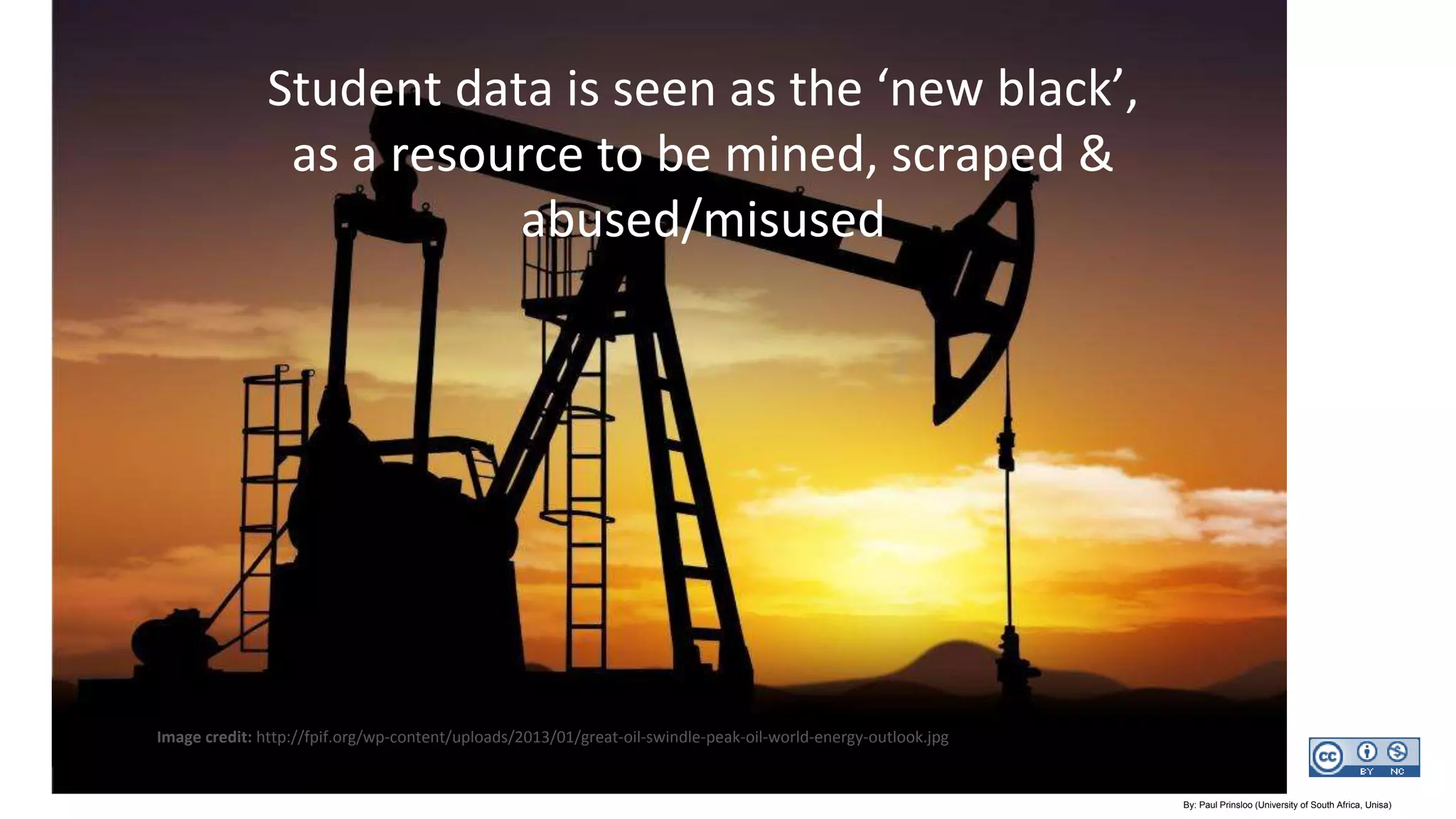

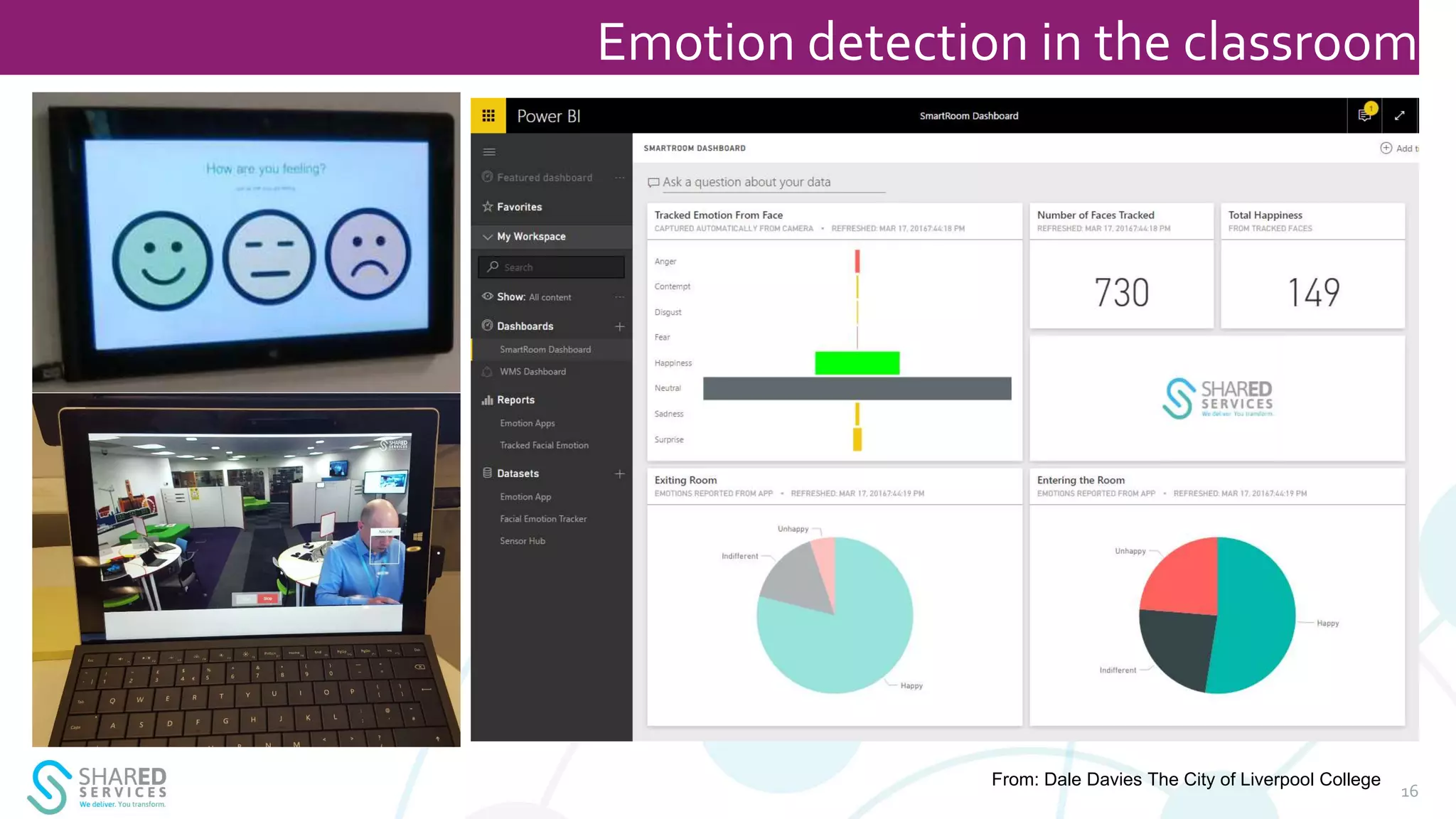

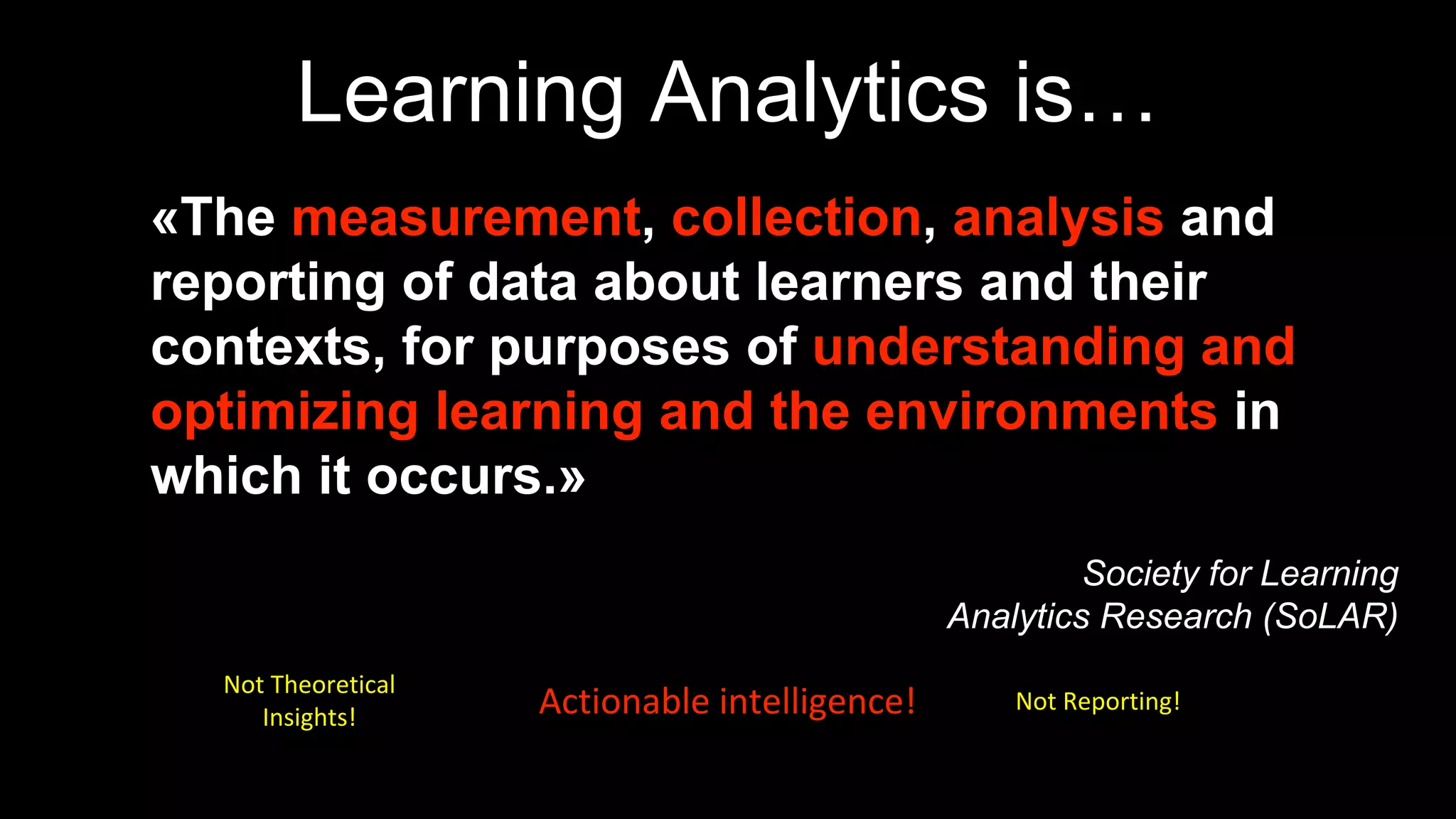

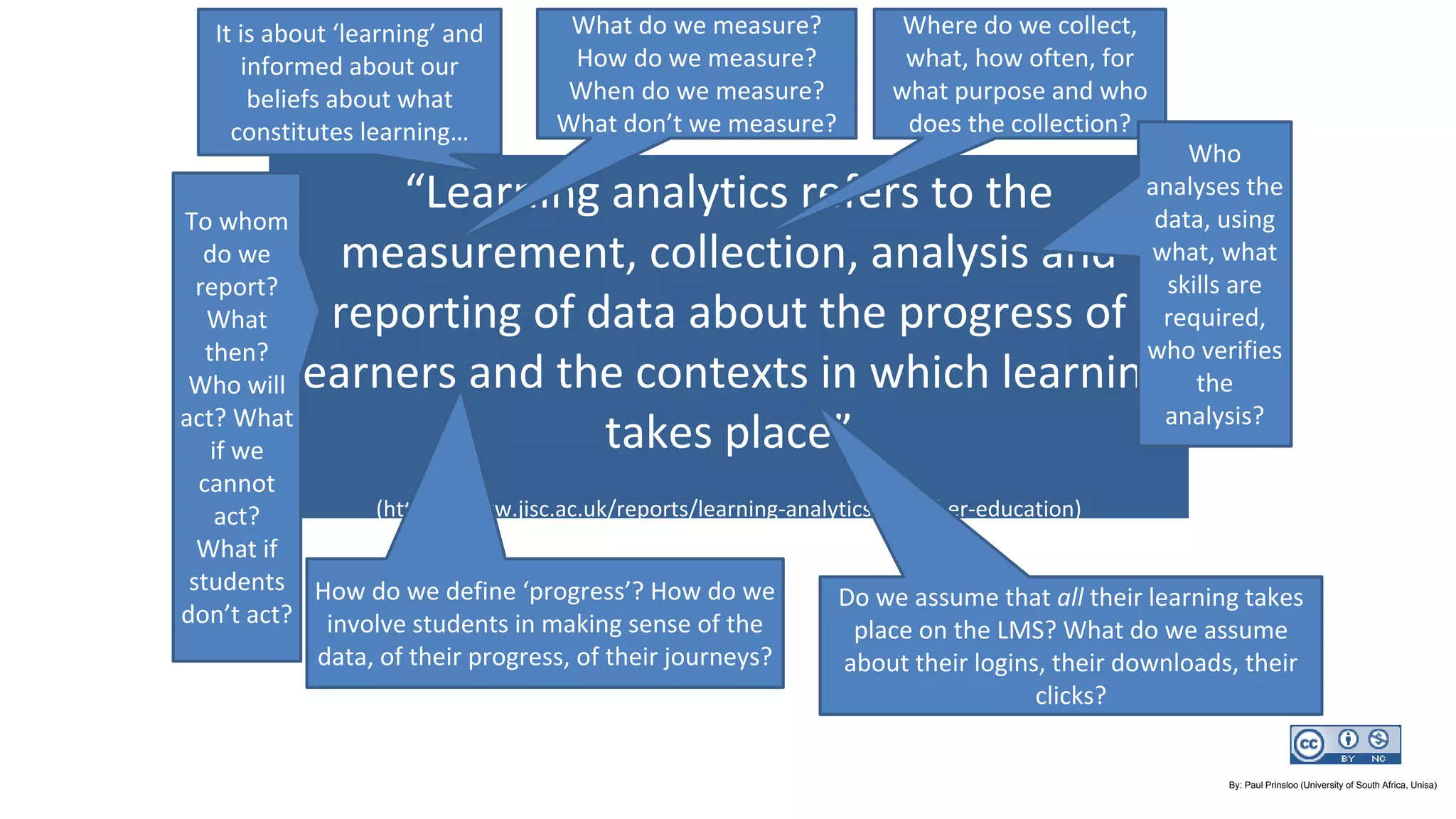

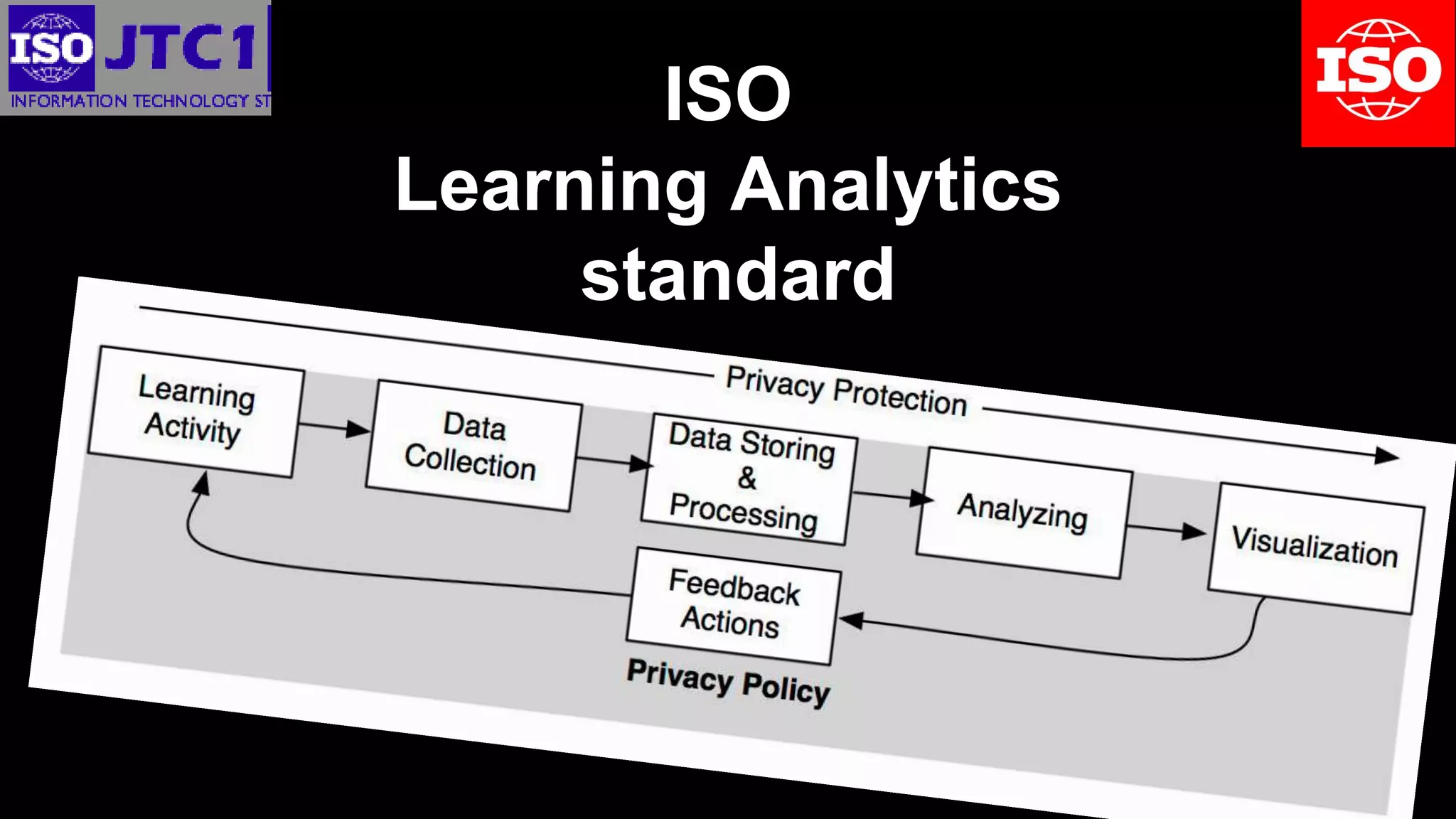

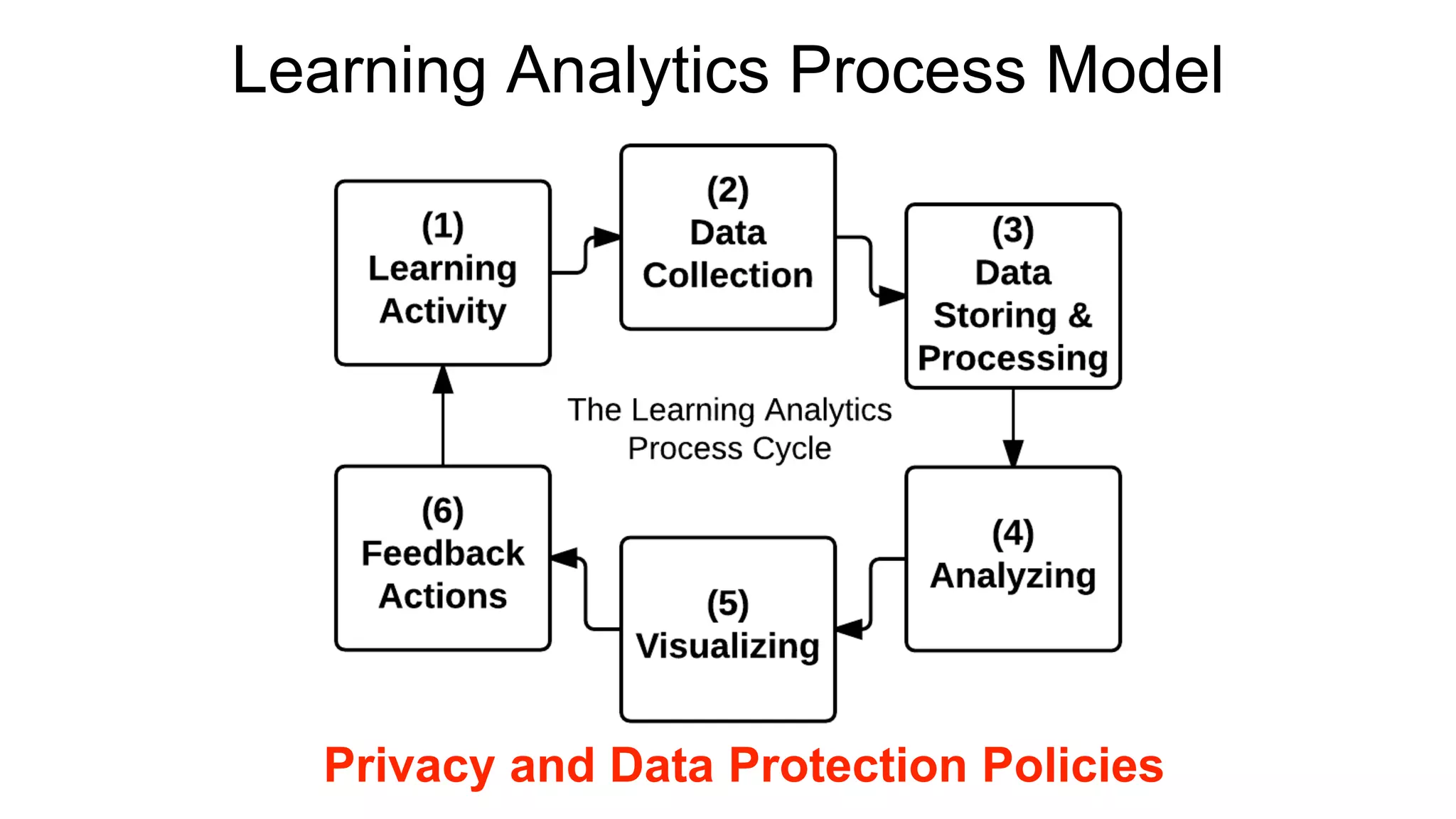

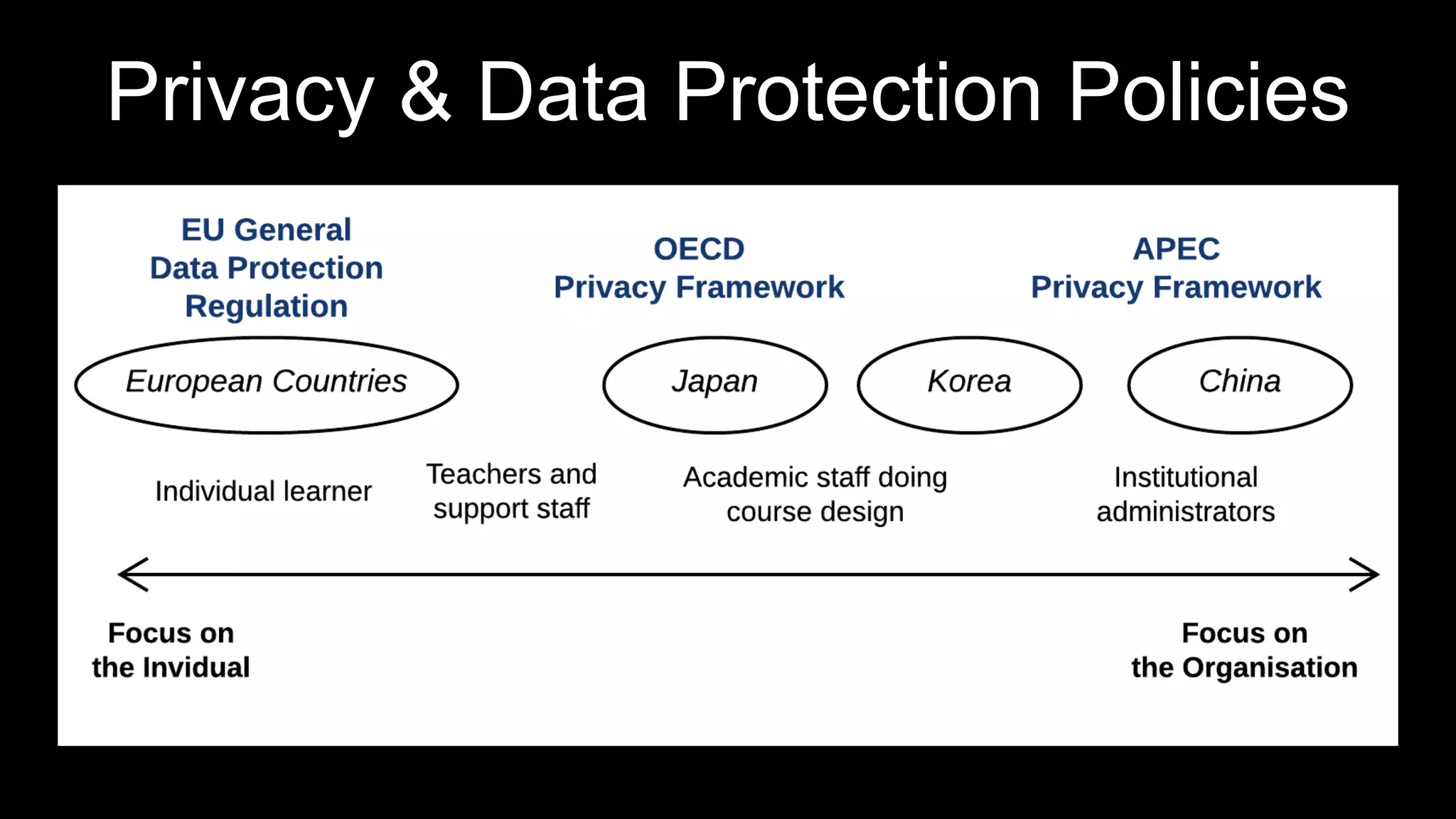

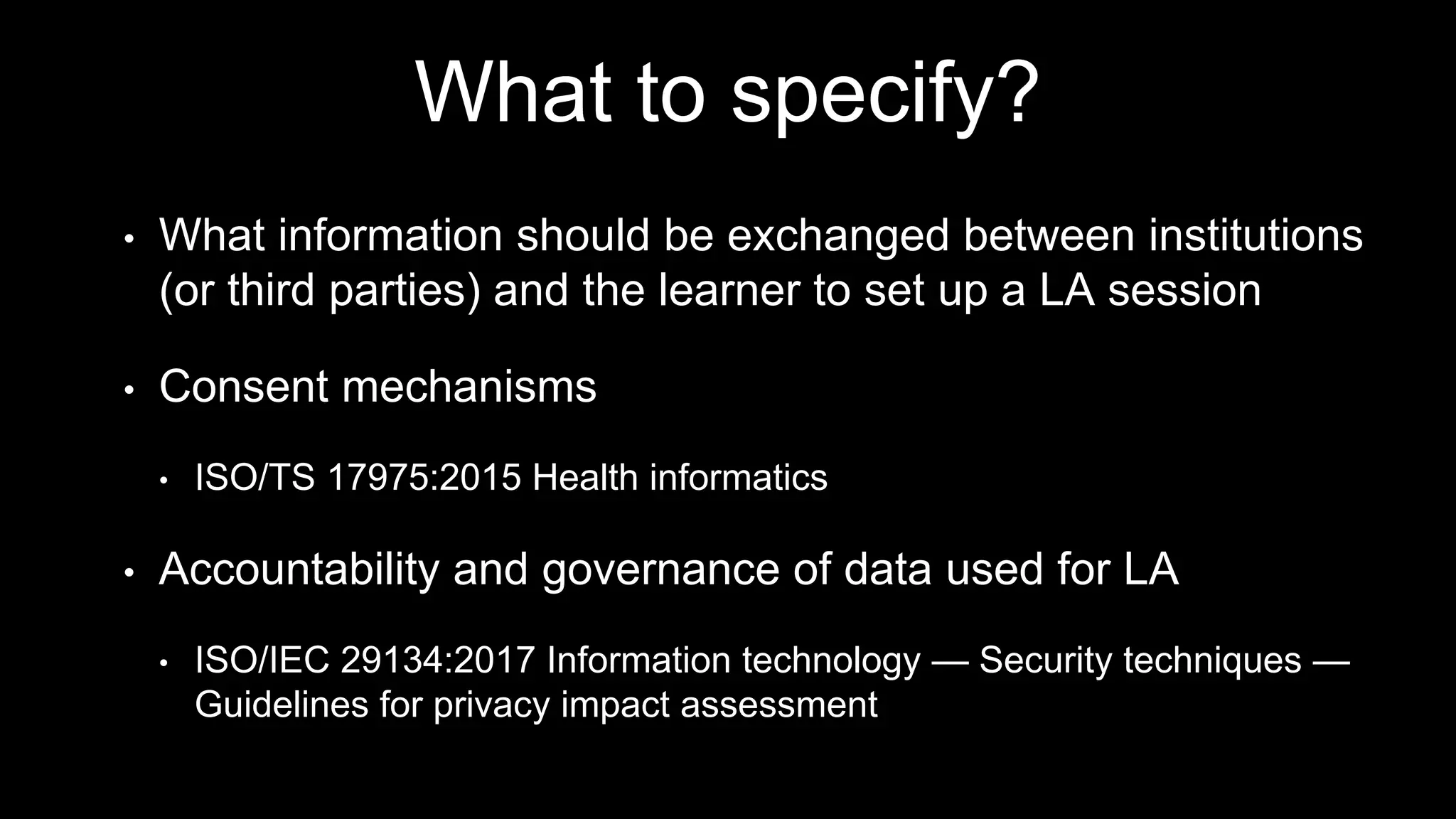

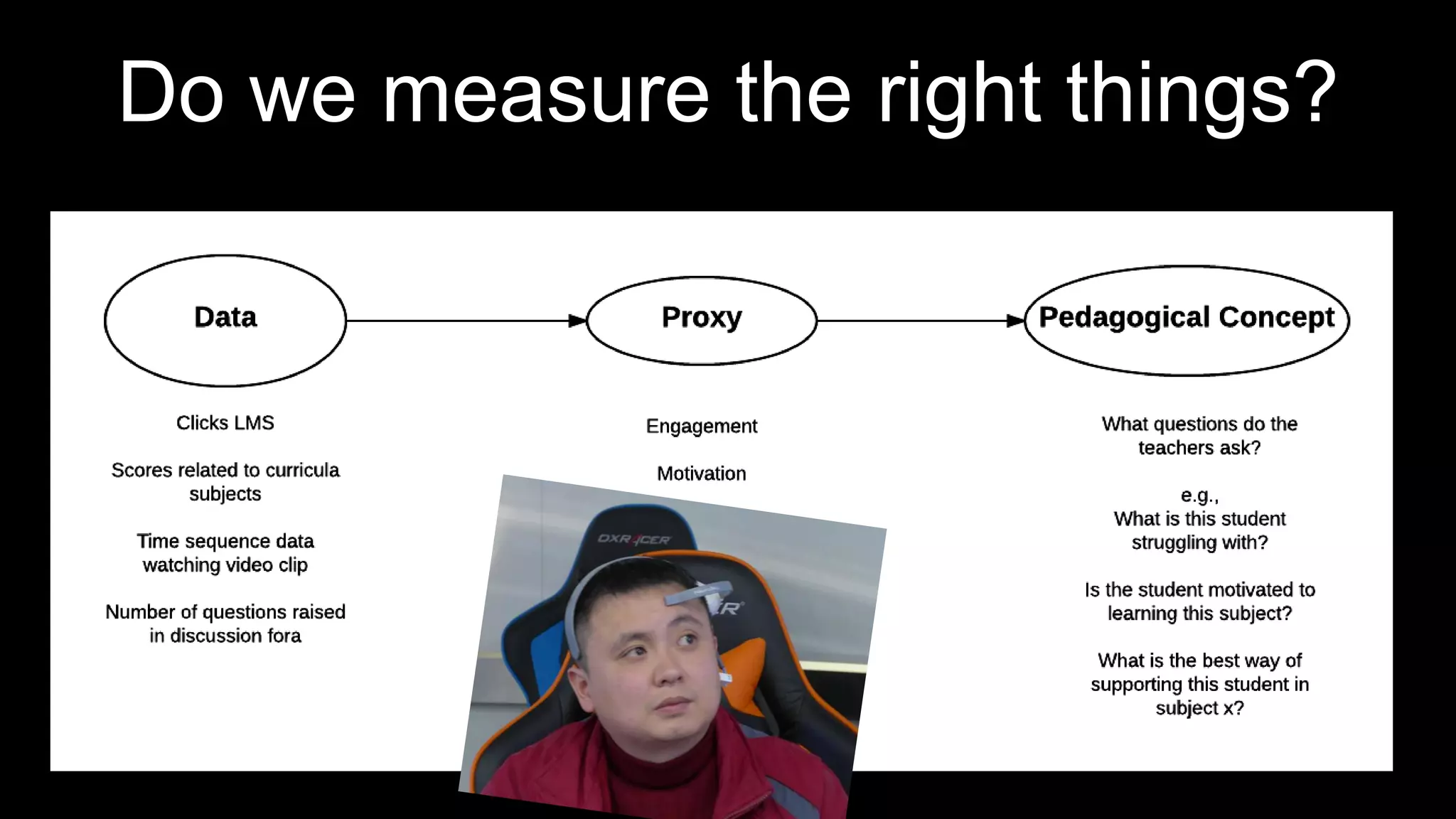

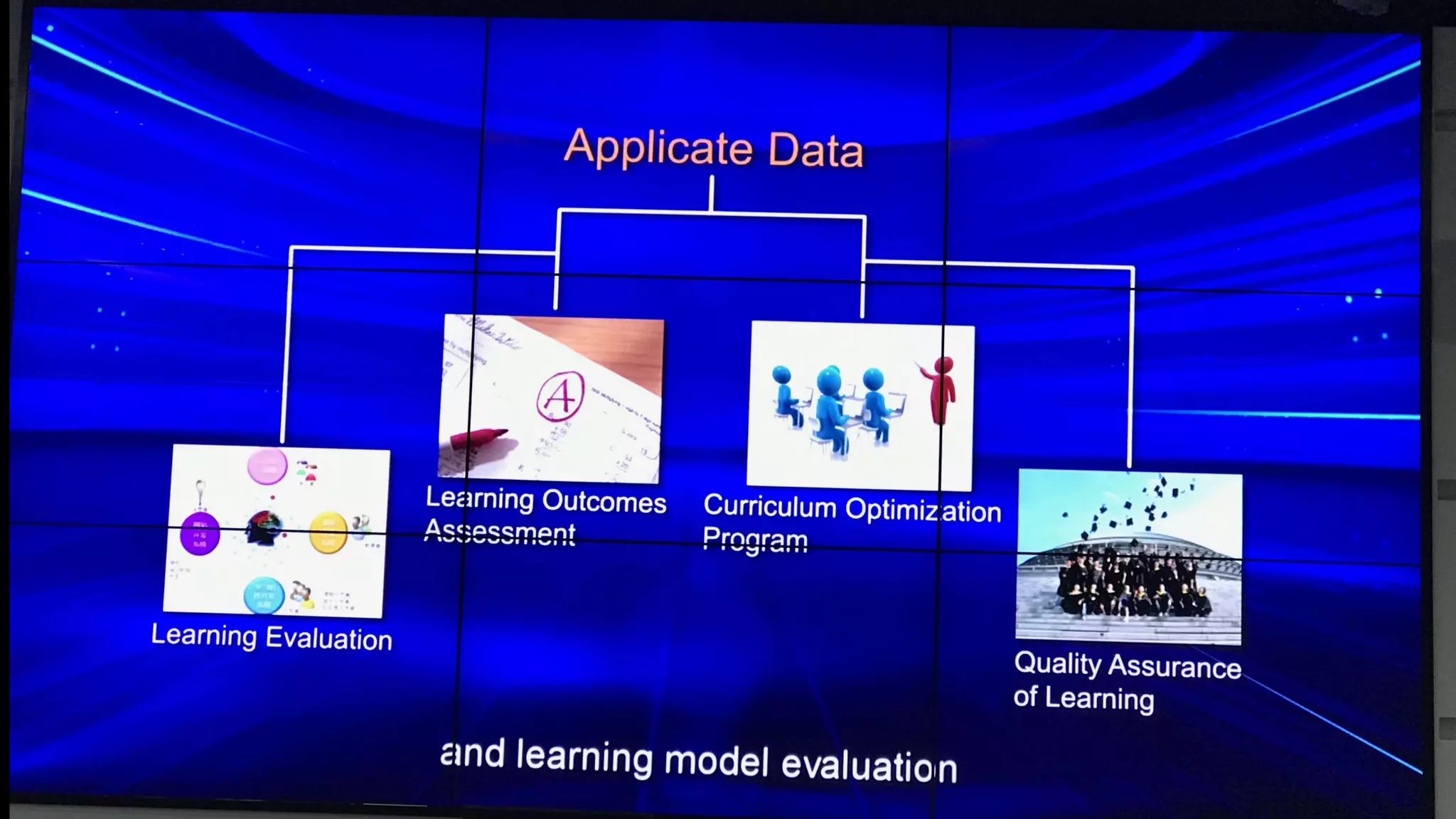

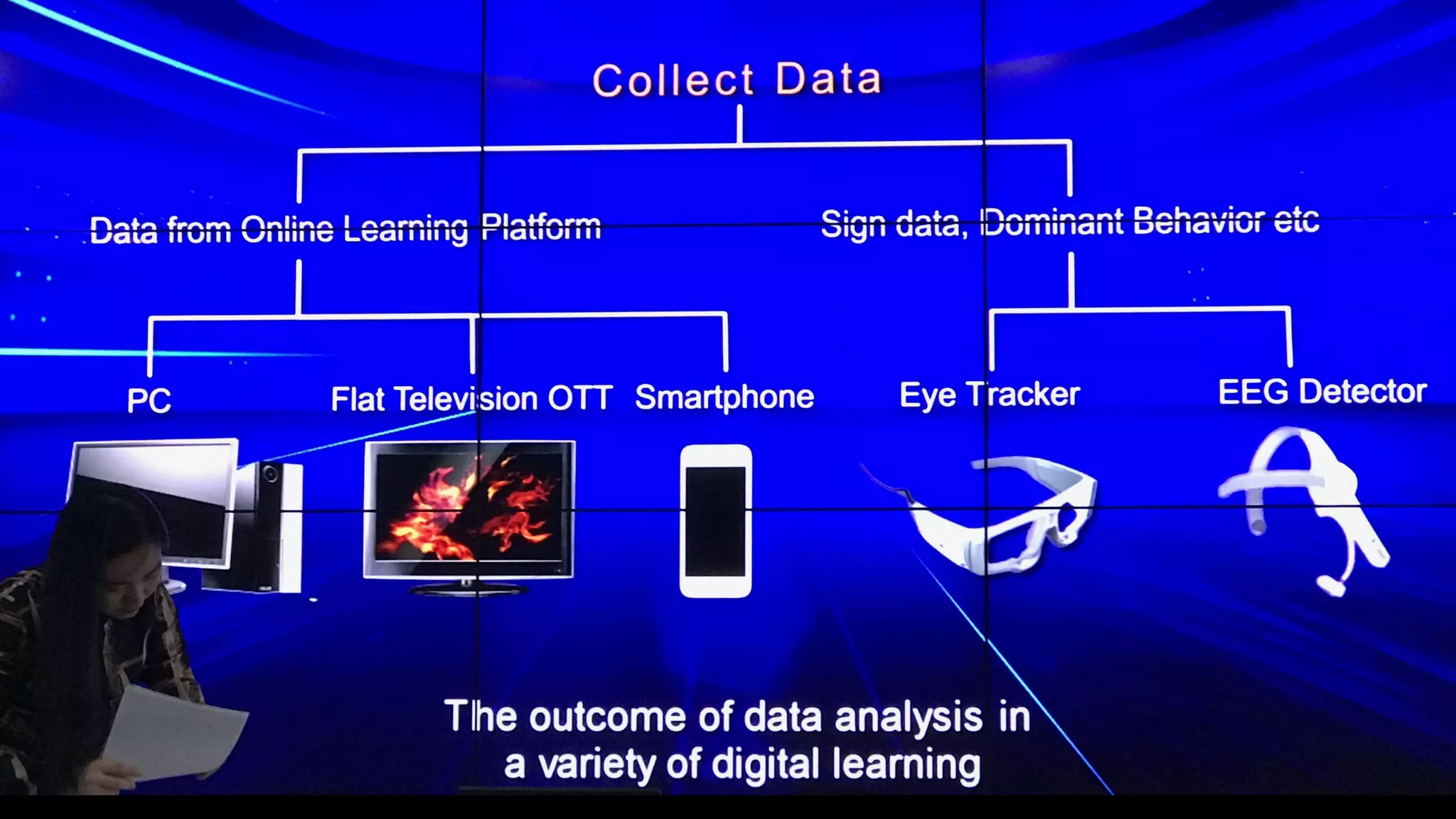

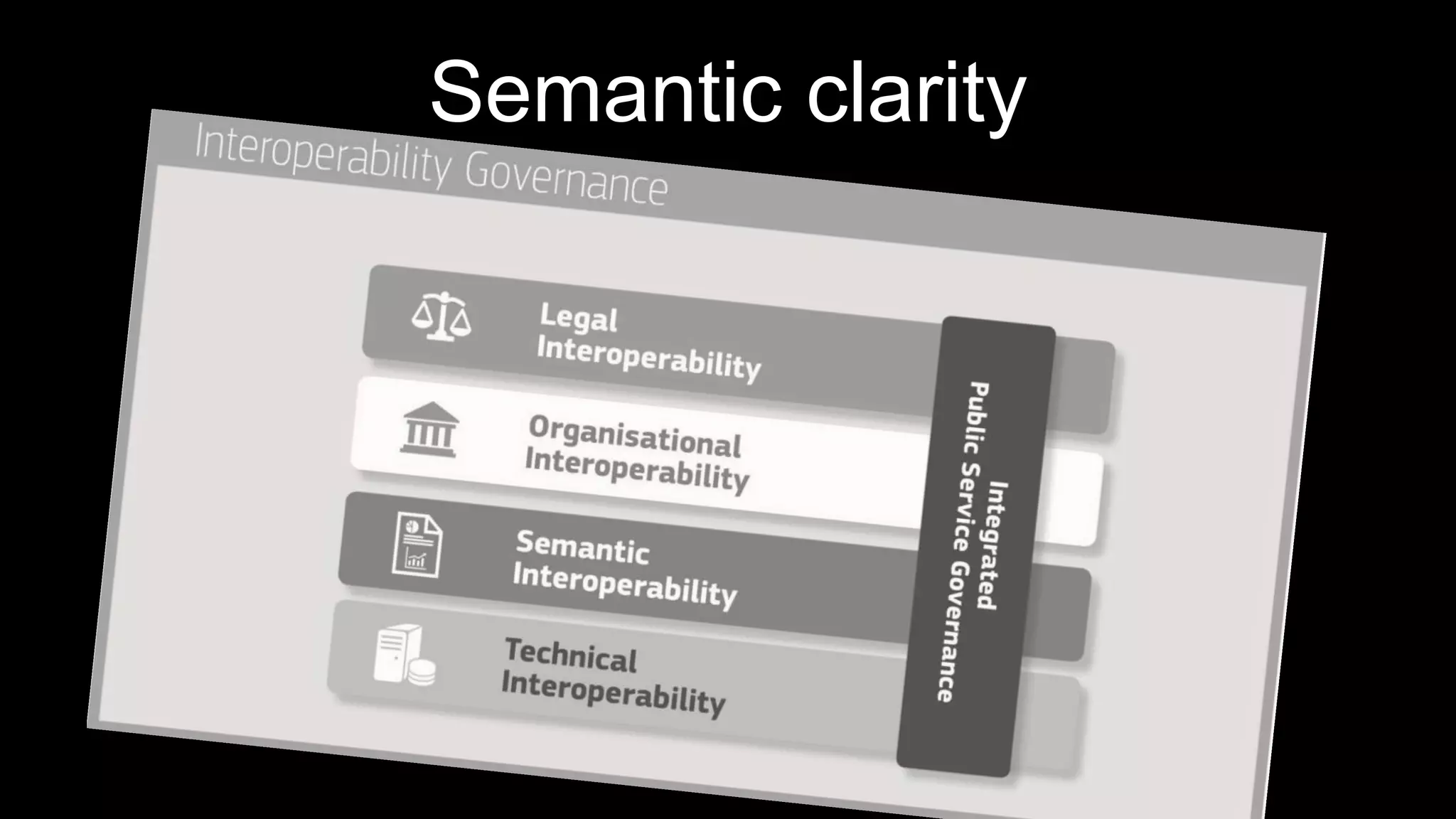

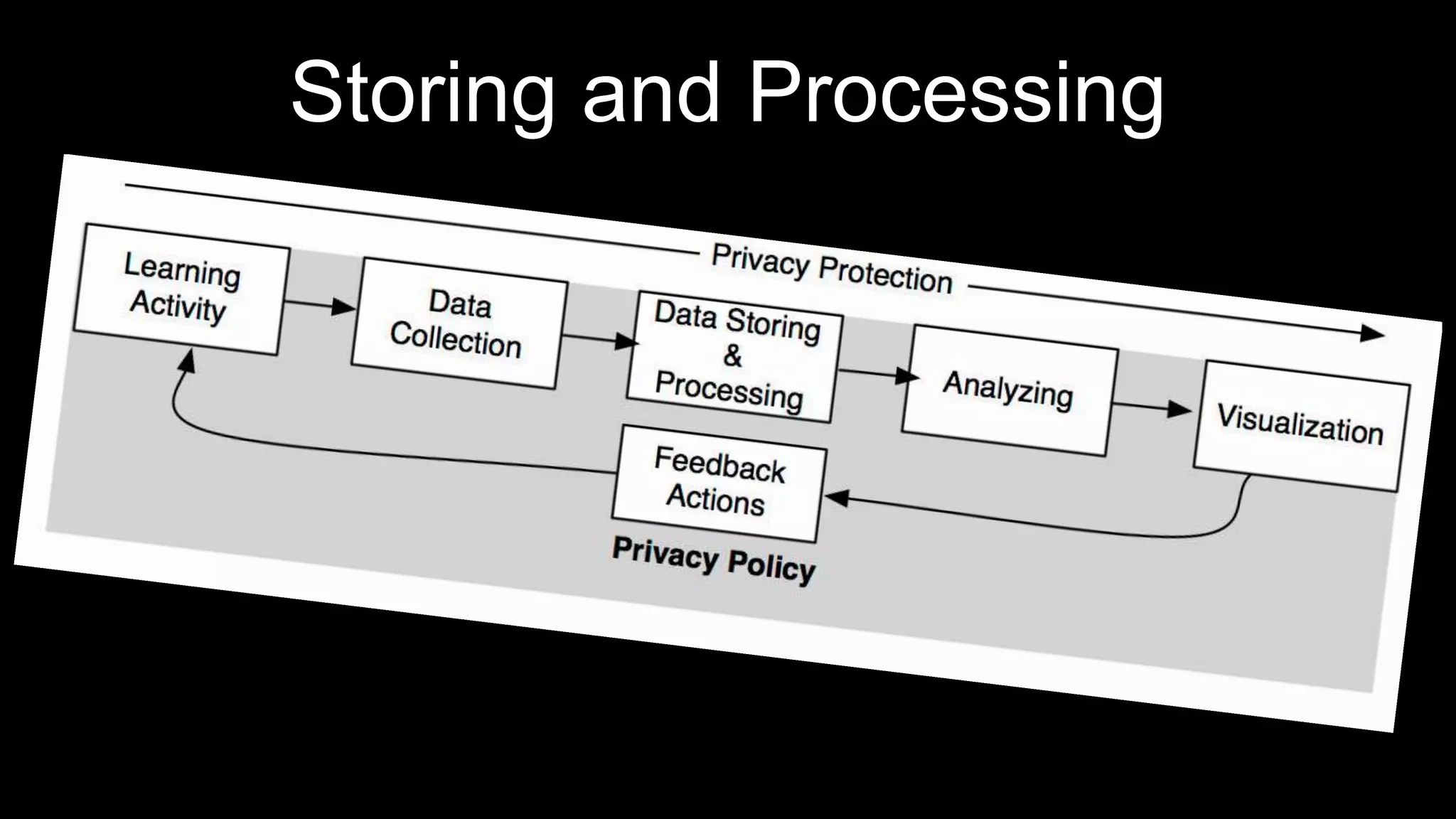

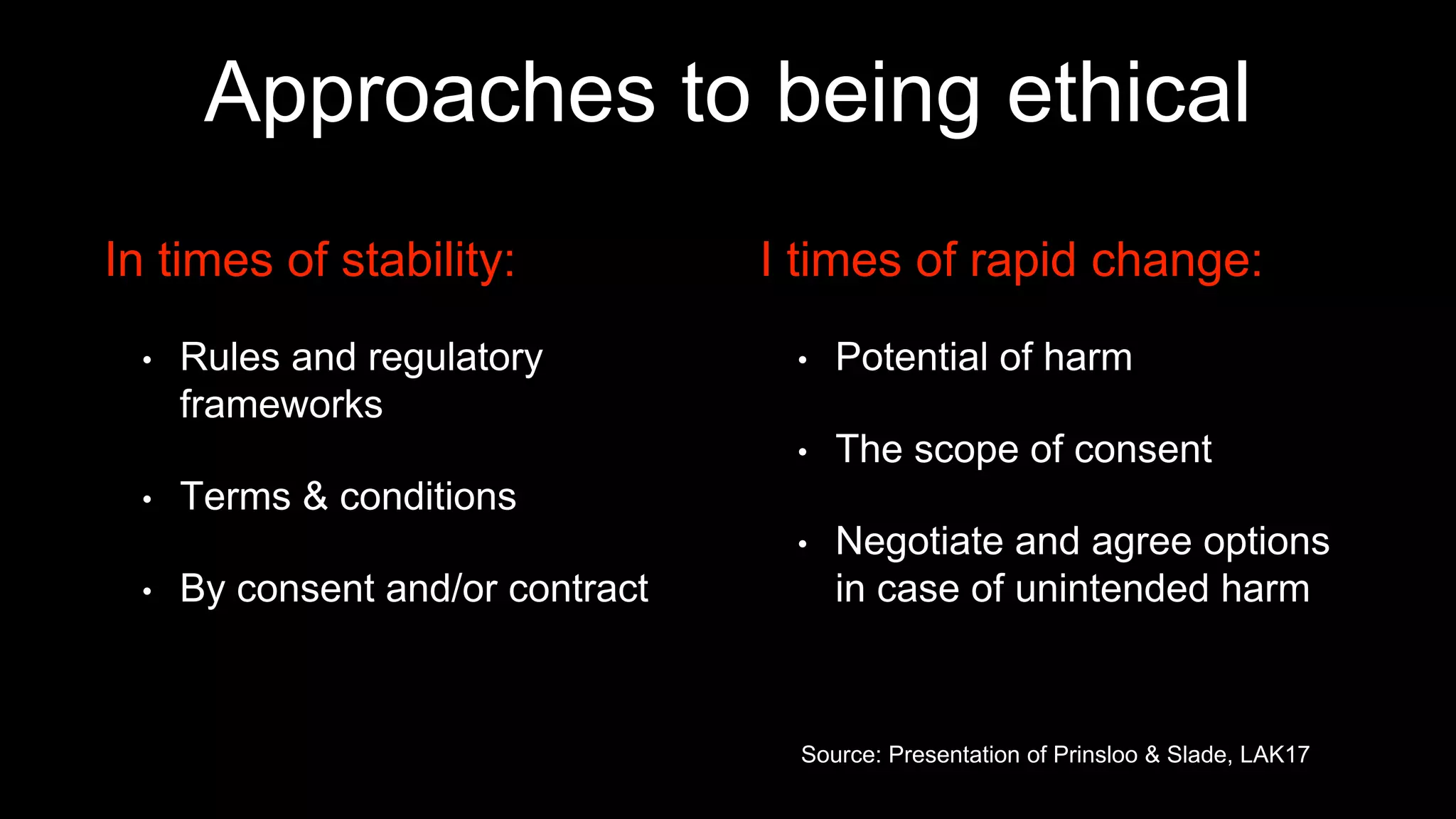

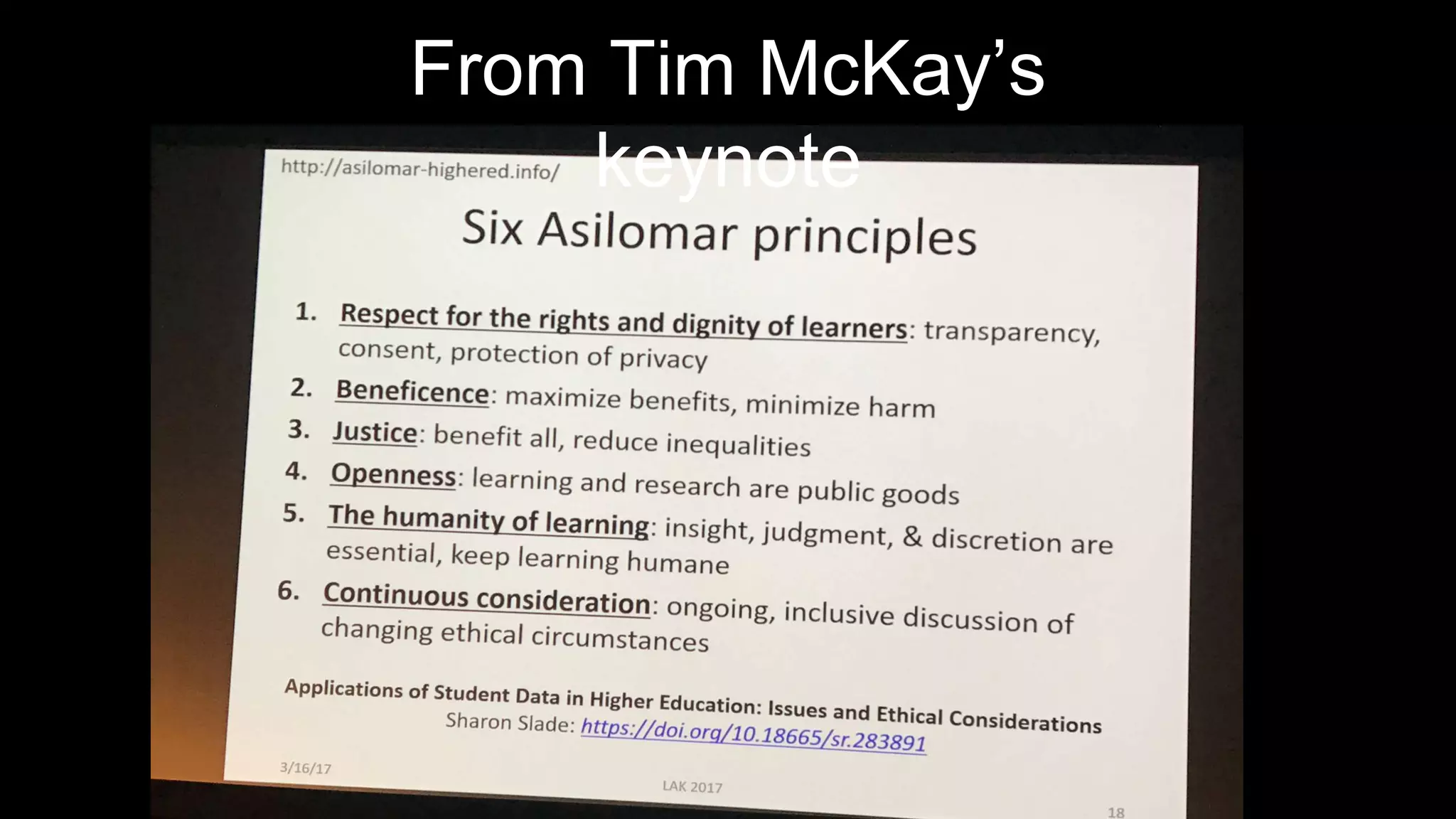

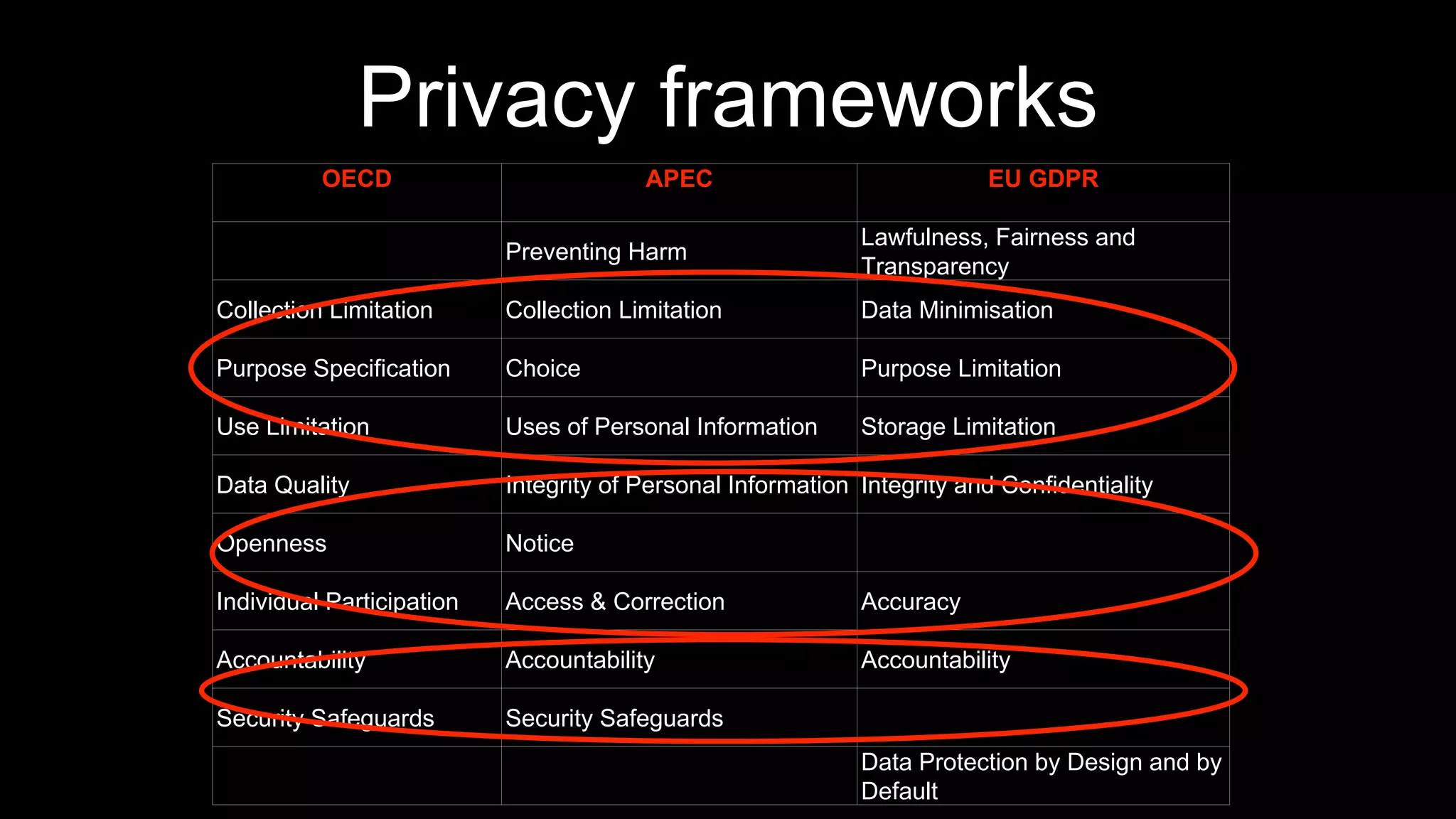

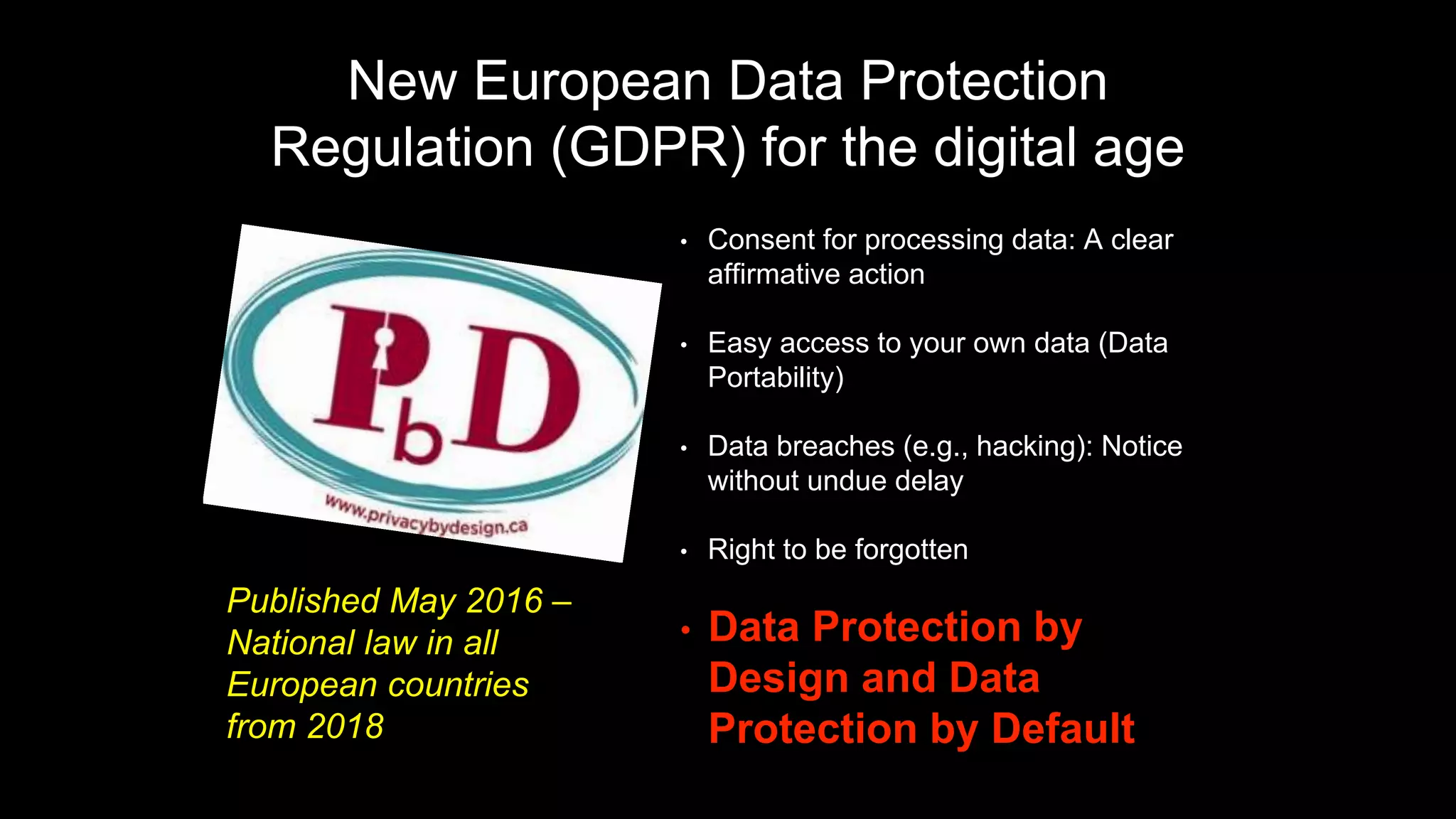

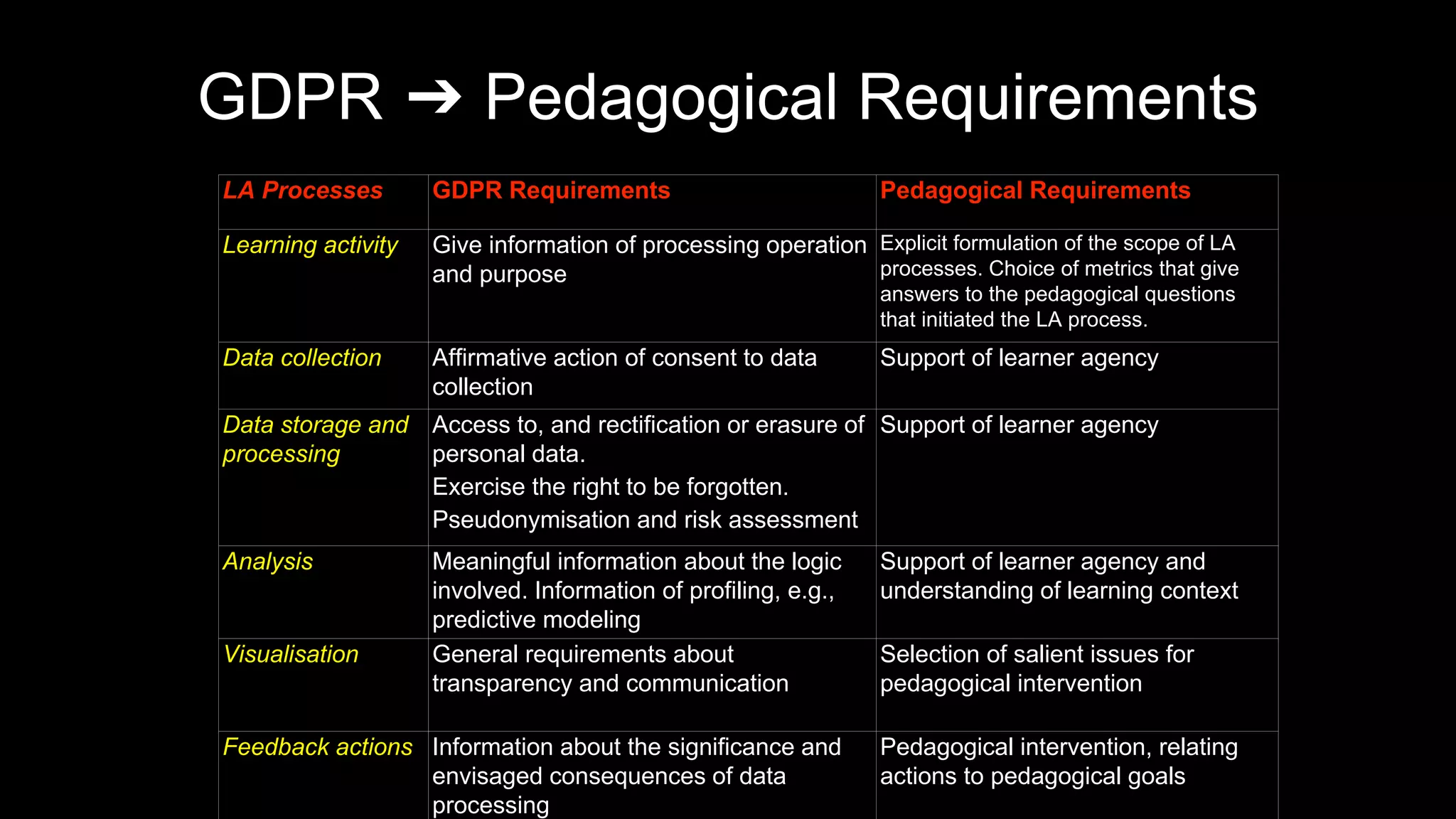

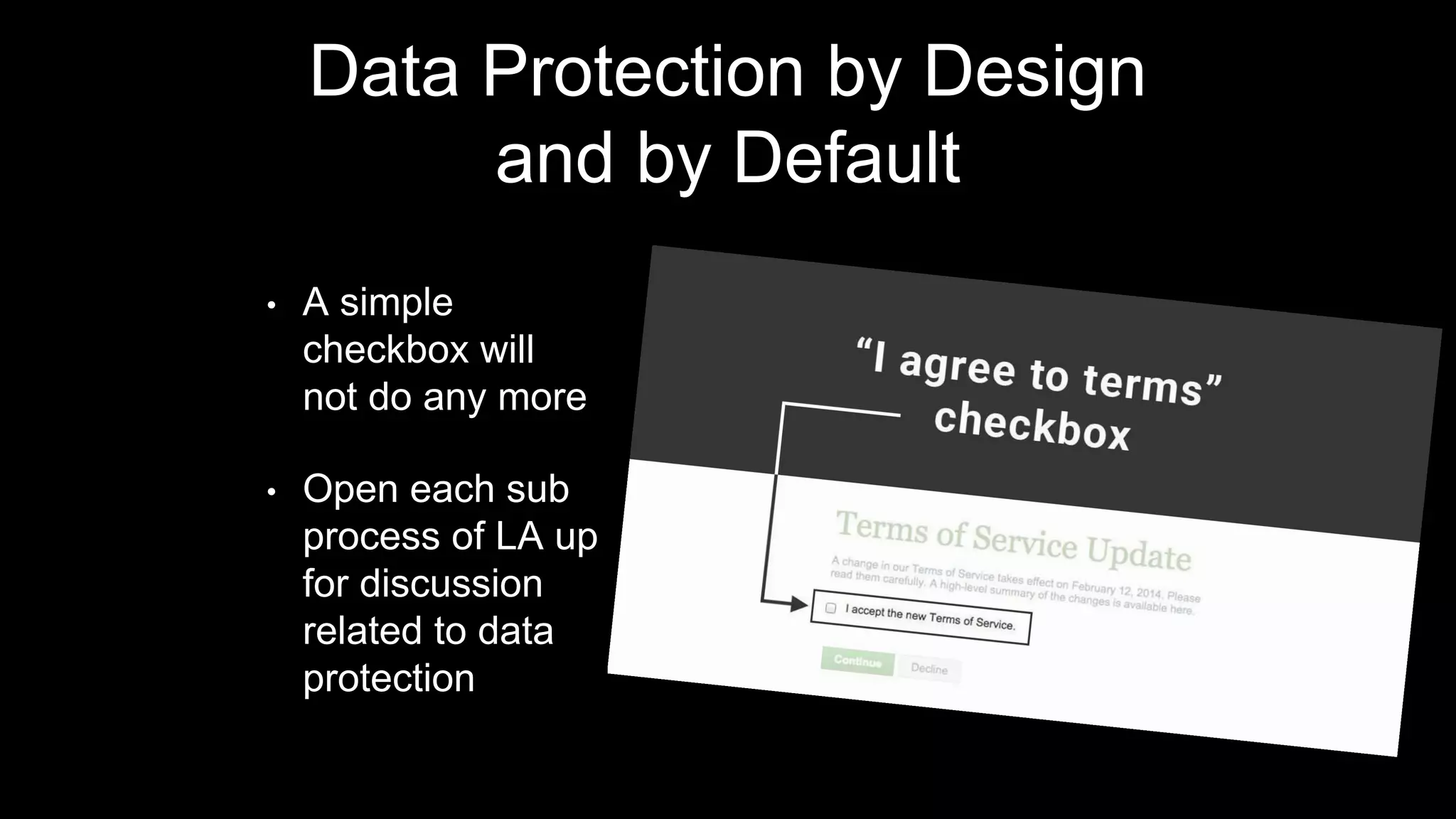

This document discusses learning analytics in the context of standardization. It begins by outlining five forces that are changing the world. Among them are access to data, the view of student data as a resource to be mined and used, concerns about student privacy, and the use of technology such as Fitbits and social media monitoring to track students. It then discusses key concepts in learning analytics, including definitions and the benefits for learners, teachers, and institutions. Other topics covered include the learning analytics process, privacy and data protection, and the potential role of standardization in learning analytics.