This document on namespaces, cgroups and systemd discusses:

1. Namespaces and cgroups, which provide process isolation and resource management in Linux.

2. systemd, a system and service manager designed to speed up boot and to handle dependencies between services.

3. Key components of systemd, including unit files, `systemctl`, and tools to manage services, devices, mounts and other resources.
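
Both kernel features from item 1 can be observed from user space through `/proc`. The following is a minimal sketch, assuming a Linux host with `/proc` mounted (no root privileges needed), that prints the namespaces and the cgroups of the current shell:

```shell
#!/bin/sh
# Show which namespaces and cgroups the current shell ($$) belongs to.
# Assumes a Linux host with /proc mounted; no root privileges needed.

echo "Namespaces of PID $$:"
for ns in /proc/$$/ns/*; do
    # Each entry is a symlink whose target looks like "pid:[<inode>]";
    # two processes share a namespace when their targets are identical.
    printf '  %-18s %s\n' "${ns##*/}" "$(readlink "$ns")"
done

echo "Cgroups of PID $$ (hierarchy-ID:controller-list:cgroup-path):"
# cgroup v1 prints one line per mounted hierarchy; a pure cgroup v2
# system prints a single line of the form "0::/path".
sed 's/^/  /' "/proc/$$/cgroup"
```

Running it from two terminals and comparing the link targets is a quick way to check whether two processes share, for example, a PID or mount namespace.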

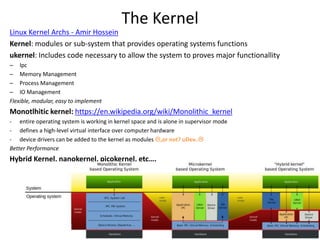

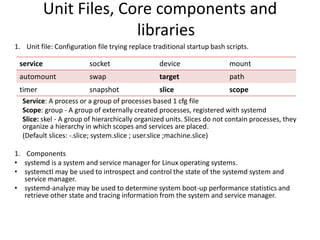

![Cgroups – Commands on libcgroup tools](https://image.slidesharecdn.com/learningaboutsystemdcgroups-150301112722-conversion-gate01/85/First-steps-on-CentOs7-12-320.jpg)

| Description | Command |
|---|---|
| Install the packages that provide the tools for managing the kernel cgroup API | `yum install libcgroup libcgroup-tools` |
| Create a persistent configuration file by snapshotting the current runtime hierarchy | `cgsnapshot -f /etc/cgconfig.conf` |
| List all available hierarchies along with their current mount points | `lssubsys -am` |
| Mount the `net_prio` controller on a virtual file system | `mount -t cgroup -o net_prio none /sys/fs/cgroup/net_prio` |
| Unmount a controller (e.g. `net_prio`) from its virtual file system | `umount /sys/fs/cgroup/controller_name` |
| Create transient cgroups in hierarchies (alternative 1) | `cgcreate -t uid:gid -a uid:gid -g controllers:path` |
| Create transient cgroups in hierarchies (alternative 2) | `mkdir /sys/fs/cgroup/net_prio/lab1/group1` |
| Remove cgroups | `cgdelete [-r] net_prio:/test-subgroup` |
| Set controller parameters | `cgset -r parameter=value path_to_cgroup` |
| Copy the parameters of one cgroup into another | `cgset --copy-from path_to_source_cgroup path_to_target_cgroup` |
| Set controller parameters permanently | `vi /etc/cgconfig.conf ; systemctl stop cgconfig ; systemctl start cgconfig` |
| Move a process into a cgroup | `cgclassify -g controllers:path_to_cgroup pidlist` |
| Launch processes in a manually created cgroup | `cgexec -g controllers:path_to_cgroup command arguments` |
| Find the controllers that are available in your kernel | `cat /proc/cgroups` |
| Find the mount points of particular subsystems | `lssubsys -m controllers` |
| List the cgroups | `lscgroup` |
| Restrict the output to a specific hierarchy | `lscgroup cpuset:adminusers` |
| Display the parameters of specific cgroups | `cgget -r parameter list_of_cgroups` |
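
The transient commands in the table are usually chained as create, configure, inspect, run and delete. The sketch below assumes a cgroup v1 host with `libcgroup-tools` installed and the `cpu` controller mounted; the group name `demo_group` and the value 512 are hypothetical. It only prints each command unless `APPLY=1` is set (applying requires root), and it ends by printing the persistent equivalent in `/etc/cgconfig.conf` syntax.

```shell
#!/bin/sh
# Hypothetical libcgroup (cgroup v1) walkthrough for a cgroup "demo_group".
# Each command is only printed unless APPLY=1 is set (applying needs root).

run() {
    if [ "${APPLY:-0}" = 1 ]; then
        "$@"
    else
        printf '+ %s\n' "$*"
    fi
}

GROUP=demo_group                        # hypothetical cgroup name

run cgcreate -g "cpu:/$GROUP"           # create a transient cgroup in the cpu hierarchy
run cgset -r cpu.shares=512 "$GROUP"    # half of the default weight (1024)
run cgget -r cpu.shares "$GROUP"        # read the parameter back
run cgexec -g "cpu:/$GROUP" sleep 5     # run a process inside the cgroup
run cgdelete "cpu:/$GROUP"              # remove the (now empty) cgroup

# Persistent equivalent in /etc/cgconfig.conf syntax; on a real host this
# block would be added to that file and the cgconfig service restarted.
cat <<EOF
group $GROUP {
    cpu {
        cpu.shares = 512;
    }
}
EOF
```

The dry-run wrapper keeps the sketch safe to read through on any machine; `cgexec` waits for `sleep` to exit, so the group is empty by the time `cgdelete` runs.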

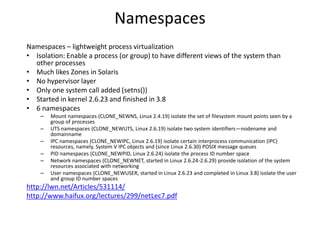

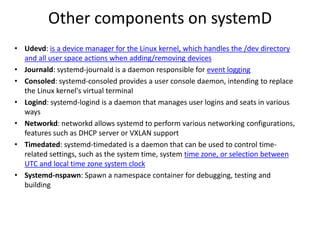

![SystemD commands](https://image.slidesharecdn.com/learningaboutsystemdcgroups-150301112722-conversion-gate01/85/First-steps-on-CentOs7-19-320.jpg)

| Description | SysV command | systemd command |
|---|---|---|
| Switch to runlevel 3 (multi-user, no graphical login) | `init 3` | `systemctl isolate multi-user.target` |
| Start, stop, restart or query a service | `service httpd [command]` | `systemctl [command] httpd` |
| List services and other units | `ls /etc/rc.d/init.d/` | `systemctl list-units --all` |
| Enable or disable a service at boot; creates or removes symlinks under `/etc/systemd/system/` (persistent cgroups) | `chkconfig httpd [on\|off]` | `systemctl [enable\|disable] httpd` |
| Register a new service / reload changed unit files | `chkconfig frobozz --add` | `systemctl daemon-reload` |
| Show the current runlevel (active targets) | `runlevel` | `systemctl list-units --type=target` |
| Run the `top` utility in a service unit in a new slice called `test` (transient) | | `systemd-run --unit=toptest --slice=test top -b` |
| Stop a unit non-gracefully by sending a signal | | `systemctl kill name.service --kill-who=main\|control\|all --signal=signal` |
| Limit the CPU and memory usage of `httpd.service` (persistent) | | `systemctl set-property httpd.service CPUShares=600 MemoryLimit=500M` |
| Limit the CPU usage of `httpd.service` only until the next reboot | | `systemctl set-property --runtime httpd.service CPUShares=600` |
| Recursively show control group contents | | `systemd-cgls` |
| Show the control group tree of one resource controller | | `systemd-cgls memory` |
| Add a cgroup column to `ps` output | | `alias psc='ps xawf -eo pid,user,cgroup,args'` |
| List the dependencies wanted by a target | | `systemctl show -p "Wants" multi-user.target` |
| Analyze system boot-up performance | | `systemd-analyze` |
| Show the top control groups by resource usage | | `systemd-cgtop` |
| Run programs in transient scope or service units | | `systemd-run` |
| Control the systemd machine manager (containers or VMs) | | `machinectl` |
| Show the cgroups a process belongs to | | `cat /proc/PID/cgroup` |
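
The `set-property` rows change resource limits through systemd instead of editing cgroup files directly; persistent changes are stored as drop-in files next to the unit, with the exact directory and file names depending on the systemd version. The sketch below writes a minimal unit for a hypothetical `demo.service` plus a hand-written drop-in (hypothetical name `limits.conf`) using the table's `CPUShares=` and `MemoryLimit=` settings (newer systemd releases replace these with `CPUWeight=` and `MemoryMax=`). Files go to a scratch directory by default and the `systemctl` steps are only printed; `OUT_DIR=/etc/systemd/system APPLY=1`, run as root, would install and start the service.

```shell
#!/bin/sh
# Hypothetical demo.service with CPU and memory limits set via a drop-in.
# Files are always written to OUT_DIR (default: a scratch directory);
# APPLY=1 additionally executes the systemctl steps (needs root and
# OUT_DIR=/etc/systemd/system).

OUT_DIR=${OUT_DIR:-$(mktemp -d)}
UNIT=demo.service

run() {
    if [ "${APPLY:-0}" = 1 ]; then "$@"; else printf '+ %s\n' "$*"; fi
}

# Minimal unit file: one long-running process, wanted by multi-user.target.
cat > "$OUT_DIR/$UNIT" <<'EOF'
[Unit]
Description=Demo service for cgroup resource limits

[Service]
ExecStart=/bin/sleep infinity

[Install]
WantedBy=multi-user.target
EOF

# Drop-in with the same limits as
# "systemctl set-property demo.service CPUShares=600 MemoryLimit=500M".
mkdir -p "$OUT_DIR/$UNIT.d"
cat > "$OUT_DIR/$UNIT.d/limits.conf" <<'EOF'
[Service]
CPUShares=600
MemoryLimit=500M
EOF

run systemctl daemon-reload     # make systemd re-read the unit files
run systemctl enable "$UNIT"    # start at boot (adds a symlink in multi-user.target.wants)
run systemctl start "$UNIT"
run systemd-cgls                # by default, services are placed in system.slice
echo "unit files written to $OUT_DIR"
```

Separate `enable` and `start` calls are used instead of `enable --now`, which older releases such as the systemd 208 shown in this document do not support.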

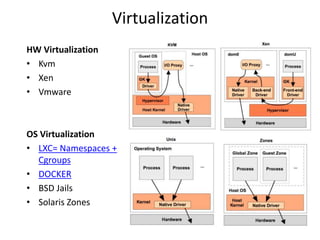

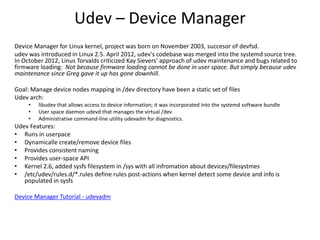

![systemd-nspawn and machinectl](https://image.slidesharecdn.com/learningaboutsystemdcgroups-150301112722-conversion-gate01/85/First-steps-on-CentOs7-28-320.jpg)

- `systemd-nspawn` is chroot on steroids.
- Goal: spawn a minimal namespace container for debugging, testing and building.
- Install a minimal Fedora 20 tree into `/srv/mycontainer`: `yum --releasever=20 --nogpg --installroot=/srv/mycontainer --disablerepo='*' --enablerepo=fedora install systemd passwd yum fedora-release vim-minimal`
- …
- Boot the container: `systemd-nspawn -bD /srv/mycontainer/`
- List it from the host (`[root@fedora20 ~]# machinectl`):

| MACHINE | CONTAINER | SERVICE |
|---|---|---|
| mycontainer | container | nspawn |

Links:

- Lennart Poettering, Linux Conf 2013
- Wiki: fedoraproject.org, SystemdLightweightContainers
- Wiki: freedesktop.org, VirtualizedTesting
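
The container steps on this slide can be collected into one script. This is a sketch assuming a Fedora host with `yum` and `systemd-nspawn` available, root privileges, and the slide's `/srv/mycontainer` path; the `passwd` step (running one command inside the tree without booting it, to set a root password for the container console) is an added assumption, not shown on the slide. Commands are only printed unless `APPLY=1` is set.

```shell
#!/bin/sh
# Bootstrap and boot a minimal Fedora 20 container (paths from the slide).
# Prints the commands unless APPLY=1 is set; applying them needs root.

ROOT=/srv/mycontainer

run() {
    if [ "${APPLY:-0}" = 1 ]; then "$@"; else printf '+ %s\n' "$*"; fi
}

# 1. Install a minimal Fedora 20 tree into $ROOT from the fedora repo only.
run yum --releasever=20 --nogpg --installroot="$ROOT" \
    --disablerepo='*' --enablerepo=fedora \
    install systemd passwd yum fedora-release vim-minimal

# 2. (Assumed extra step) run a single command in the tree without booting
#    it, to set a root password for logging in on the container console.
run systemd-nspawn -D "$ROOT" passwd

# 3. Boot the container: systemd runs as PID 1 inside the new namespaces
#    and the container console stays attached to this terminal.
run systemd-nspawn -bD "$ROOT"

# From another terminal, `machinectl` then lists the running container.
```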

![Thanks... Questions?](https://image.slidesharecdn.com/learningaboutsystemdcgroups-150301112722-conversion-gate01/85/First-steps-on-CentOs7-30-320.jpg)

Tips:

1. Wiki: freedesktop.org, TipsAndTricks
2. Trick to find out the systemd version: `/usr/bin/timedatectl --version` prints `systemd 208` on the Fedora 20 host.
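
The same trick works with any systemd command-line tool, since they all accept `--version` and print `systemd NNN` on the first line. A small guarded helper, assuming only a POSIX shell and `awk` (the function name `systemd_version` is hypothetical), prints just the version number, e.g. `208` on the host above:

```shell
#!/bin/sh
# Print the systemd version number by asking a systemd tool for --version.
# Returns non-zero if no systemd tool is found on PATH.

systemd_version() {
    for tool in systemctl timedatectl; do
        if command -v "$tool" >/dev/null 2>&1; then
            # The first line reads "systemd NNN ..."; print just NNN.
            "$tool" --version | awk 'NR == 1 { print $2; exit }'
            return 0
        fi
    done
    return 1
}

systemd_version || echo "no systemd tools found on PATH"
```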