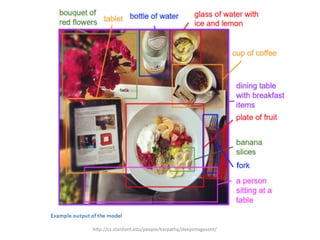

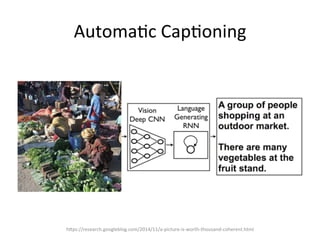

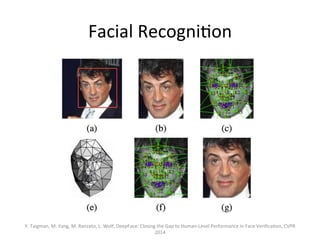

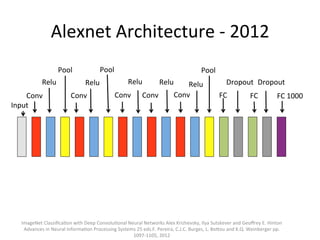

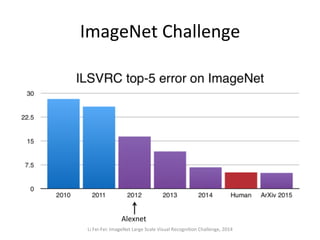

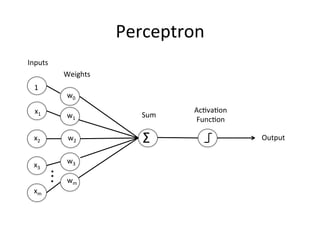

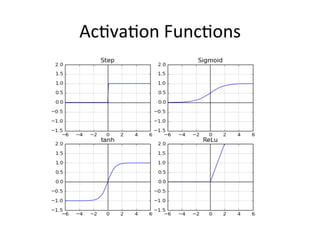

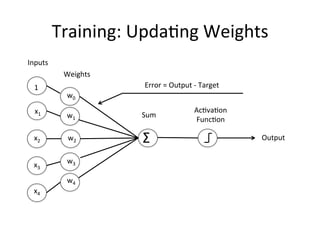

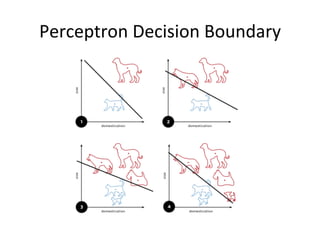

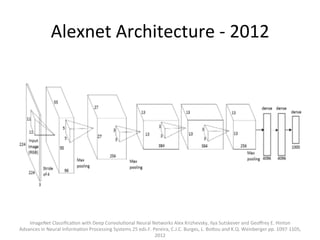

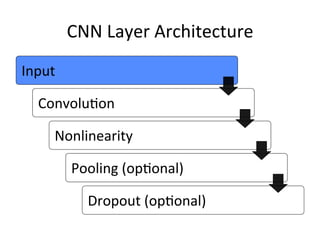

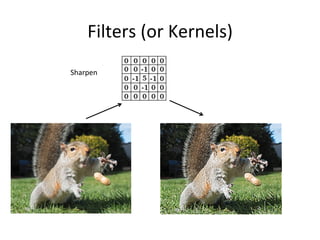

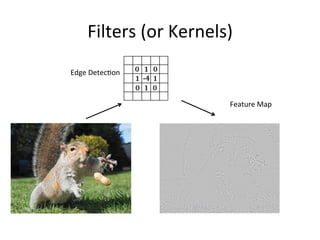

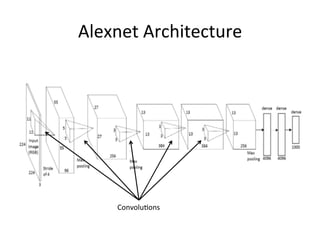

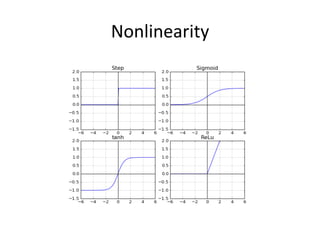

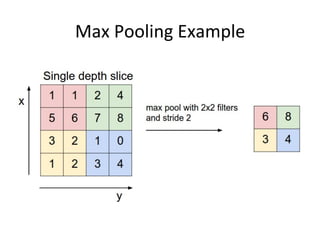

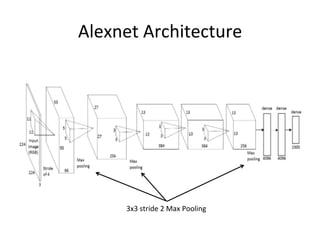

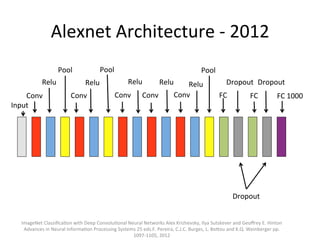

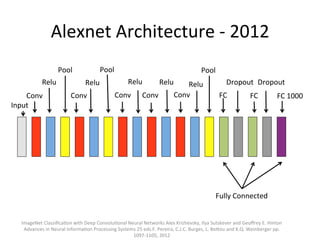

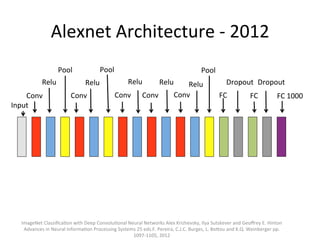

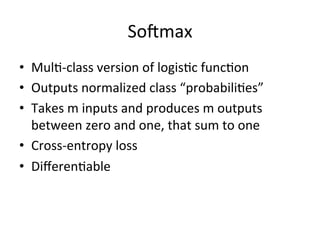

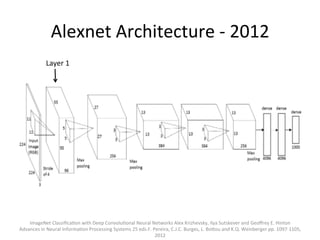

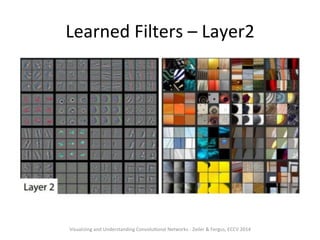

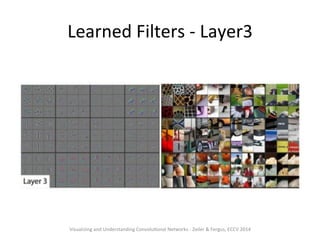

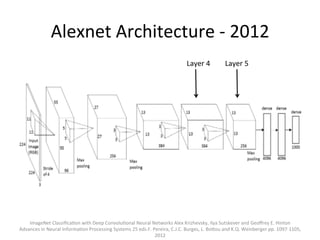

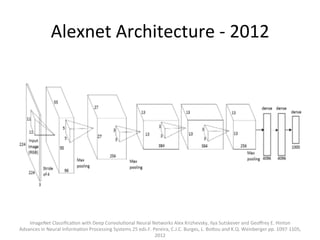

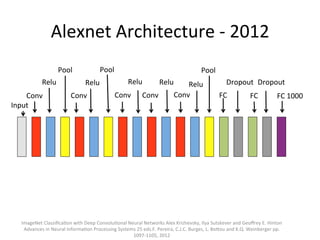

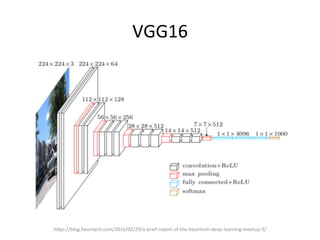

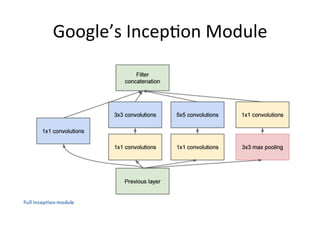

The document introduces Convolutional Neural Networks (CNNs), describing their architecture and their role in tasks such as image classification and object recognition. It covers key concepts including feature extraction, pooling, dropout, and how the layers of a network function, with particular reference to AlexNet and other models. It also highlights the importance of learning representations from images and traces how performance on challenges such as ImageNet has evolved.
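
To make the concepts named above concrete, here is a minimal sketch in plain Python of a convolution (feature extraction), a ReLU, max pooling, and dropout applied in sequence. It is an illustration under stated assumptions, not code from the document: the function names, the toy 6x6 image, and the vertical-edge kernel are all hypothetical, the pooling is non-overlapping, and the dropout shown is the common "inverted" variant, which may differ from what any particular model uses.

```python
import random

def conv2d_valid(image, kernel):
    """Slide the kernel over the image with stride 1 and no padding.
    This is cross-correlation, which is what most deep learning
    libraries call "convolution"."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def relu(fmap):
    """Clamp negative activations to zero."""
    return [[max(0.0, v) for v in row] for row in fmap]

def max_pool2d(fmap, size=2):
    """Non-overlapping max pooling. Trailing rows or columns that do
    not fill a whole window are dropped."""
    out_h = len(fmap) // size
    out_w = len(fmap[0]) // size
    return [
        [
            max(fmap[i * size + di][j * size + dj]
                for di in range(size) for dj in range(size))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def dropout(values, p=0.5, rng=None):
    """Inverted dropout (an assumed variant): zero each value with
    probability p and scale the survivors by 1 / (1 - p).
    Intended for training time only."""
    rng = rng or random.Random()
    keep = 1.0 - p
    return [v / keep if rng.random() < keep else 0.0 for v in values]

# Hypothetical toy input: dark left half, bright right half.
image = [[0, 0, 0, 1, 1, 1] for _ in range(6)]
# Hypothetical hand-written vertical-edge detector.
kernel = [[-1, 0, 1] for _ in range(3)]

feature_map = relu(conv2d_valid(image, kernel))  # 4x4, peaks at the edge
pooled = max_pool2d(feature_map)                 # 2x2 summary of the map
flat = [v for row in pooled for v in row]
dropped = dropout(flat, p=0.5, rng=random.Random(0))
```

In a trained CNN the kernel weights are learned from data rather than written by hand; the fixed edge detector here only stands in for one such learned filter. Pooling shrinks the feature map while keeping its strongest responses, and dropout randomly silences activations during training to reduce overfitting.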