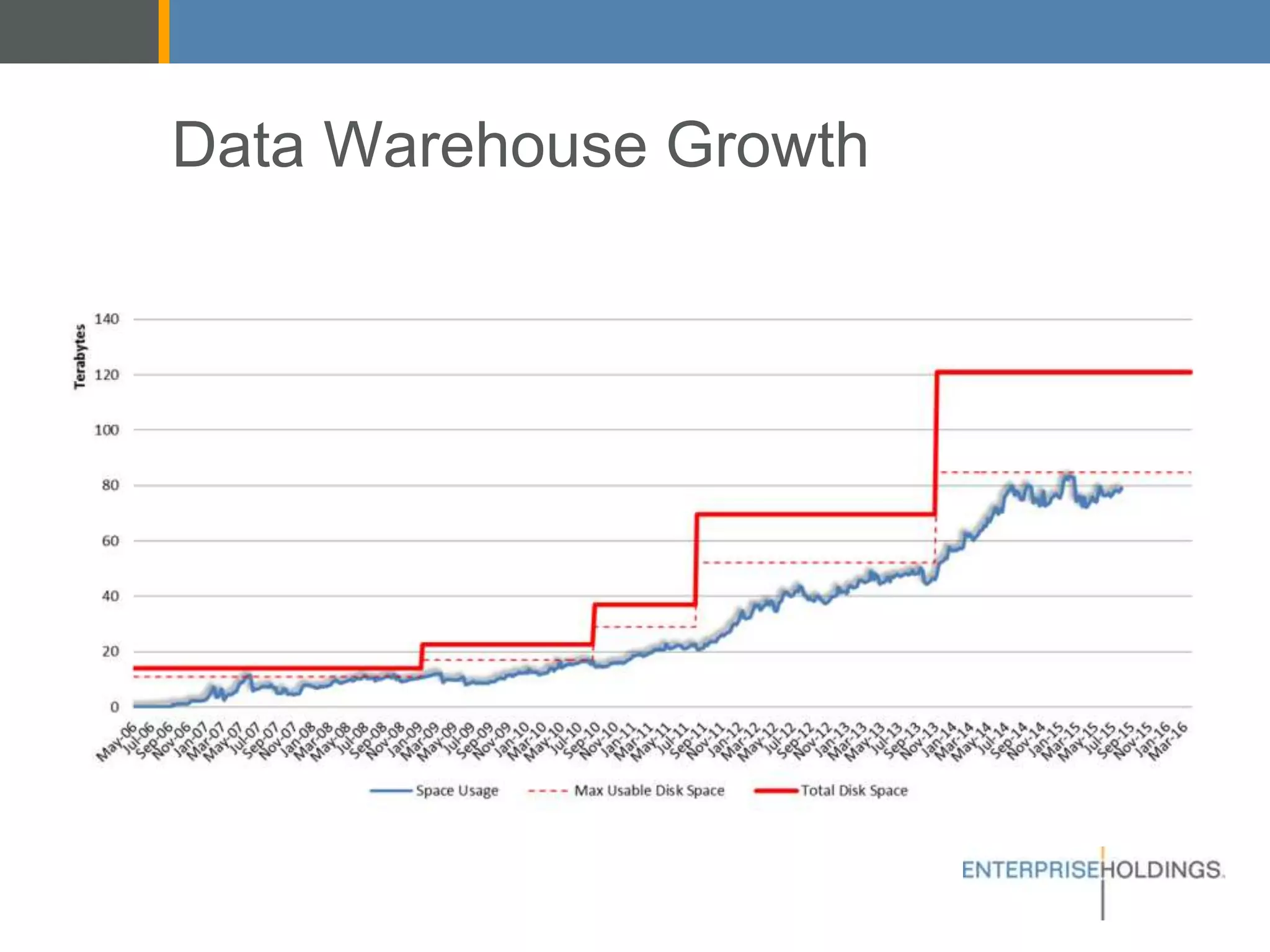

The document discusses the data warehouse challenges facing Enterprise Holdings, including capacity limitations and the need for a hybrid architecture that runs Hadoop alongside Teradata. It compares three big data architectures (batch, Lambda, and Kappa) and their respective advantages, and it lays out the decision points between cloud and on-premises deployment. The recommendations center on using Hadoop to improve agility and to manage diverse data sources. They also address security, maintenance, and development complexity.