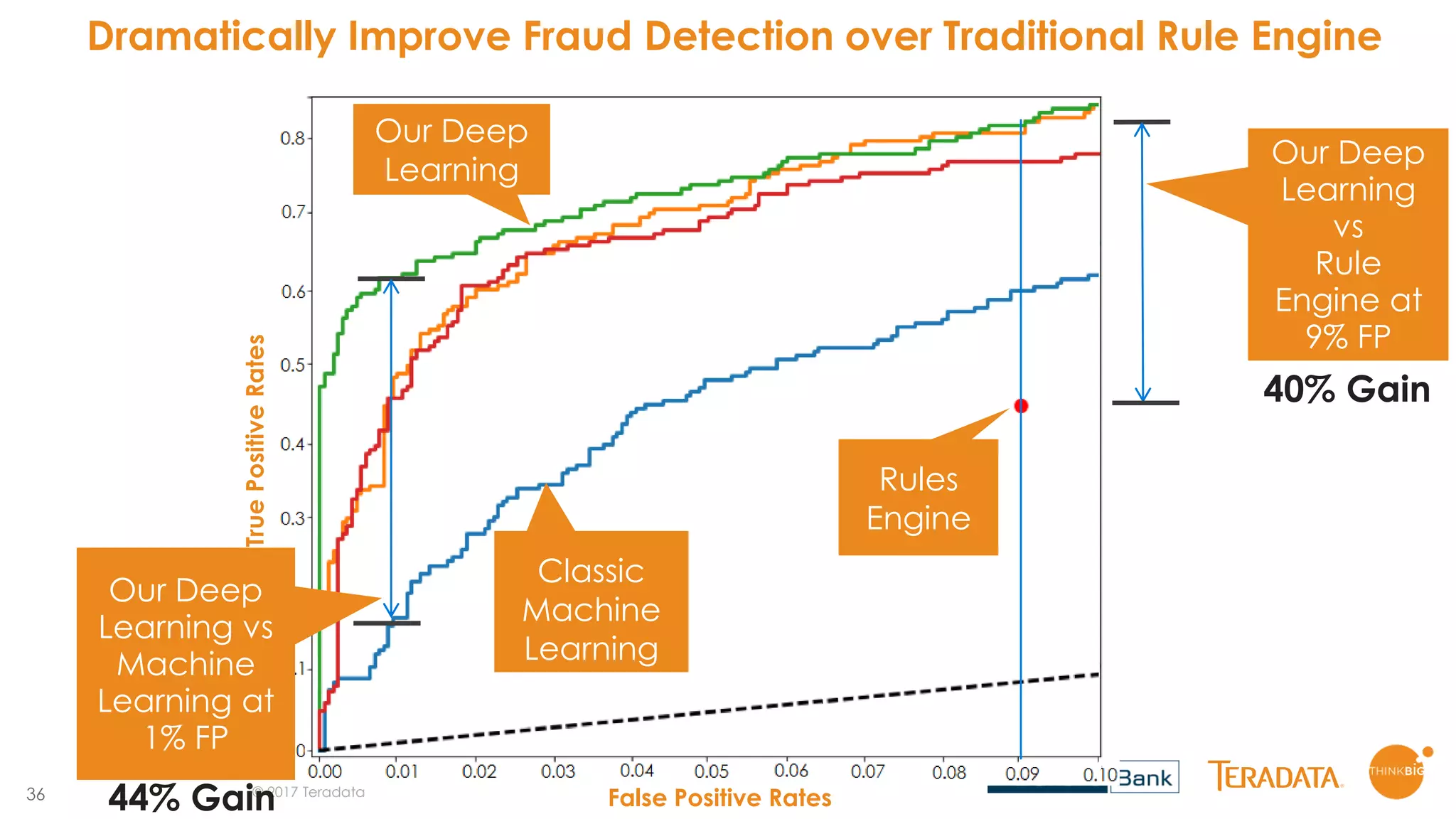

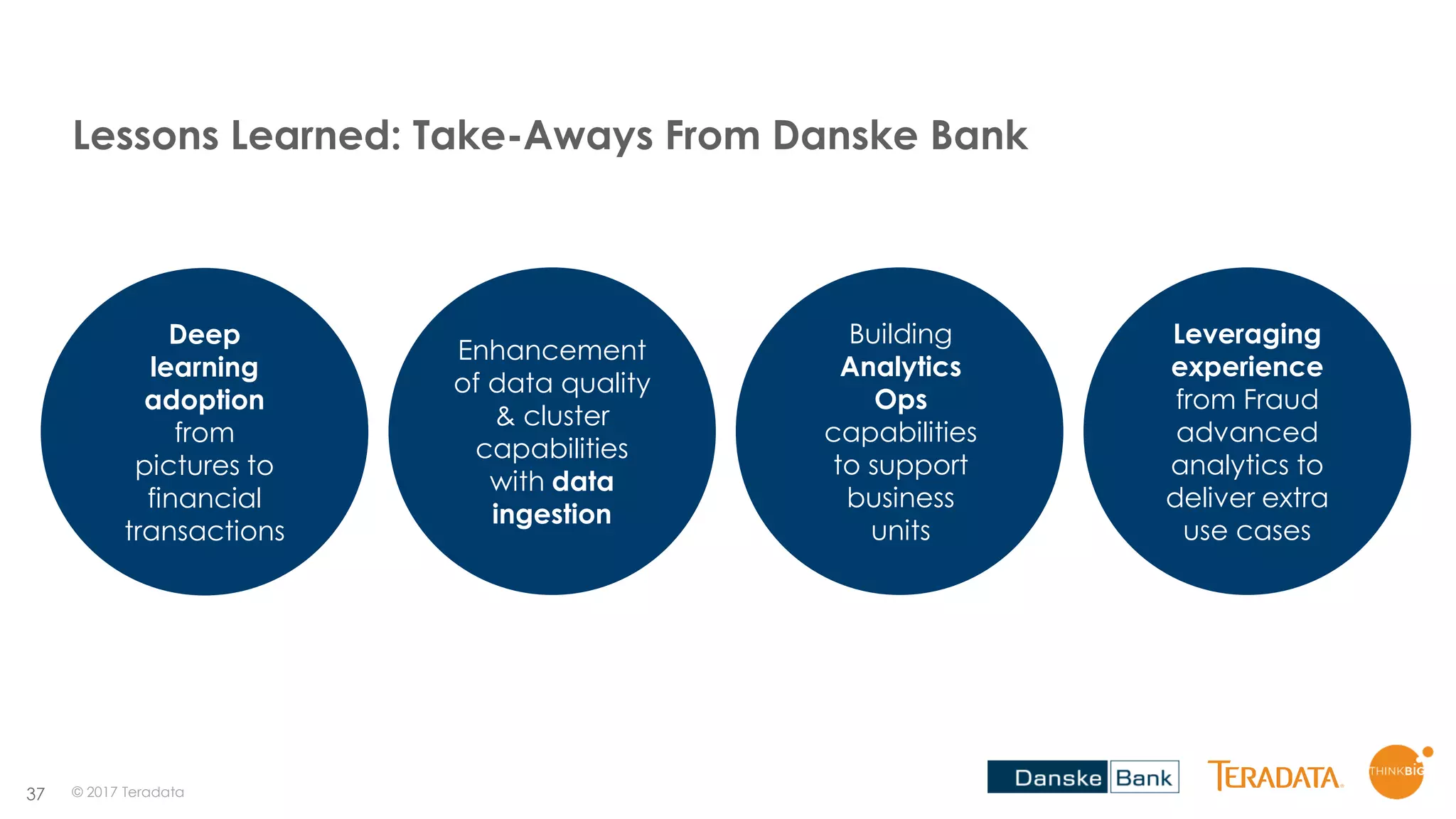

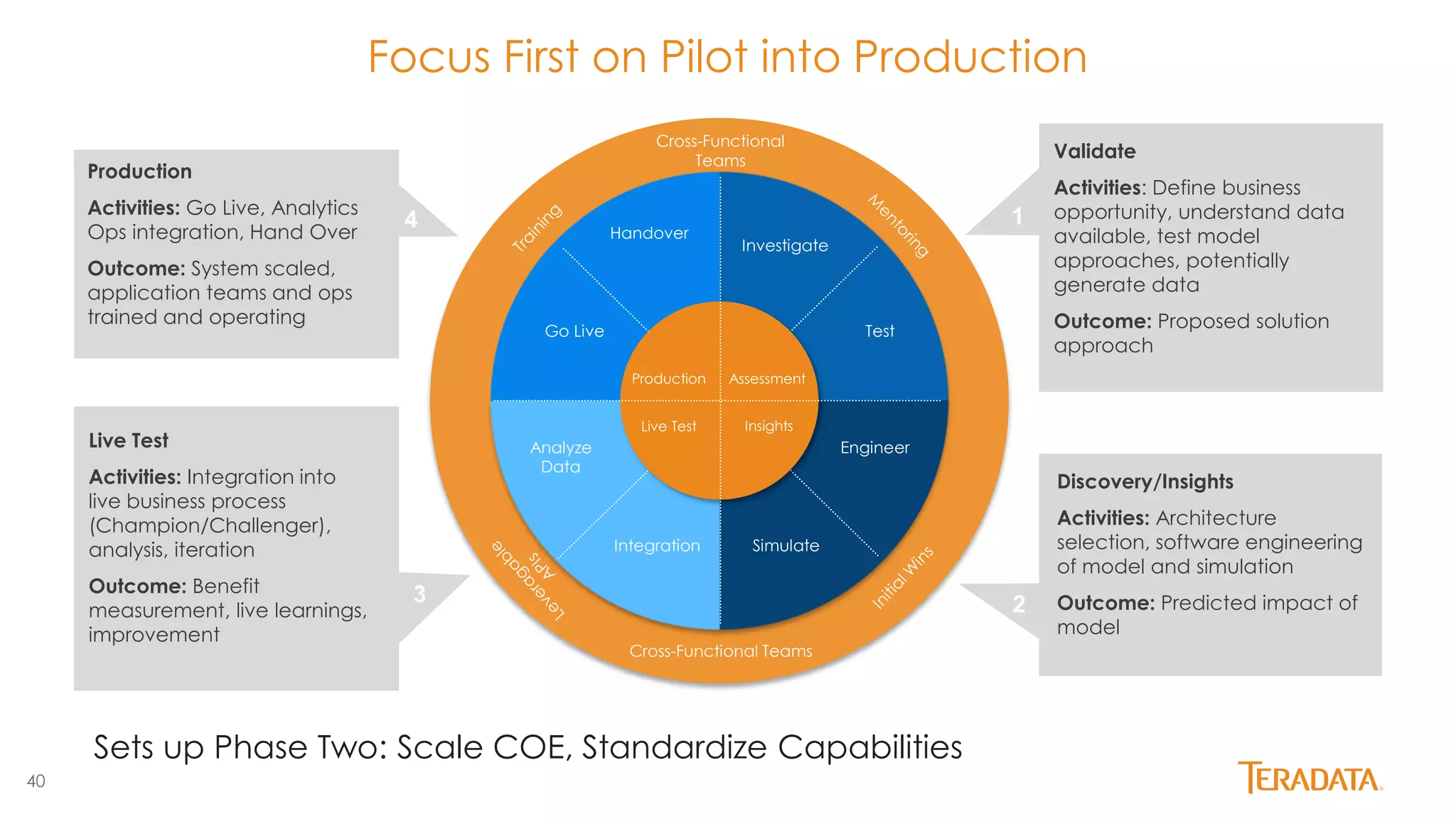

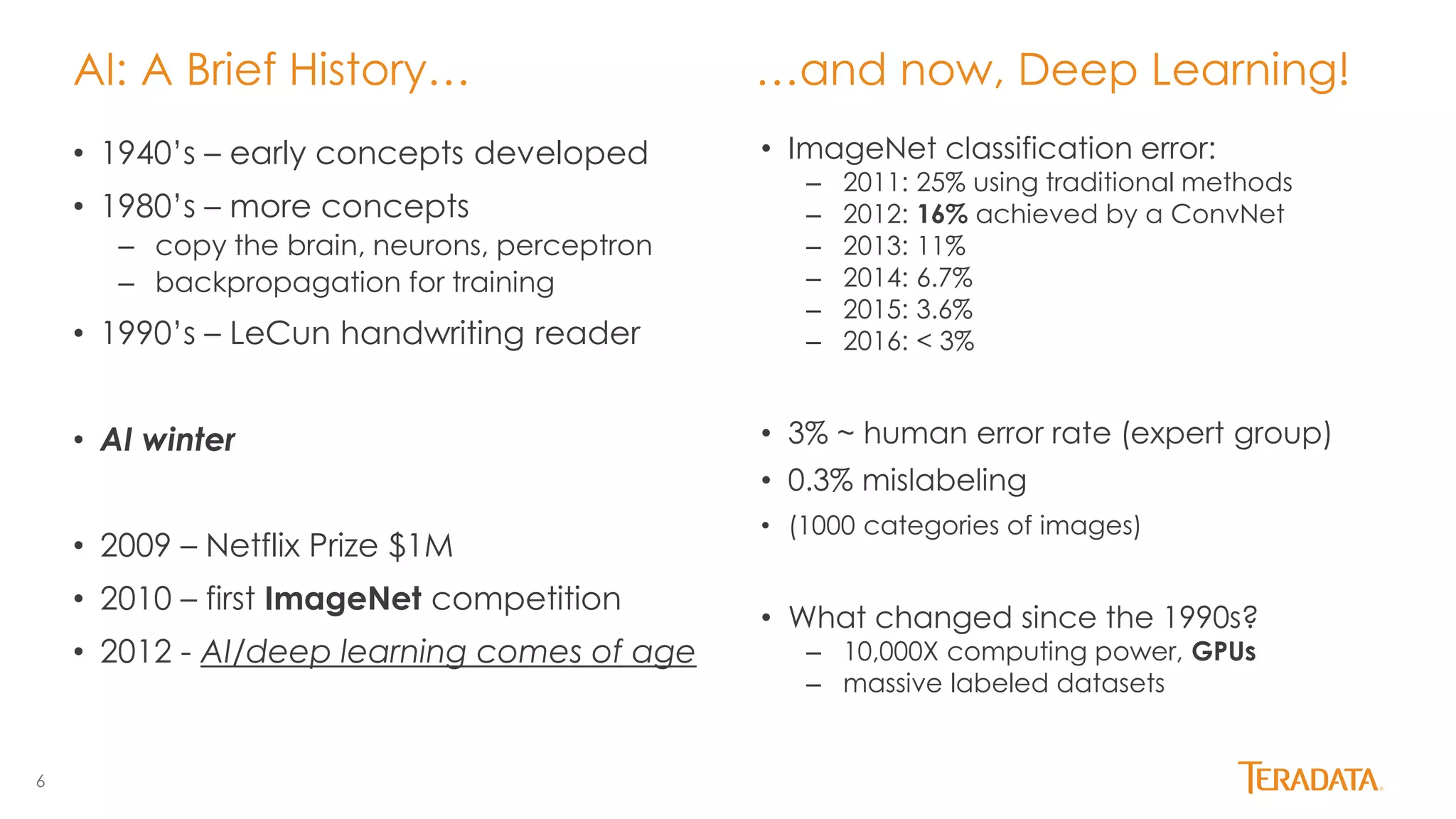

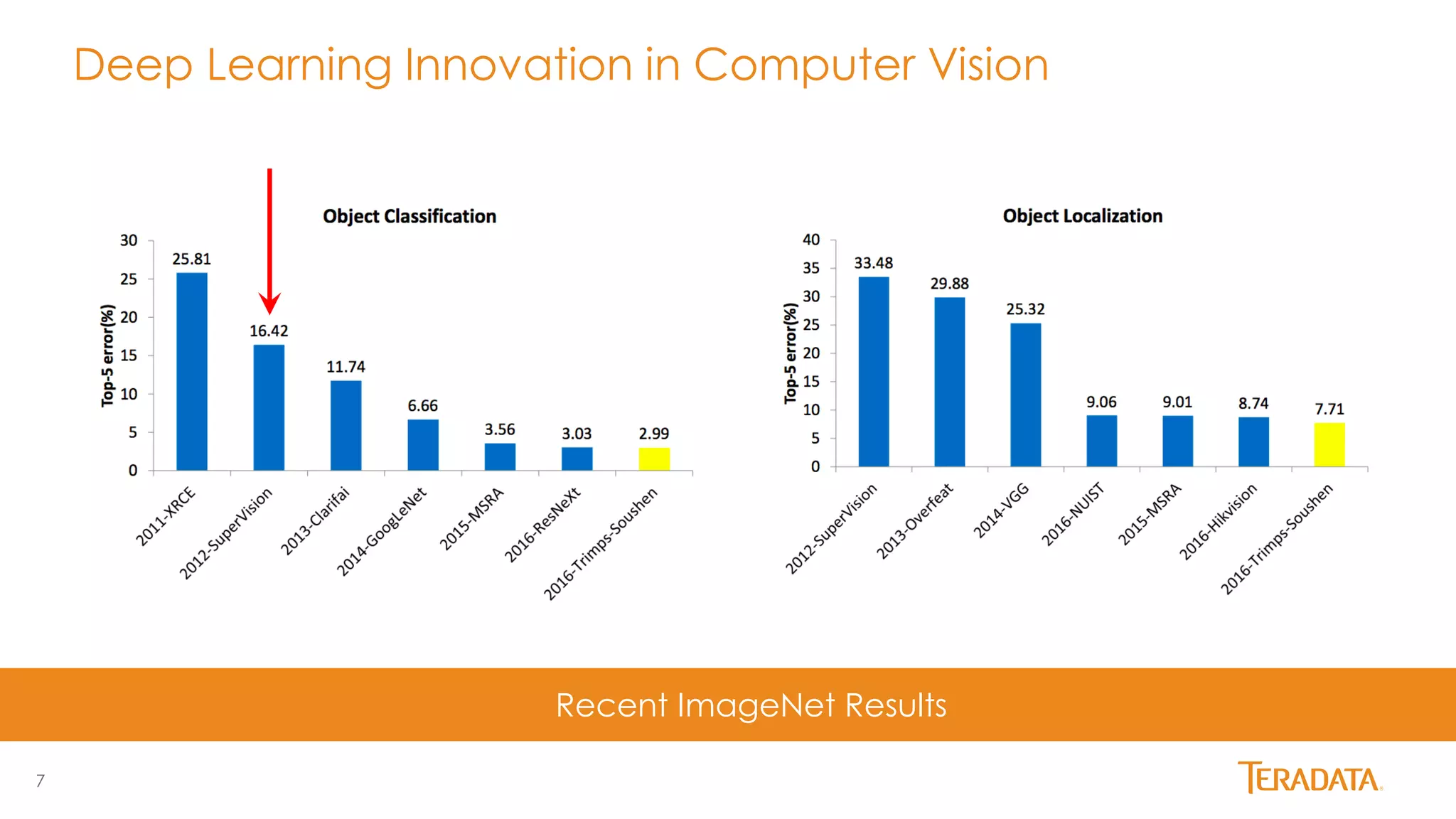

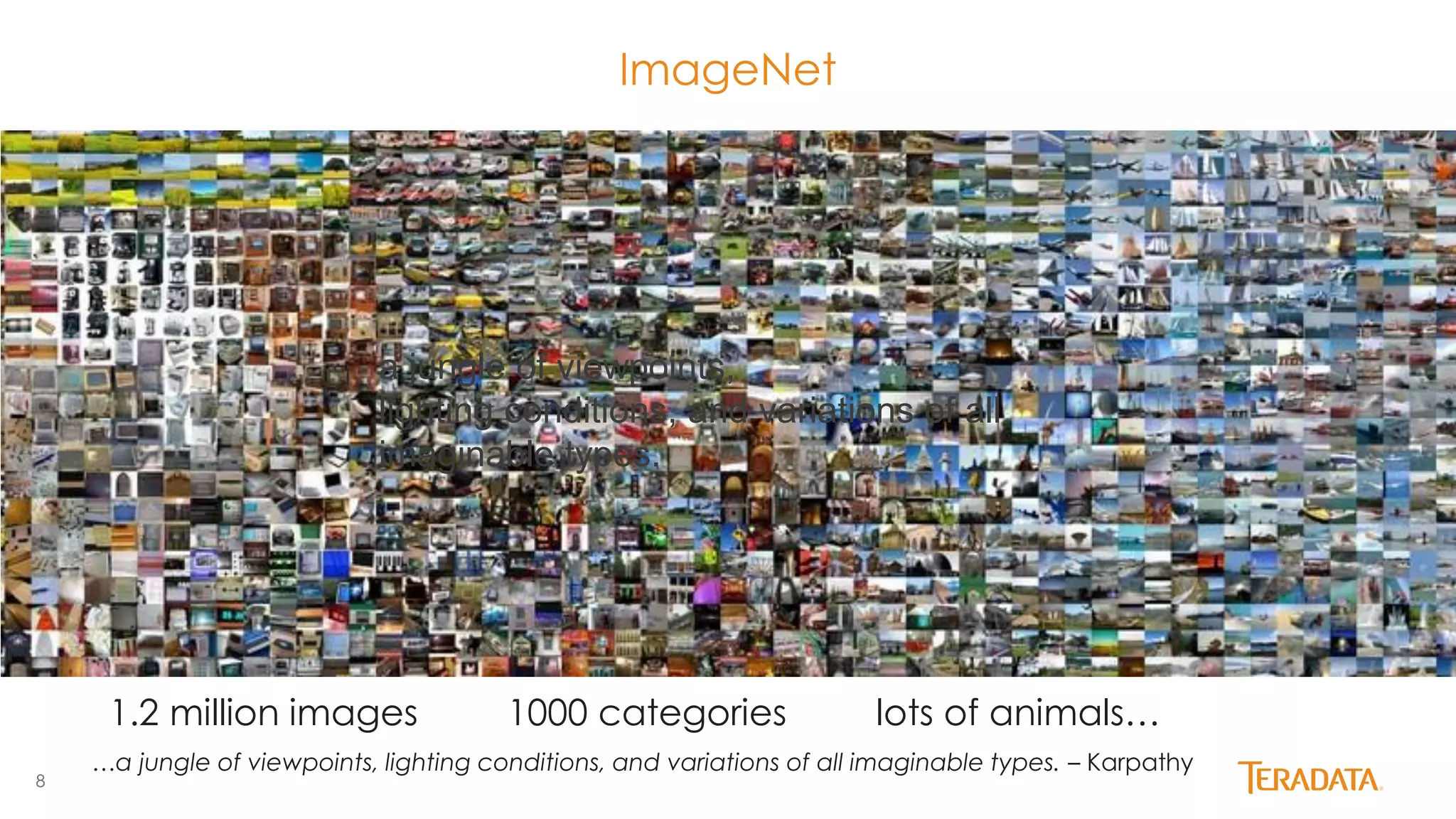

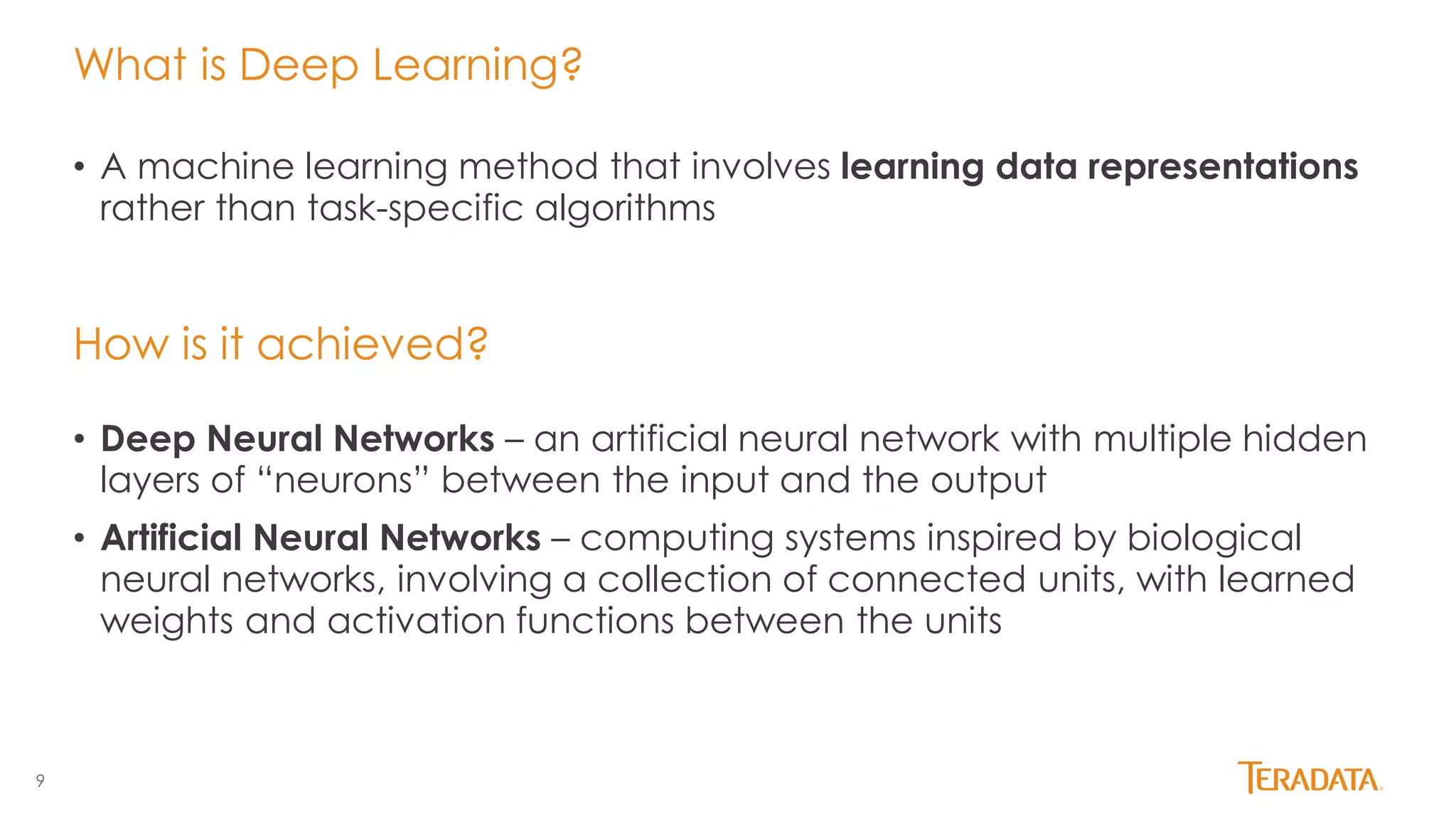

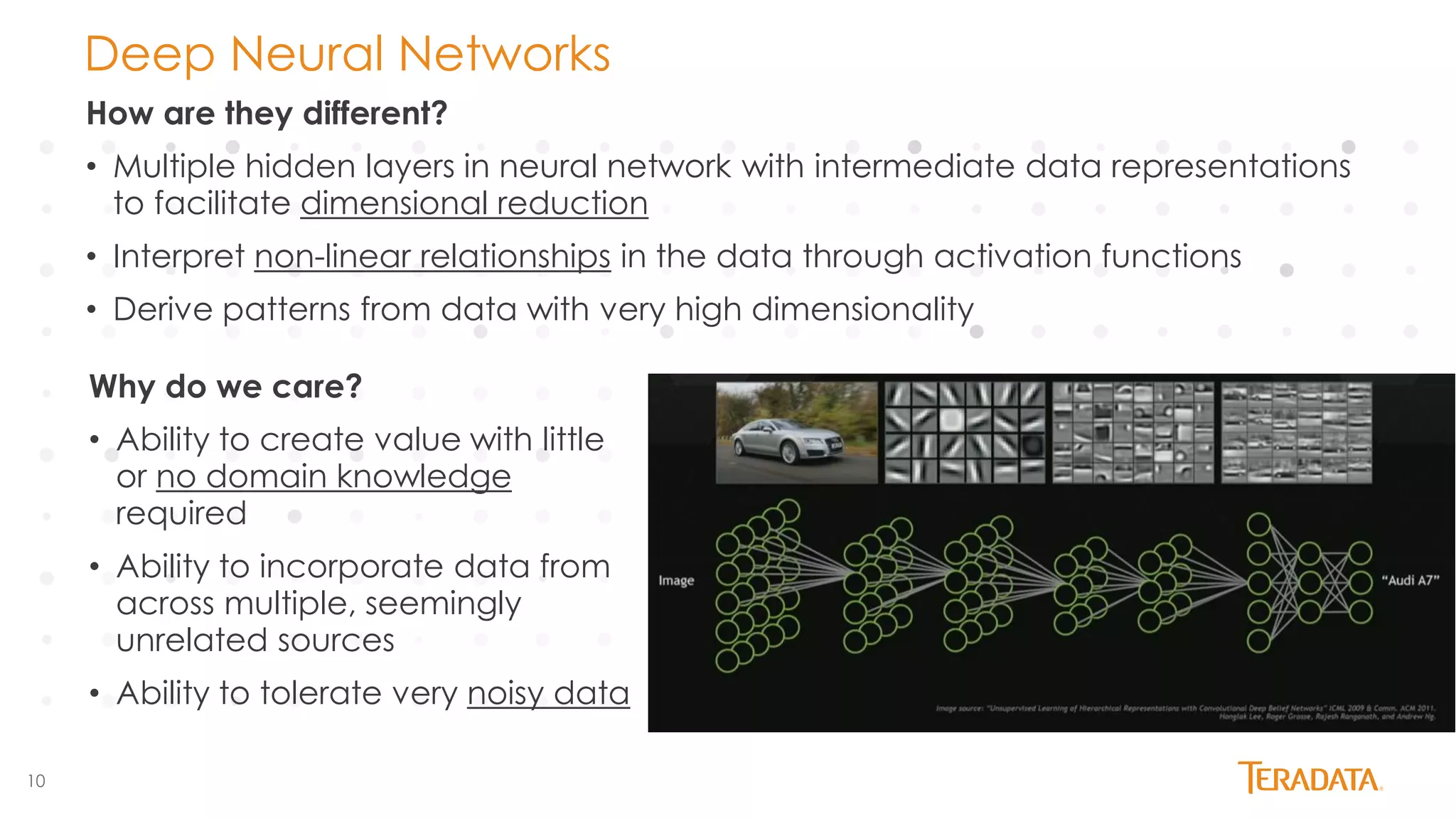

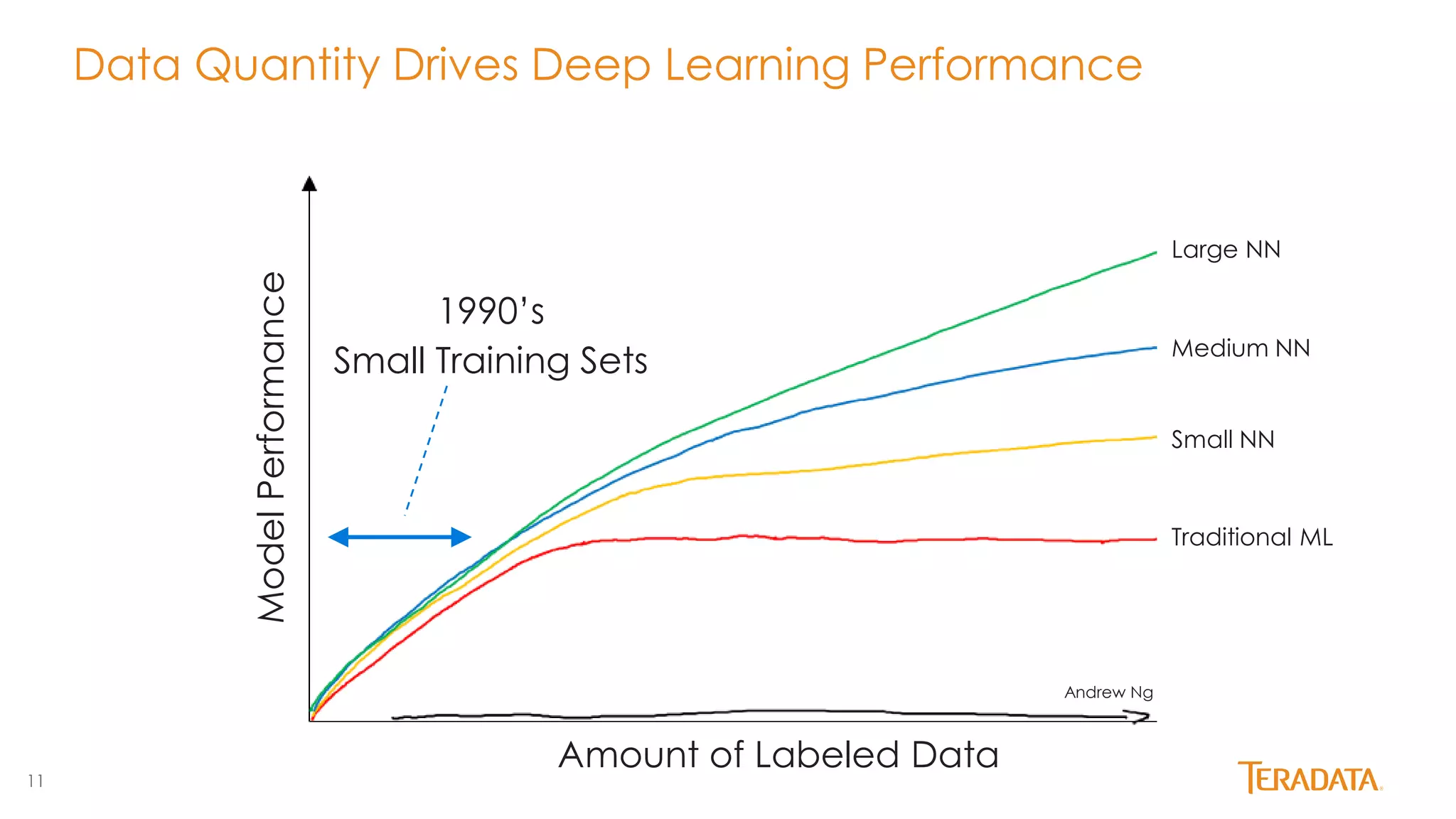

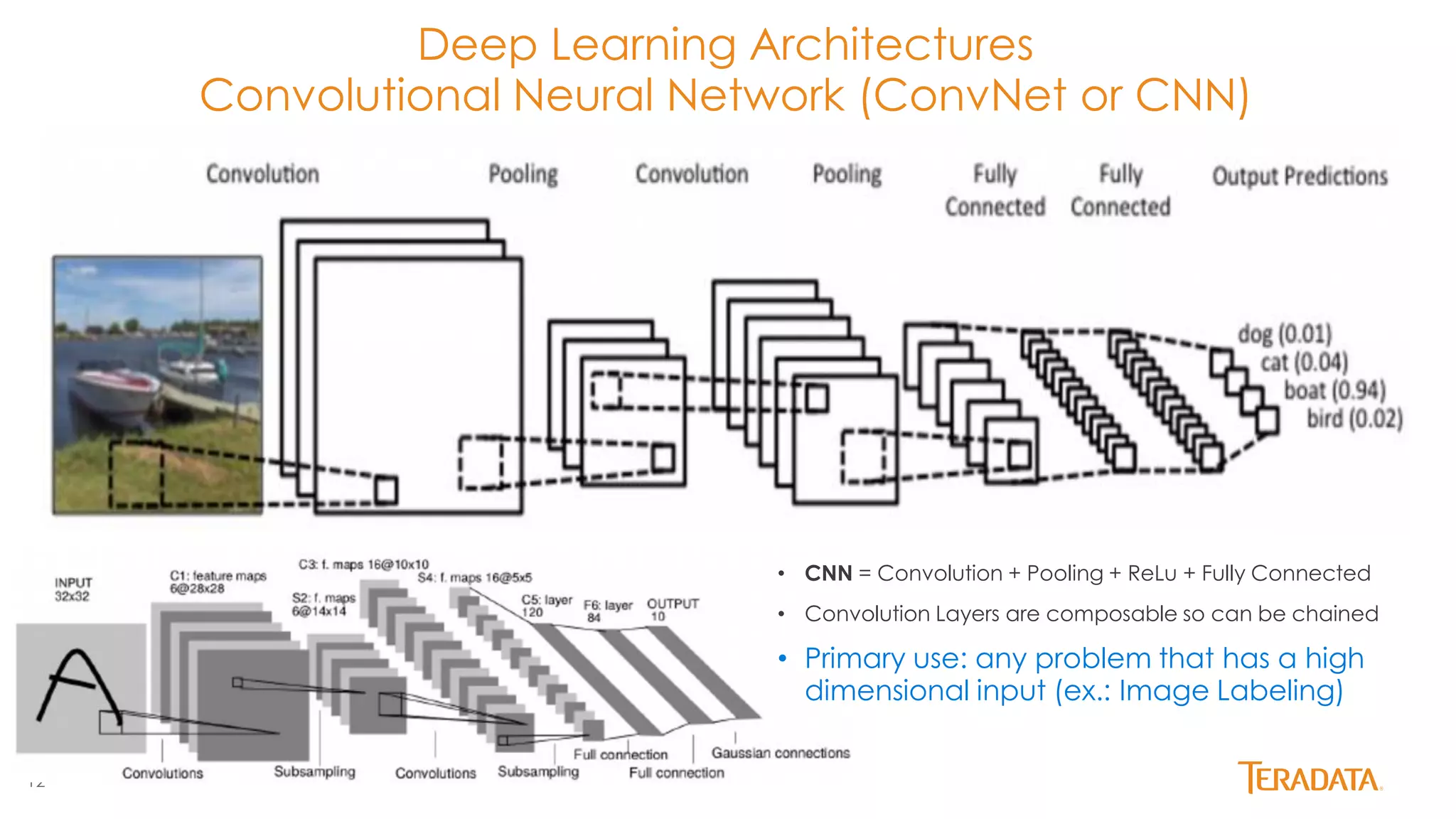

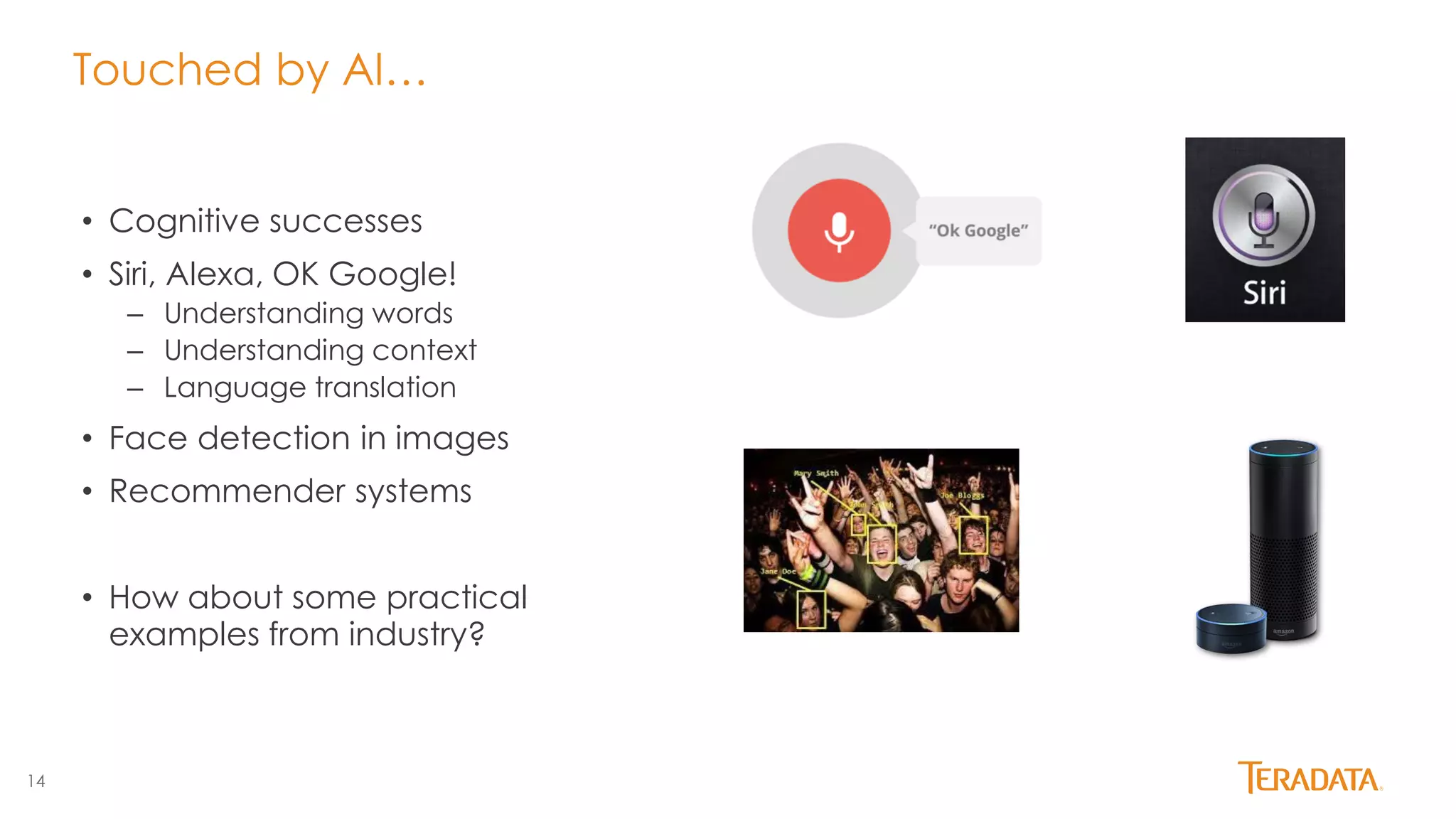

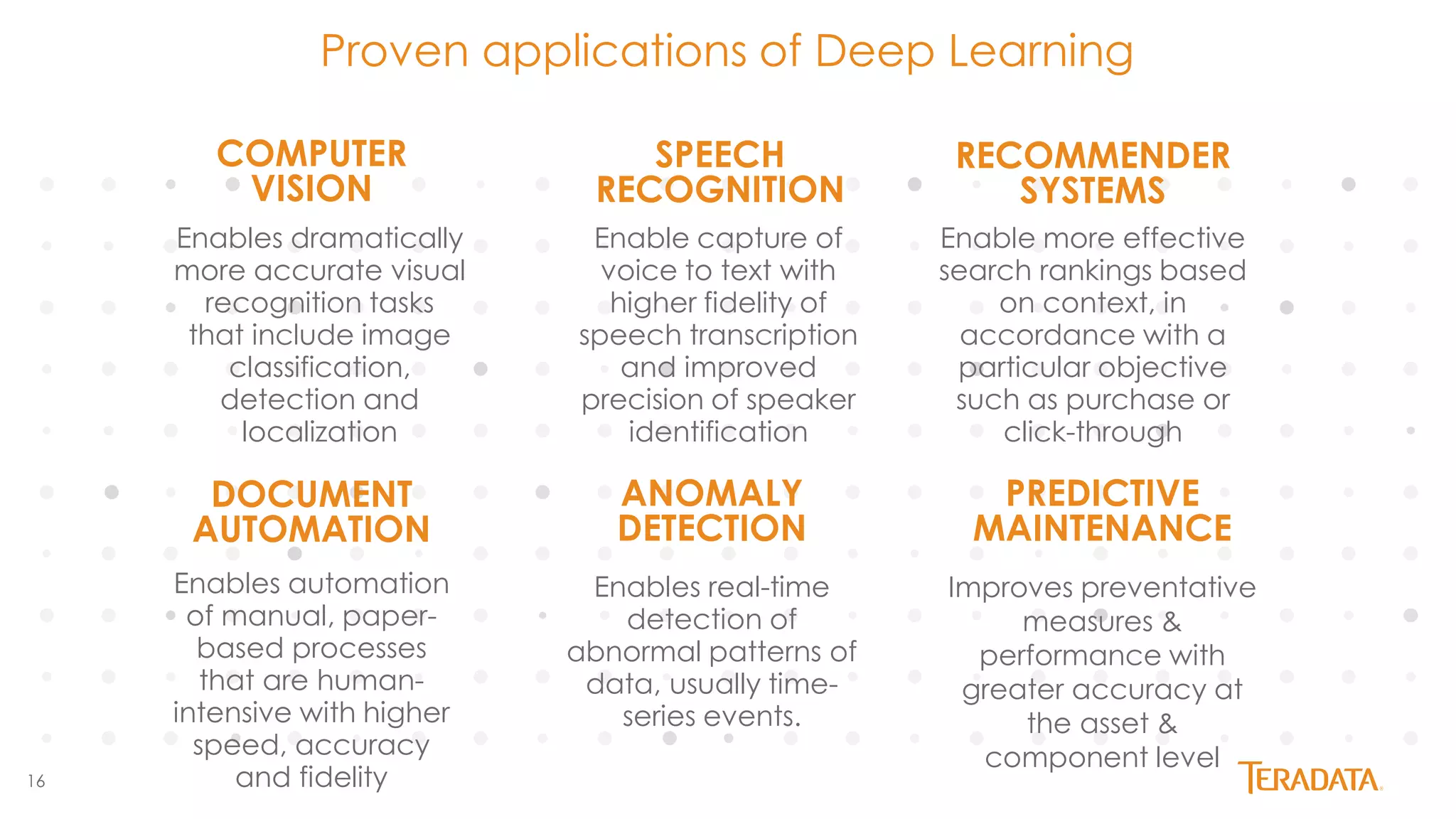

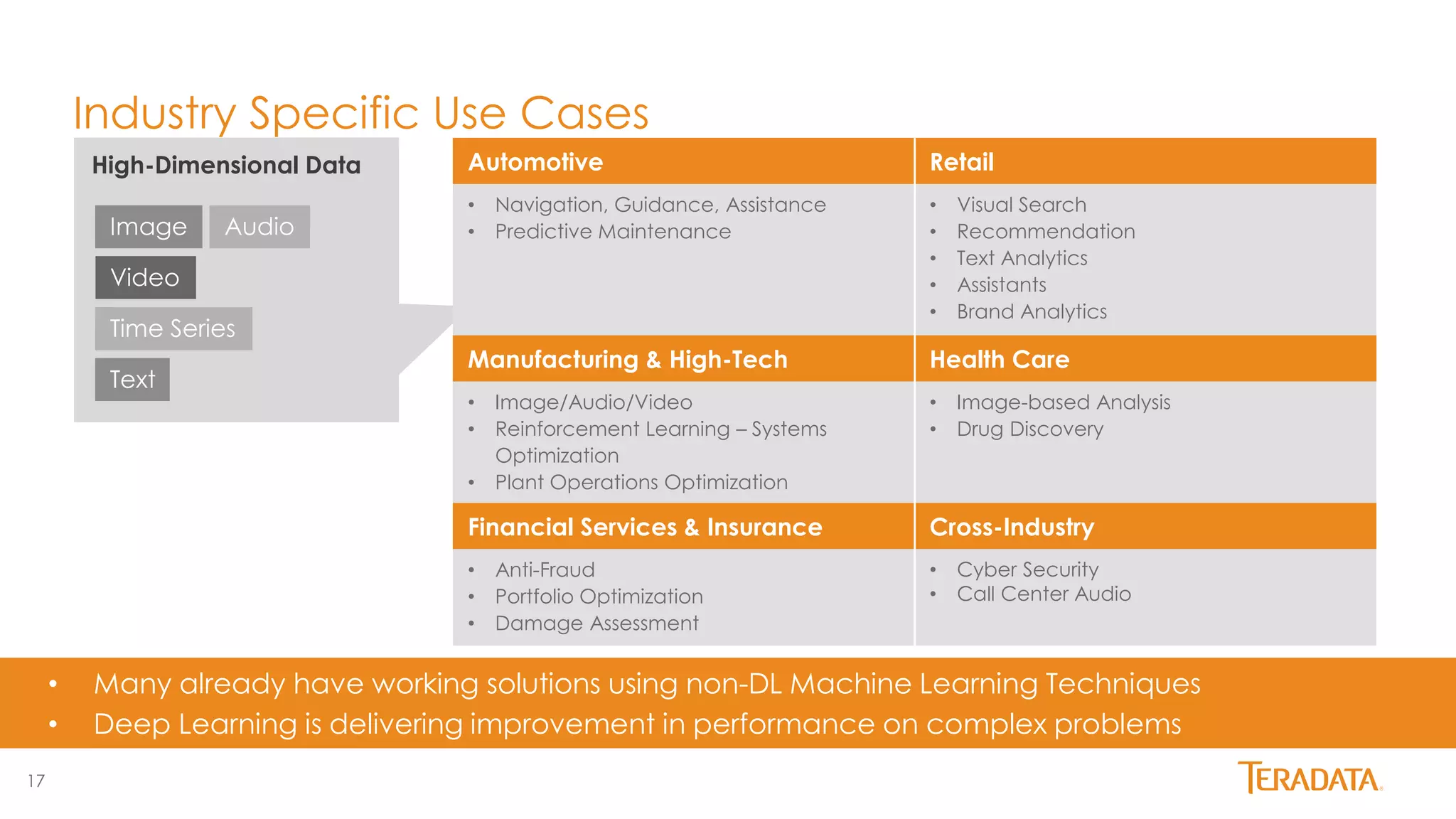

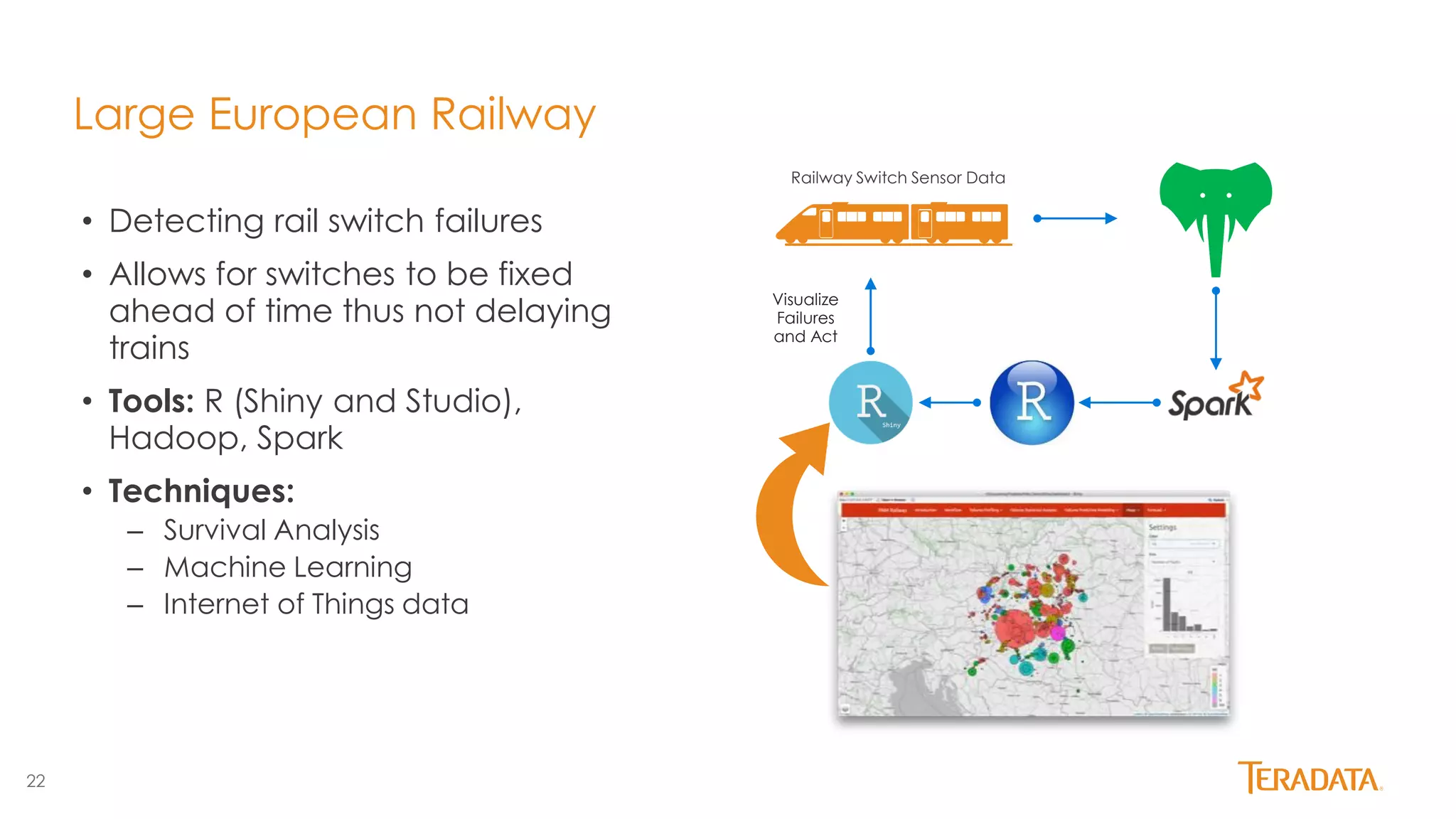

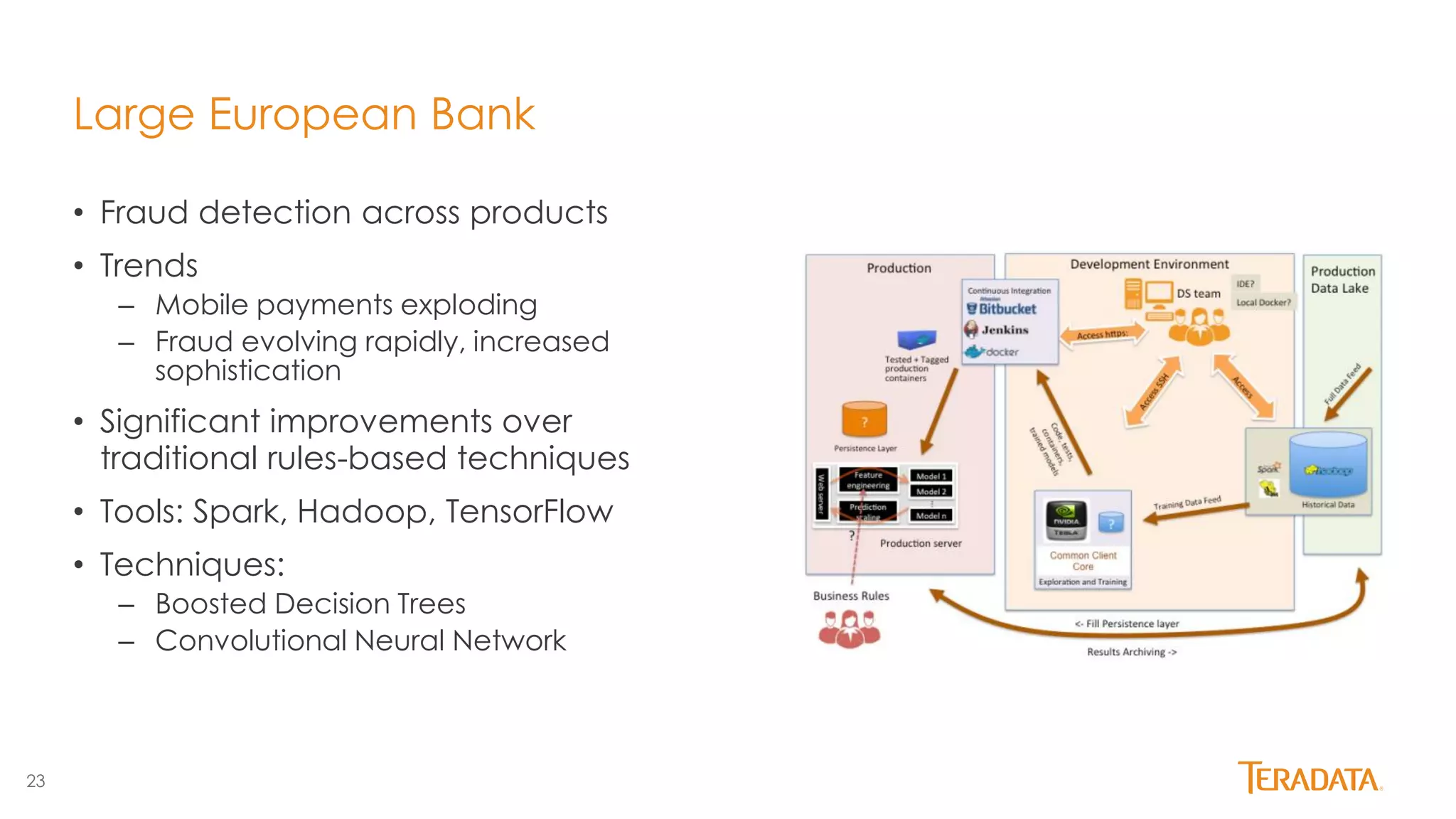

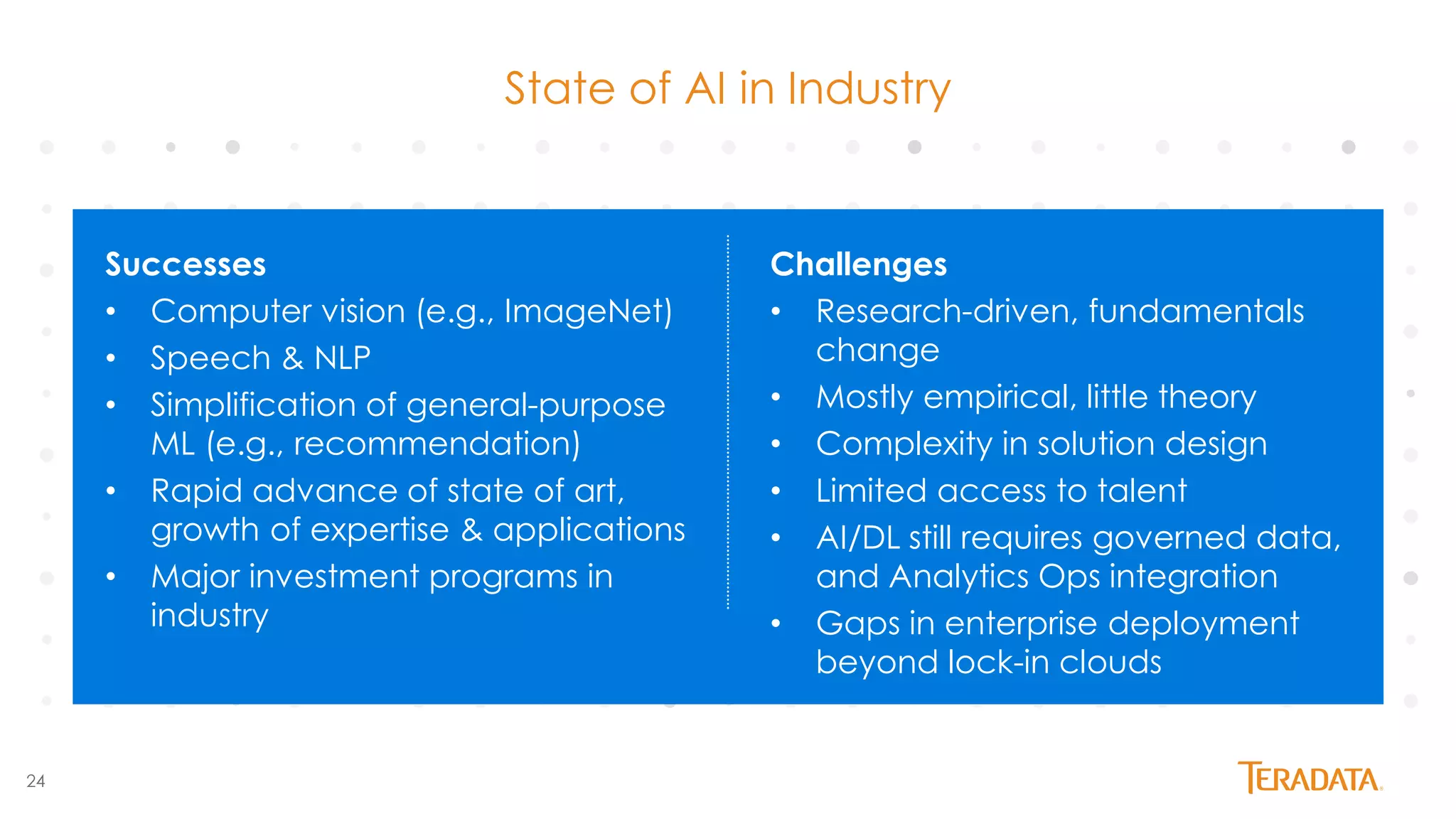

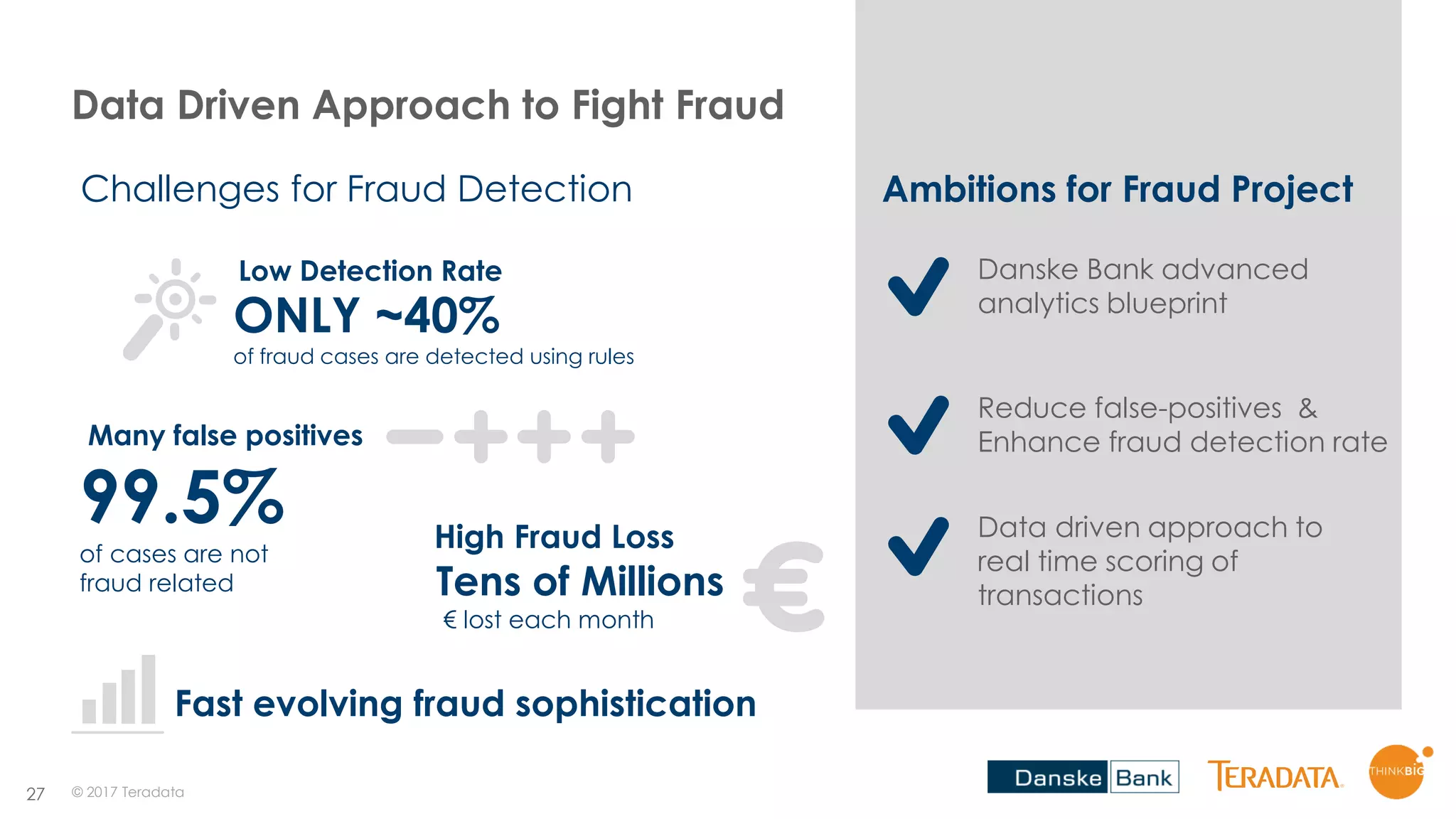

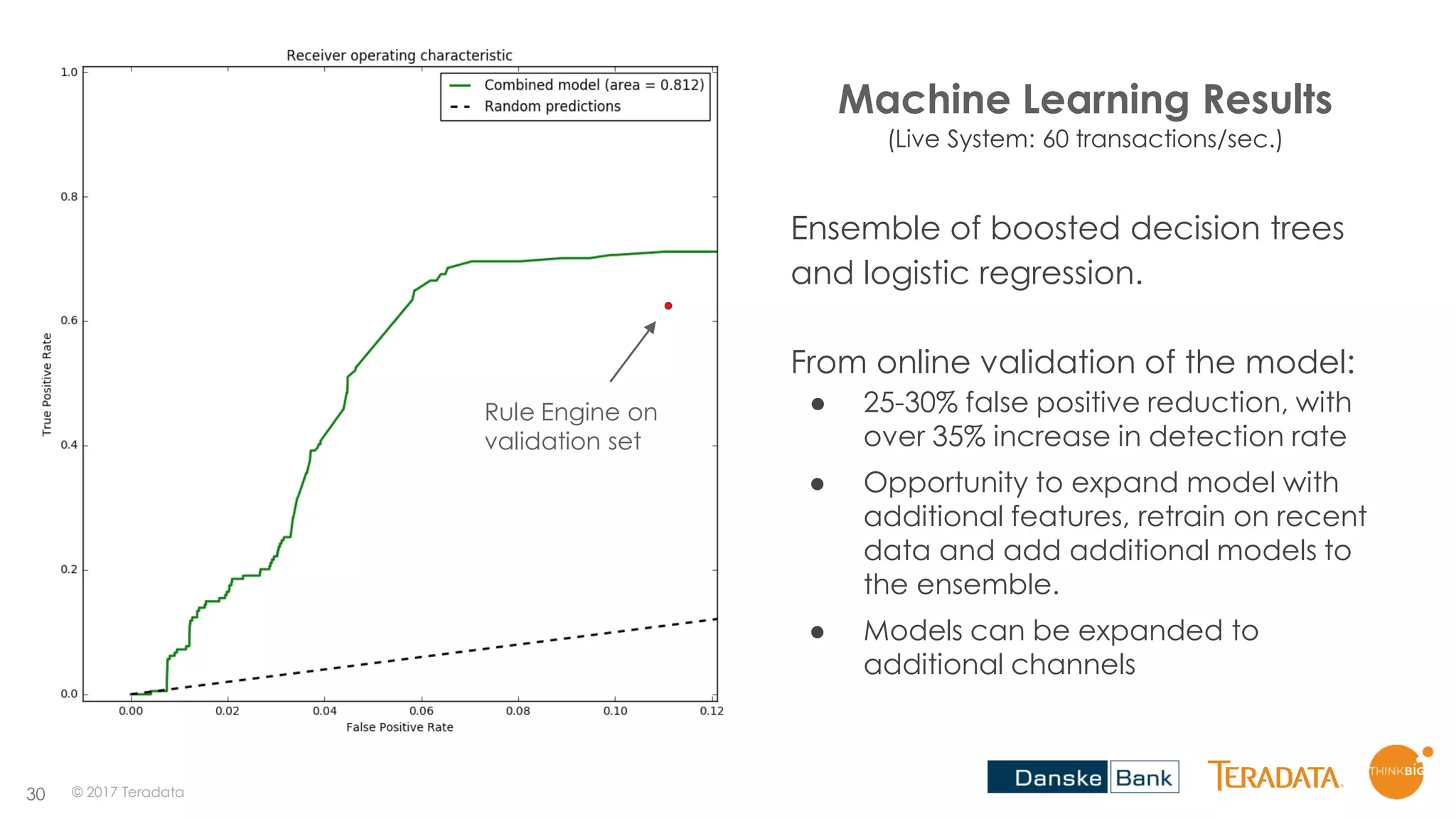

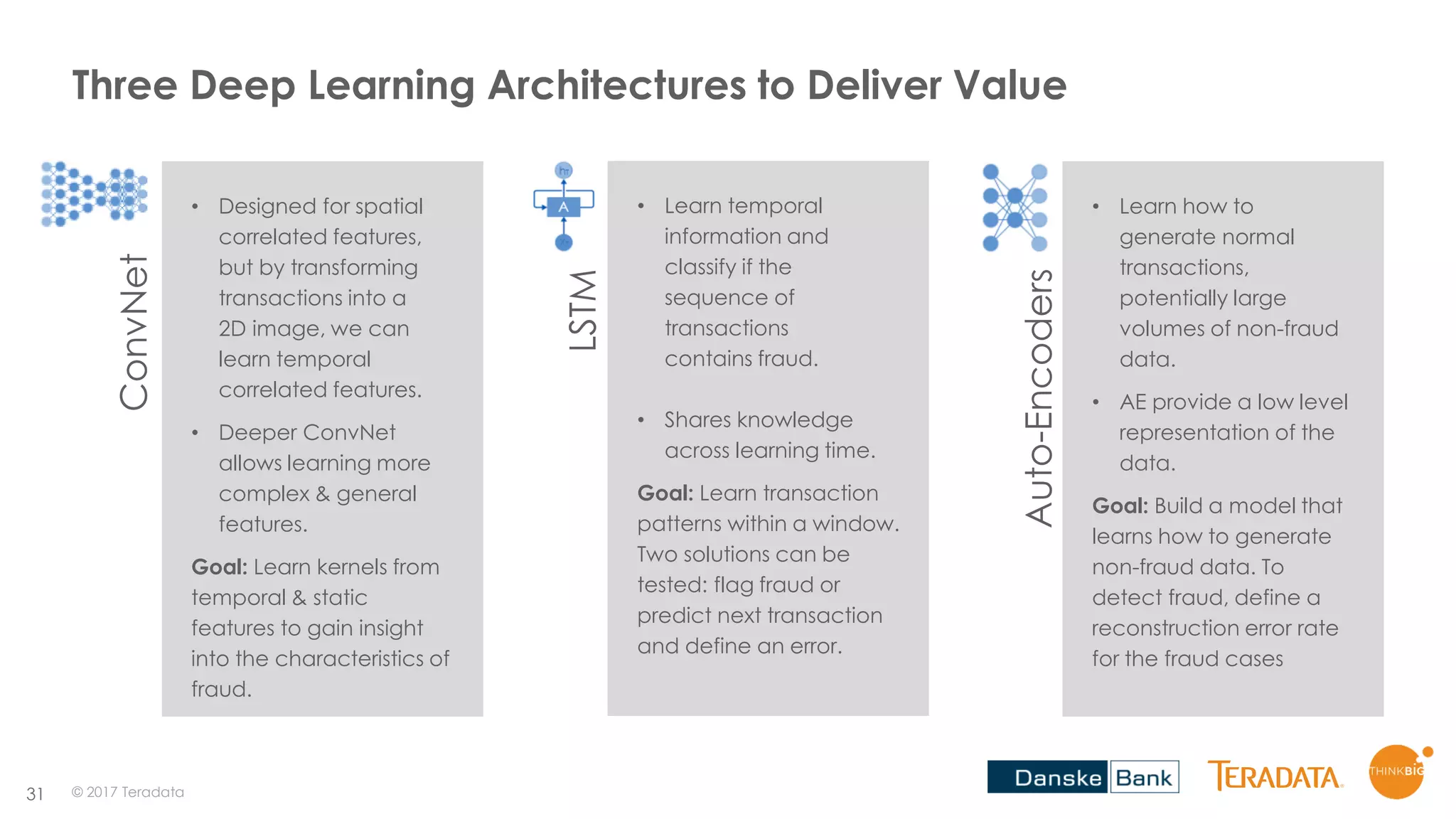

This document discusses AI in the enterprise from past, present, and future perspectives. It provides an overview of the history and recent developments in AI and deep learning, including improved performance on tasks like image recognition. Case studies are presented showing how various large companies have successfully applied deep learning techniques like convolutional neural networks to problems in different industries involving computer vision, predictive maintenance, fraud detection, and more. The importance of data quantity for deep learning performance is highlighted. The final sections discuss challenges in AI adoption and the importance of piloting models before full production deployment.

![32

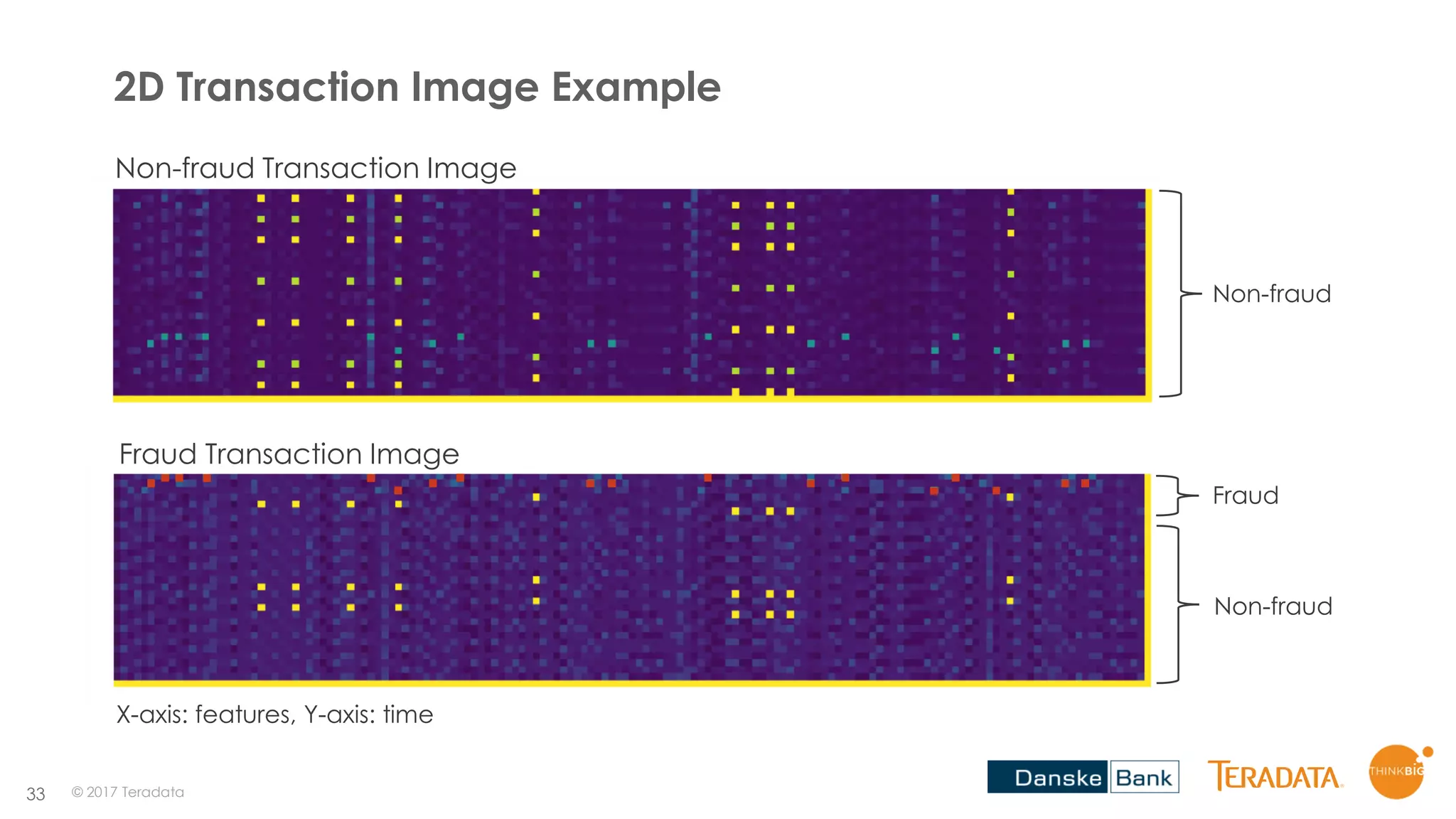

How Can We Create an Image From Bank Transactions?

t0 X_0, X_1, ... X_n

dt

t1 X_0, X_1, ... X_n

t2 X_0, X_1, ... X_n

ts X_0, X_1, ... X_n

...

Top k Features Correlation

...

X_0

X_41 X_5

X_30

X_29X_31X_10

X_37

X_3

X_1

X_42 X_40

X_32

X_15X_35X_2

X_16

X_31

X_2

X_3 X_15

X_4

X_1X_28X_40

X_31

X_49

X_n

X_26 X_9

X_40

X_35X_28X_2

X_17

X_1

...

X_0

X_41 X_5

X_30

X_29X_31X_10

X_37

X_3

X_1

X_42 X_40

X_32

X_15X_35X_2

X_16

X_31

X_2

X_3 X_15

X_4

X_1X_28X_40

X_31

X_49

X_n

X_26 X_9

X_40

X_35X_28X_2

X_17

X_1

...

...

...

...

...

X_0

X_41 X_5

X_30

X_29X_31X_10

X_37

X_3

X_1

X_42 X_40

X_32

X_15X_35X_2

X_16

X_31

X_2

X_3 X_15

X_4

X_1X_28X_40

X_31

X_49

X_n

X_26 X_9

X_40

X_35X_28X_2

X_17

X_1

N

dt

t0

t1

ts

Input Output

Raw Features

Add correlated features

in a clock-wise manner

© 2017 Teradata

Image size is:

[10 x 3, 50 x 3, 1]

Time

Strides of 3](https://image.slidesharecdn.com/aiintheenterprisepastpresentfuture-stampedeconaisummit2017-171030121010/75/AI-in-the-Enterprise-Past-Present-Future-StampedeCon-AI-Summit-2017-32-2048.jpg)
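Slide 32 ("How Can We Create an Image From Bank Transactions?") turns a window of transaction features into a single-channel image that a convolutional network can consume. Below is a minimal pure-Python sketch under stated assumptions. Each raw feature sits at the centre of a 3 x 3 block. The eight surrounding cells hold its eight most correlated features, ranked by absolute Pearson correlation and placed clockwise from the top-left corner. Time steps map to block rows and features to block columns. The value k = 8, the correlation measure, the starting corner, and the helper names `top_k_correlated`, `transactions_to_image` and `conv2d_stride3` are all assumptions for illustration, not Teradata's implementation. A 3 x 3 kernel applied with stride 3 then visits each feature block exactly once.

```python
import math
import random
from typing import List, Sequence

Matrix = List[List[float]]

# Assumed clockwise order of the 8 neighbour cells, starting at the top-left
# corner of each 3 x 3 block; offsets are (row, column) relative to the centre.
CLOCKWISE = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]


def pearson(a: Sequence[float], b: Sequence[float]) -> float:
    """Pearson correlation of two equal-length series (0.0 if either is constant)."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    if va == 0 or vb == 0:
        return 0.0
    return cov / math.sqrt(va * vb)


def top_k_correlated(history: Matrix, k: int = 8) -> List[List[int]]:
    """For each feature, the indices of the k other features with the largest
    absolute correlation. history[t][j] is feature j at time step t."""
    n_feat = len(history[0])
    if n_feat < k + 1:
        raise ValueError("need at least k + 1 features")
    cols = [[row[j] for row in history] for j in range(n_feat)]
    neighbours = []
    for i in range(n_feat):
        others = [j for j in range(n_feat) if j != i]
        others.sort(key=lambda j: abs(pearson(cols[i], cols[j])), reverse=True)
        neighbours.append(others[:k])
    return neighbours


def transactions_to_image(window: Matrix, neighbours: List[List[int]]) -> Matrix:
    """Build a (3*T) x (3*N) single-channel image with one 3 x 3 block per
    (time step, feature): centre = the feature, ring = its correlated features."""
    n_steps, n_feat = len(window), len(window[0])
    img = [[0.0] * (3 * n_feat) for _ in range(3 * n_steps)]
    for t in range(n_steps):
        for i in range(n_feat):
            r, c = 3 * t + 1, 3 * i + 1  # centre cell of block (t, i)
            img[r][c] = window[t][i]
            for (dr, dc), j in zip(CLOCKWISE, neighbours[i]):
                img[r + dr][c + dc] = window[t][j]
    return img


def conv2d_stride3(img: Matrix, kernel: Matrix) -> Matrix:
    """Valid 3 x 3 cross-correlation with stride 3: one output per block."""
    h, w = len(img), len(img[0])
    return [
        [
            sum(kernel[a][b] * img[r + a][c + b] for a in range(3) for b in range(3))
            for c in range(0, w - 2, 3)
        ]
        for r in range(0, h - 2, 3)
    ]


if __name__ == "__main__":
    random.seed(0)
    # Synthetic stand-in data: 10 time steps x 50 features (not real transactions).
    window = [[random.gauss(0.0, 1.0) for _ in range(50)] for _ in range(10)]
    # Demo only: correlations taken from the window itself rather than history.
    neighbours = top_k_correlated(window, k=8)
    image = transactions_to_image(window, neighbours)
    print(len(image), len(image[0]))  # → 30 150
    mean_kernel = [[1 / 9] * 3 for _ in range(3)]
    features = conv2d_stride3(image, mean_kernel)
    print(len(features), len(features[0]))  # → 10 50
```

With 10 time steps and 50 features this produces the [10 x 3, 50 x 3, 1] = 30 x 150 x 1 image from the slide. The stride-3 pass returns one value per (time step, feature) block, giving a 10 x 50 map. In practice the correlation ranking would more plausibly be computed once over historical data and reused for every window. That is again an assumption, since the slide does not say.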