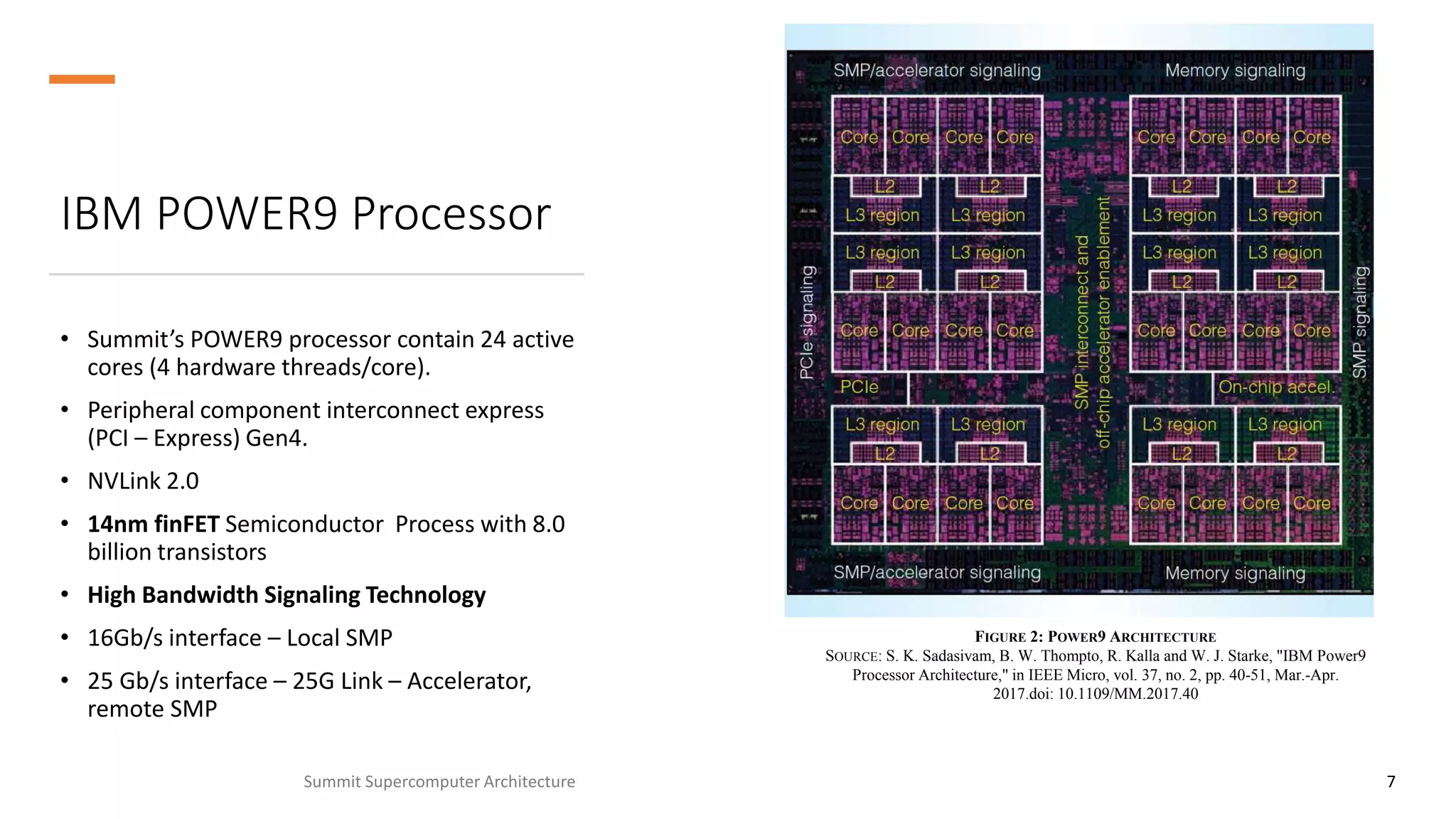

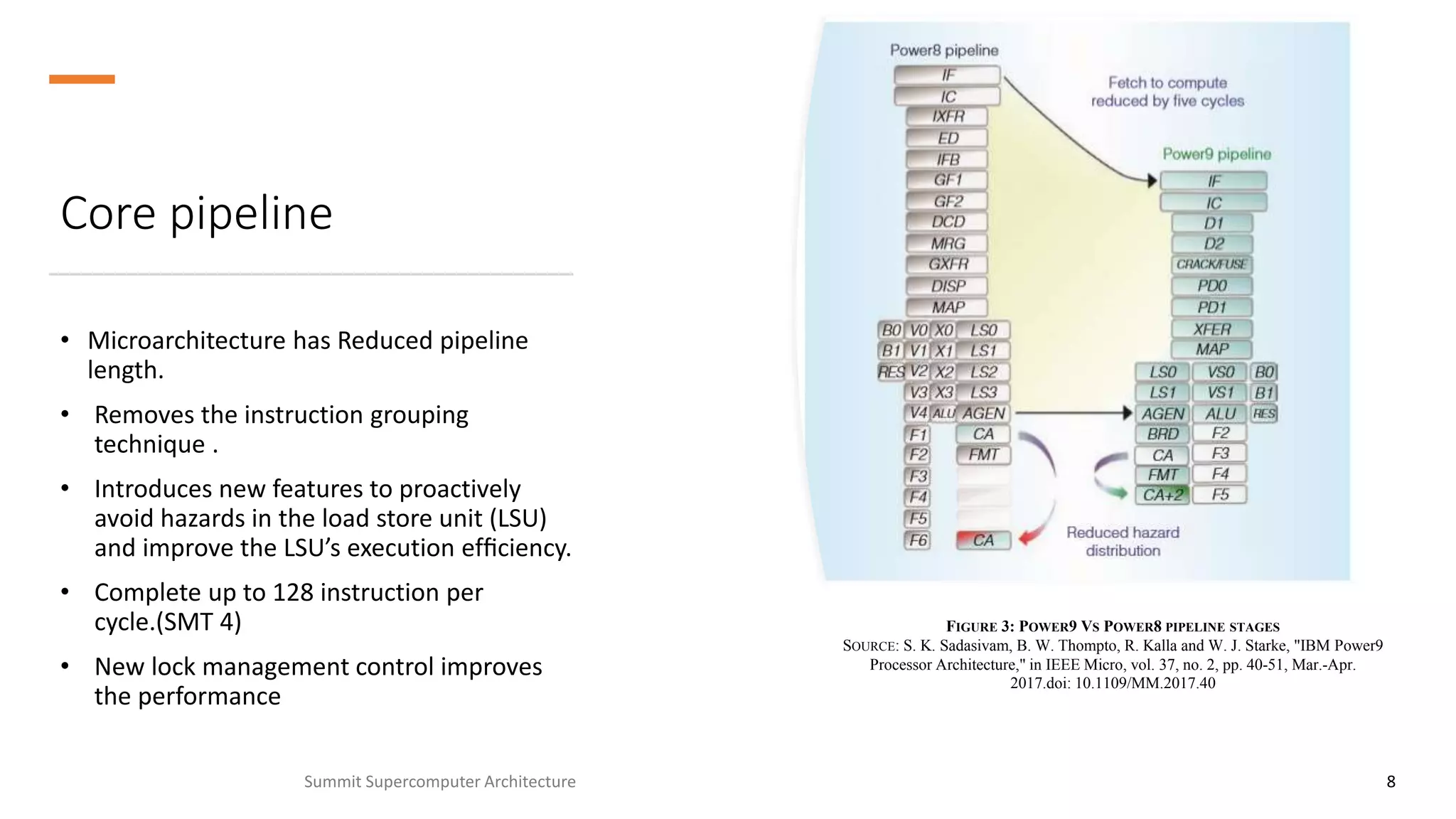

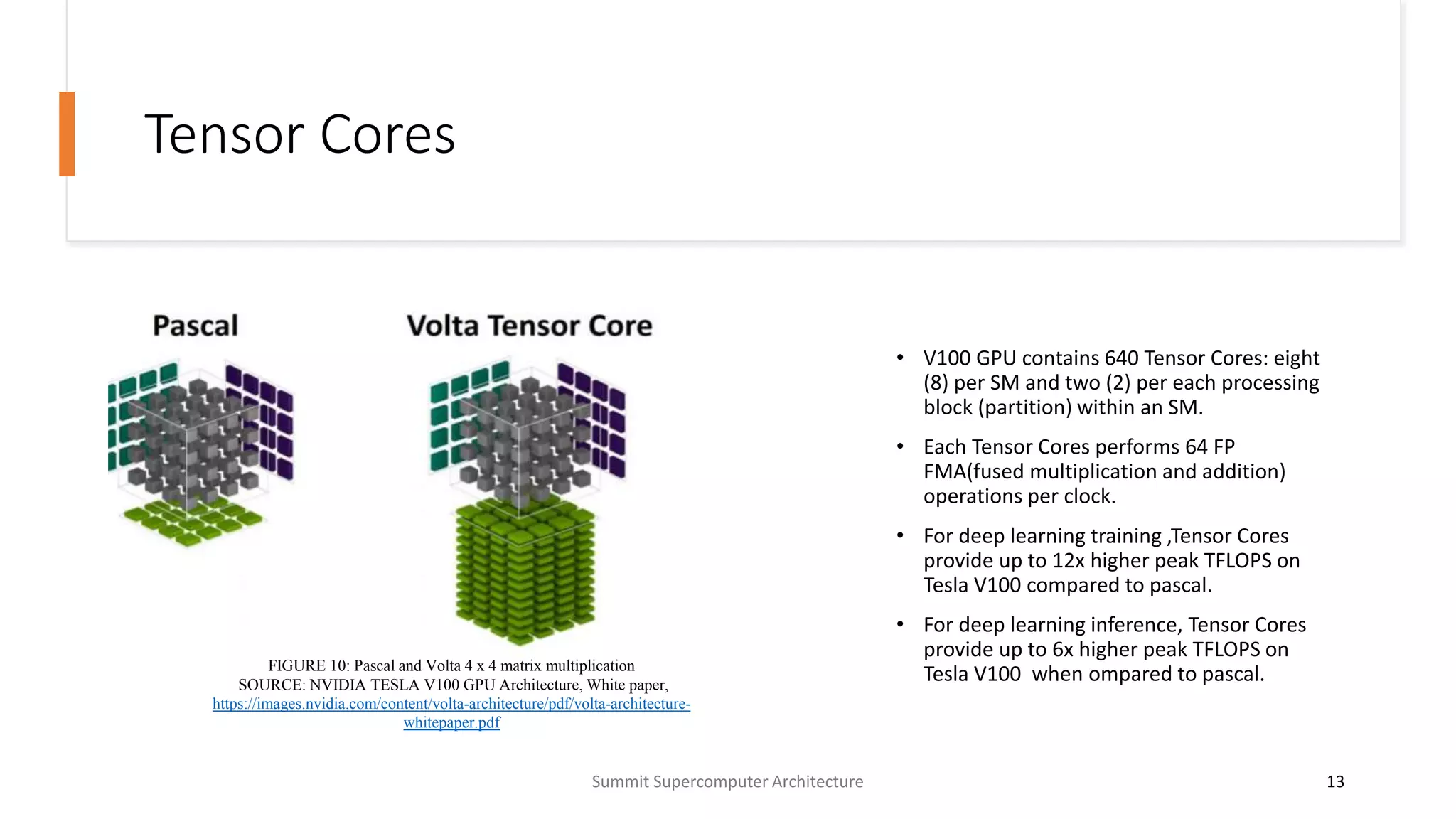

The Summit supercomputer ranked as the fastest computer in the world from 2018 to 2020, with a measured LINPACK performance of 148.6 petaflops and a power efficiency of 14.719 gigaflops per watt. It had 2,414,592 cores across IBM POWER9 processors and 27,648 NVIDIA Tesla V100 GPUs, backed by 250 petabytes of storage. Notably, Summit was used in drug compound research against the coronavirus, screening 8,000 compounds in just two days.
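The performance and efficiency figures quoted above together imply a power draw of roughly 10 megawatts, since efficiency is simply performance divided by power. The following is a minimal sketch of that arithmetic; the function and variable names are illustrative and not taken from any source.

```python
# Figures quoted in the text above.
PERFORMANCE_FLOPS = 148.6e15           # 148.6 petaflops
EFFICIENCY_FLOPS_PER_WATT = 14.719e9   # 14.719 gigaflops per watt


def implied_power_watts(flops: float, flops_per_watt: float) -> float:
    """Return the power draw implied by a performance and an efficiency figure."""
    return flops / flops_per_watt


# Convert watts to megawatts for readability.
power_mw = implied_power_watts(PERFORMANCE_FLOPS, EFFICIENCY_FLOPS_PER_WATT) / 1e6
print(f"Implied power draw: {power_mw:.2f} MW")
```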