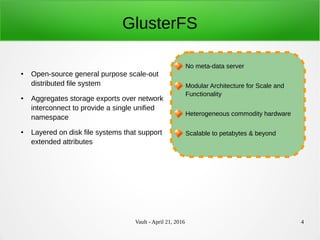

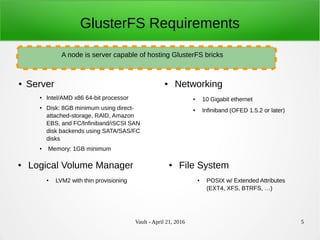

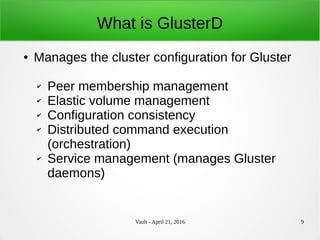

The document discusses GlusterD 2.0, a redesign of the management daemon for the Gluster distributed file system. Some key points:

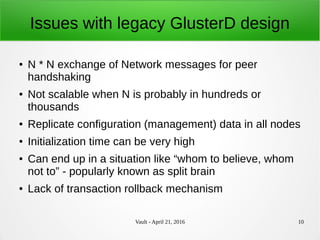

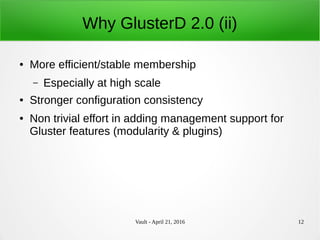

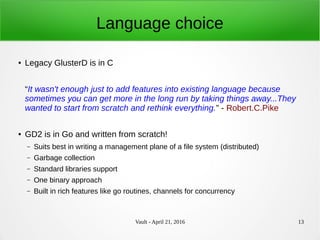

- GlusterD 1.0 had scalability and consistency issues that limited it to hundreds of nodes. GlusterD 2.0 was rewritten from scratch in Go for better performance.

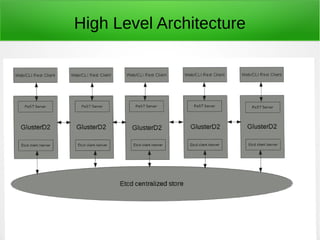

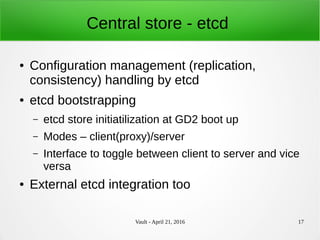

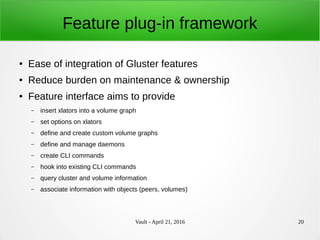

- GlusterD 2.0 uses etcd for centralized management and configuration storage. It has REST APIs and plugins for modularity.

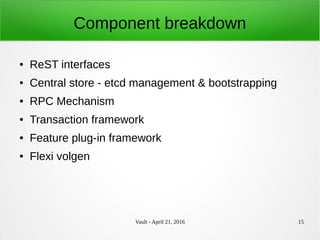

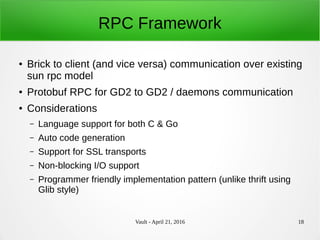

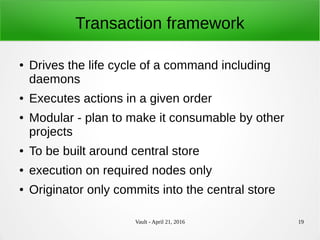

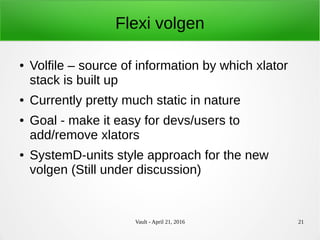

- Components include REST interfaces, etcd backend, RPC framework, transaction system, and a flexible volume generator.

- Upgrades from Gluster 3.x to 4.x will be disruptive, but a migration path will be provided. Gluster