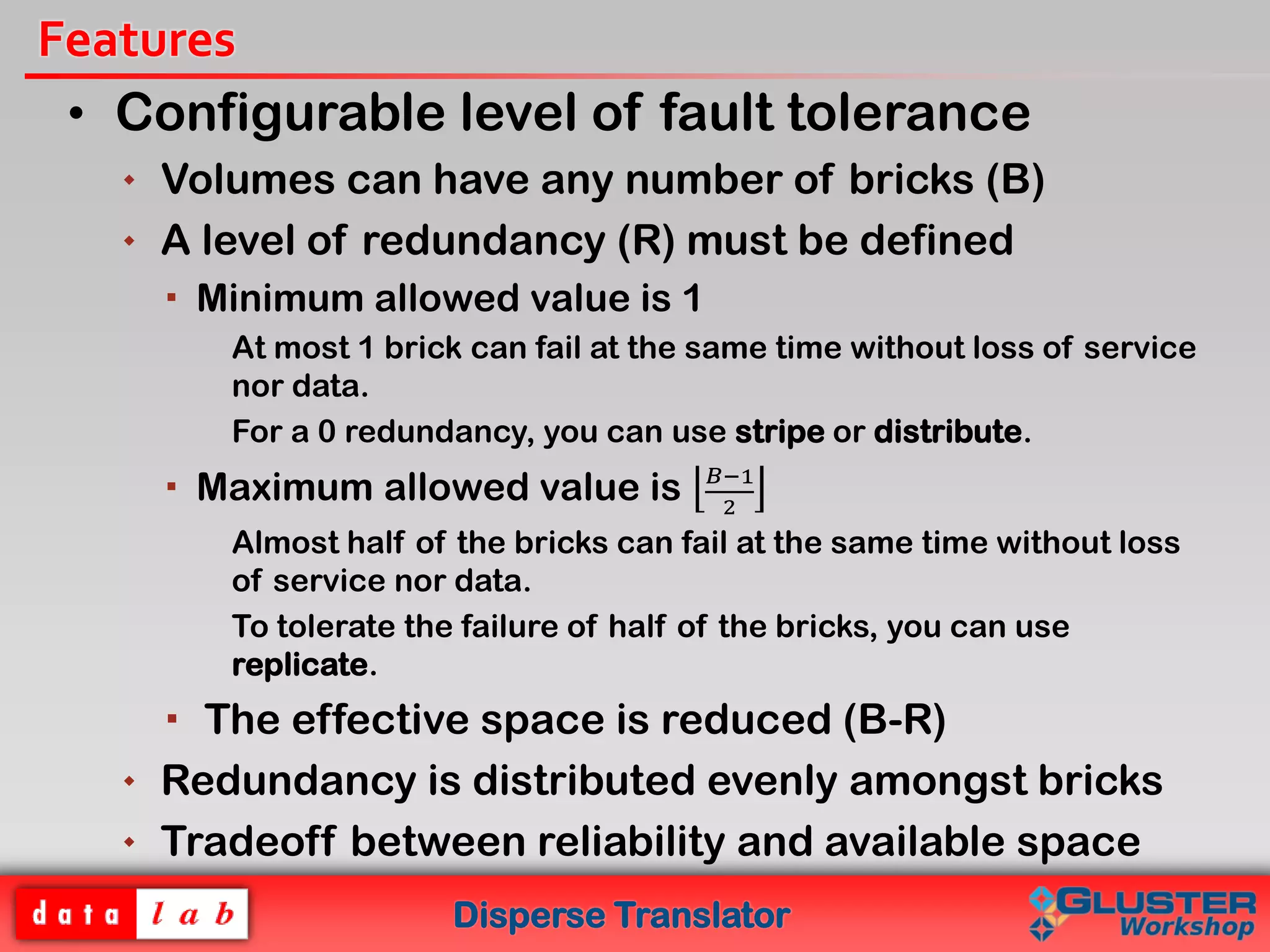

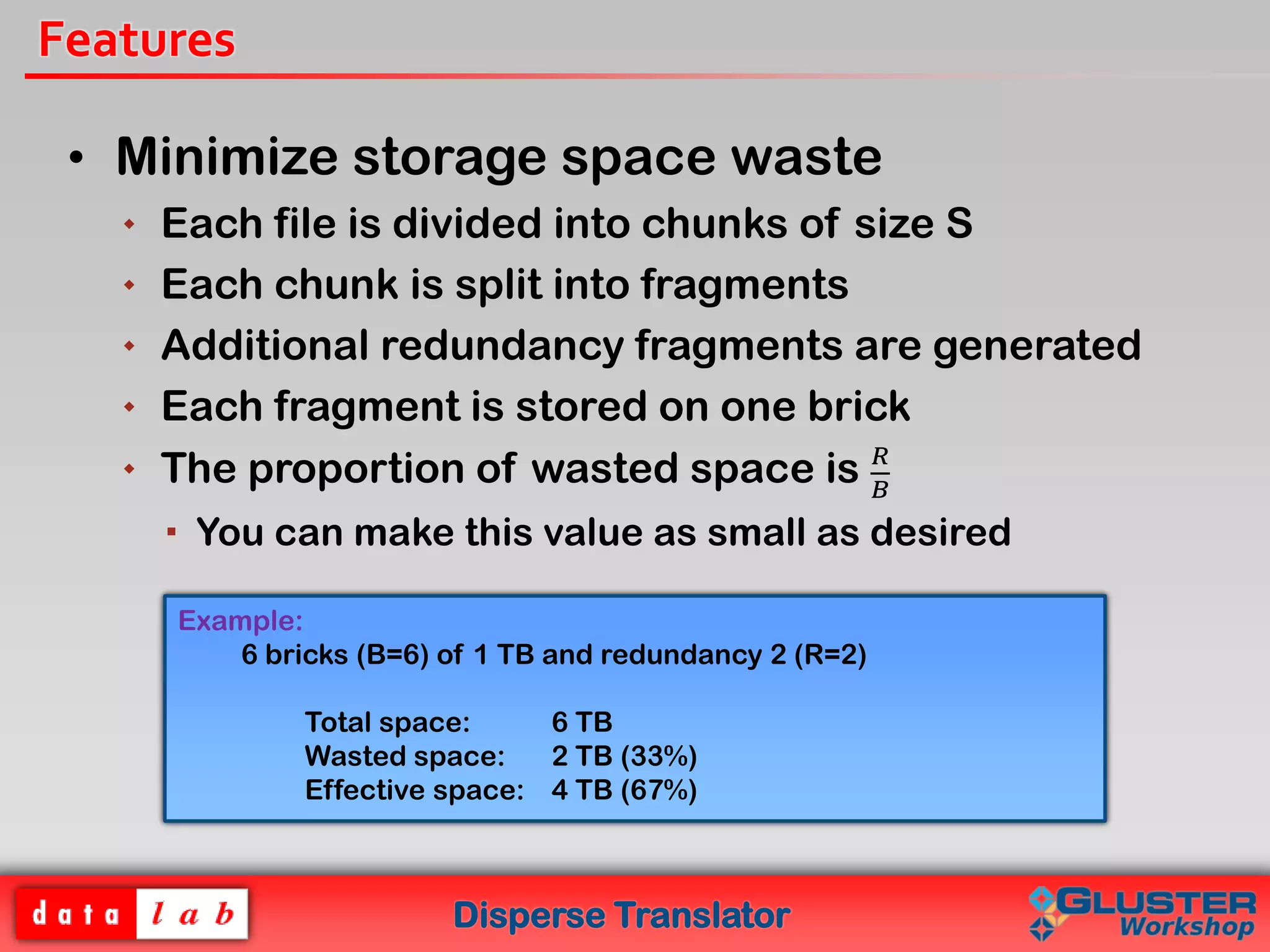

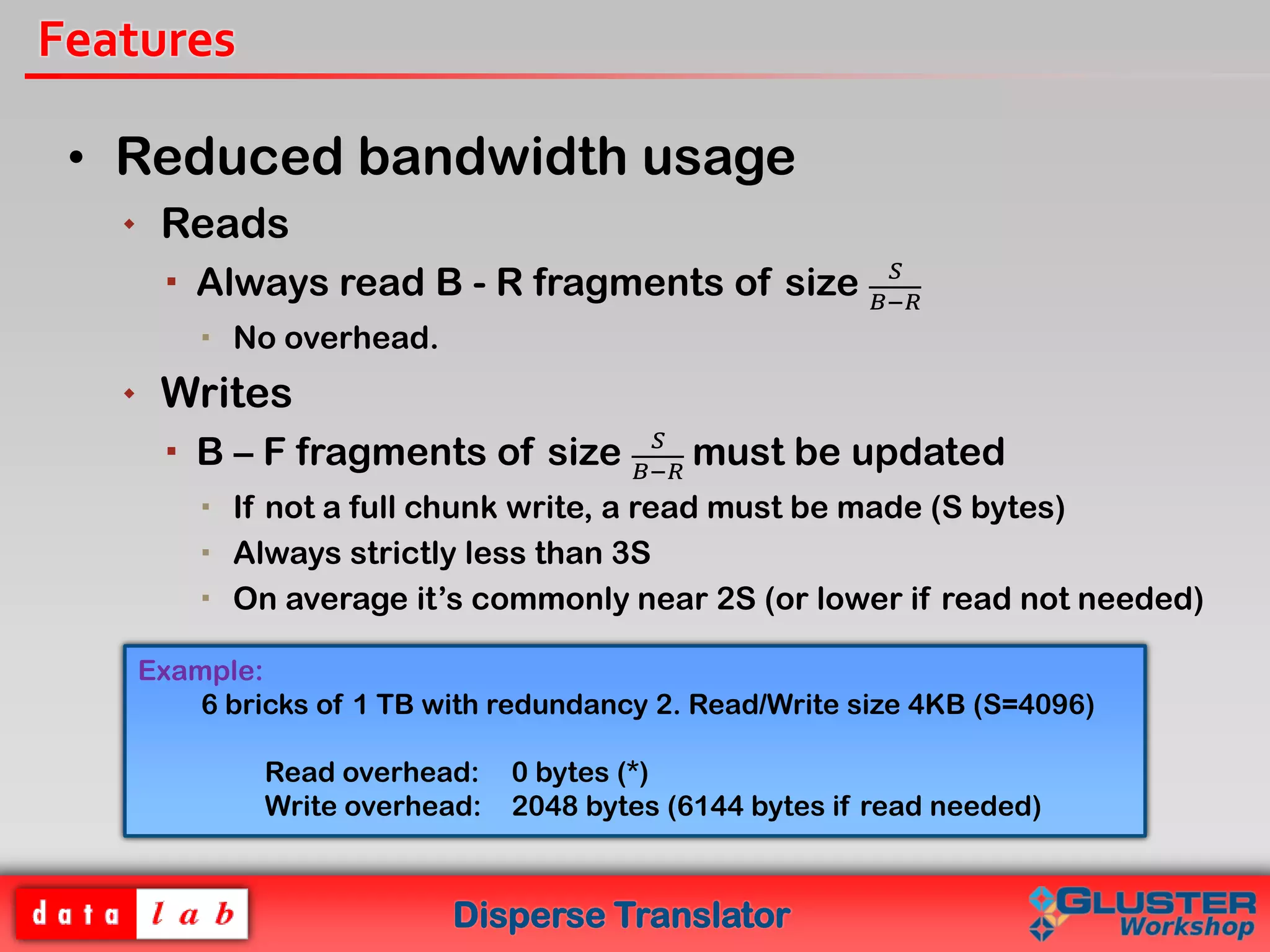

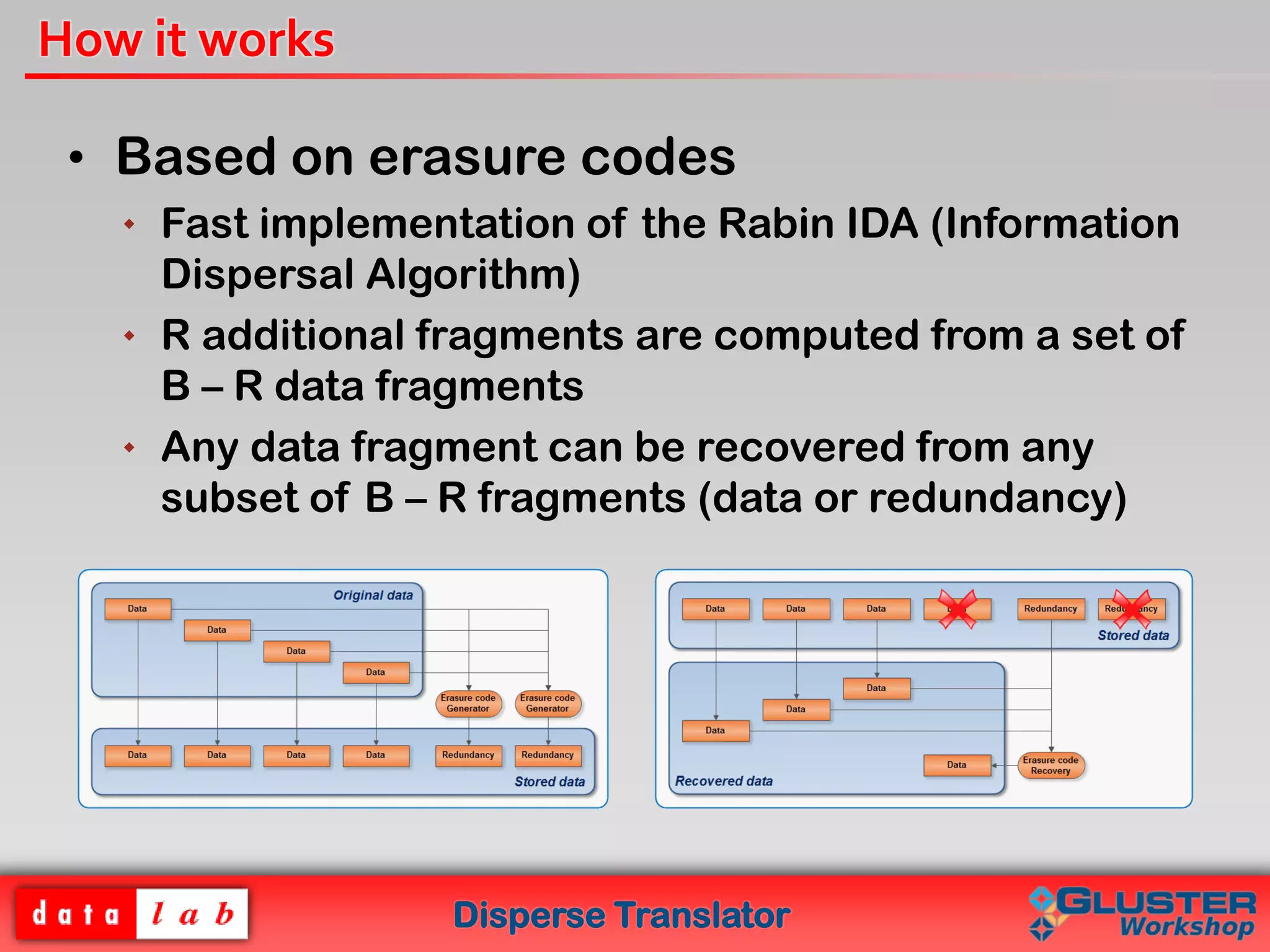

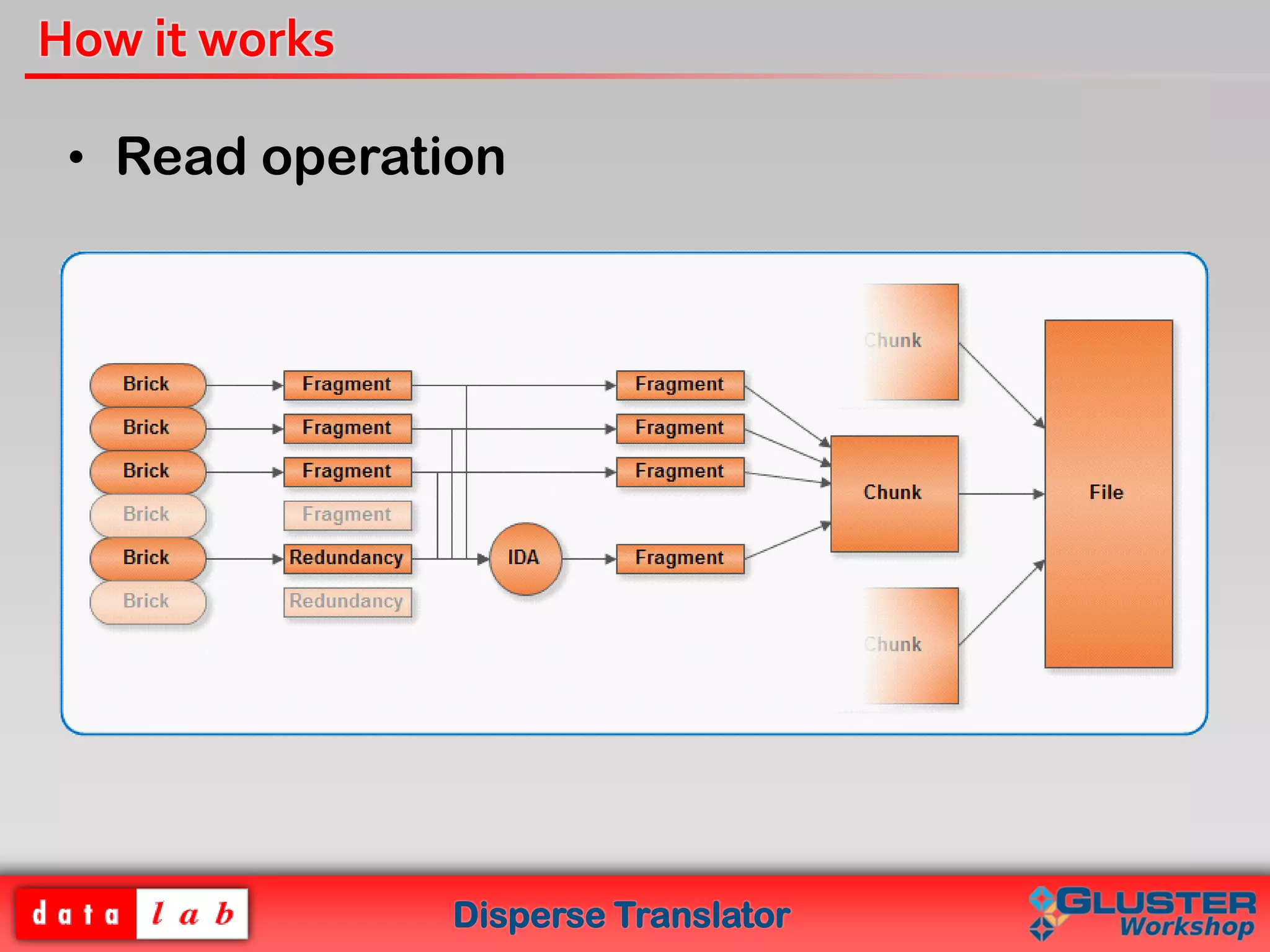

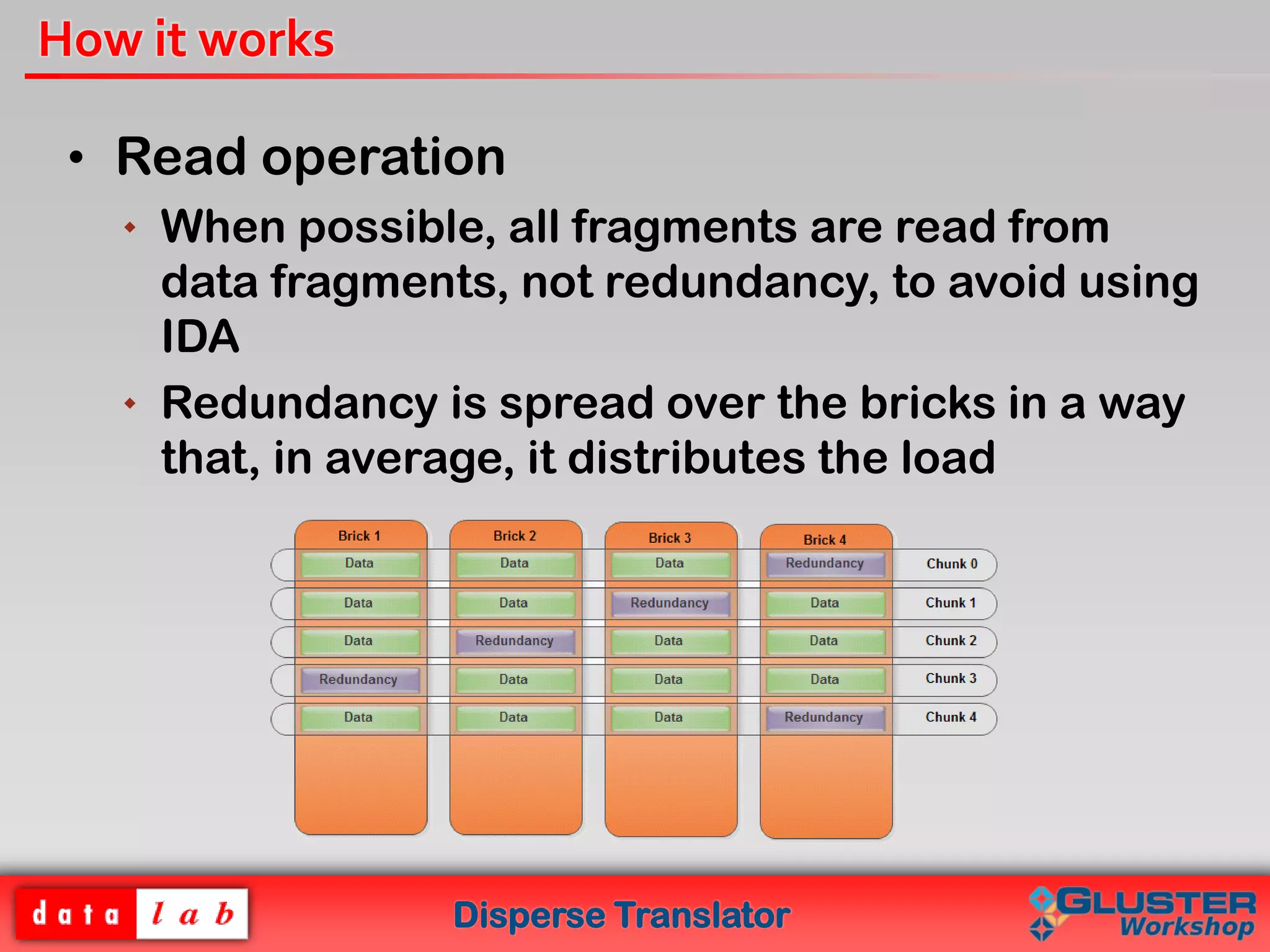

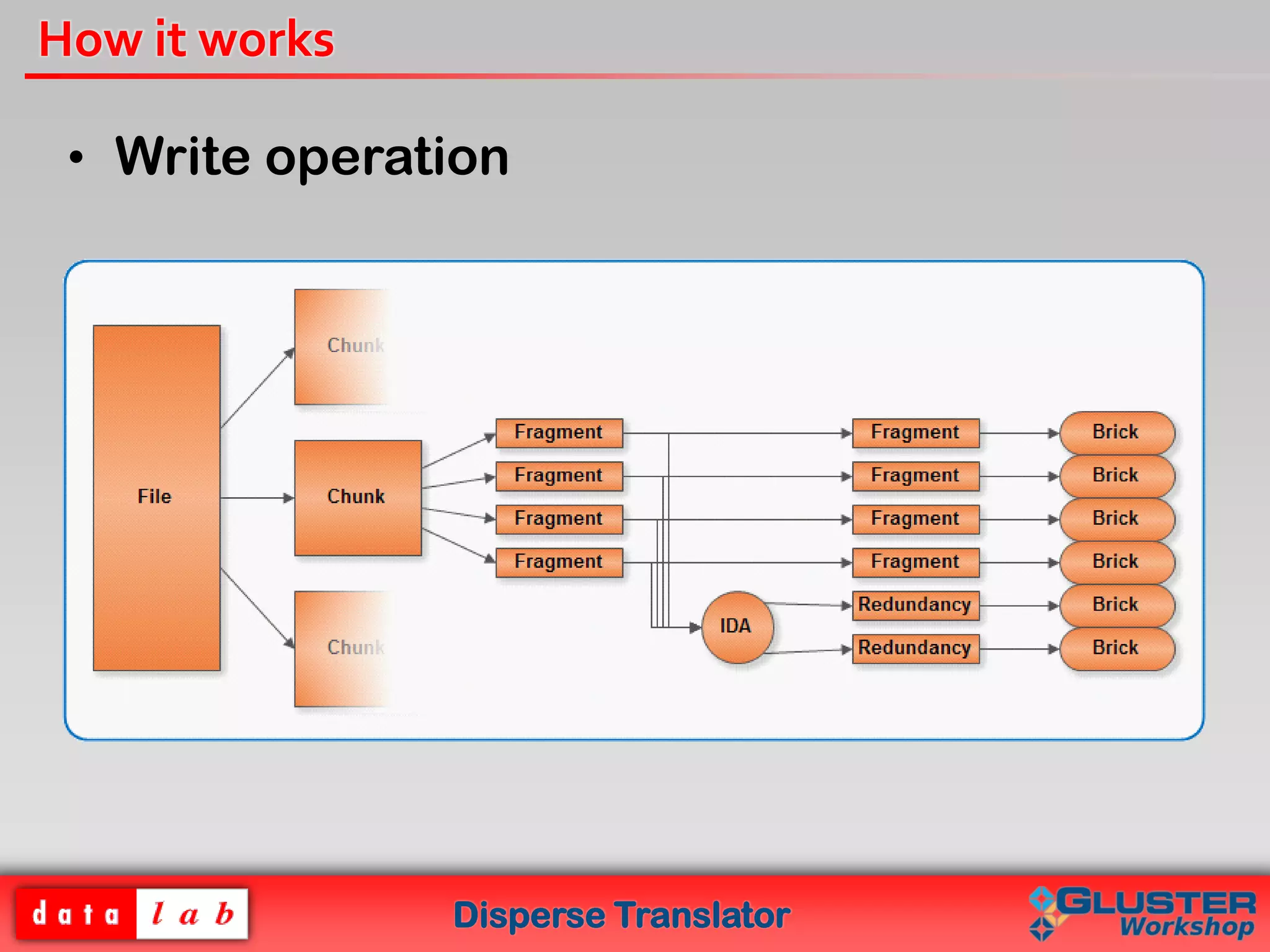

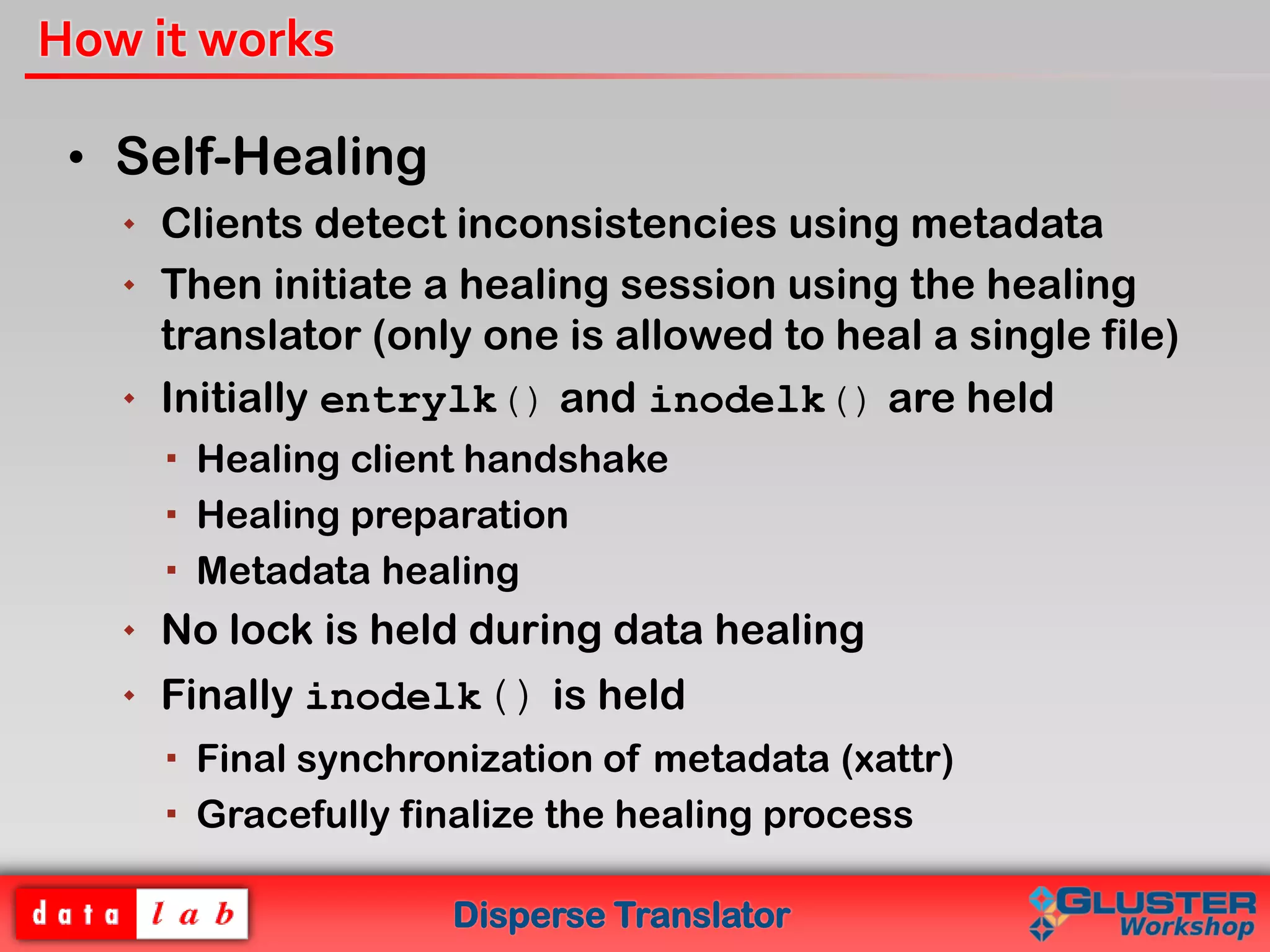

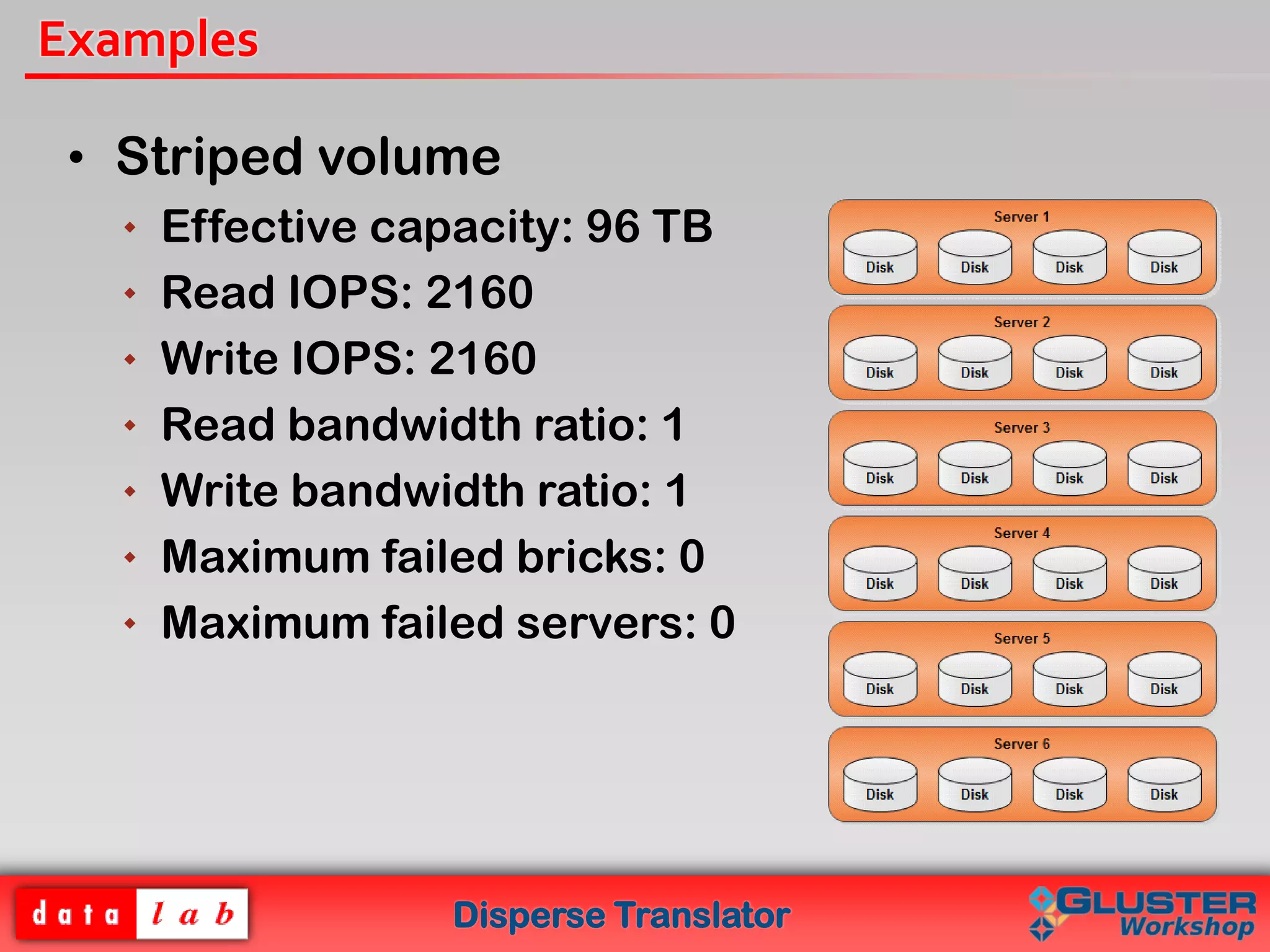

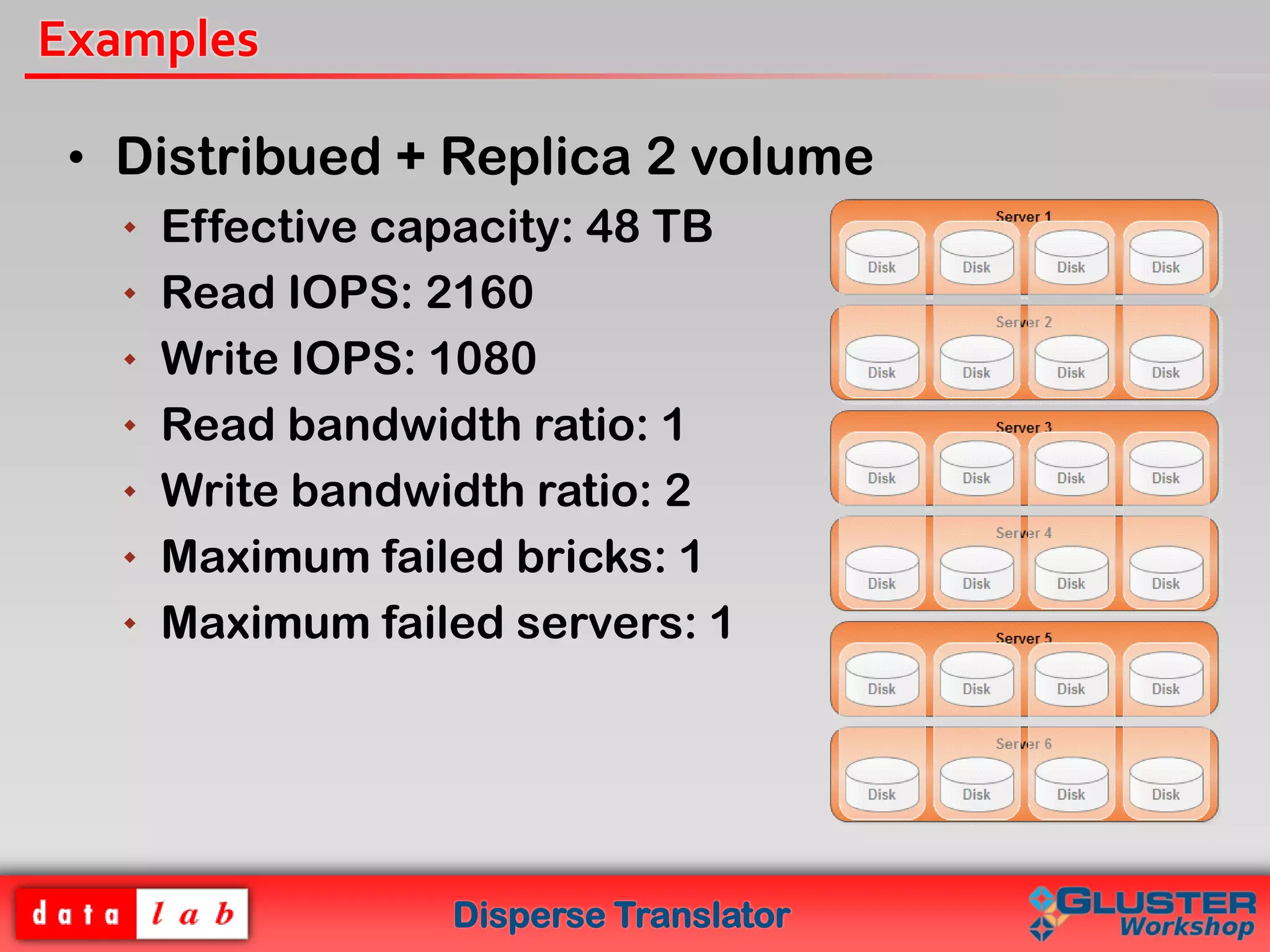

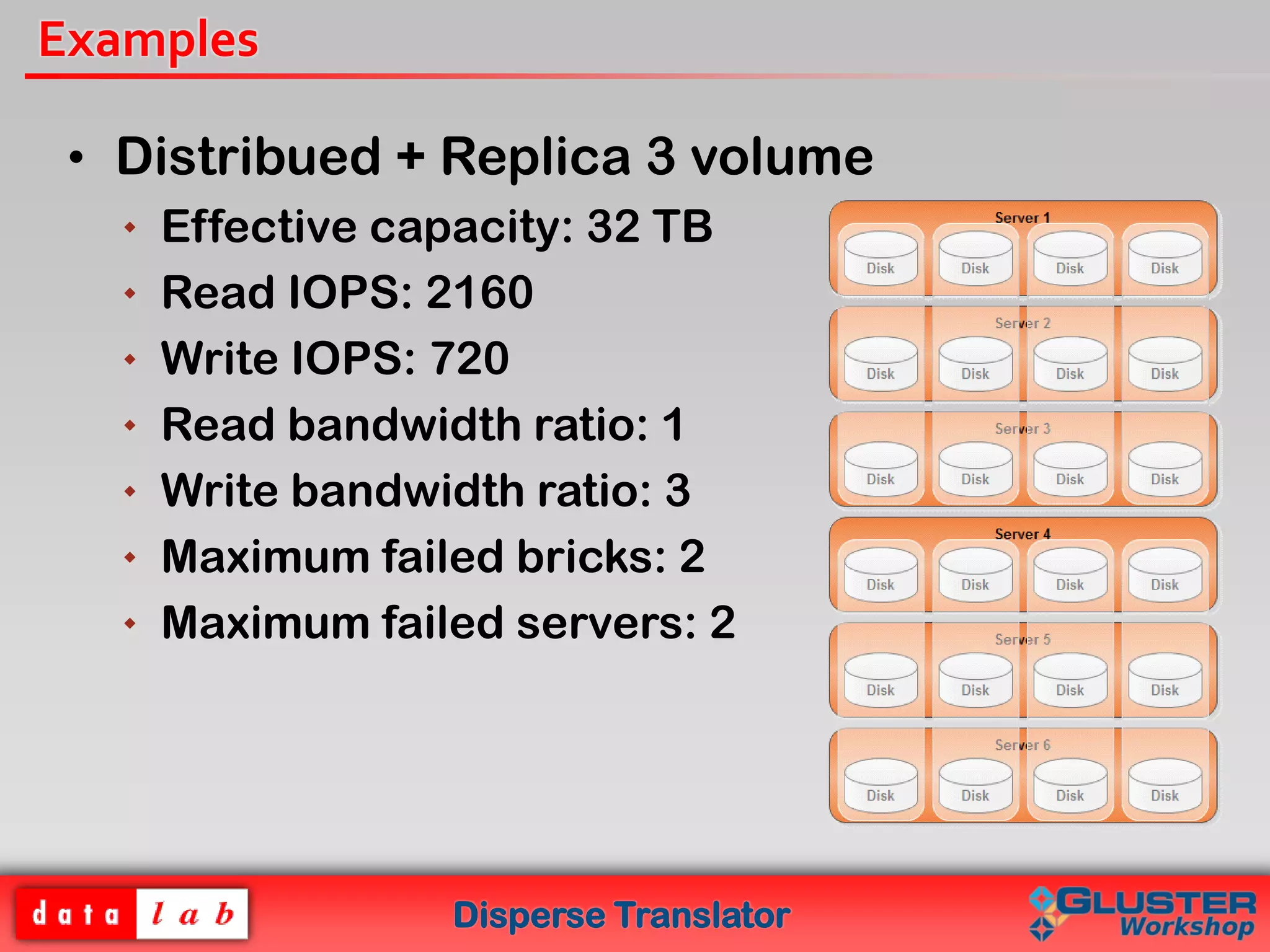

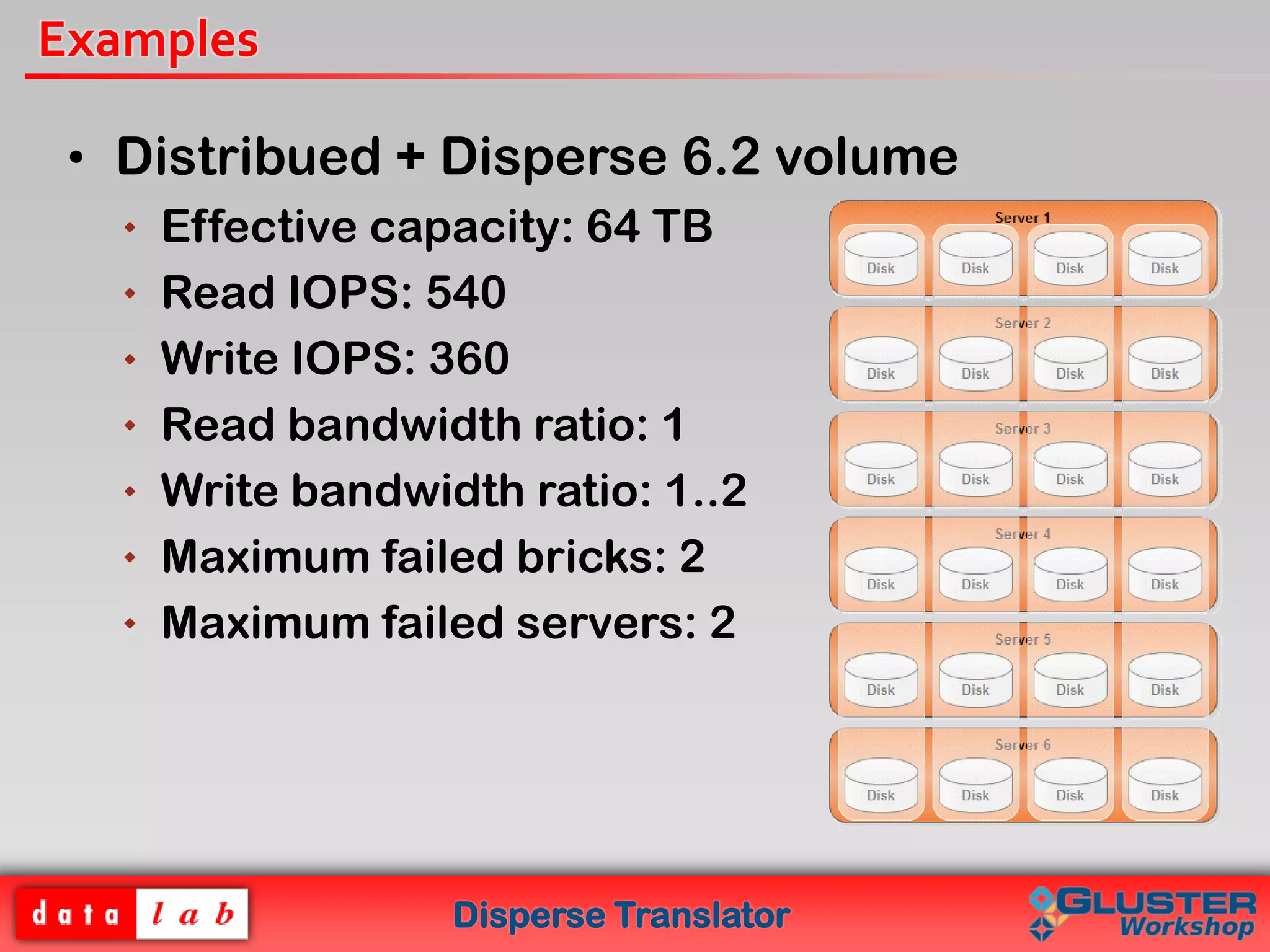

The document introduces the Disperse Translator, which gives Gluster volumes configurable fault tolerance through erasure codes. Its key features are an adjustable redundancy level, minimal storage waste, and reduced bandwidth usage. It works by splitting files into chunks, encoding them, and dispersing the encoded fragments across bricks. The current implementation provides a functional disperse translator and healing processes; CLI support and performance optimizations are planned as future work.
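To make the dispersing-and-encoding idea concrete, here is a minimal sketch in Python. It is not the translator's actual algorithm: the summary does not say which erasure code is used, so the sketch substitutes single XOR parity, which tolerates only one lost fragment (a redundancy of 1). The names `usable_fraction`, `encode`, and `decode` are hypothetical and chosen for illustration only.

```python
from functools import reduce
from typing import List, Optional


def usable_fraction(bricks: int, redundancy: int) -> float:
    """Fraction of raw capacity left for data when `redundancy` bricks may fail."""
    if not 0 < redundancy < bricks:
        raise ValueError("redundancy must be between 1 and bricks - 1")
    return (bricks - redundancy) / bricks


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length fragments."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(chunk: bytes, data_bricks: int) -> List[bytes]:
    """Split a chunk into `data_bricks` fragments and append one XOR parity fragment.

    Assumption: XOR parity stands in for the real erasure code (redundancy = 1).
    """
    if data_bricks < 1 or len(chunk) % data_bricks:
        raise ValueError("chunk length must be a multiple of data_bricks")
    size = len(chunk) // data_bricks
    fragments = [chunk[i * size:(i + 1) * size] for i in range(data_bricks)]
    parity = reduce(xor_bytes, fragments)
    return fragments + [parity]


def decode(fragments: List[Optional[bytes]]) -> bytes:
    """Rebuild the chunk from fragments where at most one entry is None (lost brick)."""
    missing = [i for i, frag in enumerate(fragments) if frag is None]
    if len(missing) > 1:
        raise ValueError("single parity can recover only one lost fragment")
    restored = list(fragments)
    if missing:
        survivors = [frag for frag in restored if frag is not None]
        # XOR of all surviving fragments (data and parity) yields the lost one.
        restored[missing[0]] = reduce(xor_bytes, survivors)
    # The last fragment is parity; the data is the concatenation of the rest.
    return b"".join(restored[:-1])
```

For example, `encode(b"abcdefgh", 4)` produces five fragments, one per brick, and `decode` still returns the original bytes after any single fragment is replaced by `None`. With 6 bricks and a redundancy of 2, `usable_fraction(6, 2)` gives two thirds of raw capacity for data, which illustrates the trade-off between redundancy level and storage waste. A code that tolerates more than one failure needs a more general scheme than XOR parity.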