The document summarizes the five generations of computers, from the 1940s to the present day:

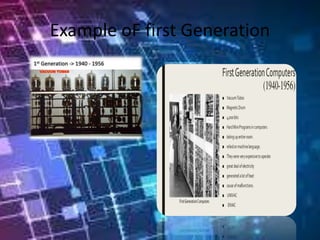

1) The first generation used vacuum tubes, and the machines were room-sized. Programming was done in machine language, with input on punch cards. Examples include UNIVAC and ENIAC.

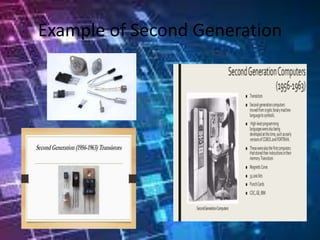

2) The second generation saw the introduction of transistors, which made computers smaller and more efficient. Assembly languages were also developed.

3) The third generation used integrated circuits. Operating systems allowed multiple programs to run at the same time, and computers became accessible to the mass market.

4) The fourth generation used microprocessors, which put an entire central processing unit on a single chip. Personal computers and networks became widespread.

5) The fifth generation, still in development, focuses on artificial