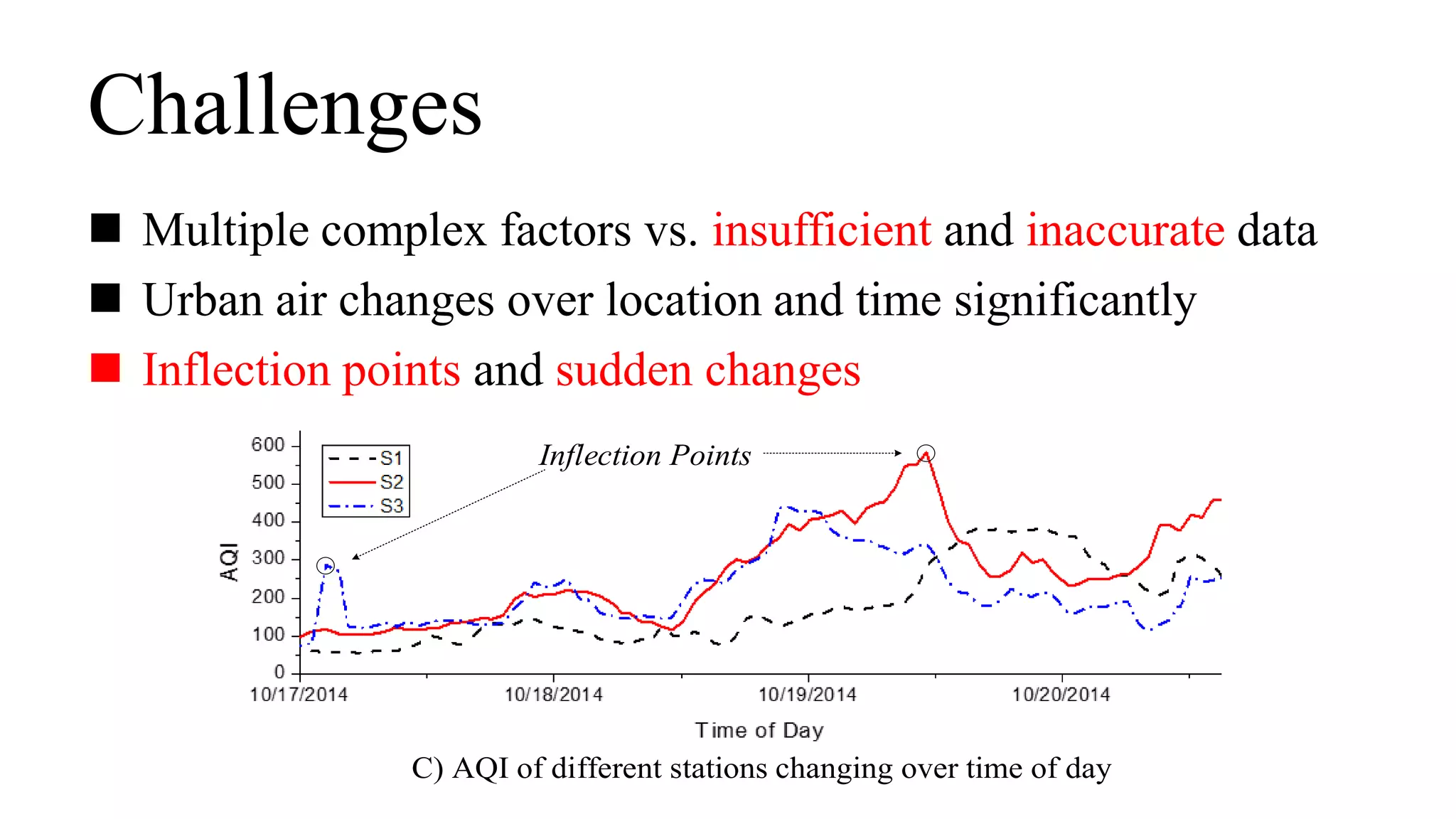

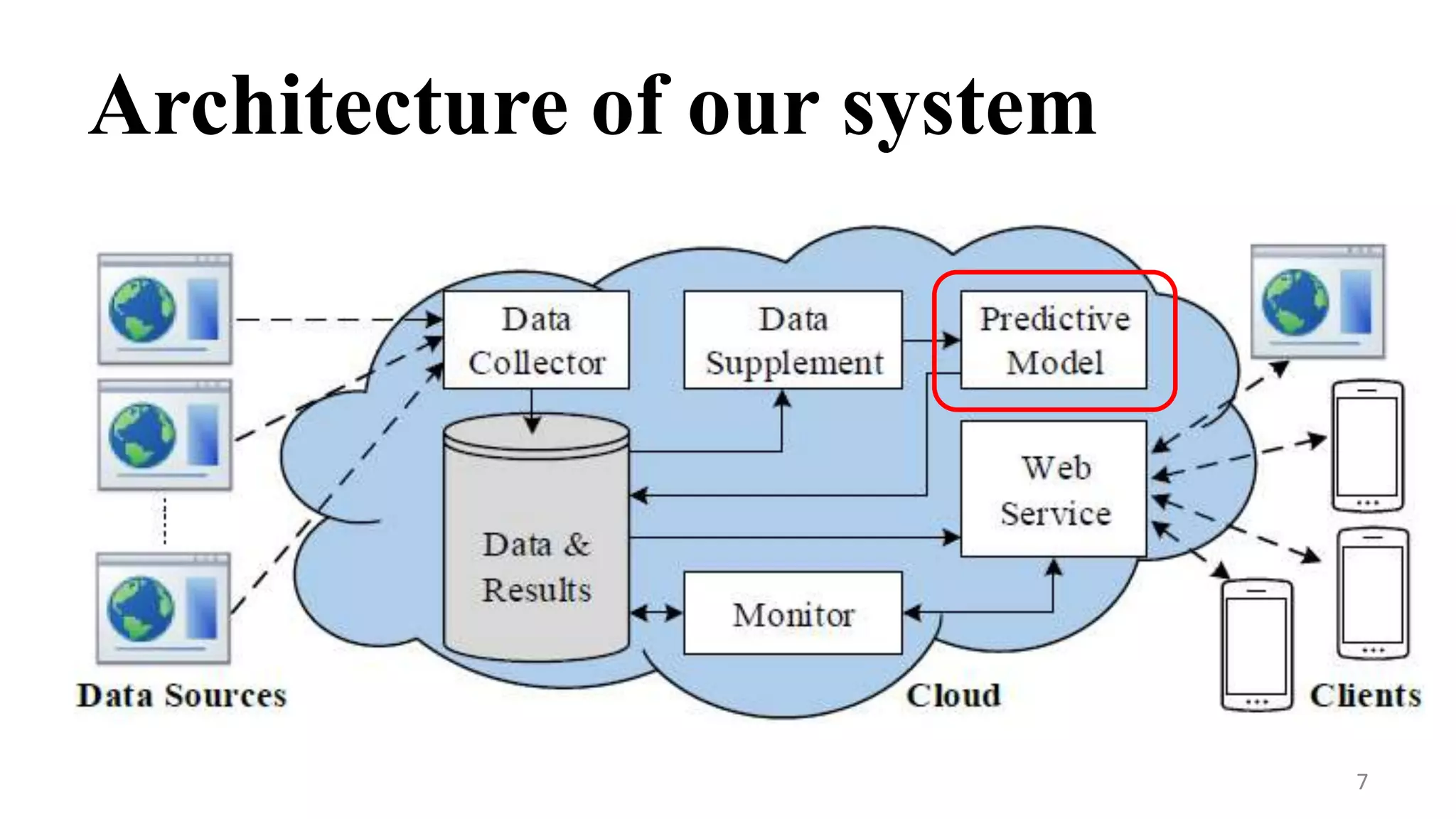

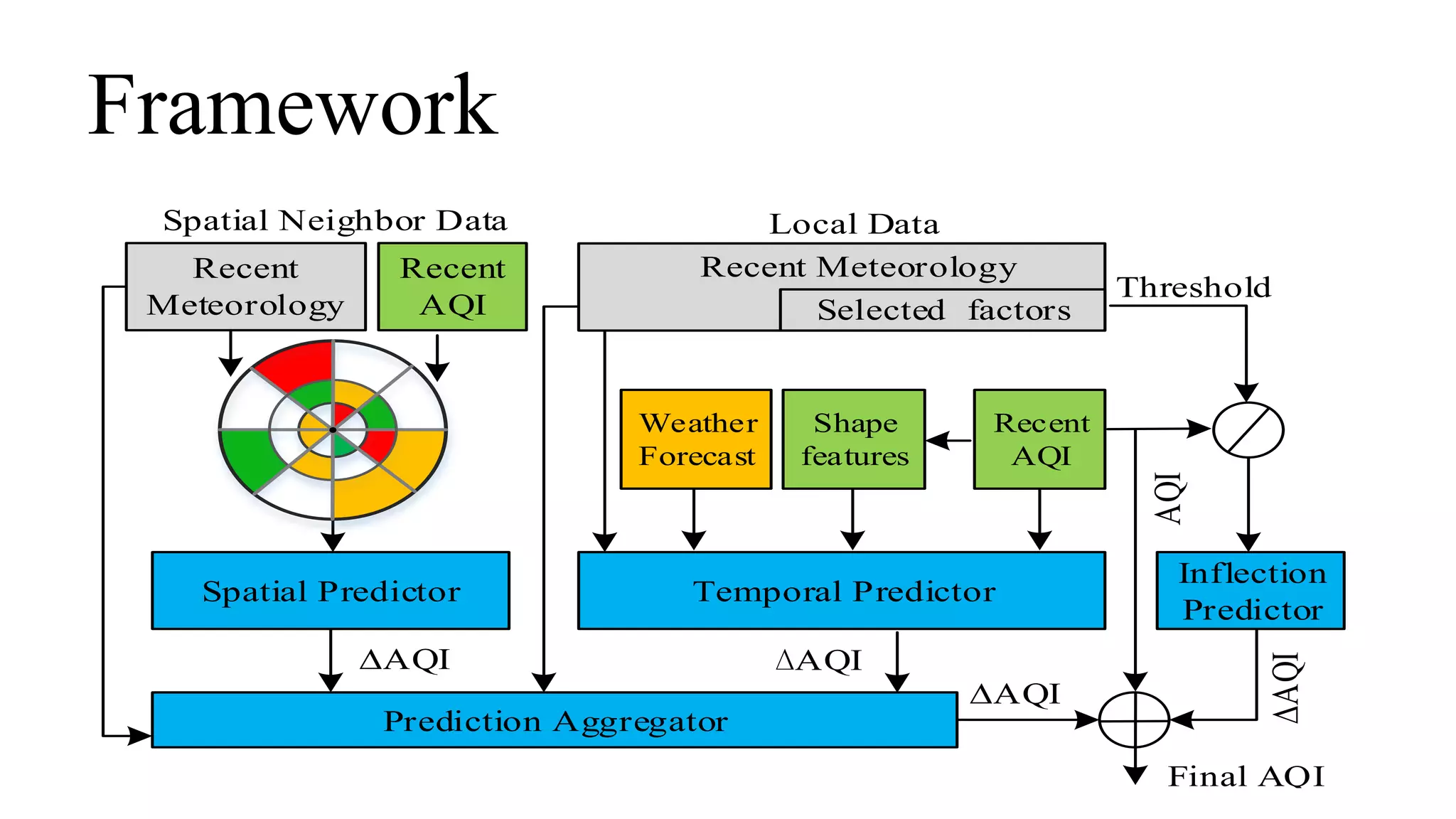

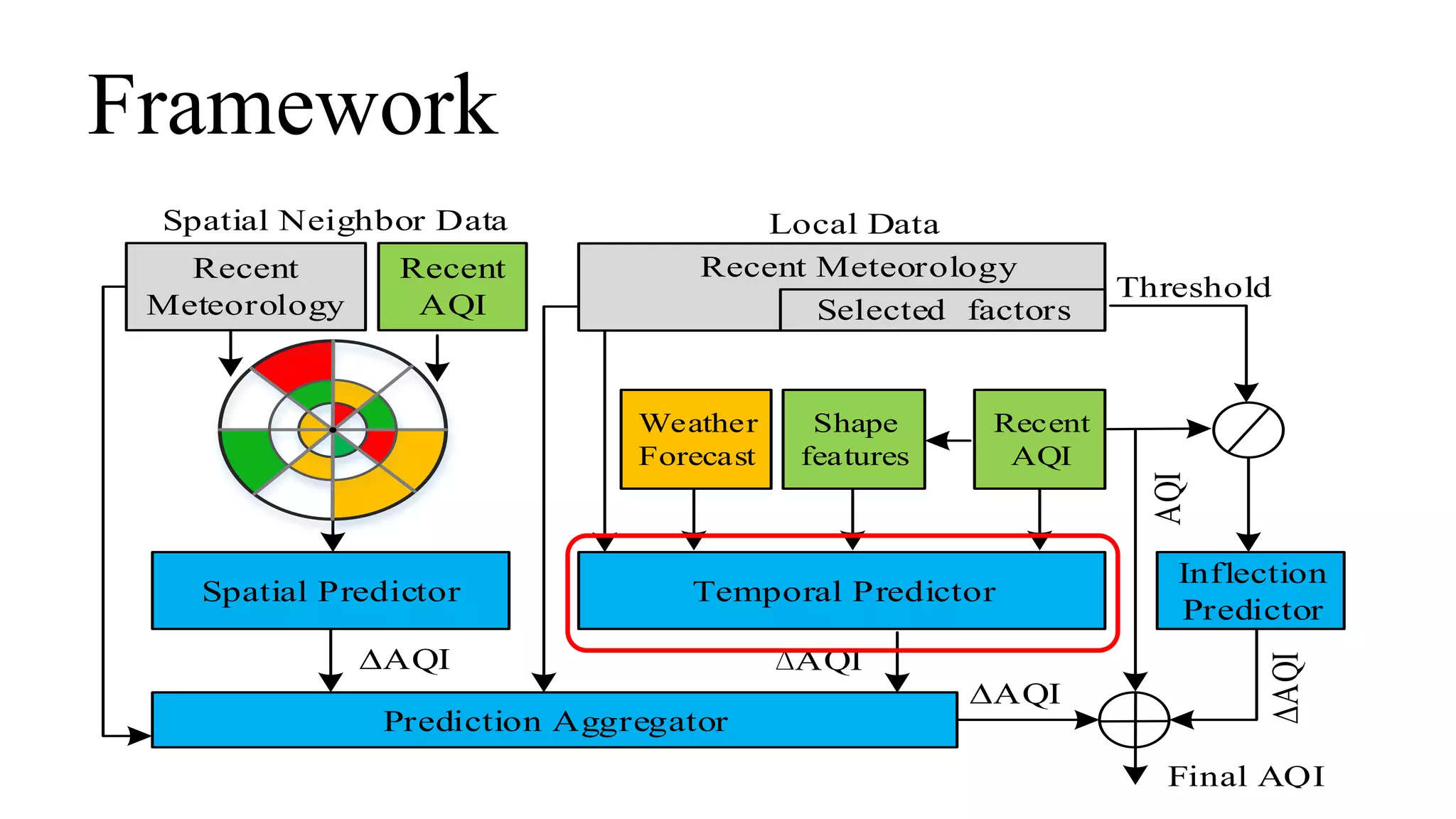

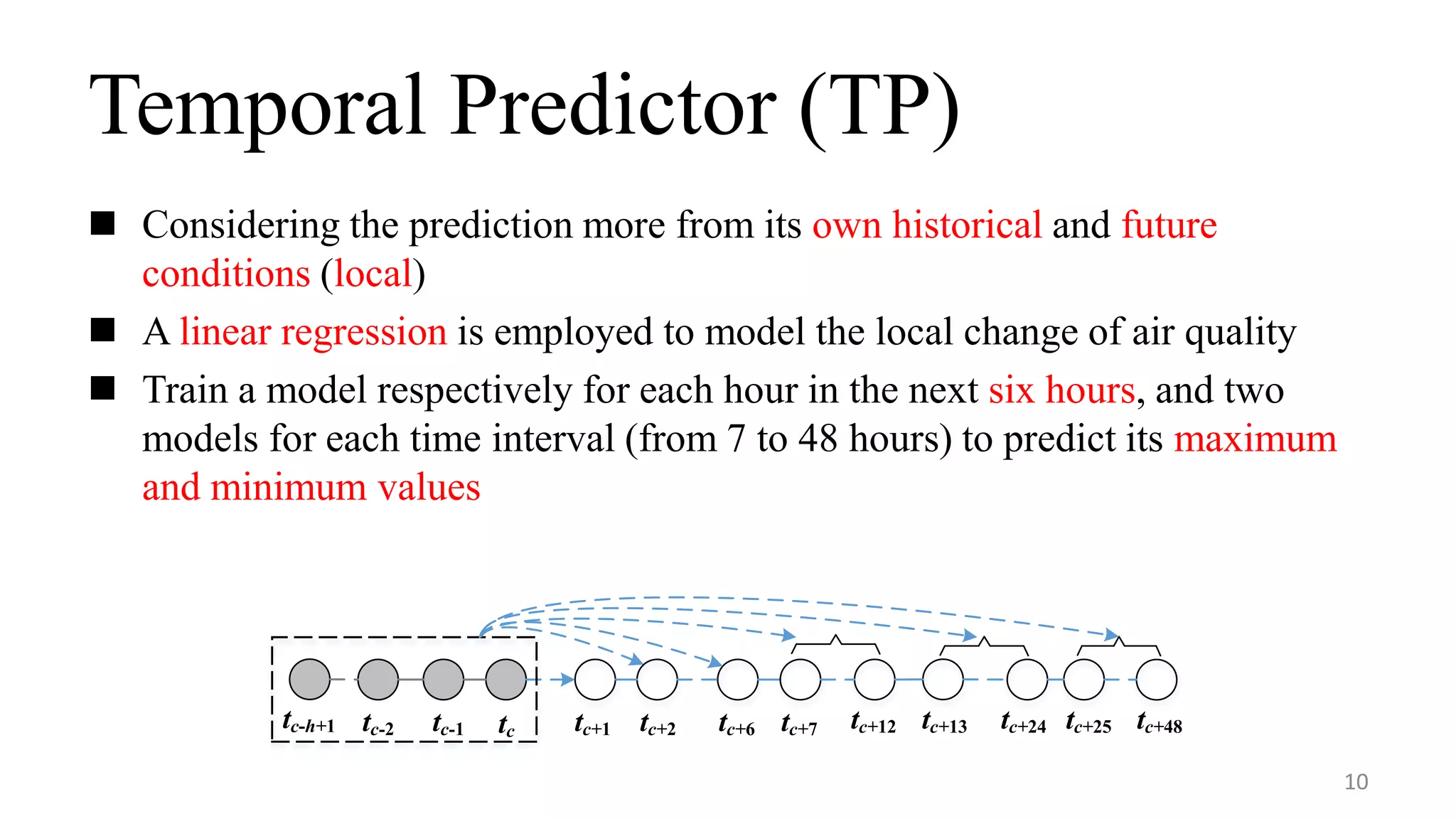

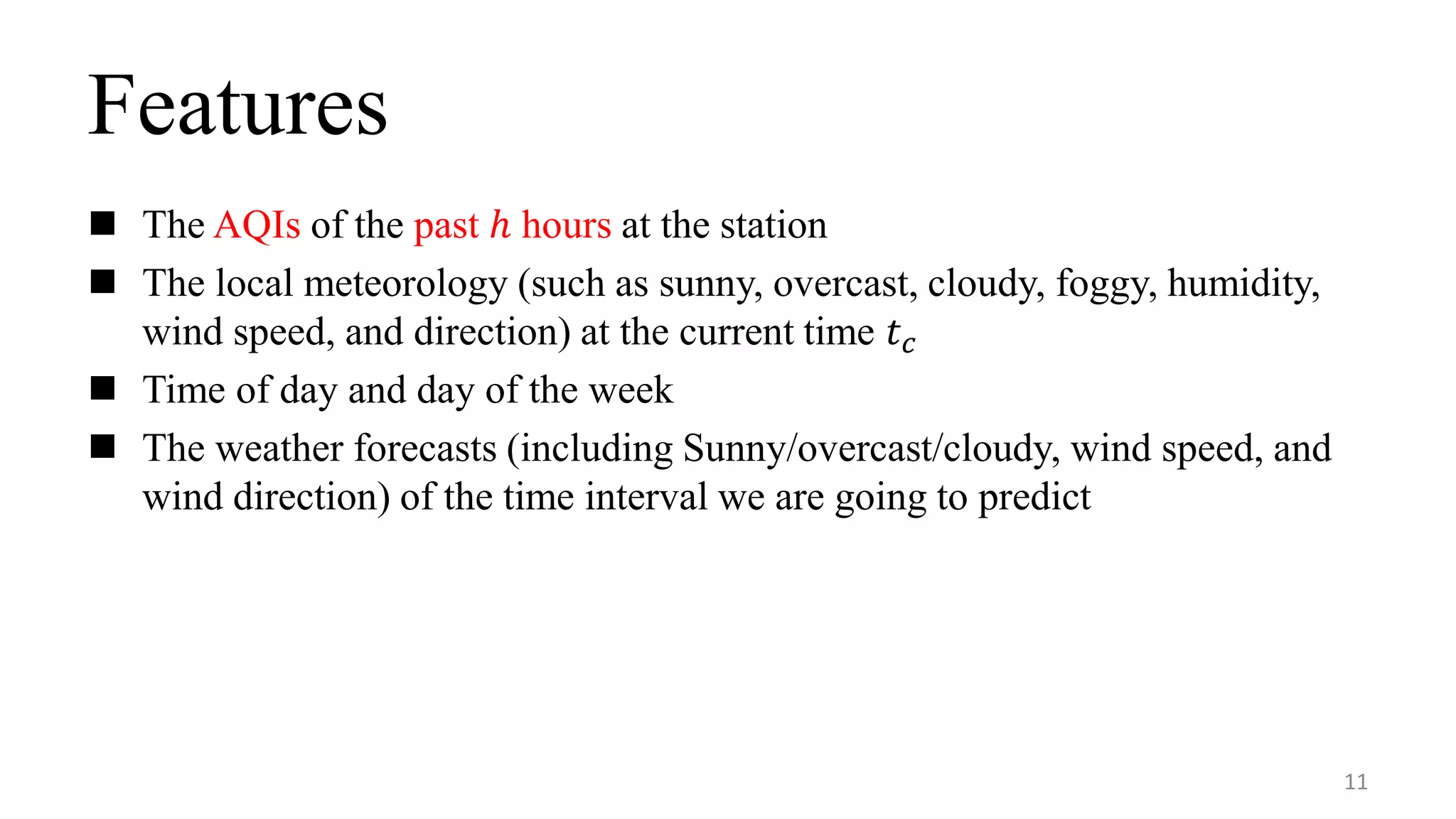

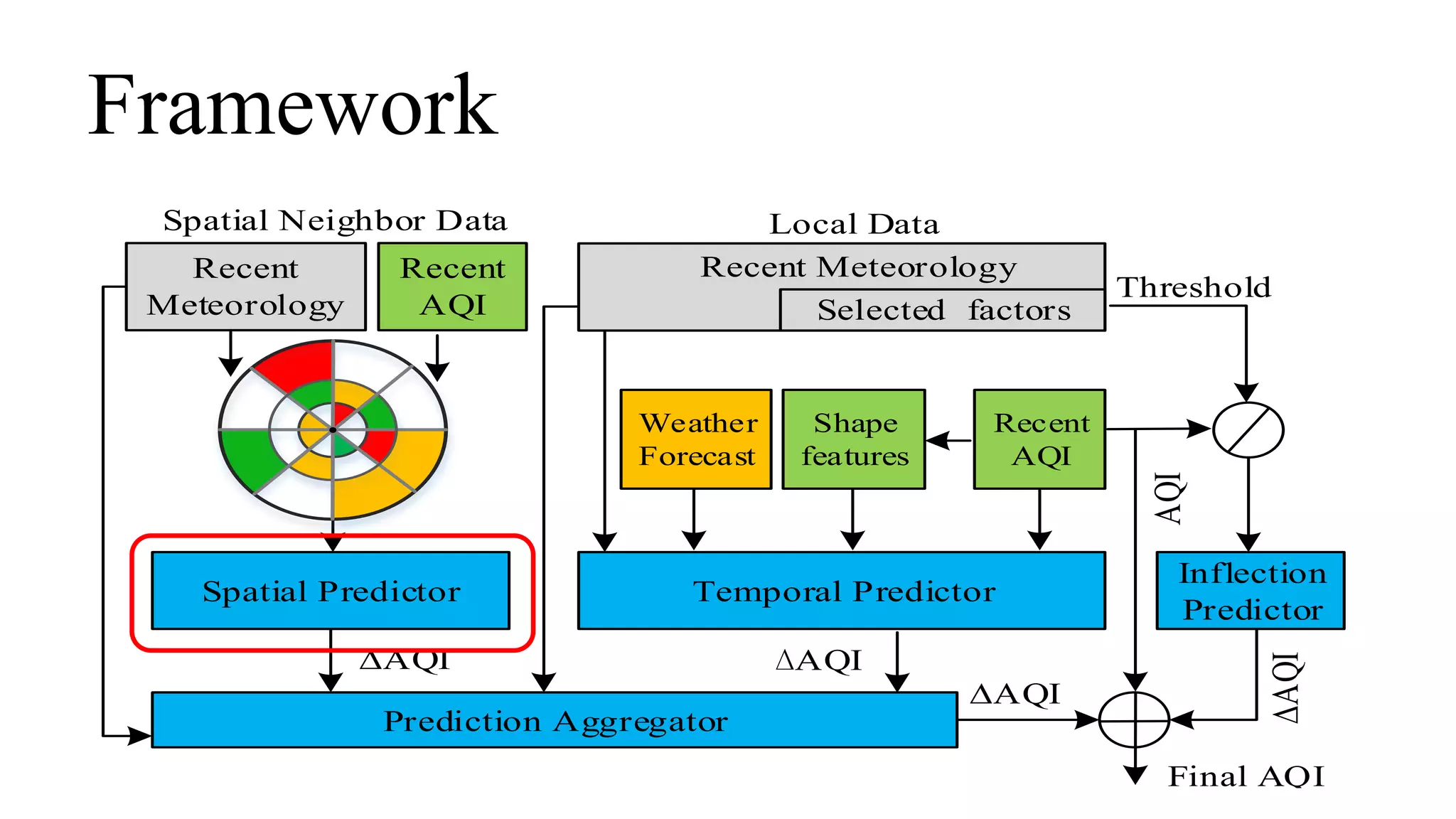

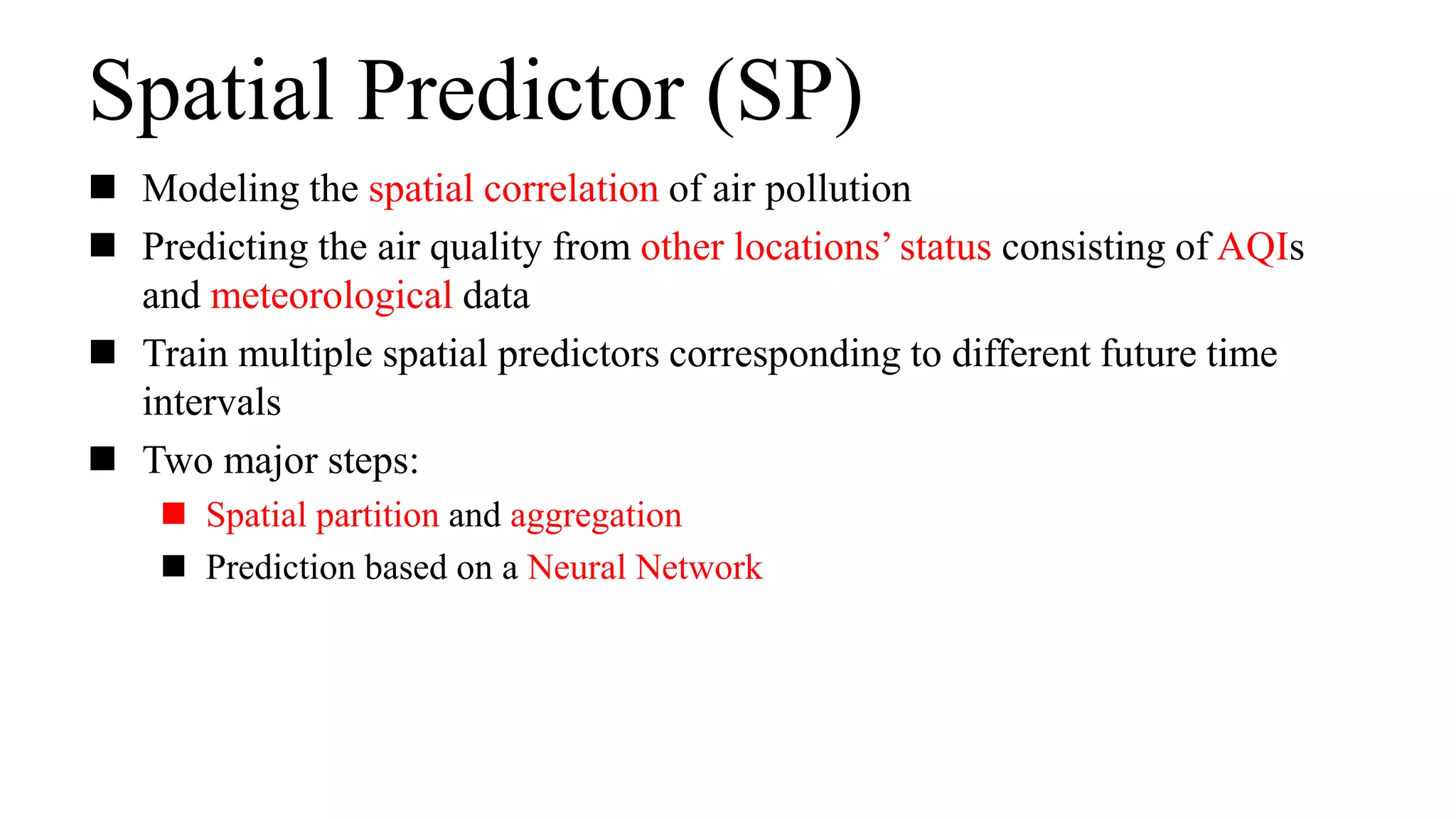

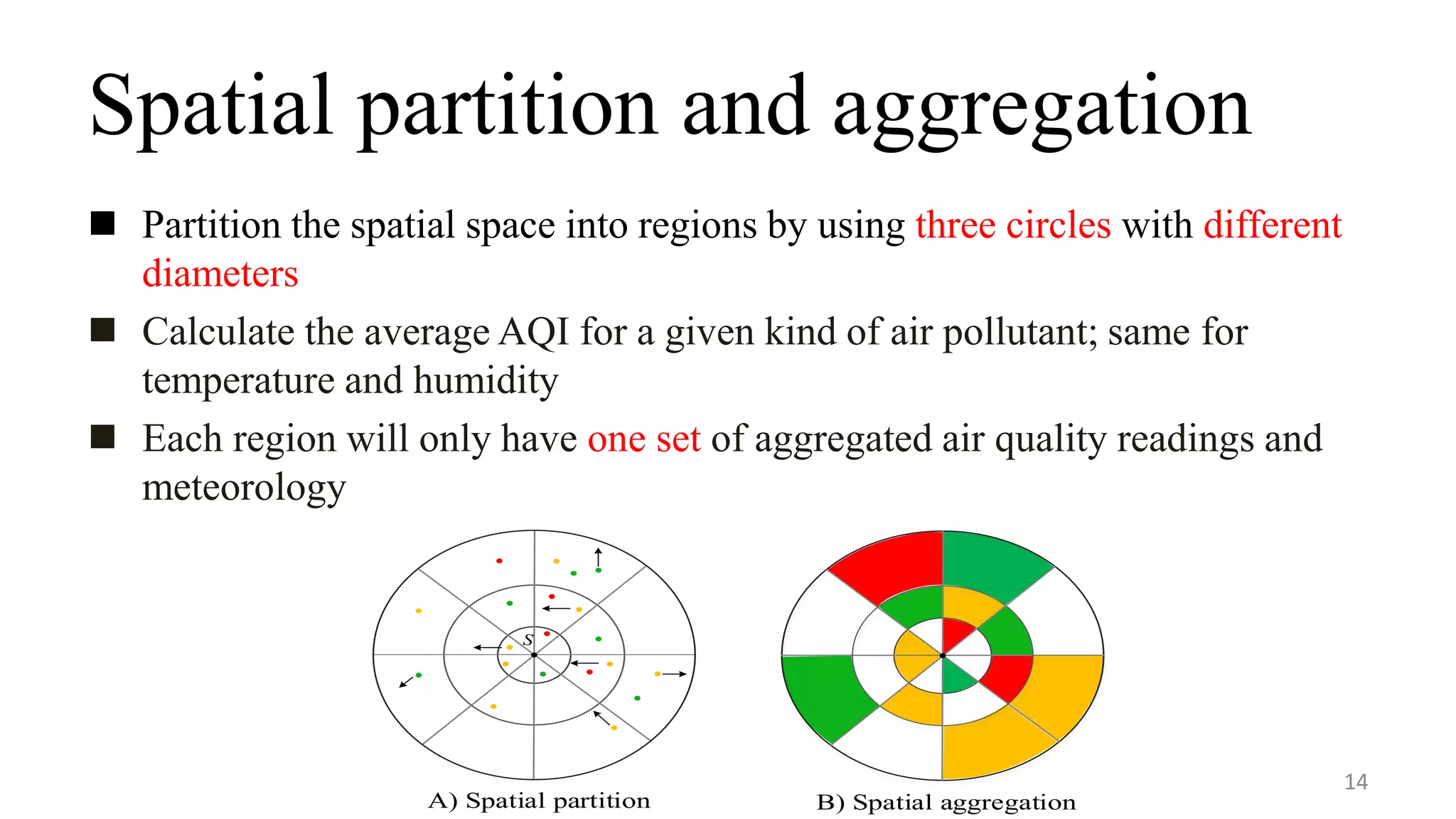

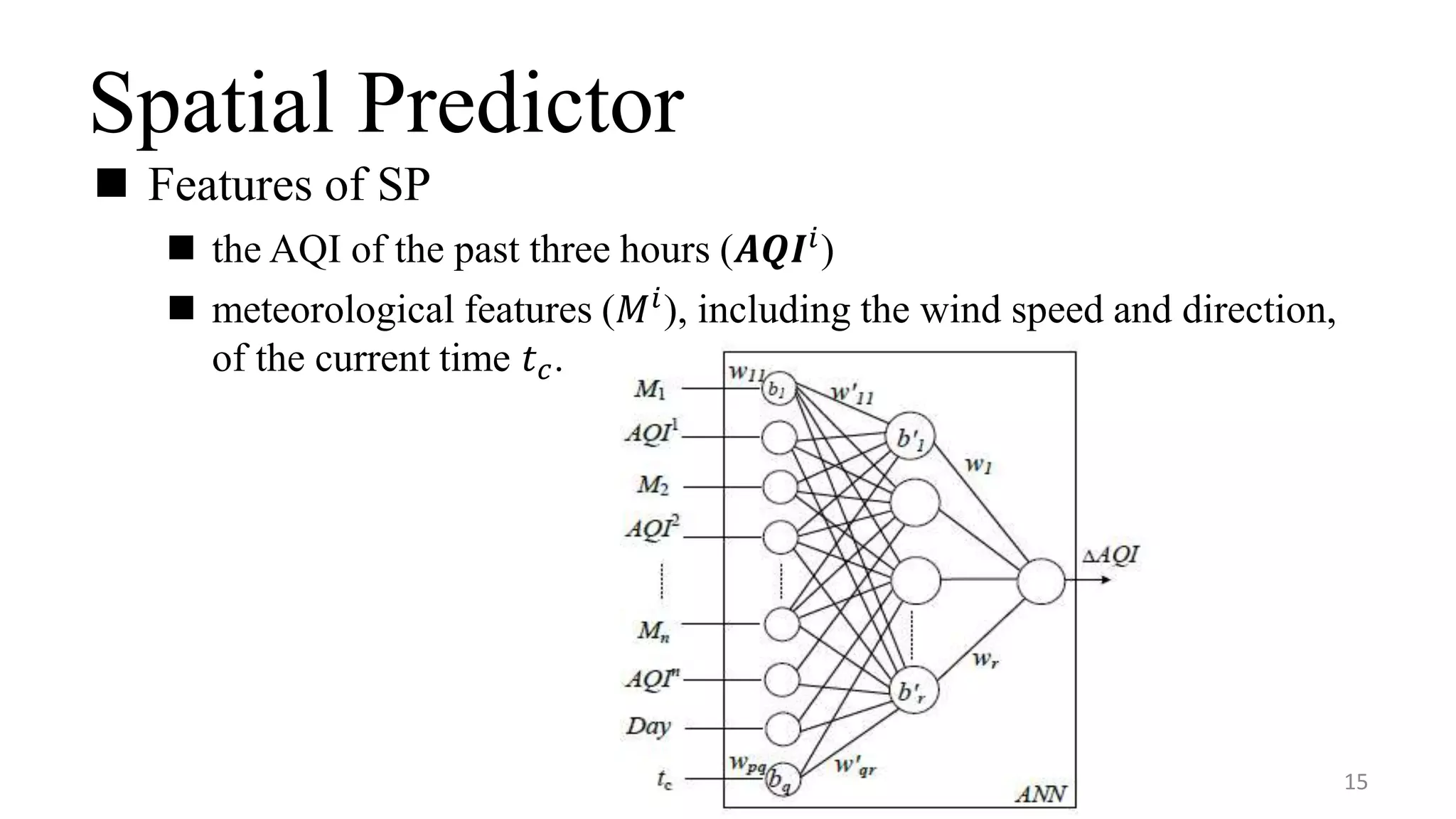

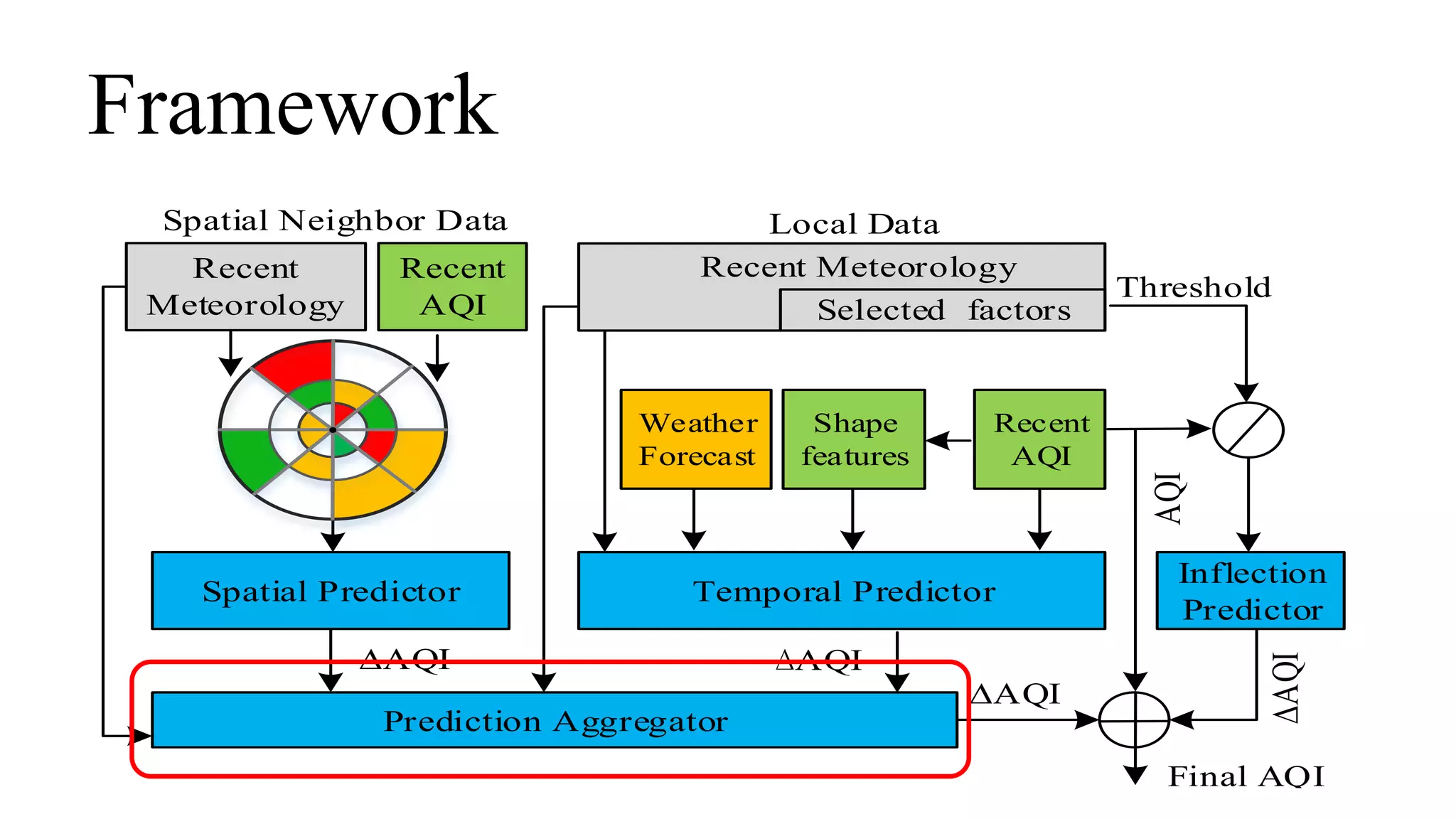

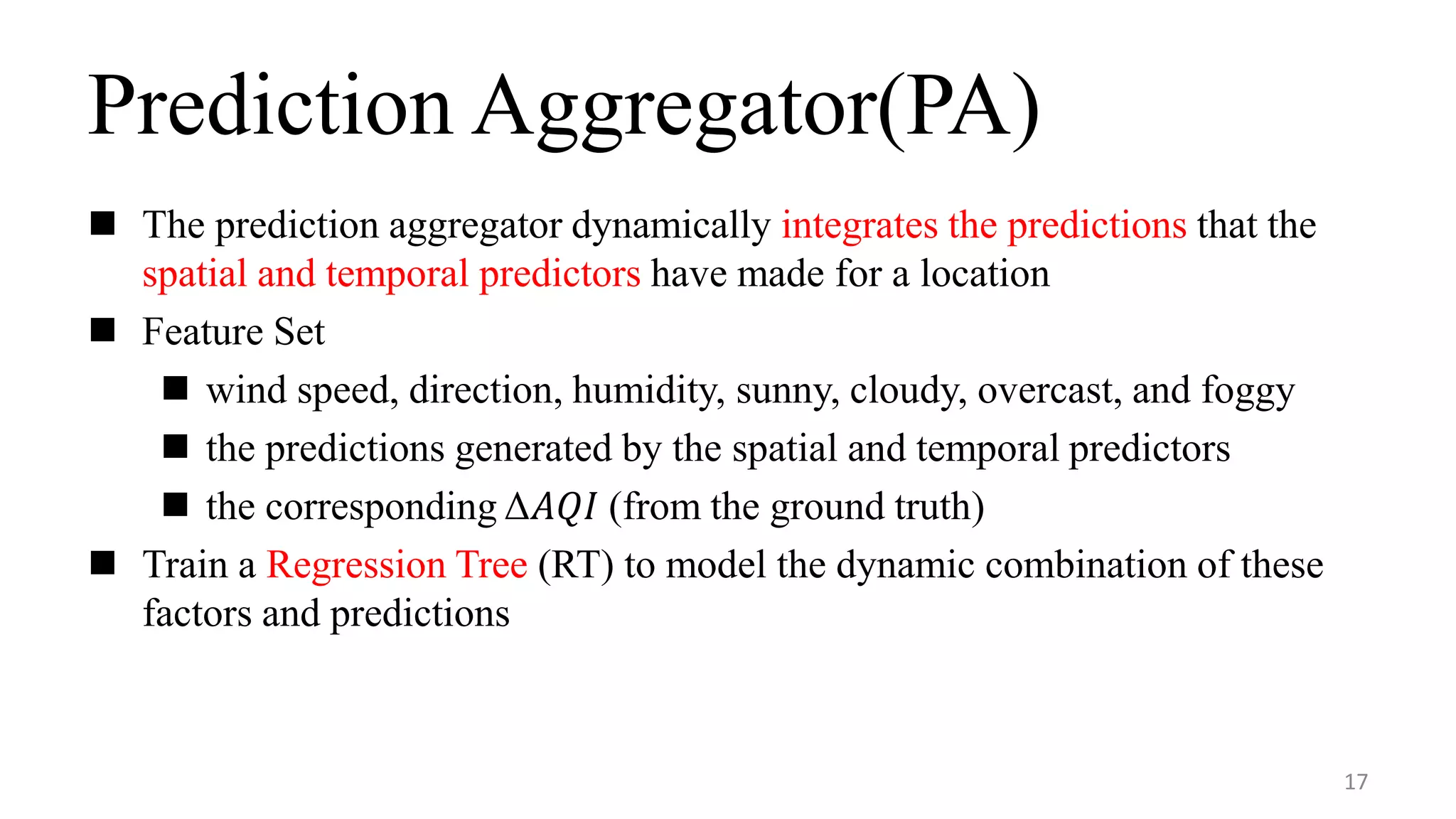

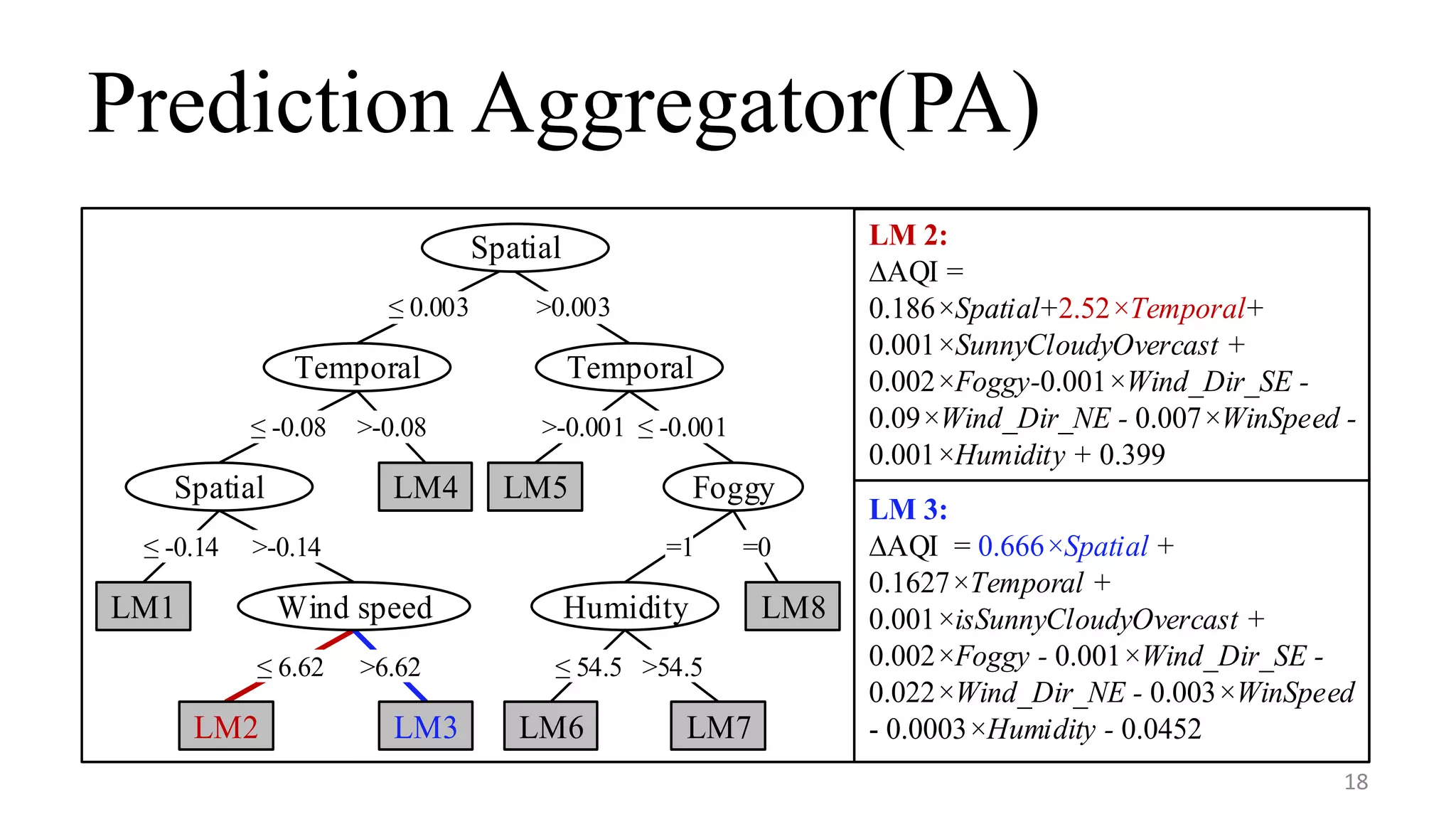

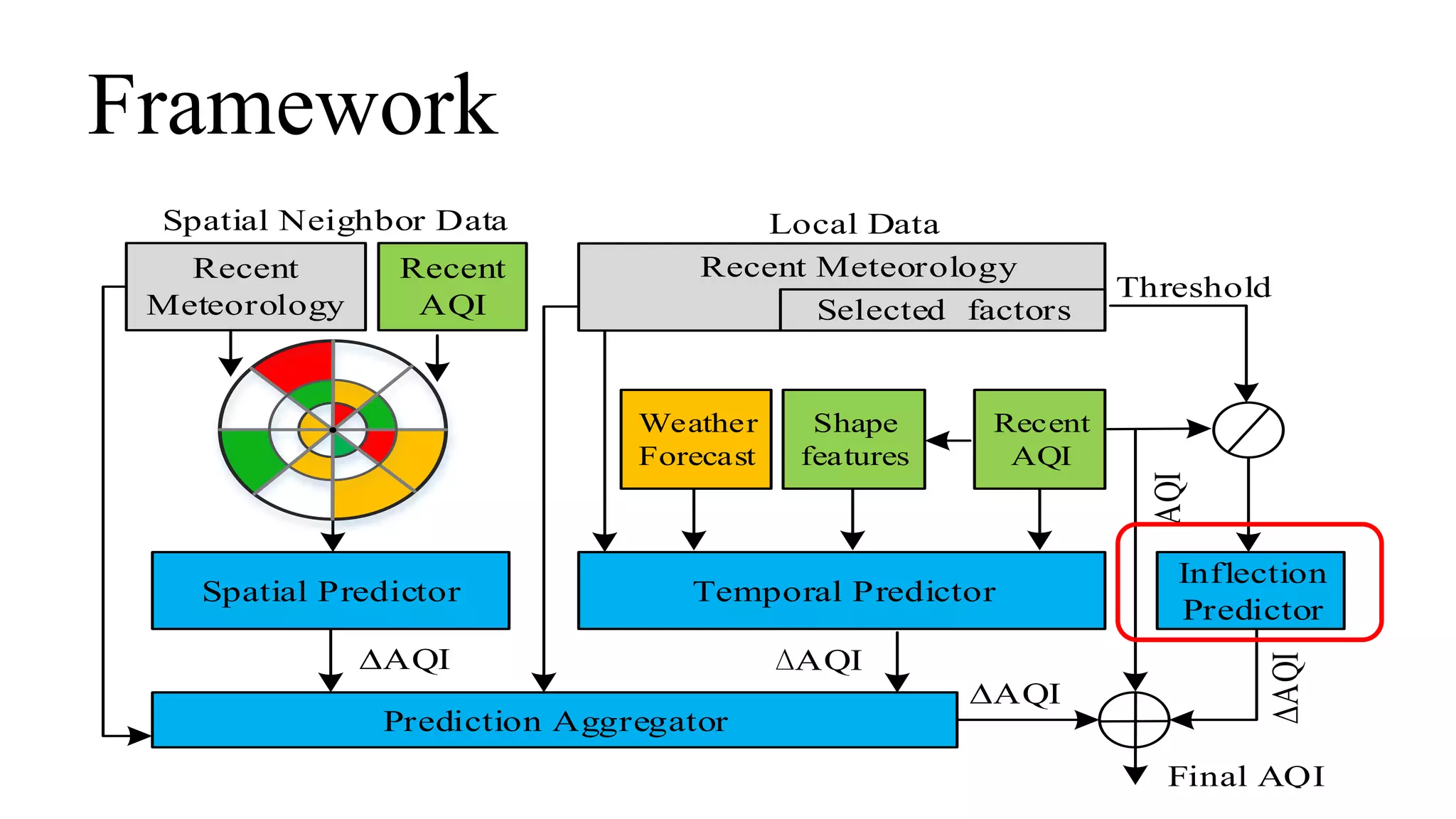

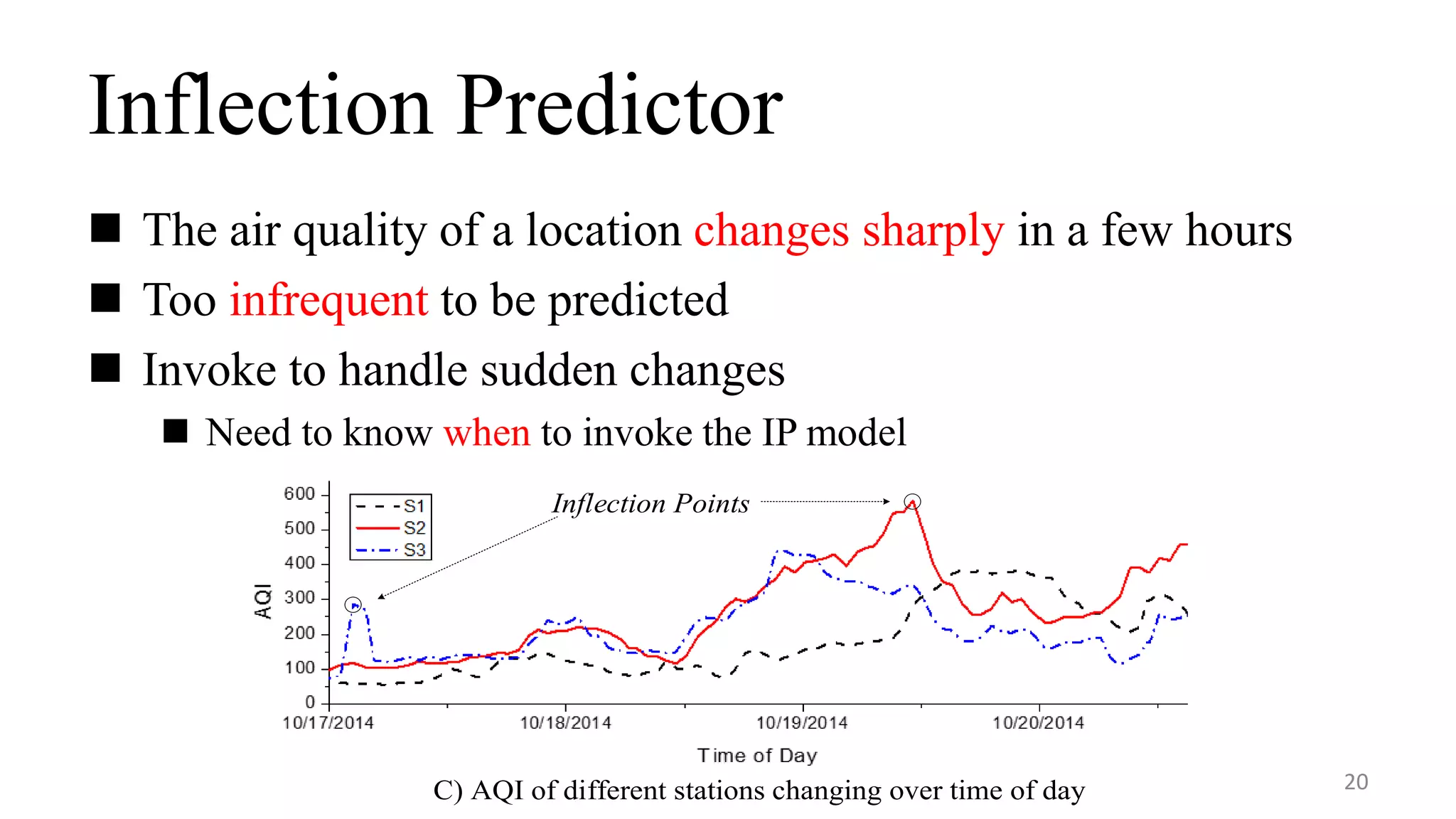

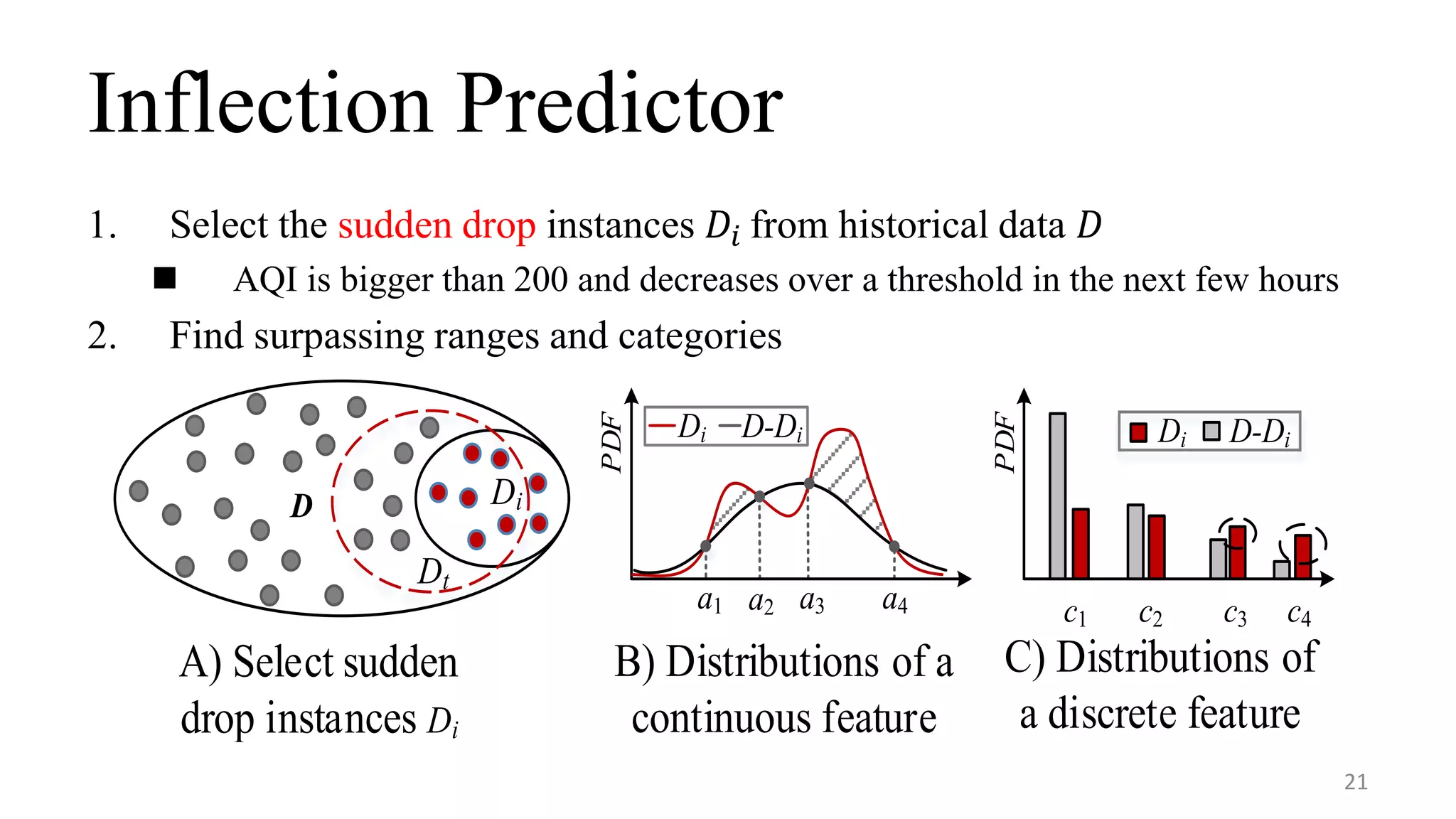

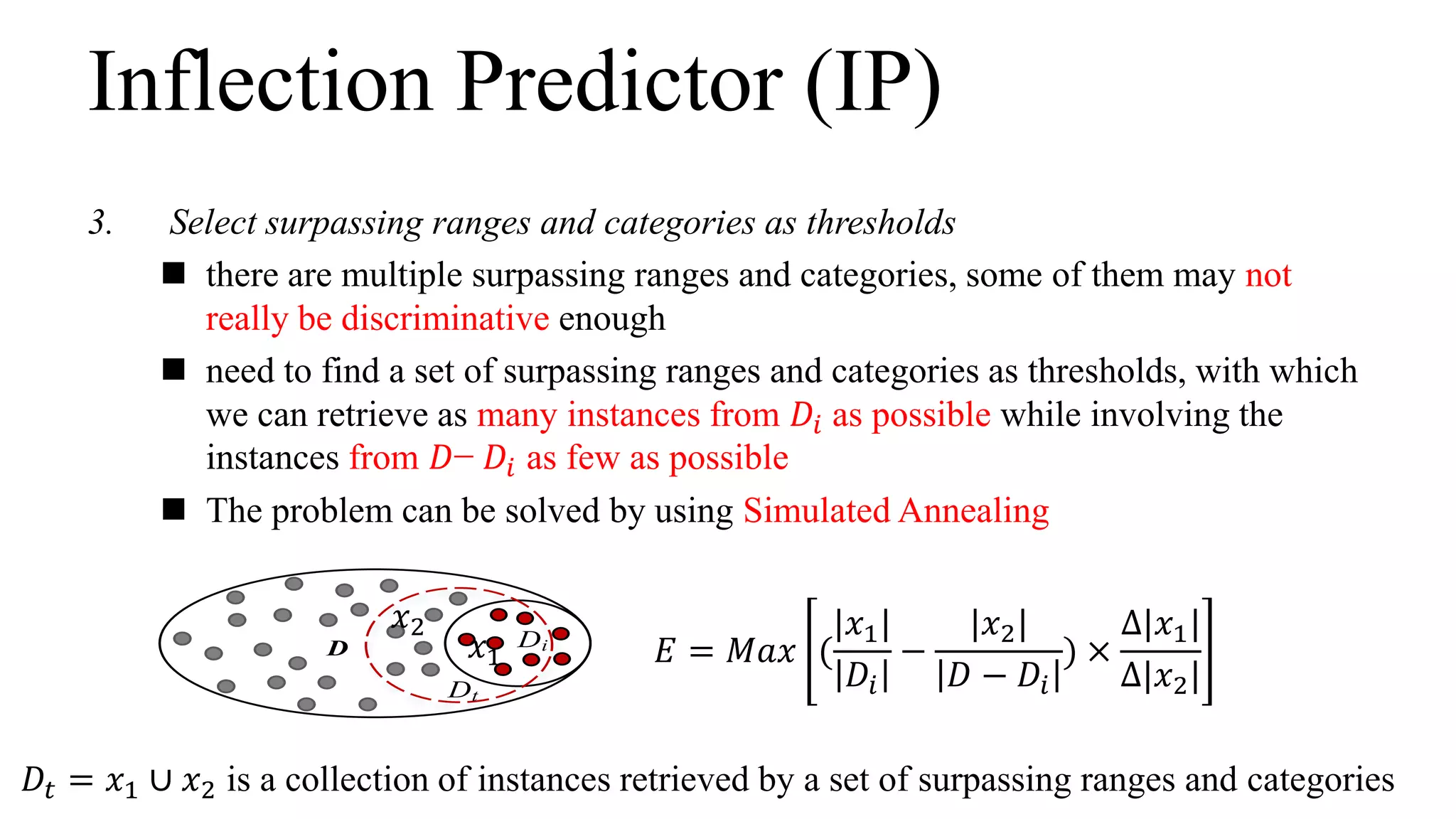

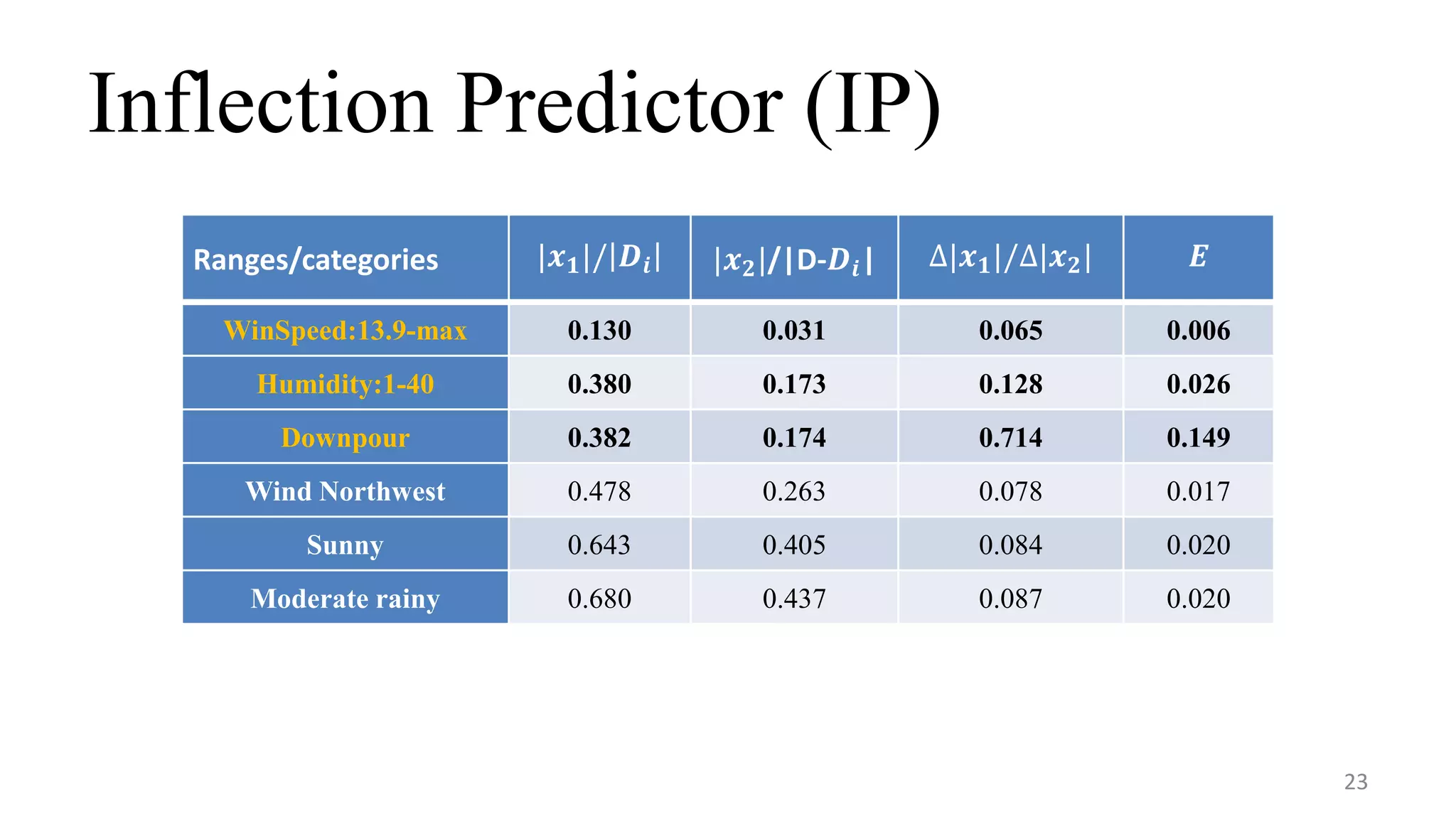

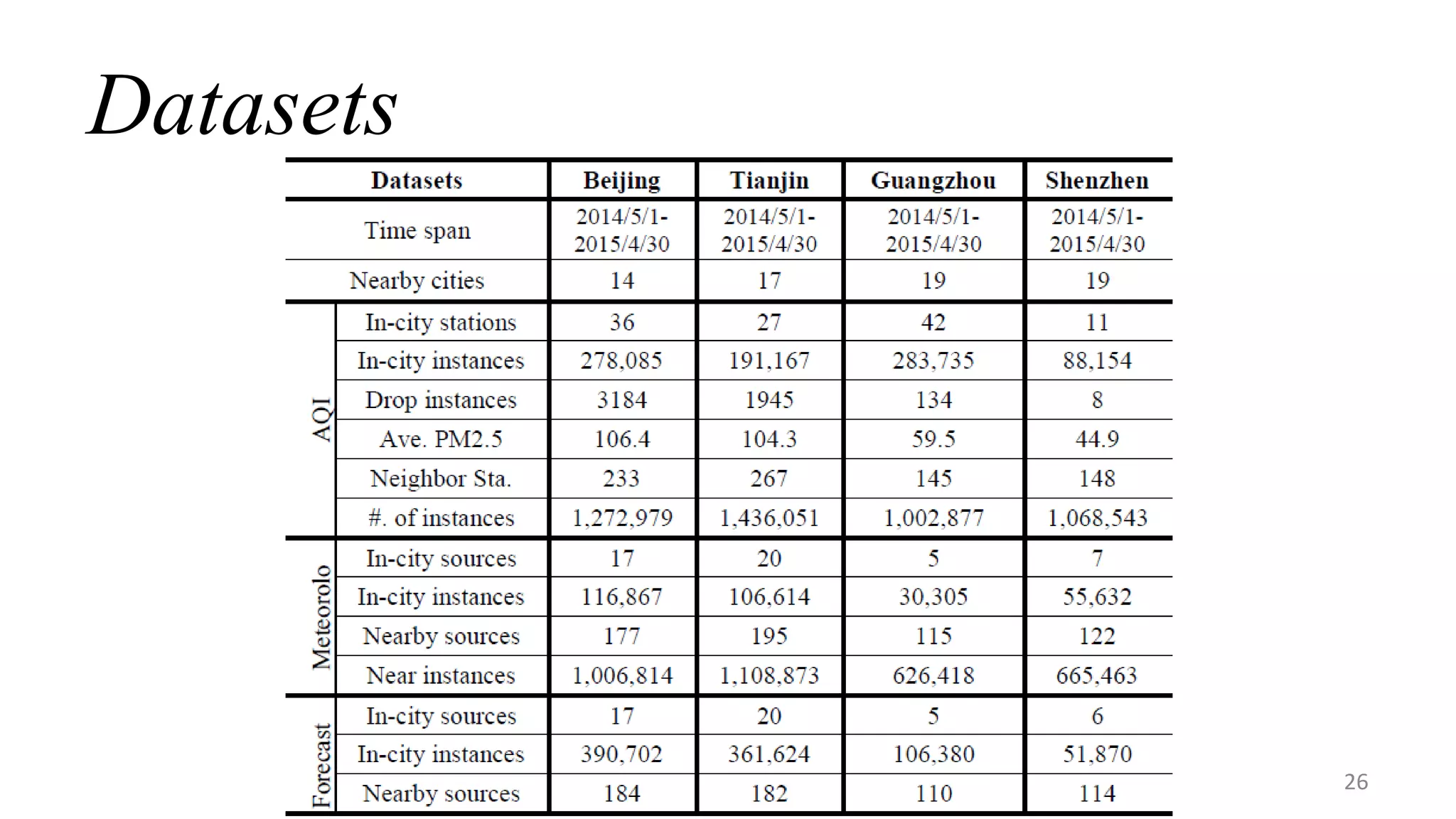

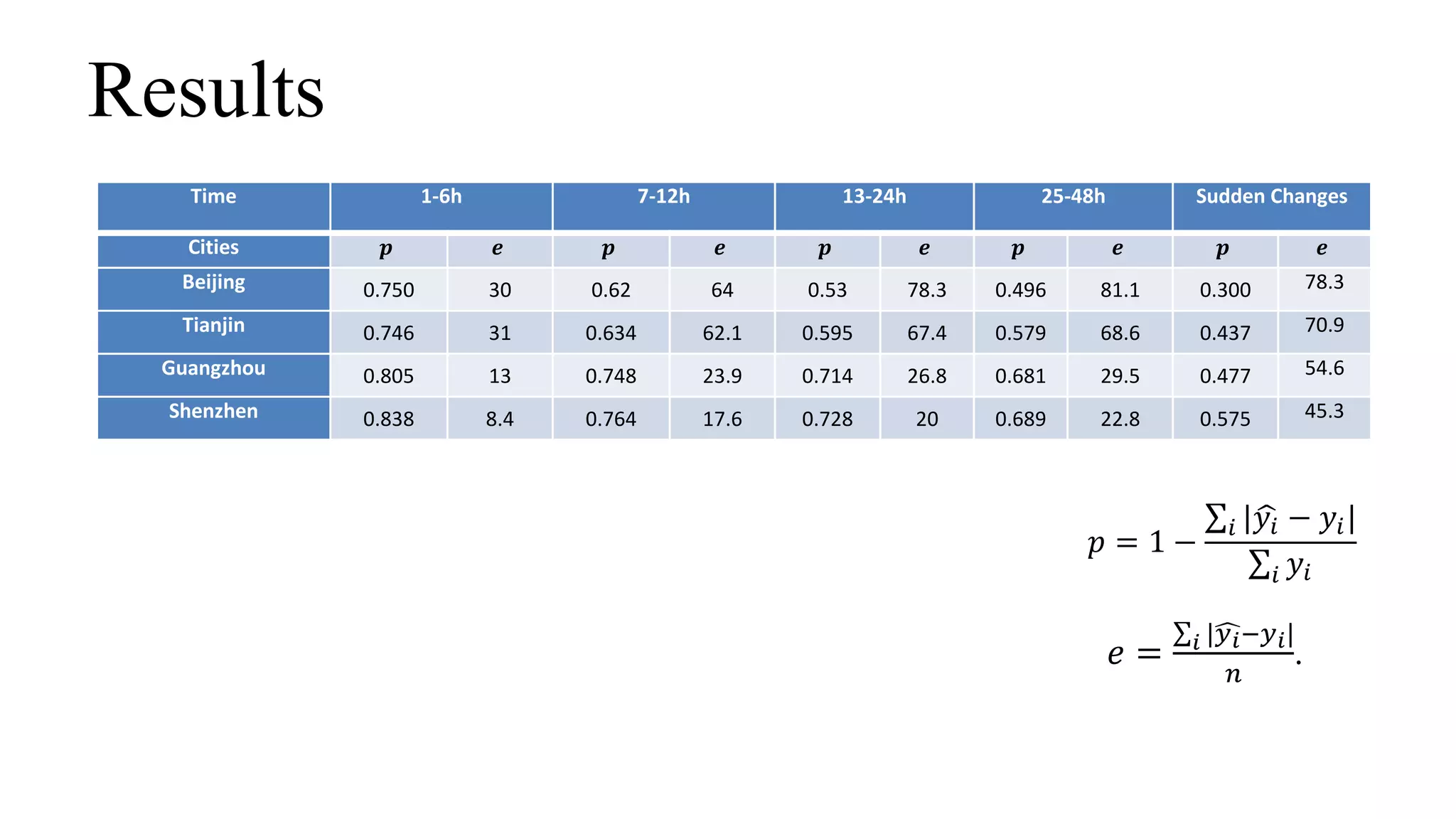

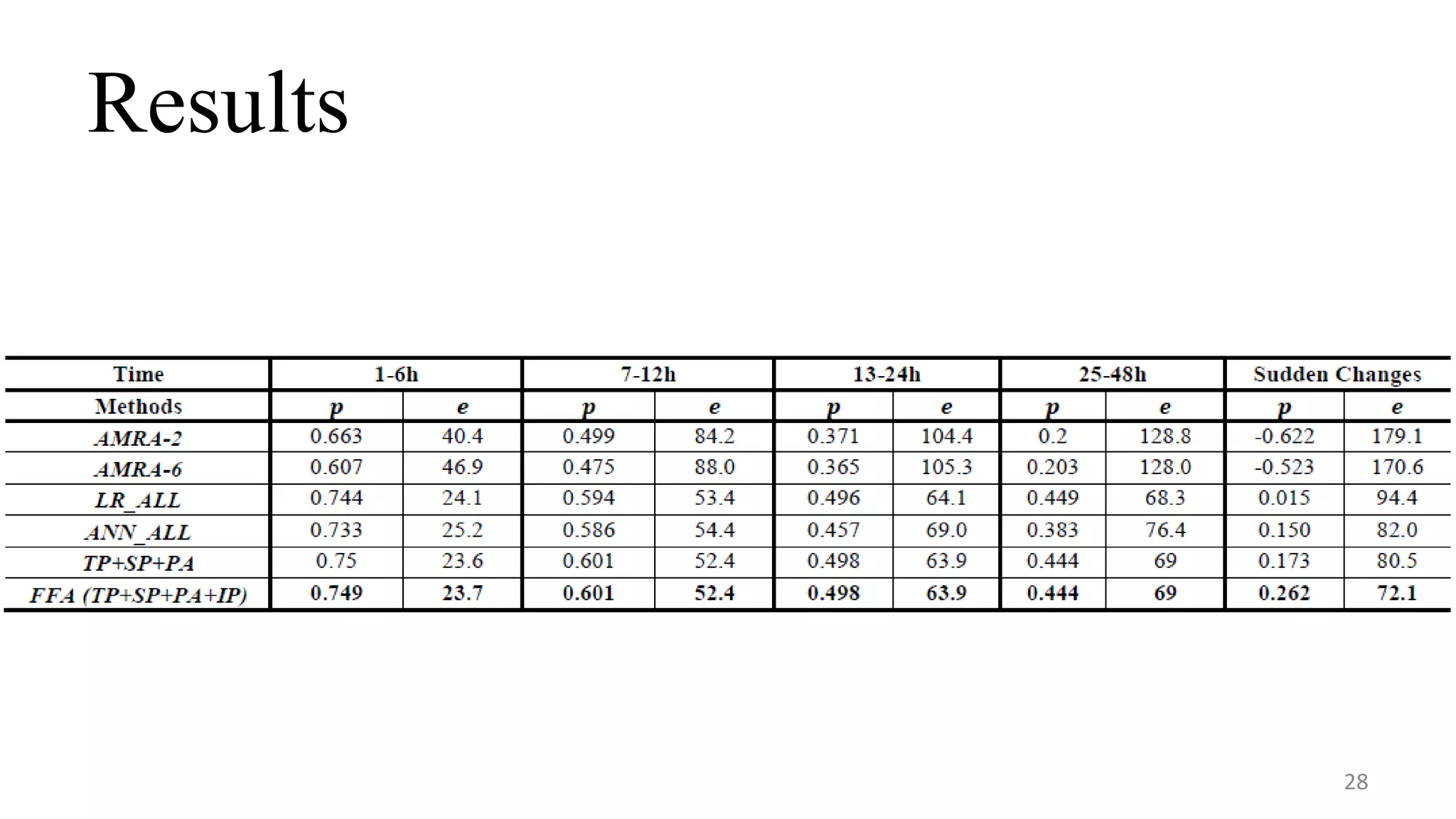

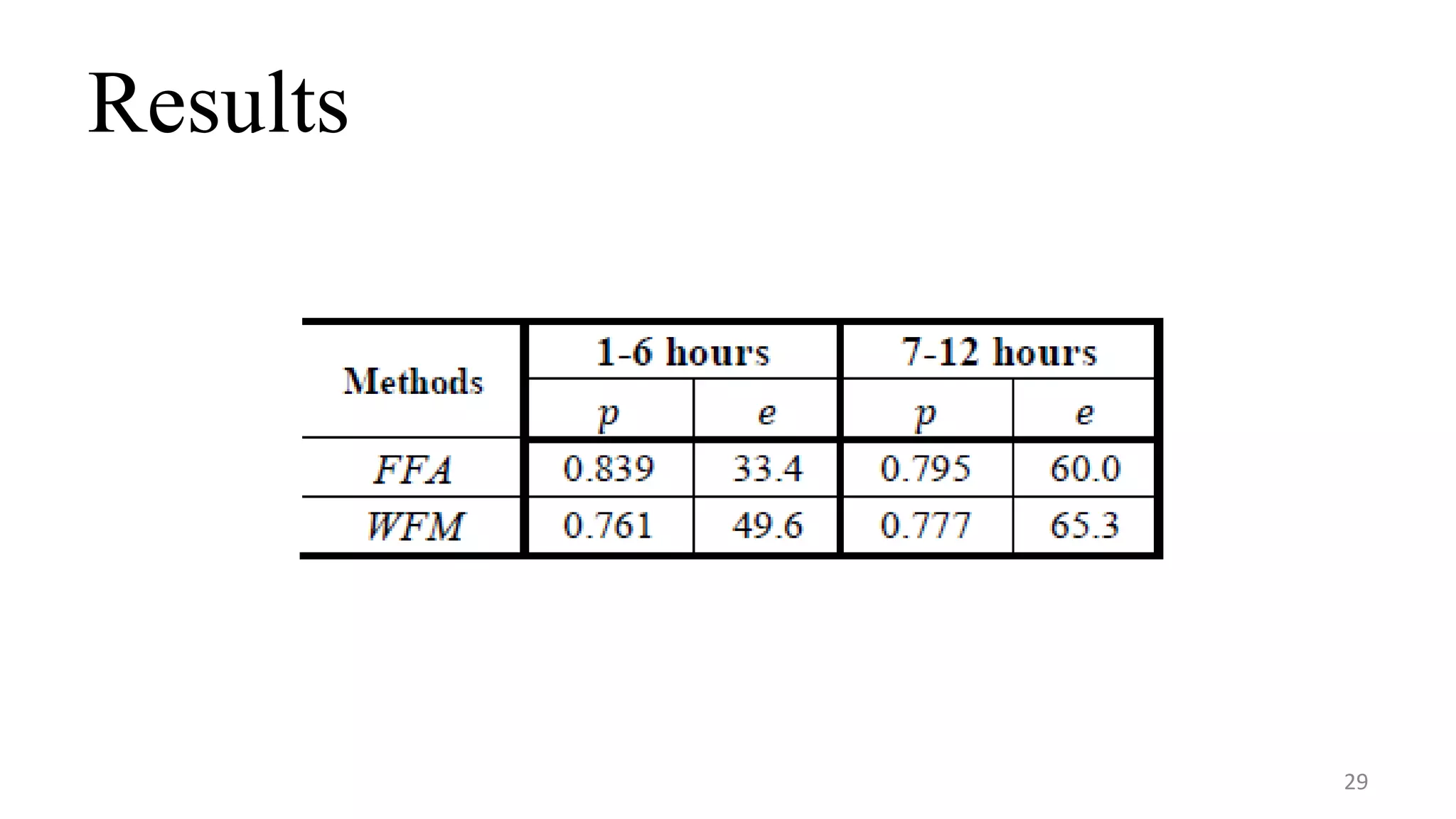

This document reports on a real-time air quality forecasting system that uses data-driven models to predict fine-grained air quality over the following 48 hours. The system combines three components: a temporal predictor that models local air quality changes, a spatial predictor that models spatial correlations between locations, and an inflection predictor that handles sudden changes in air quality. In an evaluation across four Chinese cities, the system reached 75% accuracy for the first 6 hours of predictions and 62% accuracy for hours 7 to 12 in Beijing, and it predicted sudden changes in air quality better than baseline methods.
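To make the three-part structure concrete, the following is a minimal sketch of how a temporal, a spatial, and an inflection component might be combined into one forecast. Everything in it is an assumption for illustration: the function names, the fixed blending weight, the use of a wind-speed change as the inflection trigger, and the threshold and drop values are hypothetical and are not taken from the system described above, whose actual models and aggregation method are not specified here.

```python
from typing import Sequence


def temporal_predict(local_history: Sequence[float]) -> float:
    """Hypothetical temporal predictor: last reading plus the most recent trend."""
    if not local_history:
        raise ValueError("local_history must contain at least one reading")
    if len(local_history) == 1:
        return local_history[-1]
    return local_history[-1] + (local_history[-1] - local_history[-2])


def spatial_predict(neighbor_readings: Sequence[float]) -> float:
    """Hypothetical spatial predictor: mean of current readings at nearby stations."""
    if not neighbor_readings:
        raise ValueError("neighbor_readings must contain at least one reading")
    return sum(neighbor_readings) / len(neighbor_readings)


def inflection_adjustment(
    wind_speed_change: float,
    threshold: float = 5.0,  # assumed trigger level, not from the source
    drop: float = 40.0,      # assumed size of the sudden drop, not from the source
) -> float:
    """Hypothetical inflection predictor: apply a sudden drop when wind picks up sharply."""
    return -drop if wind_speed_change > threshold else 0.0


def forecast(
    local_history: Sequence[float],
    neighbor_readings: Sequence[float],
    wind_speed_change: float,
    temporal_weight: float = 0.5,  # assumed fixed weight; a real system would learn this
) -> float:
    """Blend the temporal and spatial estimates, then add the inflection correction."""
    blended = (
        temporal_weight * temporal_predict(local_history)
        + (1.0 - temporal_weight) * spatial_predict(neighbor_readings)
    )
    # An air quality index cannot be negative, so clamp at zero.
    return max(0.0, blended + inflection_adjustment(wind_speed_change))
```

In this sketch, calling `forecast([100.0, 110.0], [80.0, 100.0], wind_speed_change=0.0)` blends a local trend estimate with the neighbor average, while a large `wind_speed_change` additionally triggers the inflection correction. The fixed weight stands in for whatever learned aggregation the real system uses.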