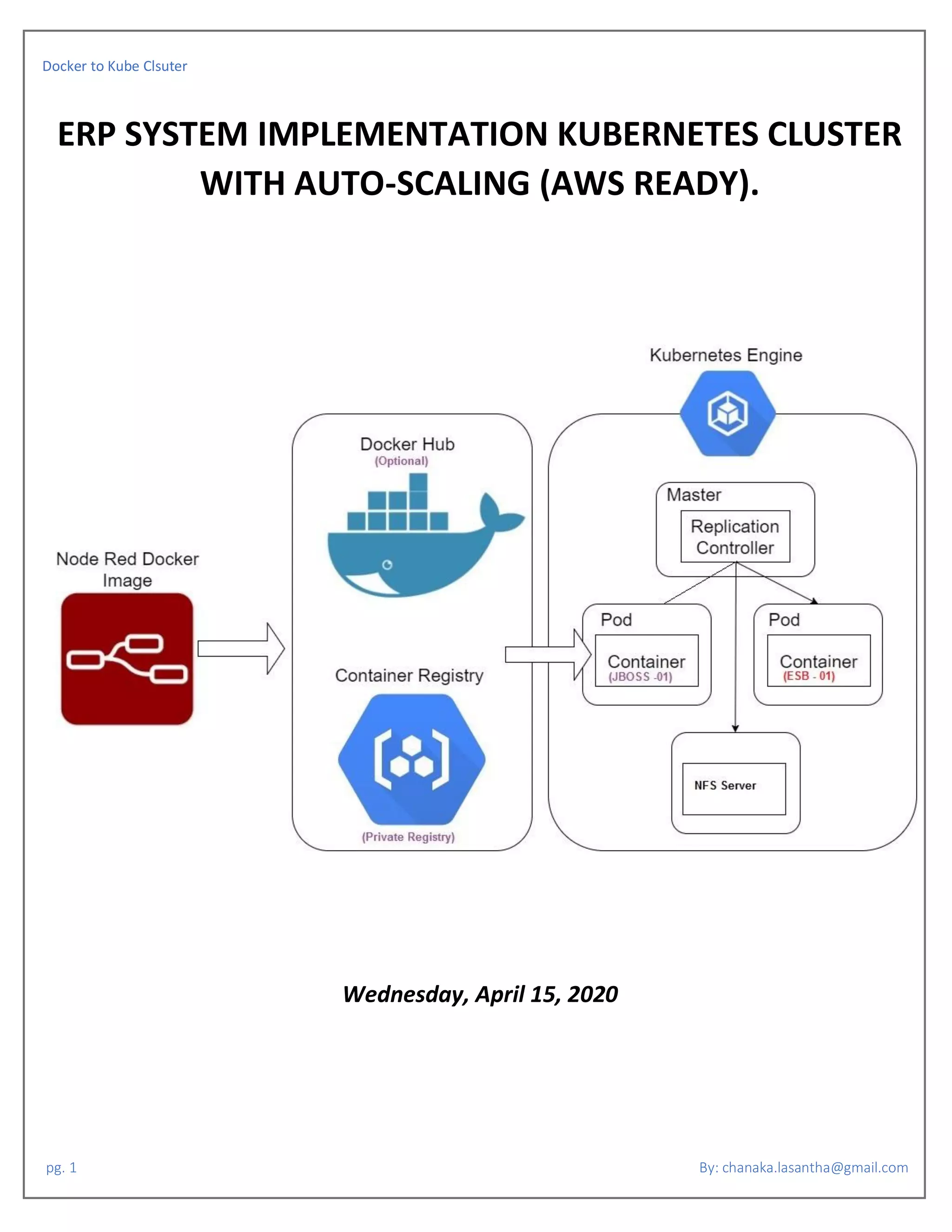

The document outlines the process of migrating a Docker application to a Kubernetes cluster with auto-scaling capabilities, detailing the setup of NFS servers, Dockerfiles for applications like JBoss and ESB, and configuration for persistent volumes. It provides step-by-step instructions, including commands for building and running Docker images, creating persistent volume claims, and configuring services and deployments in Kubernetes. Additionally, it covers network settings and SSH access considerations to facilitate deployment and management of the applications.

![Docker to Kube Cluster

pg. 3 By: chanaka.lasantha@gmail.com

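# Set ENV (no effect: only the last CMD in a Dockerfile is used, so the supervisord CMD below overrides this line)

CMD ["source /etc/profile"]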

# Set root password

RUN echo 'root:z80cpu' >> /root/passwdfile

# Create the user and its password

RUN useradd -m -G sudo chanakan

RUN echo 'chanakan:z80cpu' >> /root/passwdfile

# Apply the passwords from the file, then delete the file

RUN chpasswd -c SHA512 < /root/passwdfile

RUN rm -rf /root/passwdfile

# Enable SSH login for the root user (optional)

RUN sed -i 's/#PermitRootLogin prohibit-password/PermitRootLogin yes/g' /etc/ssh/sshd_config

# Port 22 is used for SSH; the remaining ports are exposed for the application

EXPOSE 22 8280 8243 9443 11111 35399 9999 9763

# Assign /data as static volume.

VOLUME ["/data"]

# Start supervisord, which runs sshd and the application

CMD ["supervisord", "-c", "/etc/supervisor.conf"]

USER root

DOCKERFILE OF JBOSS:

# Base system is the latest LTS version of Ubuntu.

FROM ubuntu

# Make sure we don't get prompts we can't answer during the build.

ENV DEBIAN_FRONTEND=noninteractive

# Prepare scripts and configs

ADD supervisor.conf /etc/supervisor.conf

# Download and install everything from the repos.

RUN apt-get -q -y update; apt-get -q -y upgrade && \
    apt-get -q -y install sudo openssh-server supervisor vim iputils-ping net-tools curl unzip tcpdump alien && \
    apt-get clean all && \
    mkdir /var/run/sshd

# Create the script, Java, log, and image folders

RUN mkdir -p /app/scripts

RUN mkdir -p /app/JAVADIR

RUN mkdir -p /app/logs

RUN mkdir -p /opt/images/temp/daily/

RUN mkdir -p /opt/images/approval/

RUN mkdir -p /opt/images/documents/

RUN mkdir -p /opt/images/signatures/

RUN mkdir -p /opt/images/documents/insurance/renewal

RUN mkdir -p /opt/images/documents/officerupload

RUN mkdir -p /opt/images/documents/cheque/statementUpload](https://image.slidesharecdn.com/kubernetesworkingcluster-200416174457/85/ERP-System-Implementation-Kubernetes-Cluster-with-Sticky-Sessions-3-320.jpg)

![Docker to Kube Cluster

RUN mkdir -p /opt/images/documents/budget/

RUN mkdir -p /opt/images/documents/finance/jrnlUpload/

RUN mkdir -p /opt/images/documents/bulkReceipt/

RUN mkdir -p /opt/images/documents/recovery/bulkInteract/

RUN mkdir -p /opt/images/documents/borrow/scheduleUpload/

# Set working dir

WORKDIR /app

# Adding Jboss PID kill script into the docker container with permission.

COPY JBOSS_STOP.sh /app/scripts

RUN chmod 775 -R /app/scripts/*

# Install the JDK RPM package by converting it with alien.

COPY jdk-7u76-linux-x64.rpm /app

RUN alien --scripts -i /app/jdk-7u76-linux-x64.rpm

# Adding Jboss application into the /app folder.

COPY jboss-as-7.1.3.Final.zip /app

RUN unzip /app/jboss-as-7.1.3.Final.zip

RUN chmod 775 -R /app/jboss-as-7.1.3.Final

#ADD cc-erp-ear-4.0.0.ear /app/jboss-as-7.1.3.Final/standalone/deployments/

#RUN chown root:root /app/jboss-as-7.1.3.Final/standalone/deployments/cc-erp-ear-4.0.0.ear

# Set custom ENV for the node

ENV JAVA_HOME=/usr/java/jdk1.7.0_76

RUN echo "export JBOSS_HOME=/app/jboss-as-7.1.3.Final" >> /etc/profile

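# Set ENV (no effect: only the last CMD in a Dockerfile is used, so the supervisord CMD below overrides this line)

CMD ["source /etc/profile"]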

# Set root password

RUN echo 'root:z80cpu' >> /root/passwdfile

# Create the user and its password

RUN useradd -m -G sudo chanakan

RUN echo 'chanakan:z80cpu' >> /root/passwdfile

# Apply the passwords from the file, then delete the file

RUN chpasswd -c SHA512 < /root/passwdfile

RUN rm -rf /root/passwdfile

# Enable SSH login for the root user (optional)

RUN sed -i 's/#PermitRootLogin prohibit-password/PermitRootLogin yes/g' /etc/ssh/sshd_config

# Port 22 is used for SSH; port 9191 is exposed for the application

EXPOSE 22 9191

# Assign /data as static volume.

VOLUME ["/data"]

# Start supervisord, which runs sshd and JBoss

CMD ["supervisord", "-c", "/etc/supervisor.conf"]

USER root](https://image.slidesharecdn.com/kubernetesworkingcluster-200416174457/85/ERP-System-Implementation-Kubernetes-Cluster-with-Sticky-Sessions-4-320.jpg)

![Docker to Kube Cluster

SUPERVISOR CONFIG FOR JBOSS (supervisor.conf):

[supervisord]

nodaemon=true

[program:sshd]

directory=/usr/local/

command=/usr/sbin/sshd -D

autostart=true

autorestart=true

redirect_stderr=true

[program:jboss7]

command=/app/jboss-as-7.1.3.Final/bin/standalone.sh -b 0.0.0.0 -c standalone.xml

stdout_logfile=NONE

stderr_logfile=NONE

autorestart=true

autostart=true

user=root

directory=/app/jboss-as-7.1.3.Final

environment=JAVA_HOME=/usr/java/jdk1.7.0_76,JBOSS_HOME=/app/jboss-as-7.1.3.Final,JBOSS_BASE_DIR=/app/jboss-as-7.1.3.Final/standalone,RUN_CONF=/app/jboss-as-7.1.3.Final/bin/standalone.conf

stopasgroup=true

SUPERVISOR CONFIG FOR ESB (supervisor.conf):

[supervisord]

nodaemon=true

[program:sshd]

directory=/usr/local/

command=/usr/sbin/sshd -D

autostart=true

autorestart=true

redirect_stderr=true

[program:esb]

command=/app/wso2esb-4.8.0/bin/wso2server.sh

stdout_logfile=NONE

stderr_logfile=NONE

autorestart=true

autostart=true

user=root

directory=/app/wso2esb-4.8.0

environment=JAVA_HOME=/usr/java/jdk1.7.0_76

stopasgroup=true](https://image.slidesharecdn.com/kubernetesworkingcluster-200416174457/85/ERP-System-Implementation-Kubernetes-Cluster-with-Sticky-Sessions-5-320.jpg)

![Docker to Kube Cluster

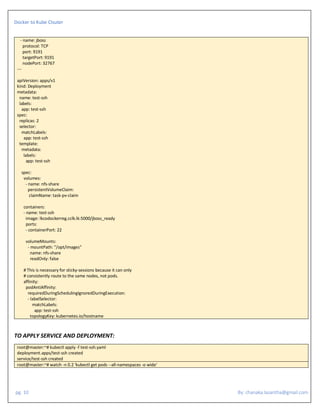

RESOURCE REQUESTS AND LIMITS OF POD AND CONTAINER:

Each Container of a Pod can specify one or more of the following:

spec.containers[].resources.limits.cpu

spec.containers[].resources.limits.memory

spec.containers[].resources.limits.hugepages-<size>

spec.containers[].resources.requests.cpu

spec.containers[].resources.requests.memory

spec.containers[].resources.requests.hugepages-<size>

Although requests and limits can only be specified on individual Containers, it is convenient to talk about Pod resource requests and limits. A Pod resource request/limit for a particular resource type is the sum of the resource requests/limits of that type for each Container in the Pod.
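The summing rule above can be sketched in Python. This is a minimal illustration, not Kubernetes code: the `pod_total` helper, the dictionary layout, and the keys `cpu_millis` and `memory_bytes` are hypothetical names, and the values are assumed to be already converted to millicpu and bytes.

```python
def pod_total(containers, kind, resource):
    """Sum one resource over all containers.

    kind is "requests" or "limits"; resource is a key such as "cpu_millis".
    Both the helper and the key names are illustrative assumptions.
    """
    return sum(container[kind][resource] for container in containers)


# Two hypothetical containers with identical settings.
containers = [
    {
        "requests": {"cpu_millis": 250, "memory_bytes": 64 * 2**20},
        "limits": {"cpu_millis": 500, "memory_bytes": 128 * 2**20},
    },
    {
        "requests": {"cpu_millis": 250, "memory_bytes": 64 * 2**20},
        "limits": {"cpu_millis": 500, "memory_bytes": 128 * 2**20},
    },
]

pod_cpu_request = pod_total(containers, "requests", "cpu_millis")
pod_memory_limit = pod_total(containers, "limits", "memory_bytes")
print(pod_cpu_request, pod_memory_limit)
```

With these sample values the Pod-level CPU request is 500 millicpu and the Pod-level memory limit is 256 MiB.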

MEANING OF CPU:

Limits and requests for CPU resources are measured in cpu units. One cpu, in Kubernetes, is equivalent to 1 vCPU/Core for cloud providers and 1 hyperthread on bare-metal Intel processors.

Fractional requests are allowed. A Container with spec.containers[].resources.requests.cpu of 0.5 is guaranteed half as much CPU as one that asks for 1 CPU. The expression 0.1 is equivalent to the expression 100m, which can be read as “one hundred millicpu”. Some people say “one hundred millicores”, and this is understood to mean the same thing. A request with a decimal point, like 0.1, is converted to 100m by the API, and precision finer than 1m is not allowed. For this reason, the form 100m might be preferred.

CPU is always requested as an absolute quantity, never as a relative quantity; 0.1 is the same amount of CPU on a single-core, dual-core, or 48-core machine.
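The equivalence between 0.1 and 100m can be sketched as a small conversion. This is an illustrative Python helper, not the Kubernetes parser: the function name `cpu_to_millicpu` is hypothetical, and rejecting values finer than 1m with an error is an assumption based on the rule stated above.

```python
from decimal import Decimal


def cpu_to_millicpu(quantity: str) -> int:
    """Convert a CPU quantity such as "0.1", "100m" or "2" to whole millicpu."""
    if quantity.endswith("m"):
        # Already expressed in millicpu, e.g. "100m".
        millis = Decimal(quantity[:-1])
    else:
        # Expressed in whole or fractional cpu units, e.g. "0.1".
        millis = Decimal(quantity) * 1000
    if millis != millis.to_integral_value():
        raise ValueError(f"precision finer than 1m is not allowed: {quantity!r}")
    return int(millis)


print(cpu_to_millicpu("0.1"), cpu_to_millicpu("100m"), cpu_to_millicpu("2"))
```

Using `Decimal` rather than `float` keeps the check for sub-millicpu precision exact.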

MEANING OF MEMORY:

Limits and requests for memory are measured in bytes. You can express memory as a plain integer or as a fixed-point number using one of these suffixes: E, P, T, G, M, k. You can also use the power-of-two equivalents: Ei, Pi, Ti, Gi, Mi, Ki. For example, the following represent roughly the same value:

128974848, 129e6, 129M, 123Mi
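To see why these four values are roughly equal, they can be converted to bytes. The following Python sketch is an assumption-laden illustration, not the Kubernetes quantity parser: the function name `memory_to_bytes` and the two lookup tables are hypothetical, and the decimal kilo suffix is written in lowercase (`k`), which is how Kubernetes spells it.

```python
from decimal import Decimal

# Decimal (power-of-ten) and binary (power-of-two) suffixes.
DECIMAL_SUFFIXES = {"k": 10**3, "M": 10**6, "G": 10**9,
                    "T": 10**12, "P": 10**15, "E": 10**18}
BINARY_SUFFIXES = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30,
                   "Ti": 2**40, "Pi": 2**50, "Ei": 2**60}


def memory_to_bytes(quantity: str) -> int:
    """Convert a memory quantity such as "129M" or "123Mi" to bytes."""
    # Check two-letter binary suffixes first so "Mi" is not read as "M".
    for suffix, factor in BINARY_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(Decimal(quantity[:-len(suffix)]) * factor)
    for suffix, factor in DECIMAL_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(Decimal(quantity[:-len(suffix)]) * factor)
    # Plain integer or exponent notation such as "129e6".
    return int(Decimal(quantity))


for text in ["128974848", "129e6", "129M", "123Mi"]:
    print(text, memory_to_bytes(text))
```

129e6 and 129M both come to 129,000,000 bytes, while 123Mi and 128974848 are both 128,974,848 bytes, a difference of about 0.02%.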

Here’s an example. The following Pod has two Containers. Each Container has a request of 0.25 cpu and 64MiB (2^26 bytes) of memory. Each Container has a limit of 0.5 cpu and 128MiB of memory. You can say the Pod has a request of 0.5 cpu and 128 MiB of memory, and a limit of 1 cpu and 256MiB of memory.

apiVersion: v1
kind: Pod
metadata:
  name: frontend
spec:
  containers:
  - name: db
    image: mysql
    env:
    - name: MYSQL_ROOT_PASSWORD
      value: "password"
    resources:
      requests:
        memory: "64Mi"
        cpu: "250m"
      limits:
        memory: "128Mi"
        cpu: "500m"
  - name: wp
    image: wordpress
    resources:](https://image.slidesharecdn.com/kubernetesworkingcluster-200416174457/85/ERP-System-Implementation-Kubernetes-Cluster-with-Sticky-Sessions-14-320.jpg)

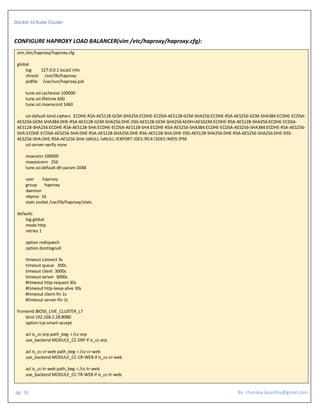

![Docker to Kube Cluster

acl is_cc-ry-web path_beg -i /cc-ry-web

use_backend MODULE_CC-RY-WEB if is_cc-ry-web

acl is_cc-le-web path_beg -i /cc-le-web

use_backend MODULE_CC-LE-WEB if is_cc-le-web

acl is_cc-rp-web path_beg -i /cc-rp-web

use_backend MODULE_CC-RP-WEB if is_cc-rp-web

acl is_cc-fd-web path_beg -i /cc-fd-web

use_backend MODULE_CC-FD-WEB if is_cc-fd-web

default_backend MODULE_CC-ERP

backend MODULE_CC-ERP

mode http

balance roundrobin

option abortonclose

option tcp-smart-connect

cookie SERVERID insert indirect nocache

option httpclose

option forwardfor

reqirep ^([^\ :]*)\ /cc-erp/(.*) \1\ /cc-erp/\2

server LIVE-JBOSS-192.168.2.29:32767 192.168.2.29:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.29:32767 inter 1000

server LIVE-JBOSS-192.168.2.30:32767 192.168.2.30:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.30:32767 inter 1000

backend MODULE_CC-CR-WEB

mode http

balance roundrobin

option abortonclose

option tcp-smart-connect

cookie SERVERID insert indirect nocache

option httpclose

option forwardfor

reqirep ^([^\ :]*)\ /cc-cr-web/(.*) \1\ /cc-cr-web/\2

server LIVE-JBOSS-192.168.2.29:32767 192.168.2.29:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.29:32767 inter 1000

server LIVE-JBOSS-192.168.2.30:32767 192.168.2.30:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.30:32767 inter 1000

backend MODULE_CC-TR-WEB

mode http

balance roundrobin

option abortonclose

option tcp-smart-connect

cookie SERVERID insert indirect nocache

option httpclose

option forwardfor

reqirep ^([^\ :]*)\ /cc-tr-web/(.*) \1\ /cc-tr-web/\2

server LIVE-JBOSS-192.168.2.29:32767 192.168.2.29:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.29:32767 inter 1000

server LIVE-JBOSS-192.168.2.30:32767 192.168.2.30:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.30:32767 inter 1000

backend MODULE_CC-RY-WEB

mode http

balance roundrobin

option abortonclose

option tcp-smart-connect

cookie SERVERID insert indirect nocache

option httpclose

option forwardfor

reqirep ^([^\ :]*)\ /cc-ry-web/(.*) \1\ /cc-ry-web/\2

server LIVE-JBOSS-192.168.2.29:32767 192.168.2.29:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.29:32767 inter 1000

server LIVE-JBOSS-192.168.2.30:32767 192.168.2.30:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.30:32767 inter 1000](https://image.slidesharecdn.com/kubernetesworkingcluster-200416174457/85/ERP-System-Implementation-Kubernetes-Cluster-with-Sticky-Sessions-17-320.jpg)

![Docker to Kube Cluster

backend MODULE_CC-RP-WEB

mode http

balance roundrobin

option abortonclose

option tcp-smart-connect

cookie SERVERID insert indirect nocache

option httpclose

option forwardfor

reqirep ^([^\ :]*)\ /cc-rp-web/(.*) \1\ /cc-rp-web/\2

server LIVE-JBOSS-192.168.2.29:32767 192.168.2.29:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.29:32767 inter 1000

server LIVE-JBOSS-192.168.2.30:32767 192.168.2.30:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.30:32767 inter 1000

backend MODULE_CC-LE-WEB

mode http

balance roundrobin

option abortonclose

option tcp-smart-connect

cookie SERVERID insert indirect nocache

option httpclose

option forwardfor

reqirep ^([^\ :]*)\ /cc-le-web/(.*) \1\ /cc-le-web/\2

server LIVE-JBOSS-192.168.2.29:32767 192.168.2.29:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.29:32767 inter 1000

server LIVE-JBOSS-192.168.2.30:32767 192.168.2.30:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.30:32767 inter 1000

backend MODULE_CC-FD-WEB

mode http

balance roundrobin

option abortonclose

option tcp-smart-connect

cookie SERVERID insert indirect nocache

option httpclose

option forwardfor

reqirep ^([^\ :]*)\ /cc-fd-web/(.*) \1\ /cc-fd-web/\2

server LIVE-JBOSS-192.168.2.29:32767 192.168.2.29:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.29:32767 inter 1000

server LIVE-JBOSS-192.168.2.30:32767 192.168.2.30:32767 maxconn 2500 check cookie LIVE-JBOSS-192.168.2.30:32767 inter 1000

frontend TCP_SOAP_L4_A_FRN

bind 192.168.2.28:8078

mode tcp

option tcplog

backlog 4096

default_backend TCP_SOAP_L4_A

backend TCP_SOAP_L4_A

mode tcp

option tcplog

option log-health-checks

option tcpka

balance roundrobin

server ESB-SERVER-SOAP-192.168.2.29 192.168.2.29:31768 maxconn 2500 check inter 1000

server ESB-SERVER-SOAP-192.168.2.30 192.168.2.30:31768 maxconn 2500 check inter 1000

frontend HTTPS_AUTH_L4_A_FRN

bind 192.168.2.28:8041

mode tcp

option tcplog

backlog 4096

default_backend HTTPS_AUTH_L4_A

backend HTTPS_AUTH_L4_A](https://image.slidesharecdn.com/kubernetesworkingcluster-200416174457/85/ERP-System-Implementation-Kubernetes-Cluster-with-Sticky-Sessions-18-320.jpg)

![Docker to Kube Cluster

mode tcp

option tcplog

option log-health-checks

option tcpka

balance roundrobin

reqadd X-Forwarded-Proto:\ http

server ESB-MANAGEMENT-INTERFACE-192.168.2.29 192.168.2.29:31769 maxconn 512 check inter 1000

server ESB-MANAGEMENT-INTERFACE-192.168.2.30 192.168.2.30:31769 maxconn 512 check inter 1000

frontend www-http-wso2

bind 192.168.2.28:10000 ssl crt /etc/pki/tls/certs/haproxy.pem

mode http

reqadd X-Forwarded-Proto:\ https

default_backend servers

backend servers

http-request set-header X-Forwarded-Port %[dst_port]

http-request add-header X-Forwarded-Proto https if { ssl_fc }

balance roundrobin

option httpclose

cookie SERVERID insert indirect nocache

cookie JSESSIONID prefix nocache

option forwardfor

reqadd X-Forwarded-Proto:\ http

server ESB-MANAGEMENT-INTERFACE-192.168.2.29 192.168.2.29:31770 maxconn 2500 check cookie check ssl verify none inter 1000

server ESB-MANAGEMENT-INTERFACE-192.168.2.30 192.168.2.30:31770 maxconn 2500 check cookie check ssl verify none inter 1000

frontend STATICTICS

bind 192.168.2.28:3128 ssl crt /etc/pki/tls/certs/haproxy.pem

reqadd X-Forwarded-Proto:\ http

default_backend stats

backend stats

mode http

option abortonclose

option httpclose

log global

stats enable

stats hide-version

stats refresh 15s

stats show-node

stats auth admin:z80cpu

stats uri /haproxy?stats

bind-process

root@master# systemctl restart haproxy

HAPROXY Dashboard: https://192.168.2.28:3128/haproxy?stats](https://image.slidesharecdn.com/kubernetesworkingcluster-200416174457/85/ERP-System-Implementation-Kubernetes-Cluster-with-Sticky-Sessions-19-320.jpg)
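The path-based routing in the HAProxy listing (`acl ... path_beg -i` followed by `use_backend`, with `default_backend` as the fallback) can be sketched in Python. This is only a model of the selection logic, not how HAProxy is implemented: the `select_backend` function and the `ROUTES` table are hypothetical, and only the four ACLs visible on the pages above are included.

```python
# (path prefix, backend name) pairs taken from the visible acl/use_backend lines.
ROUTES = [
    ("/cc-ry-web", "MODULE_CC-RY-WEB"),
    ("/cc-le-web", "MODULE_CC-LE-WEB"),
    ("/cc-rp-web", "MODULE_CC-RP-WEB"),
    ("/cc-fd-web", "MODULE_CC-FD-WEB"),
]
DEFAULT_BACKEND = "MODULE_CC-ERP"


def select_backend(path: str) -> str:
    """Return the backend for a request path.

    Mirrors "path_beg -i": a case-insensitive prefix match, first rule wins,
    falling back to the default backend when nothing matches.
    """
    lowered = path.lower()
    for prefix, backend in ROUTES:
        if lowered.startswith(prefix):
            return backend
    return DEFAULT_BACKEND


print(select_backend("/cc-ry-web/login"))
print(select_backend("/cc-erp/home"))
```

A request for `/cc-ry-web/login` is sent to `MODULE_CC-RY-WEB`, while any path that matches no ACL, such as `/cc-erp/home`, falls through to `MODULE_CC-ERP`.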