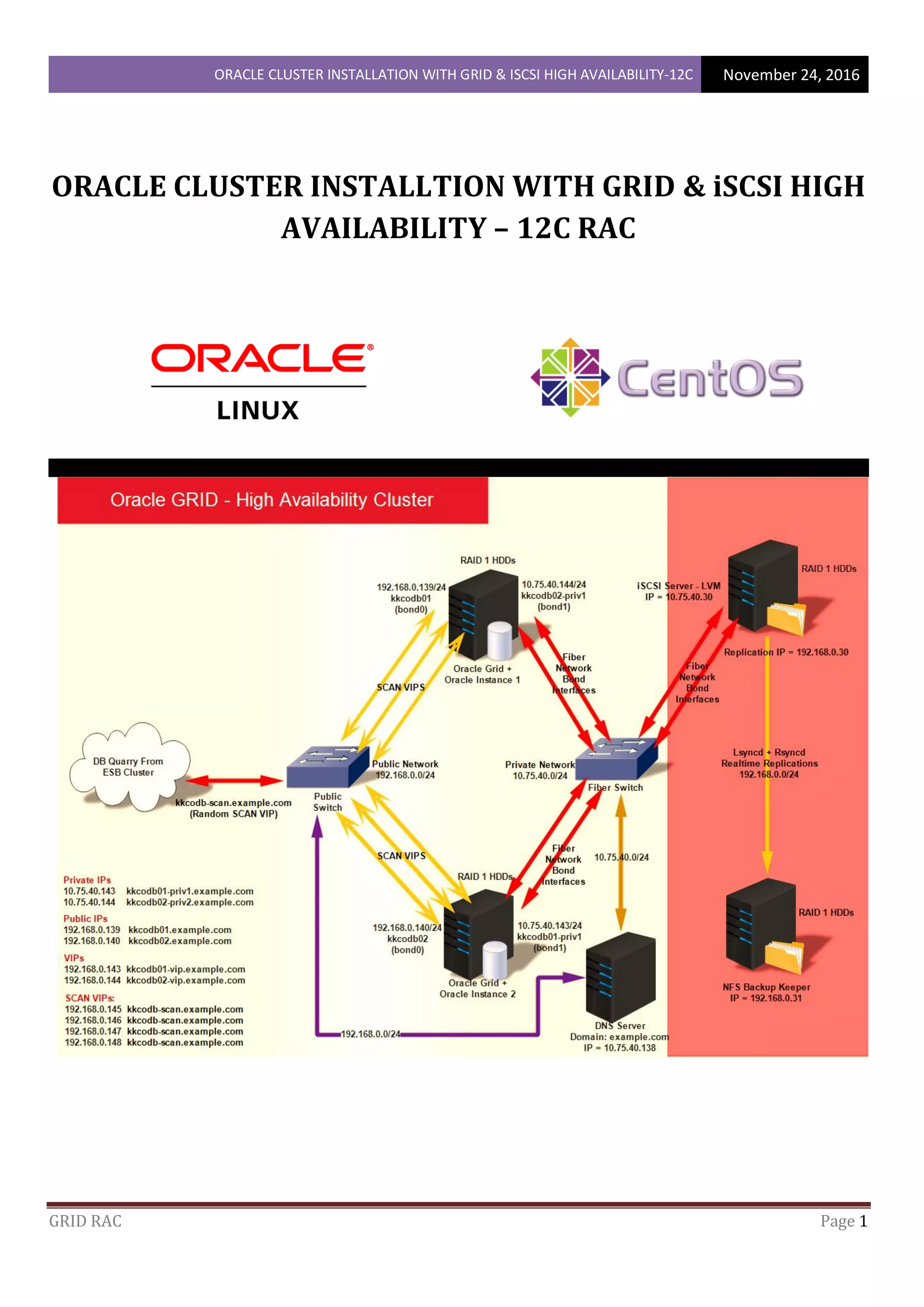

This document outlines the steps to install Oracle Grid Infrastructure and configure an Oracle Real Application Clusters (RAC) database with iSCSI high availability on two nodes. It covers prerequisite tasks such as setting up repositories, installing the Oracle Grid and database packages, and configuring users, directories, and environment variables. Specific steps include bonding network interfaces, configuring the hosts file, setting swap space, and installing the Oracle Grid software.

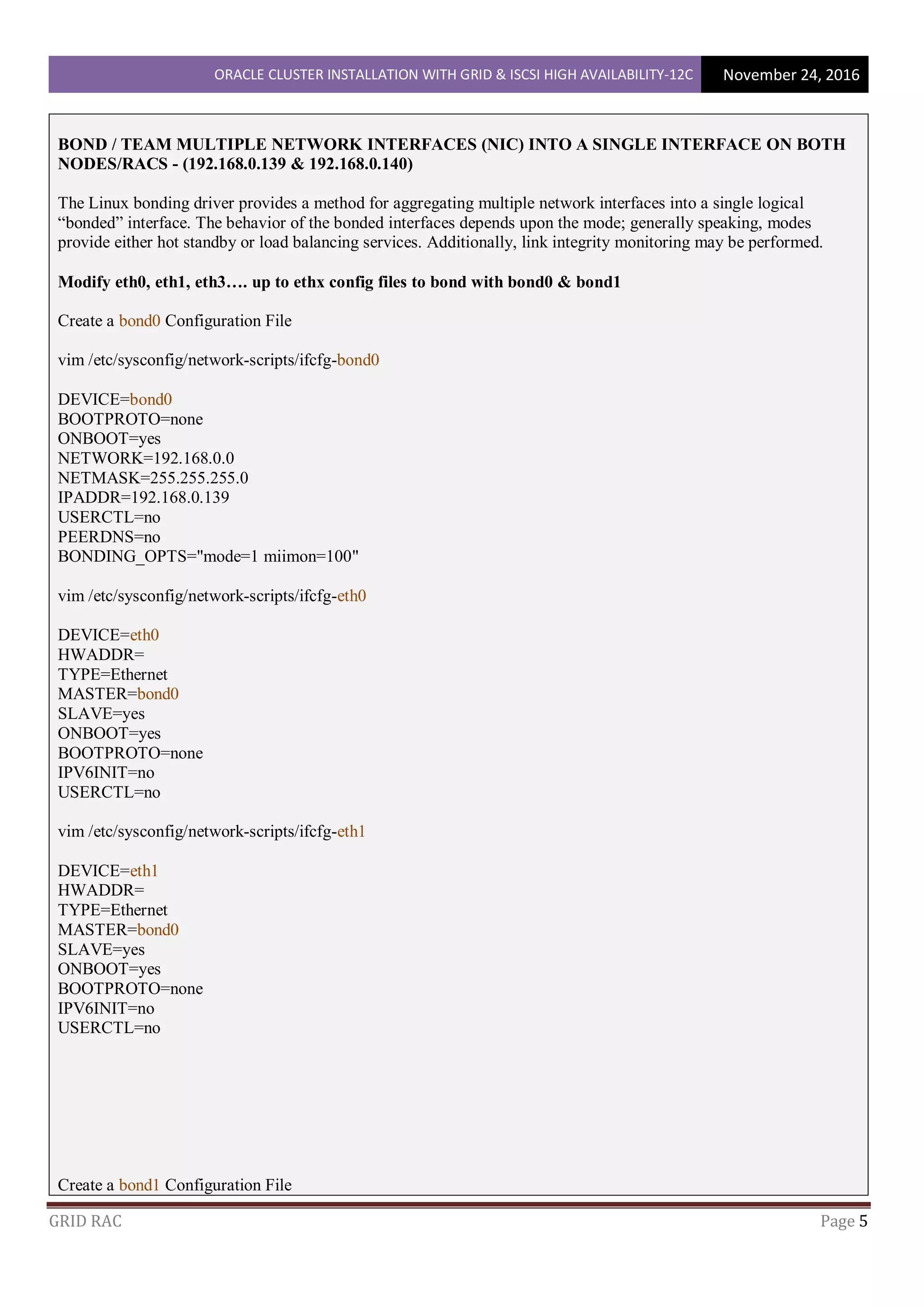

![ORACLE CLUSTER INSTALLATION WITH GRID & ISCSI HIGH AVAILABILITY-12C November 24, 2016

GRID RAC Page 7

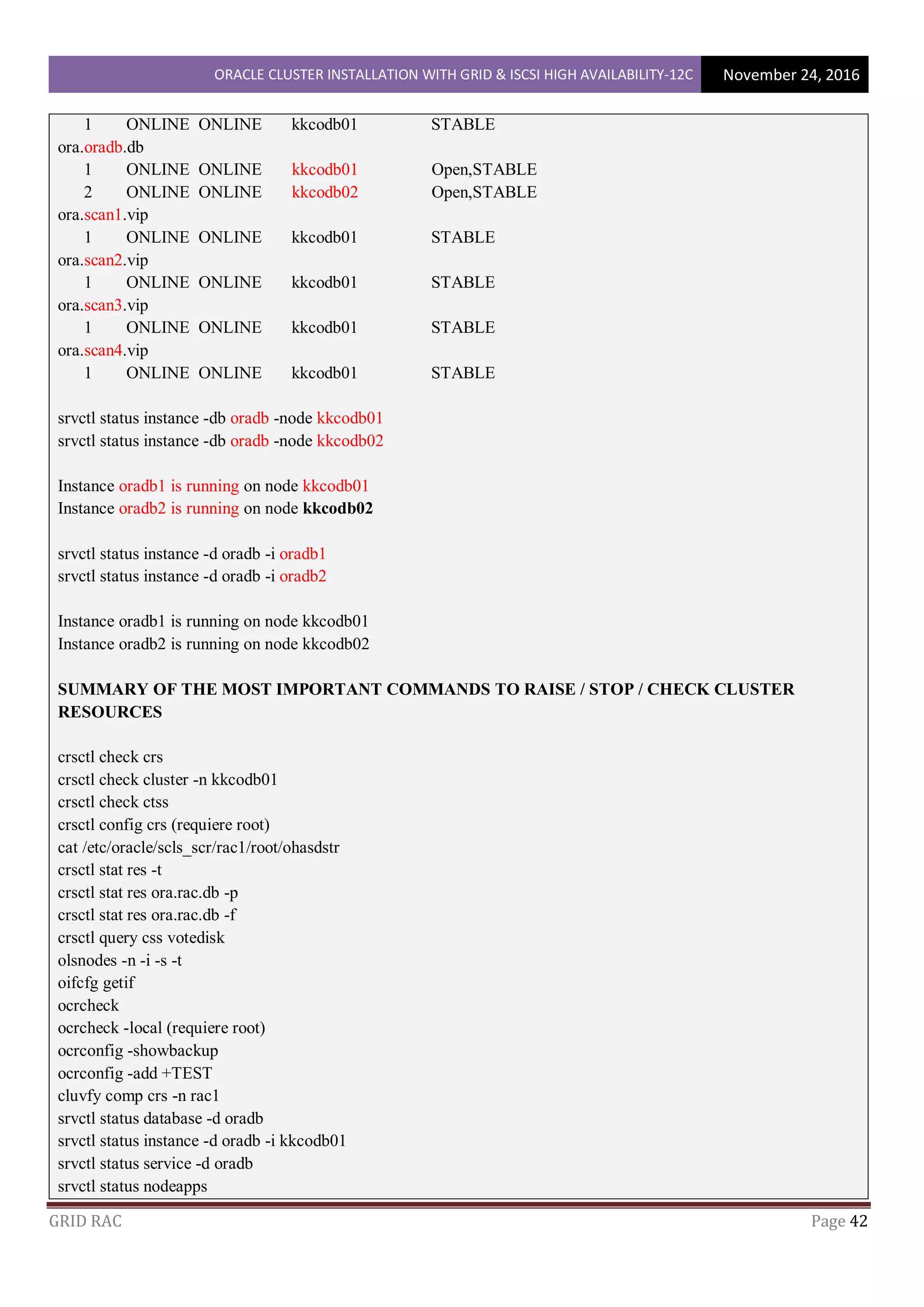

mkdir -p /app/oracle

mkdir -p /app/12.1.0/grid

chown grid:dba /app

chown grid:dba /app/oracle

chown grid:dba /app/12.1.0

chown grid:dba /app/12.1.0/grid

chmod -R 775 /app

mkdir -p /u01 ; mkdir -p /u02 ; mkdir -p /u03

(Give read/write/execute permission to the grid user in the dba group)

chown grid:dba /u01

chown grid:dba /u02

chown grid:dba /u03

chmod +x /u01

chmod +x /u02

chmod +x /u03

or

(Give read/write/execute permission to grid, oracle, and all other users in the dba group)

chgrp dba /u01

chgrp dba /u02

chgrp dba /u03

chmod g+swr /u01

chmod g+swr /u02

chmod g+swr /u03
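The effect of `chmod g+swr` is easier to see on a scratch directory. The sketch below is only an illustration: it assumes GNU `stat`, and it works on a throwaway directory from `mktemp` (the name `demo_dir` is hypothetical) rather than on /u01.

```shell
# Create a scratch directory and give it a known starting mode.
demo_dir=$(mktemp -d)
chmod 755 "$demo_dir"

# Same mode change as applied to /u01, /u02 and /u03 above.
chmod g+swr "$demo_dir"

# Print the resulting octal mode, then clean up.
mode=$(stat -c '%a' "$demo_dir")
echo "mode after g+swr: $mode"
rmdir "$demo_dir"
```

Starting from 755 the result is 2775. The leading 2 is the setgid bit, which makes files created in the directory inherit the directory's group.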

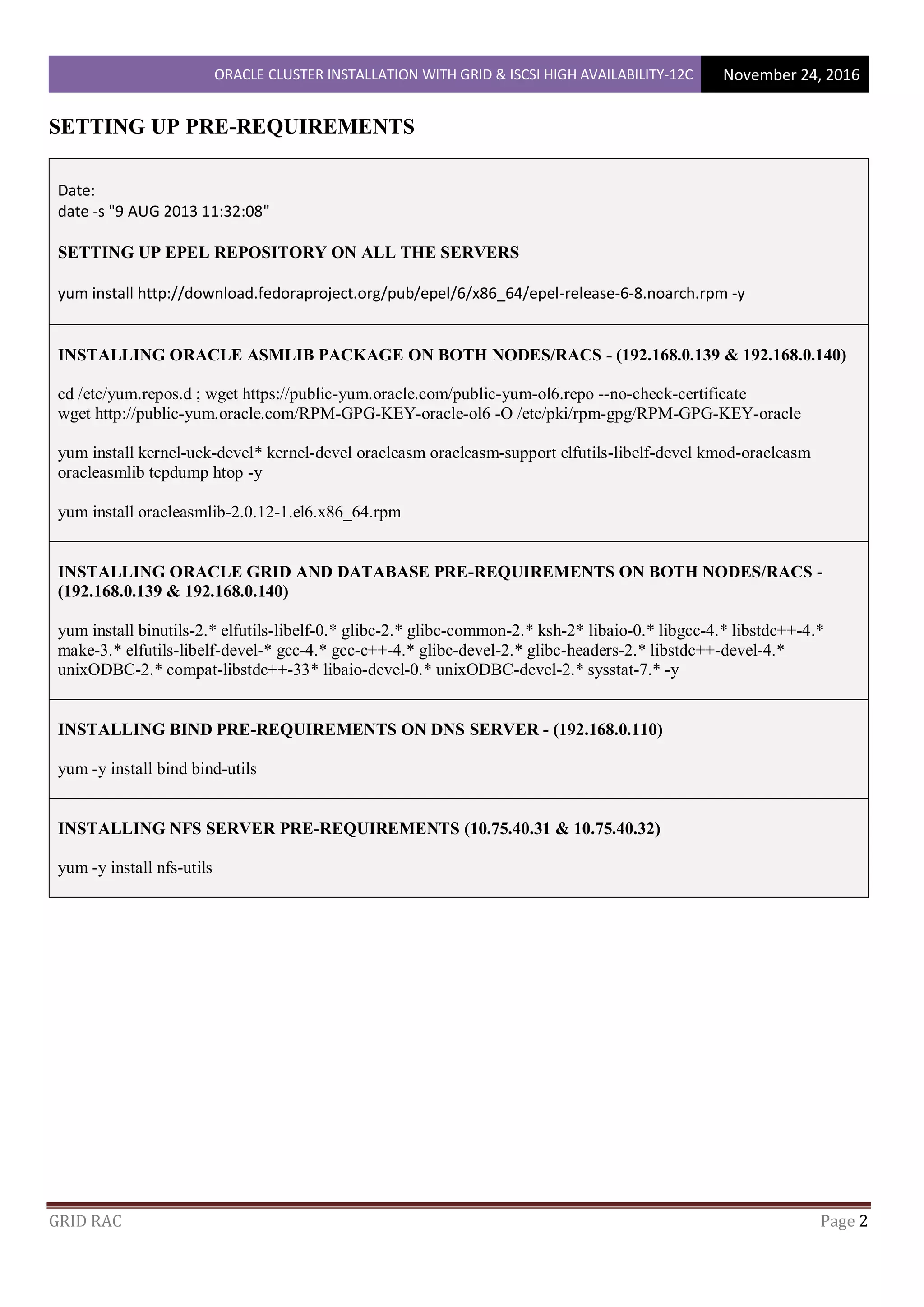

SETTING UP ENVIRONMENT VARIABLES FOR OS ACCOUNTS: GRID AND ORACLE ON BOTH NODES/RACS - (192.168.0.139 & 192.168.0.140)

On kkcodb01, as the grid user:

su - grid

vim /home/grid/.bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/bin

export PATH

# Oracle Settings

TMP=/tmp; export TMP

TMPDIR=$TMP; export TMPDIR](https://image.slidesharecdn.com/oracleclusterinstallationwithgridiscsi-200213033147/75/Oracle-cluster-installation-with-grid-and-iscsi-7-2048.jpg)

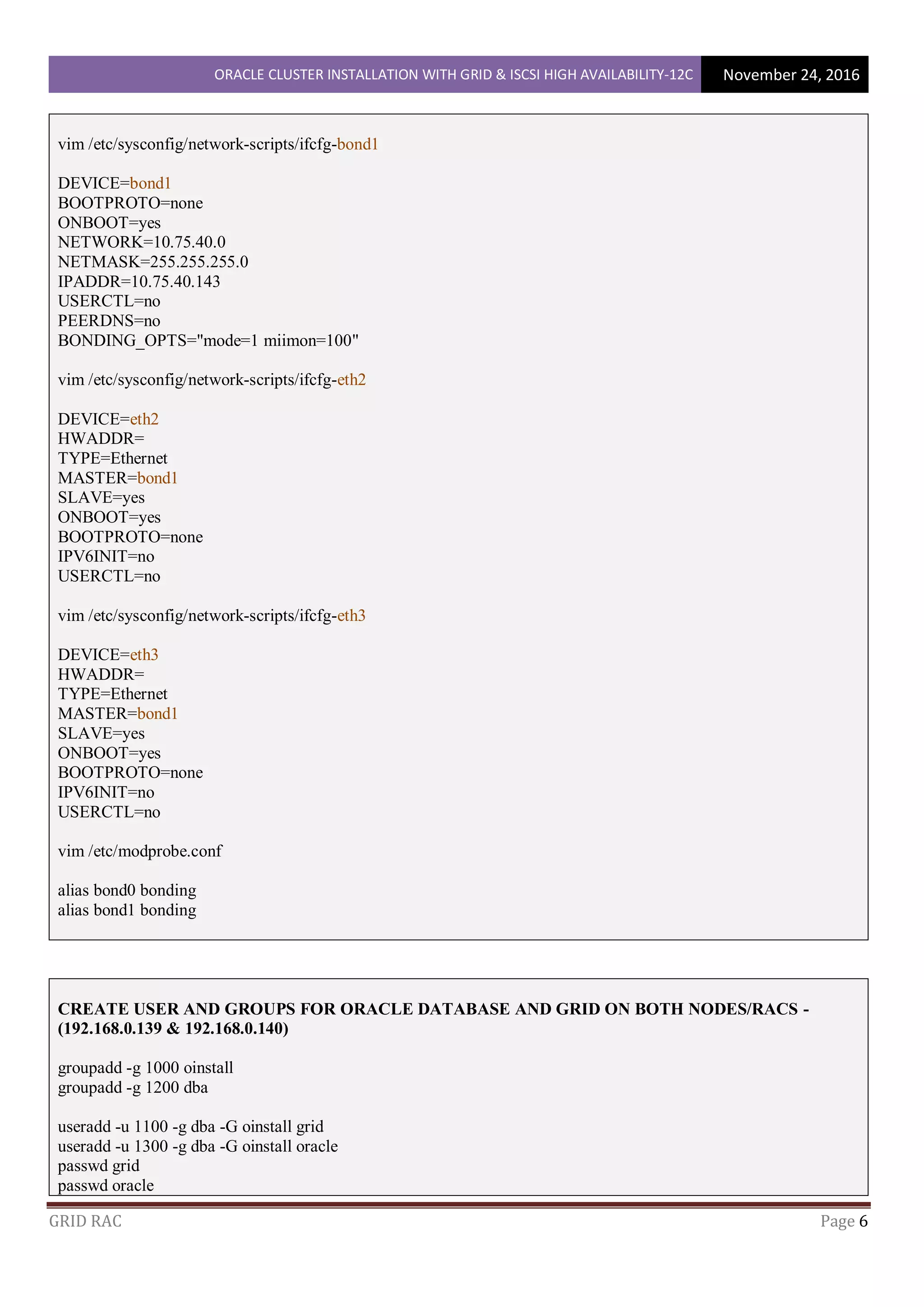

![

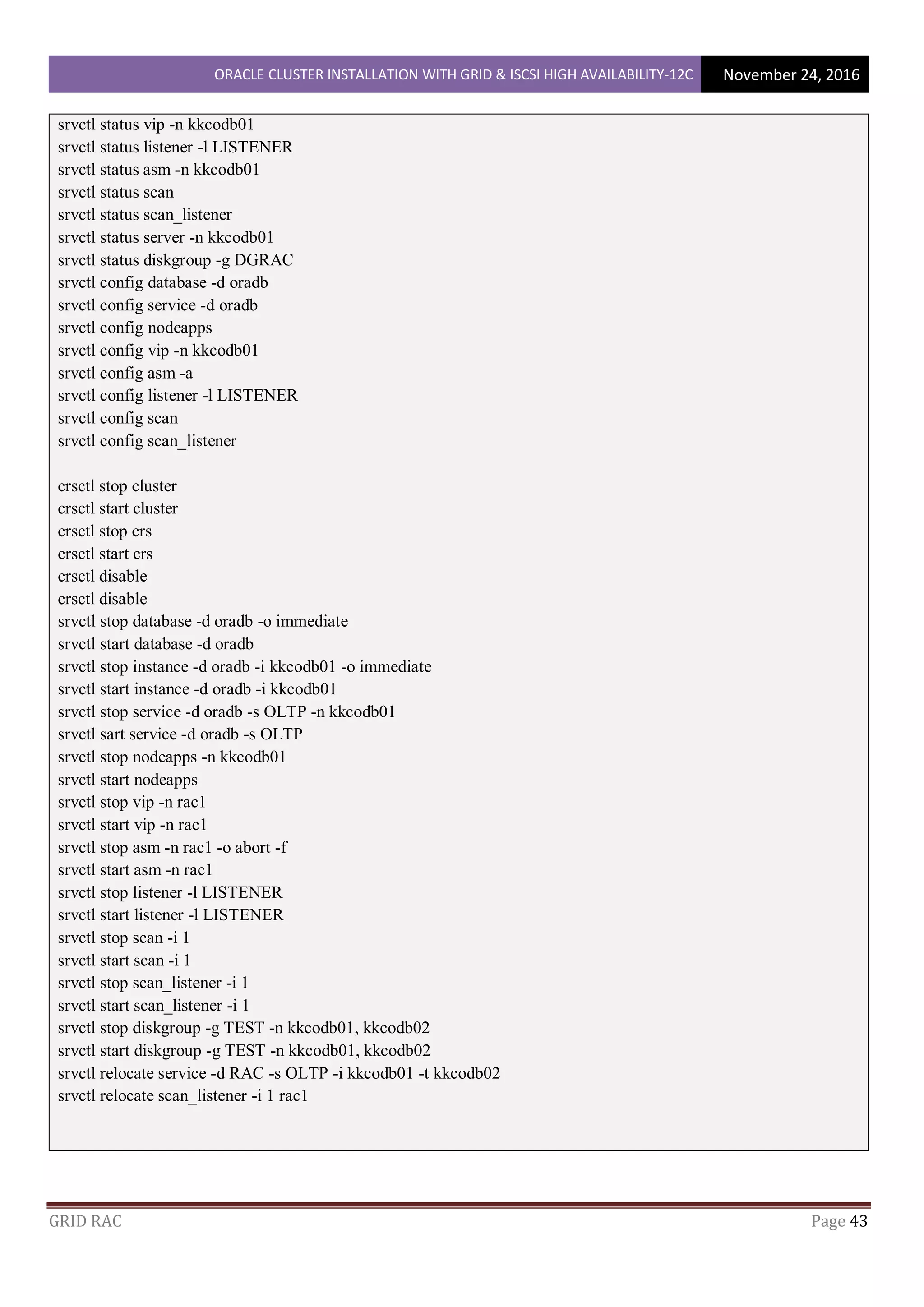

ORACLE_HOSTNAME=kkcodb01; export ORACLE_HOSTNAME

ORACLE_UNQNAME=RAC; export ORACLE_UNQNAME

ORACLE_BASE=/app/oracle; export ORACLE_BASE

GRID_HOME=/app/12.1.0/grid; export GRID_HOME

DB_HOME=$ORACLE_BASE/product/12.1.0/db_1; export DB_HOME

ORACLE_HOME=$GRID_HOME; export ORACLE_HOME

ORACLE_SID=RAC1; export ORACLE_SID

ORACLE_TERM=xterm; export ORACLE_TERM

BASE_PATH=/usr/sbin:$PATH; export BASE_PATH

PATH=$ORACLE_HOME/bin:$BASE_PATH; export PATH

LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib; export LD_LIBRARY_PATH

CLASSPATH=$ORACLE_HOME/JRE:$ORACLE_HOME/jlib:$ORACLE_HOME/rdbms/jlib; export CLASSPATH

umask 022

On kkcodb02, as the grid user:

su - grid

vim /home/grid/.bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/bin

export PATH

# Oracle Settings

TMP=/tmp; export TMP

TMPDIR=$TMP; export TMPDIR

ORACLE_HOSTNAME=kkcodb02; export ORACLE_HOSTNAME

ORACLE_UNQNAME=RAC; export ORACLE_UNQNAME

ORACLE_BASE=/app/oracle; export ORACLE_BASE

GRID_HOME=/app/12.1.0/grid; export GRID_HOME

DB_HOME=$ORACLE_BASE/product/12.1.0/db_1; export DB_HOME

ORACLE_HOME=$GRID_HOME; export ORACLE_HOME

ORACLE_SID=RAC2; export ORACLE_SID

ORACLE_TERM=xterm; export ORACLE_TERM

BASE_PATH=/usr/sbin:$PATH; export BASE_PATH

PATH=$ORACLE_HOME/bin:$BASE_PATH; export PATH

LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib; export LD_LIBRARY_PATH

CLASSPATH=$ORACLE_HOME/JRE:$ORACLE_HOME/jlib:$ORACLE_HOME/rdbms/jlib; export CLASSPATH

umask 022](https://image.slidesharecdn.com/oracleclusterinstallationwithgridiscsi-200213033147/75/Oracle-cluster-installation-with-grid-and-iscsi-8-2048.jpg)
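After logging in again with the new profile, it can help to confirm that the variables were actually picked up. The function below is a minimal sketch of such a check; the name `check_oracle_env` and the list of variables it inspects are assumptions made for illustration, not part of the original procedure.

```shell
# Report which of the expected Oracle variables are set in this shell.
# Returns 0 when all are set, 1 when at least one is empty or missing.
check_oracle_env() {
    missing=0
    for name in ORACLE_BASE ORACLE_HOME ORACLE_SID GRID_HOME DB_HOME; do
        # Indirect lookup of the variable whose name is in $name.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "missing: $name"
            missing=1
        else
            echo "$name=$value"
        fi
    done
    return $missing
}
```

Running `check_oracle_env` as the grid user on kkcodb01 should list ORACLE_HOME as /app/12.1.0/grid and ORACLE_SID as RAC1 if the profile above was loaded.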

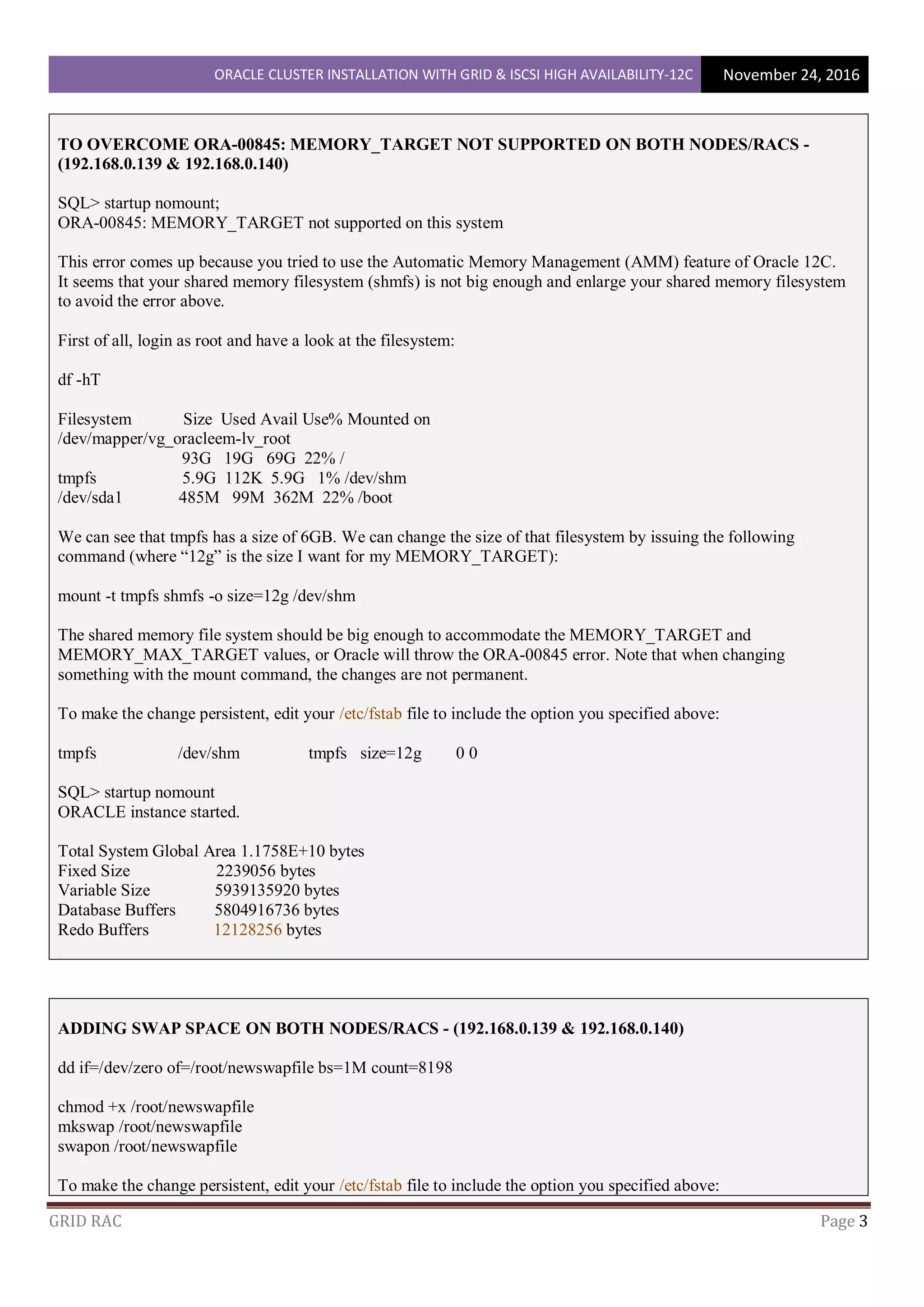

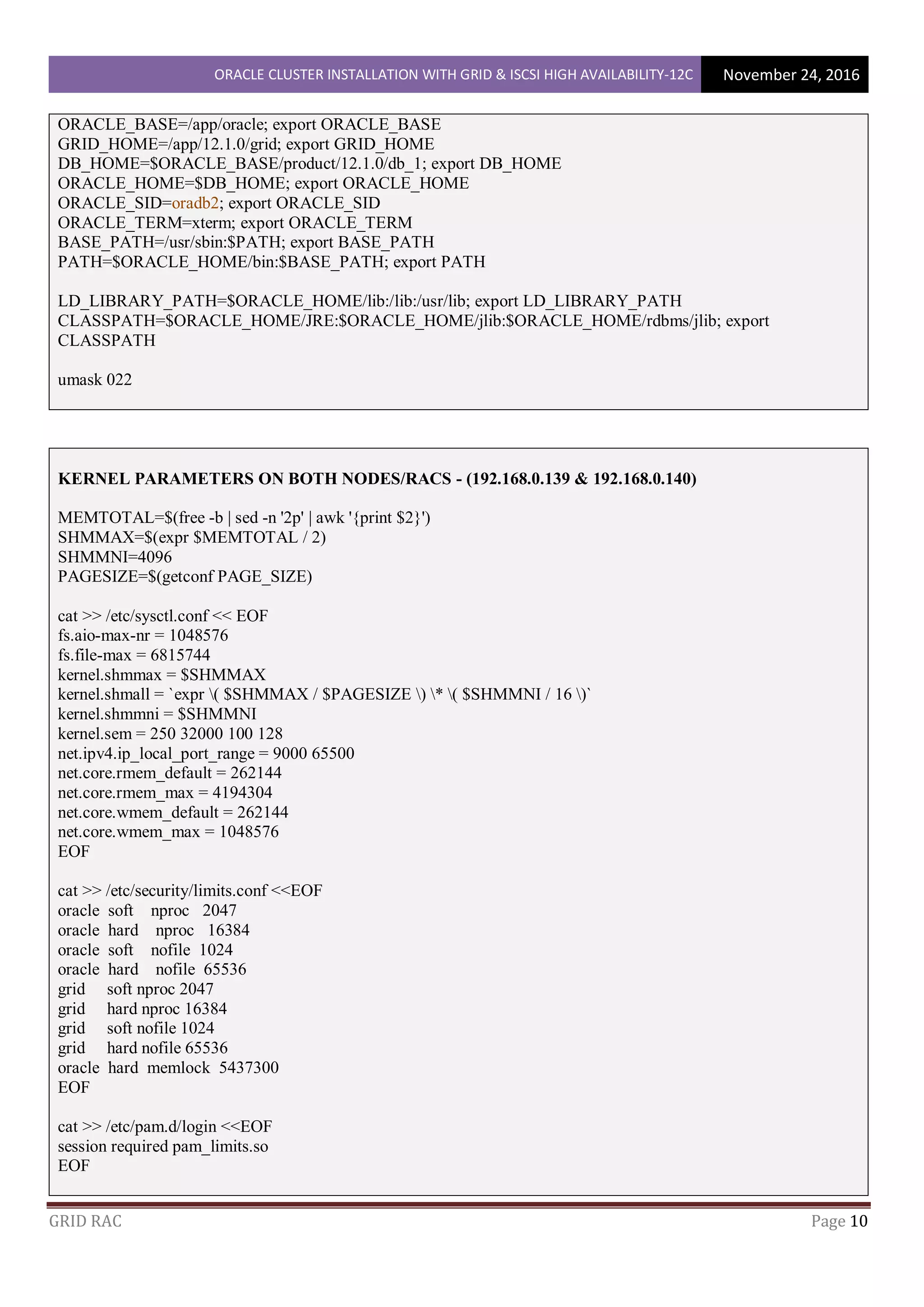

![

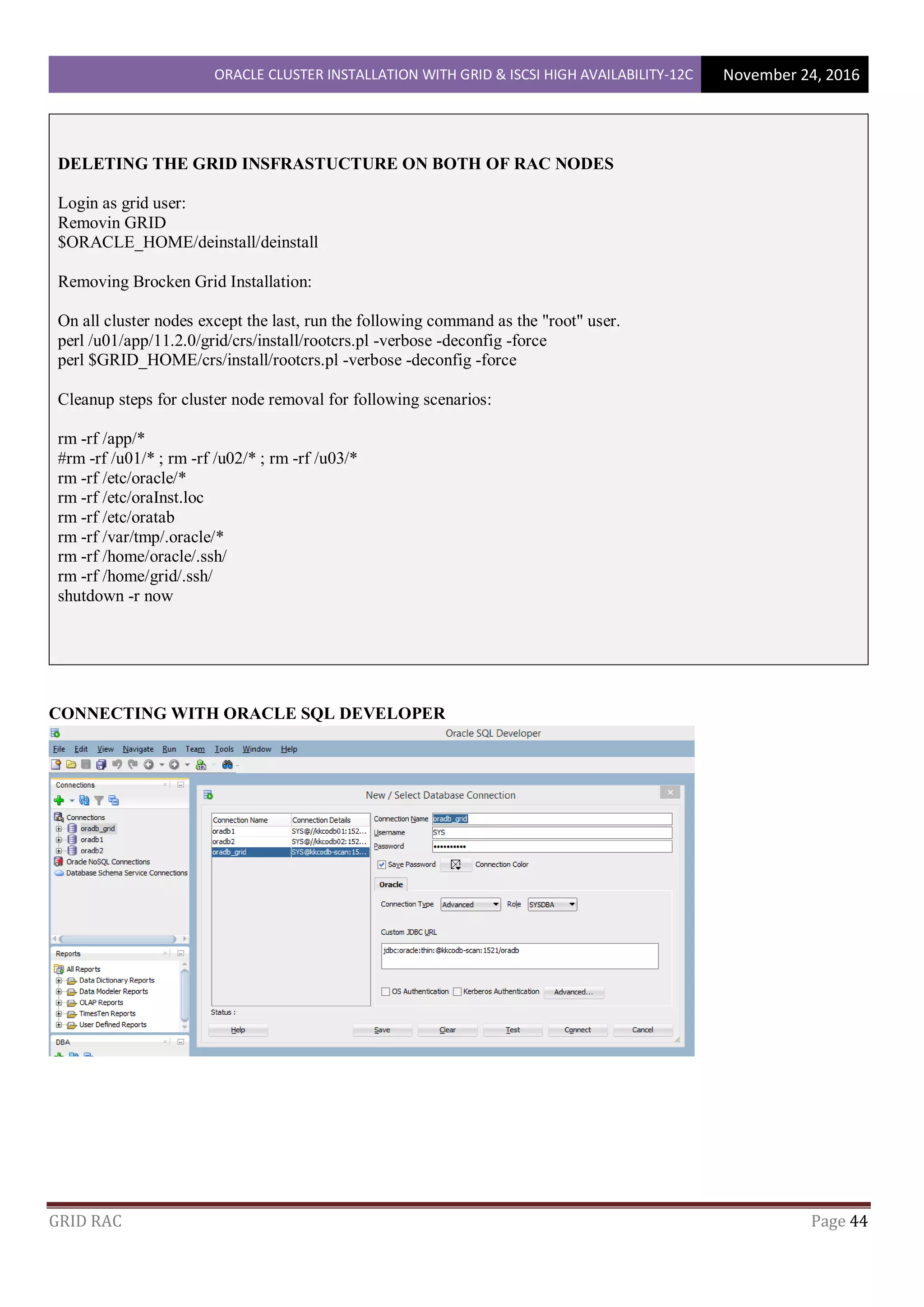

On kkcodb01, as the oracle user:

su - oracle

vim /home/oracle/.bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/bin

export PATH

# Oracle Settings

TMP=/tmp; export TMP

TMPDIR=$TMP; export TMPDIR

ORACLE_HOSTNAME=kkcodb01; export ORACLE_HOSTNAME

ORACLE_UNQNAME=oradb; export ORACLE_UNQNAME

ORACLE_BASE=/app/oracle; export ORACLE_BASE

GRID_HOME=/app/12.1.0/grid; export GRID_HOME

DB_HOME=$ORACLE_BASE/product/12.1.0/db_1; export DB_HOME

ORACLE_HOME=$DB_HOME; export ORACLE_HOME

ORACLE_SID=oradb1; export ORACLE_SID

ORACLE_TERM=xterm; export ORACLE_TERM

BASE_PATH=/usr/sbin:$PATH; export BASE_PATH

PATH=$ORACLE_HOME/bin:$BASE_PATH; export PATH

LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib; export LD_LIBRARY_PATH

CLASSPATH=$ORACLE_HOME/JRE:$ORACLE_HOME/jlib:$ORACLE_HOME/rdbms/jlib; export CLASSPATH

umask 022

On kkcodb02, as the oracle user:

su - oracle

vim /home/oracle/.bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/bin

export PATH

# Oracle Settings

TMP=/tmp; export TMP

TMPDIR=$TMP; export TMPDIR

ORACLE_HOSTNAME=kkcodb02; export ORACLE_HOSTNAME

ORACLE_UNQNAME=oradb; export ORACLE_UNQNAME](https://image.slidesharecdn.com/oracleclusterinstallationwithgridiscsi-200213033147/75/Oracle-cluster-installation-with-grid-and-iscsi-9-2048.jpg)

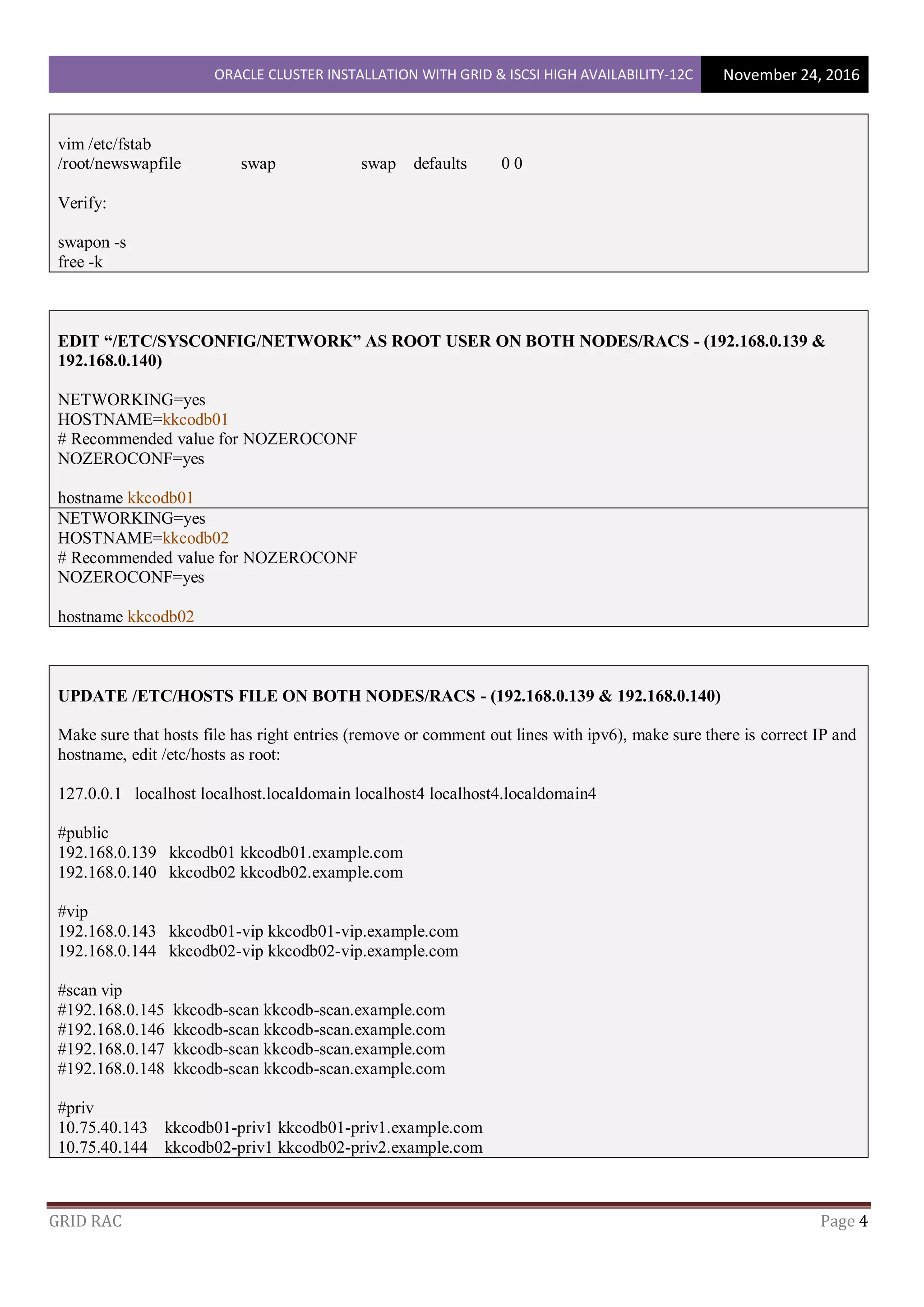

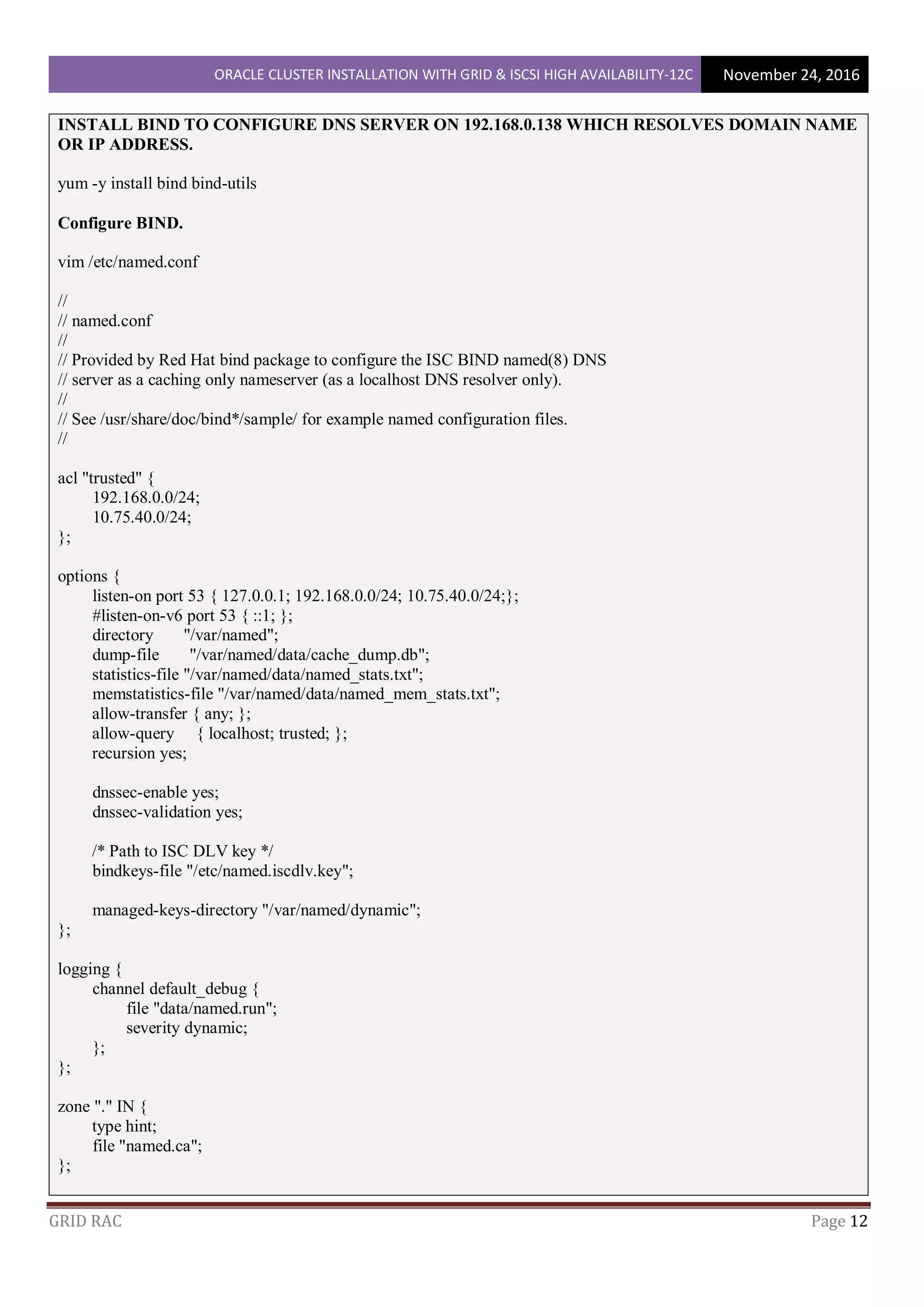

![

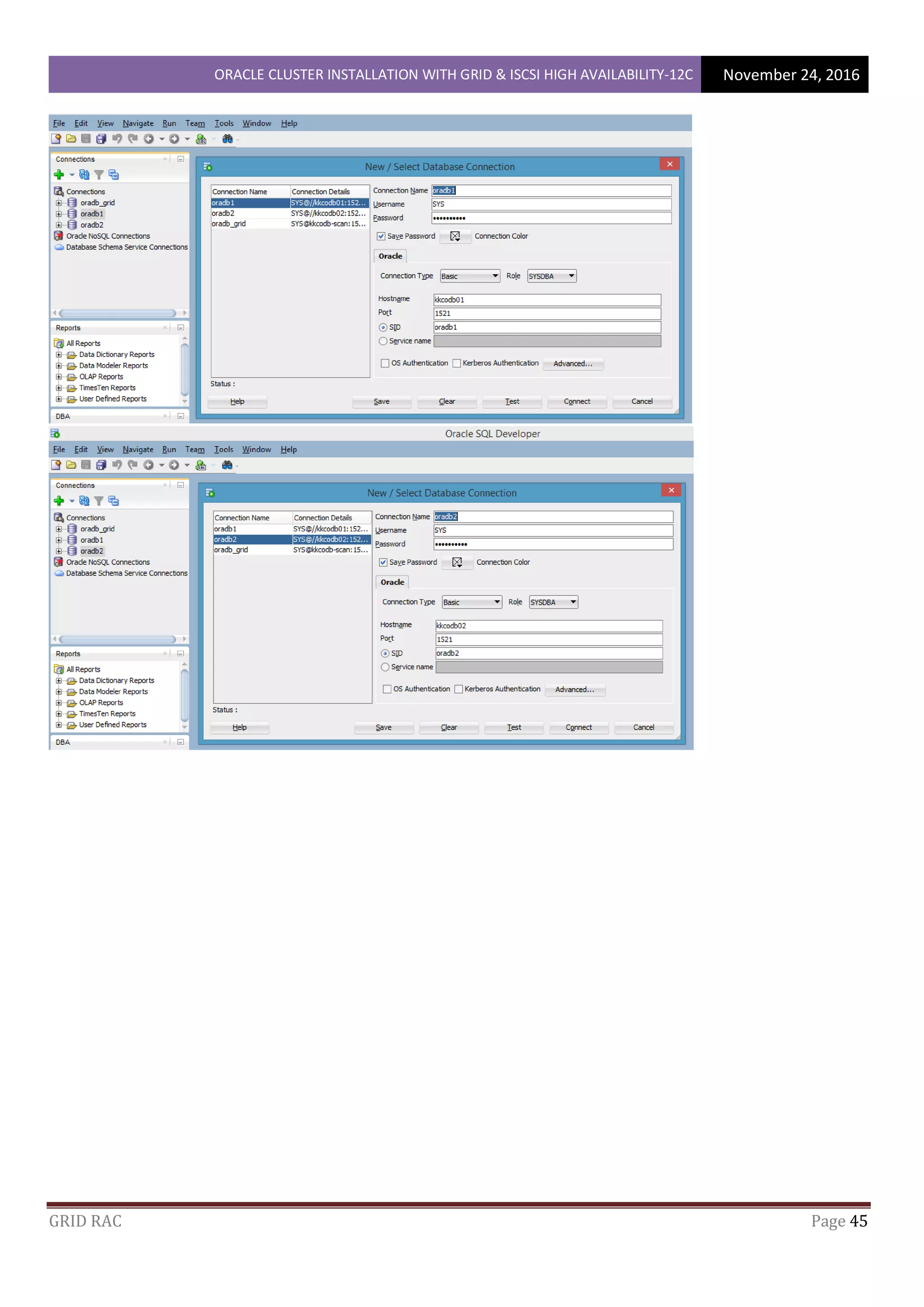

cat >> /etc/profile <<'EOF'

if [ $USER = "oracle" ] || [ $USER = "grid" ]; then

if [ $SHELL = "/bin/ksh" ]; then

ulimit -p 16384

ulimit -n 65536

else

ulimit -u 16384 -n 65536

fi

umask 022

fi

EOF

cat >> /etc/csh.login <<'EOF'

if ( $USER == "oracle" || $USER == "grid" ) then

limit maxproc 16384

limit descriptors 65536

endif

EOF
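Whether the heredoc delimiter is quoted changes what ends up in the target file: with a bare delimiter the shell expands `$USER` and `$SHELL` at the moment the file is written, so the test is frozen to whoever ran `cat`. The sketch below shows the difference on a temporary file; `DEMO_USER` is a hypothetical variable used only for this demonstration.

```shell
tmp=$(mktemp)
DEMO_USER=alice

# Unquoted delimiter: $DEMO_USER is expanded while the file is written.
cat > "$tmp" <<EOF
if [ $DEMO_USER = "oracle" ]; then echo match; fi
EOF
unquoted=$(cat "$tmp")

# Quoted delimiter: the text is written literally and expanded at login time.
cat > "$tmp" <<'EOF'
if [ $DEMO_USER = "oracle" ]; then echo match; fi
EOF
quoted=$(cat "$tmp")

rm -f "$tmp"
echo "unquoted: $unquoted"
echo "quoted:   $quoted"
```

For files such as /etc/profile, which must evaluate the variables for each user at login, the literal (quoted) form is the one that behaves as intended.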

Run shutdown -r now on both nodes.

DOWNLOADING ORACLE DATABASE AND GRID INFRASTRUCTURE SOFTWARE

Download Oracle Database 12c Release 1 Grid Infrastructure (12.1.0.2.0) for Linux x86-64:

Download – linuxamd64_12102_grid_1of2.zip

Download – linuxamd64_12102_grid_2of2.zip

Downloading and installing Oracle Database software

Download Oracle Database 12c Release 1 (12.1.0.2.0) for Linux x86-64:

Download – linuxamd64_12102_database_1of2.zip

Download – linuxamd64_12102_database_2of2.zip

Copy the zip files to the /tmp directory on the kkcodb01 server using WinSCP.

As the root user:

cd /tmp

chmod +x *.zip

for i in /tmp/linuxamd64_12102_grid_*.zip; do unzip "$i" -d /home/grid/stage; done

for i in /tmp/linuxamd64_12102_database_*.zip; do unzip "$i" -d /home/oracle/stage; done](https://image.slidesharecdn.com/oracleclusterinstallationwithgridiscsi-200213033147/75/Oracle-cluster-installation-with-grid-and-iscsi-11-2048.jpg)
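Because each product ships as two archives, an incomplete copy is easy to miss until the installer fails. A small pre-check along the following lines could be run before unzipping; the function name `check_archives` is an assumption for illustration.

```shell
# Usage: check_archives DIR FILE...
# Prints found/missing for each file and returns 1 if any is missing.
check_archives() {
    dir=$1
    shift
    rc=0
    for f in "$@"; do
        if [ -f "$dir/$f" ]; then
            echo "found: $f"
        else
            echo "missing: $f"
            rc=1
        fi
    done
    return $rc
}
```

For example, `check_archives /tmp linuxamd64_12102_grid_1of2.zip linuxamd64_12102_grid_2of2.zip` returns a non-zero status if either part of the Grid download is absent.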

![

perms = true,

rsh = "/usr/bin/ssh -p 22 -o StrictHostKeyChecking=no"

service lsyncd start

chkconfig lsyncd on

mkdir -p /var/log/lsyncd

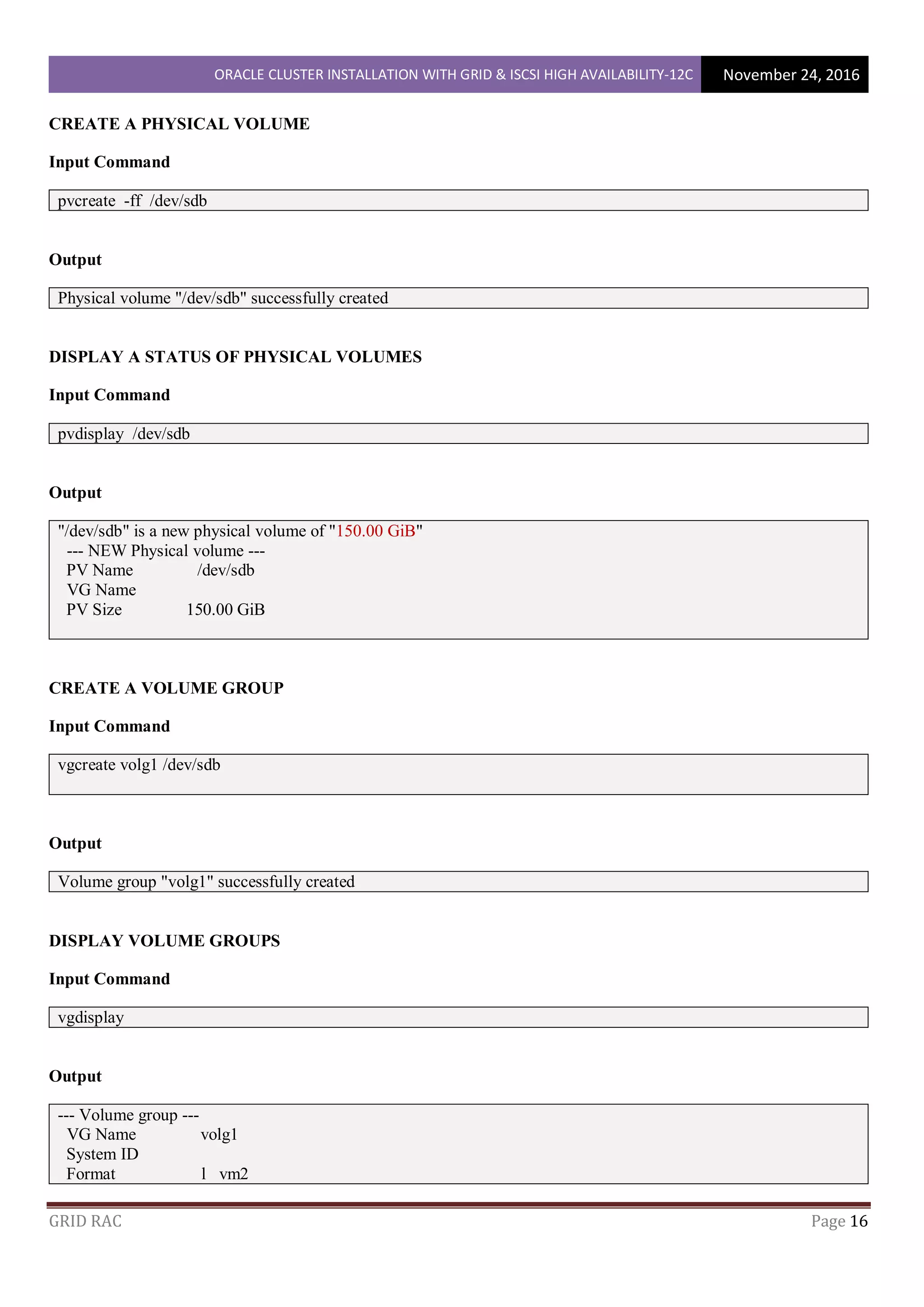

CONFIGURING iSCSI SERVER (10.75.40.30 / 192.168.0.30)

groupadd -g 1000 oinstall

groupadd -g 1200 dba

useradd -u 1100 -g dba -G oinstall grid

useradd -u 1300 -g dba -G oinstall oracle

passwd grid

passwd oracle

mkdir -p /u01/VM/iSCSI_shares/shared_1

mkdir -p /u01/VM/iSCSI_shares/shared_2

mkdir -p /u01/VM/iSCSI_shares/shared_3

chown grid:dba /u01/VM/iSCSI_shares/shared_1

chown grid:dba /u01/VM/iSCSI_shares/shared_2

chown grid:dba /u01/VM/iSCSI_shares/shared_3

chmod +x /u01/VM/iSCSI_shares/shared_1

chmod +x /u01/VM/iSCSI_shares/shared_2

chmod +x /u01/VM/iSCSI_shares/shared_3

CONFIGURE ISCSI TARGET

Storage shared over the network is called an iSCSI target; a client that connects to an iSCSI target is called an iSCSI initiator.

Install administration tools.

yum -y install scsi-target-utils

Configure iSCSI Target

For example, create disk images under the /u01/VM/iSCSI_shares directory and set them as shared disks.

dd if=/dev/zero of=/u01/VM/iSCSI_shares/shared_1/disk01.img count=0 bs=1 seek=50G

dd if=/dev/zero of=/u01/VM/iSCSI_shares/shared_2/disk02.img count=0 bs=1 seek=50G

dd if=/dev/zero of=/u01/VM/iSCSI_shares/shared_3/disk03.img count=0 bs=1 seek=50G](https://image.slidesharecdn.com/oracleclusterinstallationwithgridiscsi-200213033147/75/Oracle-cluster-installation-with-grid-and-iscsi-19-2048.jpg)
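The `count=0 bs=1 seek=...` form writes no data: it only extends the file to the seek offset, which typically yields a sparse image that takes little real disk space until blocks are written. The sketch below reproduces this on a small temporary file, assuming GNU `dd` and `stat`; the 10M size and the variable names are chosen only for the demonstration.

```shell
img=$(mktemp)

# Extend the file to 10 MiB without writing any data blocks.
dd if=/dev/zero of="$img" bs=1 count=0 seek=10M 2>/dev/null

# Apparent size in bytes versus blocks actually allocated on disk.
apparent=$(stat -c '%s' "$img")
blocks=$(stat -c '%b' "$img")
echo "apparent size: $apparent bytes"
echo "allocated blocks: $blocks"

rm -f "$img"
```

The apparent size is the full 10485760 bytes, while the allocated block count depends on the filesystem and is usually far smaller.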

![

Show established session

iscsiadm -m session -o show

Show partitions

cat /proc/partitions

fdisk -l | grep Disk

The new devices provided by the target appear as sdb, sdc, and sdd.

yum -y install parted

Create a label

parted --script /dev/sdb "mklabel msdos"

parted --script /dev/sdc "mklabel msdos"

parted --script /dev/sdd "mklabel msdos"

Create a partition

parted --script /dev/sdb "mkpart primary 0% 100%"

parted --script /dev/sdc "mkpart primary 0% 100%"

parted --script /dev/sdd "mkpart primary 0% 100%"

Format with EXT4

mkfs.ext4 /dev/sdb1

mkfs.ext4 /dev/sdc1

mkfs.ext4 /dev/sdd1

mount /dev/sdb1 /u01

mount /dev/sdc1 /u02

mount /dev/sdd1 /u03

df -hT
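The label, partition, format, and mount steps repeat the same four commands per disk. As a dry-run sketch, the hypothetical helper below (`print_disk_plan` is an assumed name) only prints the commands for each device and mount point pair, so the plan can be reviewed before anything destructive is run.

```shell
# Usage: print_disk_plan DEVICE MOUNTPOINT [DEVICE MOUNTPOINT ...]
# Prints the commands only; nothing is executed against the disks.
print_disk_plan() {
    while [ "$#" -ge 2 ]; do
        dev=$1
        mnt=$2
        shift 2
        echo "parted --script /dev/$dev \"mklabel msdos\""
        echo "parted --script /dev/$dev \"mkpart primary 0% 100%\""
        echo "mkfs.ext4 /dev/${dev}1"
        echo "mount /dev/${dev}1 $mnt"
    done
}

print_disk_plan sdb /u01 sdc /u02 sdd /u03
```

The device names are taken from the listing above; they should be confirmed with `cat /proc/partitions` on each node before running the printed commands.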

vim /etc/rc.local

iscsiadm -m discovery -t sendtargets -p 192.168.0.30

iscsiadm -m node --login

mount /dev/sdb1 /u01

mount /dev/sdc1 /u02

mount /dev/sdd1 /u03

init 6](https://image.slidesharecdn.com/oracleclusterinstallationwithgridiscsi-200213033147/75/Oracle-cluster-installation-with-grid-and-iscsi-21-2048.jpg)
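After the reboot, it is worth confirming that the rc.local entries actually mounted the three volumes. The function below is a minimal sketch that looks up a mount point in /proc/mounts; the name `is_mounted` and its optional second argument (an alternative mounts file, useful for testing) are assumptions for illustration.

```shell
# Usage: is_mounted MOUNTPOINT [MOUNTS_FILE]
# Returns 0 if MOUNTPOINT appears as a mount target, 1 otherwise.
is_mounted() {
    target=$1
    mounts_file=${2:-/proc/mounts}
    # Field 2 of each line in /proc/mounts is the mount point.
    awk -v target="$target" '$2 == target { found = 1 } END { exit !found }' "$mounts_file"
}
```

A loop such as `for m in /u01 /u02 /u03; do is_mounted "$m" || echo "$m is not mounted"; done` then reports any volume that failed to come up.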