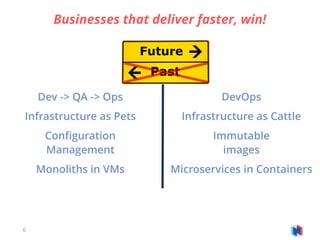

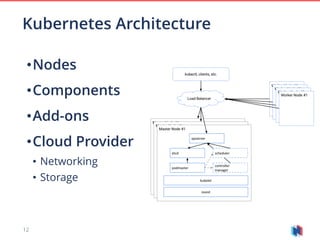

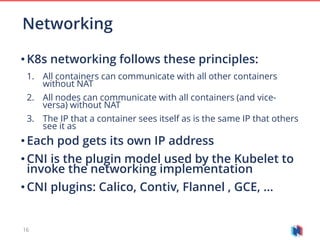

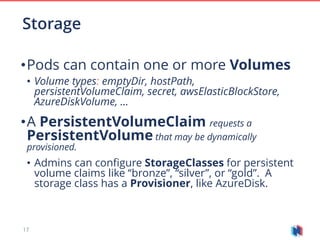

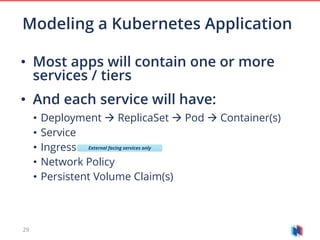

Kubernetes is an open-source container orchestration platform that helps enterprises develop and run software more efficiently by automating how containerized, microservice-based applications are deployed, scaled, and managed. It is scalable and extensible, and it supports a range of application architectures while simplifying deployment and management. Enterprises are encouraged to adopt a Kubernetes strategy to streamline operations and improve productivity.