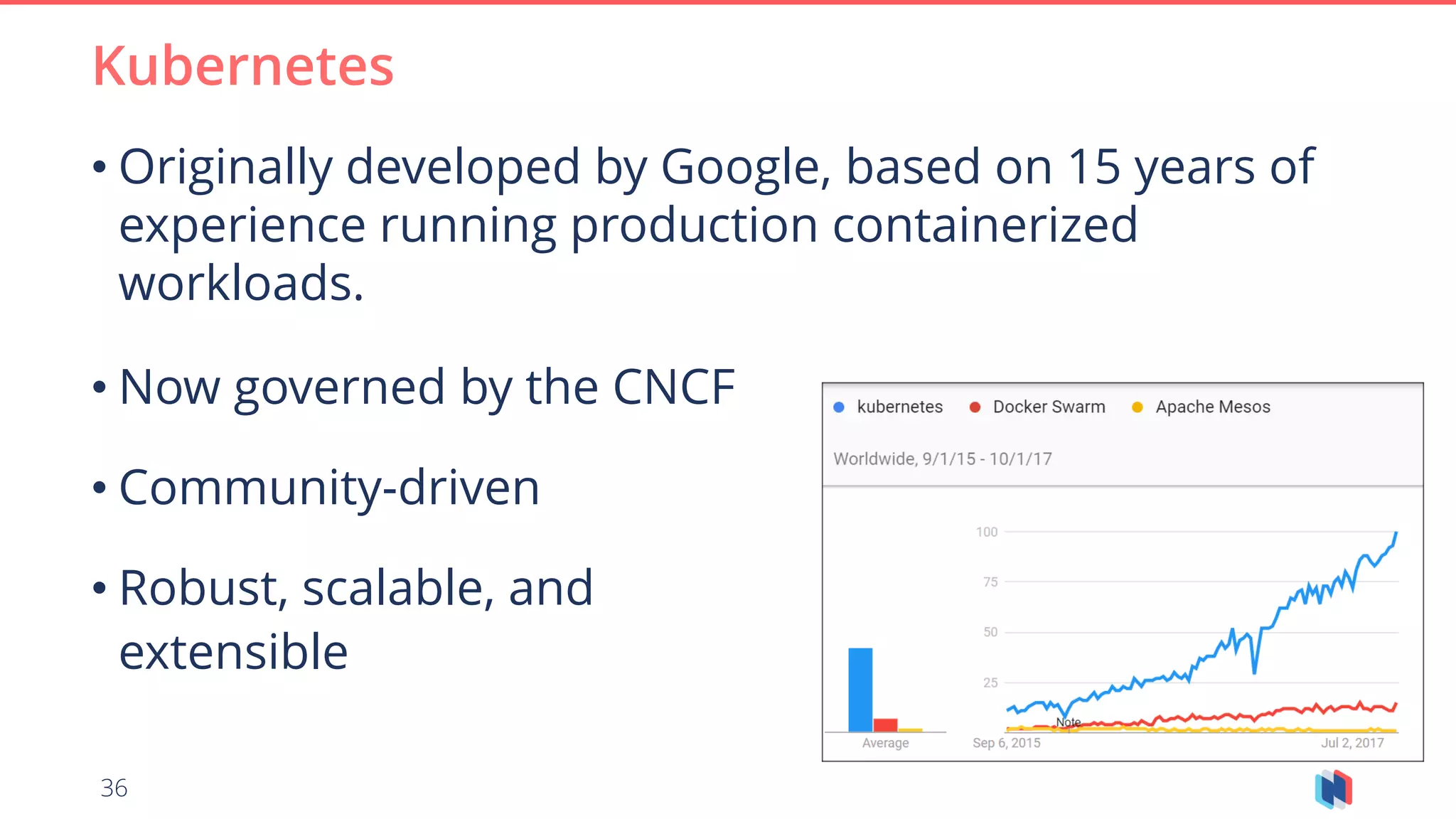

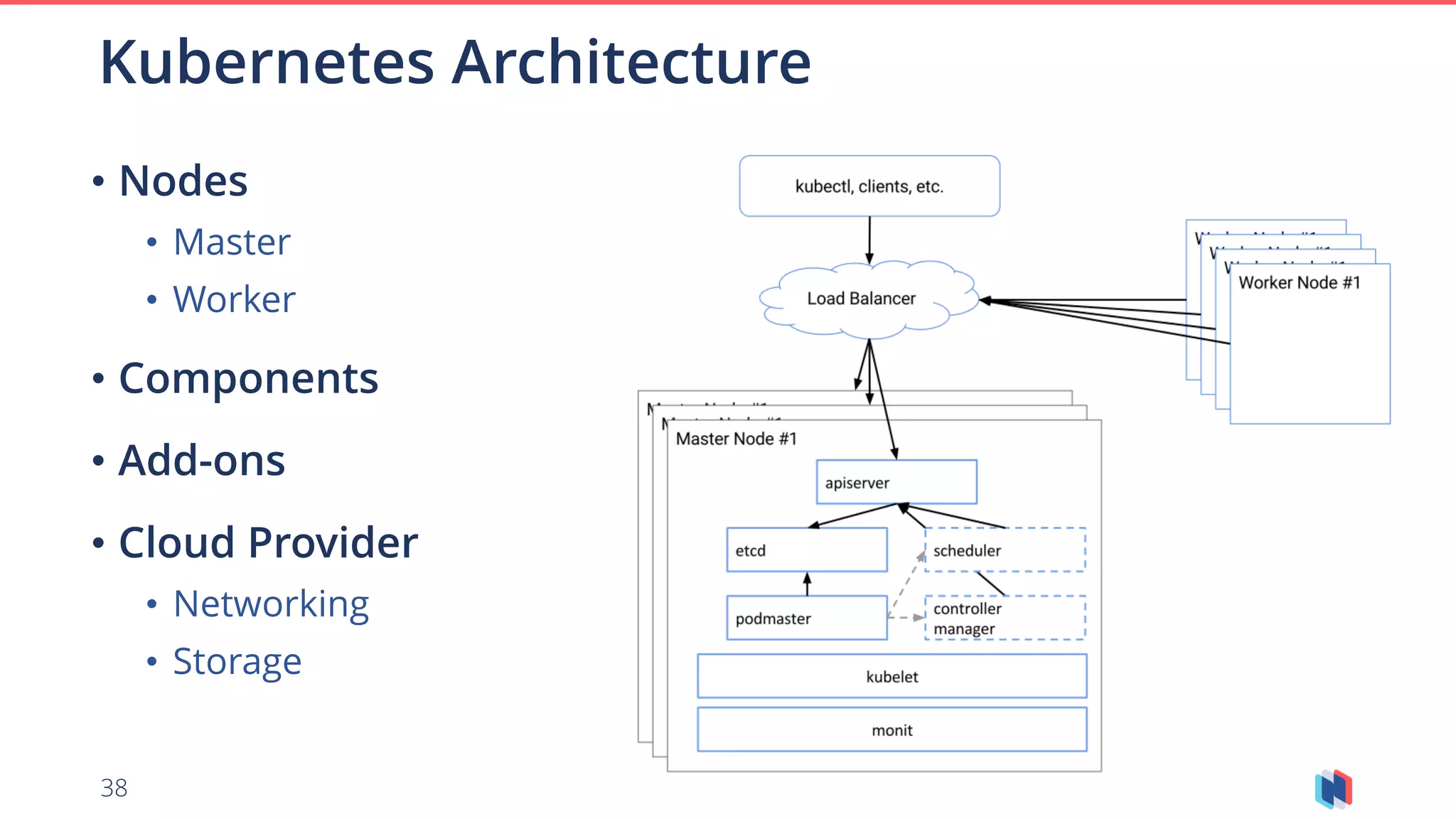

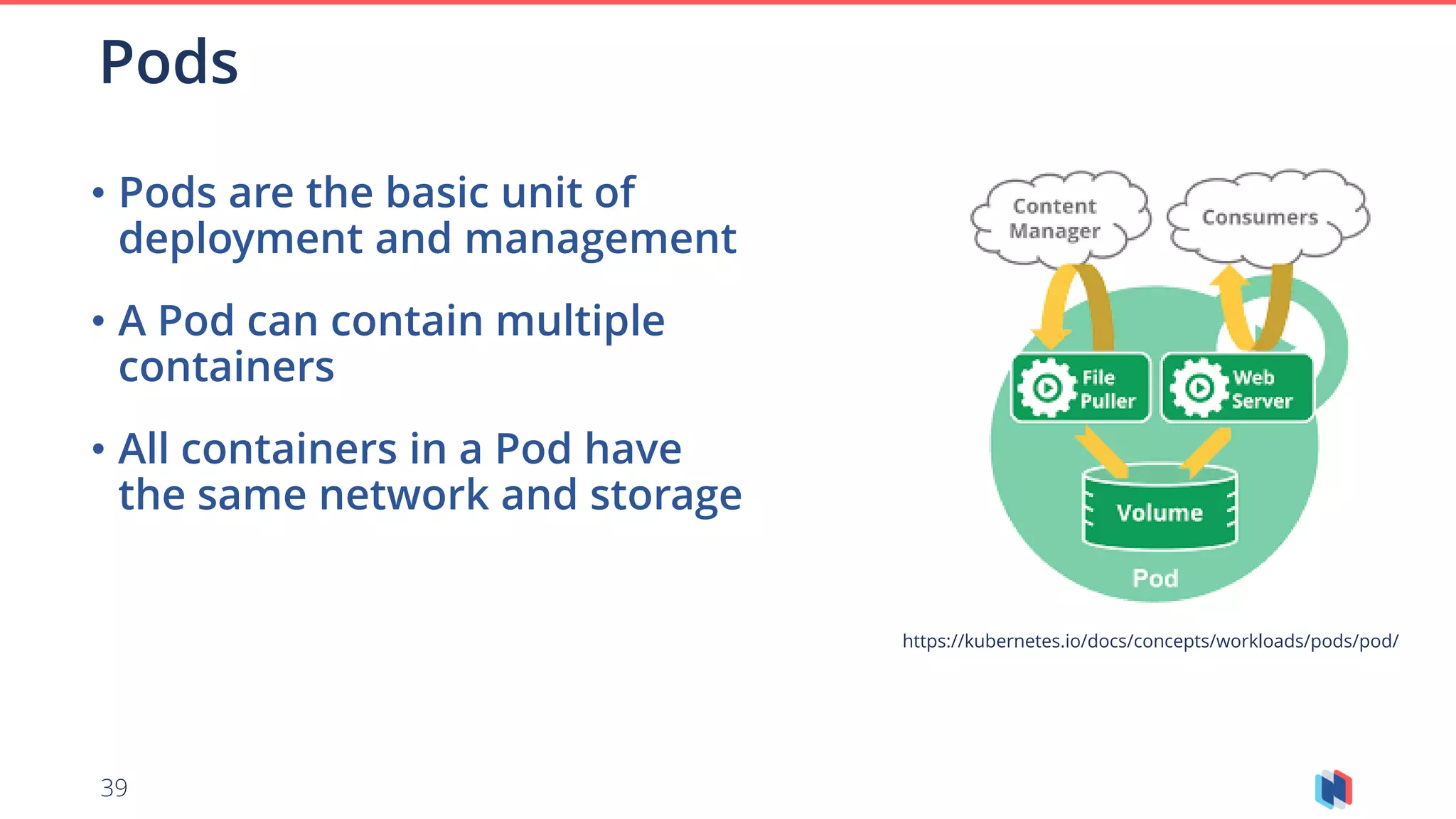

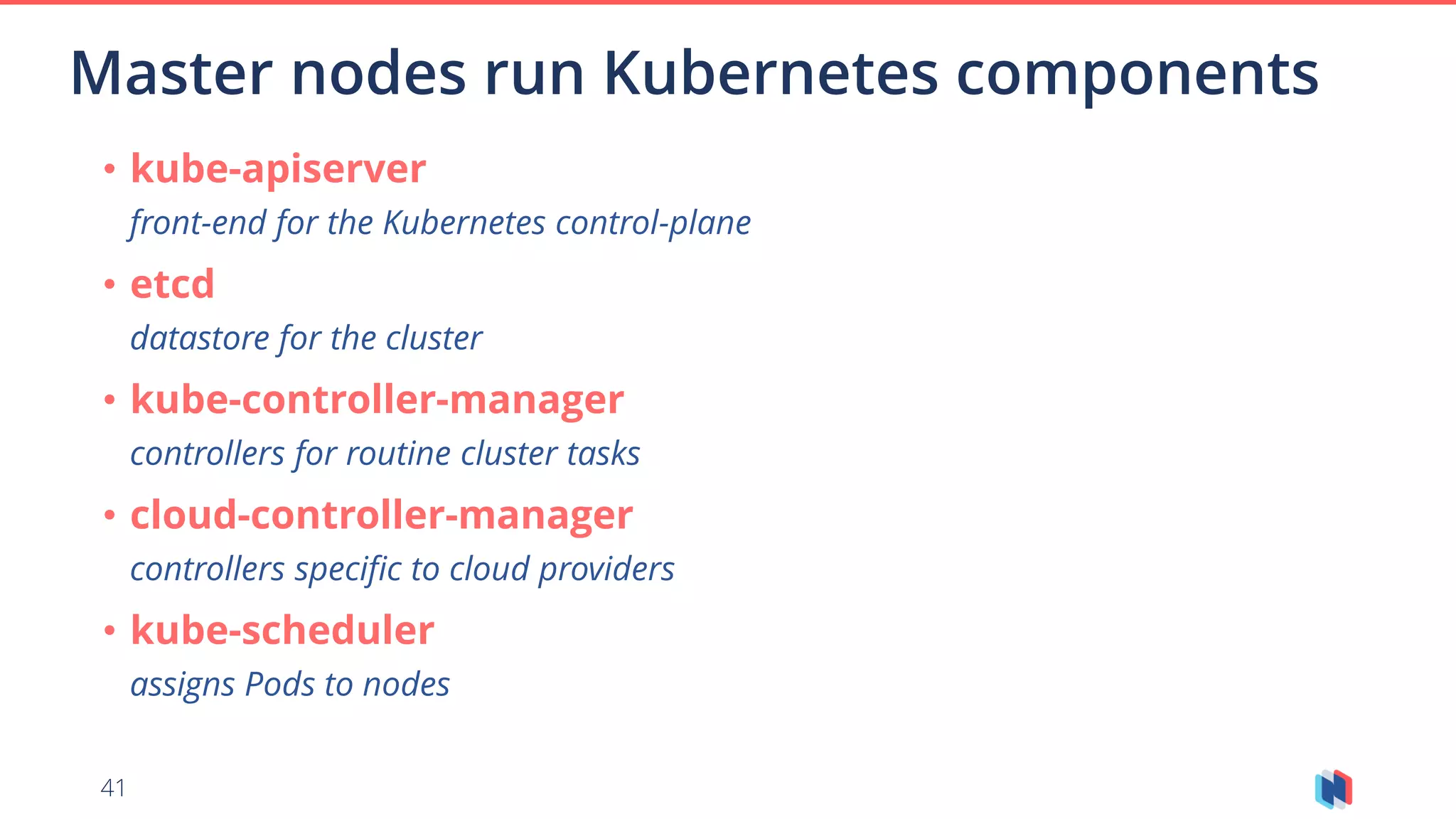

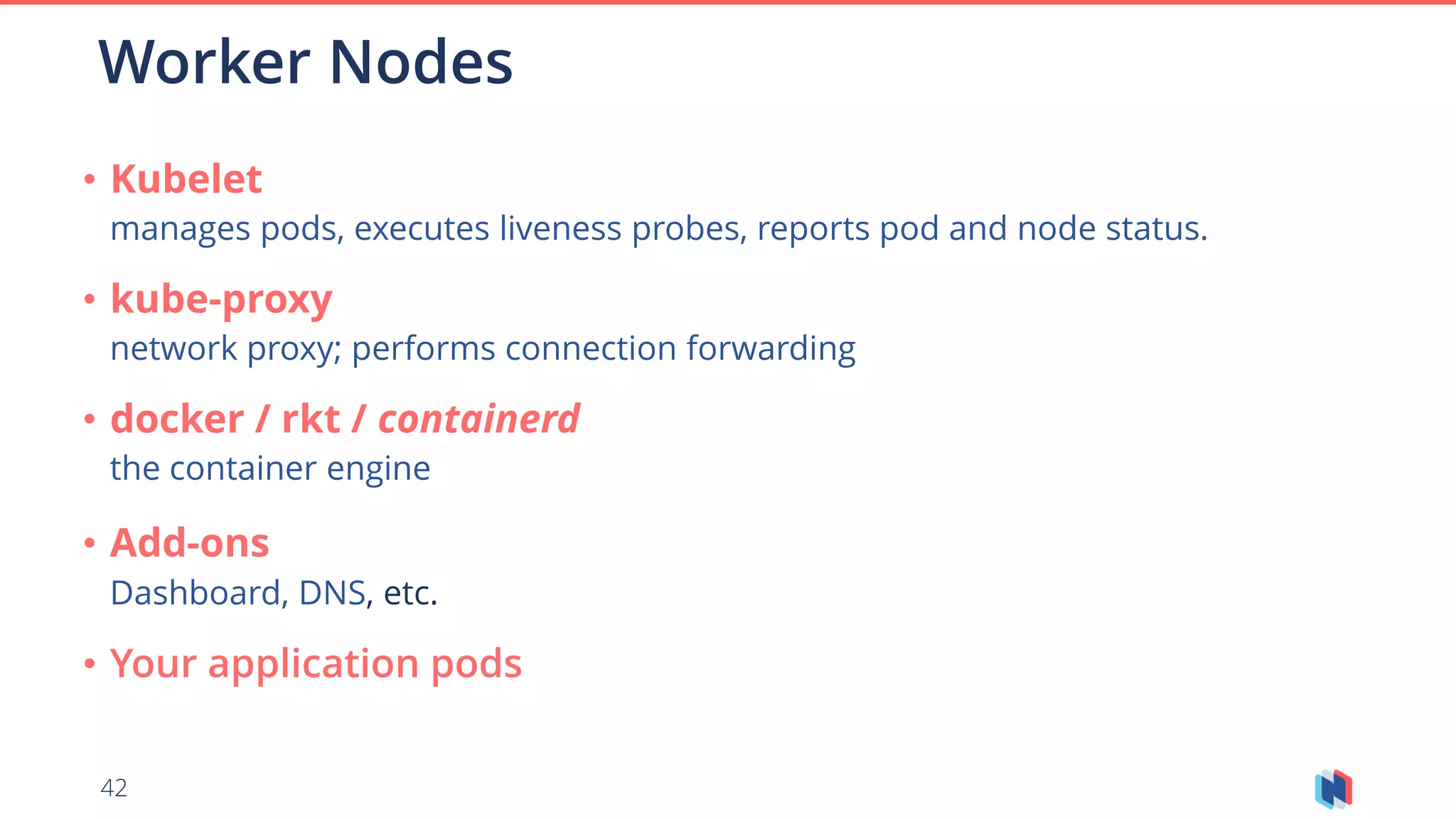

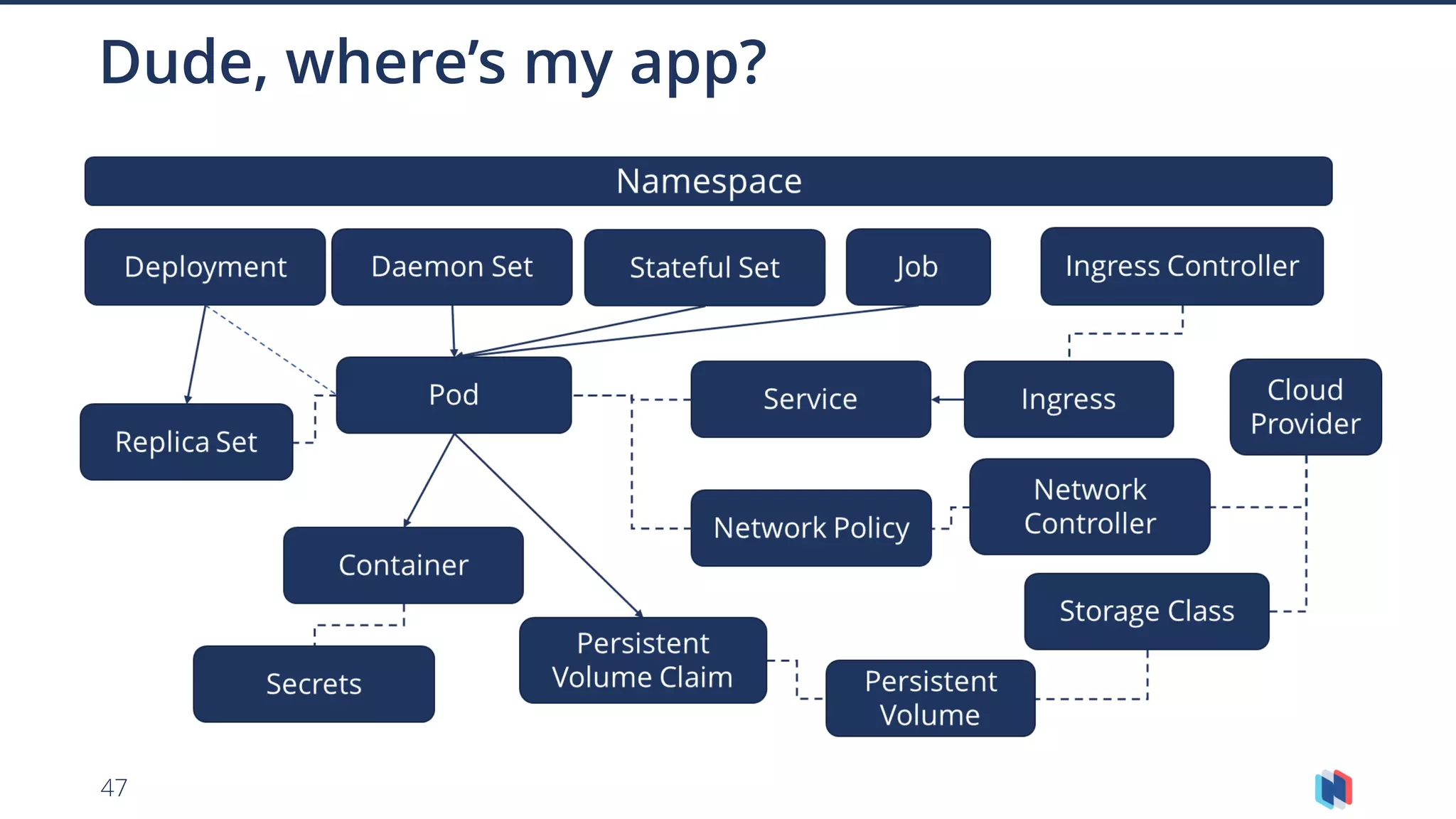

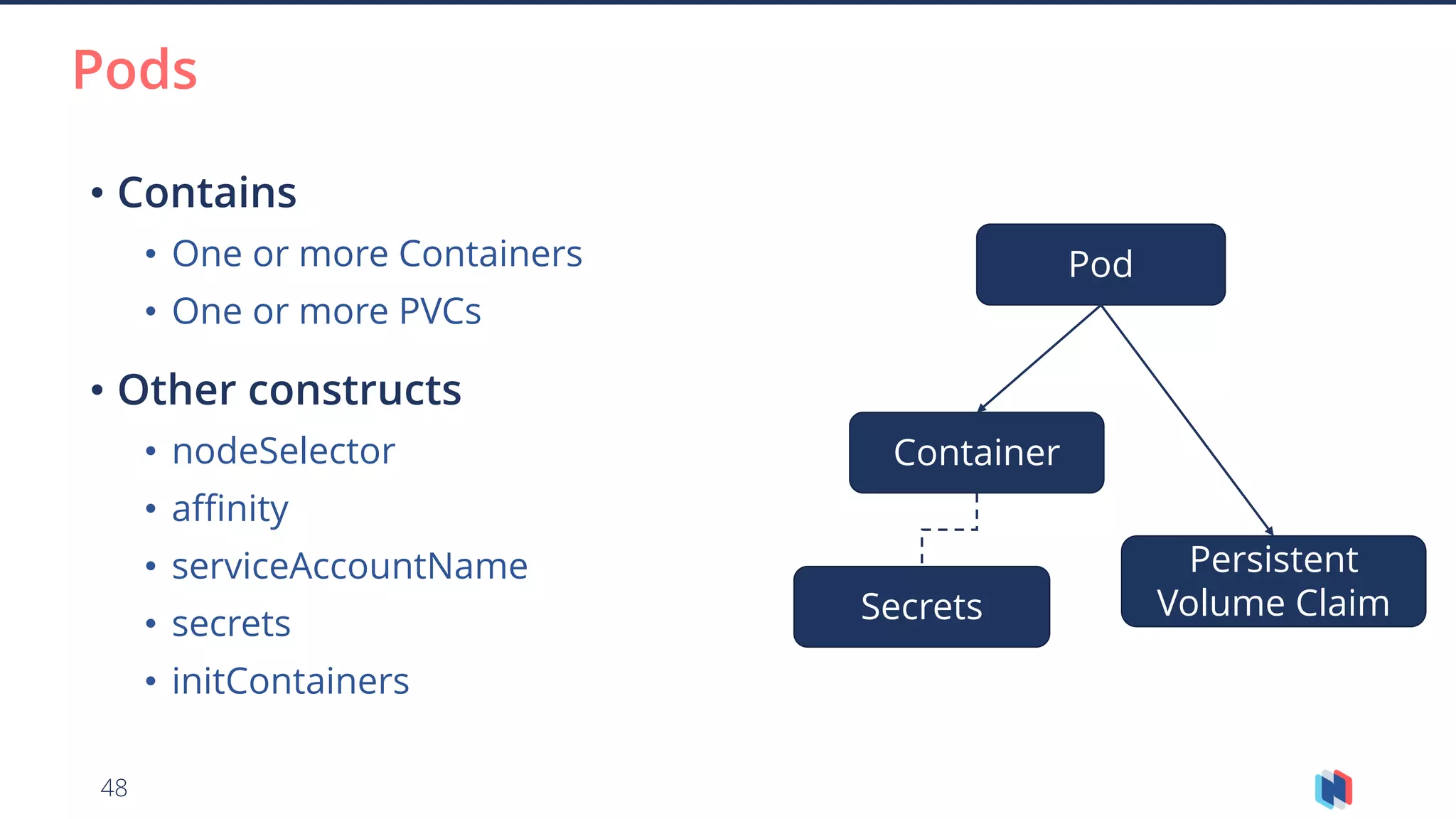

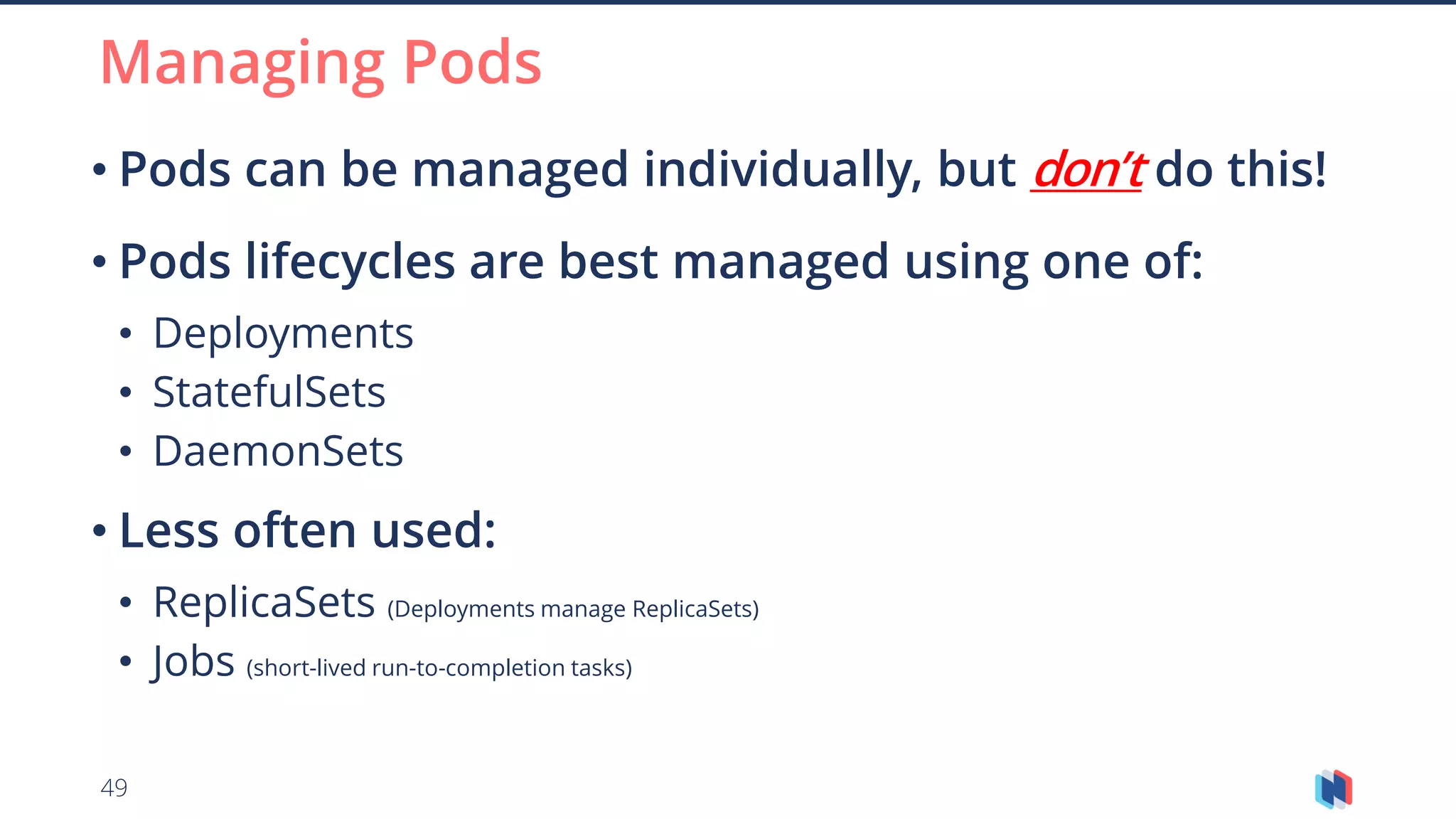

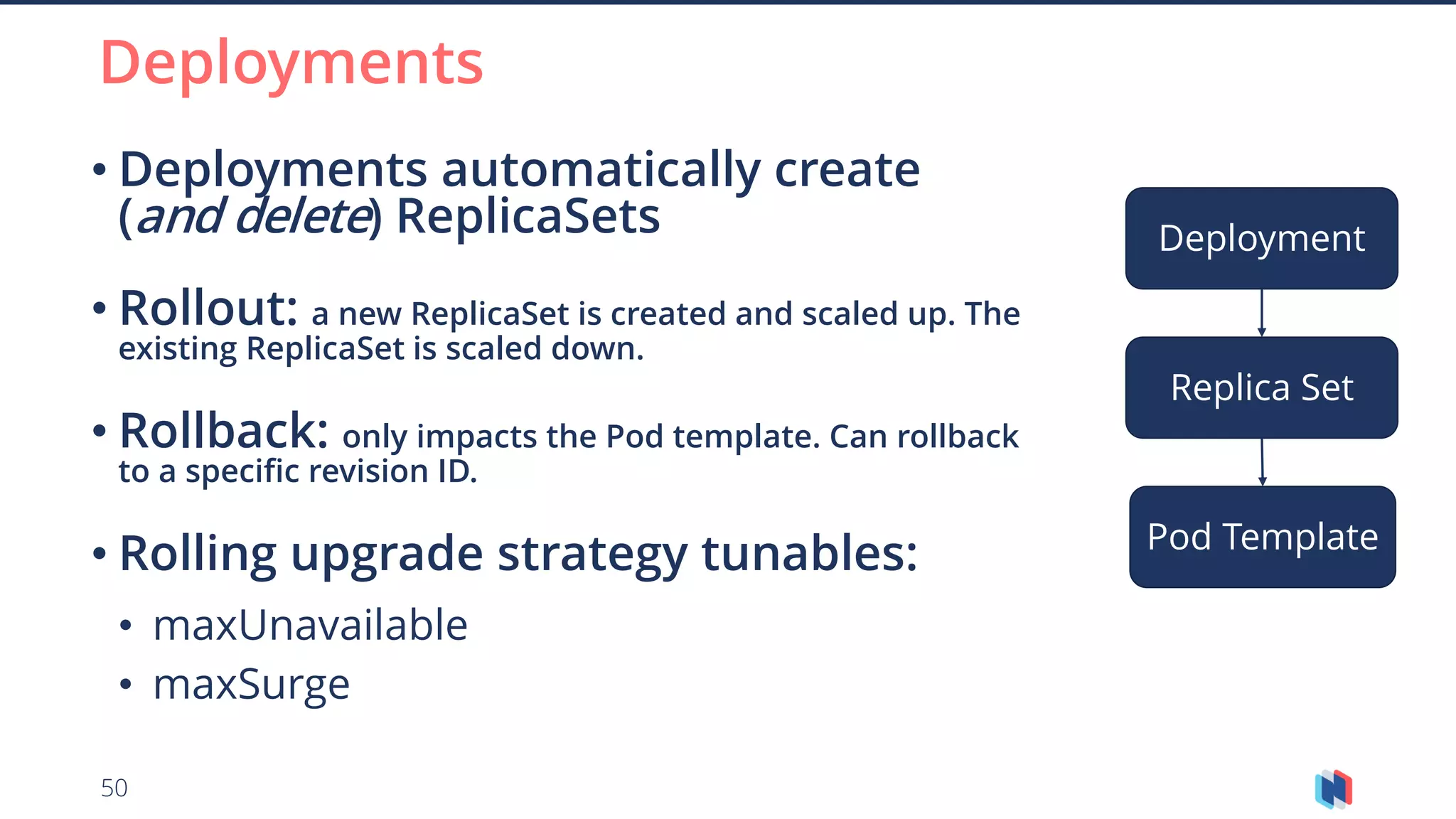

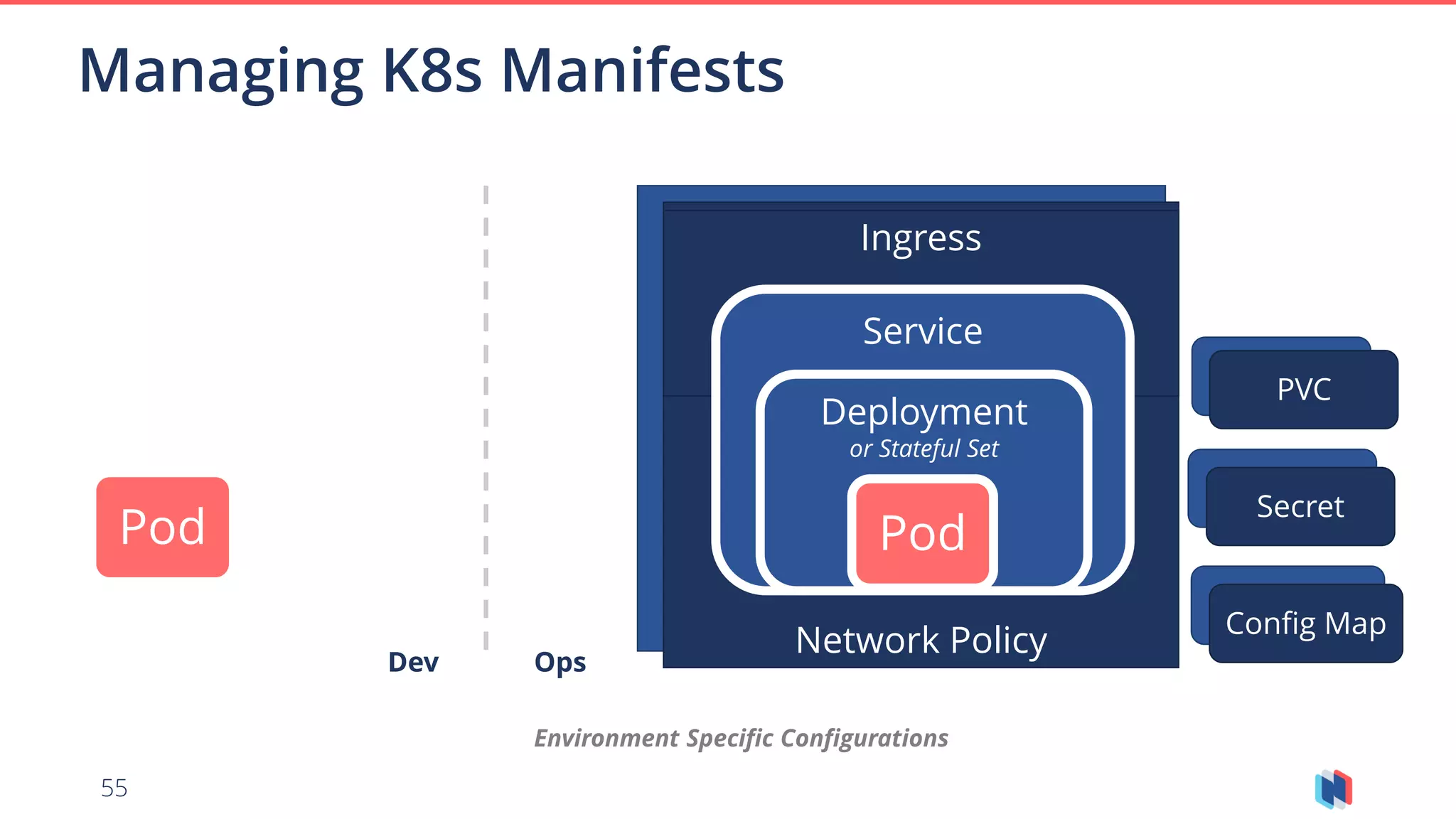

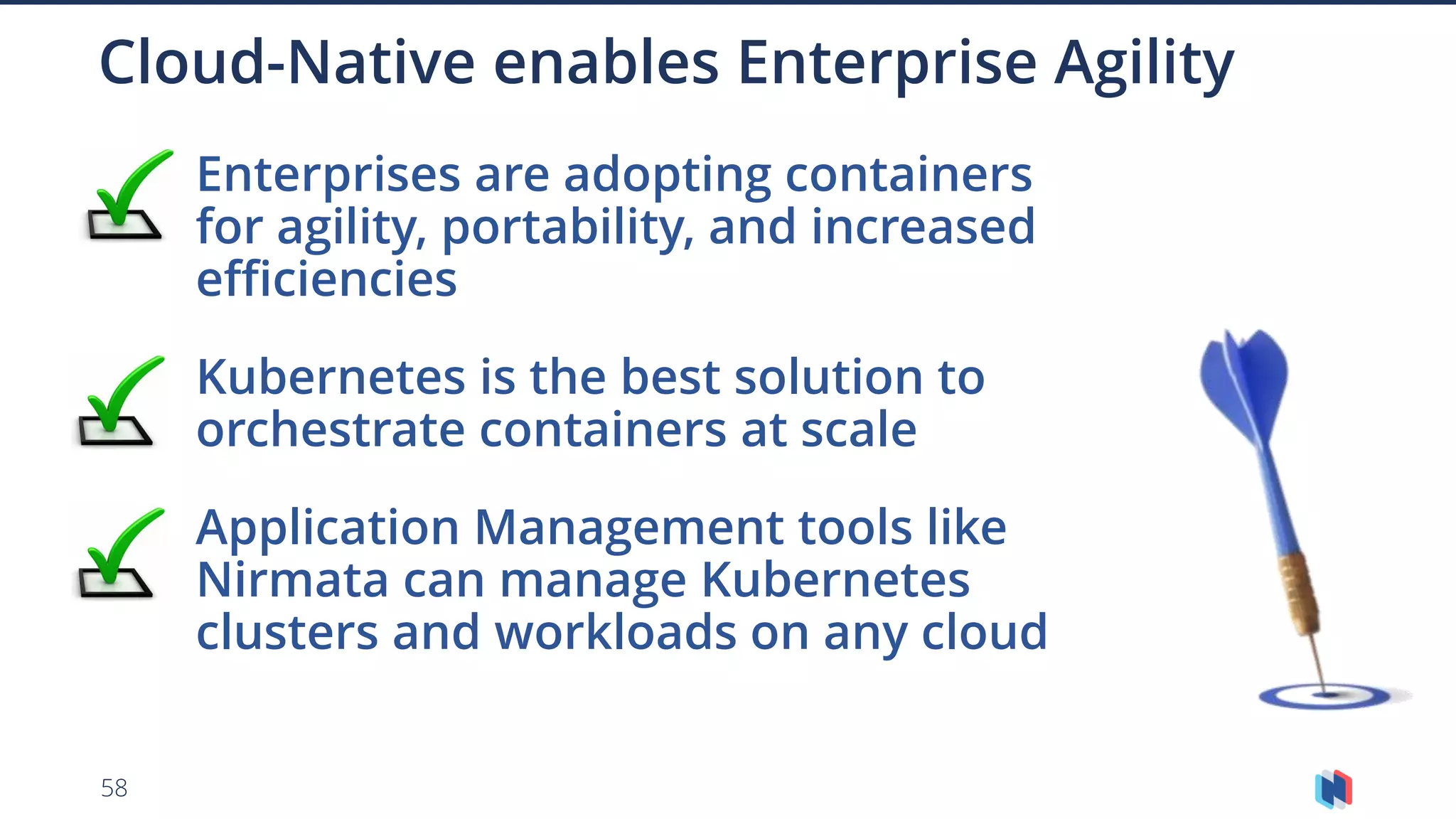

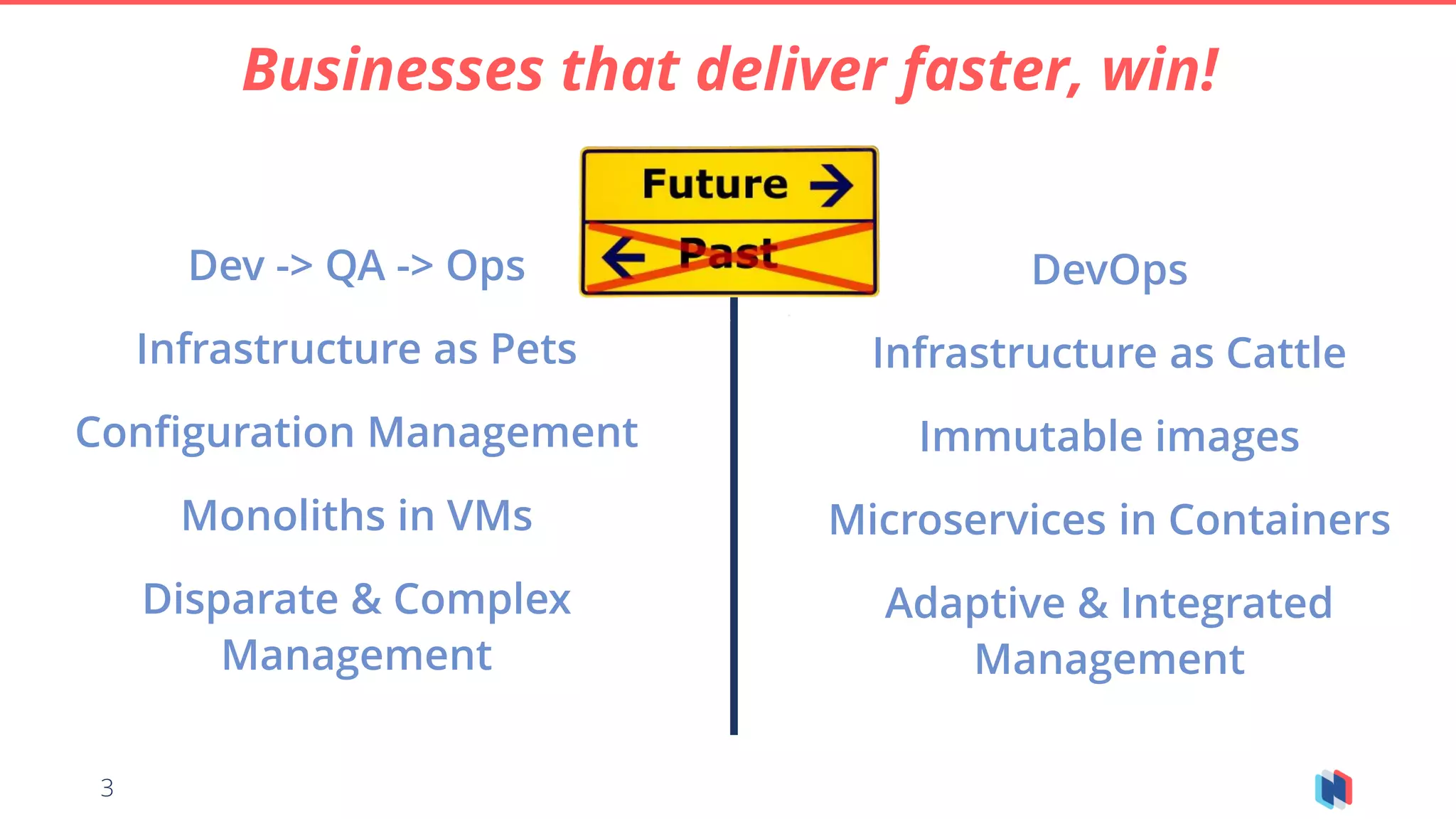

This document provides an overview of cloud-native concepts and technologies such as containers, microservices, and Kubernetes. It discusses how containers package applications and isolate them from one another, using Docker as the example. Microservices are described as a way to build an application as a set of independent, interoperable services. Kubernetes is presented as an open-source system for automating the deployment and management of containerized workloads at scale. The document outlines Kubernetes concepts such as pods, deployments, and services, and how they help development and operations teams manage applications in a cloud-native way.

![Slide 17: Dockerfile](https://image.slidesharecdn.com/azuremeetup-cloudnativeconcepts-may28th2018-180602222553/75/Azure-meetup-cloud-native-concepts-may-28th-2018-17-2048.jpg)

Dockerfile

• Specifies what goes in a container image and how it executes

    FROM alpine:latest
    # Install bash and curl in a single layer; --no-cache leaves no apk index to clean up
    RUN apk add --no-cache bash curl
    # Copy the application scripts into the image and make them executable
    COPY bin /opt/bin
    RUN chmod a+x /opt/bin/*
    # Put the scripts on the PATH
    ENV PATH=$PATH:/opt/bin/
    # Run this script when the container starts
    ENTRYPOINT [ "/opt/bin/run.sh" ]

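![Slide 18: Dockerfile - Tomcat Example](https://image.slidesharecdn.com/azuremeetup-cloudnativeconcepts-may28th2018-180602222553/75/Azure-meetup-cloud-native-concepts-may-28th-2018-18-2048.jpg)

Dockerfile - Tomcat Example

    FROM tomcat:8.0-jre8-alpine
    # key=value is the preferred LABEL form
    LABEL Author="jim@nirmata.com"
    # COPY is sufficient for a local file; ADD is only needed for URLs or archive extraction
    COPY build/libs/hello-1.0.0-SNAPSHOT.war /usr/local/tomcat/webapps/hello.war
    # Tomcat listens on port 8080
    EXPOSE 8080
    # Start Tomcat in the foreground
    CMD ["catalina.sh", "run"]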

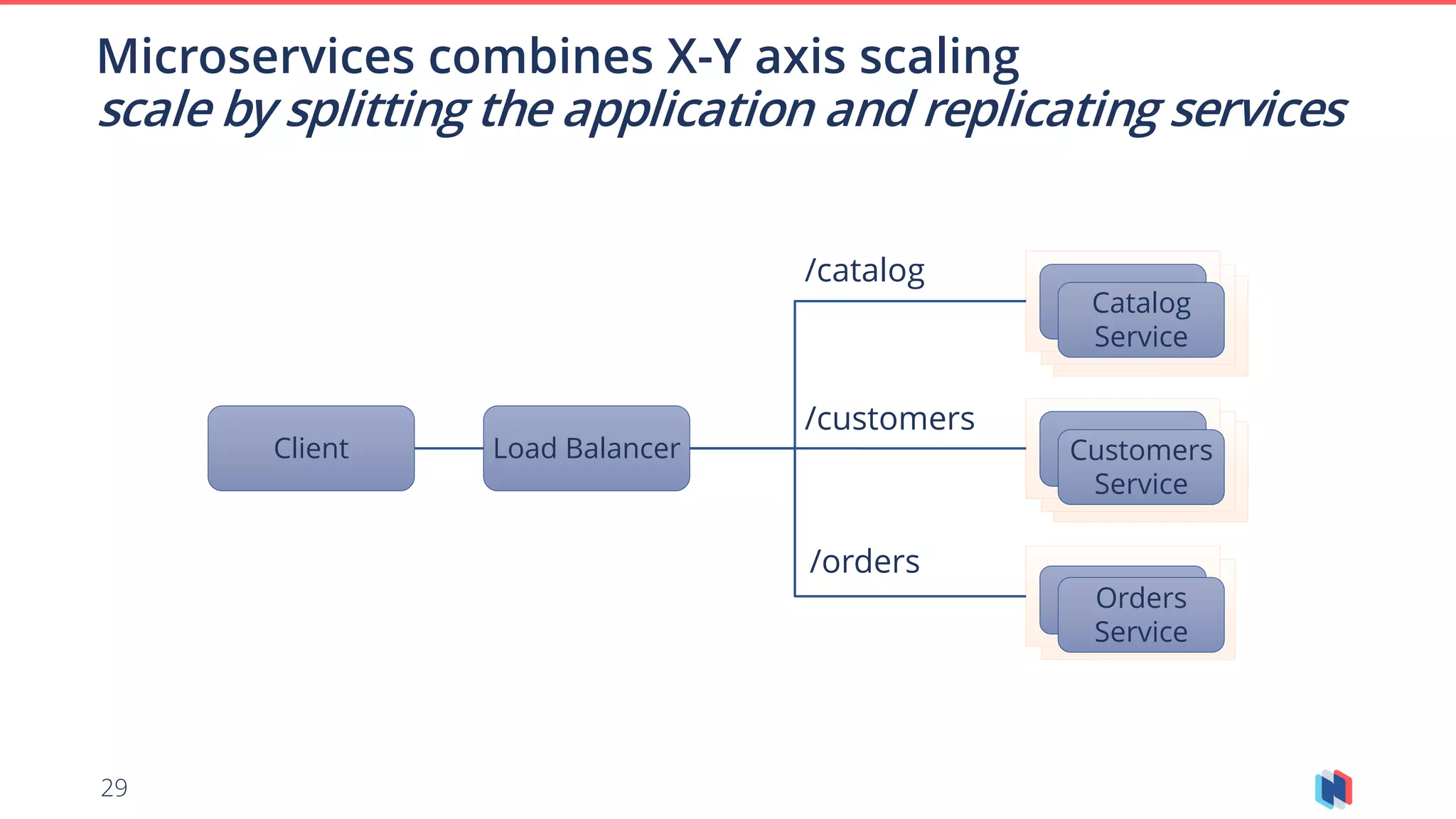

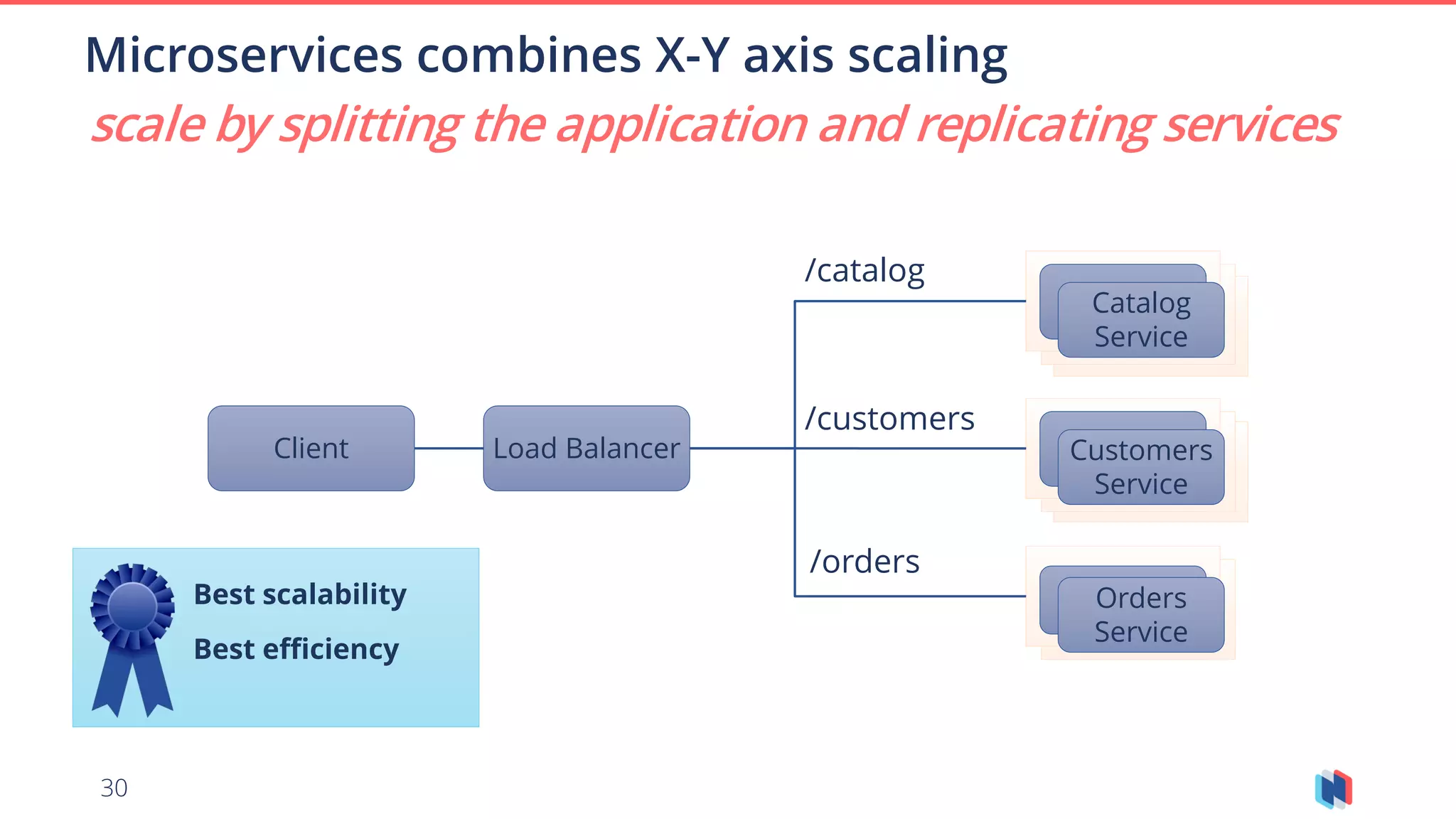

![Slide 28: Z-Axis Scaling](https://image.slidesharecdn.com/azuremeetup-cloudnativeconcepts-may28th2018-180602222553/75/Azure-meetup-cloud-native-concepts-may-28th-2018-28-2048.jpg)

Z-Axis Scaling

Client → Load Balancer → Catalog Service instances, each serving one partition of the data:

• /catalog [A – I]

• /catalog [J – R]

• /catalog [S – Z]

Scale by splitting the data.
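The routing decision on the Z-axis scaling slide can be sketched in a few lines of Python. This is only an illustration, assuming the load balancer picks a Catalog Service instance from the first letter of the requested item name. The shard names (`catalog-a-i` and so on) and the `route` function are hypothetical and do not come from the slides.

```python
# Hypothetical shard table: inclusive first-letter ranges mapped to
# illustrative instance names (not taken from the slides).
SHARDS = [
    ("A", "I", "catalog-a-i"),
    ("J", "R", "catalog-j-r"),
    ("S", "Z", "catalog-s-z"),
]


def route(item_name: str) -> str:
    """Return the shard that owns item_name, based on its first letter."""
    if not item_name:
        raise ValueError("item name must be non-empty")
    first = item_name[0].upper()
    for low, high, shard in SHARDS:
        if low <= first <= high:
            return shard
    # Keys outside A-Z (digits, symbols) have no owner in this sketch.
    raise ValueError(f"no shard covers key {item_name!r}")
```

Each instance only ever sees its own slice of the catalog, which is what distinguishes this from running identical copies behind the load balancer. A real deployment would also need a policy for keys outside the A to Z ranges, which this sketch simply rejects.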