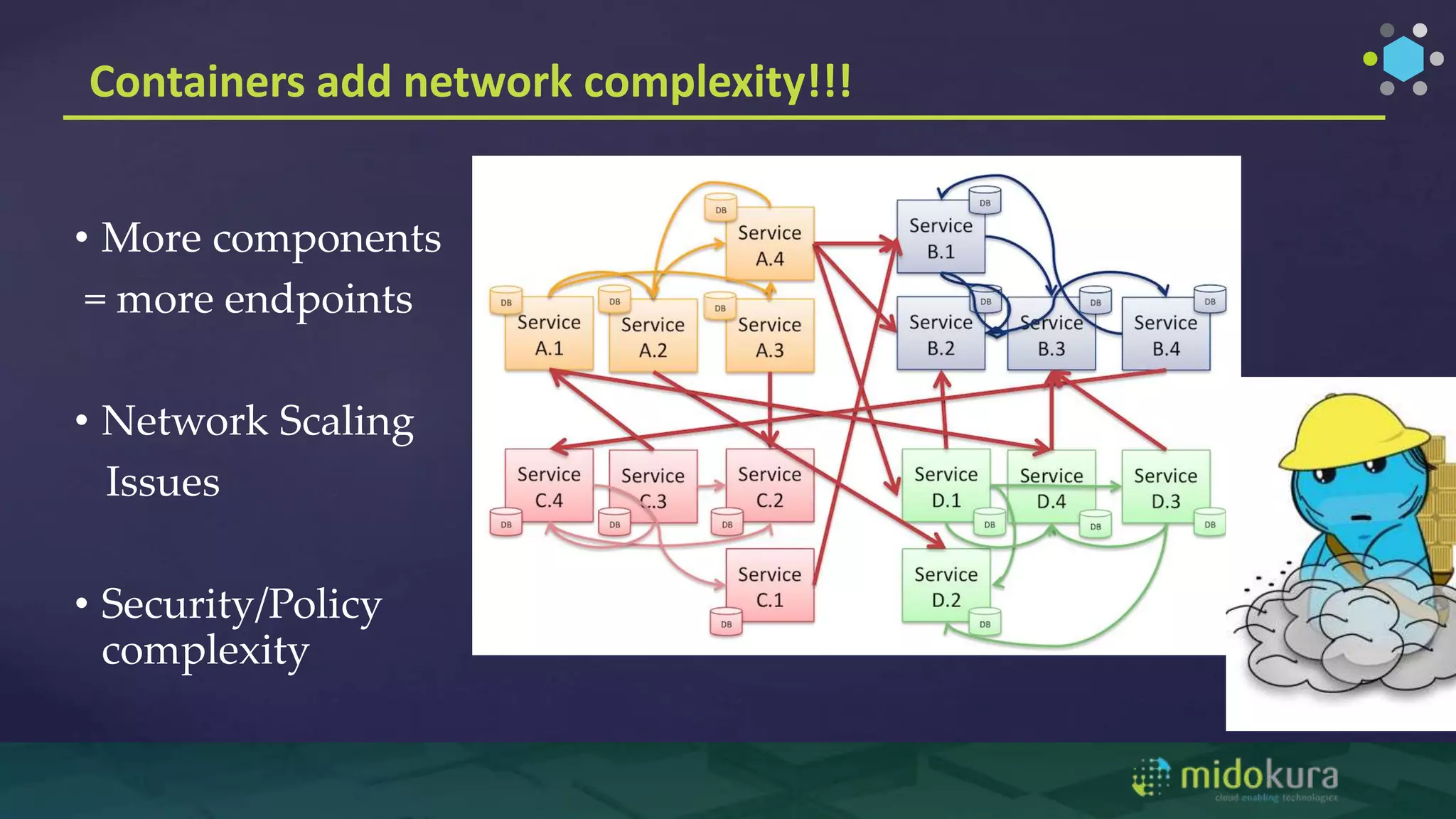

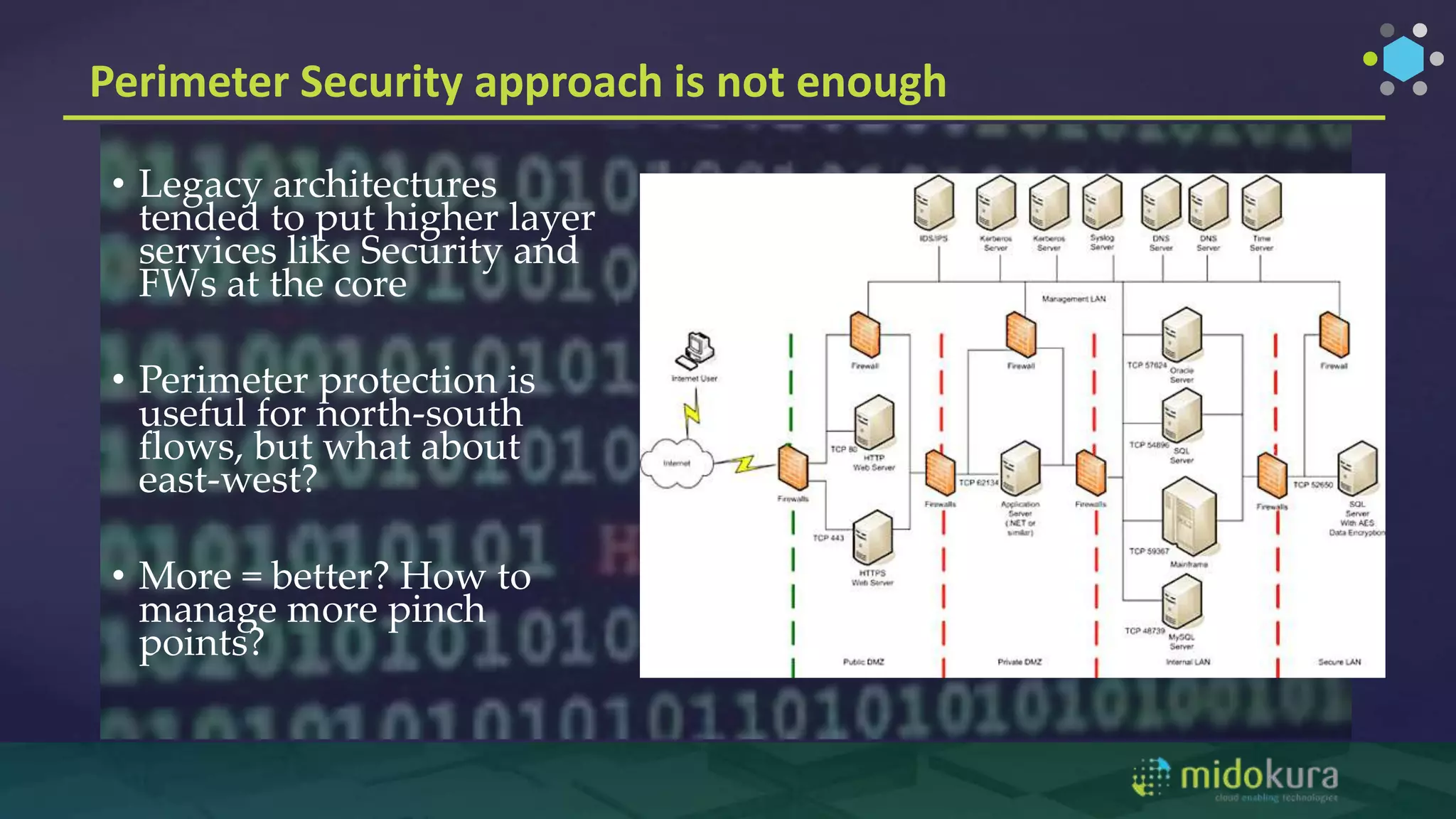

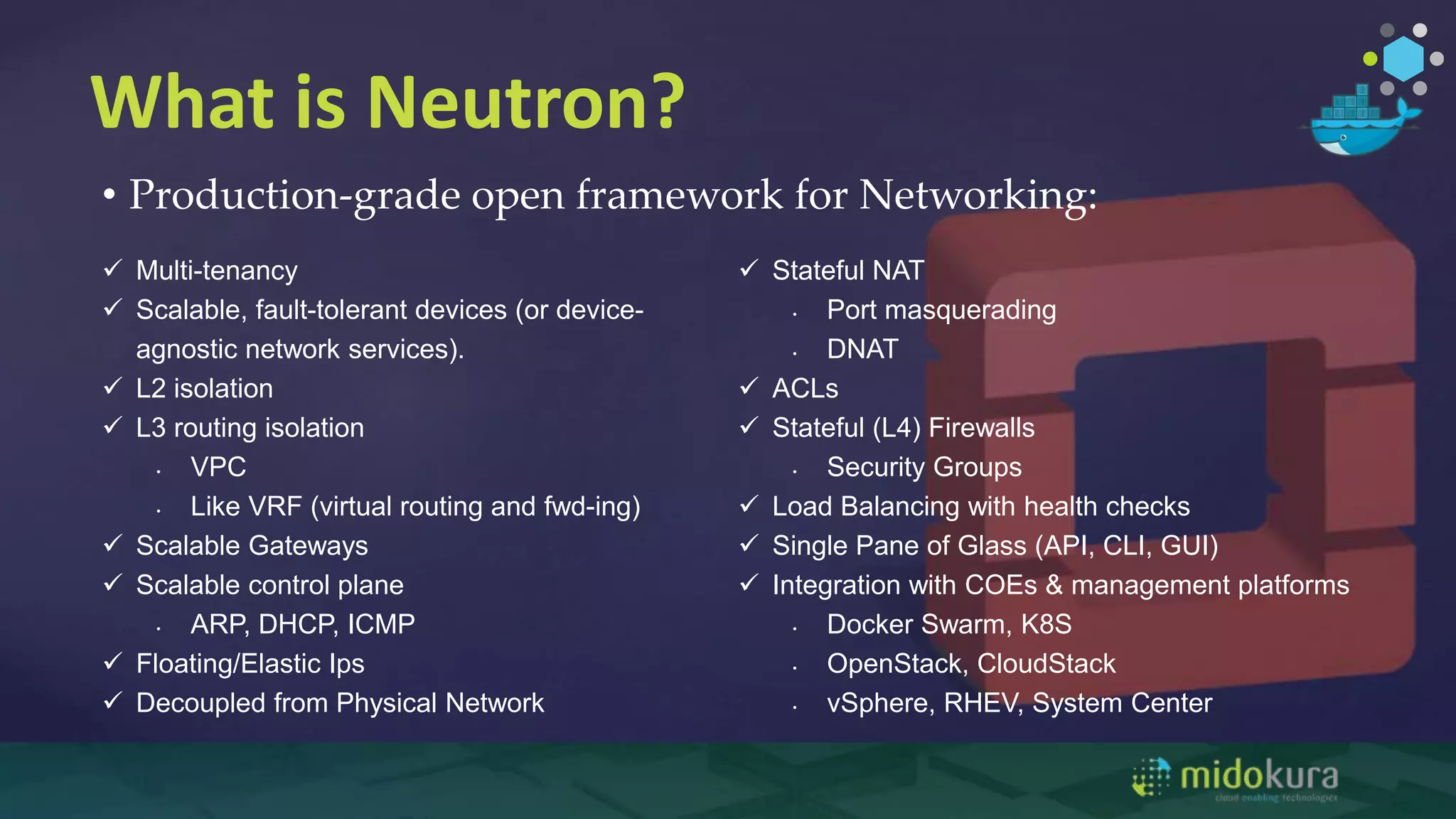

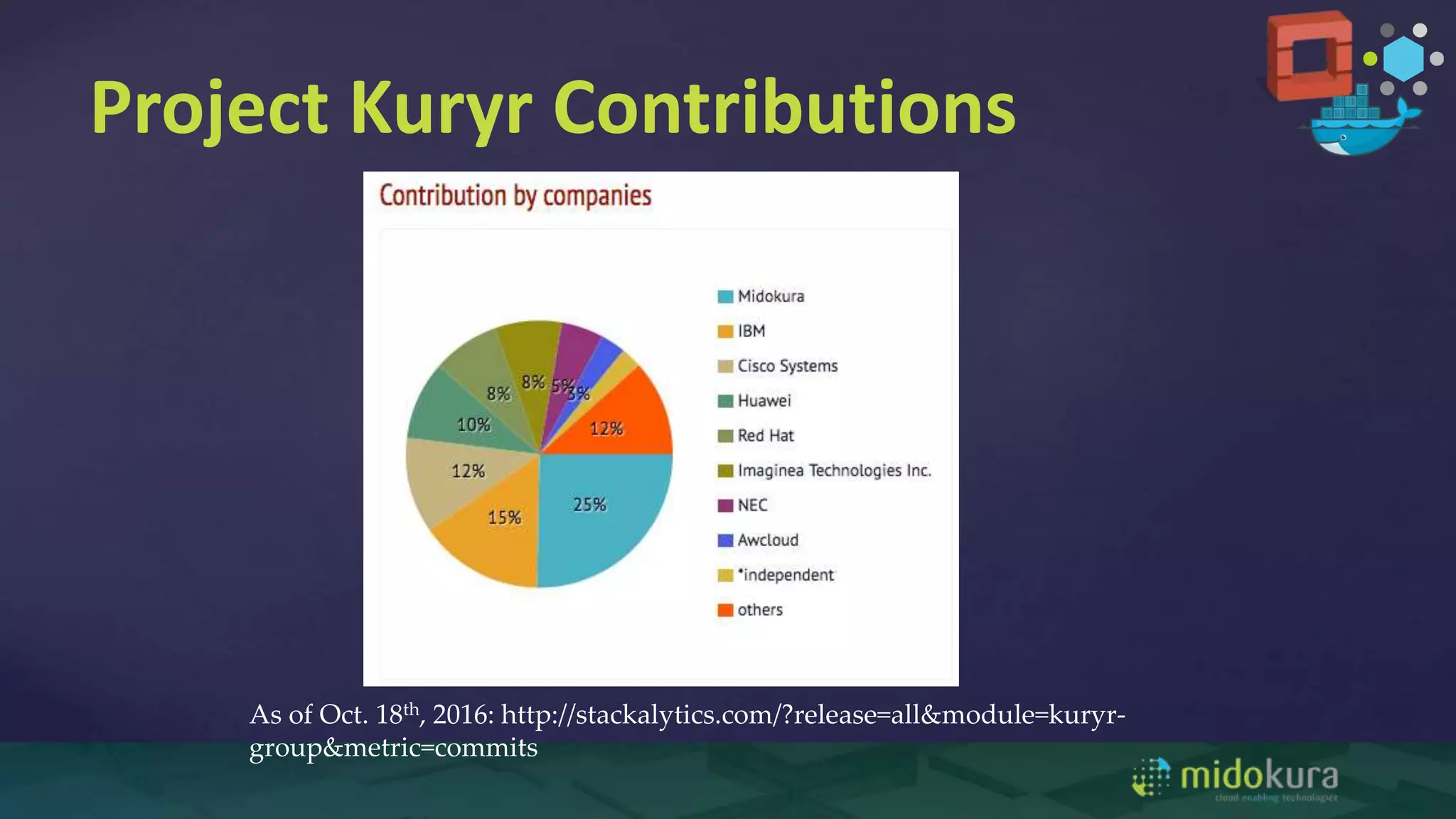

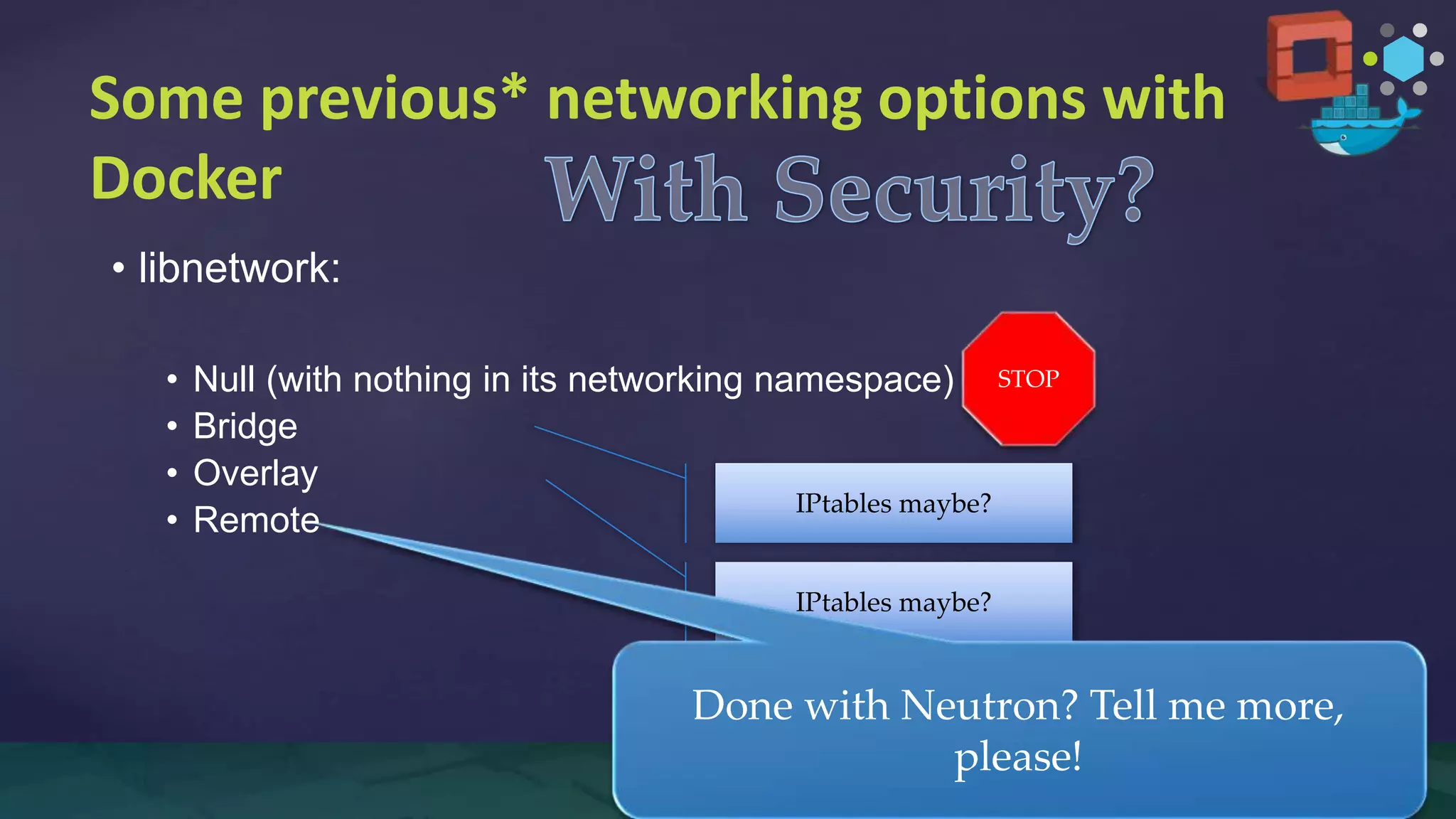

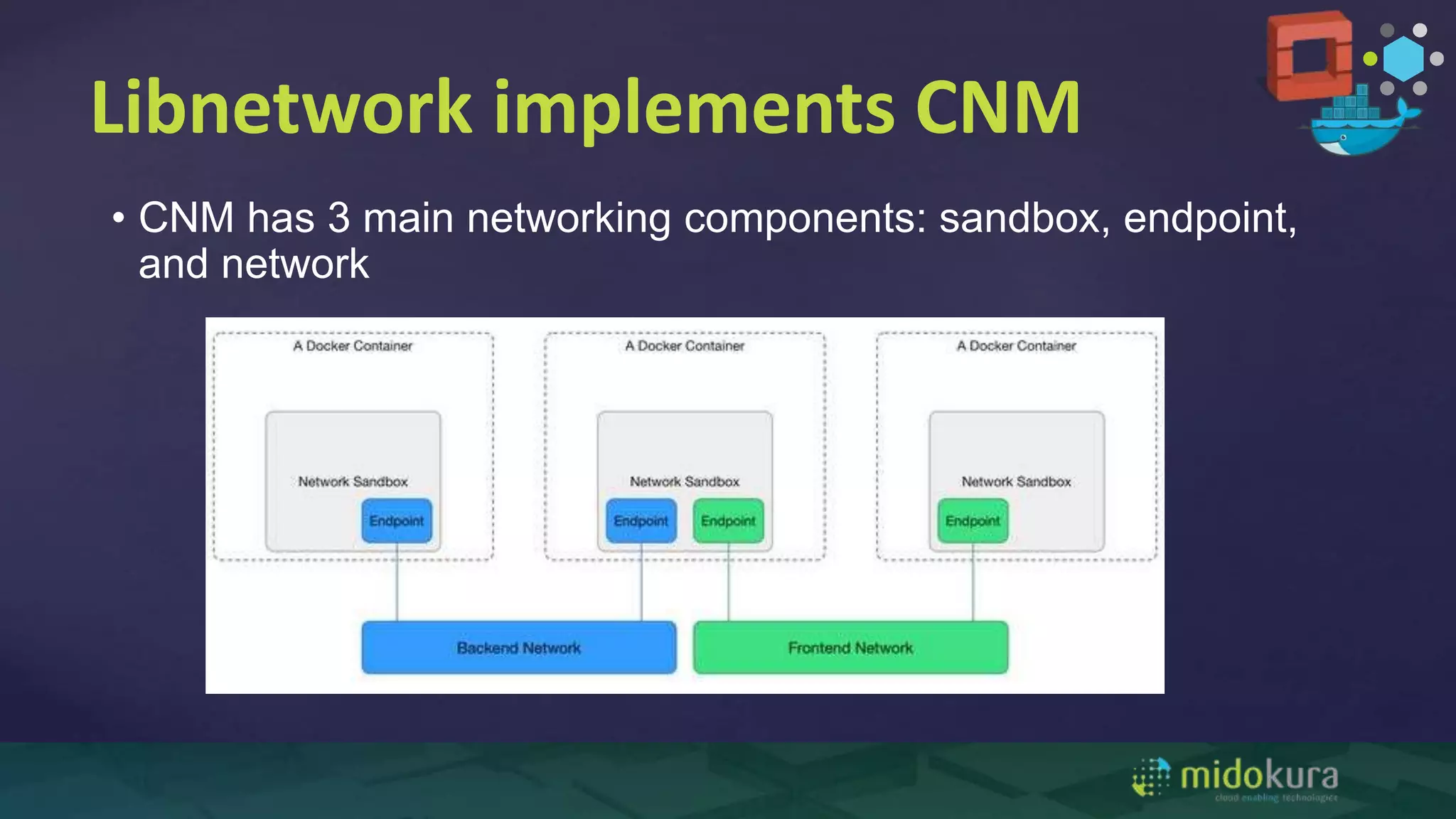

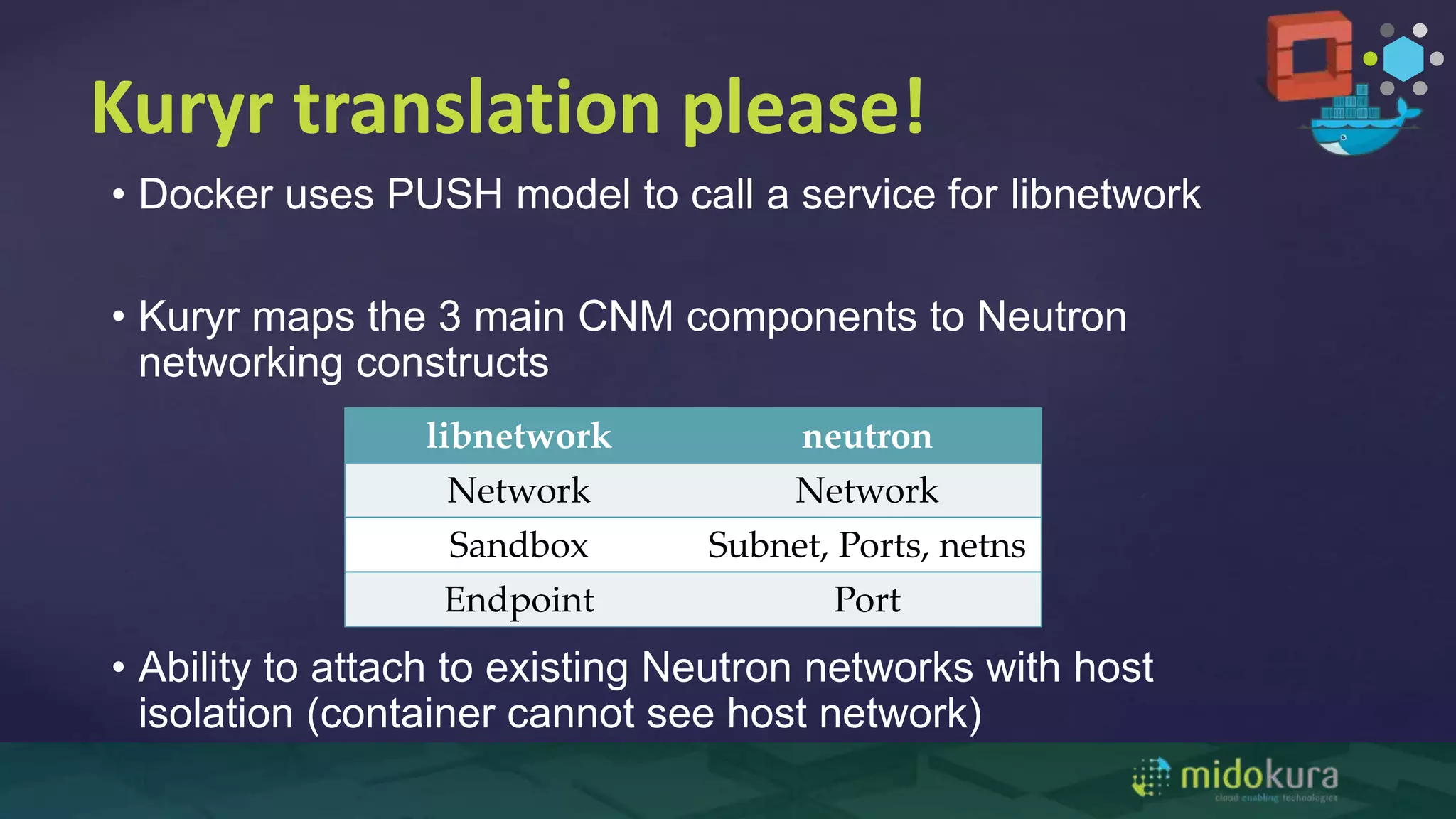

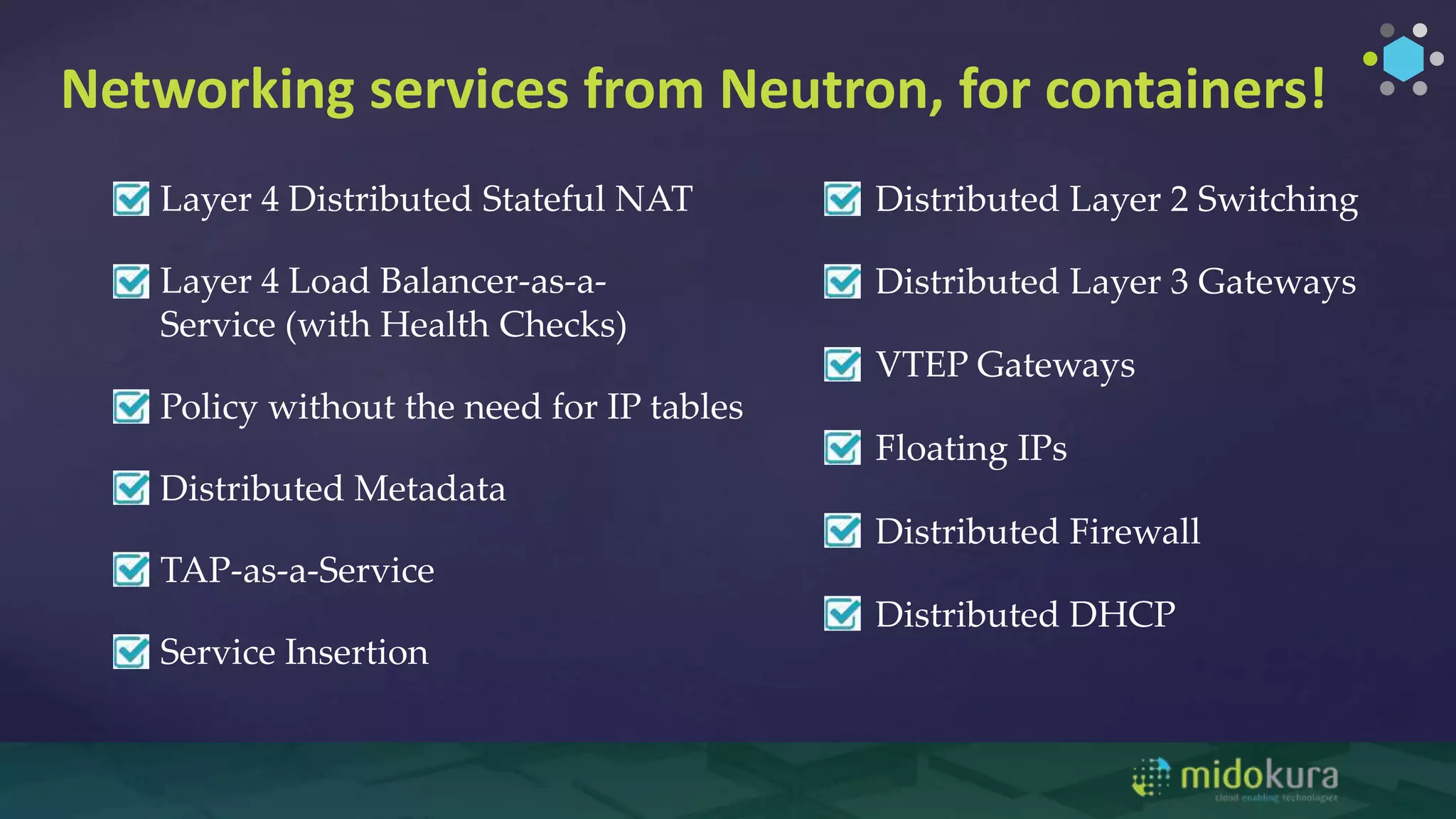

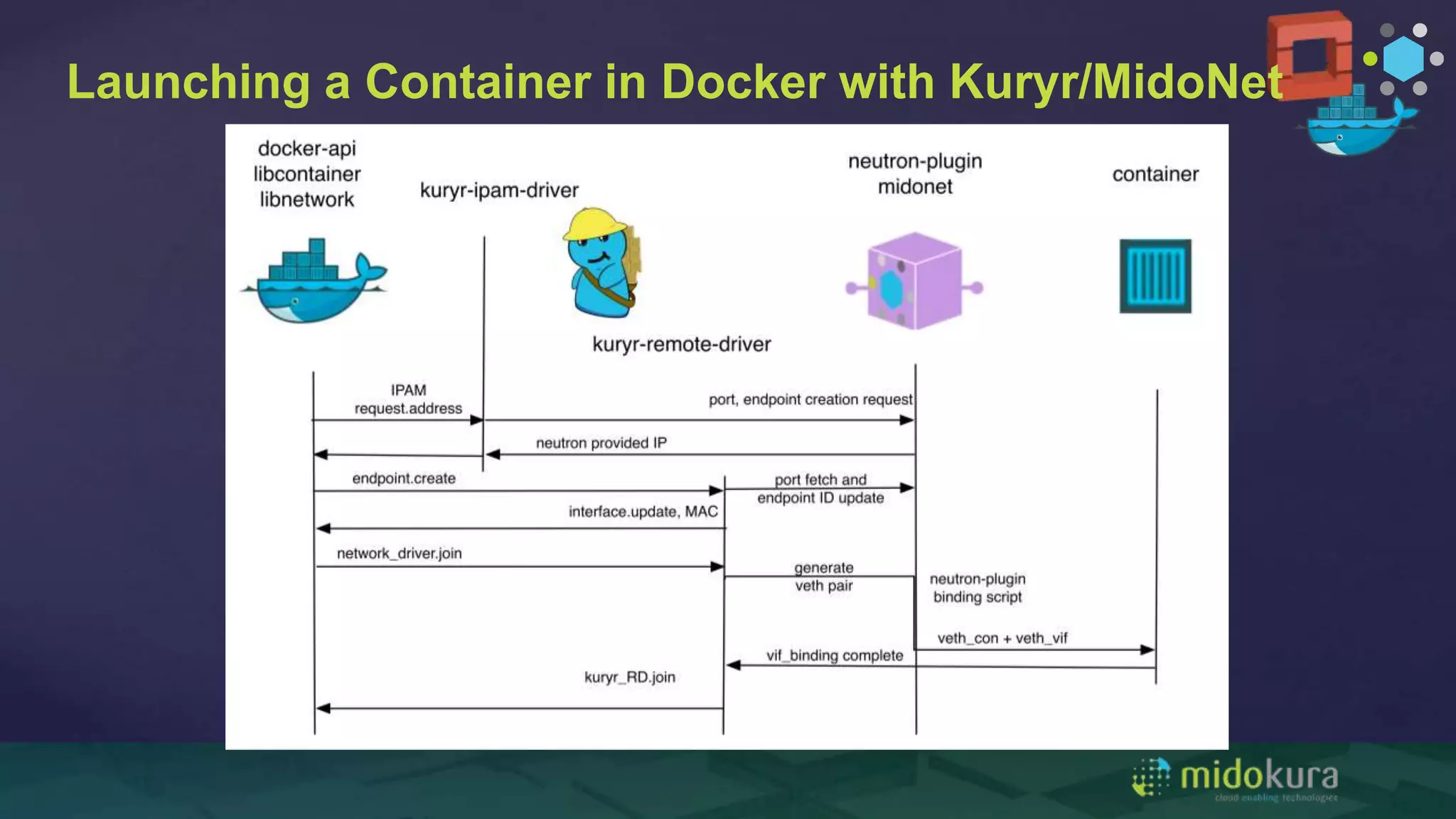

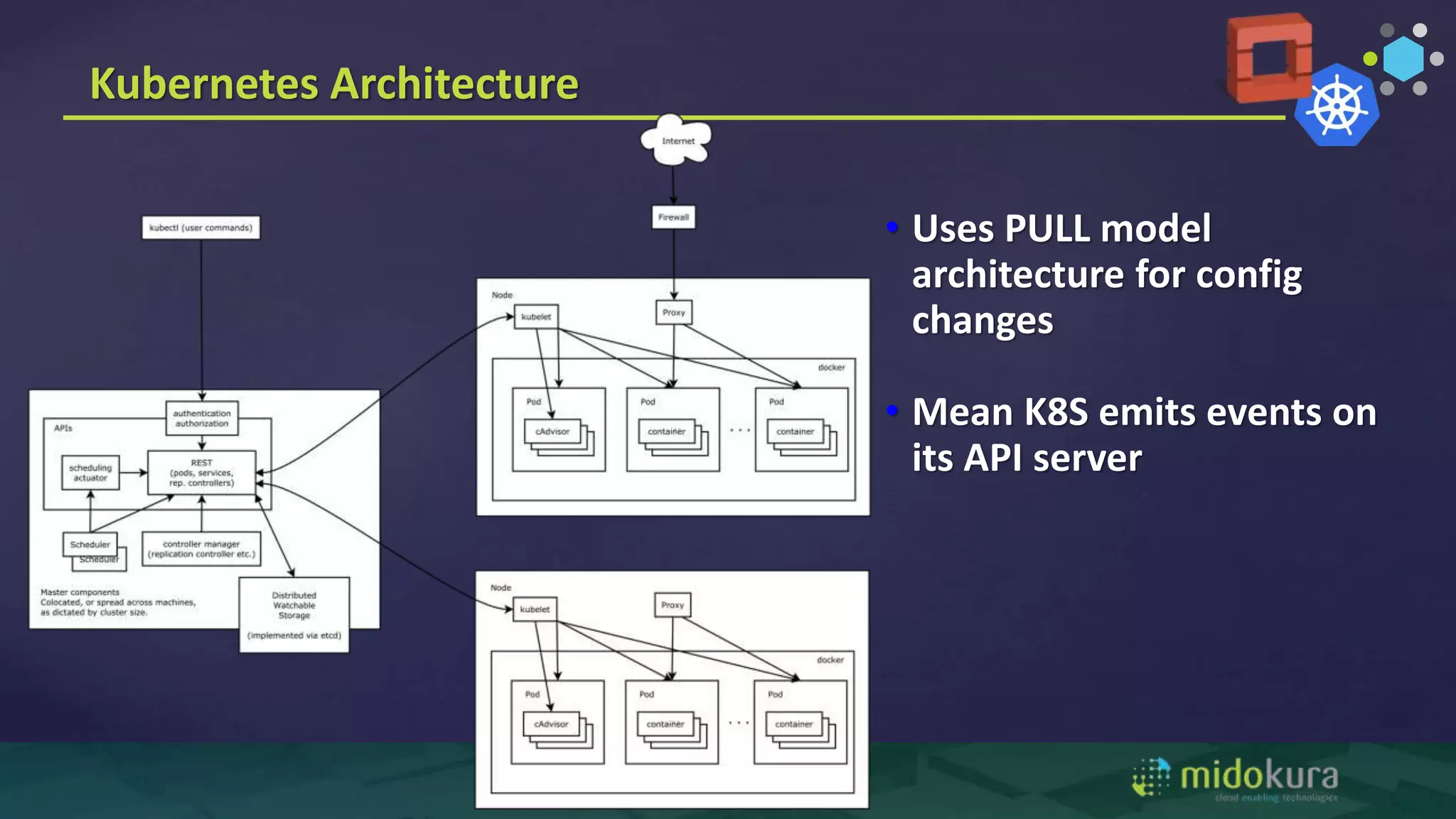

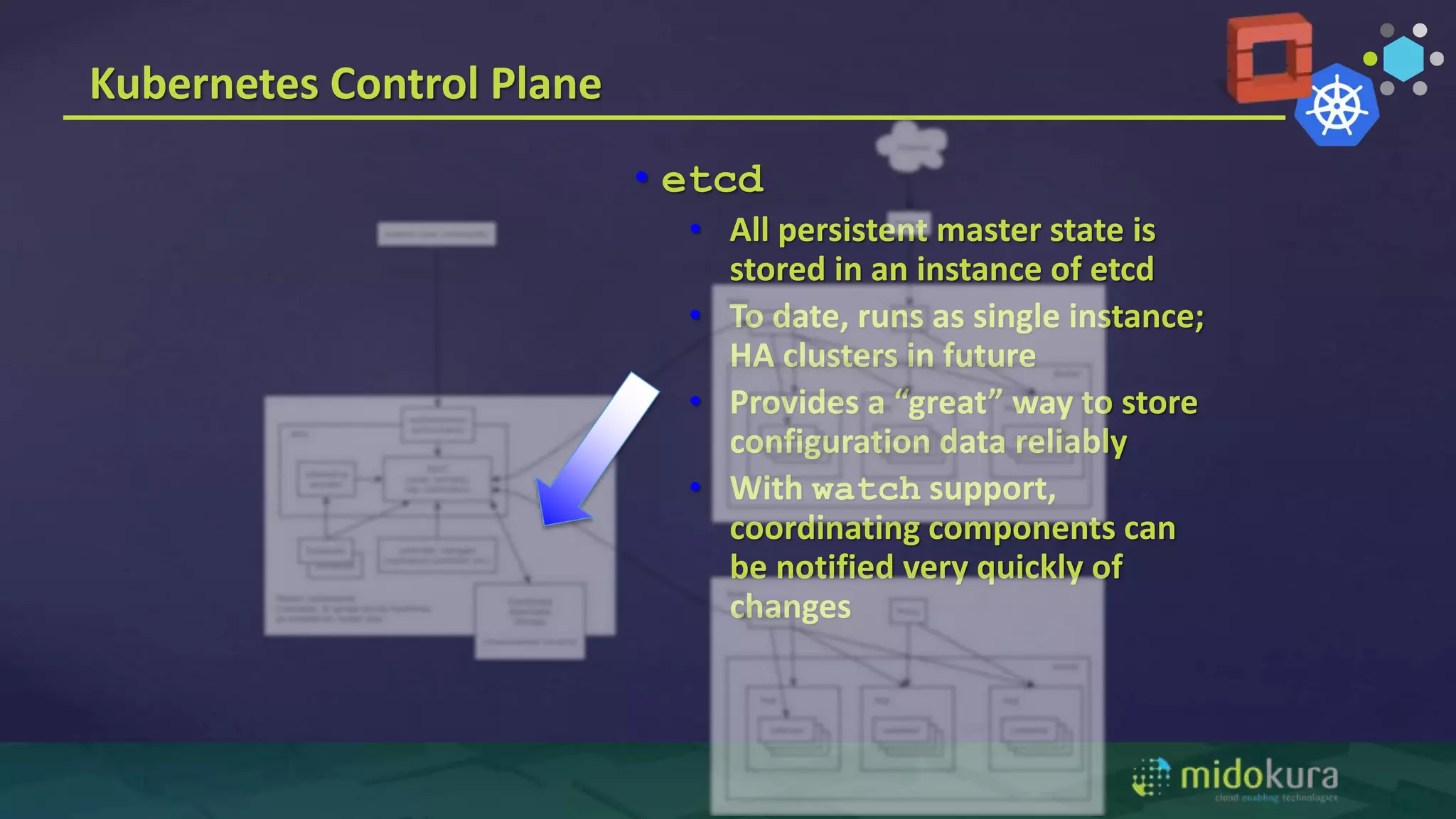

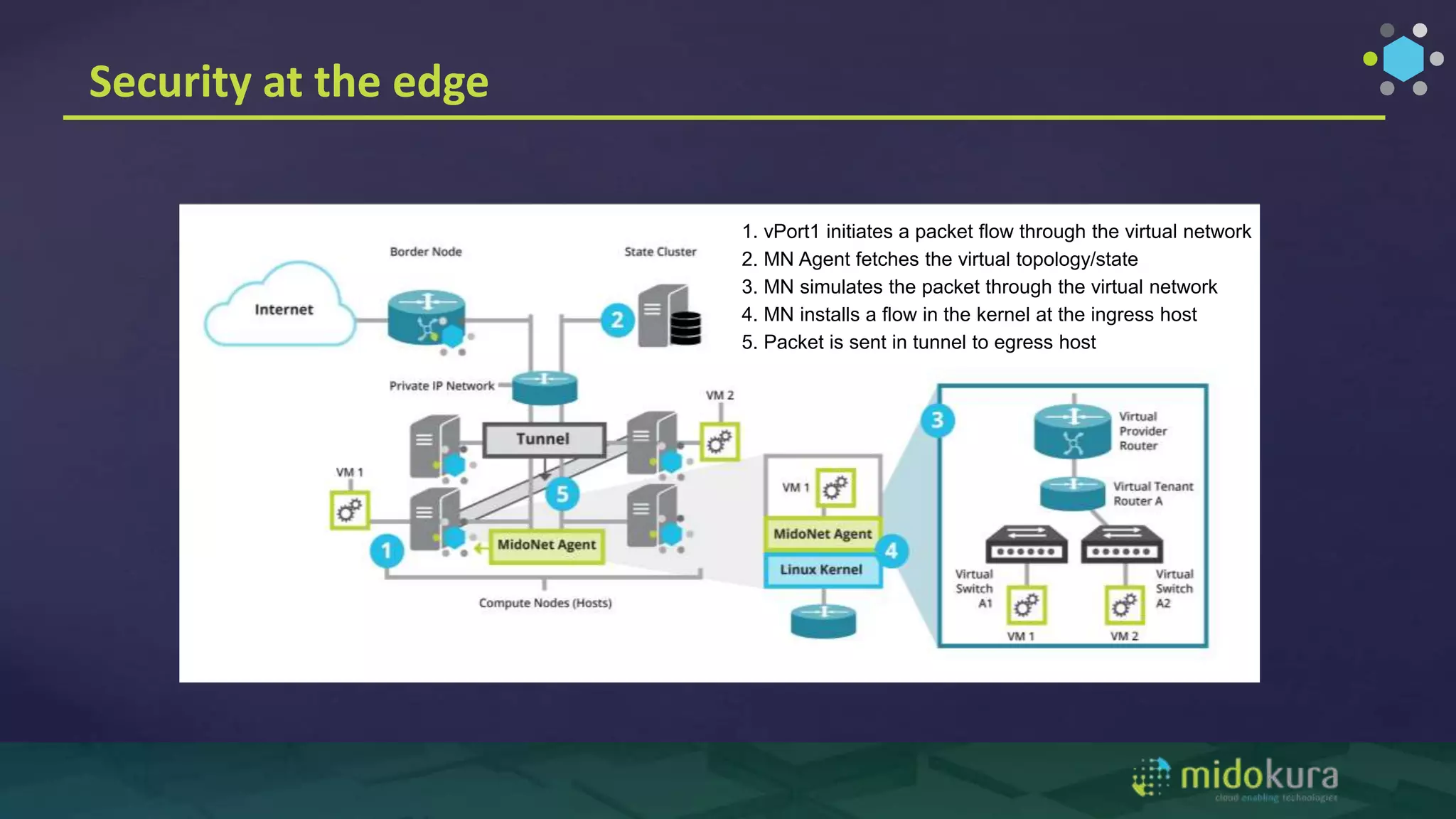

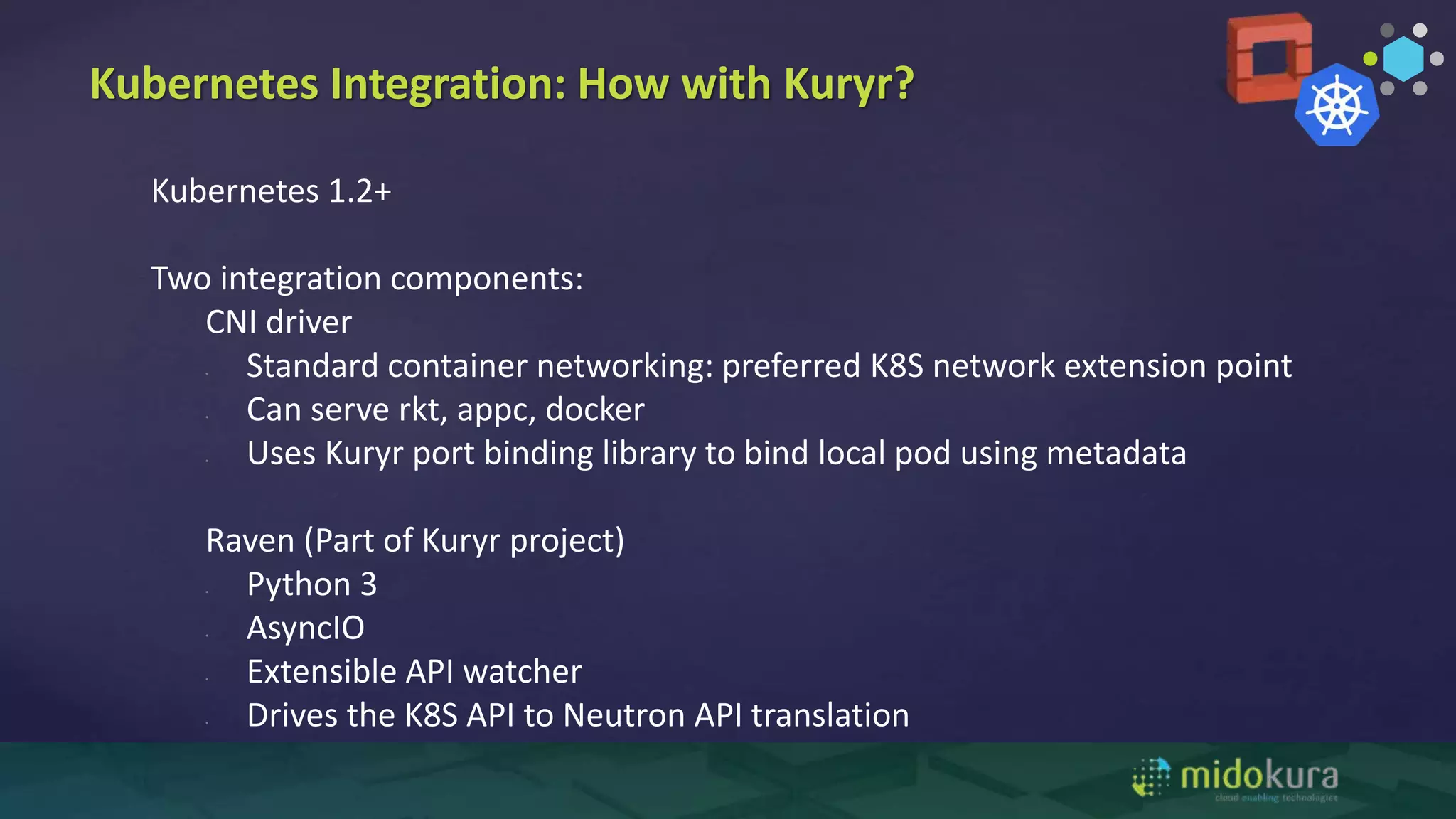

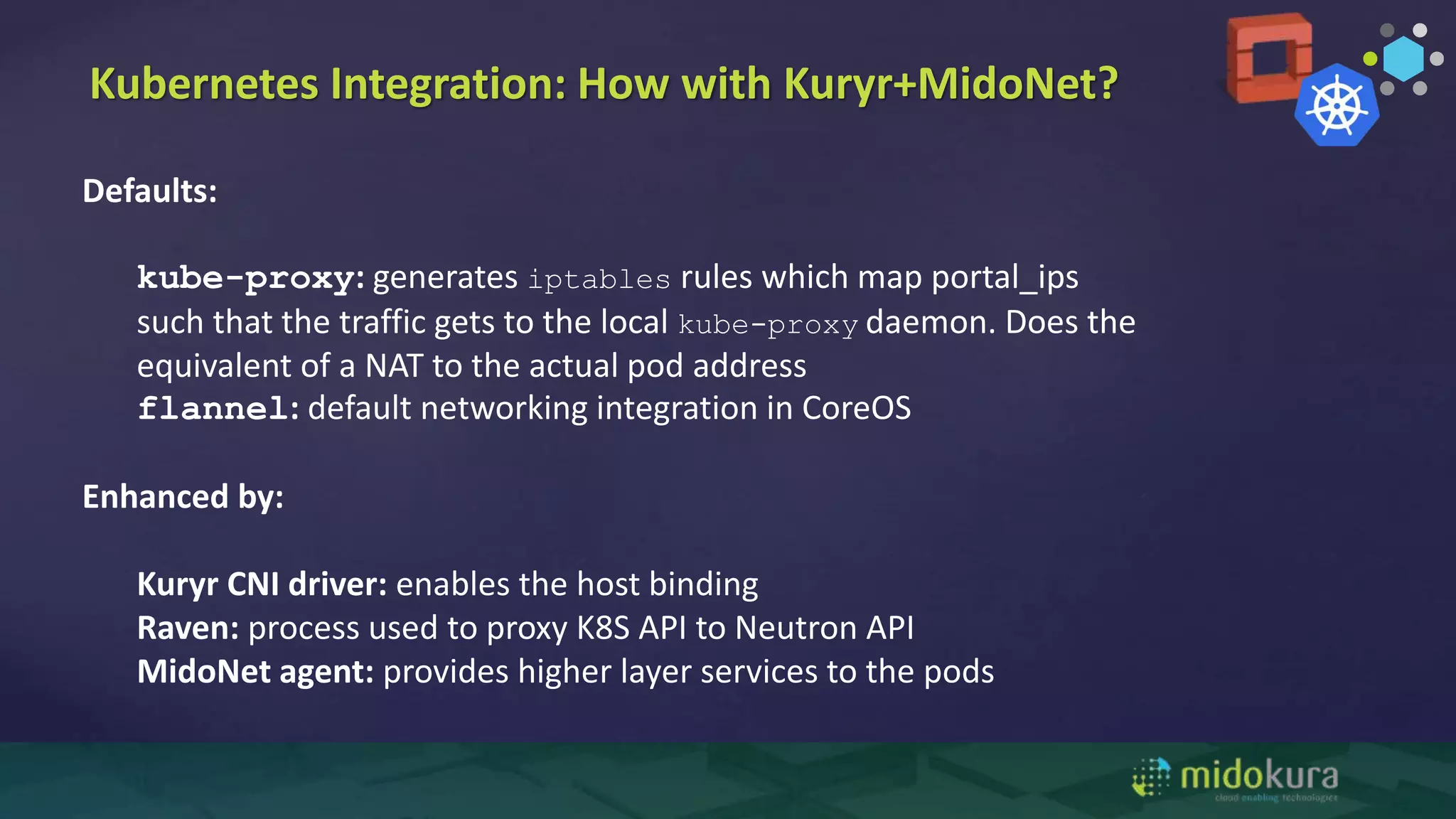

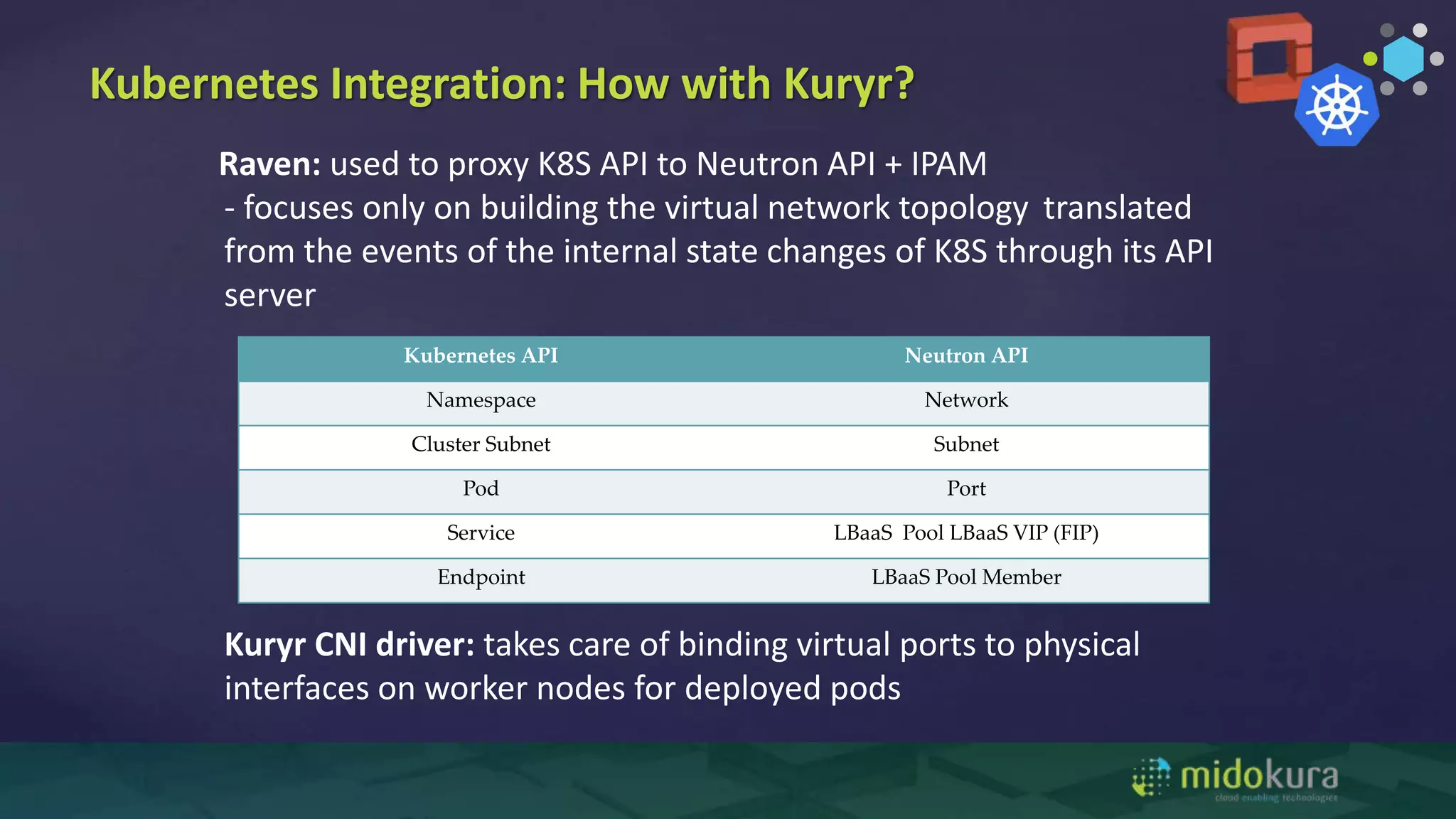

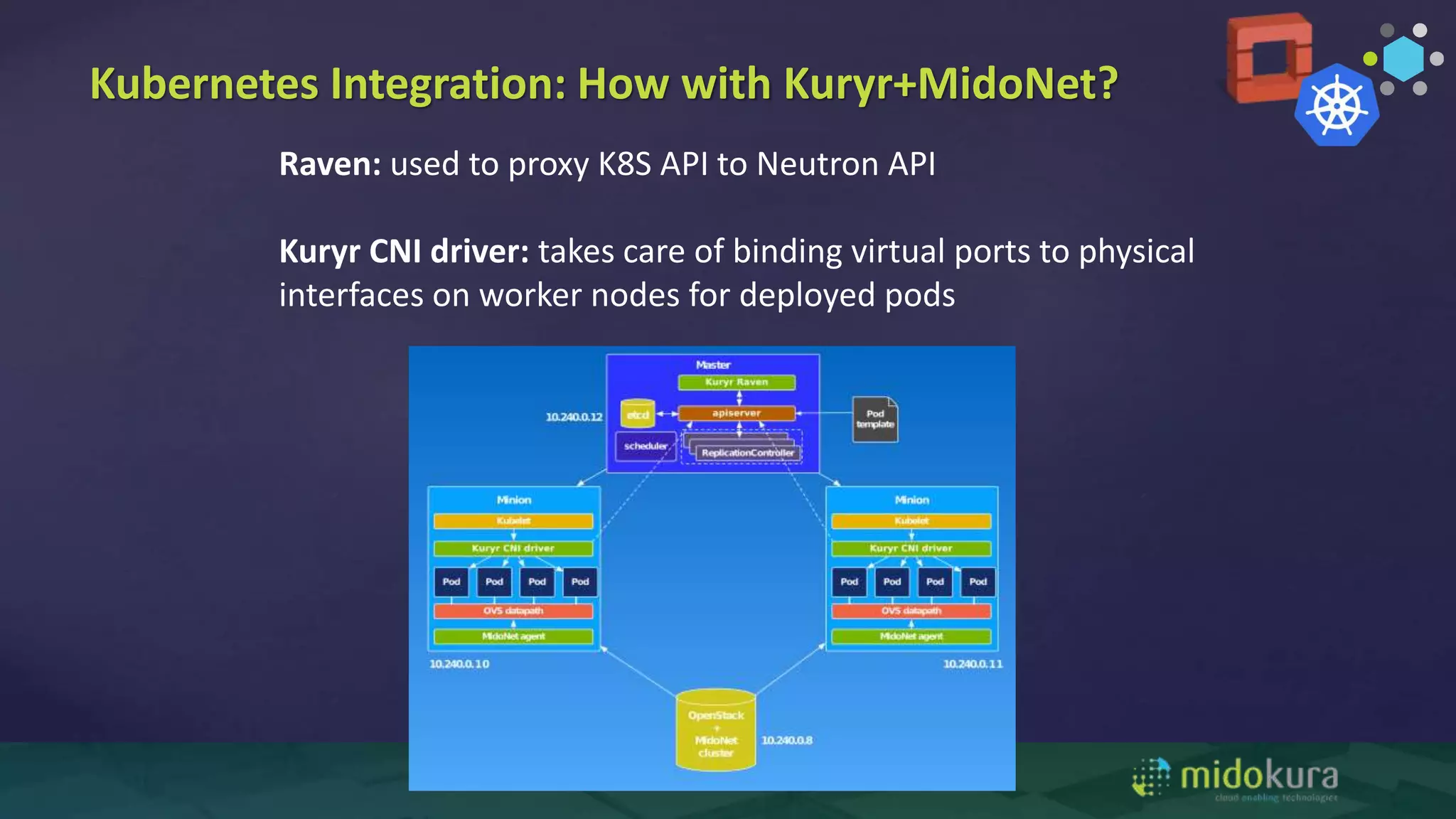

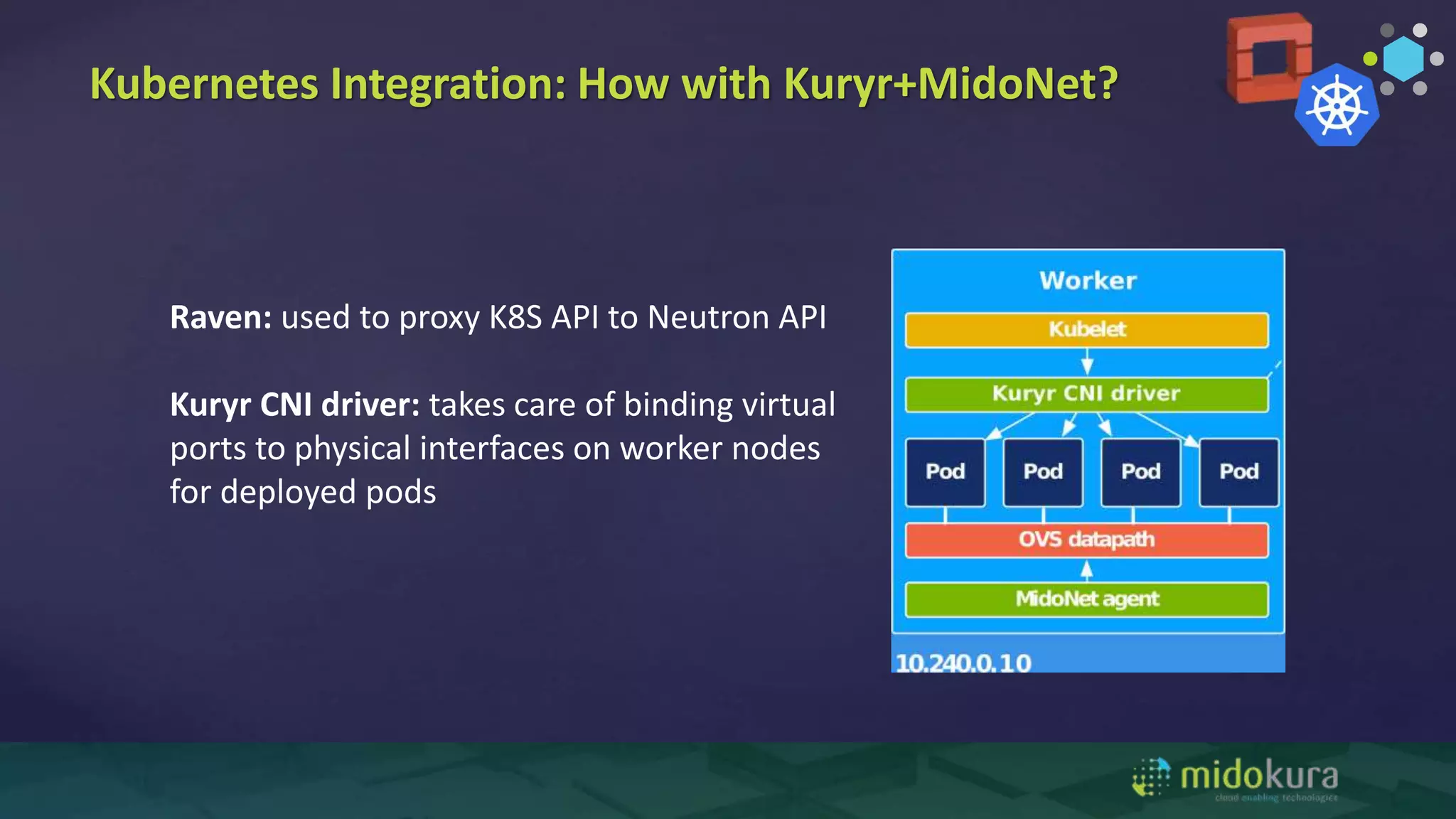

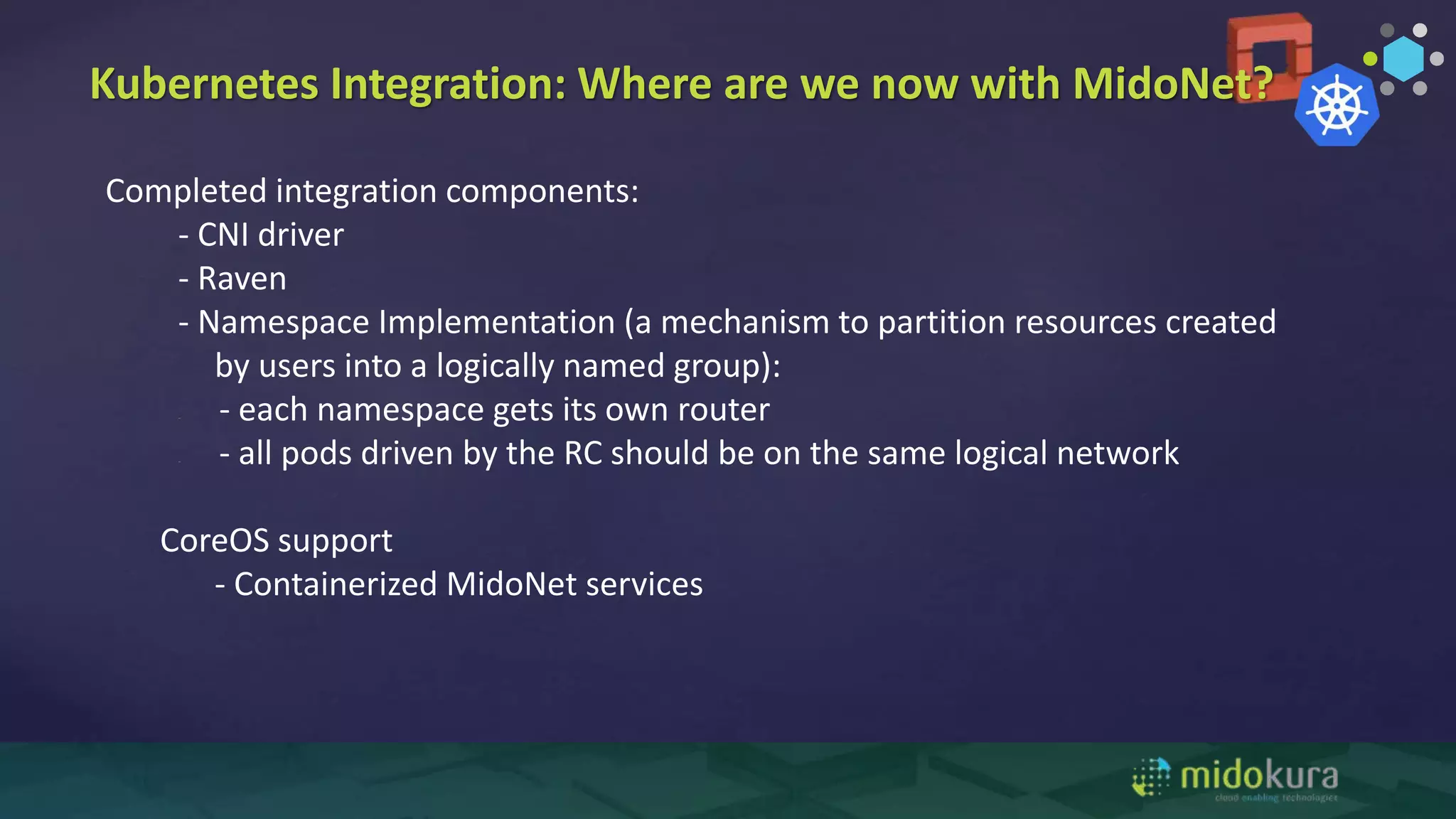

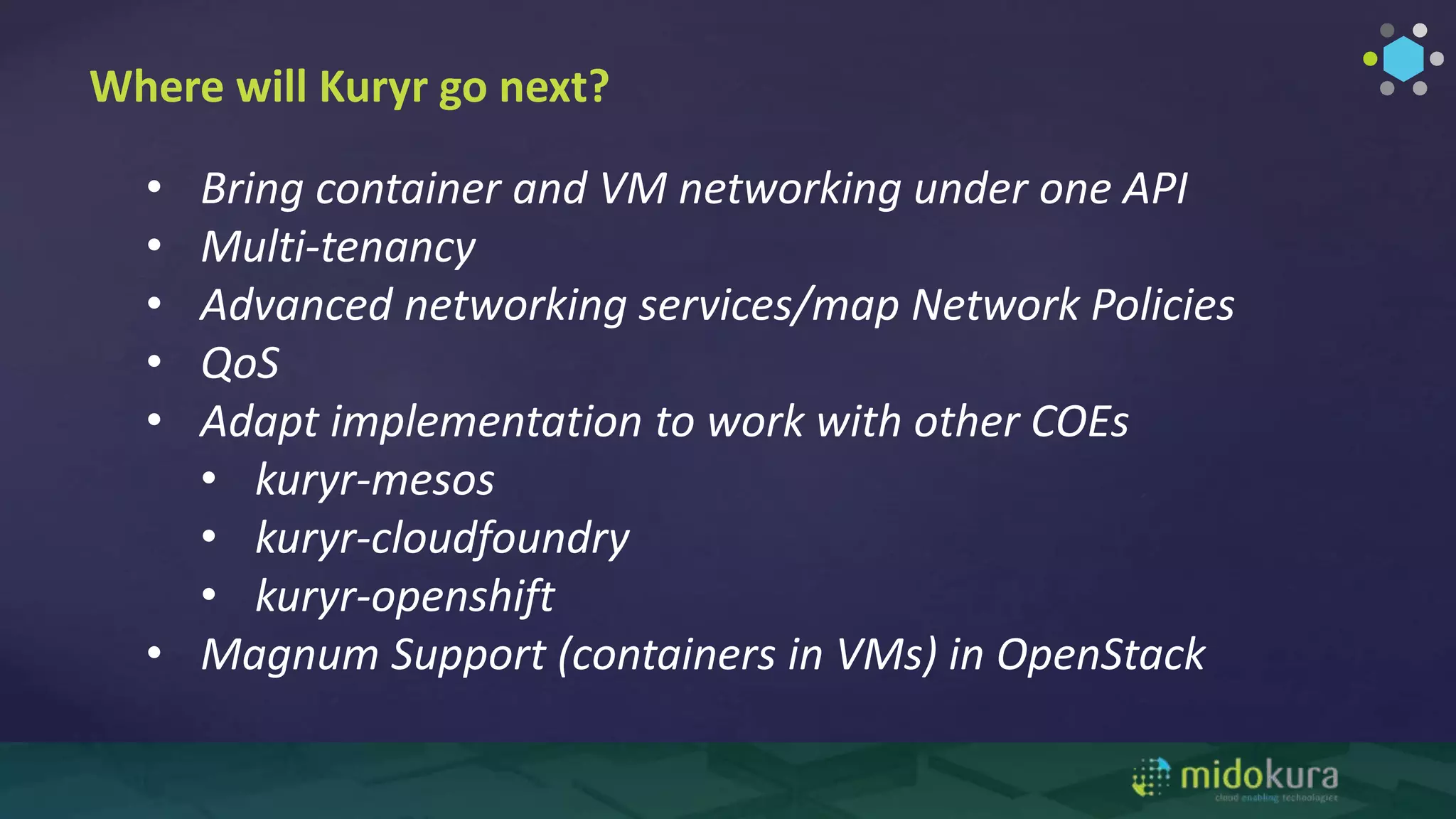

The document discusses why networking must be planned carefully when deploying containers in production, and highlights the advantages of containers over virtual machines. It surveys several container orchestration engines and explains how the growing number of endpoints and ad hoc security implementations make networking and security more complex. It also introduces OpenStack Kuryr, which connects container networking to OpenStack Neutron, and stresses the need for stronger multi-tenancy and advanced network services.