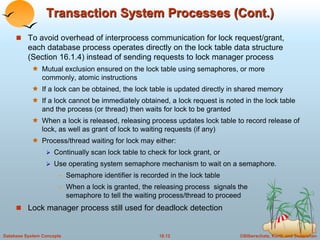

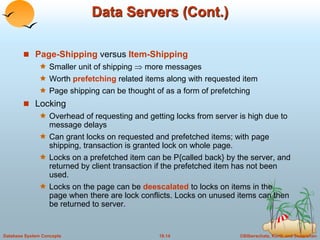

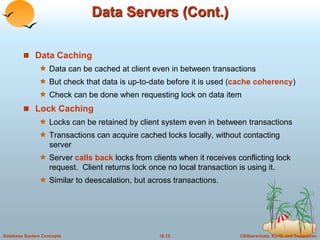

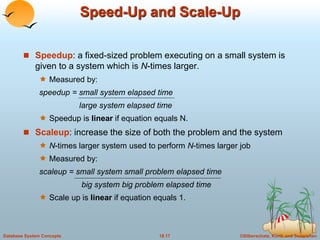

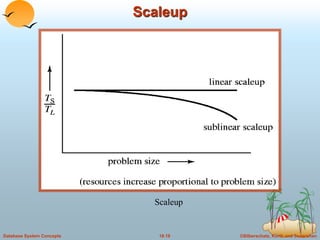

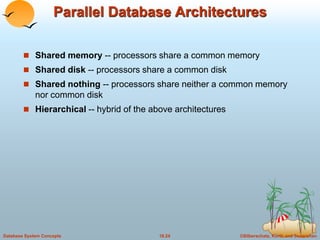

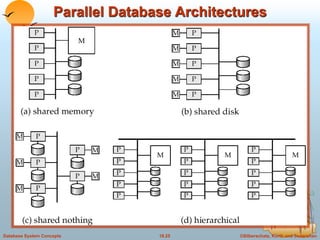

This document discusses database system architectures: centralized, client-server, parallel, and distributed systems. It details the components and functionality of centralized, client-server, and transaction-server architectures. It also describes data servers and the issues they raise, including page shipping versus item shipping, locking, data caching, and lock caching. It concludes by explaining speedup and scaleup in parallel database systems.
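
As a rough illustration of the speedup and scaleup measures mentioned above, here is a minimal sketch that assumes the common textbook definitions. Speedup compares the elapsed time of the same task on a smaller system and on a larger one. Scaleup compares the elapsed time of a small problem on a small system with that of an N-times larger problem on an N-times larger system. The function names and the timings are hypothetical, chosen only for illustration.

```python
def speedup(time_small_system: float, time_large_system: float) -> float:
    """Same problem on both systems: elapsed time on the smaller system
    divided by elapsed time on the larger system (assumed definition)."""
    if time_small_system <= 0 or time_large_system <= 0:
        raise ValueError("elapsed times must be positive")
    return time_small_system / time_large_system


def scaleup(time_small_problem: float, time_large_problem: float) -> float:
    """Problem and system both grown by the same factor N: elapsed time of
    the small problem on the small system divided by elapsed time of the
    large problem on the large system (assumed definition)."""
    if time_small_problem <= 0 or time_large_problem <= 0:
        raise ValueError("elapsed times must be positive")
    return time_small_problem / time_large_problem


def is_linear_speedup(value: float, n: float, tol: float = 1e-9) -> bool:
    """Speedup is linear when an N-times larger system gives a speedup of N."""
    return abs(value - n) <= tol


def is_linear_scaleup(value: float, tol: float = 1e-9) -> bool:
    """Scaleup is linear when the ratio stays at 1."""
    return abs(value - 1.0) <= tol


if __name__ == "__main__":
    # Hypothetical timings in seconds, not measurements from any real system.
    s = speedup(100.0, 25.0)    # query on 1 node vs. on 4 nodes
    u = scaleup(100.0, 125.0)   # 1x data on 1 node vs. 4x data on 4 nodes
    print(f"speedup = {s:.2f}, linear on 4 nodes: {is_linear_speedup(s, 4)}")
    print(f"scaleup = {u:.2f}, linear: {is_linear_scaleup(u)}")
```

With these made-up numbers the speedup is linear, since four times the resources cut the time to a quarter. The scaleup is sublinear, since the larger problem on the larger system takes longer than the small problem did on the small system.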