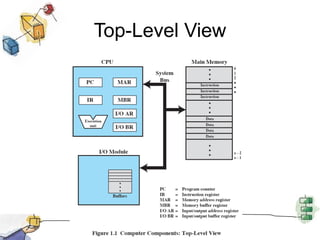

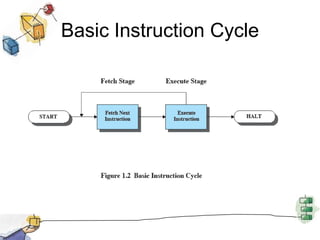

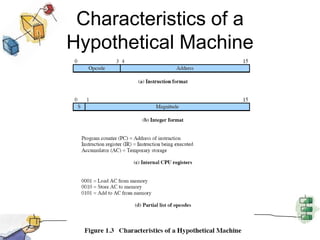

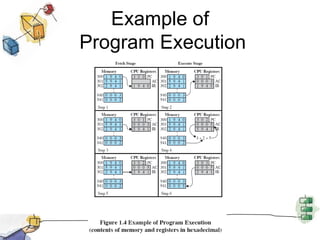

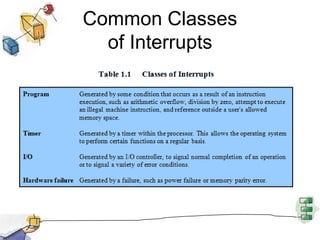

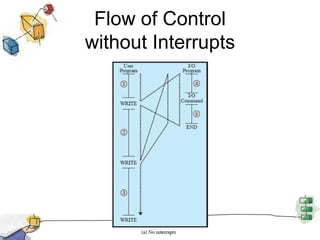

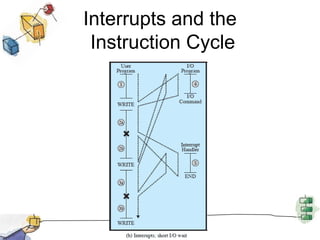

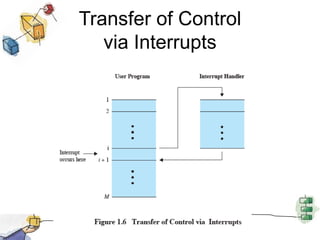

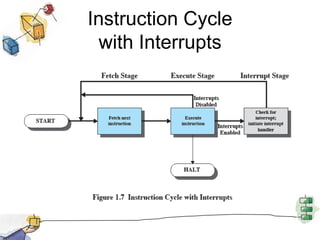

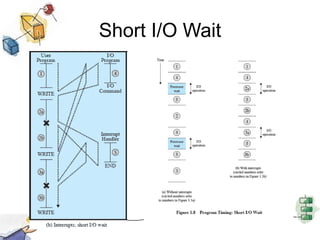

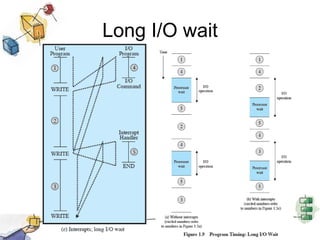

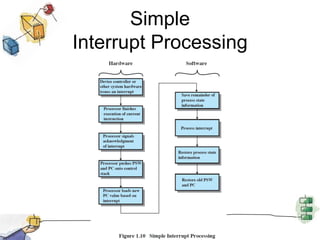

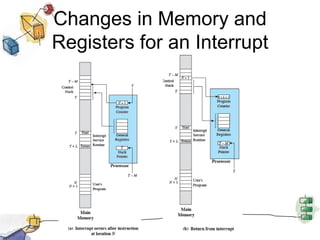

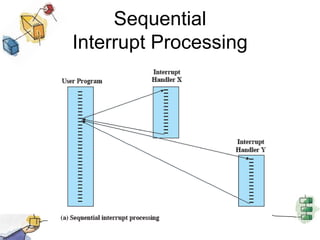

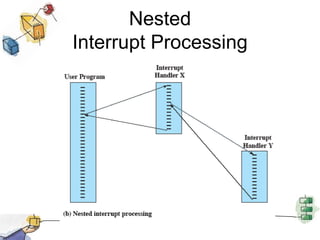

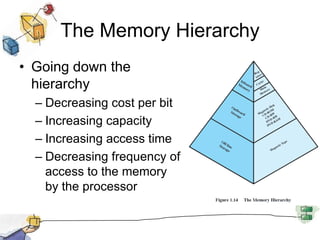

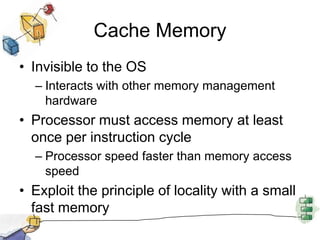

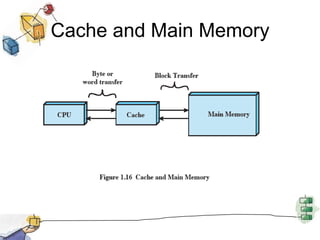

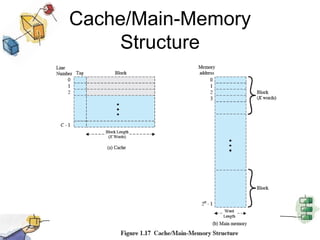

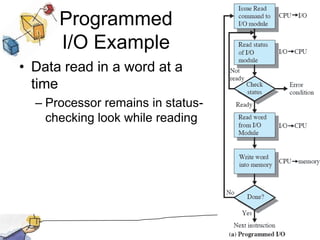

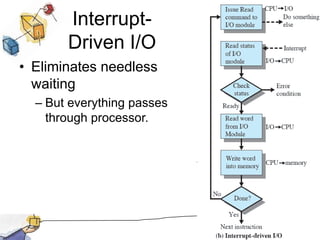

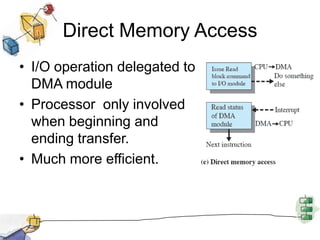

This document gives an overview of the basic elements of a computer system and the operating system concepts that build on them. It first describes the main components: the processor, memory, I/O modules, and the system bus. It then covers processor registers, instruction execution, interrupts, the memory hierarchy (including cache memory), and three I/O communication techniques: programmed I/O, interrupt-driven I/O, and direct memory access. The goal is to provide a high-level roadmap of core computer hardware and software concepts.
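To make the link between instruction execution and interrupts concrete, here is a minimal sketch of a fetch-execute loop that checks for pending interrupts after each instruction. Everything in it is an assumption made for illustration and is not taken from the slides: the opcodes (`LOAD`, `ADD`, `STORE`, `HALT`), the single accumulator register, the `run` function, and the way interrupts are modeled as `(step, name)` pairs.

```python
from collections import deque

# Hypothetical opcodes for a toy accumulator machine (illustrative only).
HALT, LOAD, ADD, STORE = 0, 1, 2, 3


def run(memory, interrupts=None):
    """Run a toy fetch-execute loop.

    memory holds (opcode, operand) tuples for instructions and plain ints
    for data. interrupts is a list of (fire_at_step, name) pairs sorted by
    step. Returns the final memory and a log of (step, name) entries
    recording when each interrupt was handled.
    """
    pc = 0                              # program counter: next instruction address
    acc = 0                             # accumulator register
    pending = deque(interrupts or [])   # interrupts that have not fired yet
    handled = []
    step = 0                            # number of completed instructions

    while True:
        # Fetch stage: read the instruction at PC, then advance PC.
        opcode, operand = memory[pc]
        pc += 1

        # Execute stage: carry out the instruction.
        if opcode == HALT:
            break
        elif opcode == LOAD:
            acc = memory[operand]
        elif opcode == ADD:
            acc += memory[operand]
        elif opcode == STORE:
            memory[operand] = acc
        else:
            raise ValueError(f"unknown opcode {opcode}")
        step += 1

        # Interrupt stage: only between instructions does the processor
        # check for pending interrupts. A real handler would save the PC
        # and registers and jump to a service routine; here we just log it.
        while pending and pending[0][0] <= step:
            handled.append((step, pending.popleft()[1]))

    return memory, handled


# Program: memory[6] = memory[4] + memory[5]
program = [(LOAD, 4), (ADD, 5), (STORE, 6), (HALT, 0), 3, 2, 0]
final_memory, log = run(program, interrupts=[(2, "io_done")])
print(final_memory[6], log)
```

The point of the sketch is the placement of the interrupt check: it happens once per instruction cycle, after execution completes, so the running program is suspended only at instruction boundaries.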