The document outlines a presentation by Brian on automating cloud infrastructure using Terraform and Ansible on DigitalOcean. It emphasizes the concept of immutable infrastructure to prevent configuration drift, demonstrates creating and provisioning servers with Terraform, and explains server management with Ansible for tasks such as installing software and configuring services. Additionally, it covers best practices for provisioning and adopting reusable roles for efficient infrastructure management.

![Define a server

touch web-1.tf

resource "digitalocean_droplet" "web-1" {

image = "ubuntu-16-04-x64"

name = "web-1"

region = "nyc3"

monitoring = true

size = "1gb"

ssh_keys = [

"${var.ssh_fingerprint}"

]

}

output "web-1-address" {

value = "${digitalocean_droplet.web-1.ipv4_address}"

}](https://image.slidesharecdn.com/automate2018-180117015218/75/Automating-the-Cloud-with-Terraform-and-Ansible-21-2048.jpg)

![Ansible connects to your servers using SSH and uses host key checking. When you first log in to a

remote machine with SSH, the SSH client app will ask if you want to add the server to your "known

hosts." If you have to rebuild your server, or add a new server, you'll get this prompt when Ansible

tries to connect. It's a nice security feature, but you should turn it off. Add this section to the new file:

By default, Ansible makes a new SSH connection for each command it runs. This is slow. As your

playbooks get larger, this will take more time. You can tell Ansible to share SSH connections using

pipelining. However, this requires that requiretty be disabled in the sudoers configuration on your servers.

Create ansible.cfg

touch ansible.cfg

[defaults]

host_key_checking = False

[ssh_connection]

pipelining = True](https://image.slidesharecdn.com/automate2018-180117015218/75/Automating-the-Cloud-with-Terraform-and-Ansible-31-2048.jpg)

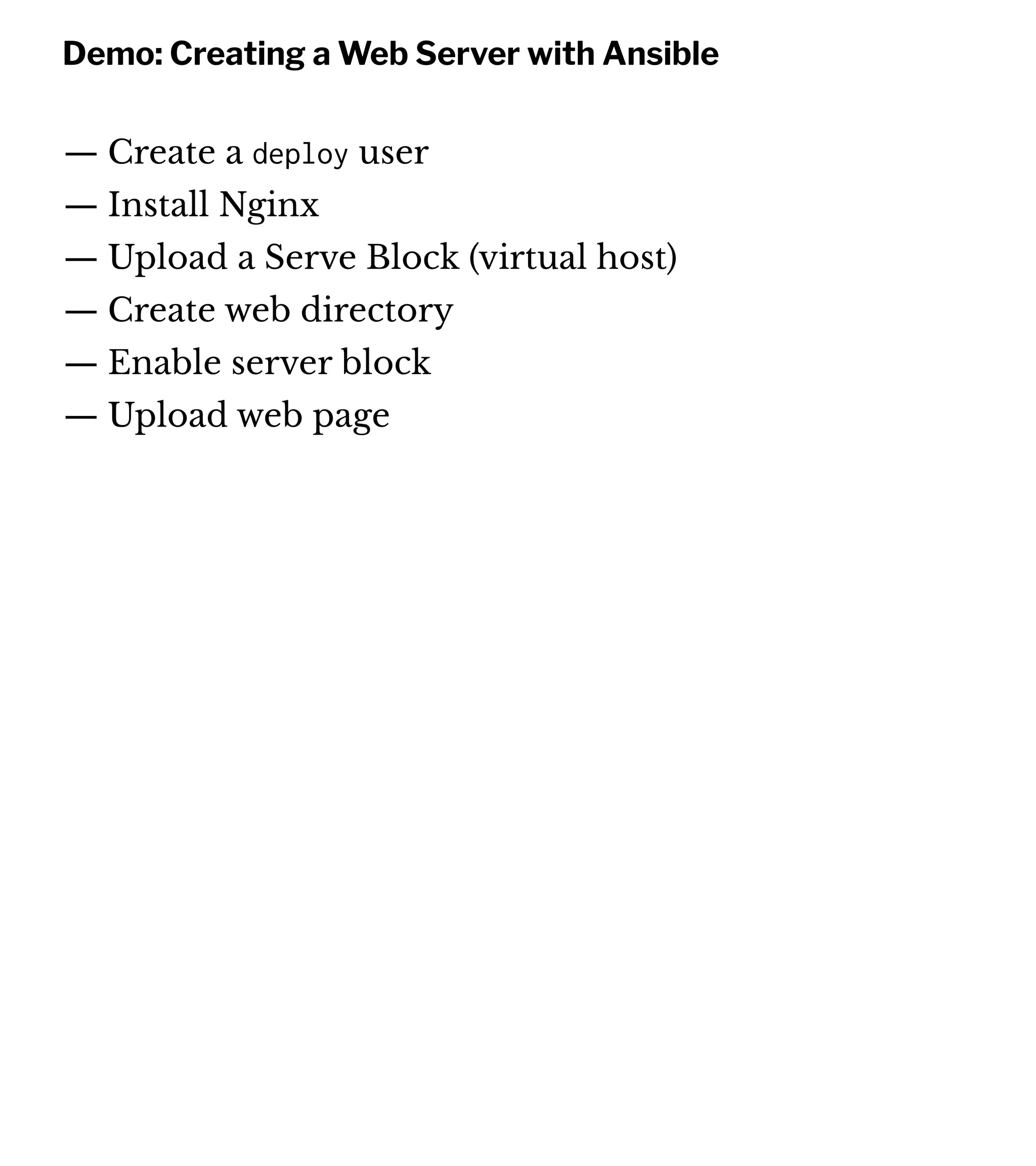

![Ansible uses an inventory file to list out the servers. We're going to start with one. First we define a host called web-1 and assign it the IP address of our

machine. We need to tell Ansible what private key file we want to use to connect to the server over SSH, and since we'll use the same one for all our

servers, we'll create a group called servers. We put the web-1 host in the servers group, and then we create variables for the servers. We're using

Ubuntu 16.04, which only ships with Python 3. Ansible uses Python 2 by default, so we're telling Ansible to use Python 3 for all members of the servers

group.

Creating an Inventory

touch inventory

web-1 ansible_host=xx.xx.xx.xx

[servers]

web-1

[servers:vars]

ansible_private_key_file='/Users/your_username/.ssh/id_rsa'

ansible_python_interpreter=/usr/bin/python3](https://image.slidesharecdn.com/automate2018-180117015218/75/Automating-the-Cloud-with-Terraform-and-Ansible-32-2048.jpg)

![Run the playbook

ansible-playbook -i inventory playbook.yml

PLAY [all] *********************************************************************

TASK [Gathering Facts] *********************************************************

ok: [web-1]

TASK [Add deploy user and add to sudoers] **************************************

changed: [web-1]

PLAY RECAP *********************************************************************

web-1 : ok=2 changed=1 unreachable=0 failed=0](https://image.slidesharecdn.com/automate2018-180117015218/75/Automating-the-Cloud-with-Terraform-and-Ansible-37-2048.jpg)

![Since the user is already there, Ansible won't try creating it

again. But it will add the key:

Apply the change to the server

$ ansible-playbook -i inventory playbook.yml

TASK [Add deploy user and add to sudoers] **************************************

ok: [web-1]

TASK [add public key for deploy user] ******************************************

changed: [web-1]

PLAY RECAP *********************************************************************

web-1 : ok=3 changed=1 unreachable=0 failed=0](https://image.slidesharecdn.com/automate2018-180117015218/75/Automating-the-Cloud-with-Terraform-and-Ansible-39-2048.jpg)
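
The `ok` versus `changed` results above come from each task checking the current state before acting. The following is a minimal Python sketch of that idea, not Ansible's actual implementation: the `server` dictionary and the `ensure_user` and `ensure_authorized_key` helpers are hypothetical stand-ins for a real host and real modules, and the key string is a placeholder.

```python
def ensure_user(state, name):
    """Create the user only if it is missing; report 'ok' or 'changed'."""
    if name in state["users"]:
        return "ok"
    state["users"][name] = {"authorized_keys": set()}
    return "changed"


def ensure_authorized_key(state, name, public_key):
    """Add the public key only if the user does not already have it."""
    keys = state["users"][name]["authorized_keys"]
    if public_key in keys:
        return "ok"
    keys.add(public_key)
    return "changed"


# Hypothetical server state: the deploy user already exists from an earlier run.
server = {"users": {"deploy": {"authorized_keys": set()}}}
key = "ssh-rsa AAAA... placeholder-key"

first_run = [ensure_user(server, "deploy"),
             ensure_authorized_key(server, "deploy", key)]
second_run = [ensure_user(server, "deploy"),
              ensure_authorized_key(server, "deploy", key)]

print(first_run)   # ['ok', 'changed']
print(second_run)  # ['ok', 'ok']
```

Running the same tasks a second time reports nothing as changed, which is why a playbook can be applied repeatedly without harm.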

![In order to use sudo, you have to provide a password. Ansible is non-interactive, so if you

try to run the playbook, it'll stall out and error saying there was no password provided.

You provide the password for sudo access by adding the --ask-become-pass flag.

Run Ansible and apply changes

ansible-playbook -i inventory playbook.yml --ask-become-pass

SUDO password:

PLAY [all] *********************************************************************

...

TASK [Update apt cache] ********************************************************

changed: [web-1]

...](https://image.slidesharecdn.com/automate2018-180117015218/75/Automating-the-Cloud-with-Terraform-and-Ansible-42-2048.jpg)

![Install and Configure nginx

$ ansible-playbook -u deploy -i inventory playbook.yml --ask-become-pass

TASK [Update apt cache] ********************************************************

changed: [web-1]

TASK [Install nginx] ***********************************************************

changed: [web-1]

TASK [Create the web directory] ************************************************

changed: [web-1]

TASK [Disable `default` site] **************************************************

ok: [web-1]](https://image.slidesharecdn.com/automate2018-180117015218/75/Automating-the-Cloud-with-Terraform-and-Ansible-49-2048.jpg)

![Creating the Server Block with a Template

touch site.conf

server {

listen 80;

listen [::]:80;

root /var/www/example.com/;

index index.html;

server_name example.com;

location / {

try_files $uri $uri/ =404;

}

}](https://image.slidesharecdn.com/automate2018-180117015218/75/Automating-the-Cloud-with-Terraform-and-Ansible-51-2048.jpg)
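
To show what templating adds over a static file, here is a rough Python sketch that fills a domain name into the server block. Ansible's template module renders Jinja2 templates; this sketch swaps in the standard library's `string.Template` so it runs without extra packages. The `$domain` placeholder and the `render_site` helper are assumptions made for illustration and do not appear in the slides.

```python
from string import Template

# nginx's own variables ($uri) are written as $$uri so that string.Template
# leaves them alone; $domain is the only placeholder that gets substituted.
SITE_TEMPLATE = Template("""\
server {
    listen 80;
    listen [::]:80;

    root /var/www/$domain/;
    index index.html;

    server_name $domain;

    location / {
        try_files $$uri $$uri/ =404;
    }
}
""")


def render_site(domain):
    """Return the server block text for one domain (hypothetical helper)."""
    return SITE_TEMPLATE.substitute(domain=domain)


print(render_site("example.com"))
```

The same template can then be rendered once per site instead of hand-editing a copy of the file for each domain.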

![Update Inventory

web-1 ansible_host=xx.xx.xx.xx

web-2 ansible_host=xx.xx.xx.yy

[servers]

web-1

web-2

...](https://image.slidesharecdn.com/automate2018-180117015218/75/Automating-the-Cloud-with-Terraform-and-Ansible-69-2048.jpg)
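
Copying IP addresses into the inventory by hand gets tedious as servers are added. As a sketch only, the Python below builds the same inventory layout from the JSON that `terraform output -json` prints. It assumes every server has an output named like `web-1-address`; the `web-2-address` output, the sample addresses, and the `render_inventory` helper are assumptions made for illustration.

```python
import json


def render_inventory(terraform_output_json, private_key_file, group="servers"):
    """Build INI-style inventory text from `terraform output -json` text."""
    outputs = json.loads(terraform_output_json)
    suffix = "-address"

    # Map "web-1-address" -> host "web-1" with the output's value as its IP.
    hosts = {}
    for name, entry in sorted(outputs.items()):
        if name.endswith(suffix):
            hosts[name[:-len(suffix)]] = entry["value"]

    lines = [f"{host} ansible_host={ip}" for host, ip in hosts.items()]
    lines += ["", f"[{group}]"]
    lines += list(hosts)
    lines += [
        "",
        f"[{group}:vars]",
        f"ansible_private_key_file='{private_key_file}'",
        "ansible_python_interpreter=/usr/bin/python3",
    ]
    return "\n".join(lines) + "\n"


# Sample data shaped like `terraform output -json`; the addresses are
# documentation-range placeholders, not real servers.
sample = json.dumps({
    "web-1-address": {"sensitive": False, "type": "string", "value": "203.0.113.10"},
    "web-2-address": {"sensitive": False, "type": "string", "value": "203.0.113.11"},
})

print(render_inventory(sample, "/Users/your_username/.ssh/id_rsa"))
```

In practice the JSON would come from running `terraform output -json` in the project directory, and the result would be written to the `inventory` file.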

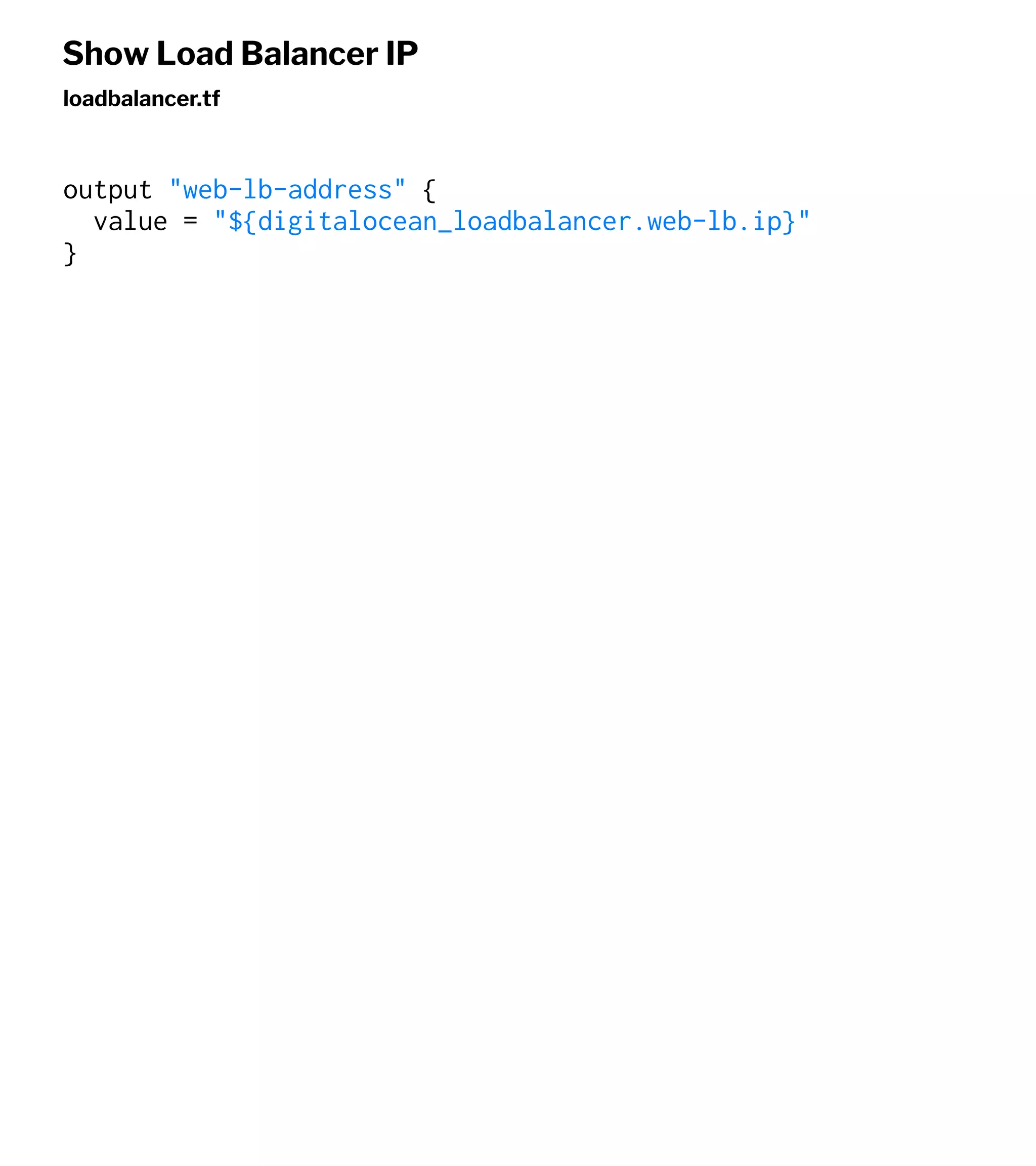

![We define the forwarding rule and a health check, and then

we specify the IDs of the Droplets we want to configure.

Add a DO Load Balancer with Terraform

touch loadbalancer.tf

resource "digitalocean_loadbalancer" "web-lb" {

name = "web-lb"

region = "nyc3"

forwarding_rule {

entry_port = 80

entry_protocol = "http"

target_port = 80

target_protocol = "http"

}

healthcheck {

port = 22

protocol = "tcp"

}

droplet_ids = ["${digitalocean_droplet.web-1.id}", "${digitalocean_droplet.web-2.id}"]

}](https://image.slidesharecdn.com/automate2018-180117015218/75/Automating-the-Cloud-with-Terraform-and-Ansible-72-2048.jpg)
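
A TCP health check like the one configured above amounts to asking whether a connection to the port can be opened. The Python sketch below illustrates that idea only; it is not how DigitalOcean implements its load balancer, and the `tcp_health_check` and `healthy_targets` helpers and the timeout value are assumptions.

```python
import socket


def tcp_health_check(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port opens within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, unreachable, or timed out: treat the target as unhealthy.
        return False


def healthy_targets(targets, port):
    """Keep only the targets that currently pass the TCP check."""
    return [host for host in targets if tcp_health_check(host, port)]


if __name__ == "__main__":
    # Demo against a throwaway local listener instead of real Droplets.
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    listener.listen(5)
    port = listener.getsockname()[1]
    print("listener up:", tcp_health_check("127.0.0.1", port))
    listener.close()
    print("listener closed:", tcp_health_check("127.0.0.1", port, timeout=0.5))
```

Note that a check on port 22 only confirms that SSH is reachable. It says nothing about whether nginx is answering on port 80, which is the port the forwarding rule targets.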