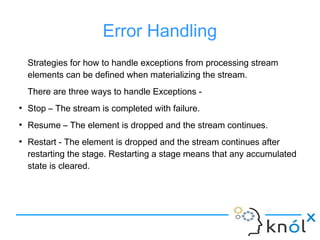

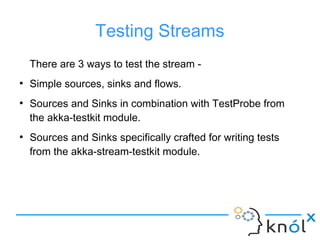

The document presents an overview of Akka Streams, describing it as a toolkit for stream processing that avoids blocking and applies back pressure. It outlines the key building blocks (sources, flows, and sinks) as well as the fan-in and fan-out junctions used to join and split streams. The document also covers error-handling strategies and testing methods for stream processing.
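As a rough illustration of how a source, a flow, and a sink fit together, here is a minimal sketch. It assumes Akka 2.6 or later, where an implicit `ActorSystem` provides the materializer; the object name `BasicPipeline` and the doubling flow are made up for this example and do not come from the slides.

```scala
import akka.actor.ActorSystem
import akka.stream.scaladsl.{Flow, Sink, Source}
import scala.concurrent.Await
import scala.concurrent.duration._

// Hypothetical example object, not part of the original slides.
object BasicPipeline {
  def run(): Seq[Int] = {
    // Assumption: Akka 2.6+, where the ActorSystem acts as the materializer.
    implicit val system: ActorSystem = ActorSystem("basic-pipeline")
    try {
      val source  = Source(1 to 5)        // emits 1, 2, 3, 4, 5
      val doubler = Flow[Int].map(_ * 2)  // transforms each element
      val sink    = Sink.seq[Int]         // collects elements into a Seq

      // Connect source -> flow -> sink and run the stream.
      val result = source.via(doubler).runWith(sink)
      Await.result(result, 5.seconds)
    } finally system.terminate()
  }
}
```

Blocking with `Await.result` is only there to keep the sketch short; in application code the resulting `Future` would normally be composed rather than awaited.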

![Fan-out Junctions](https://image.slidesharecdn.com/akka-streams1-160822100124/85/Akka-streams-9-320.jpg)

Fan-out operations give us the ability to split a stream into sub-streams.

● Broadcast[T] – (1 input, N outputs): ingests elements from one input and emits a copy of each element to every output.

● Balance[T] – (1 input, N outputs): ingests elements from one input and emits each element to the first available output port.
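The fan-out behaviour described above can be sketched with the `GraphDSL`. This is a minimal example under the assumption of Akka 2.6 or later (implicit `ActorSystem` as materializer); the object name `FanOutSketch` and the two collecting sinks are hypothetical and chosen only for illustration.

```scala
import akka.actor.ActorSystem
import akka.stream.ClosedShape
import akka.stream.scaladsl.{Broadcast, GraphDSL, RunnableGraph, Sink, Source}
import scala.concurrent.Await
import scala.concurrent.duration._

// Hypothetical example object, not part of the original slides.
object FanOutSketch {
  def run(): (Seq[Int], Seq[Int]) = {
    // Assumption: Akka 2.6+, where the ActorSystem acts as the materializer.
    implicit val system: ActorSystem = ActorSystem("fan-out")
    try {
      val left  = Sink.seq[Int]
      val right = Sink.seq[Int]

      val graph = RunnableGraph.fromGraph(
        GraphDSL.create(left, right)((_, _)) { implicit b => (l, r) =>
          import GraphDSL.Implicits._
          // One input, two outputs: every element is copied to both outputs.
          val bcast = b.add(Broadcast[Int](2))
          Source(1 to 3) ~> bcast
          bcast.out(0) ~> l.in
          bcast.out(1) ~> r.in
          ClosedShape
        })

      val (f1, f2) = graph.run()
      (Await.result(f1, 5.seconds), Await.result(f2, 5.seconds))
    } finally system.terminate()
  }
}
```

Replacing `Broadcast[Int](2)` with `Balance[Int](2)` would route each element to only one of the two sinks, whichever is ready first, so the split between the sinks is not deterministic.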

![Fan-in Junctions](https://image.slidesharecdn.com/akka-streams1-160822100124/85/Akka-streams-10-320.jpg)

Fan-in operations give us the ability to join multiple streams into a single output stream.

● Merge[In] – (N inputs, 1 output): picks elements randomly from its inputs and pushes them one by one to its output.

● MergePreferred[In]: similar to Merge, but if elements are available on the preferred port it picks from that port; otherwise it picks randomly from the others.

● Concat[A] – (2 inputs, 1 output): concatenates two streams, consuming the first one to completion and then the second one.
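To make the difference between `Merge` and `Concat` concrete, here is a small sketch using `Source.combine`, which takes a fan-in junction as its strategy. It assumes Akka 2.6 or later; the object name `FanInSketch` and the sample values are invented for this example.

```scala
import akka.actor.ActorSystem
import akka.stream.scaladsl.{Concat, Merge, Sink, Source}
import scala.concurrent.Await
import scala.concurrent.duration._

// Hypothetical example object, not part of the original slides.
object FanInSketch {
  def run(): (Seq[Int], Seq[Int]) = {
    // Assumption: Akka 2.6+, where the ActorSystem acts as the materializer.
    implicit val system: ActorSystem = ActorSystem("fan-in")
    try {
      val first  = Source(1 to 3)
      val second = Source(List(10, 20, 30))

      // Merge: elements from both inputs, in no guaranteed order.
      val merged =
        Source.combine(first, second)(Merge[Int](_)).runWith(Sink.seq[Int])

      // Concat: all of `first`, then all of `second`.
      val concatenated =
        Source.combine(first, second)(Concat[Int](_)).runWith(Sink.seq[Int])

      (Await.result(merged, 5.seconds), Await.result(concatenated, 5.seconds))
    } finally system.terminate()
  }
}
```

The merged result always contains the same six elements, but their interleaving may differ from run to run, whereas the concatenated result keeps the order of the two inputs.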
