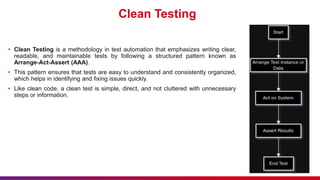

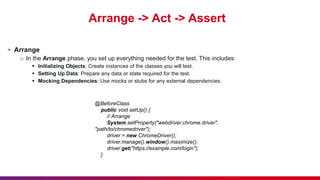

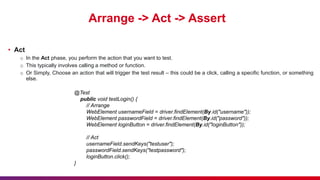

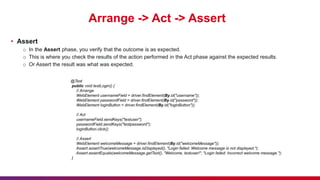

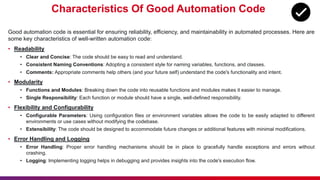

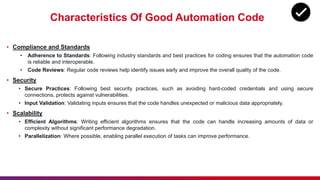

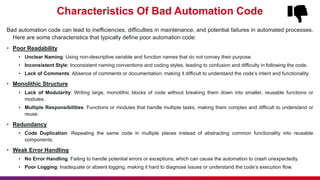

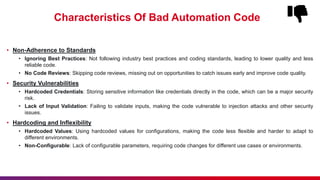

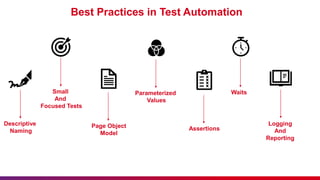

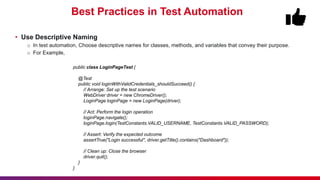

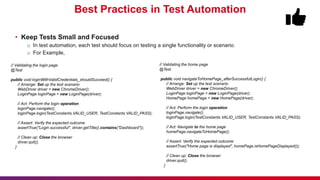

The document discusses the importance of clean code in test automation, emphasizing the arrange-act-assert (AAA) pattern for writing understandable and maintainable tests. It outlines characteristics of good and bad test automation code and introduces clean code principles such as single responsibility and dependency injection, along with best practices such as descriptive naming, test parameterization, and proper logging.
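As a rough illustration of how arrange-act-assert and dependency injection fit together, here is a minimal sketch in plain Java, with no test framework so that it runs without extra dependencies. The names `AaaSketch`, `CredentialStore`, `LoginService`, and the sample user `alice` are hypothetical and do not come from the slides.

```java
import java.util.Map;

public class AaaSketch {

    /** Hypothetical dependency: looks up the stored password for a user. */
    interface CredentialStore {
        String passwordFor(String username);
    }

    /** Hypothetical class under test; its dependency is injected via the constructor. */
    static final class LoginService {
        private final CredentialStore store;

        LoginService(CredentialStore store) {
            this.store = store;
        }

        boolean login(String username, String password) {
            String expected = store.passwordFor(username);
            return expected != null && expected.equals(password);
        }
    }

    /** Returns true when the check passes. */
    static boolean loginWithValidCredentials_shouldSucceed() {
        // Arrange: inject an in-memory fake instead of a real credential store
        Map<String, String> users = Map.of("alice", "s3cret");
        LoginService service = new LoginService(users::get);

        // Act: perform the single operation under test
        boolean loggedIn = service.login("alice", "s3cret");

        // Assert: the login should have succeeded
        return loggedIn;
    }

    /** Returns true when the check passes. */
    static boolean loginWithWrongPassword_shouldFail() {
        // Arrange
        Map<String, String> users = Map.of("alice", "s3cret");
        LoginService service = new LoginService(users::get);

        // Act
        boolean loggedIn = service.login("alice", "wrong");

        // Assert: the login should have been rejected
        return !loggedIn;
    }

    public static void main(String[] args) {
        System.out.println("valid credentials check passed: "
                + loginWithValidCredentials_shouldSucceed());
        System.out.println("wrong password check passed: "
                + loginWithWrongPassword_shouldFail());
    }
}
```

Because `LoginService` receives its `CredentialStore` through the constructor, each test can arrange its own fake data and stay focused on one behavior, which is also what the descriptive method names are meant to signal.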

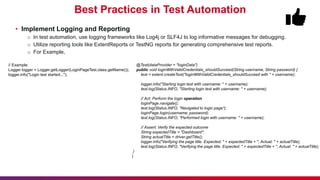

![Best Practices in Test Automation](https://image.slidesharecdn.com/cleancodeintestautomationdifferentiatingbetweenthegoodandthebad-240522060333-476a6b57/85/Clean-Code-in-Test-Automation-Differentiating-Between-the-Good-and-the-Bad-24-320.jpg)

- Parameterize Tests
  - In test automation, use parameterization to run the same test with different data sets.
  - For example, the two TestNG tests below take their credentials from the `loginData` data provider:

```java
import org.testng.annotations.DataProvider;
import org.testng.annotations.Test;

import static org.testng.Assert.assertEquals;
import static org.testng.Assert.assertTrue;

// These methods belong inside a test class; loginPage, homePage, and driver
// are assumed to be fields initialized in a setup method.

@Test(dataProvider = "loginData")
public void loginWithValidCredentials_shouldSucceed(String username, String password) {
    // Arrange: open the login page
    loginPage.navigate();

    // Act: perform the login operation
    loginPage.login(username, password);

    // Assert: verify the expected outcome (TestNG order: actual, expected, message)
    assertEquals(driver.getTitle(), "Dashboard", "The page title should be 'Dashboard'");
}

@Test(dataProvider = "loginData")
public void navigateToHomePage_afterSuccessfulLogin(String username, String password) {
    // Arrange: log in with the supplied credentials
    loginPage.navigate();
    loginPage.login(username, password);

    // Act: navigate to the home page
    homePage.navigateToHomePage();

    // Assert: verify the expected outcome
    assertTrue(homePage.isHomePageDisplayed(), "The home page should be displayed after navigation");
}

// Data provider: each row supplies one (username, password) pair per test run
@DataProvider(name = "loginData")
public Object[][] loginData() {
    return new Object[][] {
        {"username1", "password1"},
        {"username2", "password2"}
    };
}
```