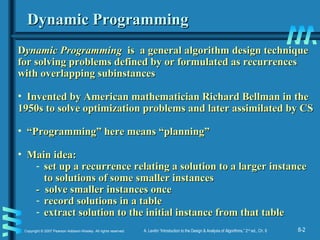

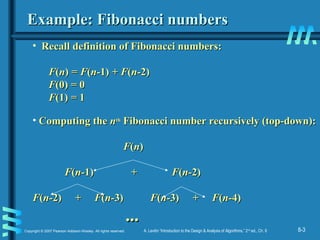

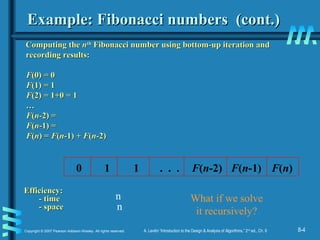

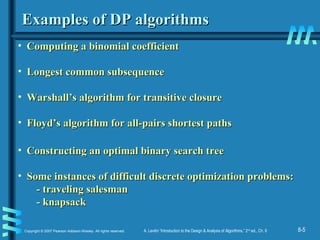

Dynamic programming is a technique for solving problems with overlapping subproblems and optimal substructure. It works by breaking problems down into smaller subproblems and storing the results in a table to avoid recomputing them. Examples where it can be applied include the knapsack problem, longest common subsequence, and computing Fibonacci numbers efficiently through bottom-up iteration rather than top-down recursion. The technique involves setting up recurrences relating larger instances to smaller ones, solving the smallest instances, and building up the full solution using the stored results.
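The Fibonacci case mentioned above can be sketched in a few lines. This is a minimal illustration, not code from the slides: the function name `fib` and the convention F(0) = 0, F(1) = 1 are assumptions made here. It keeps only the last two stored results instead of a full table.

```python
def fib(n: int) -> int:
    """Return the n-th Fibonacci number, computed bottom-up.

    Assumed convention (not stated in the slides): F(0) = 0, F(1) = 1.
    """
    if n < 0:
        raise ValueError("n must be non-negative")
    prev, curr = 0, 1  # F(0) and F(1), the smallest instances
    for _ in range(n):
        # Build the next value from the two stored results.
        prev, curr = curr, prev + curr
    return prev


print(fib(10))  # 55
```

Each value is computed once, so the loop runs in linear time, whereas naive top-down recursion recomputes the same subproblems many times.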

![8-8Copyright © 2007 Pearson Addison-Wesley. All rights reserved. A. Levitin “Introduction to the Design & Analysis of Algorithms,” 2nd

ed., Ch. 8

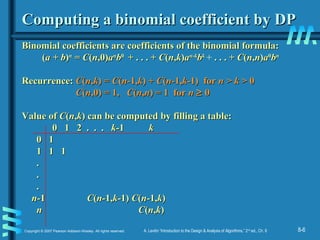

Knapsack Problem by DPKnapsack Problem by DP

GivenGiven nn items ofitems of

integer weights:integer weights: ww11 ww22 … w… wnn

values:values: vv11 vv22 … v… vnn

a knapsack of integer capacitya knapsack of integer capacity WW

find most valuable subset of the items that fit into the knapsackfind most valuable subset of the items that fit into the knapsack

Consider instance defined by firstConsider instance defined by first ii items and capacityitems and capacity jj ((jj ≤≤ WW))..

LetLet VV[[ii,,jj] be optimal value of such an instance. Then] be optimal value of such an instance. Then

max {max {VV[[ii-1,-1,jj],], vvii ++ VV[[ii-1,-1,j-j- wwii]} if]} if j-j- wwii ≥≥ 00

VV[[ii,,jj] =] =

VV[[ii-1,-1,jj] if] if j-j- wwii < 0< 0

Initial conditions:Initial conditions: VV[0,[0,jj] = 0 and] = 0 and VV[[ii,0] = 0,0] = 0

{](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-8-320.jpg)

![8-10Copyright © 2007 Pearson Addison-Wesley. All rights reserved. A. Levitin “Introduction to the Design & Analysis of Algorithms,” 2nd

ed., Ch. 8

Knapsack Problem by DP (pseudocode)Knapsack Problem by DP (pseudocode)

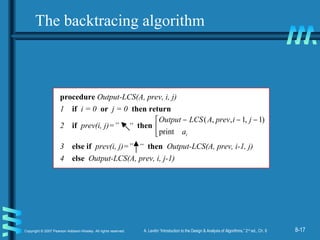

Algorithm DPKnapsack(Algorithm DPKnapsack(ww[[11....nn],], vv[[1..n1..n],], WW))

varvar VV[[0..n,0..W0..n,0..W]], P, P[[1..n,1..W1..n,1..W]]:: intint

forfor j := 0j := 0 toto WW dodo

VV[[0,j0,j] :=] := 00

forfor i := 0i := 0 toto nn dodo

VV[[i,0i,0]] := 0:= 0

forfor i := 1i := 1 toto nn dodo

forfor j := 1j := 1 toto WW dodo

ifif ww[[ii]] ≤≤ jj andand vv[[ii]] + V+ V[[i-1,j-wi-1,j-w[[ii]]]] > V> V[[i-1,ji-1,j] then] then

VV[[i,ji,j]] := v:= v[[ii]] + V+ V[[i-1,j-wi-1,j-w[[ii]]]]; P; P[[i,ji,j]] := j-w:= j-w[[ii]]

elseelse

VV[[i,ji,j]] := V:= V[[i-1,ji-1,j]]; P; P[[i,ji,j]] := j:= j

returnreturn VV[[n,Wn,W] and the optimal subset by backtracing] and the optimal subset by backtracing

Running time and space:

O(nW).](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-10-320.jpg)
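The pseudocode above can be turned into a short runnable sketch. The function name `dp_knapsack`, the 0-based input lists, and the sample instance are assumptions made for illustration. Instead of the slide's separate table `P`, this version backtraces by comparing `V[i][j]` with `V[i-1][j]`, which recovers the same optimal subset.

```python
def dp_knapsack(weights, values, capacity):
    """Return (best value, 1-based indices of chosen items).

    weights and values are 0-based lists; item i of the slide's notation
    is weights[i - 1], values[i - 1].
    """
    n = len(weights)
    # V[i][j] = best value using the first i items with capacity j.
    # Row 0 and column 0 stay 0 (the initial conditions).
    V = [[0] * (capacity + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        w, v = weights[i - 1], values[i - 1]
        for j in range(1, capacity + 1):
            V[i][j] = V[i - 1][j]  # skip item i
            if w <= j and v + V[i - 1][j - w] > V[i][j]:
                V[i][j] = v + V[i - 1][j - w]  # take item i

    # Backtrace: item i was taken exactly when it changed the table entry.
    chosen = []
    j = capacity
    for i in range(n, 0, -1):
        if V[i][j] != V[i - 1][j]:
            chosen.append(i)
            j -= weights[i - 1]
    chosen.reverse()
    return V[n][capacity], chosen


# Hypothetical instance: weights 2, 1, 3, 2; values 12, 10, 20, 15; W = 5.
print(dp_knapsack([2, 1, 3, 2], [12, 10, 20, 15], 5))  # (37, [1, 2, 4])
```

The table has (n + 1)(W + 1) entries and each is filled in constant time, which matches the O(nW) bound stated above.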

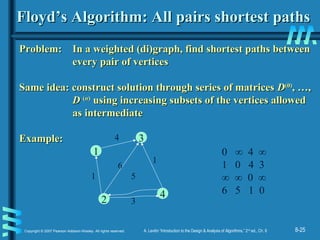

![8-20Copyright © 2007 Pearson Addison-Wesley. All rights reserved. A. Levitin “Introduction to the Design & Analysis of Algorithms,” 2nd

ed., Ch. 8

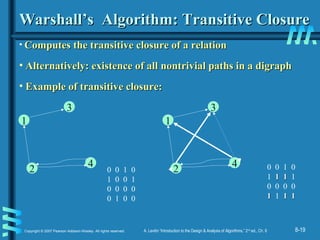

Warshall’s AlgorithmWarshall’s Algorithm

Constructs transitive closureConstructs transitive closure TT as the last matrix in the sequenceas the last matrix in the sequence

ofof nn-by--by-nn matricesmatrices RR(0)(0)

, … ,, … , RR((kk))

, … ,, … , RR((nn))

wherewhere

RR((kk))

[[ii,,jj] = 1 iff there is nontrivial path from] = 1 iff there is nontrivial path from ii toto jj with only thewith only the

firstfirst kk vertices allowed as intermediatevertices allowed as intermediate

Note thatNote that RR(0)(0)

== AA (adjacency matrix)(adjacency matrix),, RR((nn))

= T= T (transitive closure)(transitive closure)

3

42

1

3

42

1

3

42

1

3

42

1

R(0)

0 0 1 0

1 0 0 1

0 0 0 0

0 1 0 0

R(1)

0 0 1 0

1 0 11 1

0 0 0 0

0 1 0 0

R(2)

0 0 1 0

1 0 1 1

0 0 0 0

11 1 1 11 1

R(3)

0 0 1 0

1 0 1 1

0 0 0 0

1 1 1 1

R(4)

0 0 1 0

1 11 1 1

0 0 0 0

1 1 1 1

3

42

1](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-20-320.jpg)

![Slide 8-21: Warshall’s Algorithm (recurrence)](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-21-320.jpg)

Warshall’s Algorithm (recurrence)

On the $k$-th iteration, the algorithm determines for every pair of vertices $i, j$ if a path exists from $i$ to $j$ with just vertices $1, \dots, k$ allowed as intermediate:

$$
R^{(k)}[i,j] =
\left\{
\begin{array}{ll}
R^{(k-1)}[i,j] & \text{(path using just } 1, \dots, k-1\text{)} \\
\quad\text{or} & \\
R^{(k-1)}[i,k] \text{ and } R^{(k-1)}[k,j] & \text{(path from } i \text{ to } k \text{ and from } k \text{ to } j \text{ using just } 1, \dots, k-1\text{)}
\end{array}
\right.
$$

[Figure: path from $i$ to $j$ passing through vertex $k$]

Initial condition?

![8-22Copyright © 2007 Pearson Addison-Wesley. All rights reserved. A. Levitin “Introduction to the Design & Analysis of Algorithms,” 2nd

ed., Ch. 8

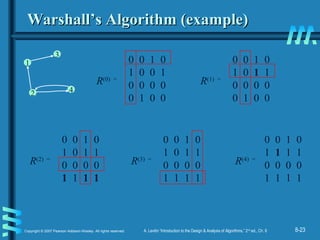

Warshall’s Algorithm (matrix generation)Warshall’s Algorithm (matrix generation)

Recurrence relating elementsRecurrence relating elements RR((kk))

to elements ofto elements of RR((kk-1)-1)

is:is:

RR((kk))

[[i,ji,j] =] = RR((kk-1)-1)

[[i,ji,j] or] or ((RR((kk-1)-1)

[[i,ki,k] and] and RR((kk-1)-1)

[[k,jk,j])])

It implies the following rules for generatingIt implies the following rules for generating RR((kk))

fromfrom RR((kk-1)-1)

::

Rule 1Rule 1 If an element in rowIf an element in row ii and columnand column jj is 1 inis 1 in RR((k-k-1)1)

,,

it remains 1 init remains 1 in RR((kk))

Rule 2Rule 2 If an element in rowIf an element in row ii and columnand column jj is 0 inis 0 in RR((k-k-1)1)

,,

it has to be changed to 1 init has to be changed to 1 in RR((kk))

if and only ifif and only if

the element in its rowthe element in its row ii and columnand column kk and the elementand the element

in its columnin its column jj and rowand row kk are both 1’s inare both 1’s in RR((k-k-1)1)](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-22-320.jpg)
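The two rules above translate directly into three nested loops. The following is a minimal sketch; the function name `warshall` and the 0-based list-of-lists representation are assumptions made here. It updates a single matrix in place, which is safe because row $k$ and column $k$ do not change during iteration $k$.

```python
def warshall(adjacency):
    """Return the transitive closure of a digraph given as a 0/1 matrix."""
    n = len(adjacency)
    R = [row[:] for row in adjacency]  # R(0) = A, copied so the input is kept
    for k in range(n):  # allow vertex k as an intermediate
        for i in range(n):
            if R[i][k]:  # Rule 2 needs R[i][k] = 1 ...
                for j in range(n):
                    if R[k][j]:  # ... and R[k][j] = 1
                        R[i][j] = 1
    return R


# Adjacency matrix R(0) from the example above (vertices 1..4 become 0..3).
A = [
    [0, 0, 1, 0],
    [1, 0, 0, 1],
    [0, 0, 0, 0],
    [0, 1, 0, 0],
]
for row in warshall(A):
    print(row)
# [0, 0, 1, 0]
# [1, 1, 1, 1]
# [0, 0, 0, 0]
# [1, 1, 1, 1]
```

The printed matrix agrees with $R^{(4)}$ in the example. Rule 1 needs no code, since entries that are already 1 are never reset.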

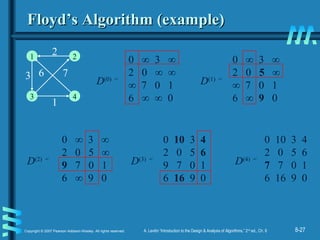

![Slide 8-26: Floyd’s Algorithm (matrix generation)](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-26-320.jpg)

Floyd’s Algorithm (matrix generation)

On the $k$-th iteration, the algorithm determines shortest paths between every pair of vertices $i, j$ that use only vertices among $1, \dots, k$ as intermediate:

$$
D^{(k)}[i,j] = \min\{\, D^{(k-1)}[i,j],\; D^{(k-1)}[i,k] + D^{(k-1)}[k,j] \,\}
$$

[Figure: vertices $i$, $j$, $k$ with the direct path of length $D^{(k-1)}[i,j]$ and the path through $k$ of lengths $D^{(k-1)}[i,k]$ and $D^{(k-1)}[k,j]$]

Initial condition?

![8-28Copyright © 2007 Pearson Addison-Wesley. All rights reserved. A. Levitin “Introduction to the Design & Analysis of Algorithms,” 2nd

ed., Ch. 8

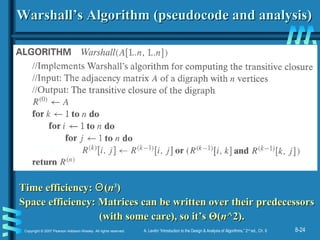

Floyd’s Algorithm (pseudocode and analysis)Floyd’s Algorithm (pseudocode and analysis)

Time efficiency:Time efficiency: ΘΘ((nn33

))

Space efficiency: Matrices can be written over their predecessorsSpace efficiency: Matrices can be written over their predecessors

Note: Works on graphs with negative edges but without negative cycles.Note: Works on graphs with negative edges but without negative cycles.

Shortest paths themselves can be found, too.Shortest paths themselves can be found, too. How?How?

If D[i,k] + D[k,j] < D[i,j] then P[i,j] k

Since the superscripts k or k-1 make

no difference to D[i,k] and D[k,j].](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-28-320.jpg)
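The recurrence and the path-recording rule above can be sketched as follows. The function names `floyd` and `intermediate_vertices`, the use of `math.inf` for missing edges, and the sample weight matrix are assumptions made for illustration, not taken from the slides.

```python
import math


def floyd(weights):
    """All-pairs shortest paths on a weight matrix (math.inf = no edge).

    Returns (D, P): D[i][j] is the shortest distance, and P[i][j] is the
    last intermediate vertex that improved D[i][j] (None if none did).
    """
    n = len(weights)
    D = [row[:] for row in weights]  # D(0) is the weight matrix
    P = [[None] * n for _ in range(n)]
    for k in range(n):
        for i in range(n):
            for j in range(n):
                # Overwriting D in place is safe: D[i][k] and D[k][j]
                # do not change during iteration k.
                if D[i][k] + D[k][j] < D[i][j]:
                    D[i][j] = D[i][k] + D[k][j]
                    P[i][j] = k
    return D, P


def intermediate_vertices(P, i, j):
    """Vertices strictly between i and j on a shortest path, in order."""
    k = P[i][j]
    if k is None:
        return []
    return intermediate_vertices(P, i, k) + [k] + intermediate_vertices(P, k, j)


INF = math.inf
# Hypothetical 4-vertex digraph with vertices 0..3.
W = [
    [0, INF, 3, INF],
    [2, 0, INF, INF],
    [INF, 7, 0, 1],
    [6, INF, INF, 0],
]
D, P = floyd(W)
print(D[3][1])                         # 16
print(intermediate_vertices(P, 3, 1))  # [0, 2]
```

Here the shortest path from vertex 3 to vertex 1 is 3 → 0 → 2 → 1 with length 6 + 3 + 7 = 16. The sketch assumes no negative cycles, as the note above requires.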

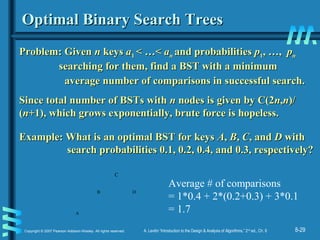

![Slide 8-30: DP for Optimal BST Problem](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-30-320.jpg)

DP for Optimal BST Problem

Let $C[i,j]$ be the minimum average number of comparisons made in $T[i,j]$, the optimal BST for keys $a_i < \dots < a_j$, where $1 \le i \le j \le n$.

Consider the optimal BST among all BSTs with some $a_k$ ($i \le k \le j$) as their root; $T[i,j]$ is the best among them.

[Figure: BST with root $a_k$, whose left subtree is the optimal BST for $a_i, \dots, a_{k-1}$ and whose right subtree is the optimal BST for $a_{k+1}, \dots, a_j$]

$$
C[i,j] = \min_{i \le k \le j} \left\{ p_k \cdot 1
+ \sum_{s=i}^{k-1} p_s \left( \text{level of } a_s \text{ in } T[i,k-1] + 1 \right)
+ \sum_{s=k+1}^{j} p_s \left( \text{level of } a_s \text{ in } T[k+1,j] + 1 \right) \right\}
$$

![8-31Copyright © 2007 Pearson Addison-Wesley. All rights reserved. A. Levitin “Introduction to the Design & Analysis of Algorithms,” 2nd

ed., Ch. 8

goal0

0

C[i,j]

0

1

n+1

0 1 n

p 1

p2

np

i

j

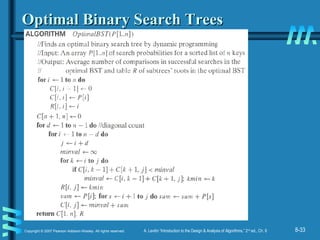

DP for Optimal BST Problem (cont.)DP for Optimal BST Problem (cont.)

After simplifications, we obtain the recurrence forAfter simplifications, we obtain the recurrence for CC[[i,ji,j]:]:

CC[[i,ji,j] =] = min {min {CC[[ii,,kk-1] +-1] + CC[[kk+1,+1,jj]} + ∑]} + ∑ ppss forfor 11 ≤≤ ii ≤≤ jj ≤≤ nn

CC[[i,ii,i] =] = ppii for 1for 1 ≤≤ ii ≤≤ jj ≤≤ nn

ss == ii

jj

ii ≤≤ kk ≤≤ jj](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-31-320.jpg)

![Example: optimal BST for keys A, B, C, D](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-32-320.jpg)

Example:

| key | A | B | C | D |
|---|---|---|---|---|
| probability | 0.1 | 0.2 | 0.4 | 0.3 |

The tables below are filled diagonal by diagonal: the left one is filled using the recurrence

$$
C[i,j] = \min_{i \le k \le j} \{\, C[i,k-1] + C[k+1,j] \,\} + \sum_{s=i}^{j} p_s, \qquad C[i,i] = p_i;
$$

the right one, for trees’ roots, records $k$’s values giving the minima.

Main table $C[i,j]$ (rows $i$, columns $j$):

| i \ j | 0 | 1 | 2 | 3 | 4 |
|---|---|---|---|---|---|
| 1 | 0 | 0.1 | 0.4 | 1.1 | 1.7 |
| 2 |  | 0 | 0.2 | 0.8 | 1.4 |
| 3 |  |  | 0 | 0.4 | 1.0 |
| 4 |  |  |  | 0 | 0.3 |
| 5 |  |  |  |  | 0 |

Root table (rows $i$, columns $j$):

| i \ j | 0 | 1 | 2 | 3 | 4 |
|---|---|---|---|---|---|
| 1 |  | 1 | 2 | 3 | 3 |
| 2 |  |  | 2 | 3 | 3 |
| 3 |  |  |  | 3 | 3 |
| 4 |  |  |  |  | 4 |
| 5 |  |  |  |  |  |

Optimal BST: C is the root; its left child is B, which has A as its left child; its right child is D.
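The diagonal-by-diagonal fill described above can be sketched as follows. The function name `optimal_bst` and the table layout (1-based rows and columns stored in slightly oversized lists) are assumptions made for illustration. This is the straightforward cubic-time version, with no use of root-table monotonicity.

```python
def optimal_bst(p):
    """Fill the cost table C and root table R for key probabilities p.

    p[0] is the probability of key 1, p[1] of key 2, and so on.
    C[i][j] and R[i][j] use the slides' 1-based indices; C[i][i-1] = 0.
    """
    n = len(p)
    # Rows 0..n+1 and columns 0..n, so C[k + 1][j] and C[i][k - 1]
    # are always valid indices.
    C = [[0.0] * (n + 1) for _ in range(n + 2)]
    R = [[0] * (n + 1) for _ in range(n + 2)]
    for i in range(1, n + 1):
        C[i][i] = p[i - 1]
        R[i][i] = i
    for d in range(1, n):  # d = j - i, one diagonal at a time
        for i in range(1, n - d + 1):
            j = i + d
            # Try every key k in i..j as the root; keep the cheapest split.
            best, best_k = min(
                (C[i][k - 1] + C[k + 1][j], k) for k in range(i, j + 1)
            )
            C[i][j] = best + sum(p[i - 1:j])  # add p_i + ... + p_j
            R[i][j] = best_k
    return C, R


# Keys A, B, C, D with the probabilities from the example above.
C, R = optimal_bst([0.1, 0.2, 0.4, 0.3])
print(round(C[1][4], 2), R[1][4])  # 1.7 3
```

For the example probabilities this reproduces the goal entry 1.7 and root 3 (key C). The result is rounded before printing because the table holds floating-point sums.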

![Slide 8-34: Analysis DP for Optimal BST Problem](https://image.slidesharecdn.com/5-150507111633-lva1-app6891/85/5-3-dynamic-programming-34-320.jpg)

Analysis DP for Optimal BST Problem

Time efficiency: $\Theta(n^3)$, but can be reduced to $\Theta(n^2)$ by taking advantage of monotonicity of entries in the root table, i.e., $R[i,j]$ is always in the range between $R[i,j-1]$ and $R[i+1,j]$

Space efficiency: $\Theta(n^2)$

Method can be expanded to include unsuccessful searches