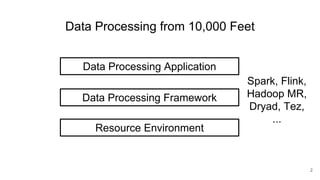

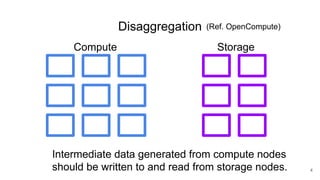

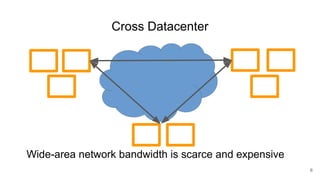

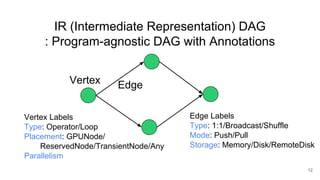

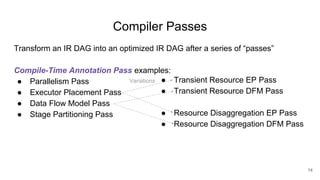

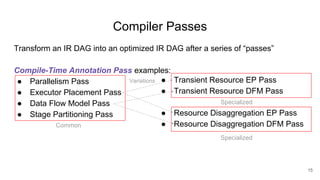

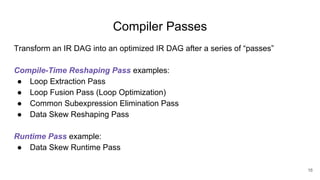

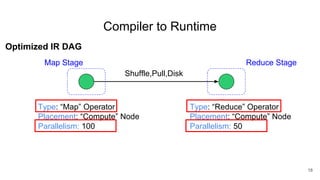

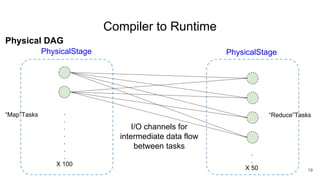

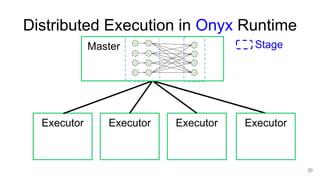

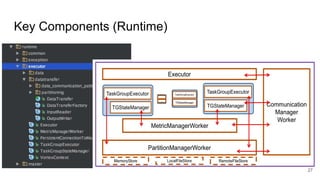

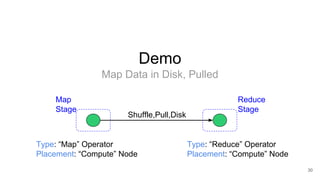

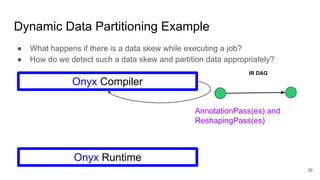

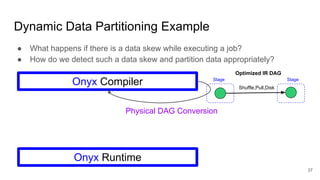

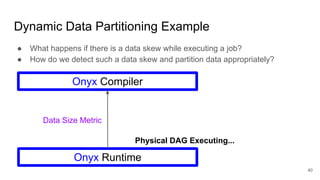

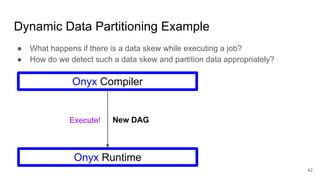

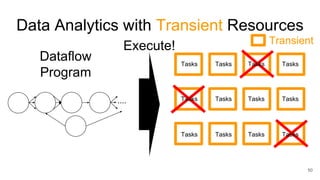

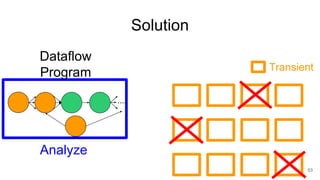

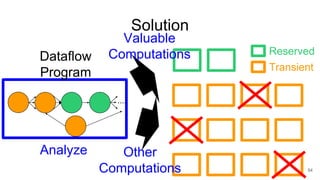

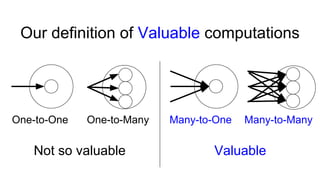

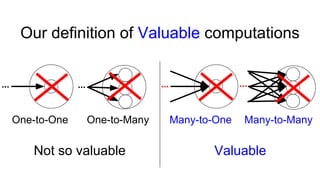

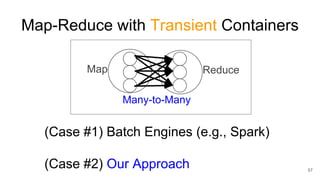

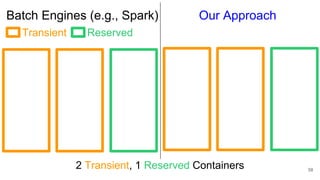

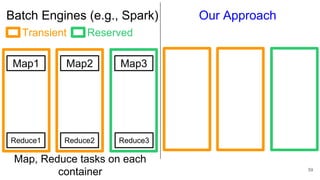

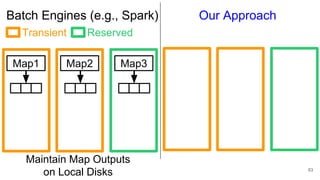

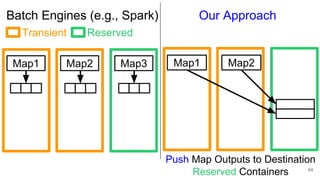

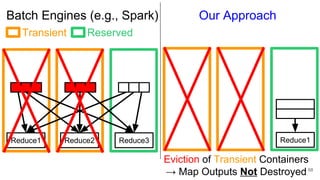

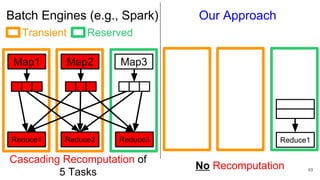

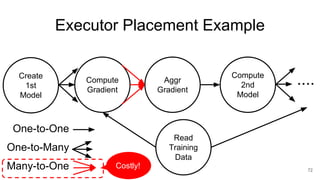

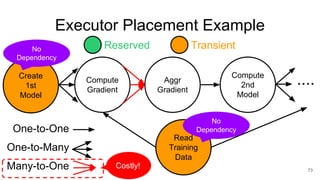

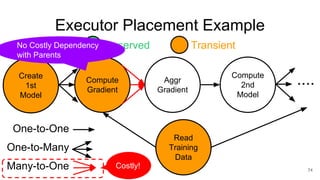

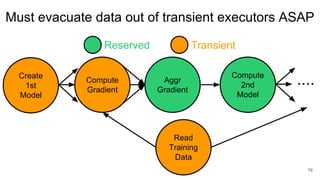

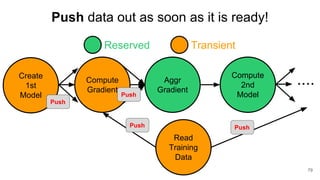

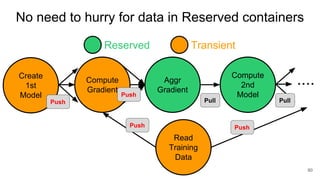

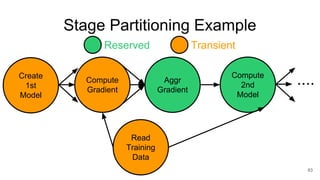

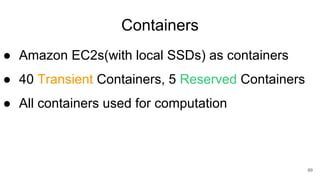

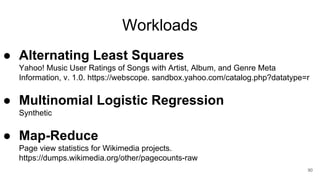

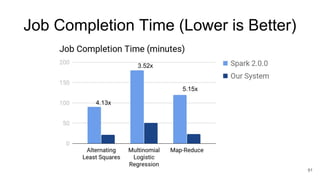

The document describes Onyx, a flexible and extensible data processing system. It discusses the limitations of existing frameworks in newer resource environments, such as disaggregated resources and transient resources. The Onyx architecture consists of a compiler, which applies a series of passes to transform a dataflow program into an optimized physical execution plan, and a runtime, which executes that plan across cluster resources. The document walks through compiling and running MapReduce and ALS jobs, and shows how runtime optimization handles dynamic data skew.
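
The pass-based compilation described above can be pictured with a small sketch. It is not Onyx's actual API: the names `Vertex`, `Dag`, `placement_pass`, `parallelism_pass`, and `compile_plan`, as well as the property keys and values, are hypothetical and chosen only for illustration. The idea shown is that each pass takes a dataflow DAG, annotates it with execution properties, and hands it to the next pass.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Vertex:
    name: str
    # Execution properties that passes annotate (hypothetical keys and values).
    properties: Dict[str, str] = field(default_factory=dict)


@dataclass
class Dag:
    vertices: List[Vertex]
    edges: List[Tuple[str, str]]  # (source vertex name, destination vertex name)


# A pass is simply a function from one DAG to another.
Pass = Callable[[Dag], Dag]


def placement_pass(dag: Dag) -> Dag:
    """Hypothetical policy: vertices with no incoming edge go to transient
    resources; all other vertices go to reserved resources."""
    has_incoming = {dst for _, dst in dag.edges}
    for v in dag.vertices:
        v.properties["placement"] = (
            "reserved" if v.name in has_incoming else "transient"
        )
    return dag


def parallelism_pass(dag: Dag) -> Dag:
    """Hypothetical pass: give every vertex a default parallelism if unset."""
    for v in dag.vertices:
        v.properties.setdefault("parallelism", "4")
    return dag


def compile_plan(dag: Dag, passes: List[Pass]) -> Dag:
    """Apply the passes in order; the annotated DAG stands in for the plan."""
    for p in passes:
        dag = p(dag)
    return dag


def build_mapreduce_plan() -> Dag:
    """Build a two-vertex MapReduce-style DAG and run both passes over it."""
    dag = Dag(
        vertices=[Vertex("map"), Vertex("reduce")],
        edges=[("map", "reduce")],
    )
    return compile_plan(dag, [placement_pass, parallelism_pass])
```

Keeping each pass as an independent function means that a new resource environment can, under these assumptions, be supported by adding or swapping a pass rather than changing the runtime.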