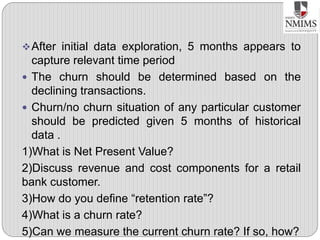

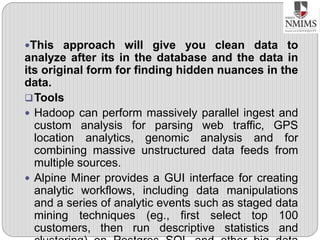

This document outlines the phases of the data analytics lifecycle, with a focus on Phase 1: Discovery. The Discovery phase involves understanding the business problem, assessing the available resources, and formulating initial hypotheses to test. Key activities include interviewing stakeholders, learning the domain, assessing the available data and tools, and framing both the business problem and the analytics problem. The goal is to gather enough information to draft an analytic plan and scope the project before moving on to the next phase, data preparation.