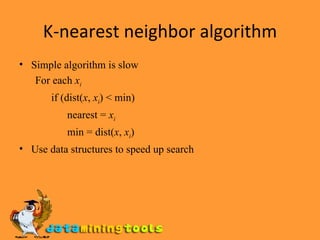

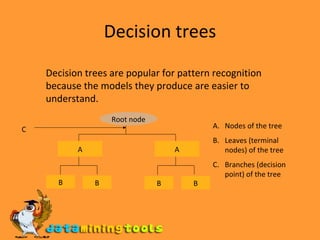

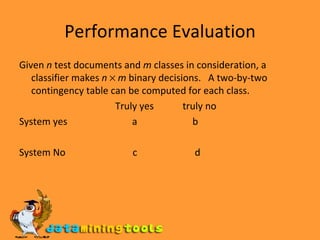

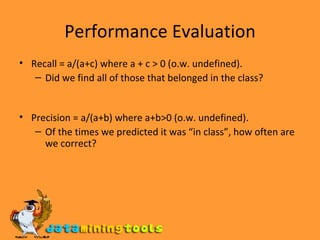

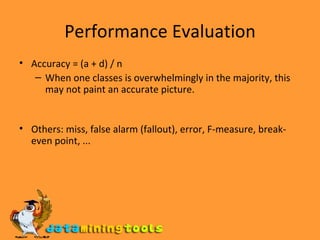

This document surveys techniques for document classification in text mining, including k-nearest neighbor algorithms, decision trees, and naive Bayes classifiers. For each technique it explains how the method works and what its advantages and drawbacks are. It also covers how to evaluate classifier performance and gives example applications of document classification.
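As a rough illustration of one of the techniques named above, here is a minimal sketch of a multinomial naive Bayes text classifier with a simple accuracy check. It is not taken from the slides: the function names (`train_naive_bayes`, `classify`), the whitespace tokenization, the add-one smoothing, and the toy spam/ham data are all assumptions made for the example.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(docs):
    """Count documents per label and words per label from (text, label) pairs."""
    label_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for text, label in docs:
        label_counts[label] += 1
        for word in text.lower().split():
            word_counts[label][word] += 1
            vocab.add(word)
    return label_counts, word_counts, vocab


def classify(model, text):
    """Return the label with the highest log-posterior score for the text."""
    label_counts, word_counts, vocab = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, float("-inf")
    for label, n_docs in label_counts.items():
        # Log prior: fraction of training documents carrying this label.
        score = math.log(n_docs / total_docs)
        total_words = sum(word_counts[label].values())
        for word in text.lower().split():
            if word not in vocab:
                continue  # skip words never seen during training
            # Log likelihood with add-one (Laplace) smoothing.
            count = word_counts[label][word]
            score += math.log((count + 1) / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Toy data invented for illustration only.
train = [
    ("cheap pills buy now", "spam"),
    ("win money now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("project status report attached", "ham"),
]
test = [("buy cheap money", "spam"), ("monday project meeting", "ham")]

model = train_naive_bayes(train)
predictions = [classify(model, text) for text, _ in test]

# Accuracy: share of held-out documents whose predicted label is correct.
accuracy = sum(p == y for p, (_, y) in zip(predictions, test)) / len(test)
print(predictions, accuracy)
```

Accuracy on two hand-picked documents says nothing about real performance. It only shows the shape of the evaluation step: predict on documents held out from training and compare the predictions against the known labels.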