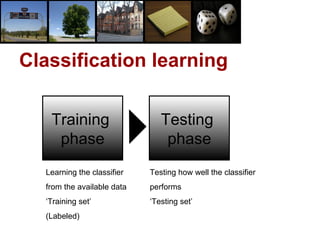

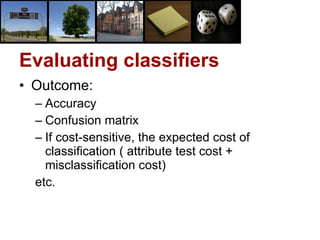

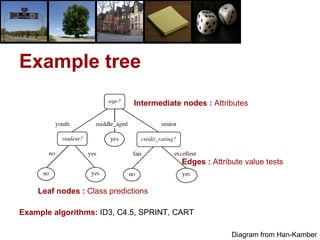

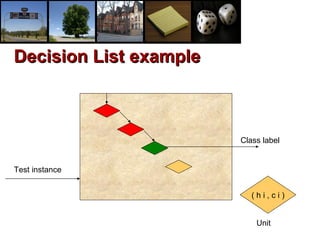

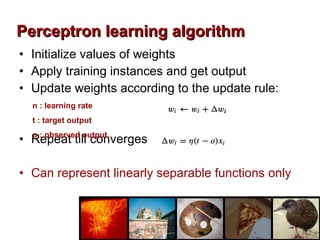

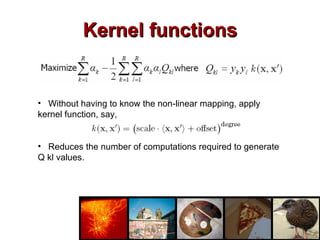

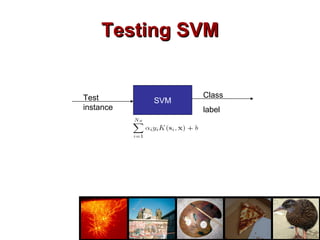

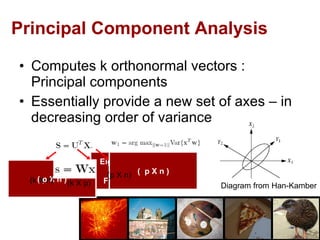

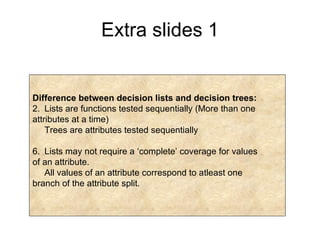

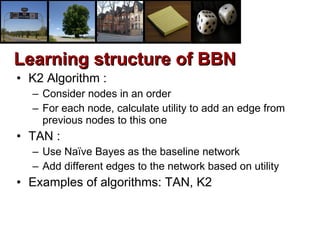

The document provides an overview of various machine learning classification algorithms including decision trees, lazy learners like K-nearest neighbors, decision lists, naive Bayes, artificial neural networks, and support vector machines. It also discusses evaluating and combining classifiers, as well as preprocessing techniques like feature selection and dimensionality reduction.

![“ Classifiers” R & D project by Aditya M Joshi [email_address] IIT Bombay Under the guidance of Prof. Pushpak Bhattacharyya [email_address] IIT Bombay](https://image.slidesharecdn.com/ppt2931/85/ppt-2-320.jpg)