The document provides information about computer graphics concepts including:

1. Summarizing questions and answers about 3D triangles, rotation matrices, vector operations, splines, and computer graphics techniques like environment mapping and anti-aliasing.

2. Explaining modifications made to the active edge list algorithm to enable scan conversion of different triangle types like smoothly shaded, textured, and environment mapped triangles.

3. Deriving the 4x4 projection matrix that maps a 3D object point to its shadow point on a plane, to create planar shadows.

![Question

• Consider the animation of a spherical particle of radius r moving with constant velocity v towards a wall at the z = 0 plane. Given an initial position p0 at t = 0 that is farther from the wall than r, the position of the particle center at any later time t prior to the collision is given by: p(t) = p0 + vt

• (a) At what time will the particle contact the wall?

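• The answer is: tb = (r − p0z) / vz, where p0z and vz are the z components of p0 and v. Contact occurs when the center reaches height z = r, i.e., when p0z + vz t = r (this takes the particle to start on the +z side of the wall).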

• (b) Let tb (“time of bounce”) be the solution to Part 3a. Since this is an animation, you only render frames at discrete multiples of the frame time Δt (i.e., t = 0, Δt, 2Δt, 3Δt, ...). If tb is not a multiple of Δt (and in general, it isn’t), the animated particle can penetrate the wall from the time step before tb to the one after it.

• Give (pseudo)code for computing p[] in this:

• // given:

• int n; // the number of frames to render (a positive int)

• float dt; // time step

• float v[3]; // the initial velocity of the particle

• float p0[3]; // the initial position of the particle

• float r; // the radius of the particle

• // derived:

• float tb = // assume you have the answer to (3a)

• float p[3];

• for (int i = 0; i < n; i++) { // i is the frame number

• // compute p[] <-- your code goes here

• drawParticle(p, r);

• }

• Allow for the possibility of collision. Assume an elastic collision, so that the effect of the “bounce” is to negate the z component of v (i.e., v[2]) but leave the x and y components alone.

• The answer is:

• t = i * dt;

• p[0] = p0[0] + t * v[0];

• p[1] = p0[1] + t * v[1];

• if (t < tb)

• p[2] = p0[2] + t * v[2]; // before the bounce

• else

• p[2] = r - v[2] * (t - tb); // after the bounce: start at z = r with v[2] negated](https://image.slidesharecdn.com/computergraphics-120104181113-phpapp02/85/testpang-6-320.jpg)
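The per-frame update above can be packaged as a small C helper. This is a minimal sketch: the function names `bounce_time` and `particle_position` are hypothetical, and it assumes the particle starts on the +z side of the wall and moves towards it (`v[2] < 0`), with at most one bounce.

```c
/* Time at which the sphere first touches the wall z = 0.
   Assumption: p0[2] > r and v[2] < 0 (particle approaches from +z). */
float bounce_time(const float p0[3], const float v[3], float r) {
    return (r - p0[2]) / v[2];
}

/* Position of the particle center at time t, allowing for one
   elastic bounce that negates the z component of the velocity. */
void particle_position(const float p0[3], const float v[3], float r,
                       float t, float p[3]) {
    float tb = bounce_time(p0, v, r);
    p[0] = p0[0] + t * v[0];          /* x and y are unaffected by the bounce */
    p[1] = p0[1] + t * v[1];
    if (t < tb)
        p[2] = p0[2] + t * v[2];      /* still approaching the wall */
    else
        p[2] = r - v[2] * (t - tb);   /* leaving the wall from z = r */
}
```

Inside the frame loop, calling `particle_position(p0, v, r, i * dt, p)` before `drawParticle(p, r)` gives the same result as the inline answer, since the position is evaluated in closed form rather than accumulated step by step.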

![Question

• Consider the cubic spline segment, $p(t) = a t^3 + b t^2 + c t + d$, on the unit interval $t \in [0, 1]$. Recall that Hermite splines use $p$ and $p'$ controls at each end point, i.e., $p_0 = p(0)$, $p'_0 = p'(0)$, $p_1 = p(1)$, $p'_1 = p'(1)$. In this question you will derive a spline which instead uses the following controls: at t = 0, use position ($p_0$), velocity ($p'_0$) and acceleration ($p''_0$); at t = 1, use position ($p_1$).

• (a) What is this spline’s 4x4 geometry matrix G such that

• The answer is:

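• Taking derivatives of p(t) we have $p'(t) = 3at^2 + 2bt + c$ and $p''(t) = 6at + 2b$,

• so that $p(0) = d$, $p'(0) = c$, $p''(0) = 2b$, and $p(1) = a + b + c + d$.

• It follows that $d = p_0$, $c = p'_0$, $b = \tfrac{1}{2} p''_0$, and $a = p_1 - p_0 - p'_0 - \tfrac{1}{2} p''_0$.

• Therefore the curve and geometry matrix are given by $p(t) = (p_1 - p_0 - p'_0 - \tfrac{1}{2} p''_0)\,t^3 + \tfrac{1}{2} p''_0\,t^2 + p'_0\,t + p_0$. Writing this as $p(t) = [t^3\ t^2\ t\ 1]\,G\,[p_0\ p'_0\ p''_0\ p_1]^T$, with the controls taken in that order, the rows of G are $(-1, -1, -\tfrac{1}{2}, 1)$, $(0, 0, \tfrac{1}{2}, 0)$, $(0, 1, 0, 0)$ and $(1, 0, 0, 0)$.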

• (b) Given a cubic spline constructed from these segments, is the resulting curve (i) C0 continuous? (ii) C1 continuous? (iii) G0 continuous? (iv) G1 continuous? Briefly explain your reasoning in each case.

• The answer is:

• The question asks you to consider continuity at the endpoints of a spline segment. You can assume that we have a spline with uniformly spaced knots, with each knot specified by a triple of values: $(p_0, p'_0, p''_0)$ at t = 0, then $(p_1, p'_1, p''_1)$ at t = 1, then $(p_2, p'_2, p''_2)$ at t = 2, etc. Assume that we have two spline segments, p(t) for $t \in [0, 1]$ and a separate q(t) for $t \in [1, 2]$ (which could be mapped to our [0, 1] derivation by the translation $t \mapsto t - 1$), and that we will consider what happens at t = 1. Since the cubic spline constructed by joining these segments is geometrically connected at t = 1 (both segments share the endpoint $p_1$), it must have G0 geometric continuity. Furthermore, since the coordinate functions are cubic polynomials (which are continuous to all orders), the curve must also have the same limits at t = 1, i.e., $\lim_{t \to 1^-} p(t) = \lim_{t \to 1^+} q(t) = p_1$, and therefore it must have C0 parametric continuity. However, unlike the Hermite cubic spline, there is no reason that the curve’s tangents should be equal at the endpoints; the values of $p'(1)$ and $q'(1)$ depend on different control parameters, $(p_0, p'_0, p''_0, p_1)$ and $(p_1, p'_1, p''_1, p_2)$, respectively, and therefore we won’t have C1 parametric continuity. Furthermore, since the endpoint tangents needn’t even point in the same direction, we can’t have G1 geometric continuity either.](https://image.slidesharecdn.com/computergraphics-120104181113-phpapp02/85/testpang-8-320.jpg)
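The continuity argument can be checked numerically with a short C sketch. It handles one scalar coordinate of the curve, and the names `Cubic`, `cubic_from_controls`, `cubic_eval` and `cubic_deriv` are hypothetical. The coefficients follow from matching p(0), p'(0), p''(0) and p(1) to the four controls.

```c
/* One coordinate of the segment p(t) = a t^3 + b t^2 + c t + d. */
typedef struct { double a, b, c, d; } Cubic;

/* Build the segment from position p0, velocity v0 and acceleration a0
   at t = 0, and position p1 at t = 1. */
Cubic cubic_from_controls(double p0, double v0, double a0, double p1) {
    Cubic s;
    s.d = p0;                        /* p(0)   = d             */
    s.c = v0;                        /* p'(0)  = c             */
    s.b = 0.5 * a0;                  /* p''(0) = 2b            */
    s.a = p1 - p0 - v0 - 0.5 * a0;   /* p(1)   = a + b + c + d */
    return s;
}

/* Evaluate p(t) by Horner's rule. */
double cubic_eval(Cubic s, double t) {
    return ((s.a * t + s.b) * t + s.c) * t + s.d;
}

/* Evaluate p'(t) = 3a t^2 + 2b t + c. */
double cubic_deriv(Cubic s, double t) {
    return (3.0 * s.a * t + 2.0 * s.b) * t + s.c;
}
```

For example, the controls (0, 1, 2, 5) give a = 3, b = 1, c = 1, d = 0, so the segment ends at p(1) = 5 with tangent p'(1) = 12. A following segment built from (5, −4, 0, 6) starts at the same point but with tangent −4, which illustrates C0 without C1.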

is an identity matrix with m12, m13, and m14 modified by the function’s arguments:

• a) Fill in the following table to indicate which elements of E (starting out as an identity matrix in each case) would be affected by the arguments of the given OpenGL function(s). Do not give the formula for each element, just a list of which elements get modified. This has already been done for glTranslate[df]() as illustration.

• Do not assume anything about the values of the arguments passed (i.e., it doesn’t matter that glTranslate[df](0.0, 0.0, 0.0) doesn’t really change the values of the matrix).

• The answer is:

• b) Consider a modelview matrix represented as in (a) as a change of coordinates from a “model” coordinate system to a “view” coordinate system. In terms of the elements $m_i$, what are the components of $\hat{z}'$, the direction of the model’s $\hat{z}$ unit vector in the view coordinate system? (Remember that $\hat{z}'$ is of unit length.)

• The answer is:](https://image.slidesharecdn.com/computergraphics-120104181113-phpapp02/85/testpang-10-320.jpg)

![Question

• Uniform subdivisions are commonly used for ray tracing, wherein a ray is intersected with cell boundaries to “walk” through the subdivision’s cells. In

this problem, you will generate pseudocode to walk through a uniform triangular subdivision (see figure). It can be viewed as the union of three 1D

uniform subdivisions (color-coded for convenience), each associated with a normalized direction vector (n0, n1 or n2). Each 1D cell is of width, h.

Denote each triangular cell by a 3-index label [i0, i1, i2] (RGB color coded) based on the cell’s location in each of the 1D subdivisions. Assume that our

ray r(t) originates from the origin, 0, so that r(t) = 0 + tu, t > 0. Assume that the origin lies at the centroid of the triangular cell indexed by [0, 0, 0].

• Write pseudocode to efficiently generate the sequence of traversed cells when given the ray direction, u and these particular cell direction vectors

(assume normalized). For example, given the u vector shown in the figure, your code would output the infinite sequence:

• [0, 0, 0], [1, 0, 0], [1, 1, 0], [1, 1, 1], [1, 2, 1], … Output a traversed cell with the function “output [i0, i1, i2].”

• The answer is:

• This is basically another ray-plane intersection problem. The rate at which the ray r(t) travels along the unit cell directions $n_i$ is given by the component of $r' = u$ along each $n_i$ direction. In other words, the rate of position change in direction i is given by $u^T n_i$, and since each direction must travel a distance h between line crossings, the change in t between crossings for direction i is given by $\Delta t_i = h / (u^T n_i)$,

• which may be negative. The remaining question is how far each direction must travel before reaching the first crossing, and this is related to the position of the origin at the centroid of the [0, 0, 0] triangle. A simple geometric fact (that you can easily show) is that for an equilateral triangle of height h placed flat against the ground, the centroid occurs at height h/3. Therefore for directions $n_0$ and $n_2$, if $\Delta t_i > 0$ they must travel a distance h/3 to their first crossing, but for $\Delta t_i < 0$ it is a distance of 2h/3. Unfortunately $n_1$ is somewhat backwards: for positive $\Delta t_1$ it must travel 2h/3, but for negative $\Delta t_1$ it must travel h/3. These spatial distances can be converted to t distances in the same way as for $\Delta t_i$, by dividing by $|u^T n_i|$. The algorithm then proceeds as follows. For each direction i, we compute $\Delta t_i$ and initialize a variable $t^{next}_i$ for the next crossing time. The cell indices are set to [0, 0, 0]. At each step, we find the k associated with the smallest $t^{next}_k$, increment/decrement $i_k$ by 1 (depending on the sign of $\Delta t_k$), increase $t^{next}_k$ by $|\Delta t_k|$, and output [i0, i1, i2]. Pseudocode to do this is given below.

• For speed we could precompute/cache the $|\Delta t_k|$ and sign($\Delta t_k$) constants used in the forever loop.](https://image.slidesharecdn.com/computergraphics-120104181113-phpapp02/85/testpang-12-320.jpg)
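The traversal described above can be sketched in C as follows. The names `TriWalk`, `tri_walk_init` and `tri_walk_step` are hypothetical, the direction vectors are passed in by the caller because the figure defining them is not reproduced here, and a direction with zero rate is treated as never crossing (an added assumption). The ray direction u is assumed to be nonzero.

```c
#include <math.h>

typedef struct {
    int    cell[3];    /* current cell label [i0, i1, i2]         */
    int    step[3];    /* +1 or -1: sign of delta t_i (0 if none) */
    double dt_abs[3];  /* |delta t_i| between crossings           */
    double t_next[3];  /* ray parameter of the next crossing      */
} TriWalk;

/* u: ray direction, n: the three unit cell directions, h: 1D cell width. */
void tri_walk_init(TriWalk *w, const double u[2],
                   const double n[3][2], double h) {
    for (int i = 0; i < 3; i++) {
        double rate = u[0] * n[i][0] + u[1] * n[i][1];   /* u^T n_i */
        w->cell[i] = 0;
        if (rate == 0.0) {            /* parallel: never crosses */
            w->step[i] = 0;
            w->dt_abs[i] = INFINITY;
            w->t_next[i] = INFINITY;
            continue;
        }
        int forward = rate > 0.0;
        /* n0 and n2 reach their first line after h/3 when moving
           forward; n1 is reversed (2h/3 forward, h/3 backward). */
        int near_is_forward = (i != 1);
        double first = (forward == near_is_forward) ? h / 3.0
                                                    : 2.0 * h / 3.0;
        w->step[i] = forward ? 1 : -1;
        w->dt_abs[i] = h / fabs(rate);
        w->t_next[i] = first / fabs(rate);
    }
}

/* Advance to the next cell; the new label is left in w->cell. */
void tri_walk_step(TriWalk *w) {
    int k = 0;
    for (int i = 1; i < 3; i++)
        if (w->t_next[i] < w->t_next[k]) k = i;
    w->cell[k] += w->step[k];
    w->t_next[k] += w->dt_abs[k];
}
```

After `tri_walk_init`, output [0, 0, 0] and then call `tri_walk_step` in the forever loop, outputting `w->cell` each time. The magnitudes and signs are computed once in `tri_walk_init`, which is the caching suggested in the last remark.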

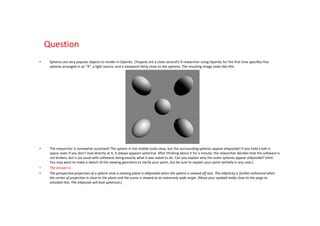

![Question

• Recall that Phong Shading interpolates vertex normals across a triangle for smooth shading on low-resolution meshes, i.e., the unnormalized surface normal at barycentric coordinate (u, v, w) (where w = 1 − u − v) is approximated by barycentrically interpolated vertex normals, $n(u, v) = u\,n_i + v\,n_j + w\,n_k$,

• where the unit vertex normals are $n_i$, $n_j$ and $n_k$. Of course, since each triangle is still planar,

• the piecewise planar shape is still apparent at silhouettes.

• Recently, Boubekeur and Alexa [SIGGRAPH Asia 2008] introduced Phong Tessellation as a simple way to use vertex normals to deform a triangle mesh to have smoother silhouettes (see Figures 1 and 2). In the following, you will derive their formula for a curved triangle patch, $p^*(u, v)$, and analyze surface continuity.

• (a) Consider the plane passing through vertex i’s position, $p_i$, and sharing the same normal, $n_i$. Give an expression for the orthogonal projection of a point p onto vertex i’s plane, hereafter denoted by $\pi_i(p)$.

• The answer is:

• The component of $v = (p - p_i)$ along the normal is $v^T n_i$. Therefore the component along the surface is $v - n_i n_i^T v$, and so $\pi_i(p) = p_i + v - n_i n_i^T v = p - n_i n_i^T (p - p_i)$.

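• (b) The deformed position $p^*(u, v)$ is simply the barycentrically interpolated projections of the undeformed point $p(u, v)$ onto the three vertex planes, i.e., the barycentric interpolation of $\pi_i(p(u, v))$, $\pi_j(p(u, v))$, and $\pi_k(p(u, v))$, where $\pi_i(p) = p - n_i n_i^T (p - p_i)$ is the orthogonal projection onto vertex i’s plane. Derive a polynomial expression for $p^*(u, v)$ in terms of u, v and w (you can also write it only in terms of u and v, but it is messier). (Hint: Express your answer in terms of projected-vertex positions, such as $\pi_i(p_j)$.)

• The answer is:

• The provided definition says that $p^*(u, v) = u\,\pi_i(p(u, v)) + v\,\pi_j(p(u, v)) + w\,\pi_k(p(u, v))$, with $p(u, v) = u\,p_i + v\,p_j + w\,p_k$. Each projection is an affine map and u + v + w = 1, so $\pi_i(p(u, v)) = u\,\pi_i(p_i) + v\,\pi_i(p_j) + w\,\pi_i(p_k)$, and $\pi_i(p_i) = p_i$. Collecting terms gives $p^*(u, v) = u^2 p_i + v^2 p_j + w^2 p_k + uv\,(\pi_i(p_j) + \pi_j(p_i)) + vw\,(\pi_j(p_k) + \pi_k(p_j)) + wu\,(\pi_k(p_i) + \pi_i(p_k))$.

• (c) What degree is this triangular bivariate polynomial patch, $p^*(u, v)$?

• The answer is:

• From our derived formulae, it is clear that $p^*(u, v)$ is a quadratic (or degree-2) polynomial patch.

• (Note: It is incorrect to state that “it is a quadratic patch since it has 3 control points” supposedly in analogy with the 1D curve setting. Note that a planar triangle patch also has 3 control points, but is degree one.)](https://image.slidesharecdn.com/computergraphics-120104181113-phpapp02/85/testpang-15-320.jpg)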
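The construction in parts (a) and (b) can be evaluated directly from its definition. The C sketch below first forms the flat point, then projects it onto each vertex plane, then interpolates the three projections. The type `Vec3` and the functions `project_onto_plane` and `phong_tess_point` are hypothetical names; the vertex normals are assumed to be unit length, and the barycentric weights (u, v, w) are assumed to pair with vertices (i, j, k) in that order.

```c
typedef struct { double x, y, z; } Vec3;

static double dot3(Vec3 a, Vec3 b) {
    return a.x * b.x + a.y * b.y + a.z * b.z;
}

/* Returns a + s * b. */
static Vec3 add_scaled(Vec3 a, double s, Vec3 b) {
    Vec3 r = { a.x + s * b.x, a.y + s * b.y, a.z + s * b.z };
    return r;
}

/* Orthogonal projection of q onto the plane through p with unit
   normal n: q - n (n . (q - p)). */
Vec3 project_onto_plane(Vec3 q, Vec3 p, Vec3 n) {
    Vec3 d = add_scaled(q, -1.0, p);
    return add_scaled(q, -dot3(d, n), n);
}

/* Deformed point at barycentric coordinates (u, v, 1 - u - v).
   p[0..2] are the vertex positions, n[0..2] the unit vertex normals. */
Vec3 phong_tess_point(const Vec3 p[3], const Vec3 n[3],
                      double u, double v) {
    double bary[3] = { u, v, 1.0 - u - v };
    Vec3 flat = { 0.0, 0.0, 0.0 };
    Vec3 out = { 0.0, 0.0, 0.0 };
    for (int k = 0; k < 3; k++)              /* undeformed point p(u, v) */
        flat = add_scaled(flat, bary[k], p[k]);
    for (int k = 0; k < 3; k++)              /* blend the projections    */
        out = add_scaled(out, bary[k],
                         project_onto_plane(flat, p[k], n[k]));
    return out;
}
```

When all three normals equal the triangle's face normal, every projection returns the flat point and the patch reduces to the planar triangle. Tilting the normals makes edge midpoints bulge out of the plane, which is what smooths the silhouette.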