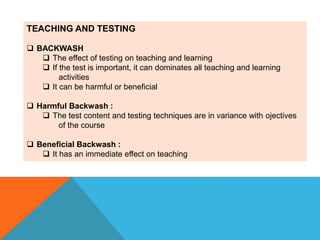

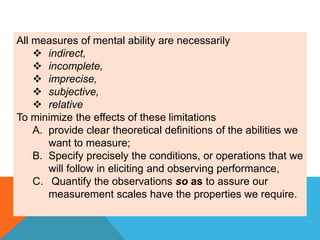

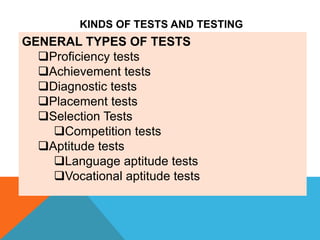

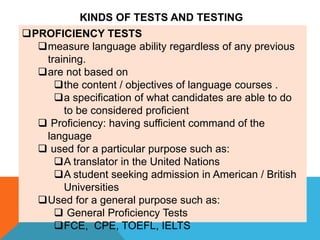

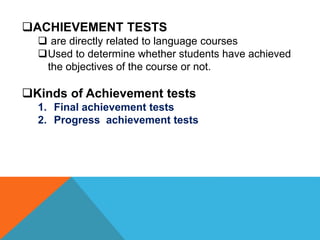

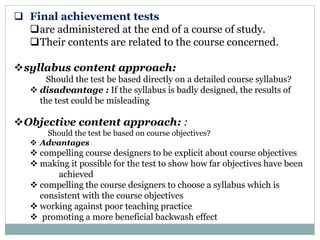

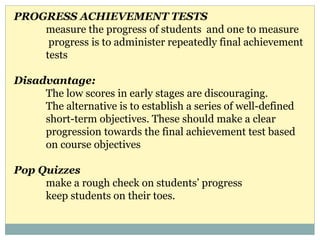

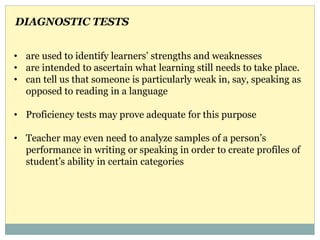

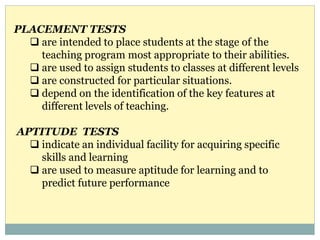

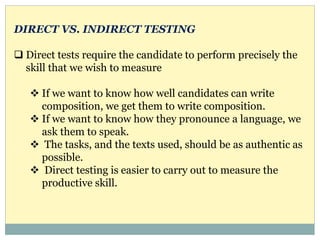

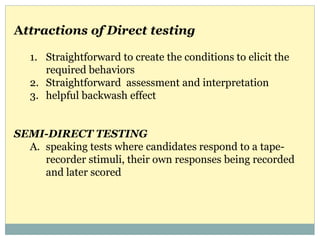

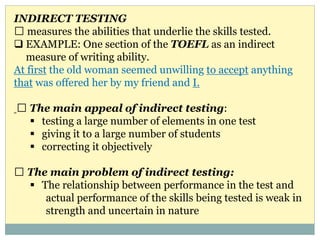

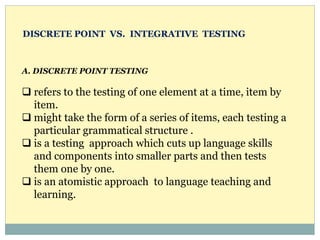

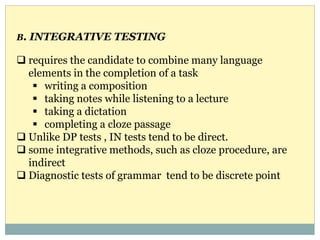

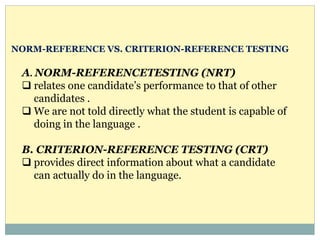

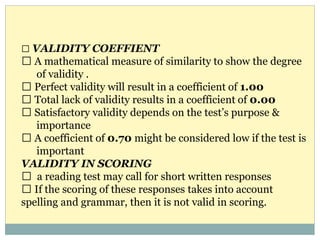

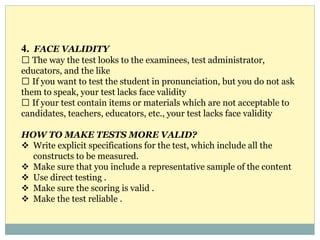

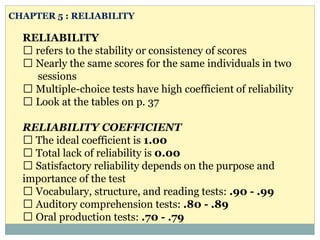

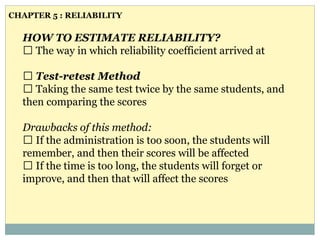

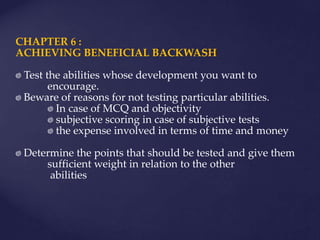

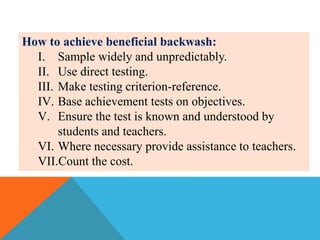

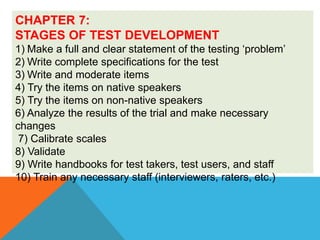

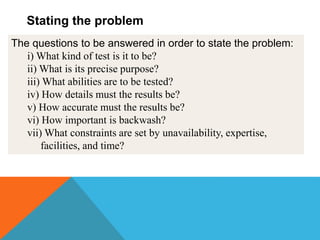

The document discusses testing in education: the impact of tests on teaching and learning, the types of tests used (including proficiency, achievement, diagnostic, and aptitude tests), and the importance of validity and reliability in test design. It highlights the beneficial and harmful backwash effects that tests can have and outlines strategies for creating assessments that accurately reflect student abilities. It also explores key concepts such as direct versus indirect testing, content and construct validity, and methods for ensuring reliability.