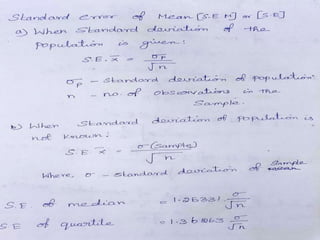

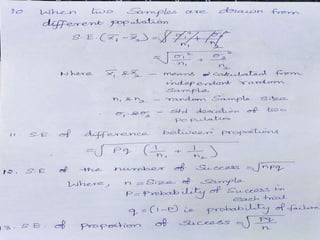

The document discusses the standard error and its use in hypothesis testing at the 5% and 1% significance levels. It classifies tests of significance into three types (tests for attributes, tests for large samples, and tests for small samples) and gives examples of their application in the biological sciences. It also outlines the use of t-tests and F-tests on small samples to evaluate specific health and agricultural statistics.
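
As a rough illustration of how the standard error feeds a large-sample test at the two significance levels mentioned above, here is a minimal Python sketch. The data, the hypothesized mean, and the helper names (`standard_error`, `z_statistic`, `significant_at`) are all hypothetical and not taken from the document. It assumes a two-sided test with the usual normal critical values of 1.96 (5%) and 2.576 (1%).

```python
import math
import statistics

# Two-sided critical values of the standard normal distribution.
CRITICAL_Z = {"5%": 1.96, "1%": 2.576}


def standard_error(sample):
    """Standard error of the mean: s / sqrt(n), with s the sample standard deviation."""
    return statistics.stdev(sample) / math.sqrt(len(sample))


def z_statistic(sample, hypothesized_mean):
    """Distance between the sample mean and the hypothesized mean, in standard errors."""
    return (statistics.mean(sample) - hypothesized_mean) / standard_error(sample)


def significant_at(z, level):
    """True if the null hypothesis is rejected at the given significance level."""
    return abs(z) > CRITICAL_Z[level]


# Hypothetical large sample (n = 40) and hypothesized population mean.
sample = [10, 12] * 20
z = z_statistic(sample, hypothesized_mean=10.6)

for level in ("5%", "1%"):
    print(level, "significant" if significant_at(z, level) else "not significant")
```

With these made-up numbers the difference is significant at the 5% level but not at the 1% level, which shows why the choice of level matters. For small samples the normal critical values would be replaced by values from the t distribution with n - 1 degrees of freedom.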