June 10 (Fri) 09:30–10:40, Main Hall

Speaker: Edgar Simo-Serra (Waseda University)

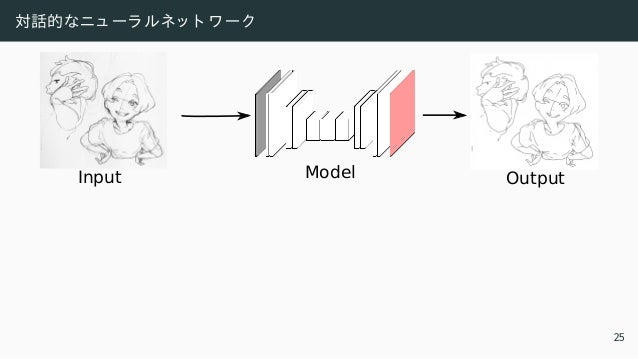

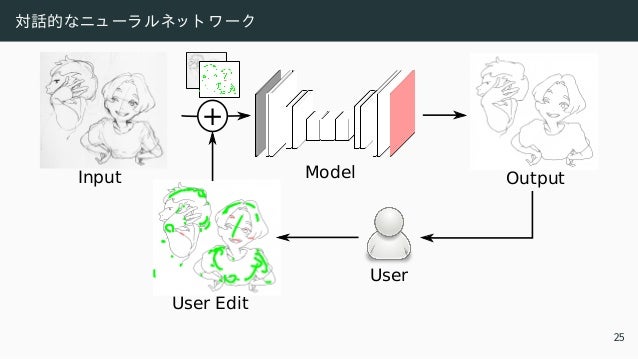

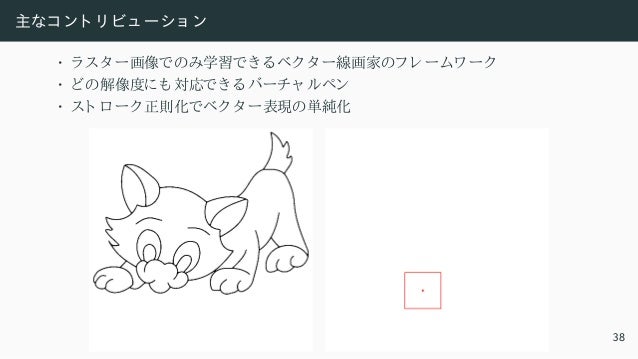

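Overview: As the internet becomes a fundamental building block of modern society, large-scale content creation is more important than ever. However, content creation such as illustration and web design poses a set of unique challenges for computer vision and machine learning, including high resolution, structured data, and interactivity. This talk explains how machine learning techniques can be used to solve diverse content-creation problems and to enhance creators' abilities.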

![AI-generated art](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-6-638.jpg)

• Computers Do Not Make Art [Hertzmann 2020]

• Art is a social activity

• Code and data are collected by humans

• AI cannot make art; it is only a tool

• A GAN-generated work was sold at Christie's for $432,500

![敵対的学習

min

S

max

D

教師あり

z }| {

E(x,y∗)∼ρx,y

通常教師あり ロ ス

z }| {

kS(x) − y∗

k2 +

教師あり 敵対的ロ ス

z }| {

α log D(y∗

) + α log(1 − D(S(x)))

+ β

線画

z }| {

Ey∼ρy [ log D(y) ] + β

ラ フ スケッ チ

z }| {

Ex∼ρx [ log(1 − D(S(x))) ]

| {z }

教師なし 敵対的ロ ス

入力 通常ロ ス + 敵対的ロ ス + 教師なし ロ ス

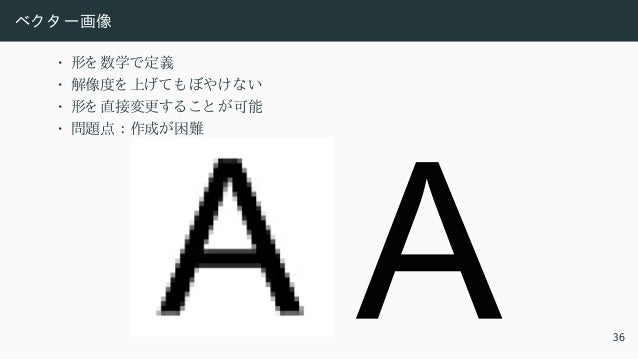

22](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-45-638.jpg)
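The objective above can be evaluated on a handful of samples to see how its three parts combine. The following is a minimal sketch, not the speaker's implementation: `toy_S` and `toy_D` are hypothetical stand-ins for the simplification network S and the discriminator D, and images are flattened lists of pixel values in [0, 1].

```python
import math


def mean(values):
    """Arithmetic mean of a non-empty list."""
    return sum(values) / len(values)


def squared_l2(pred, target):
    """Squared L2 distance between two flattened images."""
    return sum((p - t) ** 2 for p, t in zip(pred, target))


def objective(supervised, unsup_lines, unsup_rough, S, D, alpha, beta):
    """Value of the min_S max_D objective on small sample sets.

    supervised  : list of (x, y_star) pairs (rough sketch, target line drawing)
    unsup_lines : list of line drawings y without a paired sketch
    unsup_rough : list of rough sketches x without a paired line drawing
    """
    sup = mean([
        squared_l2(S(x), y)
        + alpha * math.log(D(y))
        + alpha * math.log(1.0 - D(S(x)))
        for x, y in supervised
    ])
    lines = mean([math.log(D(y)) for y in unsup_lines])
    rough = mean([math.log(1.0 - D(S(x))) for x in unsup_rough])
    return sup + beta * lines + beta * rough


def toy_S(x):
    """Hypothetical simplifier: threshold every pixel to 0 or 1."""
    return [1.0 if v > 0.5 else 0.0 for v in x]


def toy_D(y):
    """Hypothetical discriminator: score rises with the share of pure 0/1 pixels."""
    crisp = sum(1 for v in y if v in (0.0, 1.0)) / len(y)
    return 0.1 + 0.8 * crisp  # kept inside (0, 1) so both logs are finite


supervised = [([0.2, 0.9], [0.0, 1.0])]
unsup_lines = [[1.0, 1.0]]
unsup_rough = [[0.4, 0.6]]
value = objective(supervised, unsup_lines, unsup_rough, toy_S, toy_D,
                  alpha=1.0, beta=1.0)
print(value)
```

Setting `beta=0` removes the two unsupervised terms and leaves only the supervised part, which makes it easy to check each contribution separately.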

![Limits of full automation](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-51-638.jpg)

“1. The inker’s main purpose is to translate the penciller’s graphite pencil lines into reproducible, black, ink lines.

2. The inker must honor the penciller’s original intent while adjusting any obvious mistakes.

3. The inker determines the look of the finished art.”

Gary Martin, The Art of Comic Book Inking [1997]

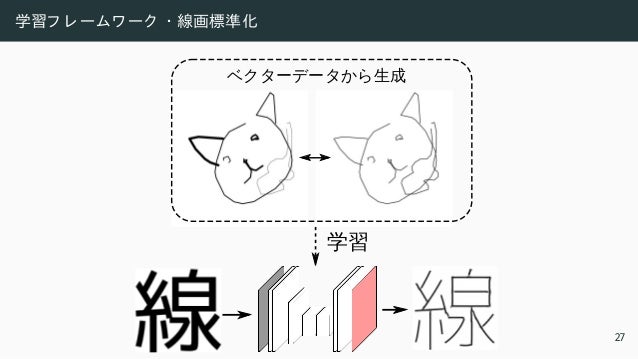

![Training framework: line drawing normalization](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-57-638.jpg)

Figure panels: Input; [Zhang and Suen 1984]; Ours.

![Line-weighted L1 loss](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-66-638.jpg)

Weighting term of the loss:

$$
\underbrace{\big(1 + \gamma\,(1 - y^*)\big)}_{\text{weight lines with } \gamma}
$$

• Uses an L1 loss

• The weight γ puts more emphasis on lines

Figure panels: Input; [Simo-Serra+ 2016]; Baseline; Ours (each plot has axis ticks at 10^3, 10^4, 10^5).

©David Revoy www.davidrevoy.com
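A small sketch of how the weight (1 + γ(1 − y*)) changes an L1 loss. Two things here are assumptions rather than statements from the slide: the weight multiplies the per-pixel absolute error, and pixels use 1 for white paper and 0 for ink, so that (1 − y*) is large exactly on line pixels. The function name `weighted_l1` is hypothetical.

```python
def weighted_l1(pred, target, gamma):
    """Mean over pixels of |pred - target| * (1 + gamma * (1 - target)).

    Assumes pixel values in [0, 1] with 1 = white background, 0 = ink.
    """
    total = 0.0
    for p, t in zip(pred, target):
        weight = 1.0 + gamma * (1.0 - t)  # 1 on background, 1 + gamma on lines
        total += weight * abs(p - t)
    return total / len(target)


target = [1.0, 1.0, 1.0, 0.0]        # one ink pixel among three background pixels
missing_line = [1.0, 1.0, 1.0, 1.0]  # prediction that drops the line
stray_mark = [0.0, 1.0, 1.0, 0.0]    # prediction that adds ink on the background

for gamma in (0.0, 3.0):
    print(gamma,
          weighted_l1(missing_line, target, gamma),
          weighted_l1(stray_mark, target, gamma))
```

With γ = 0 both mistakes cost the same; with γ > 0 dropping a line costs more than adding a stray mark, which matters because line pixels are a small minority of a line drawing.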

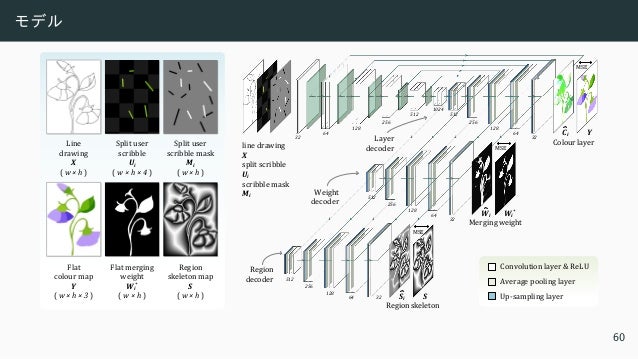

![Model](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-68-638.jpg)

• Encoder-decoder architecture [Simo-Serra+ 2016]

• 24-layer fully convolutional neural network

• Reduced number of filters

• About 3× faster

| Approach | Parameters | 1024² px | 1512² px | 2048² px | 2560² px |
|----------|------------|----------|----------|----------|----------|
| Baseline | 44,551,425 | 238.8 ms | 562.4 ms | 984.7 ms | 1.59 s |
| Ours | 12,795,169 | 89.9 ms | 225.5 ms | 382.7 ms | 592.9 ms |
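The speedup and parameter reduction can be read directly off the table. The short calculation below only uses the numbers shown there (with 1.59 s written as 1590.0 ms); the variable names are illustrative.

```python
# Inference time in milliseconds, keyed by image side length in pixels.
baseline_ms = {1024: 238.8, 1512: 562.4, 2048: 984.7, 2560: 1590.0}
ours_ms = {1024: 89.9, 1512: 225.5, 2048: 382.7, 2560: 592.9}

speedup = {side: baseline_ms[side] / ours_ms[side] for side in baseline_ms}
param_ratio = 44_551_425 / 12_795_169

for side, factor in speedup.items():
    print(f"{side} x {side} px: {factor:.2f}x faster")
print(f"parameters: {param_ratio:.2f}x fewer")
```

On these figures the measured speedup is roughly 2.5 to 2.7 times at every resolution, and the parameter count drops by about 3.5 times.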

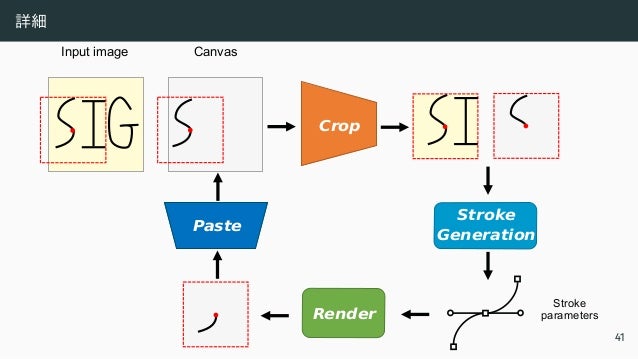

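![What is a stroke?](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-82-638.jpg)

• Quadratic Bézier curve

$$
B(\tau) = (1 - \tau)^2 P_0 + 2(1 - \tau)\tau P_1 + \tau^2 P_2, \quad \tau \in [0, 1] \tag{1}
$$

• Drawing starts from (0, 0), so P_0 = 0

• Model output

$$
a_t = \big(\underbrace{x_c, y_c, \Delta x, \Delta y, w}_{\text{curve parameters and width } w},\; \Delta s,\; p\big)_t, \quad t = 1, 2, \ldots, T \tag{2}
$$

• Coordinates lie in [−1, +1]

• Δs is the change of canvas scale

• p ∈ [0, 1] is the variable that decides whether to draw a line or only move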
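Equation (1) and the action vector of equation (2) can be made concrete with a few lines of code. This is a sketch under stated assumptions: the slide does not say which of the outputs maps to which control point, so treating (x_c, y_c) as P_1 and (Δx, Δy) as P_2, and thresholding p at 0.5, are guesses made for illustration. `sample_stroke` is a hypothetical helper.

```python
def quadratic_bezier(p0, p1, p2, tau):
    """Point on the quadratic Bezier curve of equation (1) at parameter tau."""
    a = (1.0 - tau) ** 2
    b = 2.0 * (1.0 - tau) * tau
    c = tau ** 2
    return (a * p0[0] + b * p1[0] + c * p2[0],
            a * p0[1] + b * p1[1] + c * p2[1])


def sample_stroke(action, n_points=5):
    """Turn one action a_t into sampled curve points, a width, and a pen state.

    Assumption: (x_c, y_c) is the control point P1 and (dx, dy) the end point P2.
    """
    x_c, y_c, dx, dy, w, ds, p = action
    p0 = (0.0, 0.0)  # every stroke starts at the origin of its local frame
    points = [
        quadratic_bezier(p0, (x_c, y_c), (dx, dy), i / (n_points - 1))
        for i in range(n_points)
    ]
    pen_down = p >= 0.5  # assumed threshold: draw if True, only move if False
    return points, w, pen_down


points, width, pen_down = sample_stroke((0.5, 1.0, 1.0, 0.0, 0.02, 1.0, 0.9))
print(points[0], points[2], points[-1], width, pen_down)
```

Because P_0 is fixed at the origin, a stroke needs only four numbers for its shape; Δs is carried in the action but not used by this sketch.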

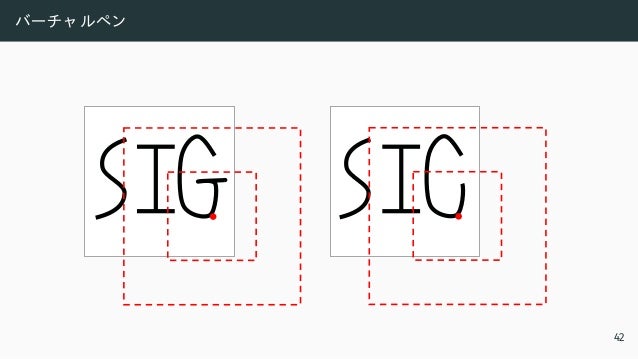

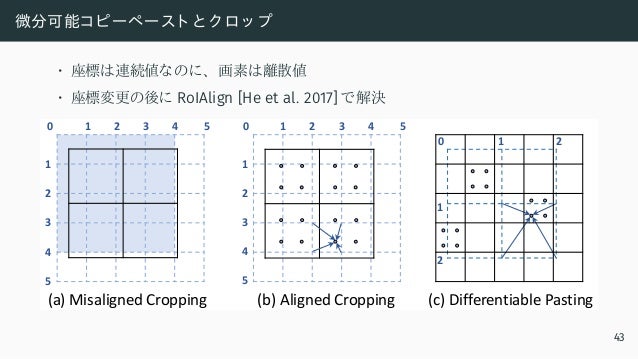

![Differentiable copy-paste and crop](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-89-638.jpg)

• Coordinates are continuous, but pixels are discrete

• Solved by applying RoIAlign [He et al. 2017] after the coordinate transform

Figure panels: (a) Misaligned Cropping; (b) Aligned Cropping; (c) Differentiable Pasting.
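The mismatch between continuous coordinates and discrete pixels is usually bridged with bilinear interpolation, which is the core operation inside RoIAlign. The sketch below is a simplified illustration, not RoIAlign itself: it takes a single bilinear sample at the center of each output cell, whereas RoIAlign typically pools several samples per cell. The function names are hypothetical, and pixel centers are assumed to sit at integer coordinates.

```python
import math


def bilinear(img, x, y):
    """Sample img (a list of rows) at continuous coordinates (x, y)."""
    h, w = len(img), len(img[0])
    x = min(max(x, 0.0), w - 1.0)  # clamp to the image area
    y = min(max(y, 0.0), h - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0
    top = (1.0 - fx) * img[y0][x0] + fx * img[y0][x1]
    bottom = (1.0 - fx) * img[y1][x0] + fx * img[y1][x1]
    return (1.0 - fy) * top + fy * bottom


def aligned_crop(img, x_min, y_min, x_max, y_max, out_w, out_h):
    """Crop a continuous box into an out_h x out_w grid without rounding the box."""
    cell_w = (x_max - x_min) / out_w
    cell_h = (y_max - y_min) / out_h
    return [
        [bilinear(img, x_min + (j + 0.5) * cell_w, y_min + (i + 0.5) * cell_h)
         for j in range(out_w)]
        for i in range(out_h)
    ]


img = [[0.0, 1.0, 2.0],
       [3.0, 4.0, 5.0],
       [6.0, 7.0, 8.0]]
crop = aligned_crop(img, 0.5, 0.5, 1.5, 1.5, out_w=2, out_h=2)
print(crop)
```

Each output value is a weighted sum of four neighboring pixels with weights that vary smoothly with the box coordinates, so gradients can flow back to the crop position; rounding the box to whole pixels would cut that gradient.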

![Differentiable rendering](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-90-638.jpg)

• Generates a raster image from vector strokes

• Uses VGG16 [Simonyan and Zisserman 2015]

Diagram: Input image → Neural Network → Stroke parameters → Neural Renderer → Rendered image → Raster Loss.

![Raster loss function](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-91-638.jpg)

• Uses VGG16 [Simonyan and Zisserman 2015]

Diagram: the rendered image and the target image are both fed to the loss network (VGG-16).

![Vectorization results](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-96-638.jpg)

Dracolion (1024px)

| Method | Time |
|--------|------|
| Fidelity-vs-Simplicity [Favreau et al. 2016] | 75s |
| PolyVectorization [Bessmeltsev et al. 2019] | 69s |
| Our results (vector) | 29s (GPU) |

![Vectorization results](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-97-638.jpg)

Mouse (1024px)

| Method | Time |
|--------|------|
| Fidelity-vs-Simplicity [Favreau et al. 2016] | 89s |
| PolyVectorization [Bessmeltsev et al. 2019] | 61s |
| Our results (vector) | 23s (GPU) |

![Inking results](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-100-638.jpg)

Bird (384px)

Figure panels: PolyVectorization [Bessmeltsev et al. 2019]; Sketch Simplification (pixel) [Simo-Serra et al. 2018] + PolyVectorization; Our results (vector).

![Inking results](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-101-638.jpg)

Hand (433px)

Figure panels: PolyVectorization [Bessmeltsev et al. 2019]; Sketch Simplification (pixel) [Simo-Serra et al. 2018] + PolyVectorization; Our results (vector).

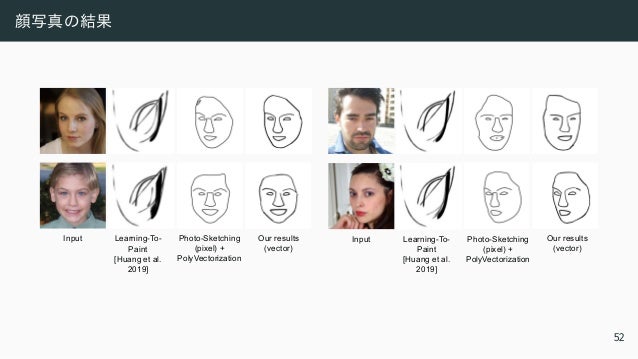

![Results on face photos](https://image.slidesharecdn.com/ts32022ssiiess-220607054523-e80be8dc/95/SSII2022-TS3-103-638.jpg)

Figure panels (shown for two examples): Input; Learning-To-Paint [Huang et al. 2019]; Photo-Sketching (pixel) + PolyVectorization; Our results (vector).