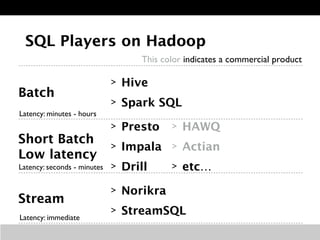

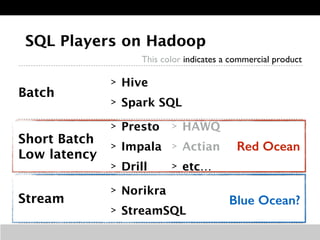

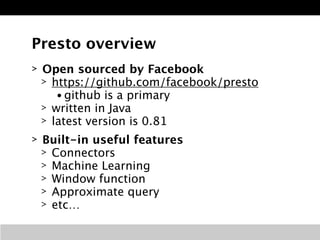

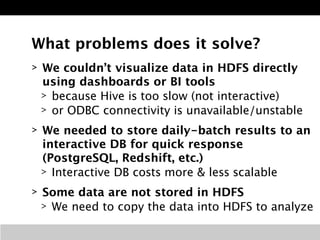

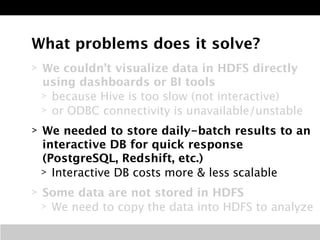

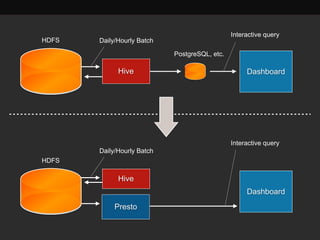

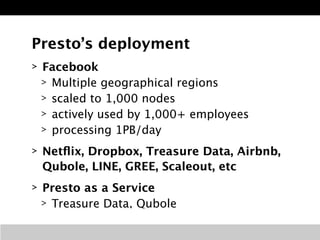

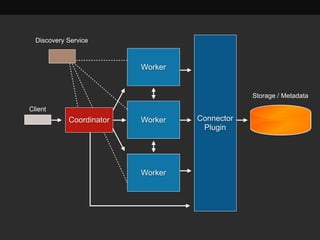

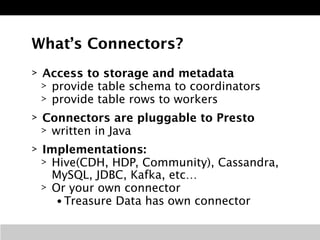

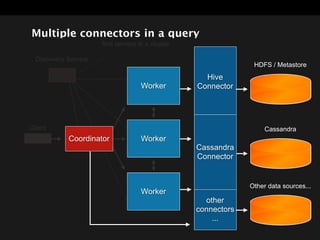

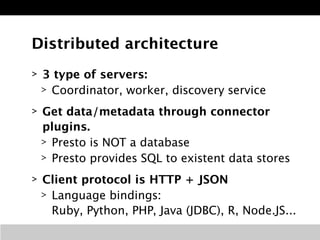

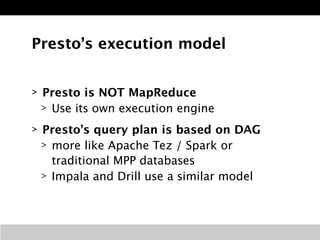

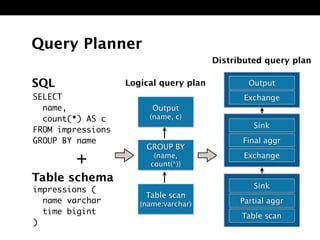

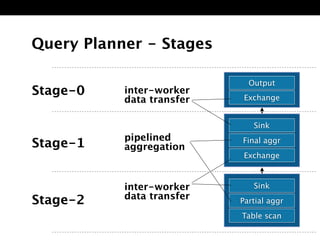

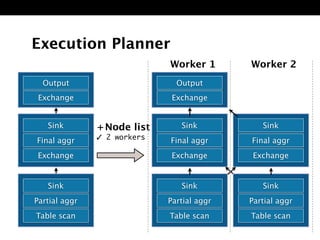

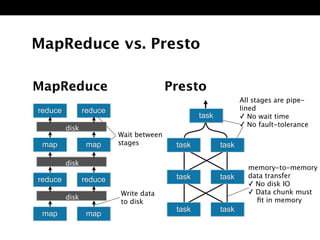

The document discusses Presto, an open-source distributed SQL query engine for interactive analysis of large datasets. It summarizes Presto's capabilities and architecture, and explains how Presto addresses a shortcoming of other SQL-on-Hadoop engines such as Hive, which are too slow for interactive use. Key points: Presto queries data in HDFS directly, without first copying it to another system; it uses its own distributed query execution model rather than MapReduce; and it supports many connectors as well as a PostgreSQL gateway.
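
To make "direct querying" concrete, here is a minimal sketch of how a client might submit SQL to a Presto coordinator over its HTTP interface. It only builds the request and does not send it, so it runs without a cluster. The host, port, user, catalog, schema, and the `access_logs` table are all hypothetical placeholders, and the helper name `build_presto_statement_request` is invented for this illustration; it is not taken from the slides.

```python
import urllib.request


def build_presto_statement_request(sql, host="localhost", port=8080,
                                   user="analyst", catalog="hive",
                                   schema="default"):
    """Build (but do not send) a request that submits `sql` to a coordinator.

    All default values are assumptions for illustration only.
    """
    # Presto accepts statements as the plain-text body of POST /v1/statement.
    url = f"http://{host}:{port}/v1/statement"
    headers = {
        "X-Presto-User": user,        # identifies who is running the query
        "X-Presto-Catalog": catalog,  # connector to query, e.g. Hive over HDFS
        "X-Presto-Schema": schema,    # default schema for unqualified tables
    }
    return urllib.request.Request(
        url, data=sql.encode("utf-8"), headers=headers, method="POST"
    )


# Hypothetical table name; the data stays in HDFS and is queried in place.
request = build_presto_statement_request(
    "SELECT status, count(*) AS hits FROM access_logs GROUP BY status"
)
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` against a real coordinator would return a JSON document; a client then follows its `nextUri` field to fetch result pages until none remains. In practice a maintained client library or the Presto CLI would handle that polling loop.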