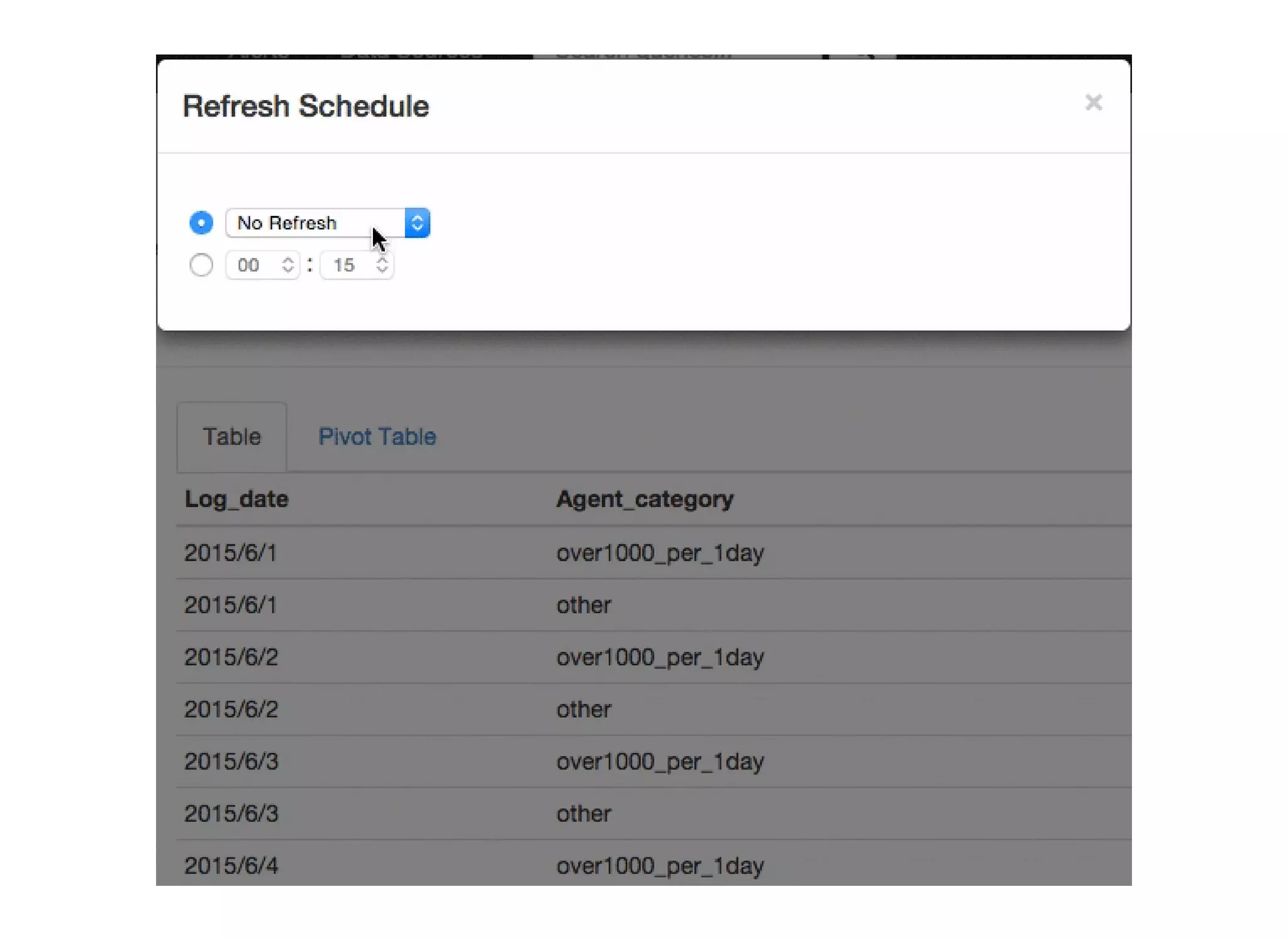

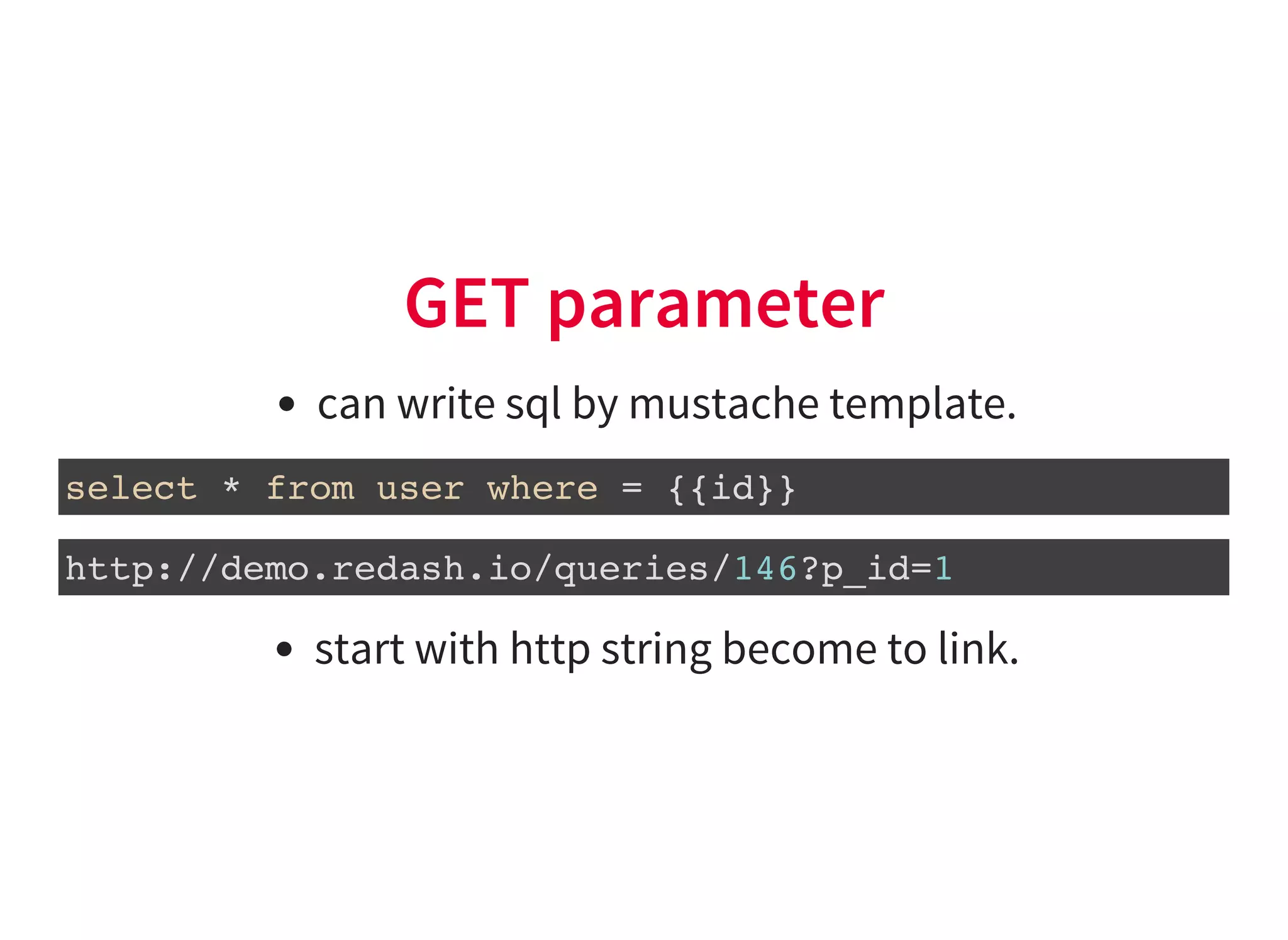

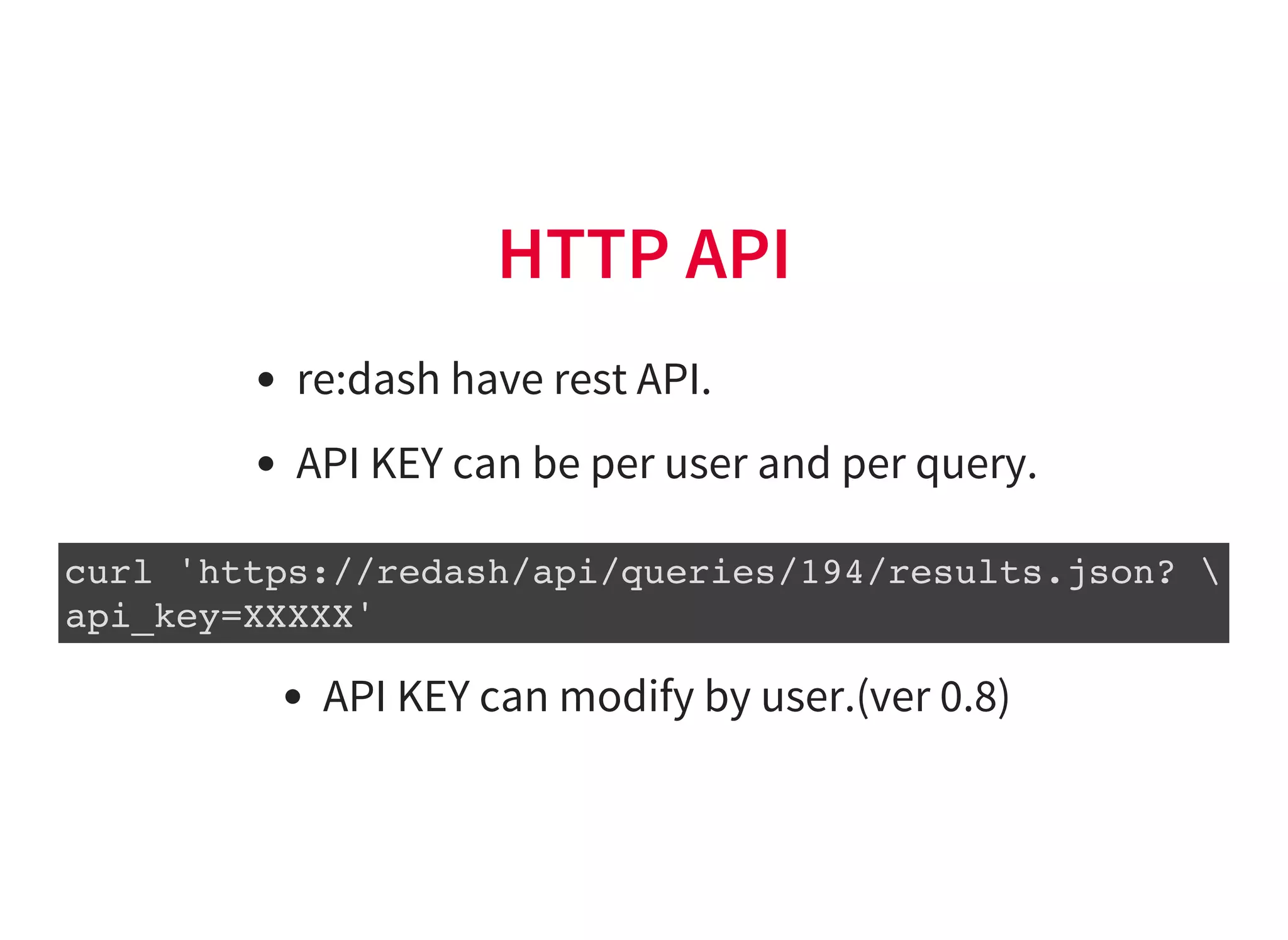

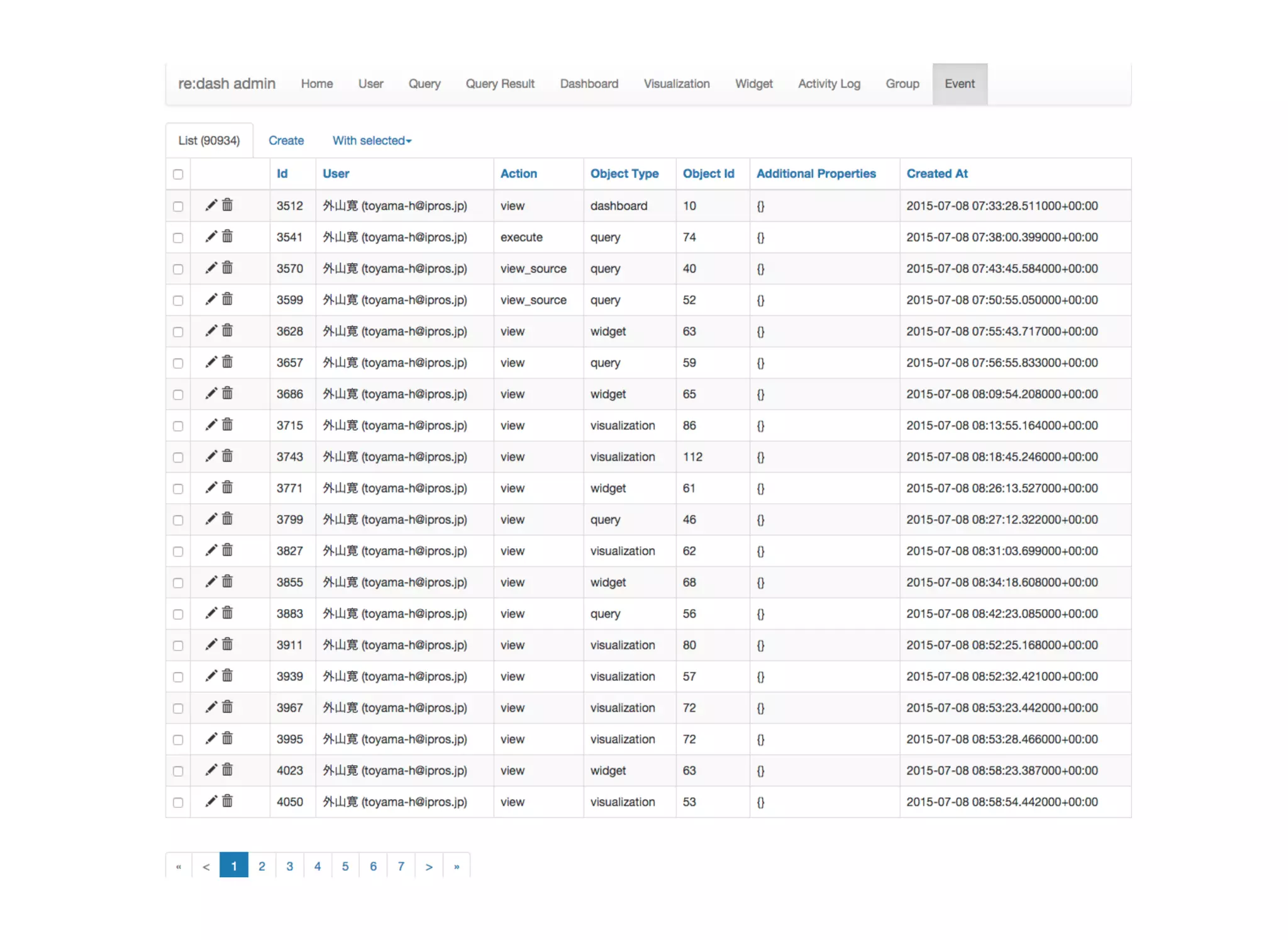

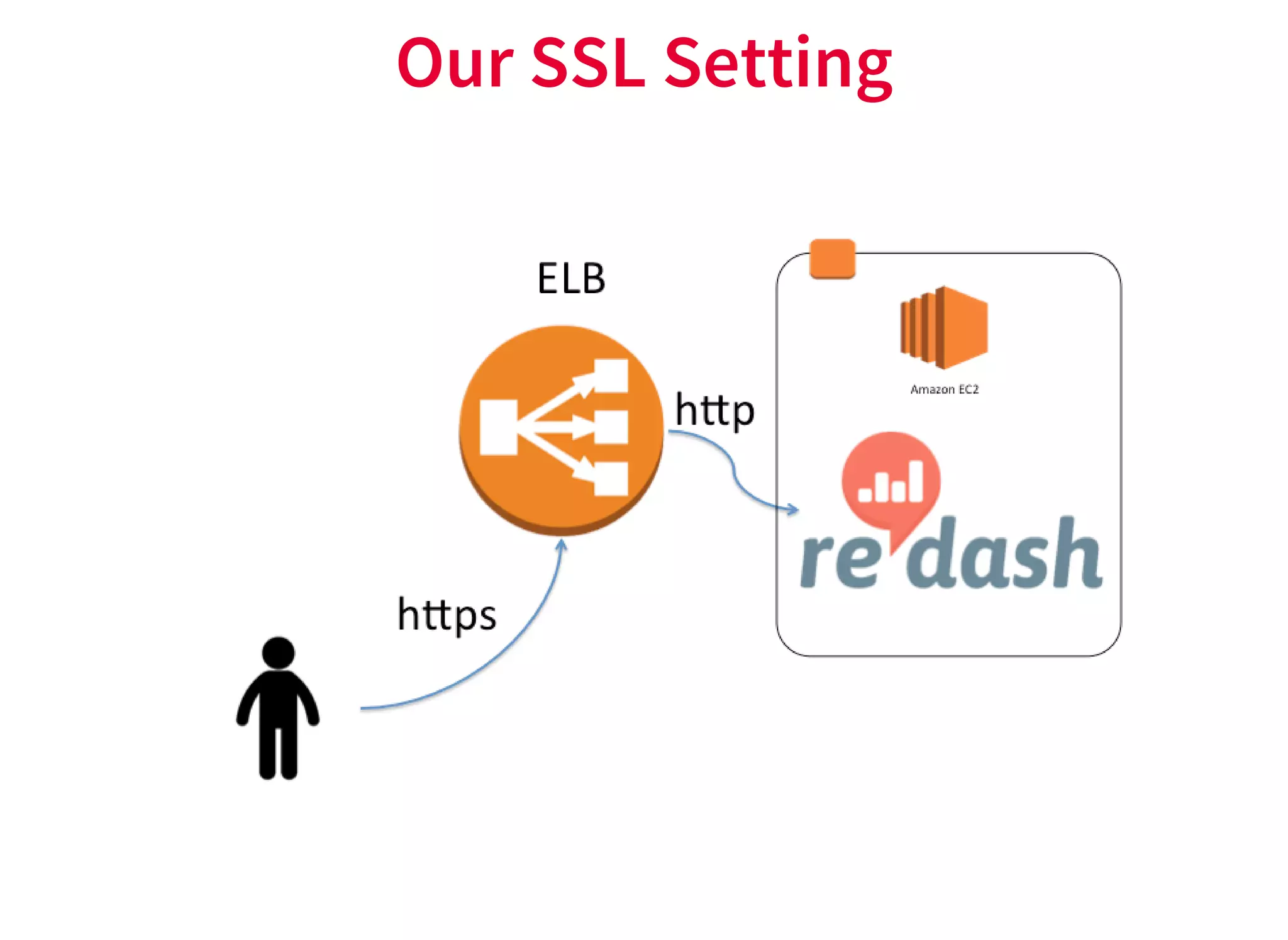

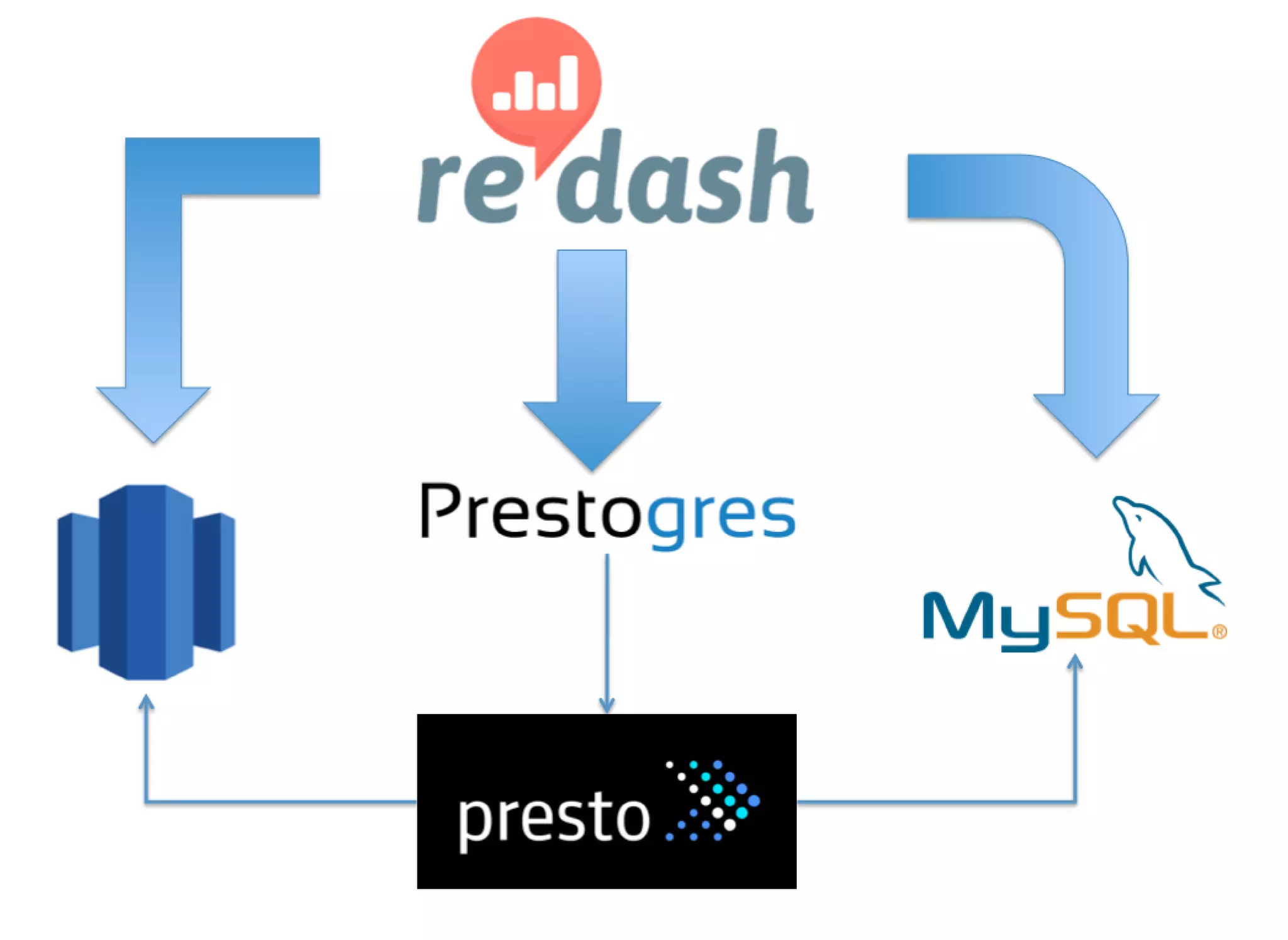

re:dash is a tool for sharing SQL queries with team members, visualizing their results, and refreshing them automatically on a schedule. It connects to a variety of data sources, including backends such as PostgreSQL, can be run at low cost on AWS, and caches query results to improve performance. Visualizations and query parameters can also be driven programmatically through the HTTP API. Security features include authentication, authorization, auditing, and SSL encryption.
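As a rough illustration of the HTTP API mentioned above, the sketch below fetches the cached result of a saved query using only the Python standard library. The host name, query ID, and API key are placeholders. The endpoint path (`/api/queries/<id>/results.json`), the `Authorization: Key ...` header, and the response layout (`query_result` → `data` → `rows`) are assumptions based on commonly documented Redash behavior, so check them against the version you are running.

```python
import json
import urllib.request


def build_results_request(base_url: str, query_id: int, api_key: str) -> urllib.request.Request:
    """Build a GET request for the cached results of a saved query.

    Assumption: the instance exposes /api/queries/<id>/results.json and
    accepts the API key in an "Authorization: Key <api_key>" header.
    """
    url = f"{base_url.rstrip('/')}/api/queries/{query_id}/results.json"
    return urllib.request.Request(url, headers={"Authorization": f"Key {api_key}"})


def fetch_rows(base_url: str, query_id: int, api_key: str, timeout: float = 30.0) -> list:
    """Return the result rows of a saved query as a list of dicts.

    Assumption: the JSON body looks like
    {"query_result": {"data": {"rows": [...], "columns": [...]}}}.
    """
    request = build_results_request(base_url, query_id, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.load(response)
    return payload["query_result"]["data"]["rows"]
```

A call would look like `fetch_rows("https://redash.example.com", 42, "YOUR_API_KEY")`, where all three arguments are hypothetical. Because this reads the cached result, it should not trigger a new run against the underlying data source.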