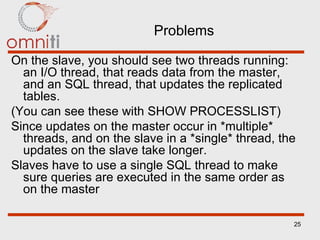

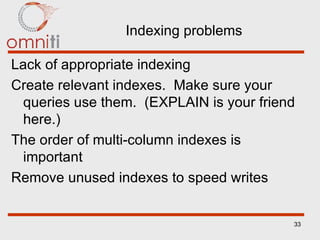

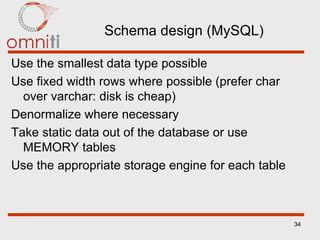

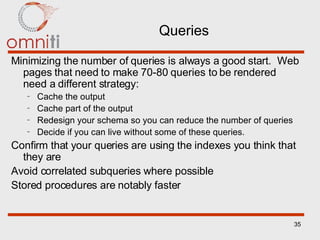

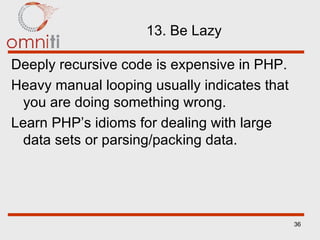

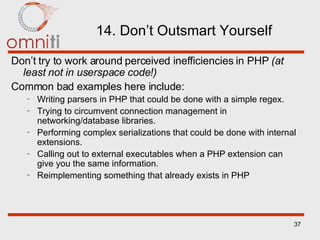

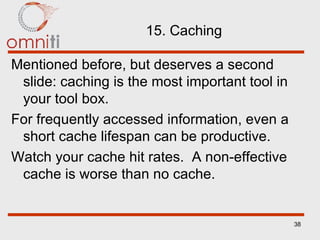

The document discusses best practices for scalability and performance in PHP application development. Key points include profiling and optimizing early, cooperation between development and operations teams, testing against production-like data, caching frequently accessed data, avoiding overuse of hard-to-scale resources, and using compiler caching and query optimization. It also presents decoupling applications, caching, data federation, and replication as techniques for improving scalability.

![Scalability and Performance Best Practices Laura Thomson OmniTI [email_address]](https://image.slidesharecdn.com/scaleperfbestpractices-25539/75/scale_perf_best_practices-1-2048.jpg)