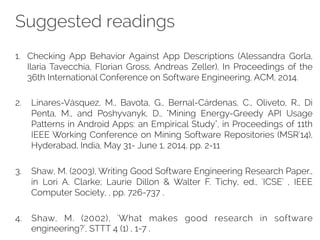

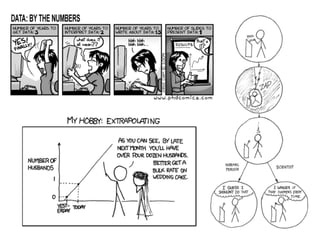

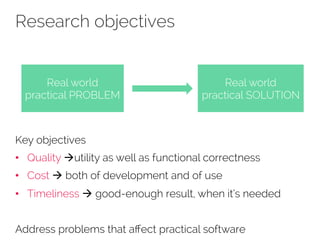

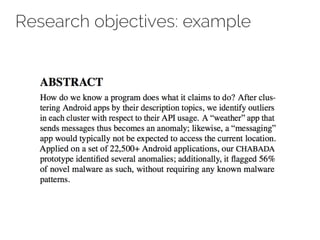

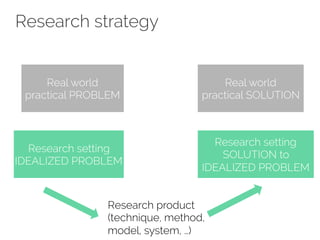

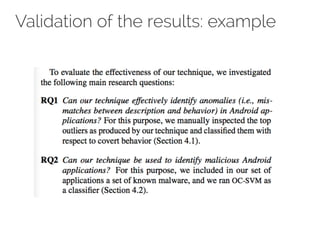

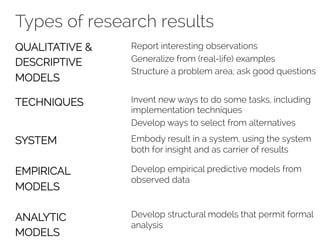

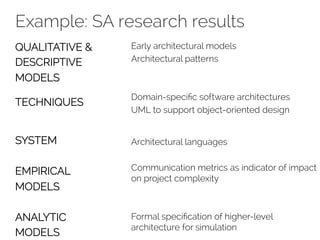

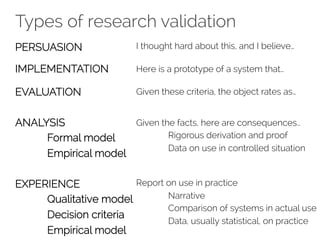

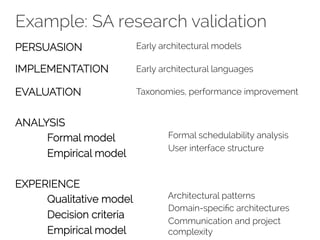

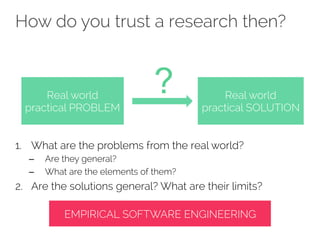

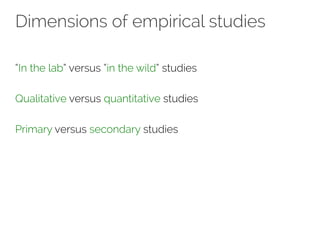

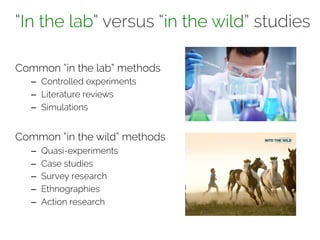

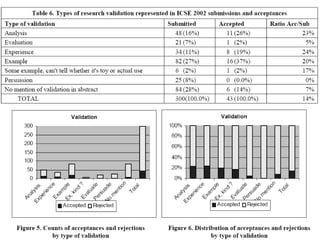

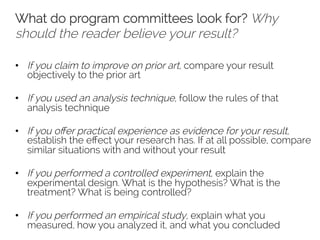

The document discusses key aspects of software engineering research, emphasizing the importance of addressing real-world practical problems and developing empirical strategies for validation. It outlines the processes of formulating research questions, conducting studies, and writing impactful research papers while highlighting common pitfalls and validation tasks in software engineering. The lecture notes are based on contributions from multiple experts and include suggestions for further reading and homework assignments.

![Empirical software engineering](https://image.slidesharecdn.com/03seresearch-141111081123-conversion-gate01/85/RESEARCH-in-software-engineering-37-320.jpg)

Empirical software engineering

Scientific use of quantitative and qualitative data to

- understand and
- improve

software products and software development processes [Victor Basili]

Data is central to addressing any research question.

Issues related to validity are addressed continuously.

![Homework](https://image.slidesharecdn.com/03seresearch-141111081123-conversion-gate01/85/RESEARCH-in-software-engineering-81-320.jpg)

Homework

GOALS:

1. To have the chance to study a specific area of software engineering that may be of interest to you
2. To be exposed to recurrent and important problems in software engineering

TASKS:

1. Pick an article from the FOSE 2014 proceedings
2. Carefully read it and analyse it in terms of:
   - its research domain, its evolution over time, and its future challenges
   - [where possible] understand which research strategies have been applied either in the paper or in the research area in general
3. Give a presentation (max 25 slides) to the classroom
   - other post-docs and students will attend the presentations