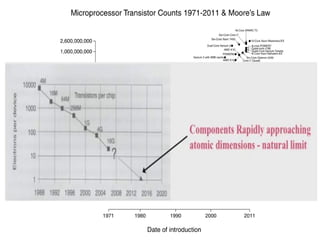

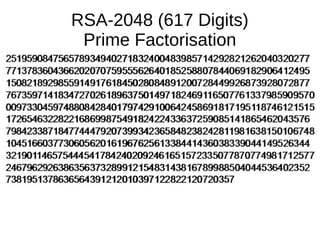

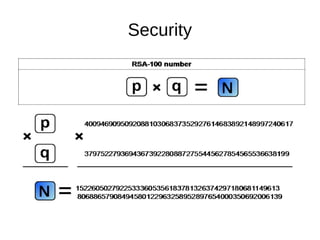

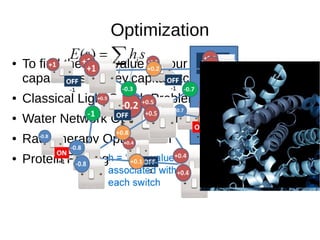

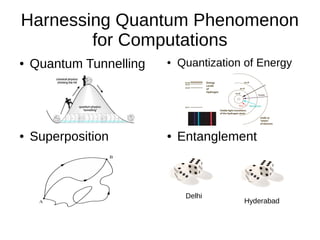

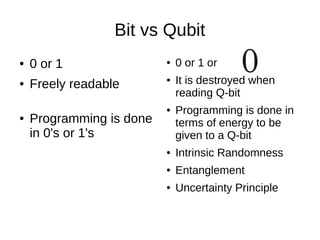

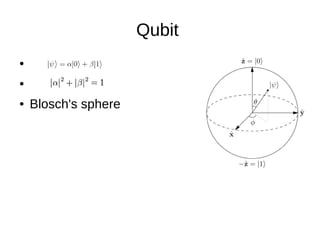

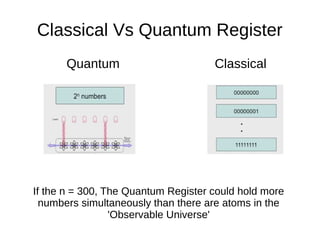

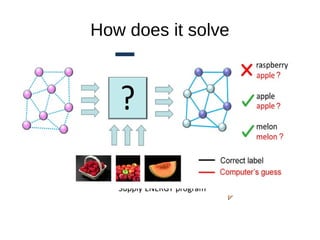

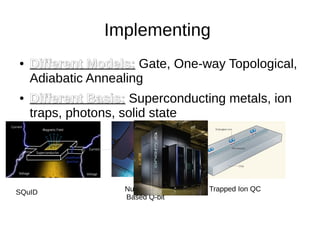

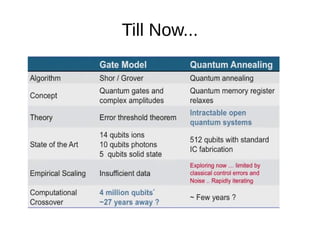

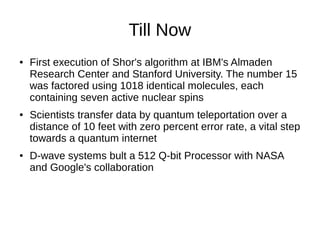

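Quantum computing has several potential applications, including simulating molecules and materials, tackling certain optimization problems, advancing machine learning, and reshaping cryptography and security. It harnesses quantum phenomena such as superposition and entanglement to store and process information in quantum bits (qubits). Unlike a classical bit, a qubit can exist in a superposition of 0 and 1, and entangled qubits share correlations that no classical system can reproduce. A register of n qubits is described by 2^n amplitudes. Algorithms that use interference to amplify the amplitudes of correct answers can therefore solve certain problems far faster than the best known classical methods; integer factorization with Shor's algorithm is the best-known example. However, quantum computers face challenges such as decoherence, in which interaction with the environment causes qubits to lose their quantum properties. Together with gate errors, decoherence currently limits how large and capable quantum computers can be. Researchers are pursuing several hardware approaches, including superconducting circuits, trapped ions, and photonic systems, along with quantum error correction, to build larger and more useful machines.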
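Superposition and entanglement can be made concrete with a tiny state-vector simulation. The sketch below is a minimal illustration written in plain Python rather than with any quantum SDK. The helper names (`apply_hadamard`, `apply_cnot`, `probabilities`) are hypothetical. It stores two qubits as four complex amplitudes, uses a Hadamard gate to put one qubit into superposition, and then uses a CNOT gate to entangle it with the second qubit, producing a Bell state.

```python
import math
import random

# An n-qubit register is stored as a list of 2**n complex amplitudes.
# Index i encodes a basis state; qubit 0 is the least significant bit of i.

def apply_hadamard(state, target):
    """Apply a Hadamard gate to qubit `target`, creating an equal superposition."""
    new_state = list(state)
    h = 1 / math.sqrt(2)
    bit = 1 << target
    for i in range(len(state)):
        if i & bit == 0:  # visit each pair (i, i with target bit set) once
            j = i | bit
            a, b = state[i], state[j]
            new_state[i] = h * (a + b)
            new_state[j] = h * (a - b)
    return new_state

def apply_cnot(state, control, target):
    """Flip qubit `target` in every basis state where qubit `control` is 1."""
    new_state = list(state)
    c, t = 1 << control, 1 << target
    for i in range(len(state)):
        if i & c and not i & t:  # swap amplitudes of |..c=1,t=0..> and |..c=1,t=1..>
            j = i | t
            new_state[i], new_state[j] = state[j], state[i]
    return new_state

def probabilities(state):
    """Born rule: each basis state is measured with probability |amplitude|**2."""
    return [abs(a) ** 2 for a in state]

# Start in |00>, put qubit 0 into superposition, then entangle it with qubit 1.
state = [1 + 0j, 0j, 0j, 0j]
state = apply_hadamard(state, 0)
state = apply_cnot(state, control=0, target=1)

for index, p in enumerate(probabilities(state)):
    print(f"|{index:02b}>: {p:.2f}")

# Simulated measurements: each shot yields a single outcome, always 00 or 11.
shots = random.choices(range(4), weights=probabilities(state), k=10)
print([f"{s:02b}" for s in shots])
```

The two qubits are perfectly correlated: measuring one fixes the other. Each measurement still returns only one basis state, so a quantum speedup does not come from reading out all 2^n amplitudes at once. It comes from algorithms that use interference to concentrate probability on useful answers. Storing 2^n amplitudes explicitly, as this simulation does, is also why classical simulation becomes impractical as the qubit count grows.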