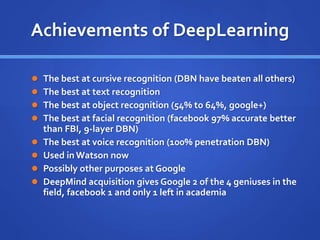

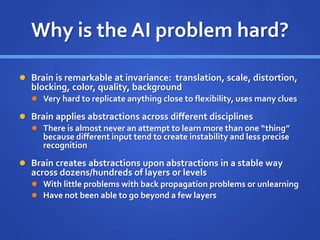

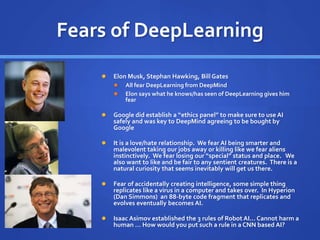

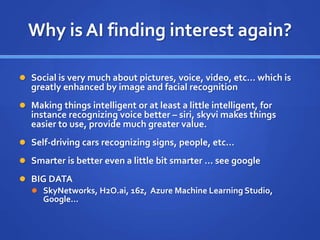

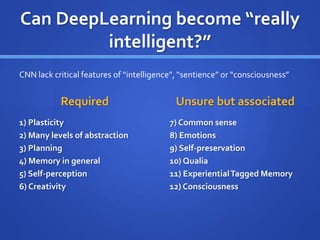

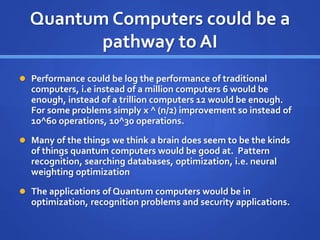

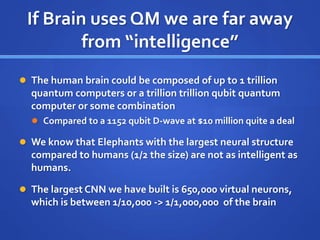

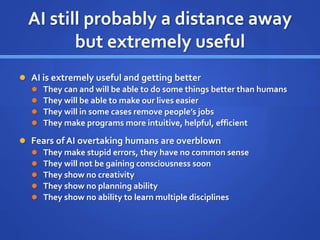

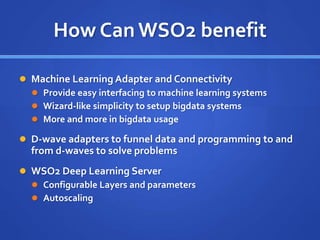

The document traces the history and evolution of artificial intelligence (AI) from the 1980s to the present, highlighting key developments in AI techniques such as rule-based systems, machine learning, and deep learning. It explores the challenges and fears surrounding AI, including concerns about sentience and the potential for AI to surpass human capabilities, and it considers the role of quantum computing in advancing AI technology. The text concludes that while AI is becoming more useful and effective, it still lacks true intelligence and creativity.