This document discusses test validity, test reliability, and the difference between norm-referenced and criterion-referenced tests. It covers:

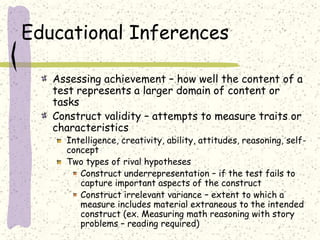

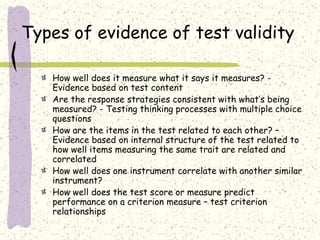

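1. Test validity refers to how appropriate and meaningful the inferences drawn from test scores are. It depends on the testing situation and purpose, so a test can be valid for one use and not for another. Validity can be evaluated through evidence based on test content, the test's internal structure, and the relationships between test scores and other criteria.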
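Evidence based on relationships to other criteria is often summarized as a validity coefficient: the correlation between test scores and an external criterion measure. The sketch below computes a Pearson correlation for that purpose. The function name `pearson_r` and both score lists are hypothetical, and a single coefficient is only one kind of validity evidence; content and structural evidence are gathered differently.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least two scores")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Co-deviation and squared deviations from each mean
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when a list has zero variance")
    return sxy / sqrt(sxx * syy)


# Hypothetical data: admission test scores and a later criterion (course grades)
test_scores = [52, 61, 70, 45, 80, 66]
criterion = [2.1, 2.8, 3.2, 2.0, 3.7, 2.9]

validity_coefficient = pearson_r(test_scores, criterion)
print(round(validity_coefficient, 3))
```

A coefficient close to +1 suggests the test ranks examinees much as the criterion does; how high it must be depends on the purpose the scores will serve.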

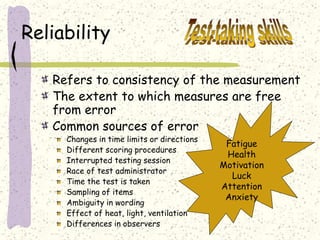

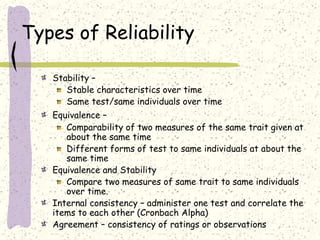

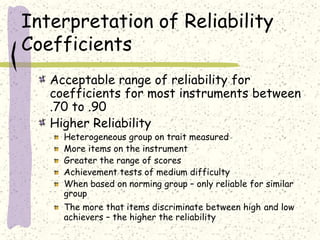

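2. Reliability concerns the consistency of measurements and their freedom from measurement error. It is assessed through stability (test-retest), equivalence (parallel or alternate forms), internal consistency (for example, split-half or Cronbach's alpha), and agreement (between raters or scorers). Reliability estimates tend to be higher for more heterogeneous groups and for items that discriminate better between high- and low-scoring examinees.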
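Two of the reliability approaches above have standard closed-form estimates: Cronbach's alpha for internal consistency, $\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_i \sigma_i^2}{\sigma_X^2}\right)$, and Cohen's kappa for agreement between two raters, $\kappa = \frac{p_o - p_e}{1 - p_e}$. The following is a minimal sketch using only the Python standard library; the function names and all sample data are hypothetical. Stability and equivalence would instead be estimated by correlating scores from two administrations or two forms.

```python
from collections import Counter
from statistics import pvariance


def cronbach_alpha(item_scores):
    """Internal-consistency estimate.

    item_scores: one row per examinee, each row holding that examinee's k item scores.
    """
    k = len(item_scores[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    columns = list(zip(*item_scores))  # one tuple of scores per item
    item_var_sum = sum(pvariance(col) for col in columns)
    total_var = pvariance([sum(row) for row in item_scores])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - item_var_sum / total_var)


def cohen_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters' categorical judgments."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty, equal-length rating lists")
    n = len(rater_a)
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected by chance, from each rater's marginal category counts
    categories = counts_a.keys() | counts_b.keys()
    p_expected = sum(counts_a[c] * counts_b[c] for c in categories) / n ** 2
    if p_expected == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical data: 5 examinees x 4 items, each item scored 1-5
scores = [
    [3, 4, 3, 4],
    [2, 2, 3, 2],
    [4, 5, 4, 5],
    [1, 2, 1, 2],
    [3, 3, 4, 3],
]
print(round(cronbach_alpha(scores), 3))

# Hypothetical pass/fail judgments from two raters on the same six essays
rater_1 = ["pass", "pass", "fail", "pass", "fail", "pass"]
rater_2 = ["pass", "fail", "fail", "pass", "fail", "pass"]
print(round(cohen_kappa(rater_1, rater_2), 3))
```

Because alpha divides by the variance of total scores, a more homogeneous group (smaller $\sigma_X^2$) tends to lower the estimate, which is one way to see why heterogeneous groups yield higher reliability.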

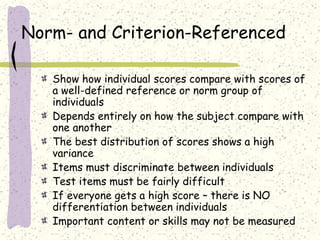

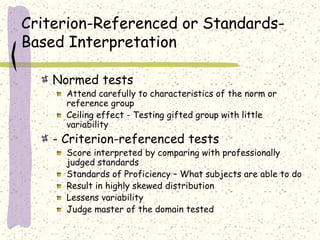

3. Tests can be norm-referenced, comparing an examinee's score with the scores of a peer (norm) group, or criterion-referenced, comparing the score with a predefined standard. Each approach has advantages and limitations, and the better choice depends on the testing goals.
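The difference between the two interpretations can be illustrated by reading one raw score both ways. In this sketch, the norm group, the cut score of 75, and the helper names are hypothetical. The percentile rank uses one common convention (the percentage scoring below, plus half of those tied); other definitions exist.

```python
def percentile_rank(score, norm_group):
    """Norm-referenced reading: percent of the norm group below `score`, plus half of ties."""
    if not norm_group:
        raise ValueError("norm group must not be empty")
    below = sum(s < score for s in norm_group)
    tied = sum(s == score for s in norm_group)
    return 100 * (below + 0.5 * tied) / len(norm_group)


def meets_criterion(score, cut_score):
    """Criterion-referenced reading: does the score reach a fixed standard?"""
    return score >= cut_score


# Hypothetical norm group and cut score
norm_group = [55, 60, 62, 68, 70, 72, 75, 80, 85, 90]
score = 72

print(percentile_rank(score, norm_group))
print(meets_criterion(score, cut_score=75))
```

Here a score of 72 falls at the 55th percentile of the norm group but does not meet the 75-point standard, so the same score can look acceptable under one interpretation and insufficient under the other.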