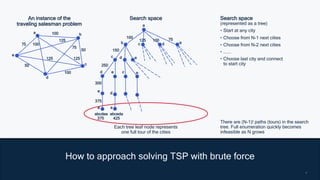

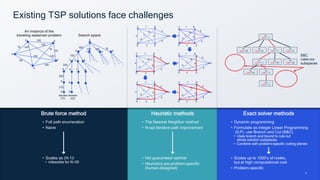

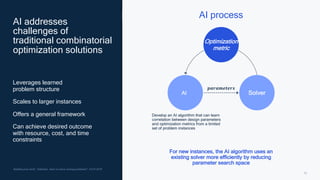

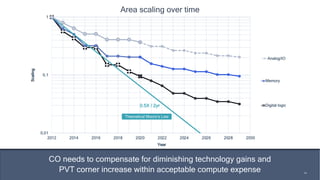

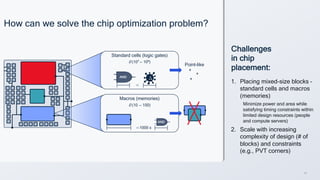

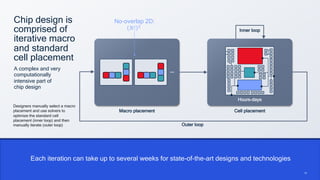

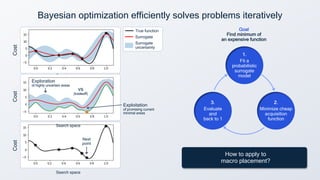

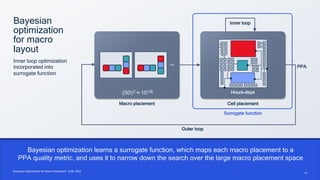

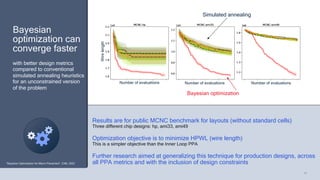

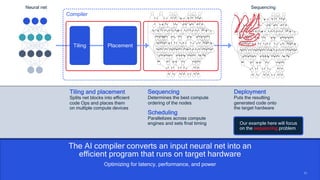

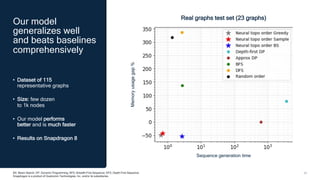

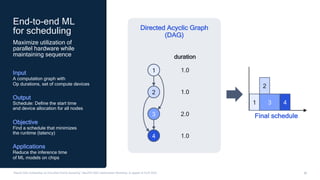

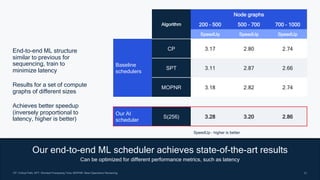

Chris Lott discusses the challenges of combinatorial optimization and how AI-based methods can improve efficiency in fields such as chip design and compiler optimization. He addresses the limitations of traditional methods and presents AI-driven techniques, including Bayesian optimization and machine learning, that aim to improve performance and reduce cost. Qualcomm's AI research aims to apply these techniques to complex combinatorial problems across a range of industries.