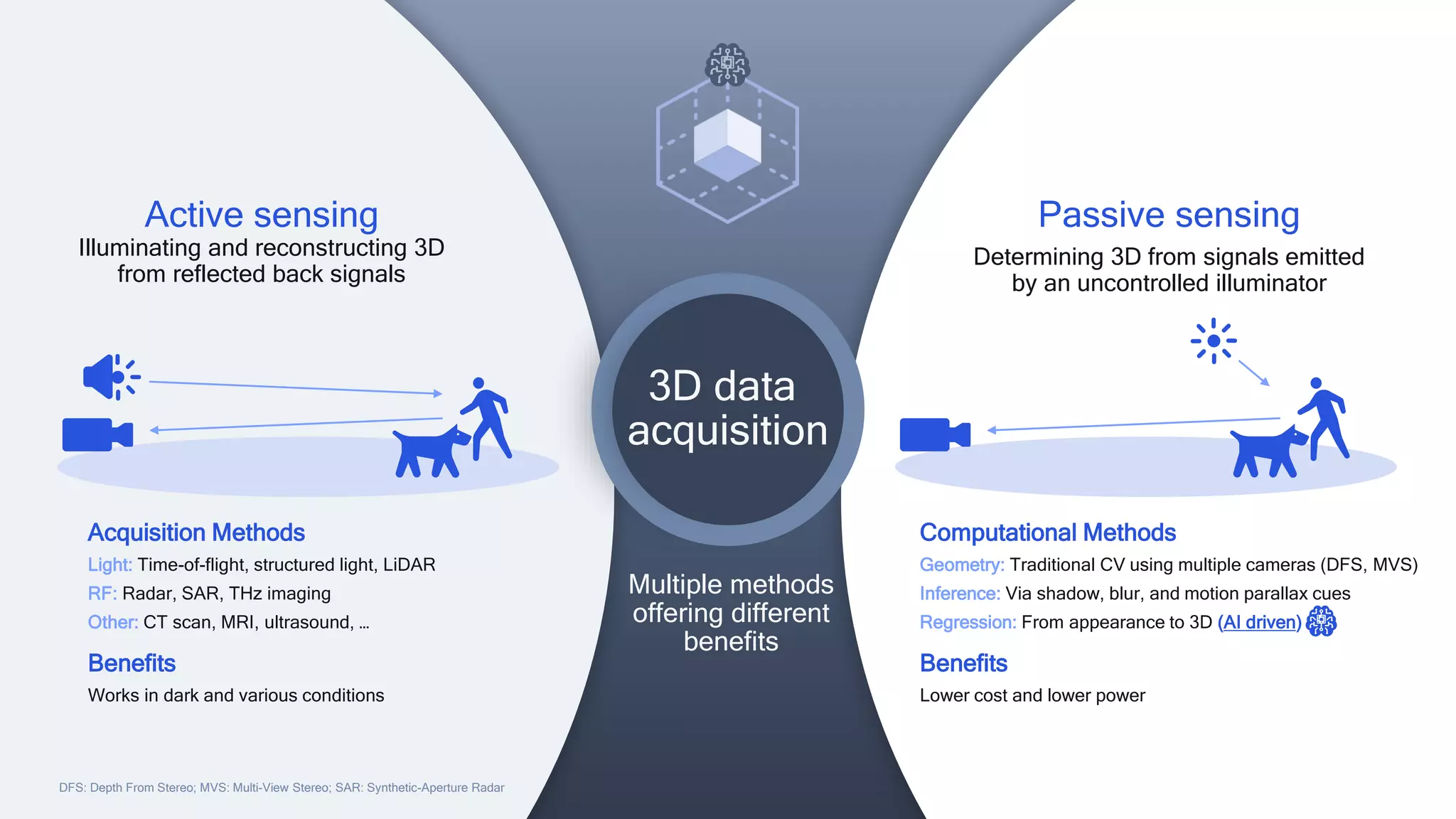

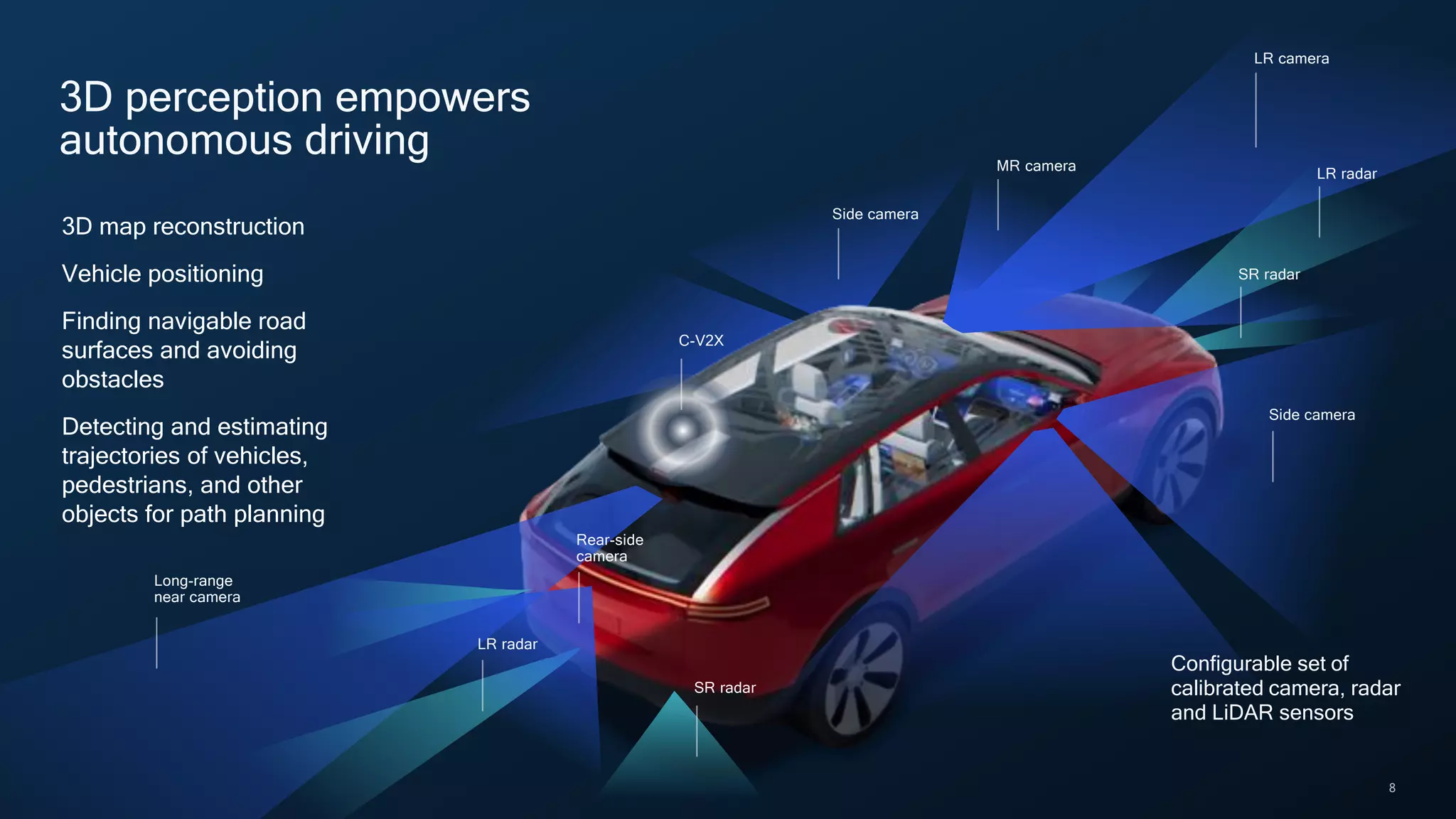

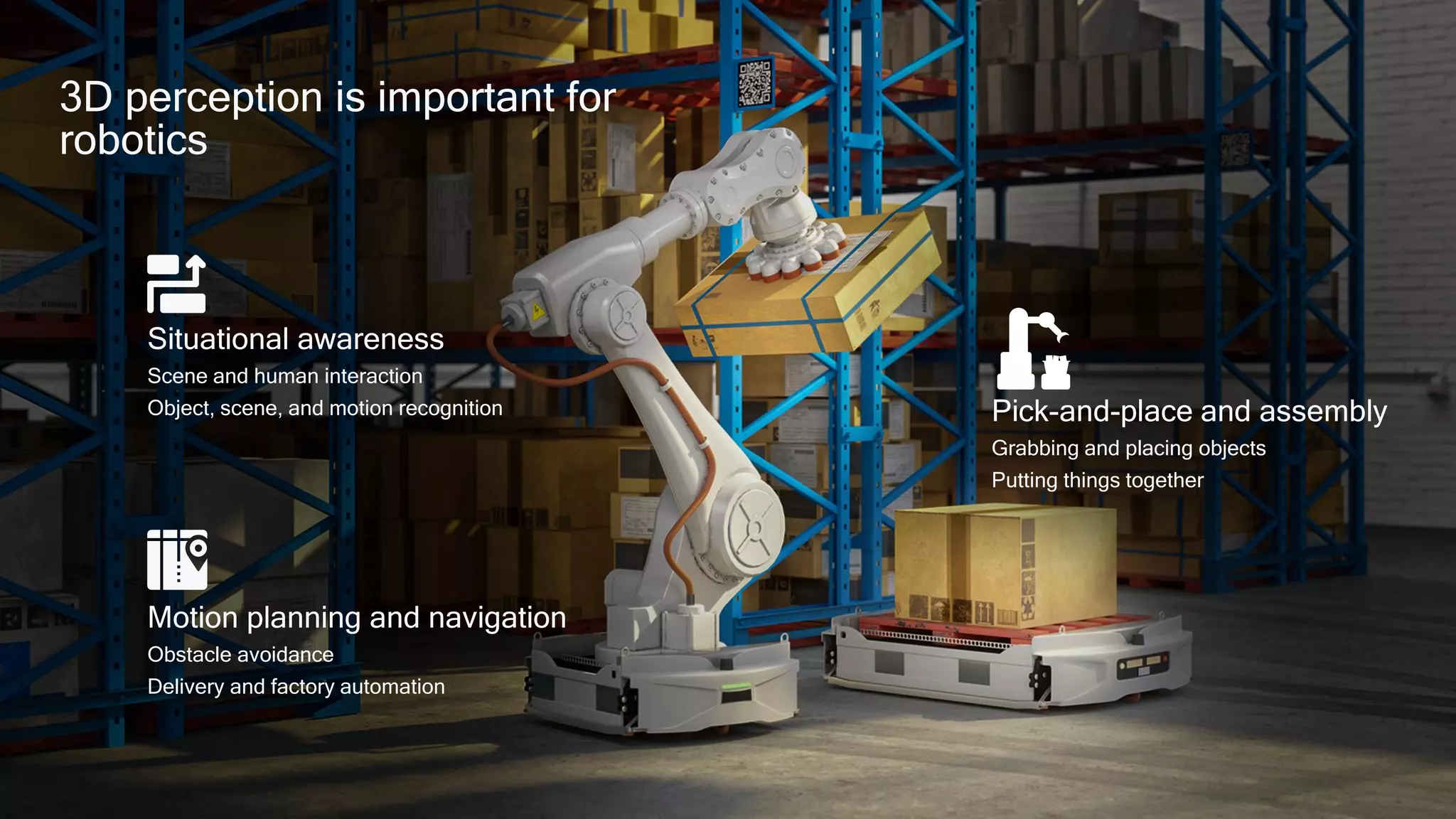

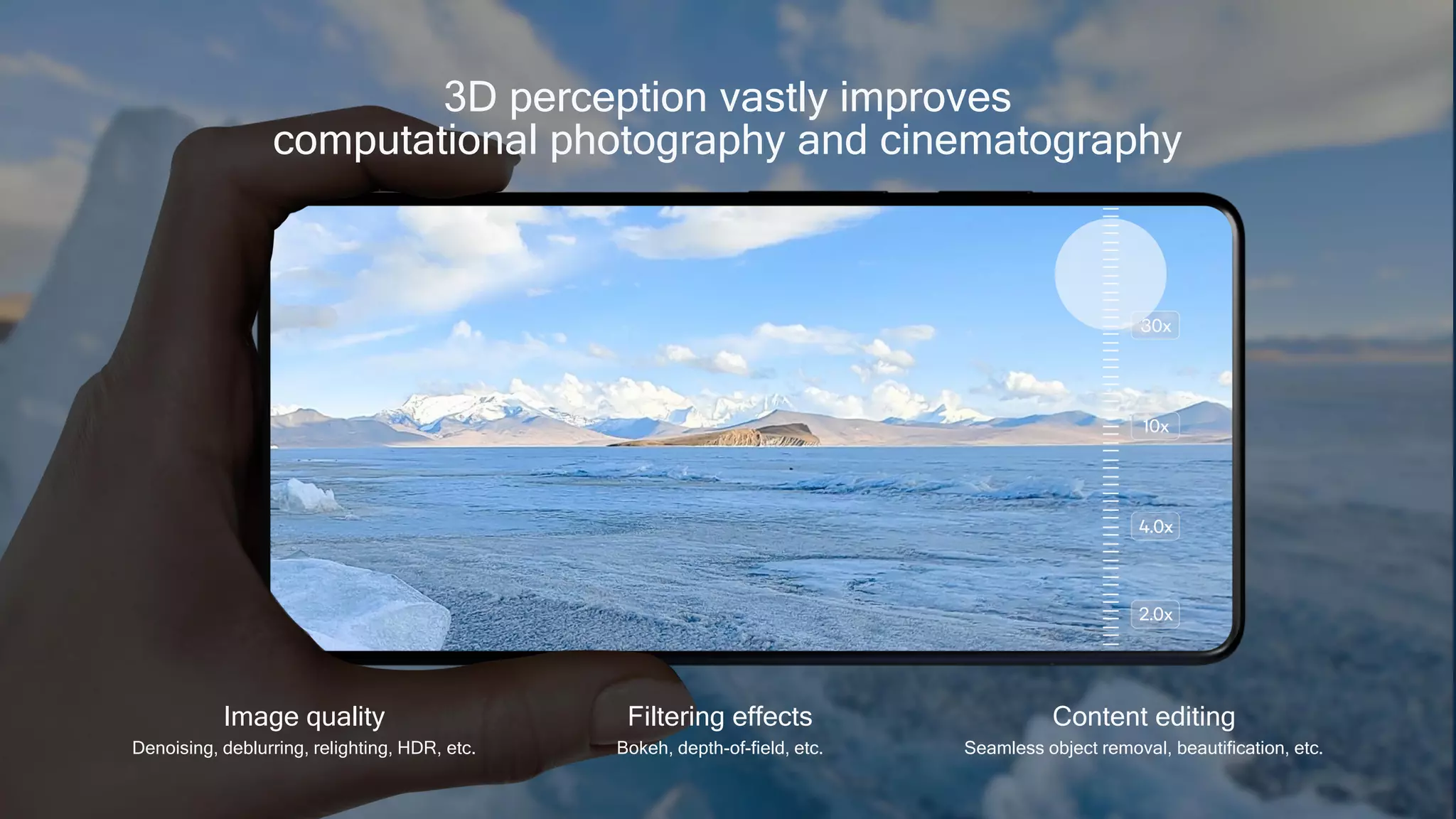

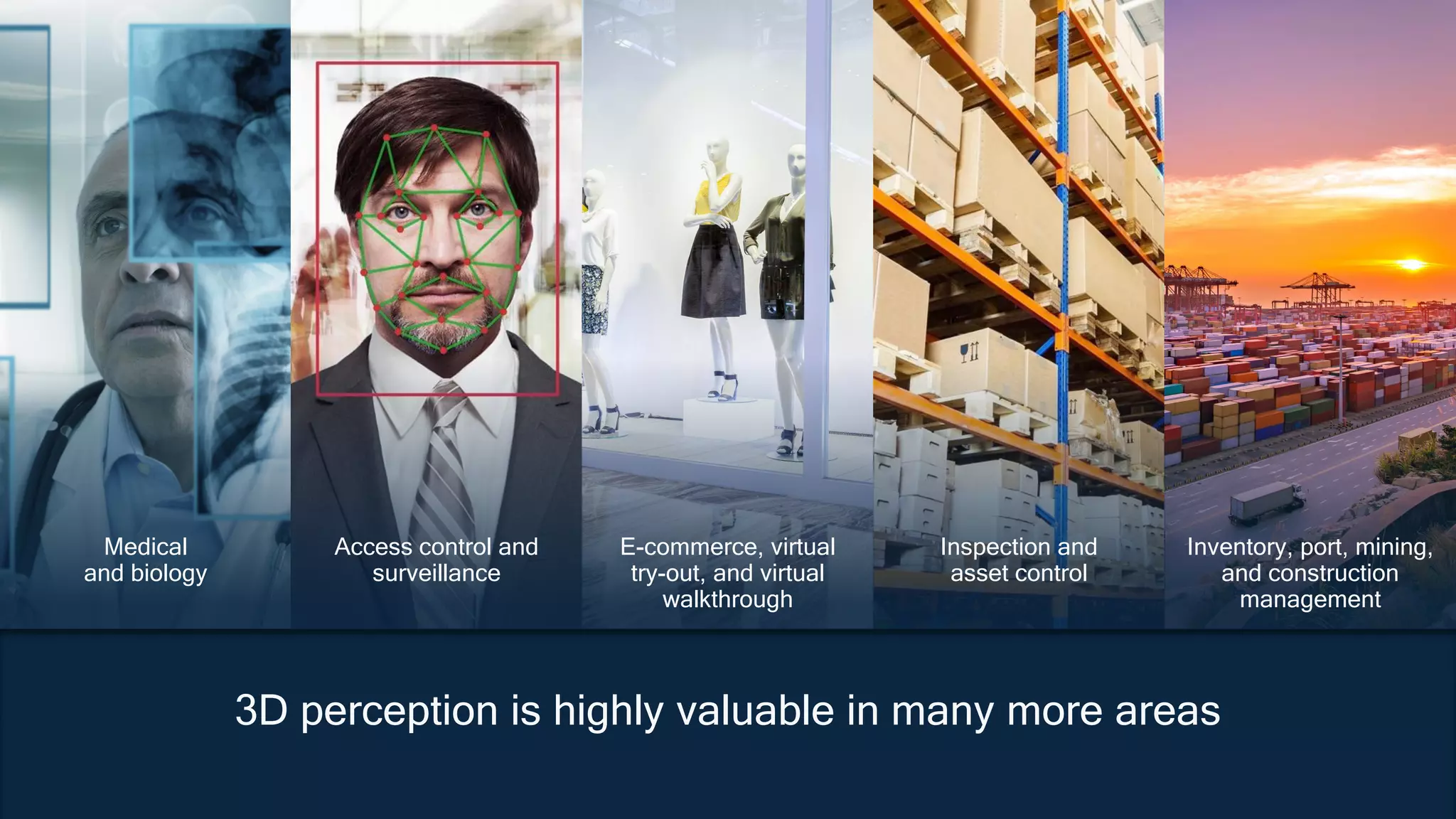

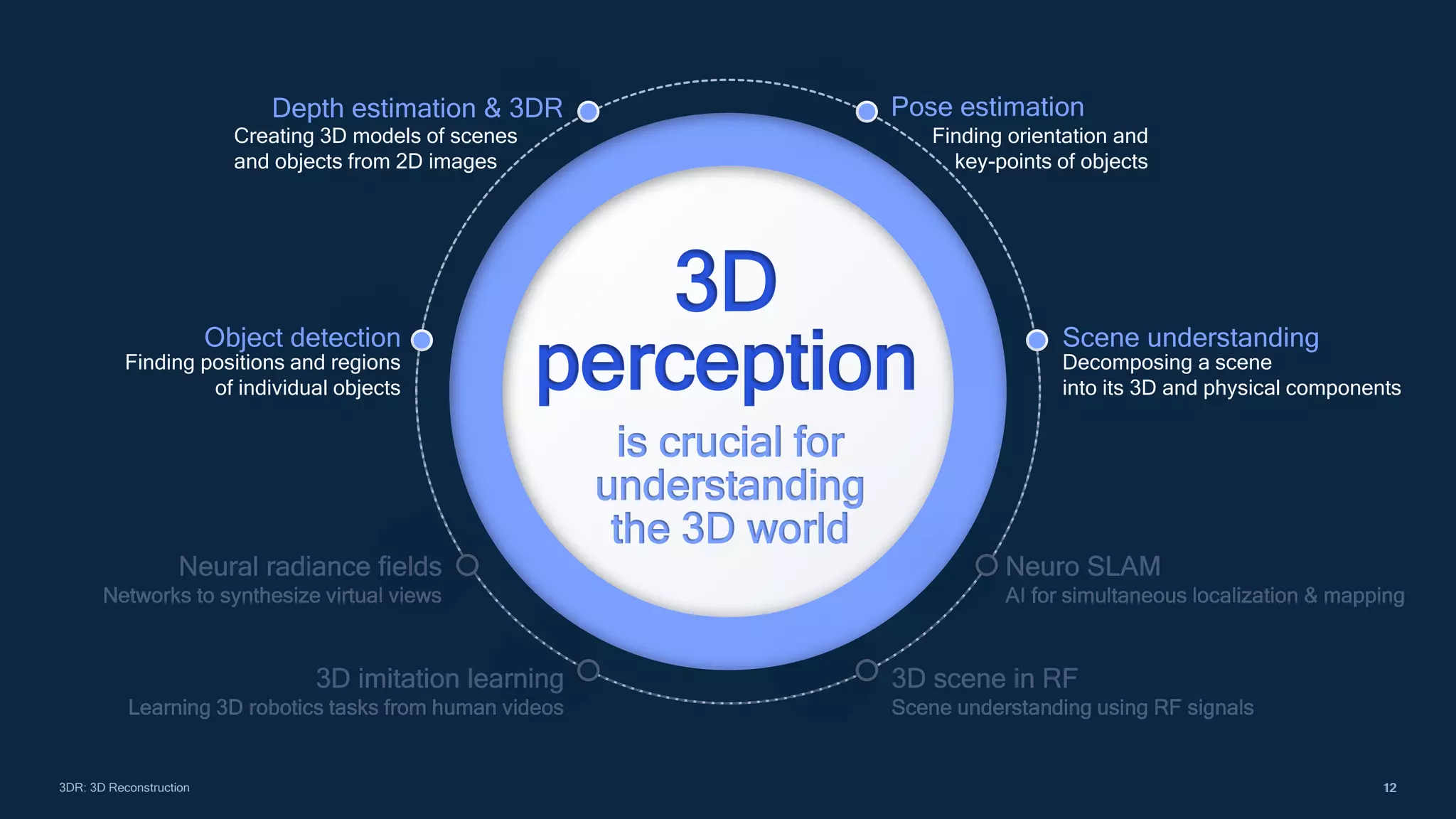

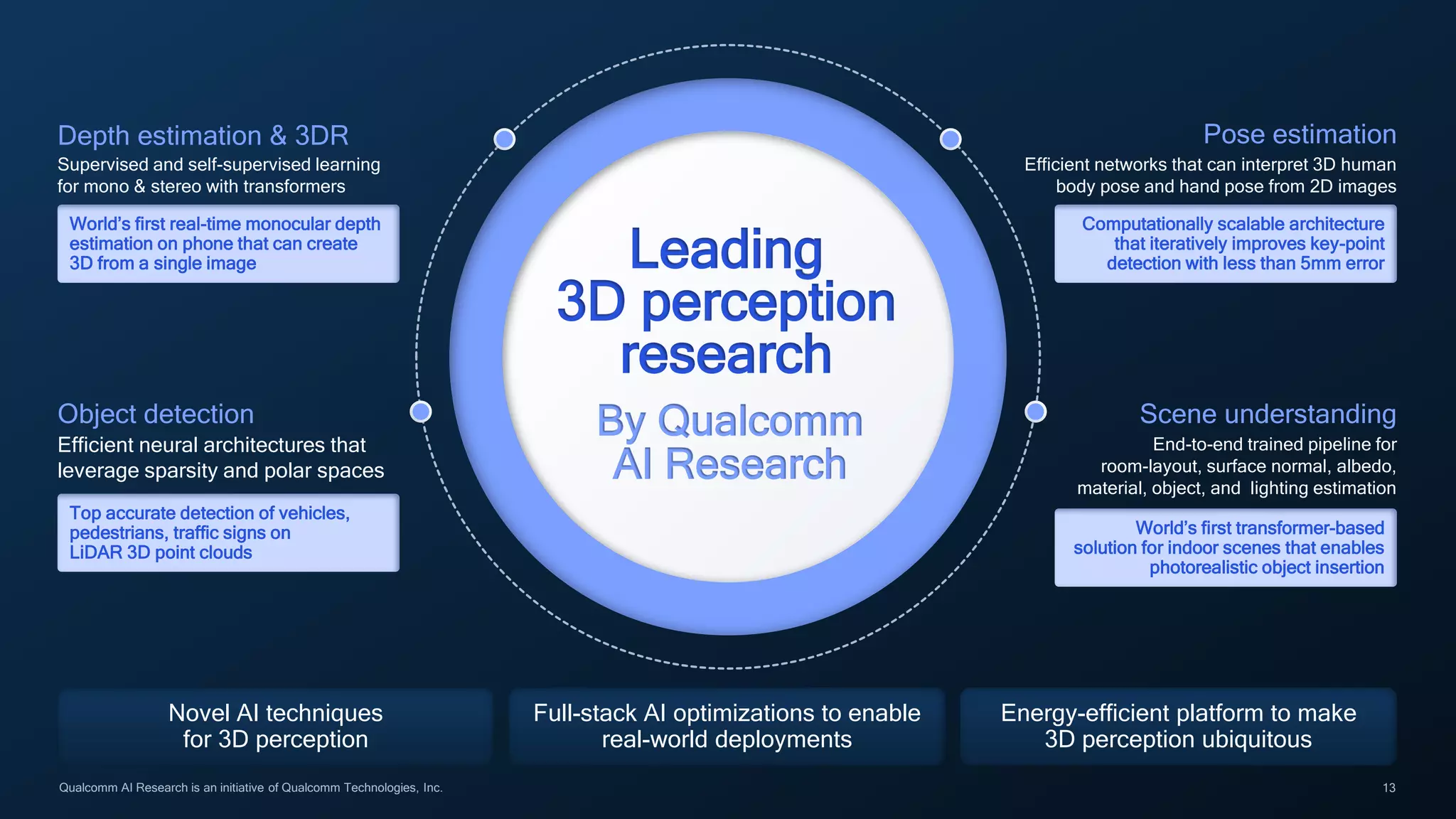

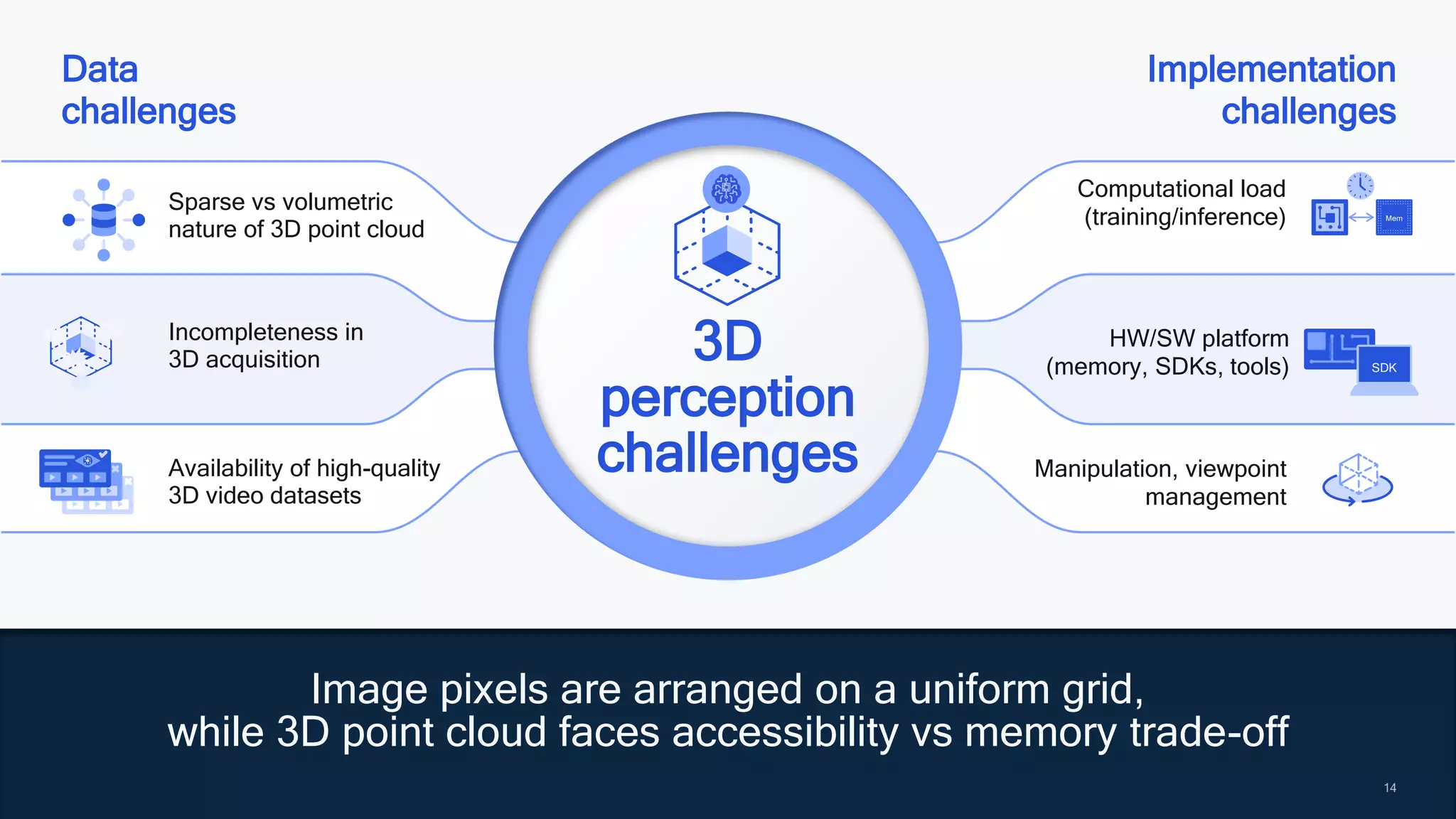

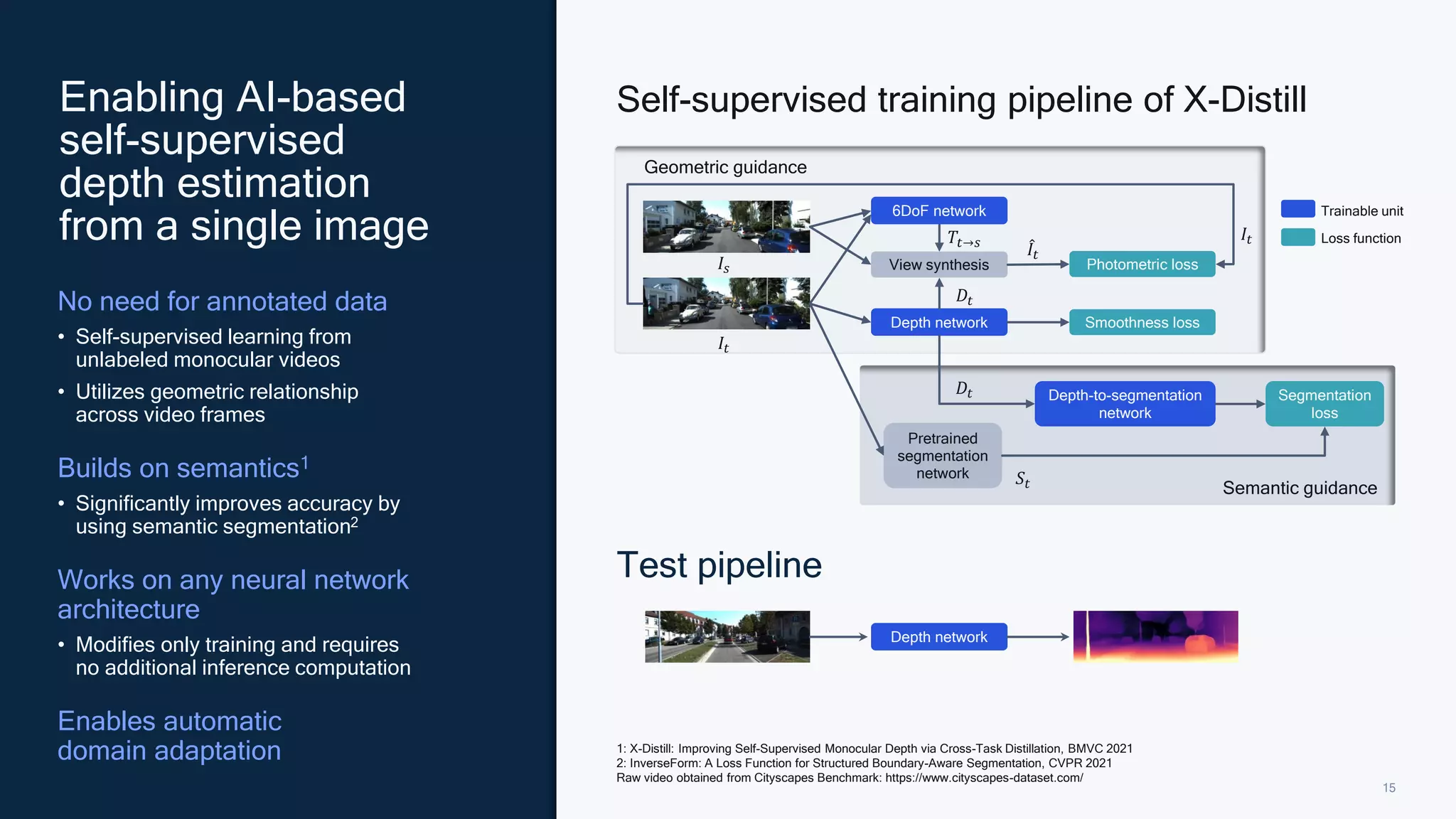

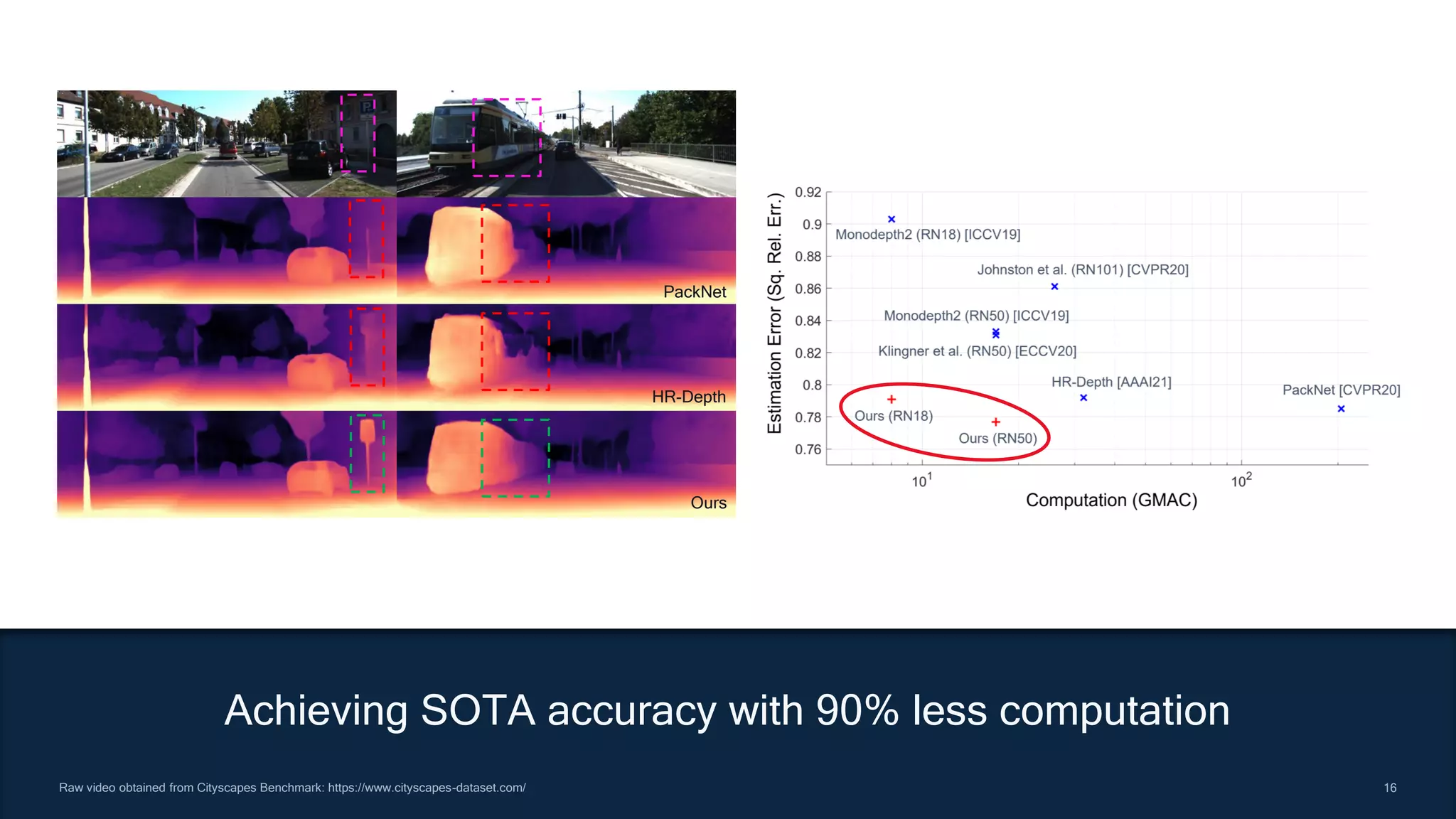

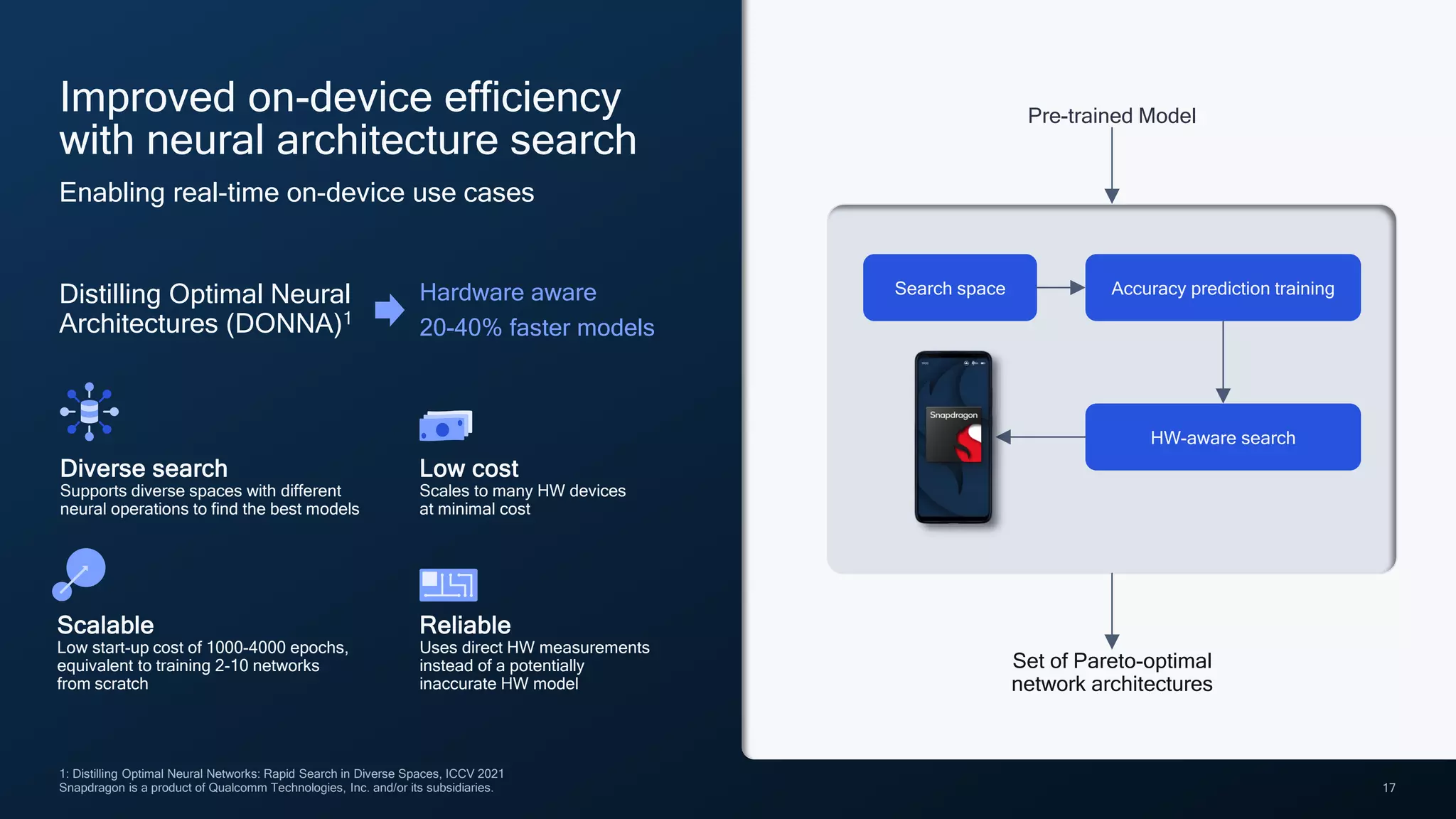

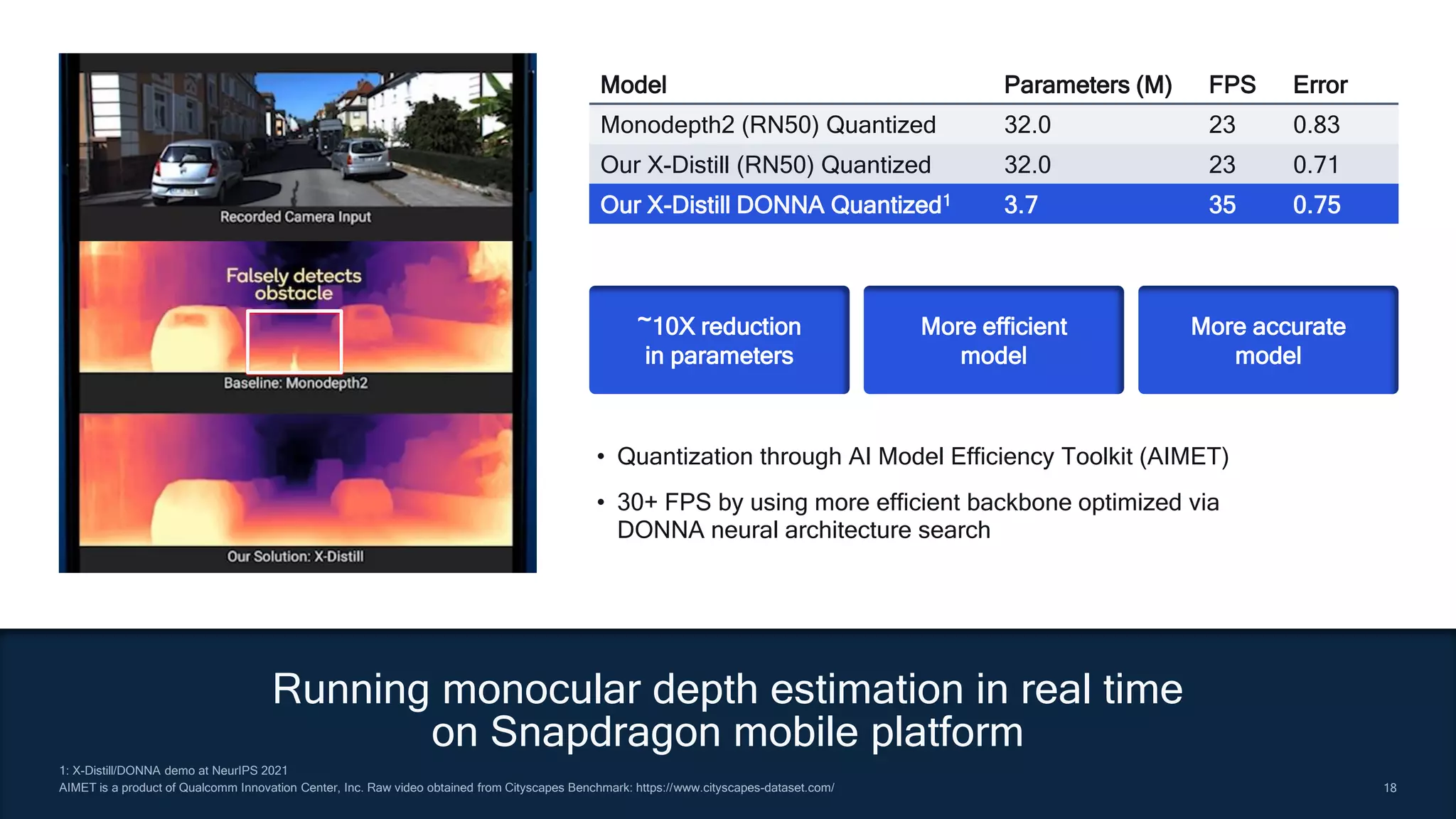

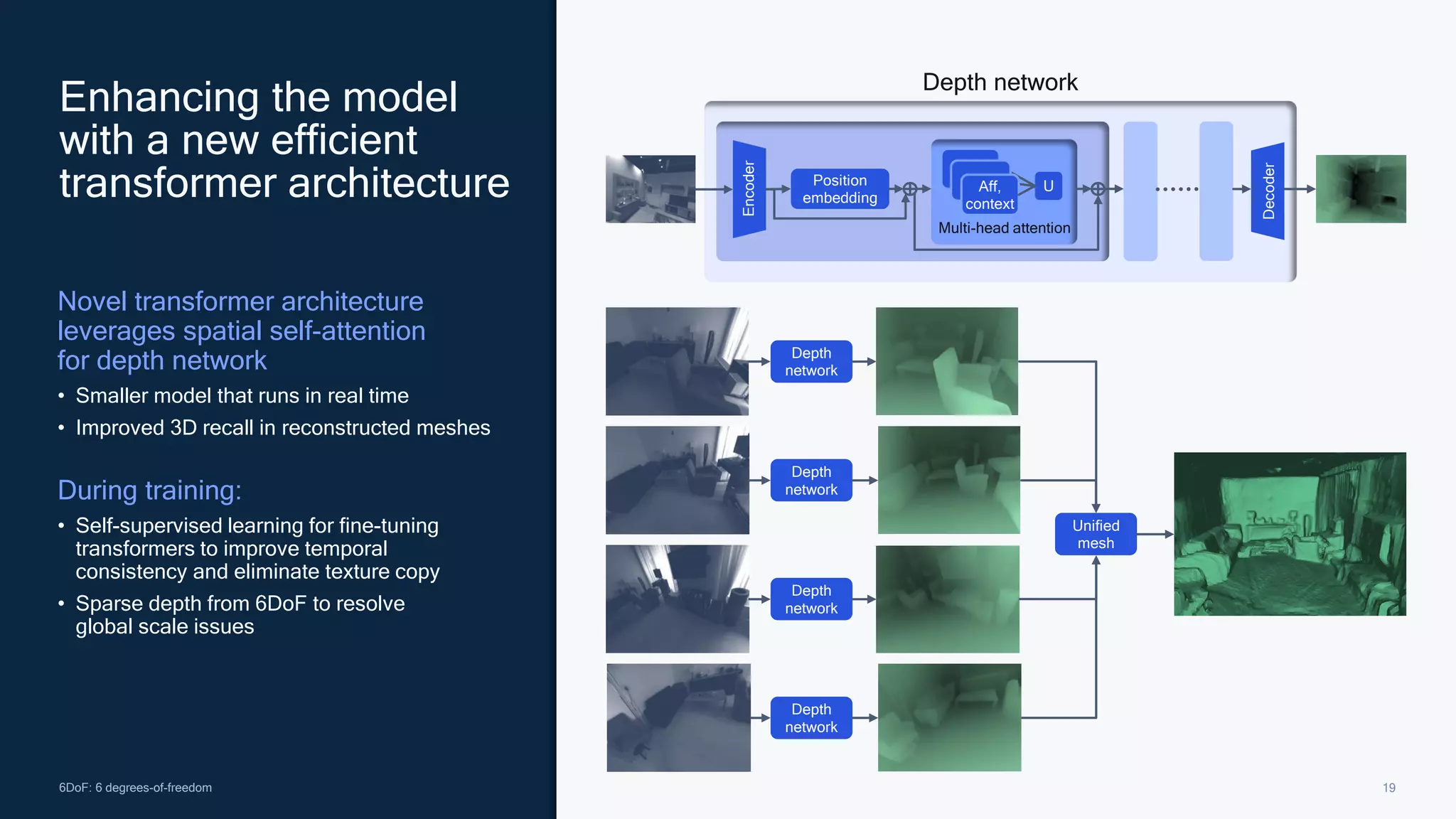

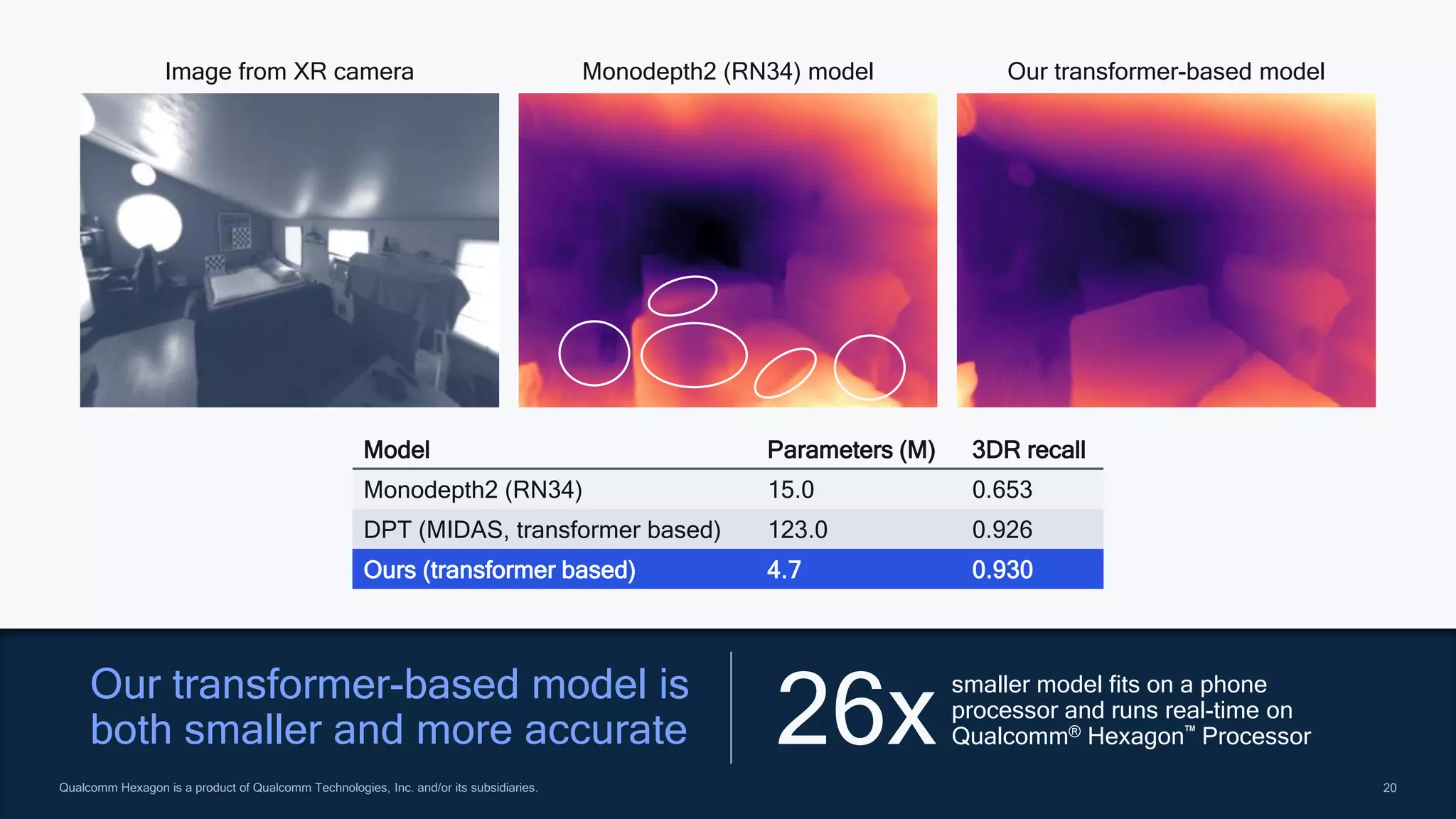

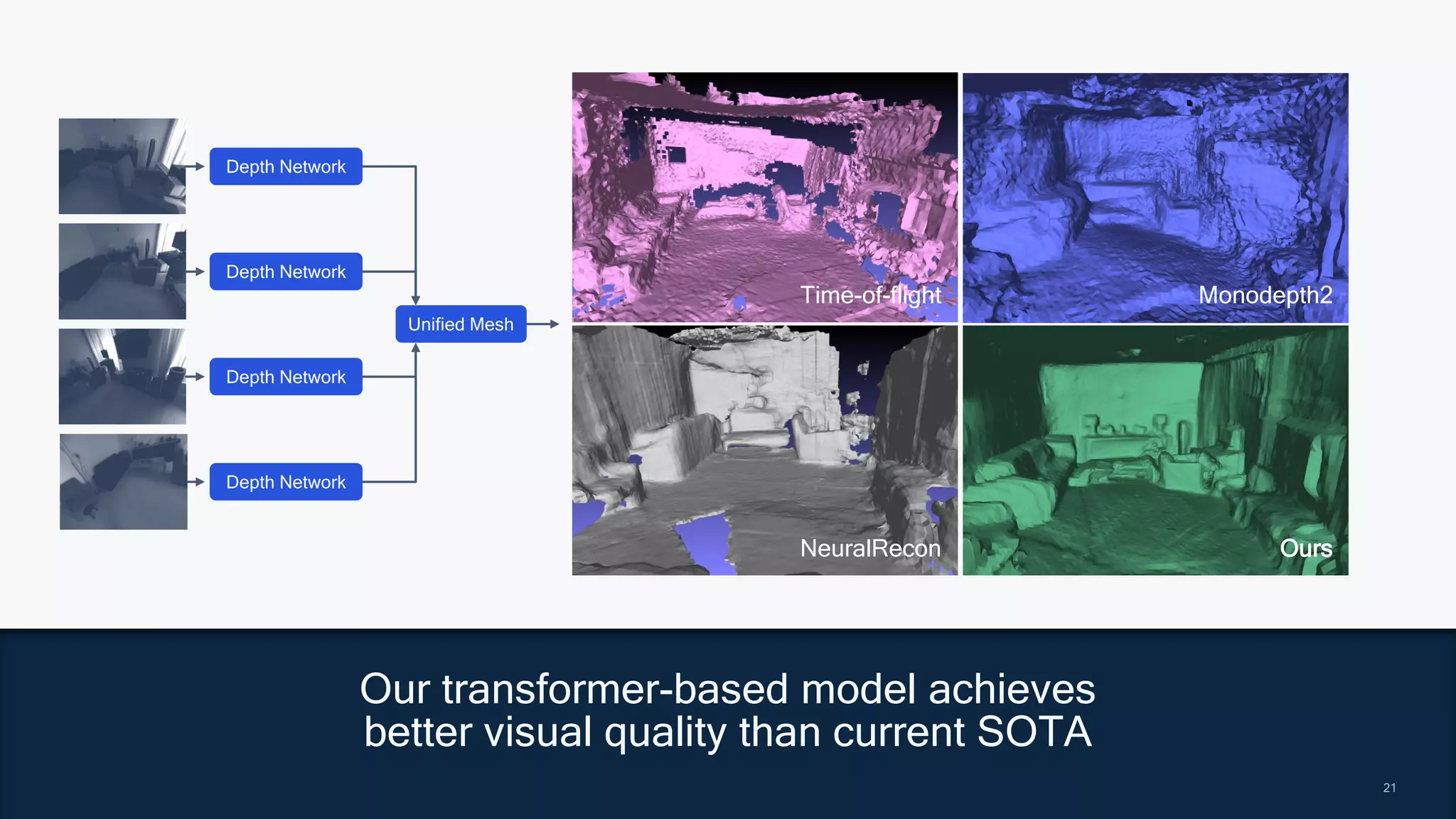

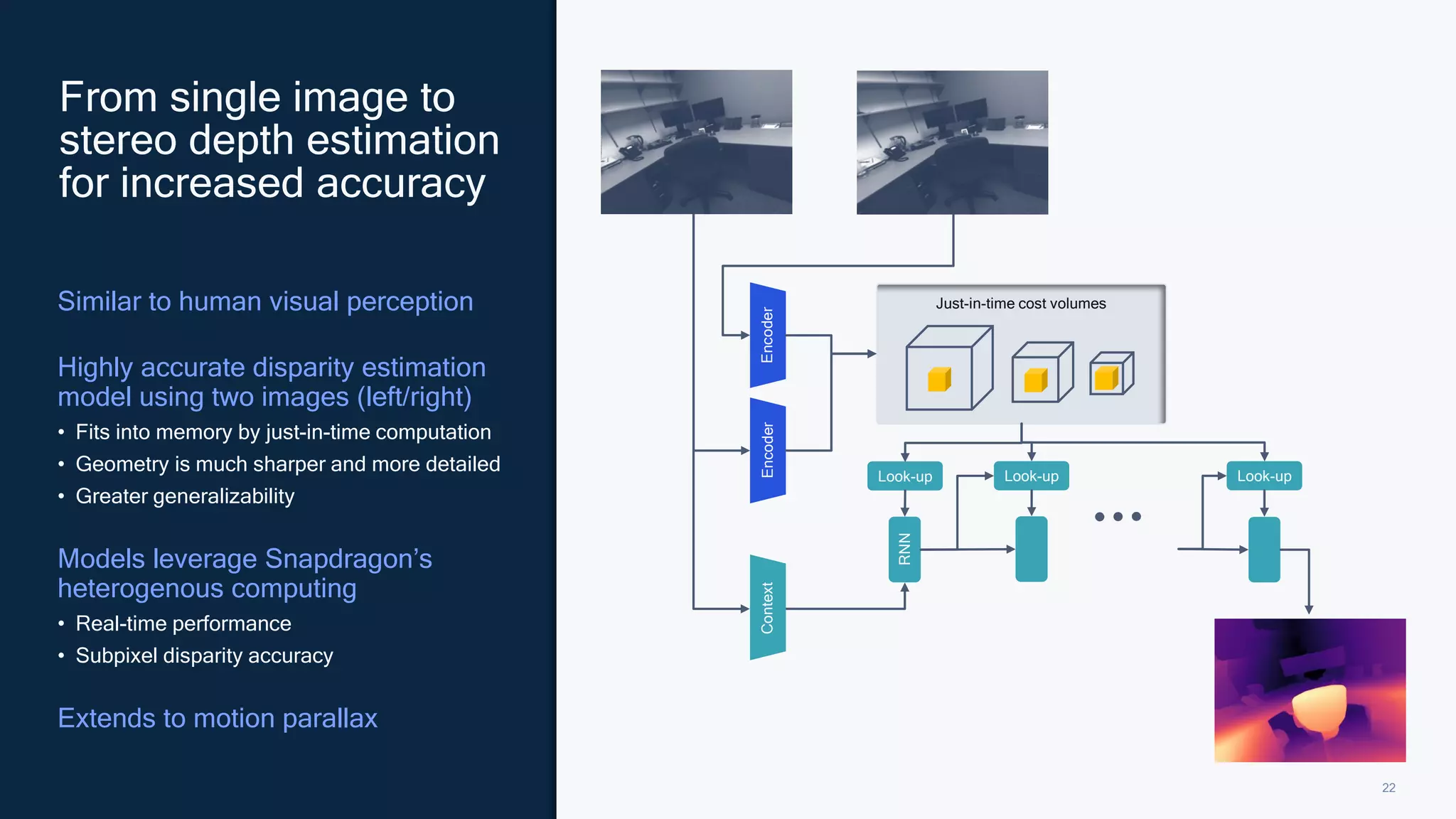

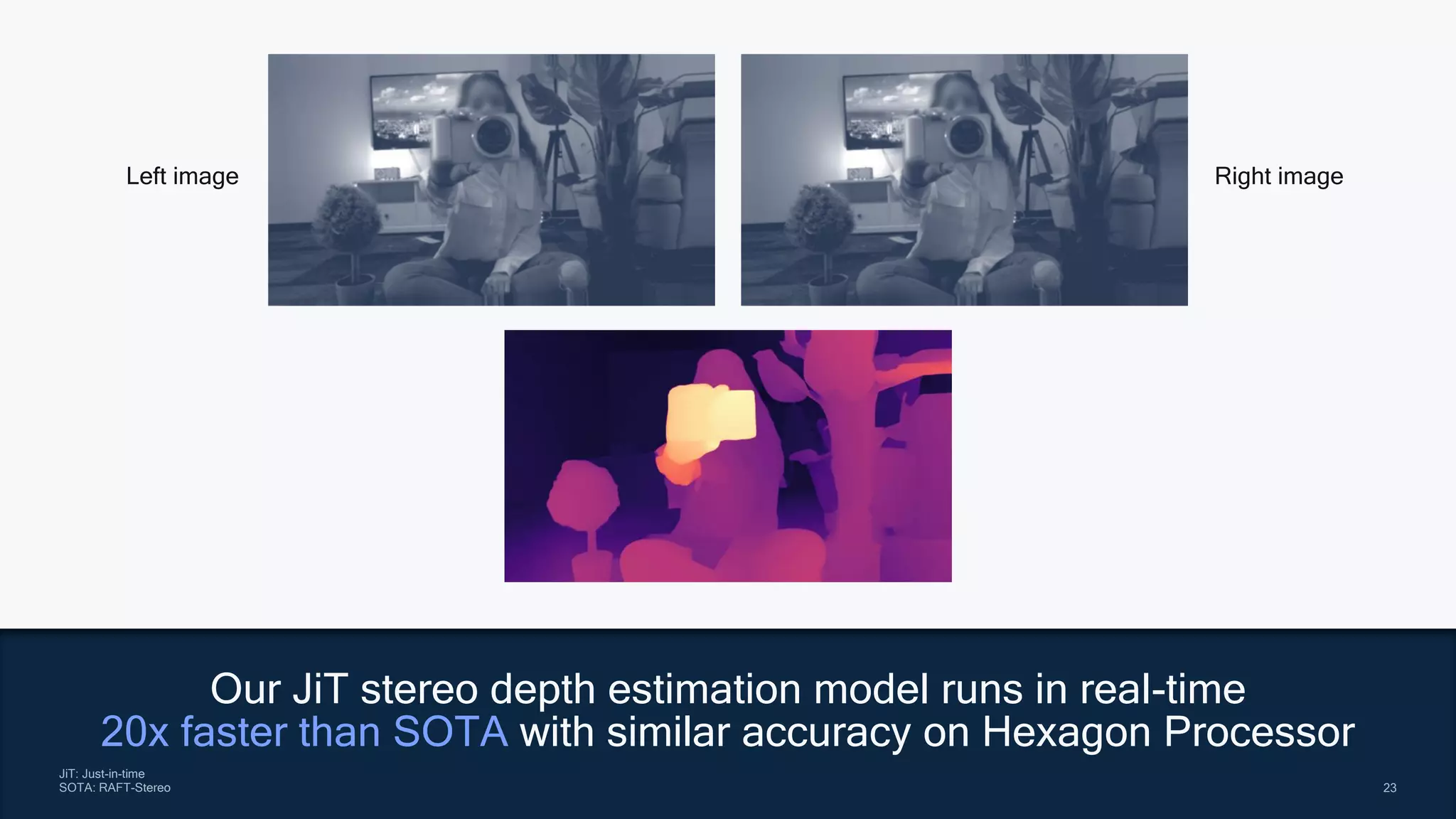

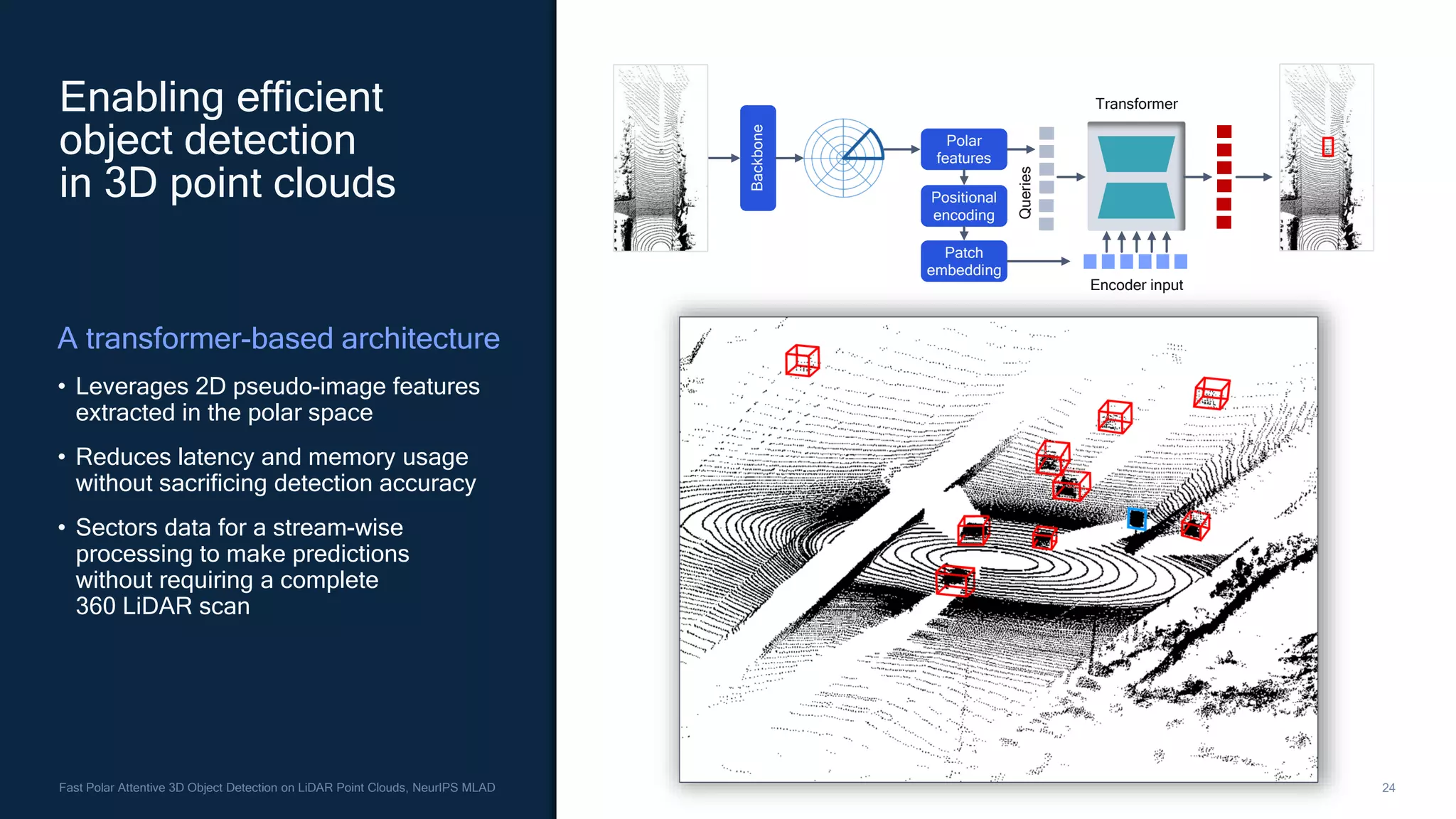

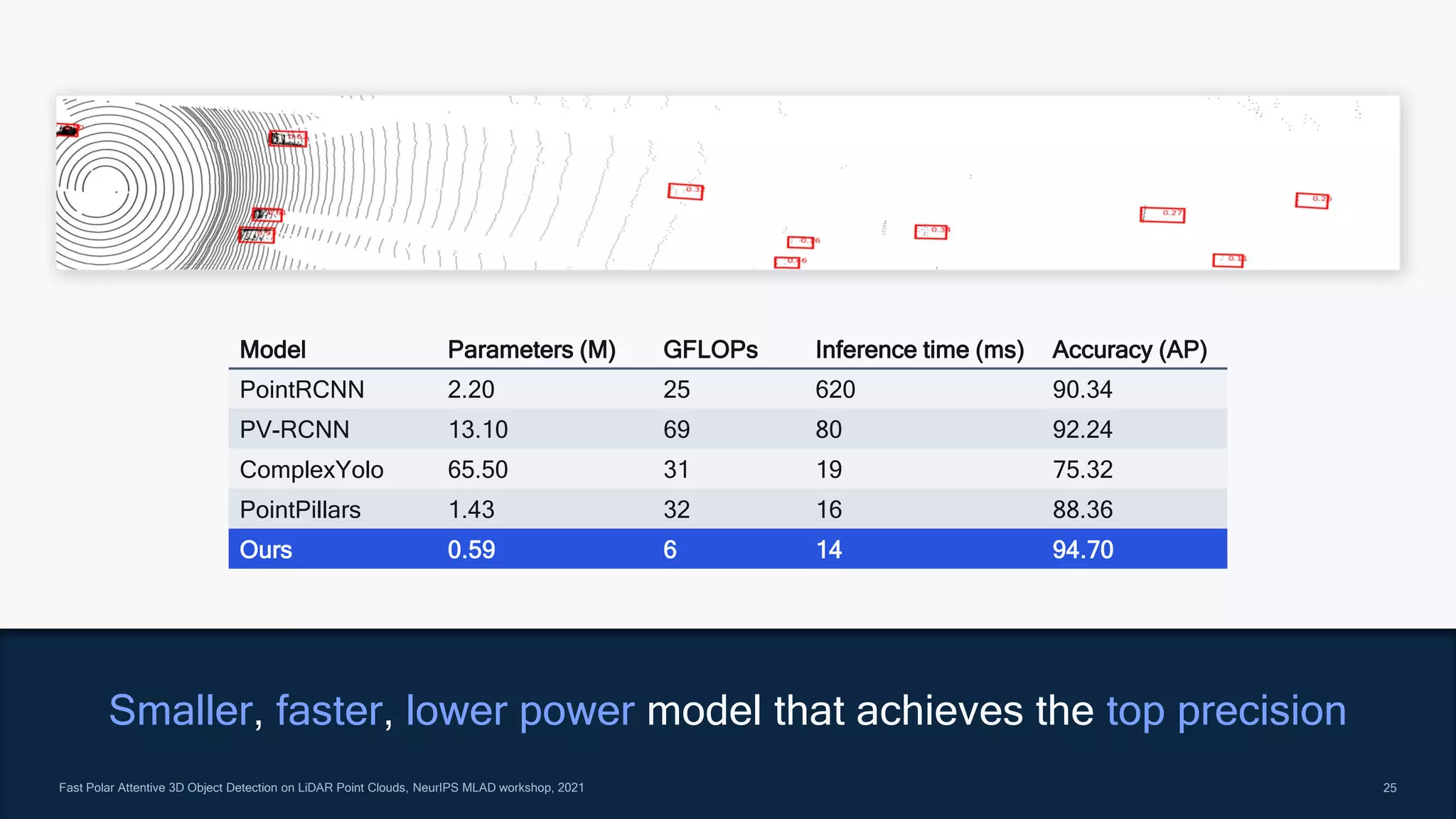

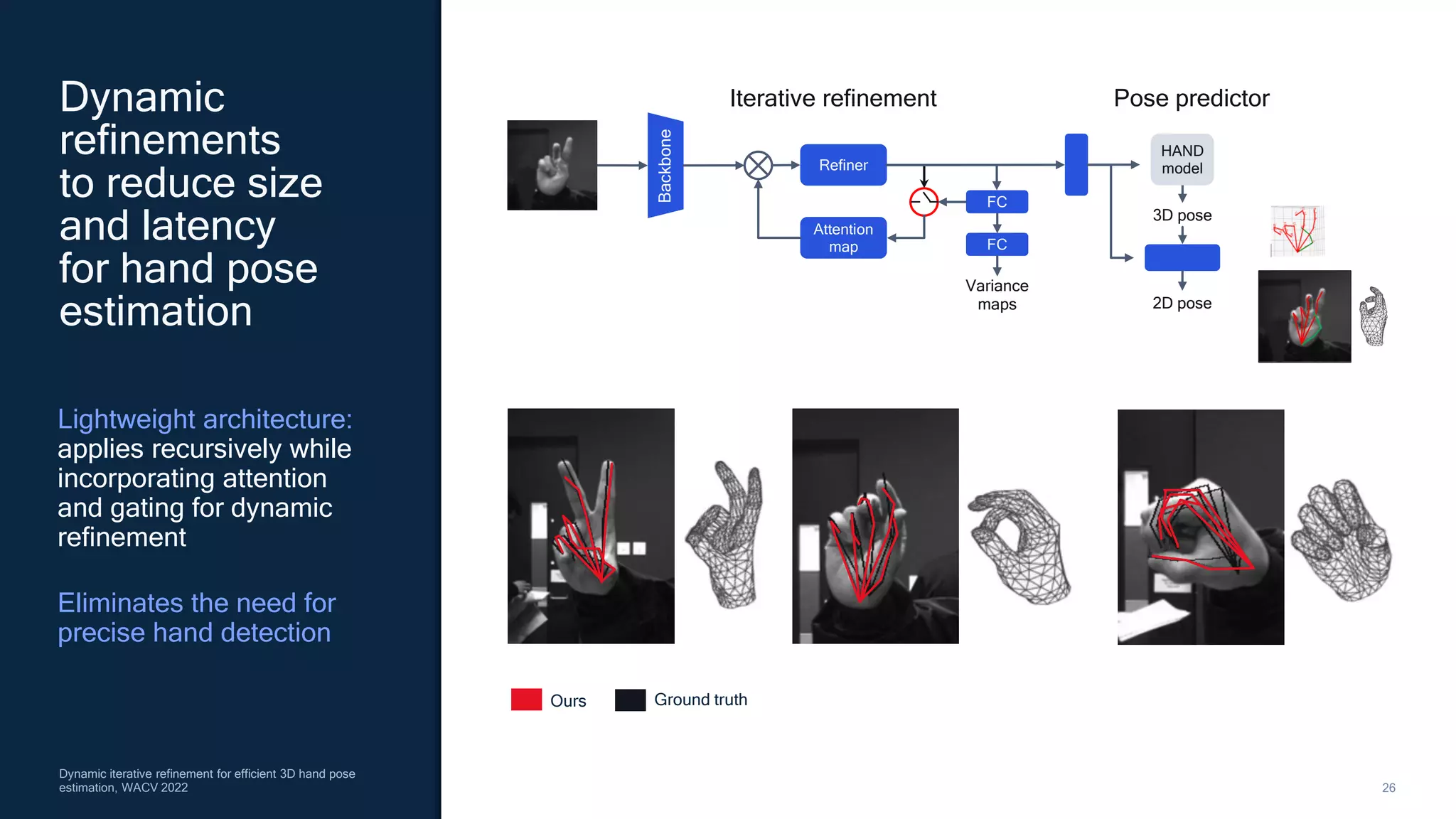

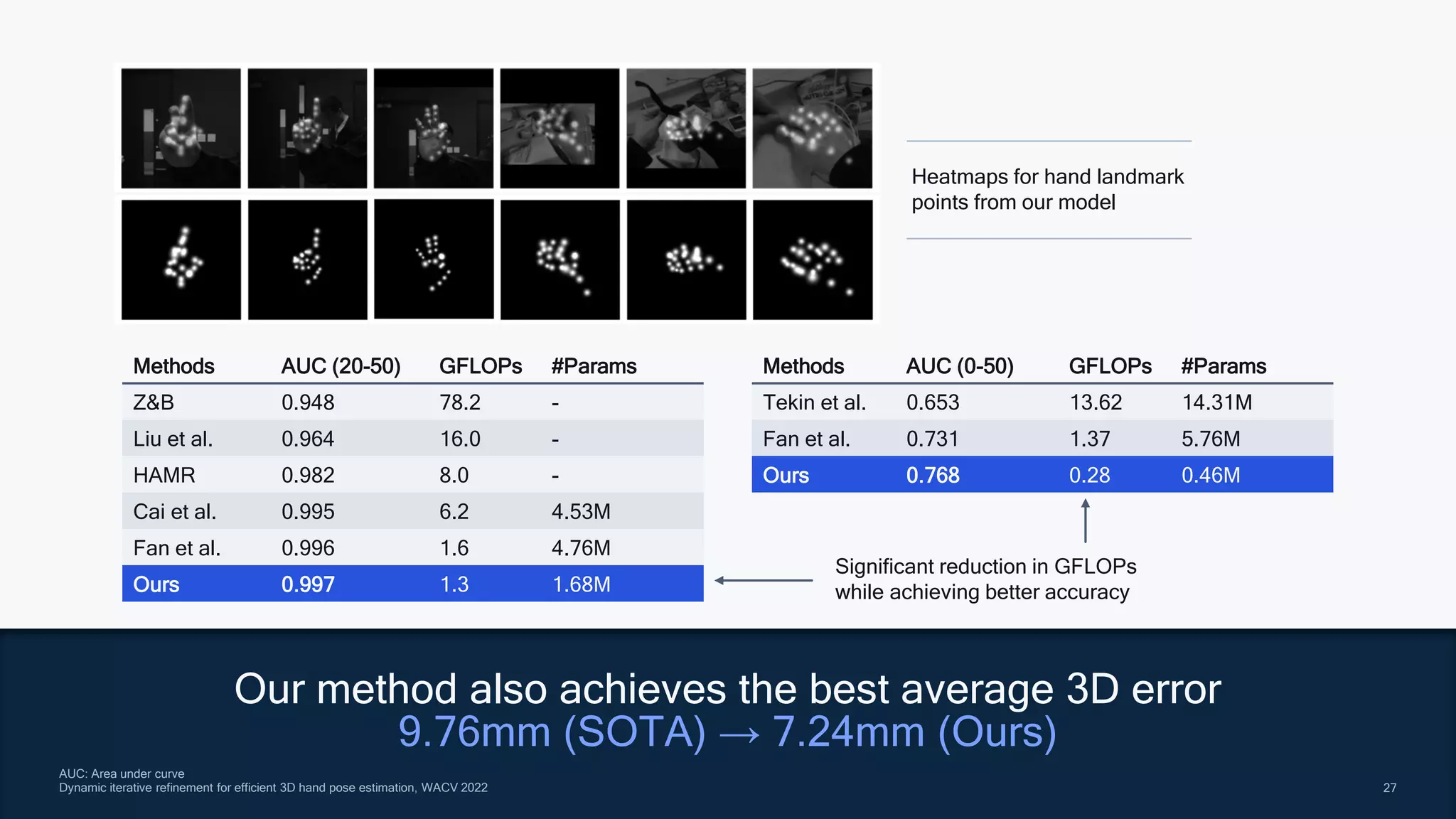

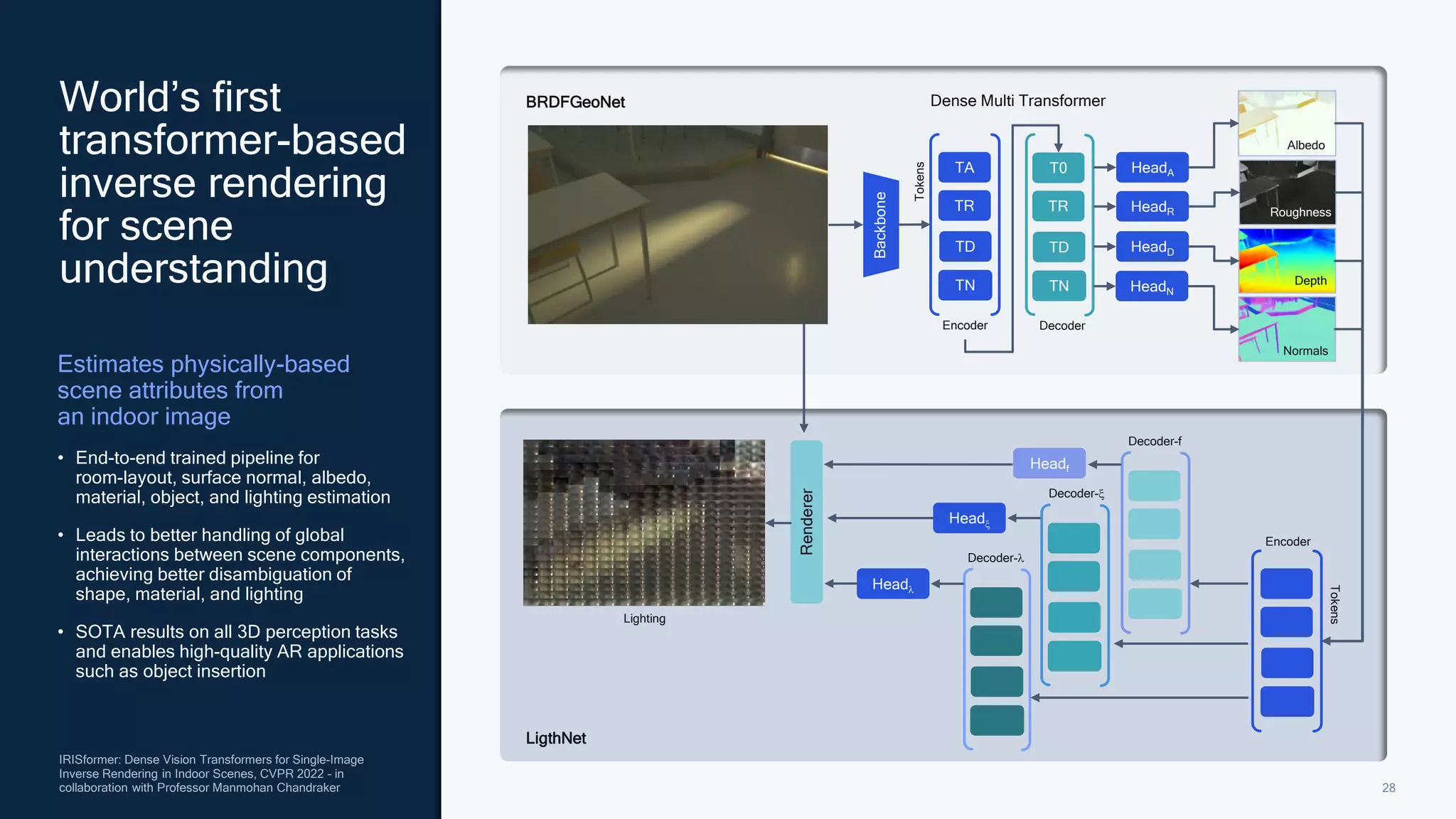

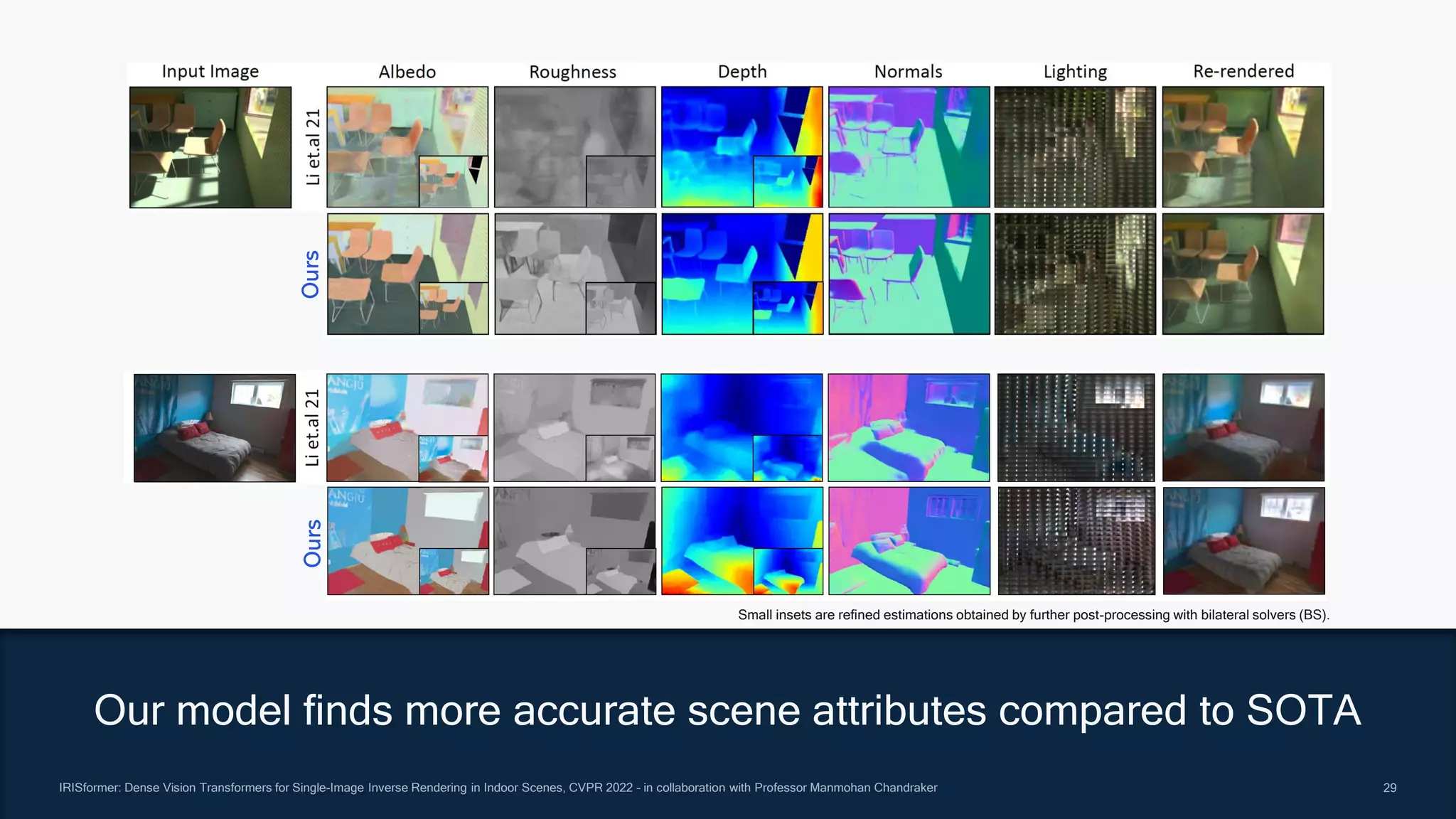

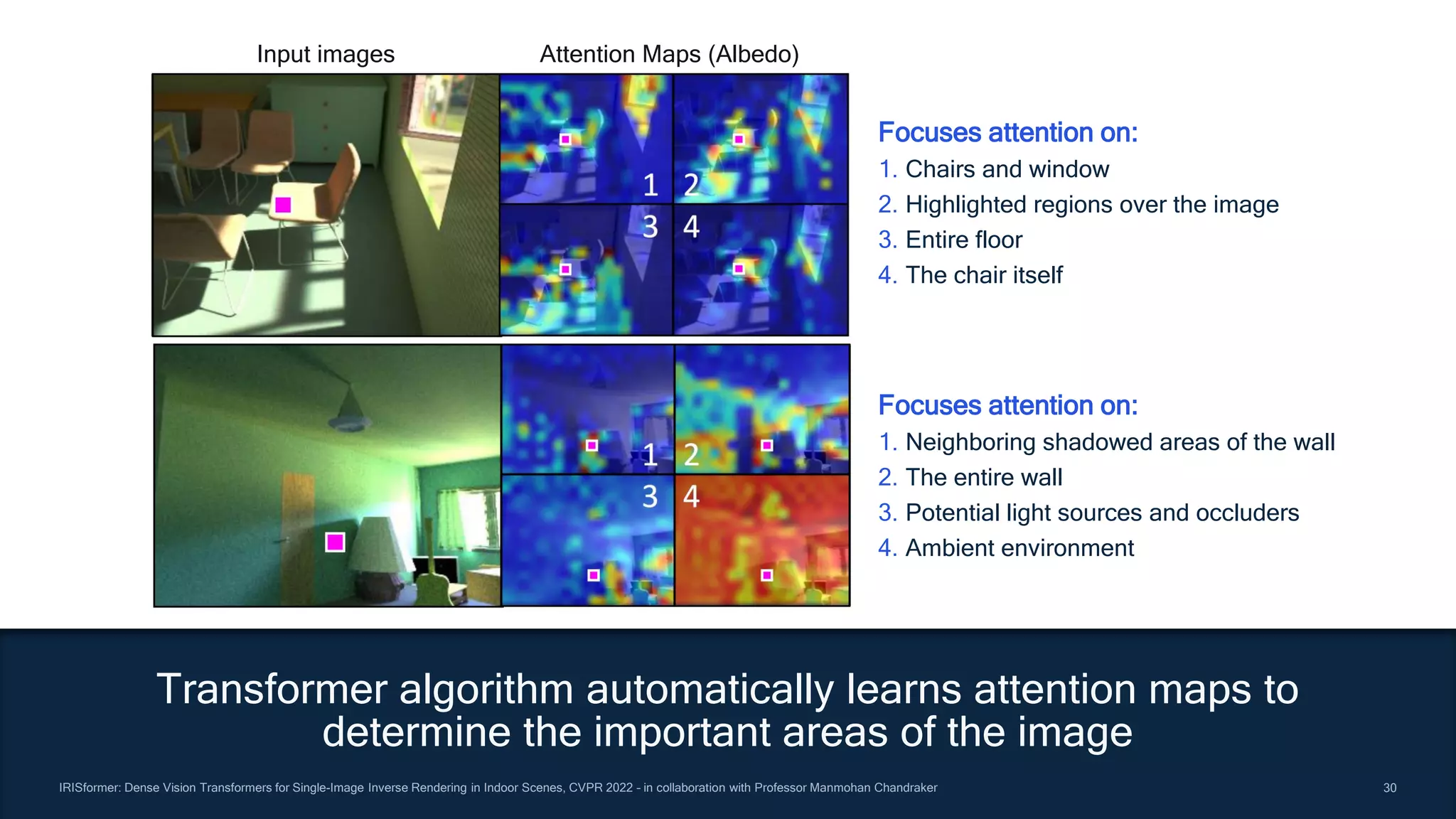

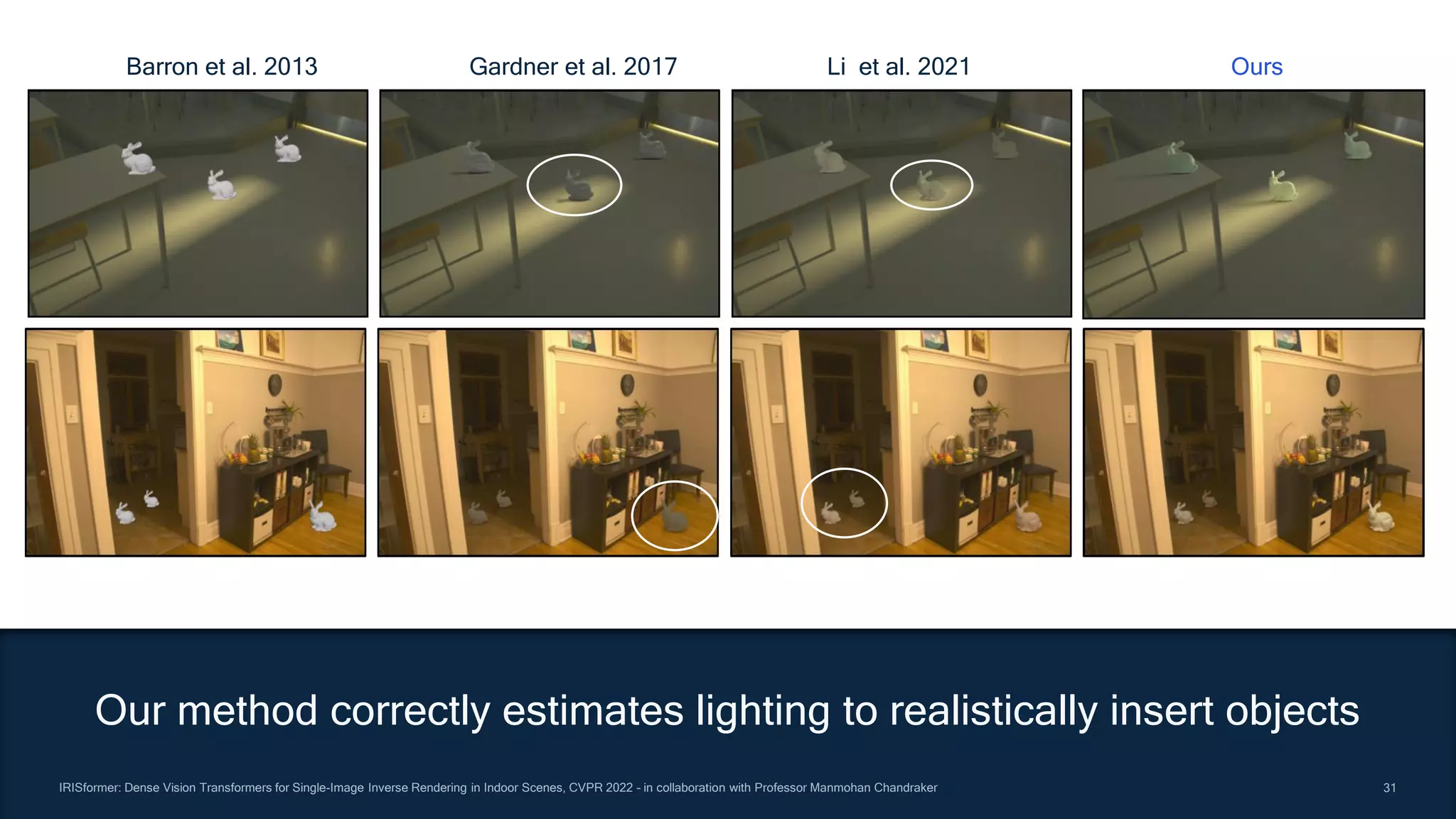

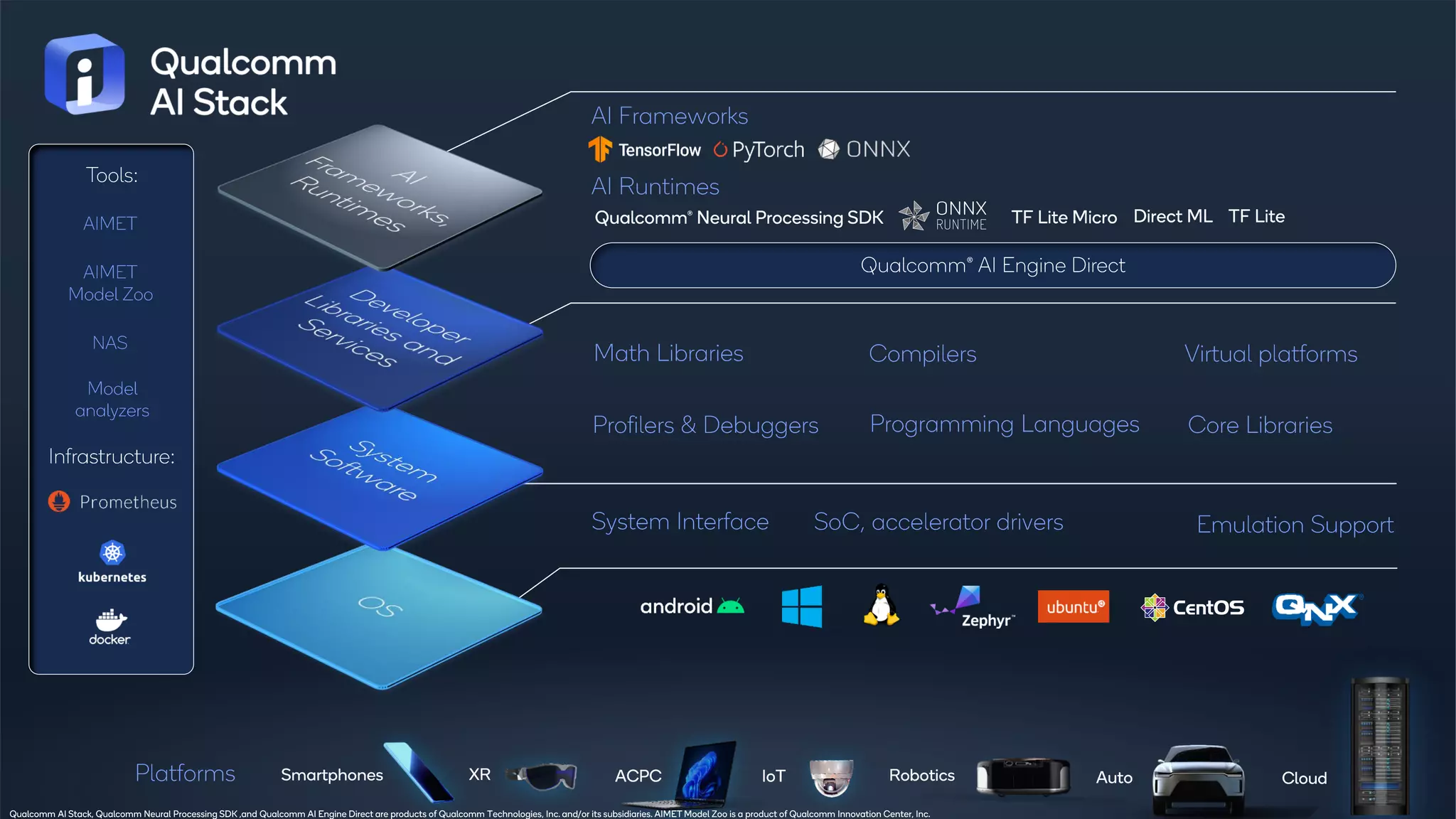

Qualcomm Technologies outlines advances in 3D perception and its applications, emphasizing its advantages over 2D perception, including improved object recognition and motion estimation, in fields such as autonomous driving, robotics, and computational photography. The document discusses methods for 3D data acquisition, describes ongoing research projects, and highlights pioneering AI techniques that enhance capabilities on mobile devices and other platforms. Future research directions aim to overcome challenges in real-time 3D understanding and in applying it to real-world environments.