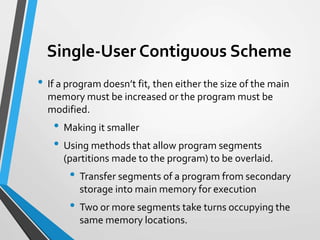

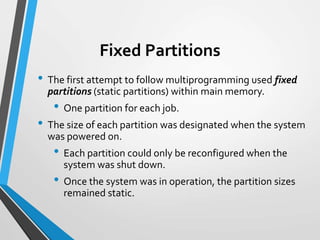

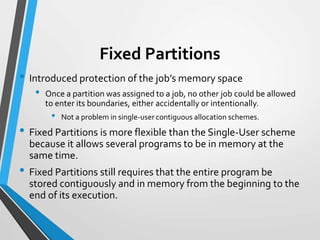

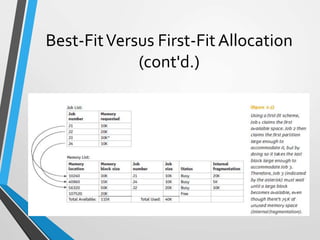

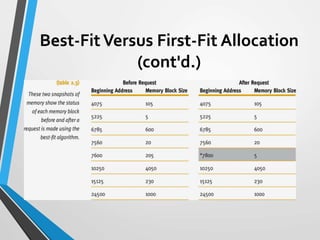

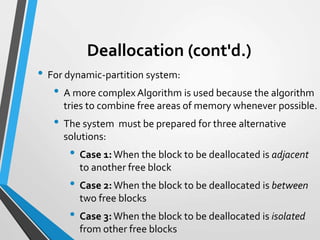

The document discusses early memory management schemes, focusing on single-user and fixed partition methods and the advantages and disadvantages of each. It explains how these primitive allocation methods evolved into dynamic partitions and describes the fragmentation problems that came with them. It also compares memory allocation strategies such as the first-fit and best-fit algorithms, covering how they differ in operation and the issues each one raises.
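
To make the difference between first-fit and best-fit concrete, here is a minimal sketch. It assumes free memory is modeled as a plain list of block sizes; the function names (`first_fit`, `best_fit`, `allocate`) and the sizes used are hypothetical, chosen only for illustration, and are not taken from the document.

```python
from typing import Callable, List, Optional


def first_fit(free_blocks: List[int], request: int) -> Optional[int]:
    """Return the index of the first free block large enough, or None."""
    for i, size in enumerate(free_blocks):
        if size >= request:
            return i
    return None


def best_fit(free_blocks: List[int], request: int) -> Optional[int]:
    """Return the index of the smallest free block that still fits, or None."""
    best: Optional[int] = None
    for i, size in enumerate(free_blocks):
        if size >= request and (best is None or size < free_blocks[best]):
            best = i
    return best


def allocate(
    free_blocks: List[int],
    request: int,
    strategy: Callable[[List[int], int], Optional[int]],
) -> Optional[int]:
    """Carve `request` units out of the block chosen by `strategy`.

    Shrinks the chosen block in place and returns its index,
    or returns None when no block is large enough.
    """
    idx = strategy(free_blocks, request)
    if idx is None:
        return None
    free_blocks[idx] -= request  # the remainder stays on the free list
    return idx


# Hypothetical free list (sizes in KB) and a 212 KB request.
blocks = [100, 500, 200, 300, 600]
print(first_fit(blocks, 212))  # 1: the 500 KB block is the first that fits
print(best_fit(blocks, 212))   # 3: the 300 KB block is the tightest fit
```

In this example first-fit leaves a 288 KB remainder while best-fit leaves 88 KB. First-fit stops at the first match, so it scans less of the list; best-fit must examine every block and tends to leave small leftover fragments that may be too small to reuse.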