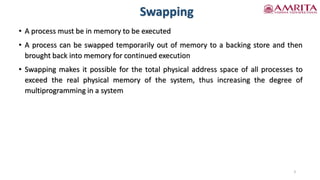

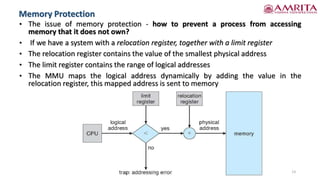

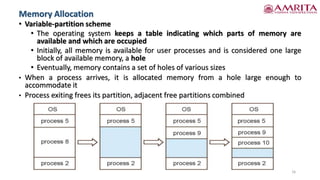

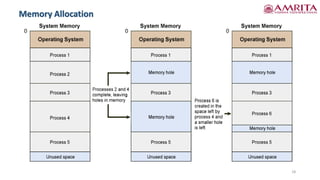

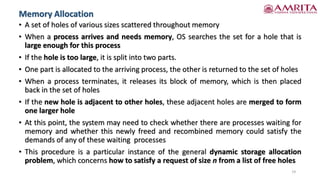

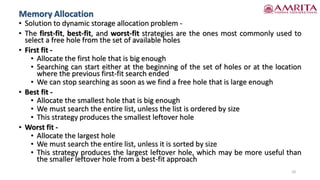

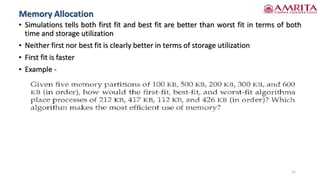

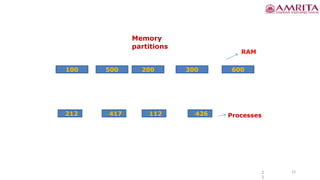

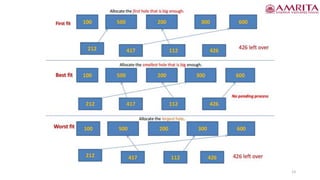

The document covers memory management techniques in operating systems: swapping, contiguous memory allocation, and memory protection. It describes standard swapping, its constraints, and its limitations, especially on modern and mobile systems. It also examines memory allocation strategies, fragmentation issues, and possible solutions for making better use of memory.