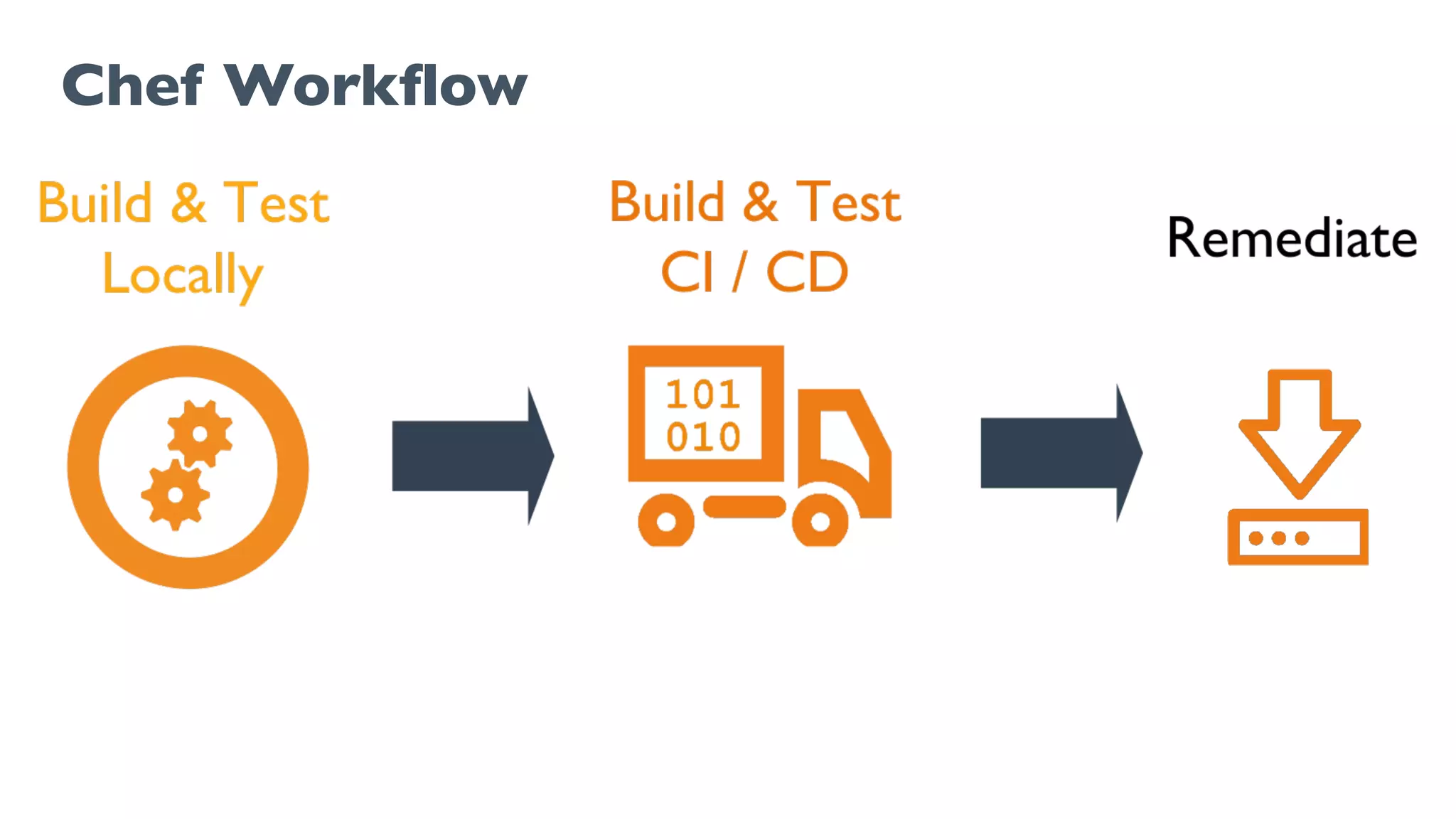

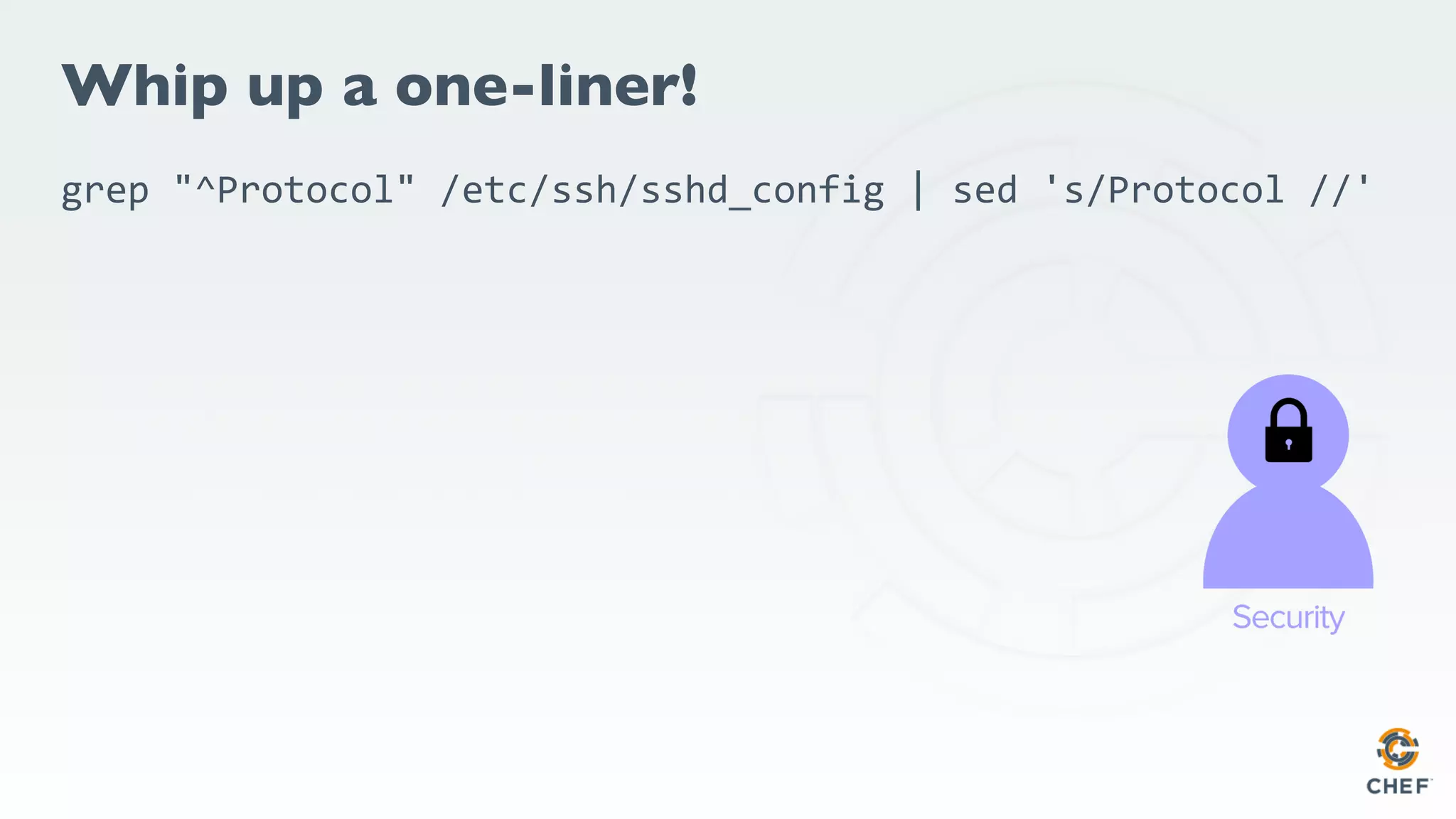

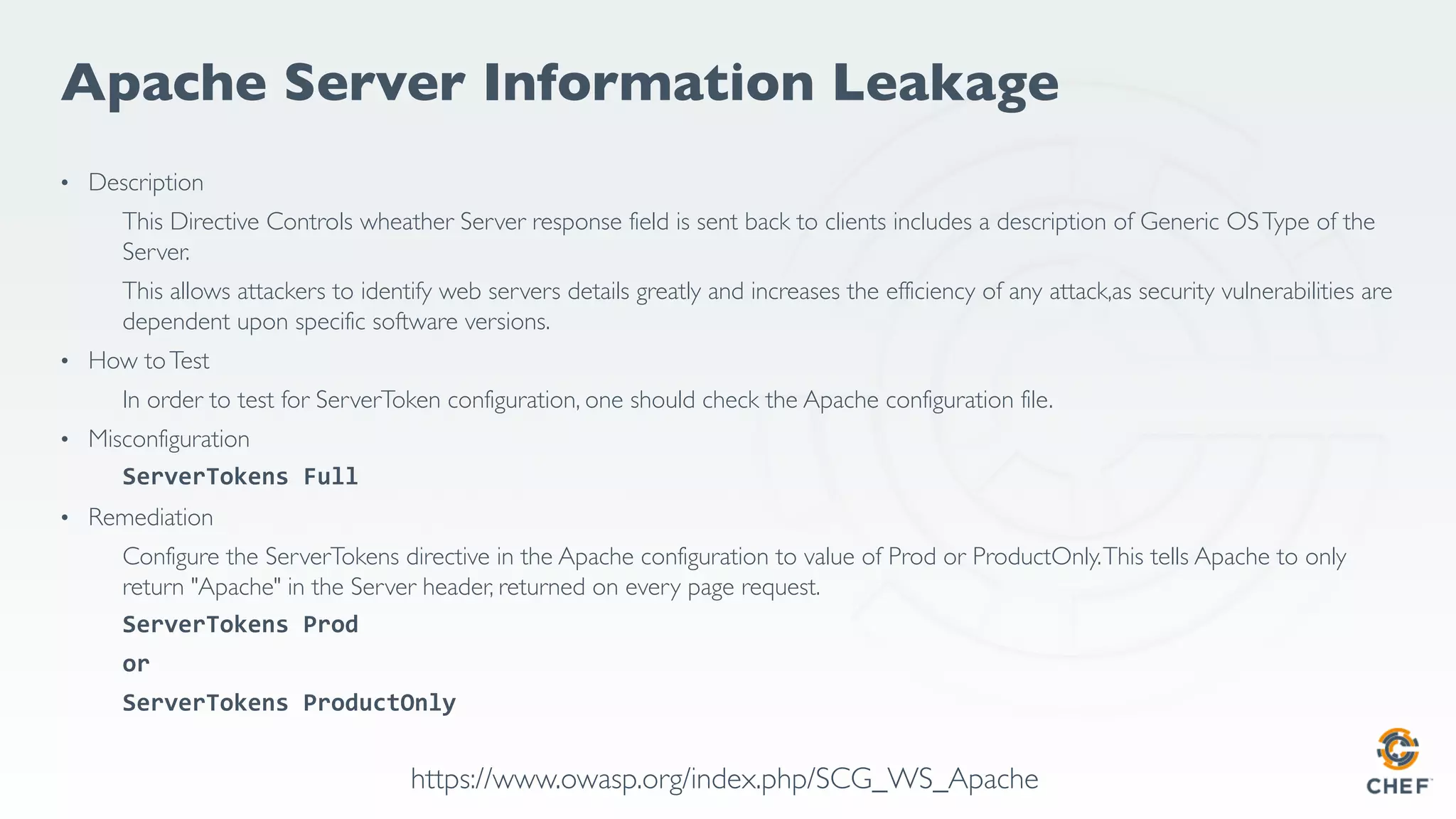

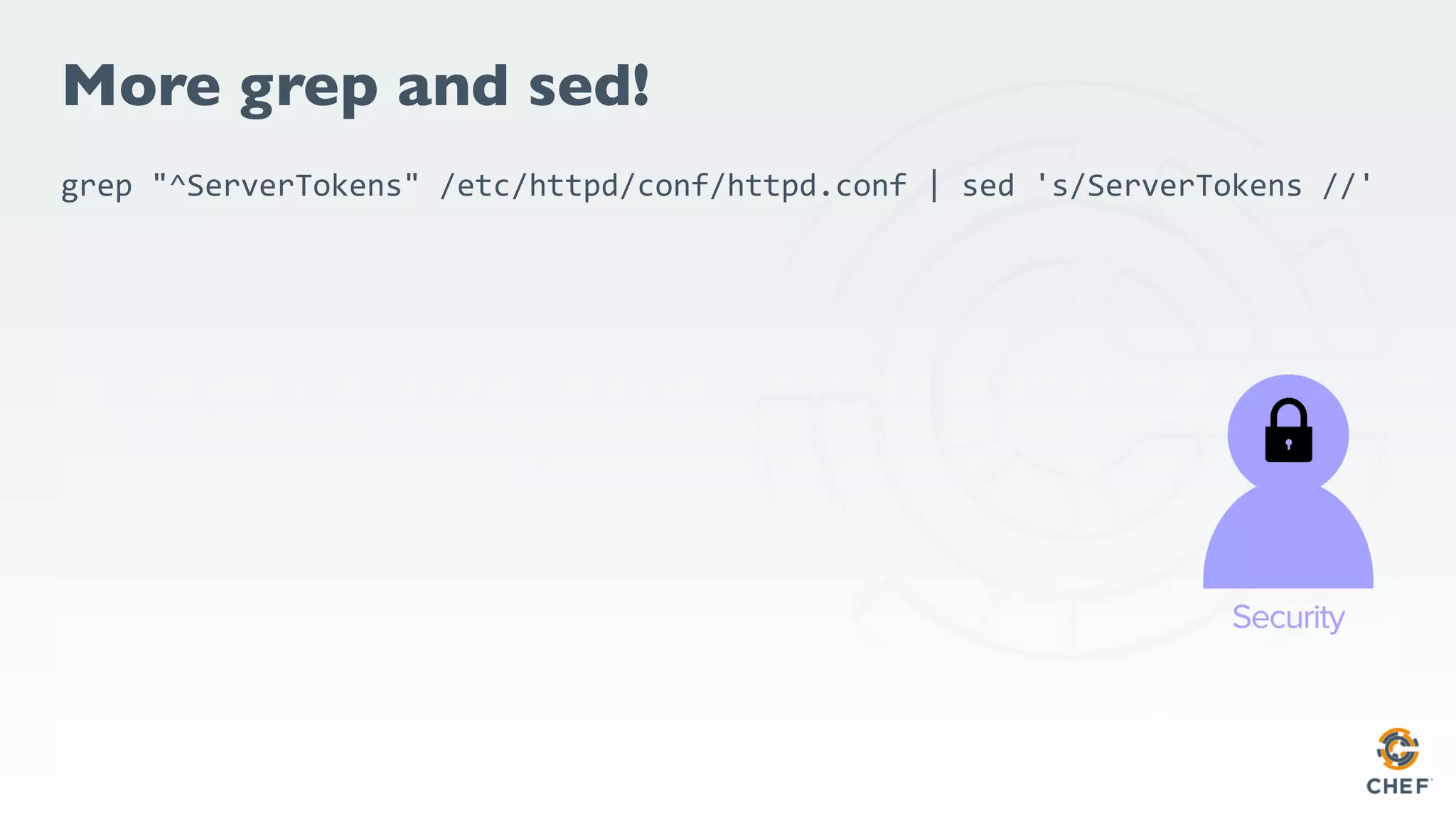

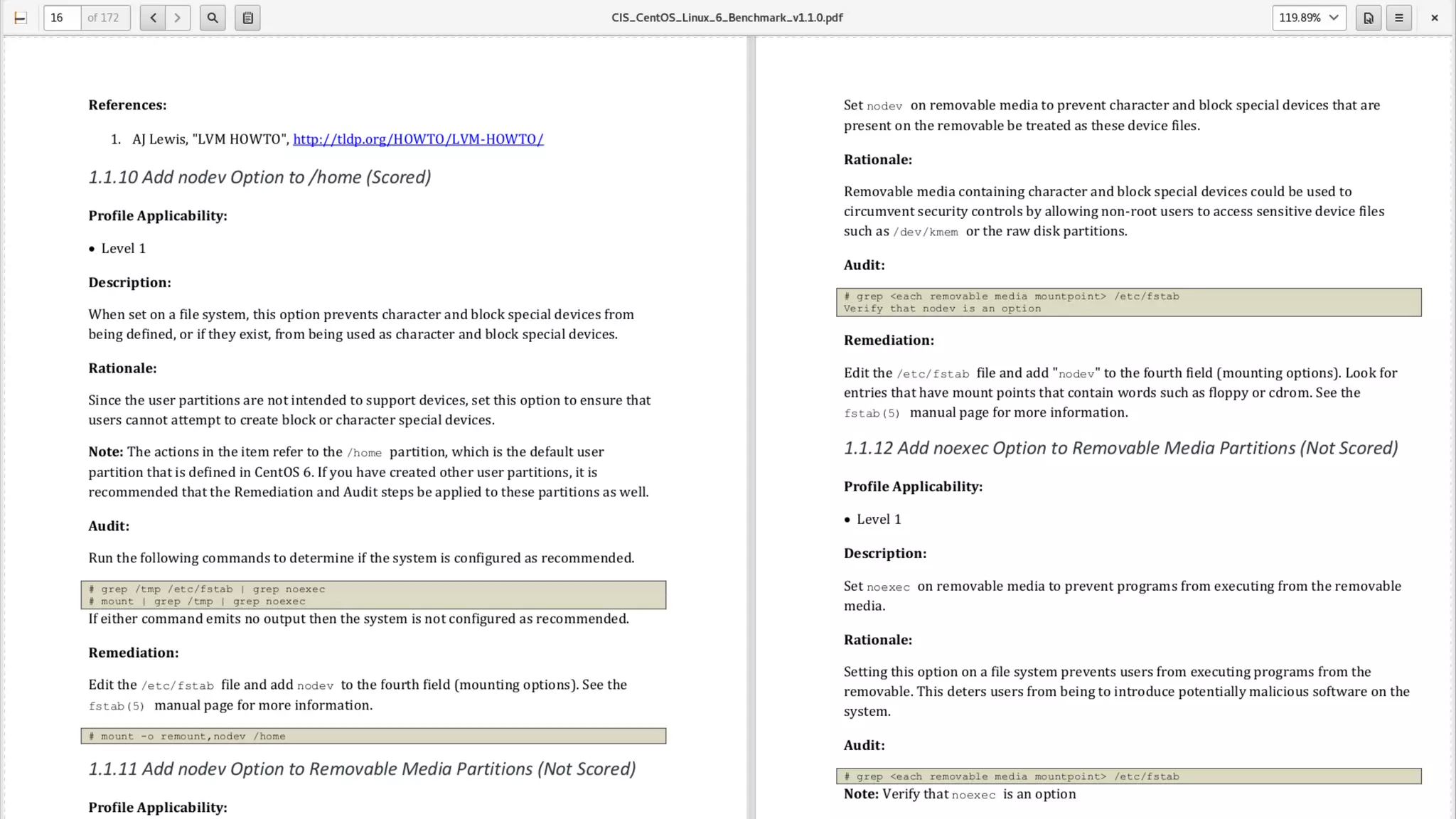

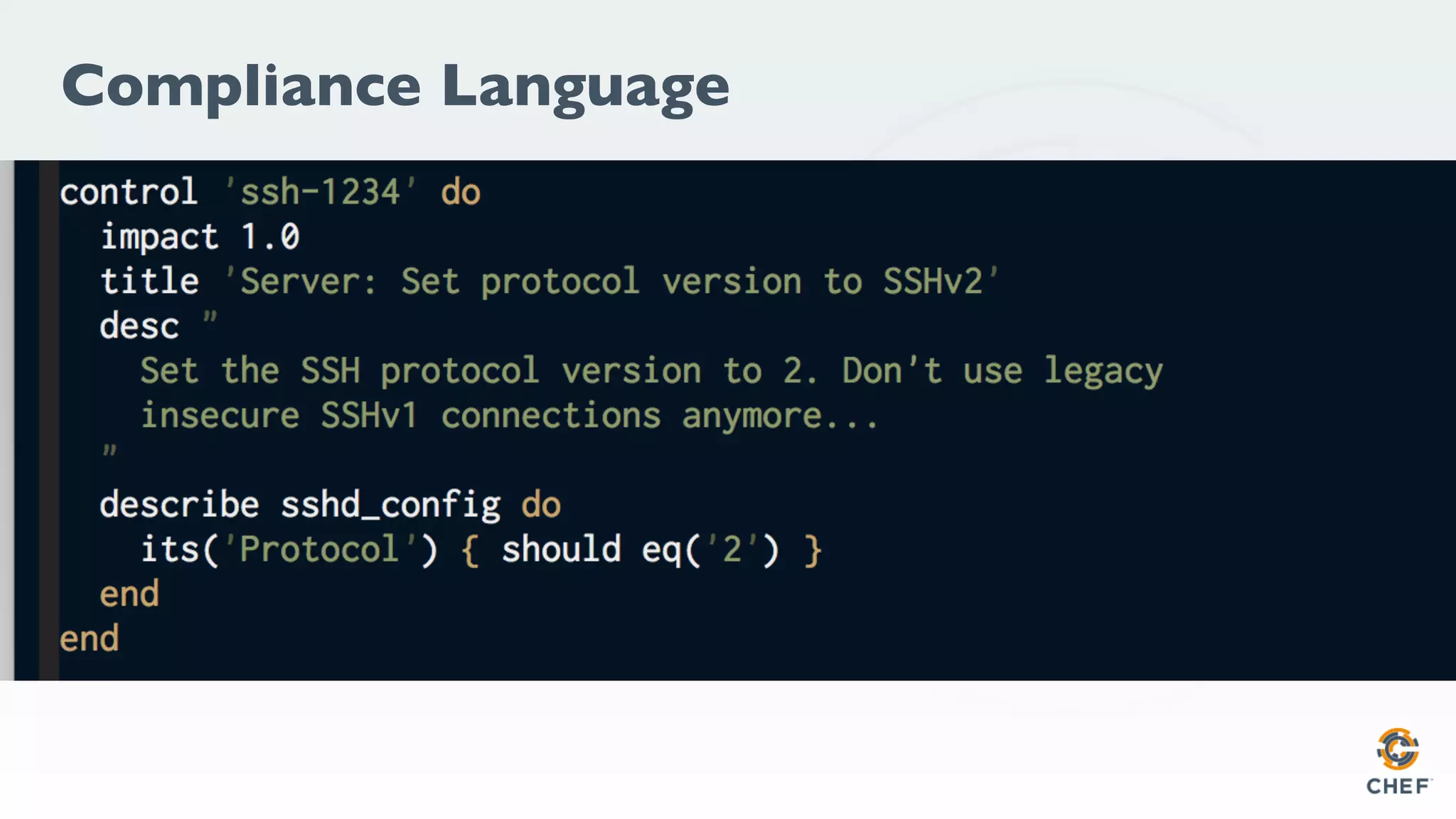

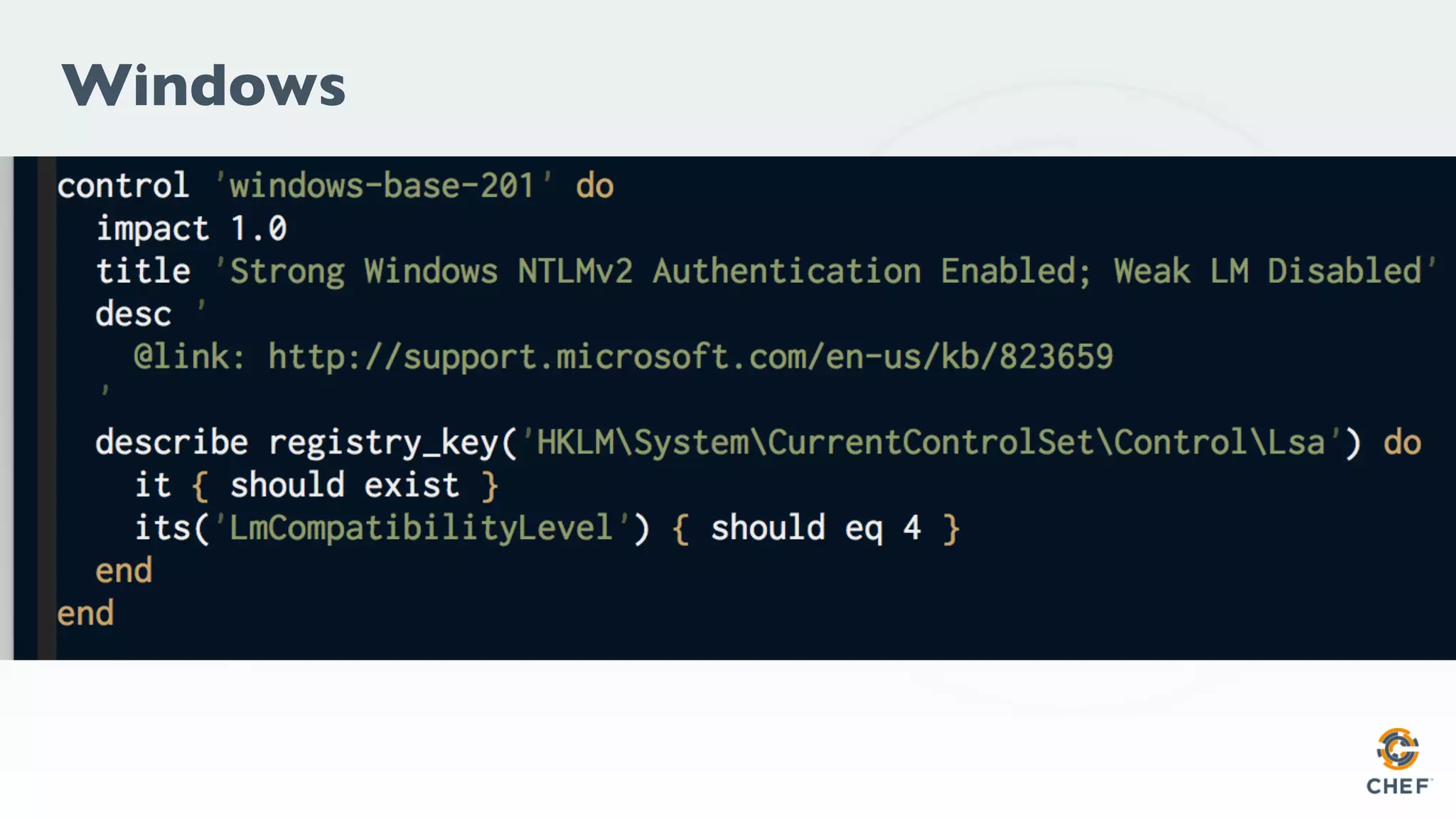

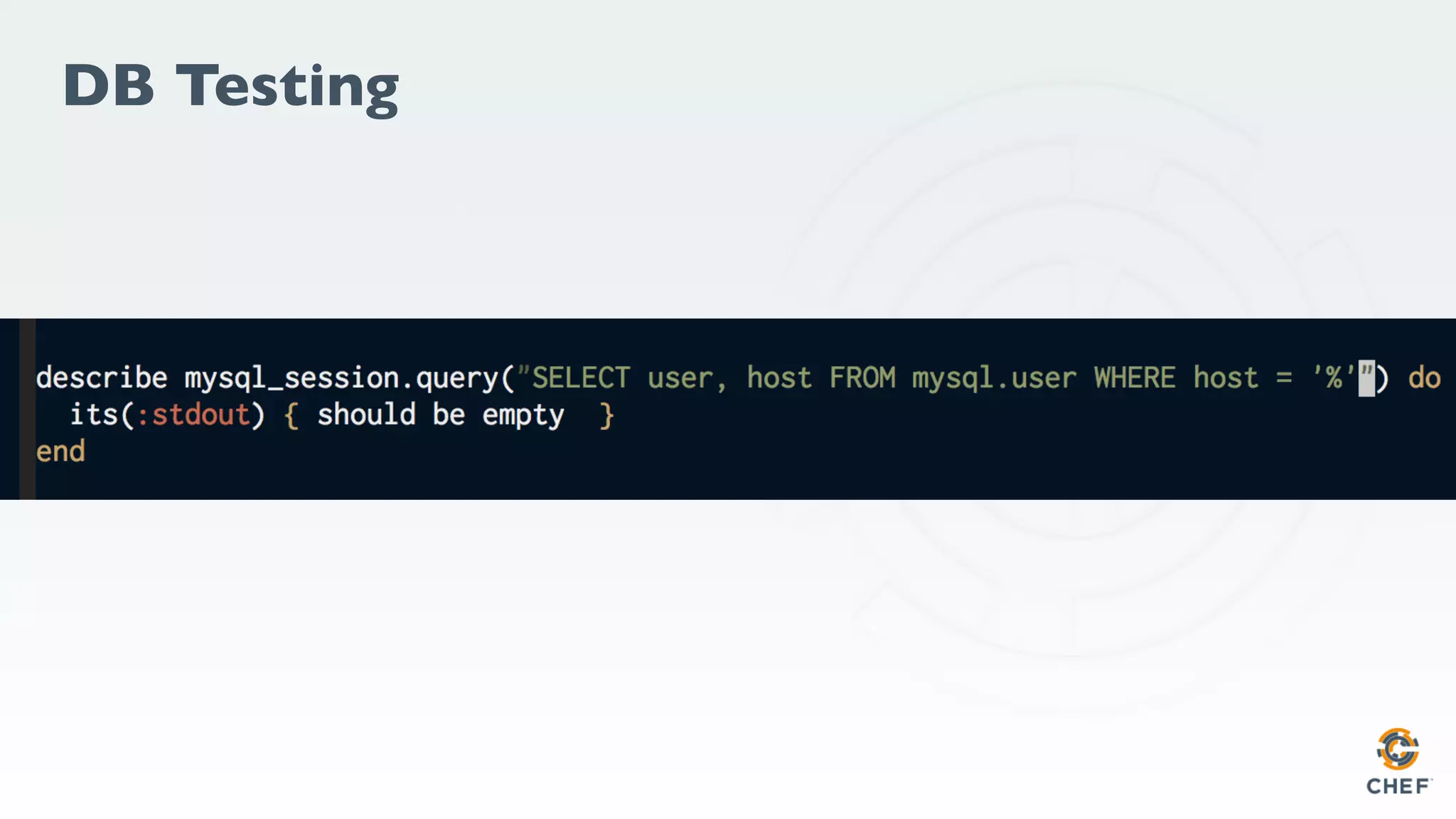

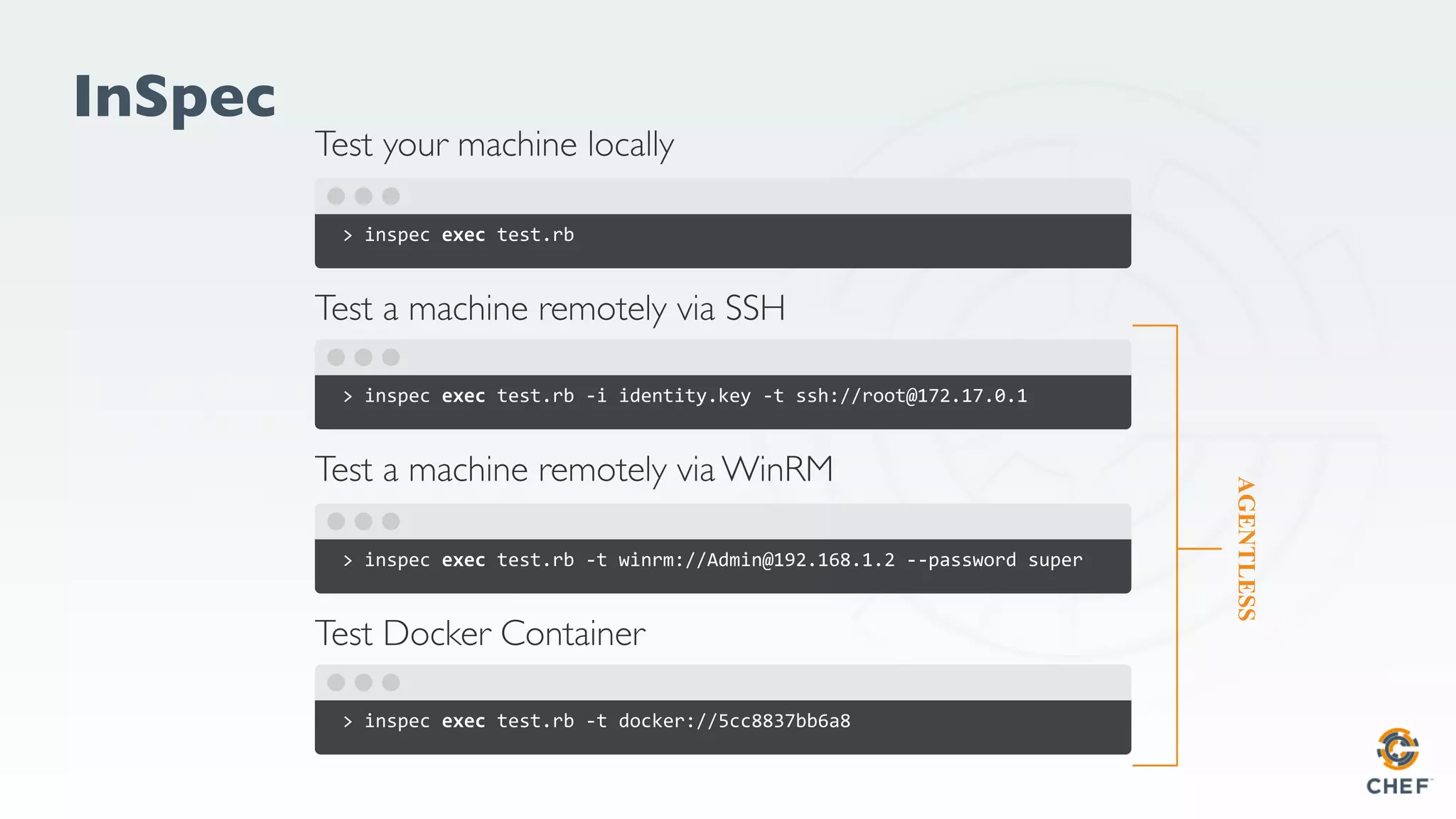

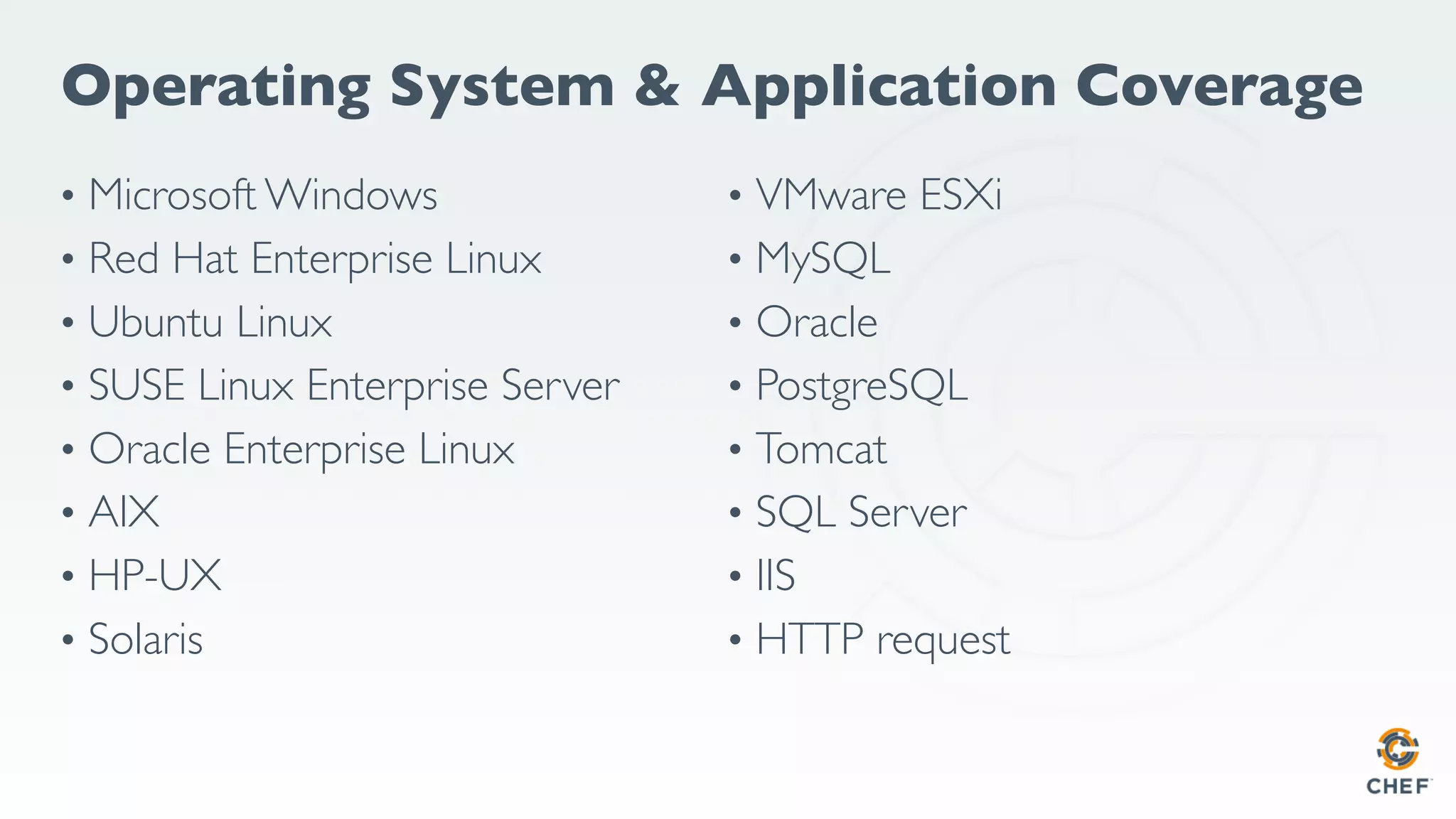

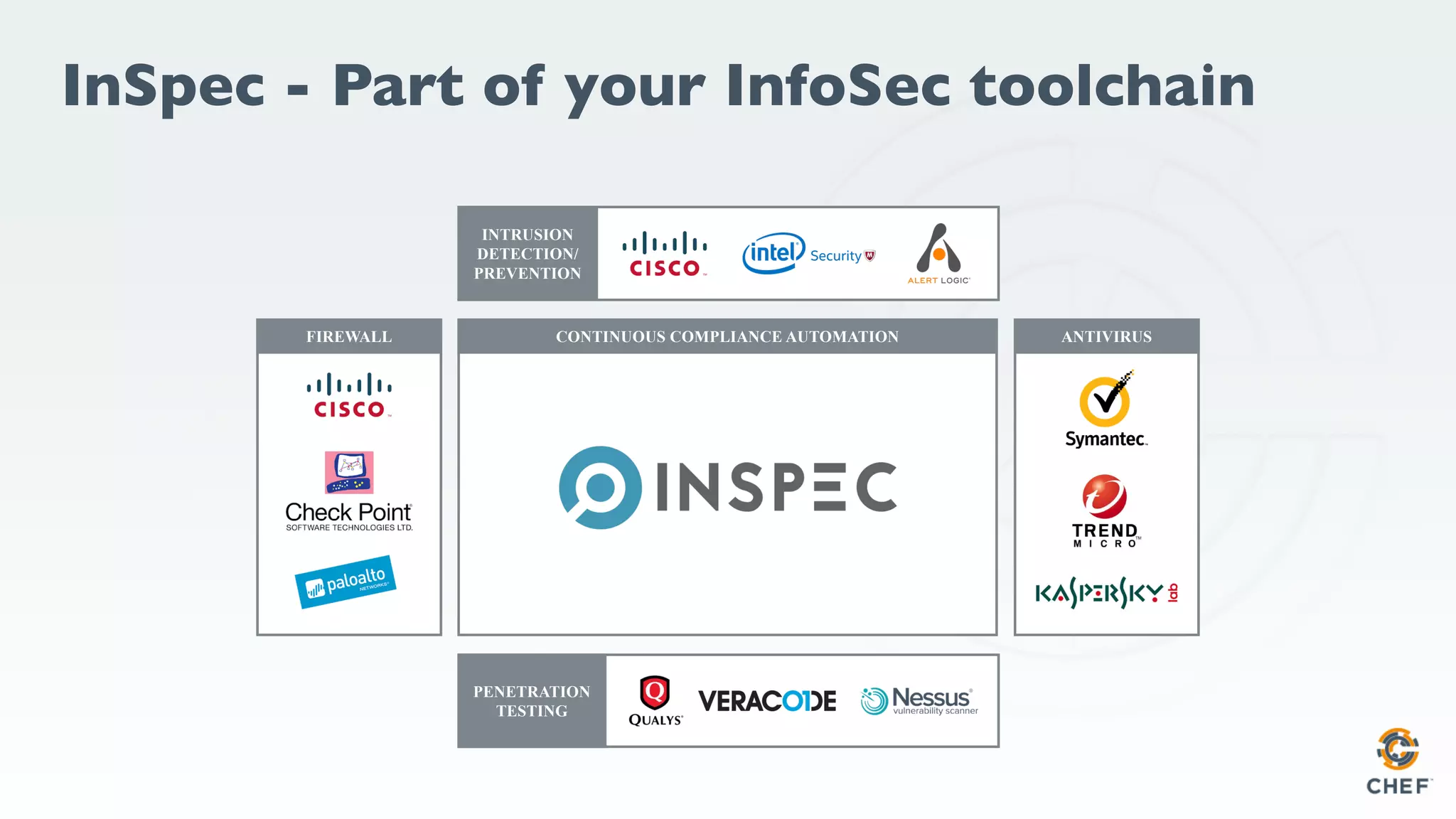

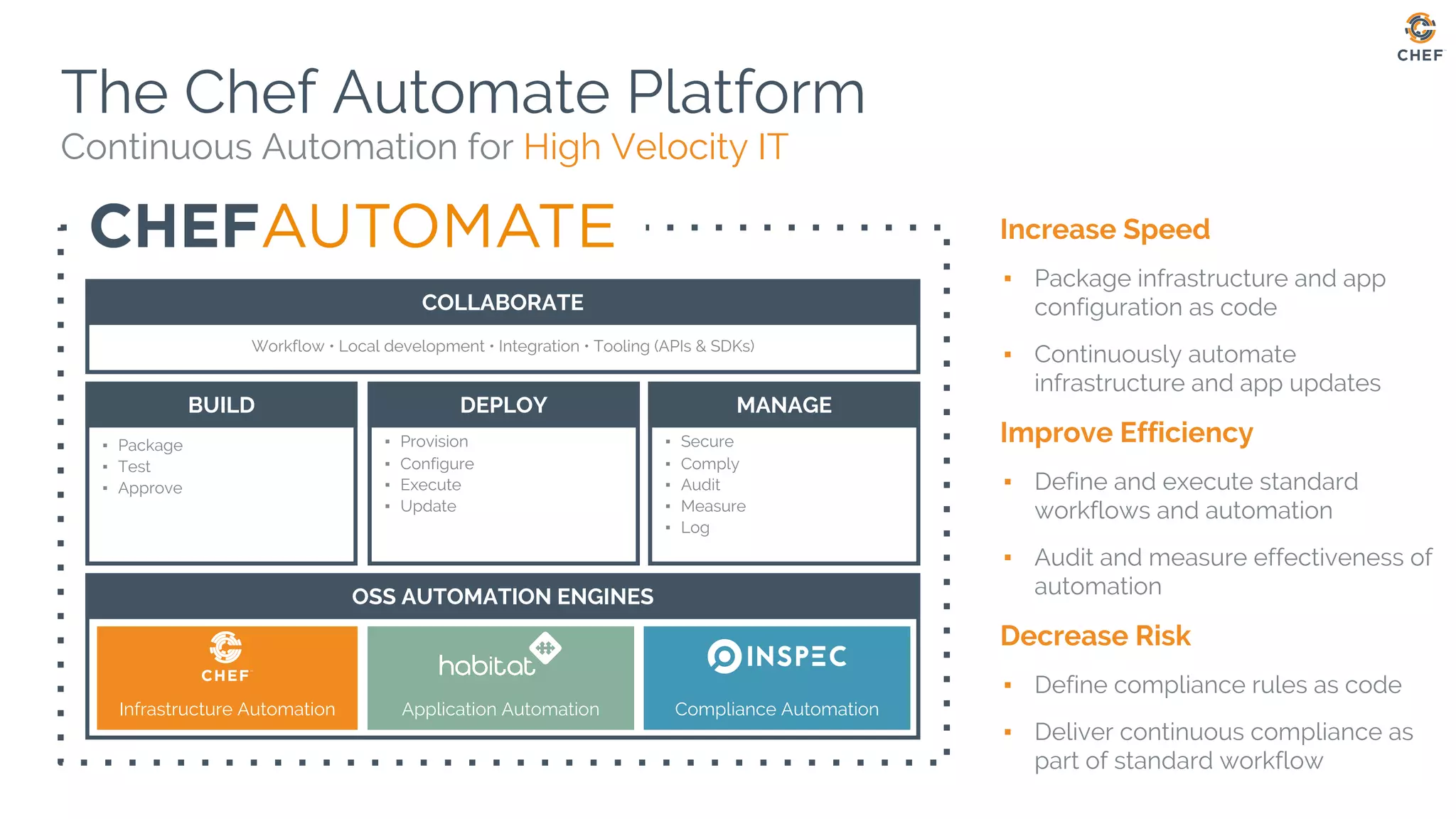

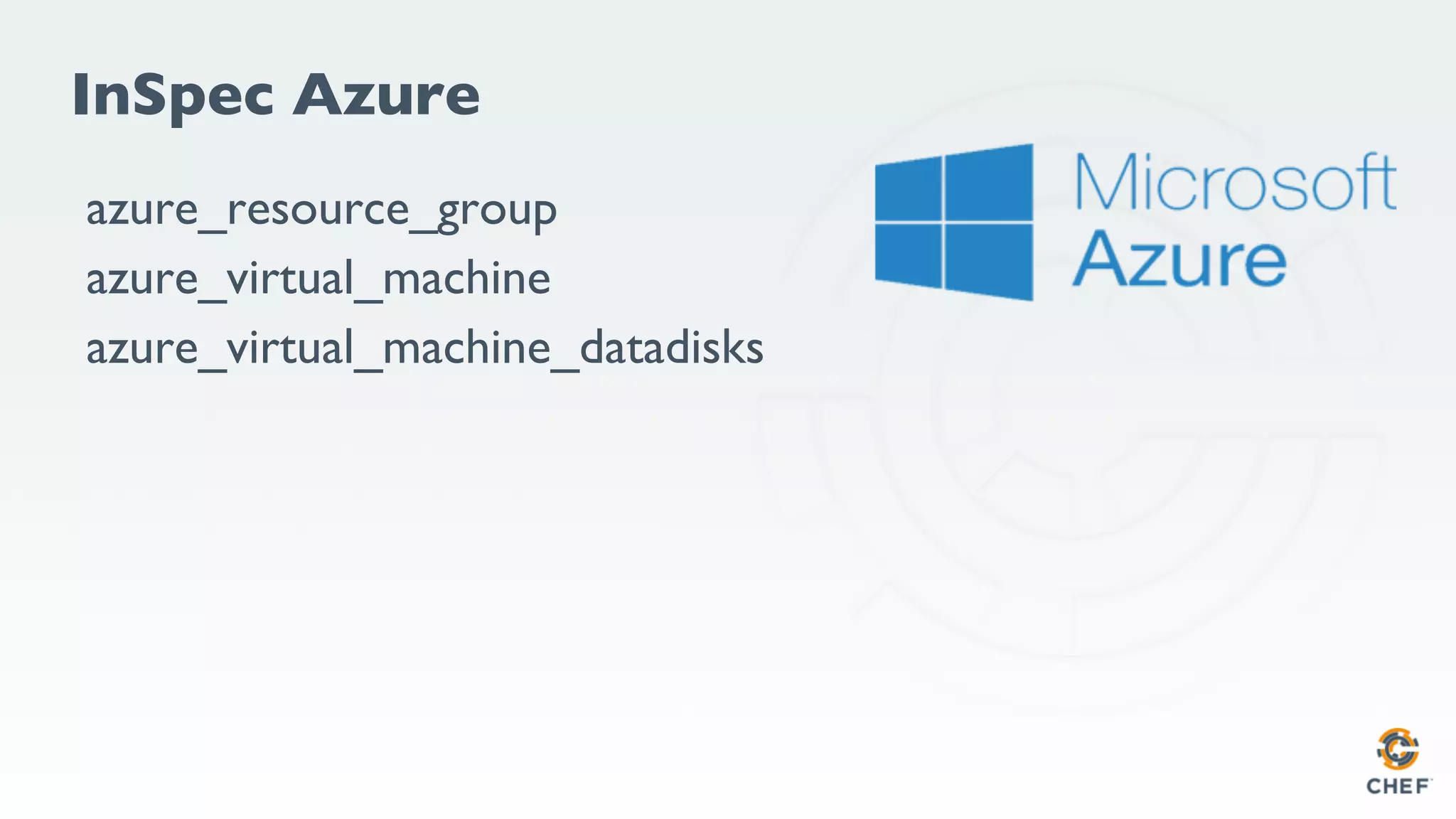

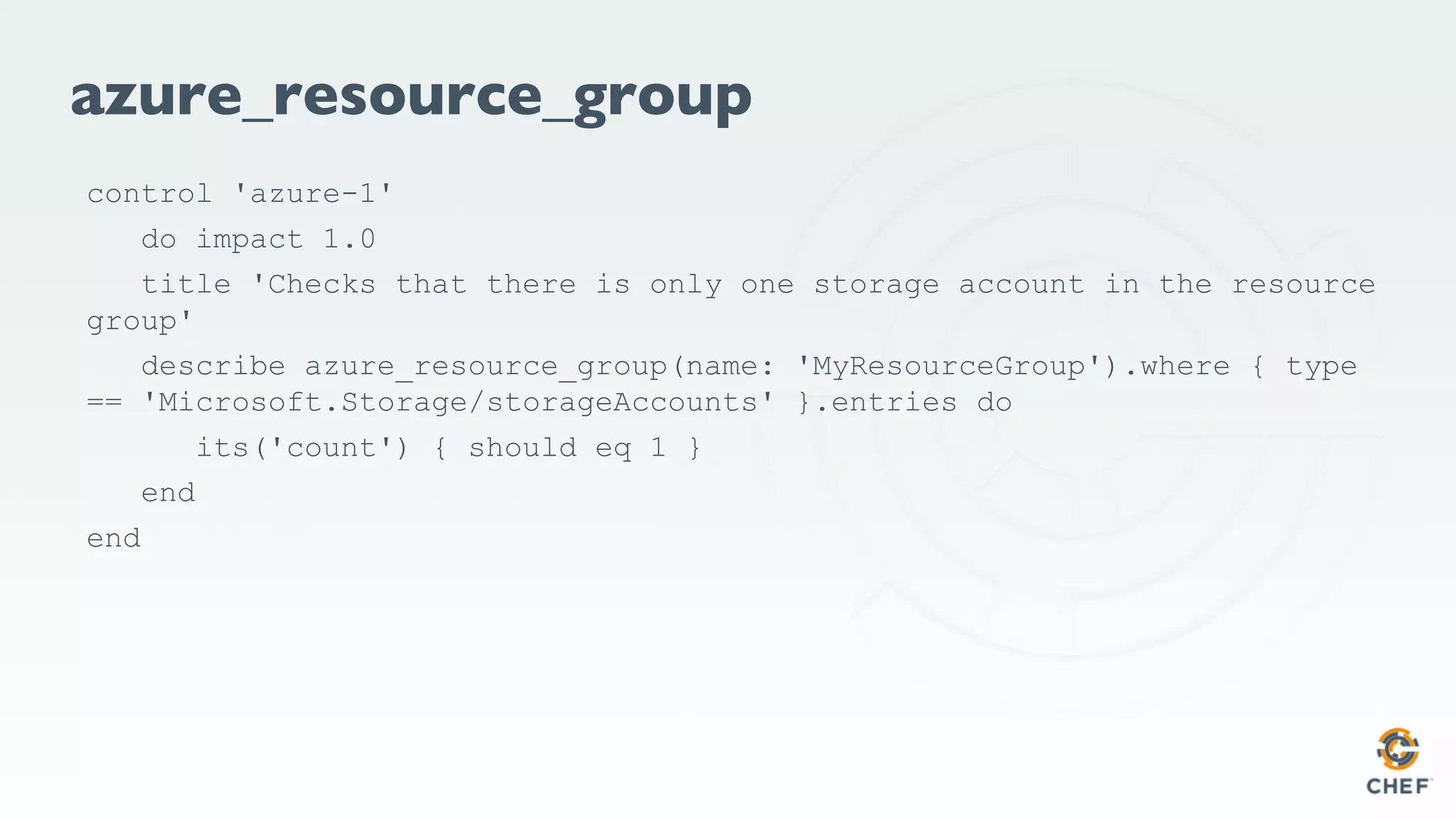

This deck covers automating Azure compliance with InSpec and Chef. It outlines security vulnerabilities and misconfigurations affecting SSH and Apache servers, stresses the importance of continuous testing and compliance, and shows how to verify configurations both from the command line and in code. It also references various operating systems and tools used for compliance automation within software development workflows.
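
To make "verifying configurations in code" concrete, here is a minimal plain-Ruby sketch of the kind of SSH check a compliance tool automates. It is not InSpec itself and is not taken from the slides: the helper names (`parse_sshd_config`, `ssh_violations`), the policy in `EXPECTED_SSH`, and the sample configuration are all assumptions made for illustration.

```ruby
# Plain-Ruby illustration only; InSpec expresses the same idea declaratively.

# Parse sshd_config-style text into a hash of lowercased directive => value.
def parse_sshd_config(text)
  text.each_line.with_object({}) do |line, settings|
    line = line.sub(/#.*/, '').strip      # drop comments and surrounding whitespace
    next if line.empty?
    key, value = line.split(/\s+/, 2)
    settings[key.downcase] ||= value      # sshd honours the first occurrence of a keyword
  end
end

# Hypothetical policy: directive => required value.
EXPECTED_SSH = { 'protocol' => '2', 'permitrootlogin' => 'no' }.freeze

# Return the directives whose configured value differs from the policy.
def ssh_violations(text, expected = EXPECTED_SSH)
  actual = parse_sshd_config(text)
  expected.reject { |key, want| actual[key] == want }.keys
end

# Made-up sample configuration.
SAMPLE_SSHD_CONFIG = <<~CONF
  # sample only
  Protocol 2
  PermitRootLogin yes
CONF

puts ssh_violations(SAMPLE_SSHD_CONFIG).inspect
```

Running the same check on every change, rather than once at audit time, is what turns a one-off verification into continuous compliance.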

![Infrastructure Code
package 'httpd' do
  action :install
end
service 'httpd' do
  action [ :start, :enable ]
end](https://image.slidesharecdn.com/20170626-chefmelbourne-inspec-170626115528/75/Melbourne-Chef-Meetup-Automating-Azure-Compliance-with-InSpec-23-2048.jpg)

![azure_virtual_machine
control 'azure-1' do
  impact 1.0
  title 'Make sure Ubuntu Servers are built from an Ubuntu template'
  describe azure_virtual_machine(name: '[YOUR VM NAME]',
                                 resource_group: '[YOUR RESOURCE GROUP]') do
    its('sku') { should eq '16.04.0-LTS' }
    its('publisher') { should eq 'Canonical' }
    its('offer') { should eq 'UbuntuServer' }
  end
end](https://image.slidesharecdn.com/20170626-chefmelbourne-inspec-170626115528/75/Melbourne-Chef-Meetup-Automating-Azure-Compliance-with-InSpec-62-2048.jpg)
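
The control on this slide compares three image properties of a virtual machine against expected values. The comparison itself can be sketched in plain Ruby as below. This is only an illustration of the logic, not how InSpec or the Azure resource is implemented. The `image_mismatches` helper and the `vm` hash standing in for data fetched from Azure are hypothetical.

```ruby
# Expected image properties, copied from the control on the slide.
EXPECTED_IMAGE = {
  sku: '16.04.0-LTS',
  publisher: 'Canonical',
  offer: 'UbuntuServer'
}.freeze

# Return { property => [expected, actual] } for every property that differs.
def image_mismatches(vm, expected = EXPECTED_IMAGE)
  expected.each_with_object({}) do |(prop, want), diffs|
    got = vm[prop]
    diffs[prop] = [want, got] unless got == want
  end
end

# Hypothetical VM description; a real check would read these values from Azure.
vm = { sku: '14.04.5-LTS', publisher: 'Canonical', offer: 'UbuntuServer' }

mismatches = image_mismatches(vm)
puts(mismatches.empty? ? 'compliant' : "non-compliant: #{mismatches.keys.join(', ')}")
```

An empty result means the machine was built from the expected template. Any entry names the property that drifted, along with the expected and actual values.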