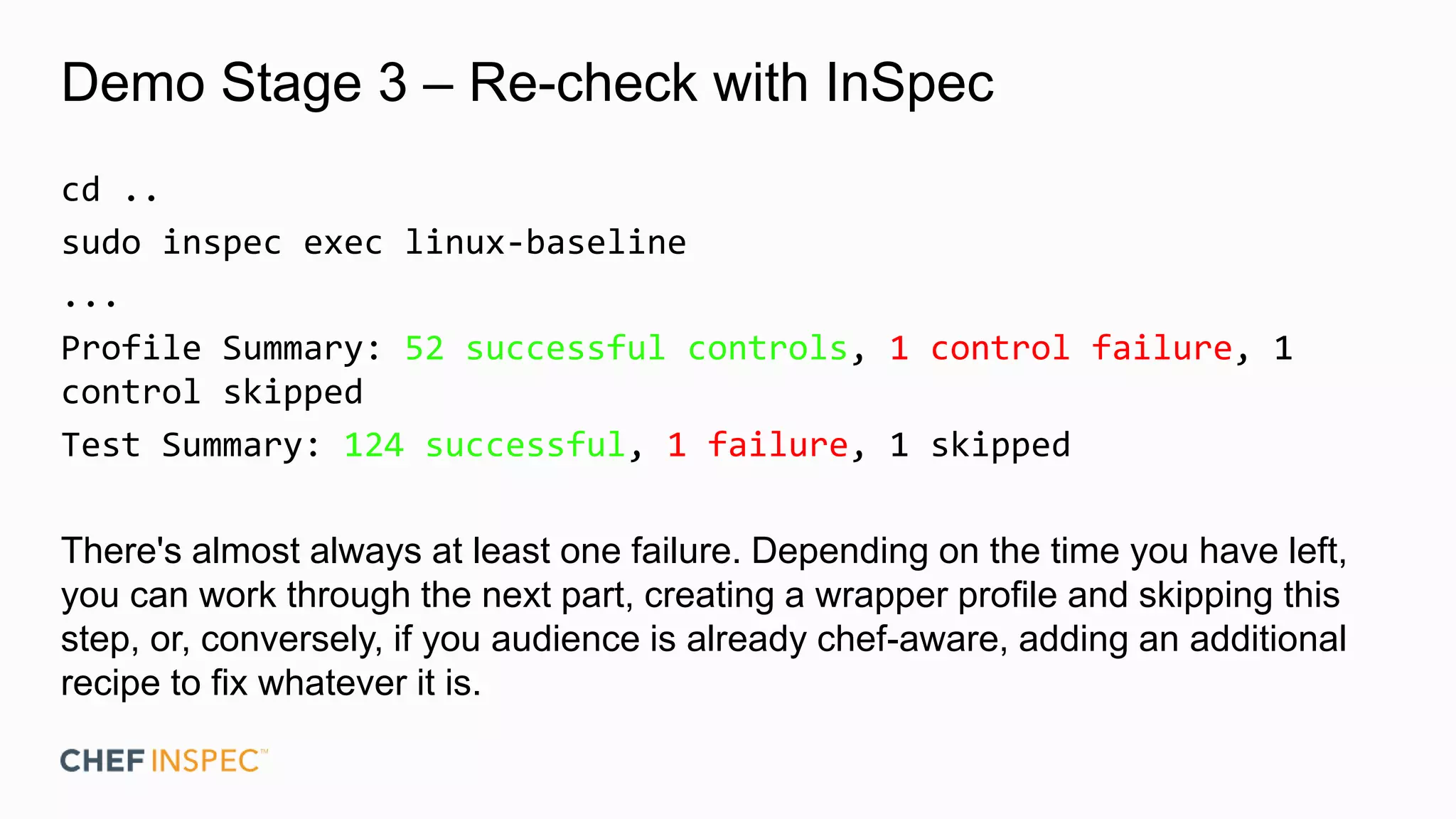

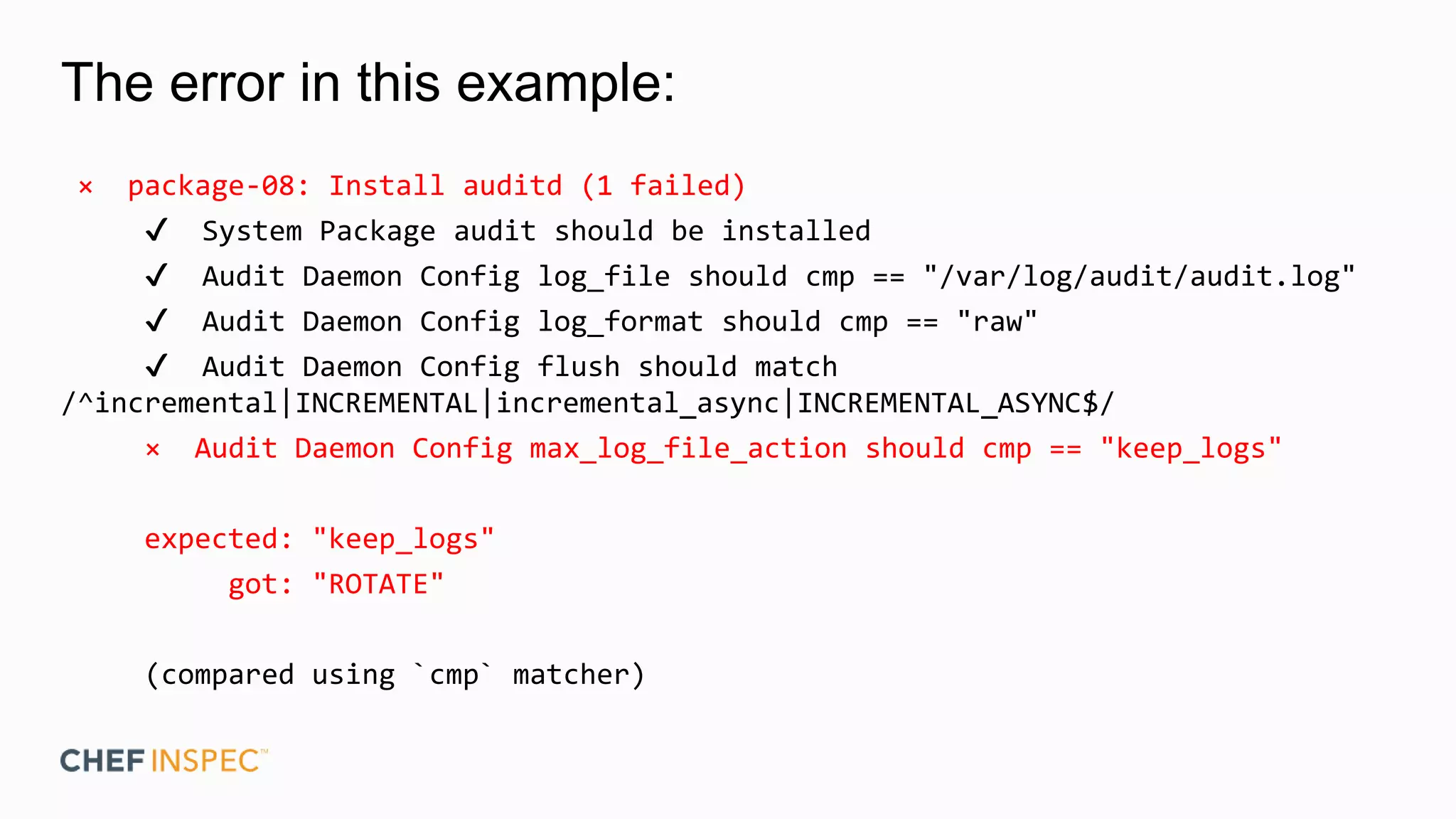

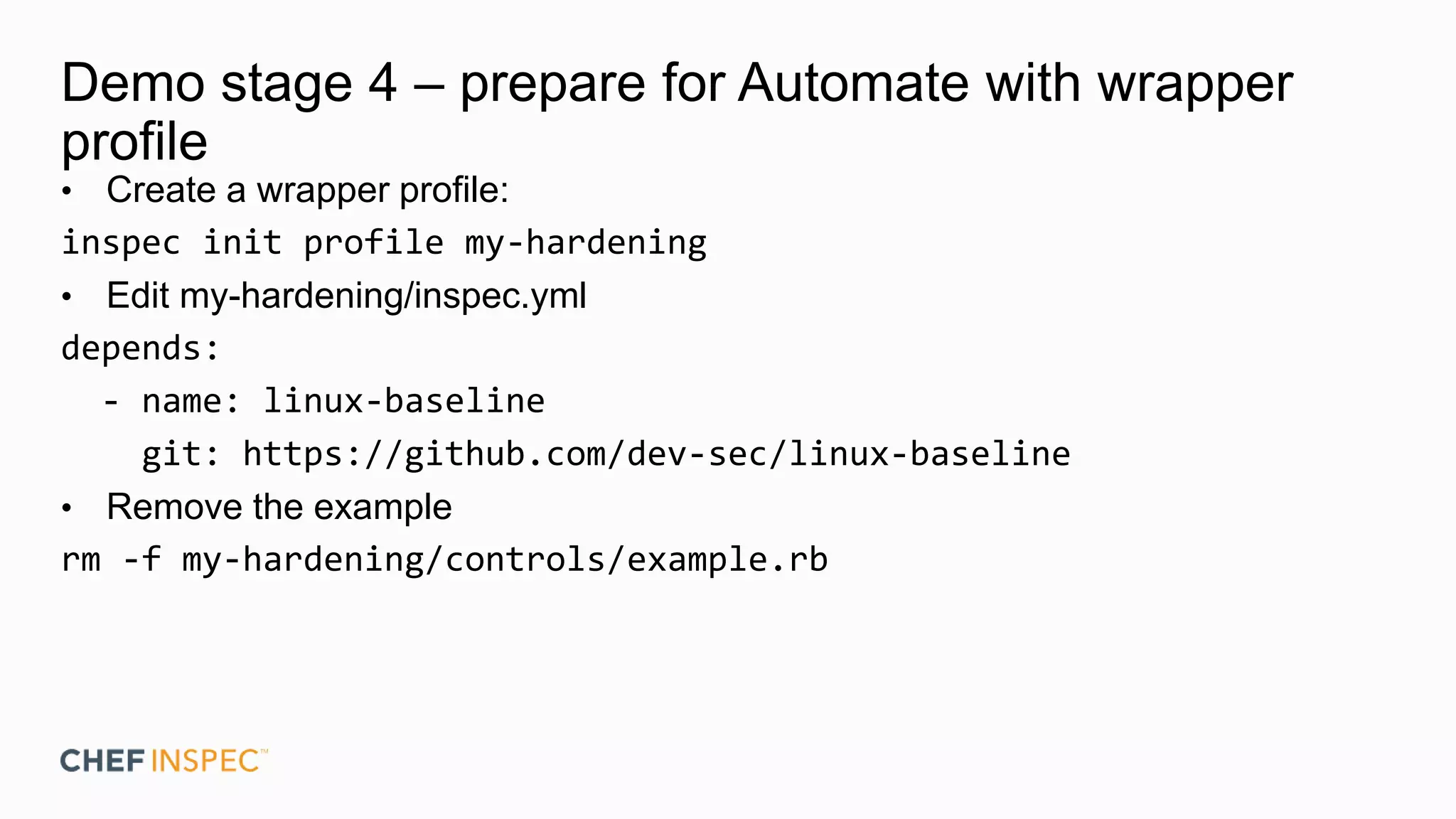

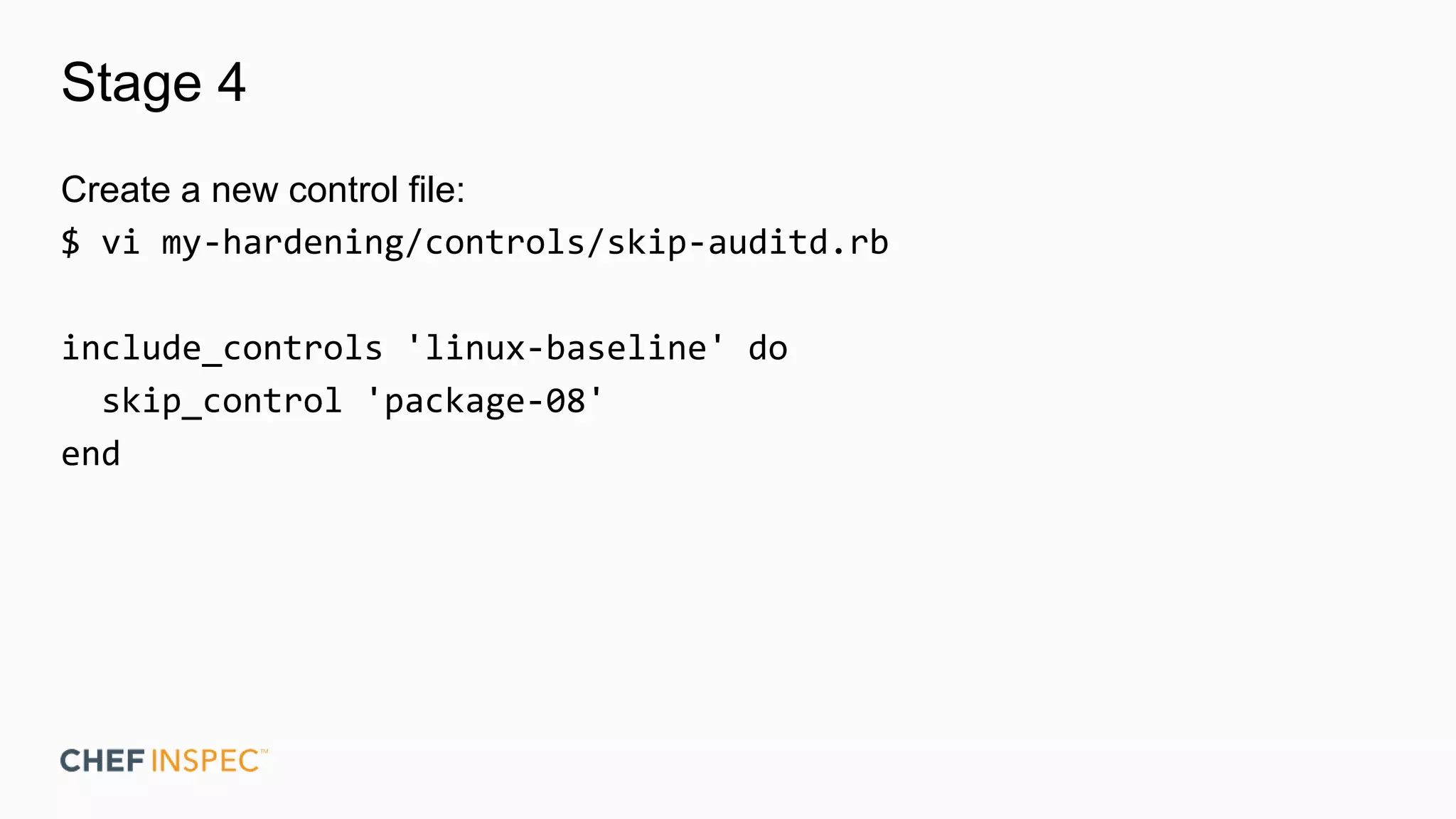

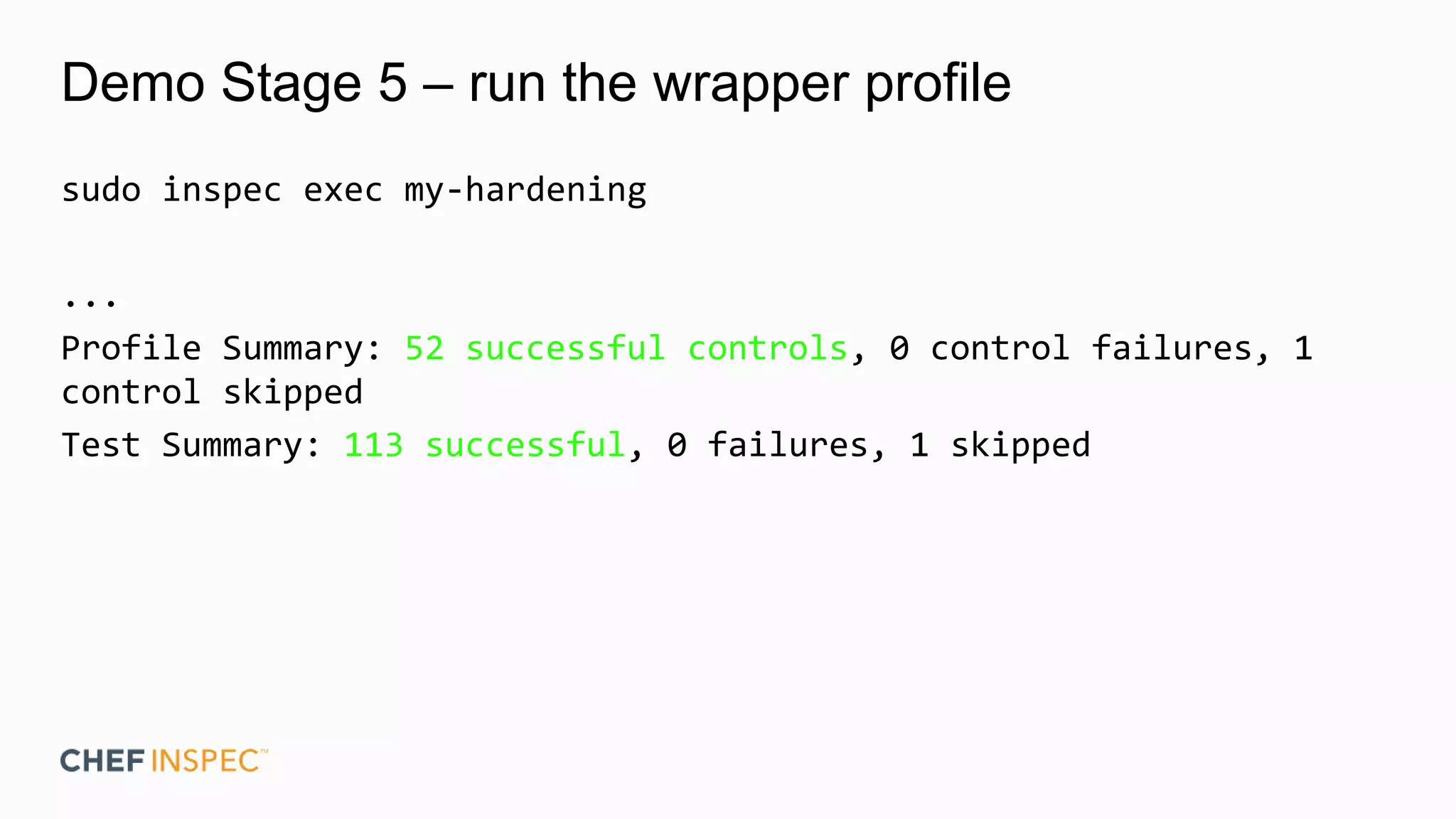

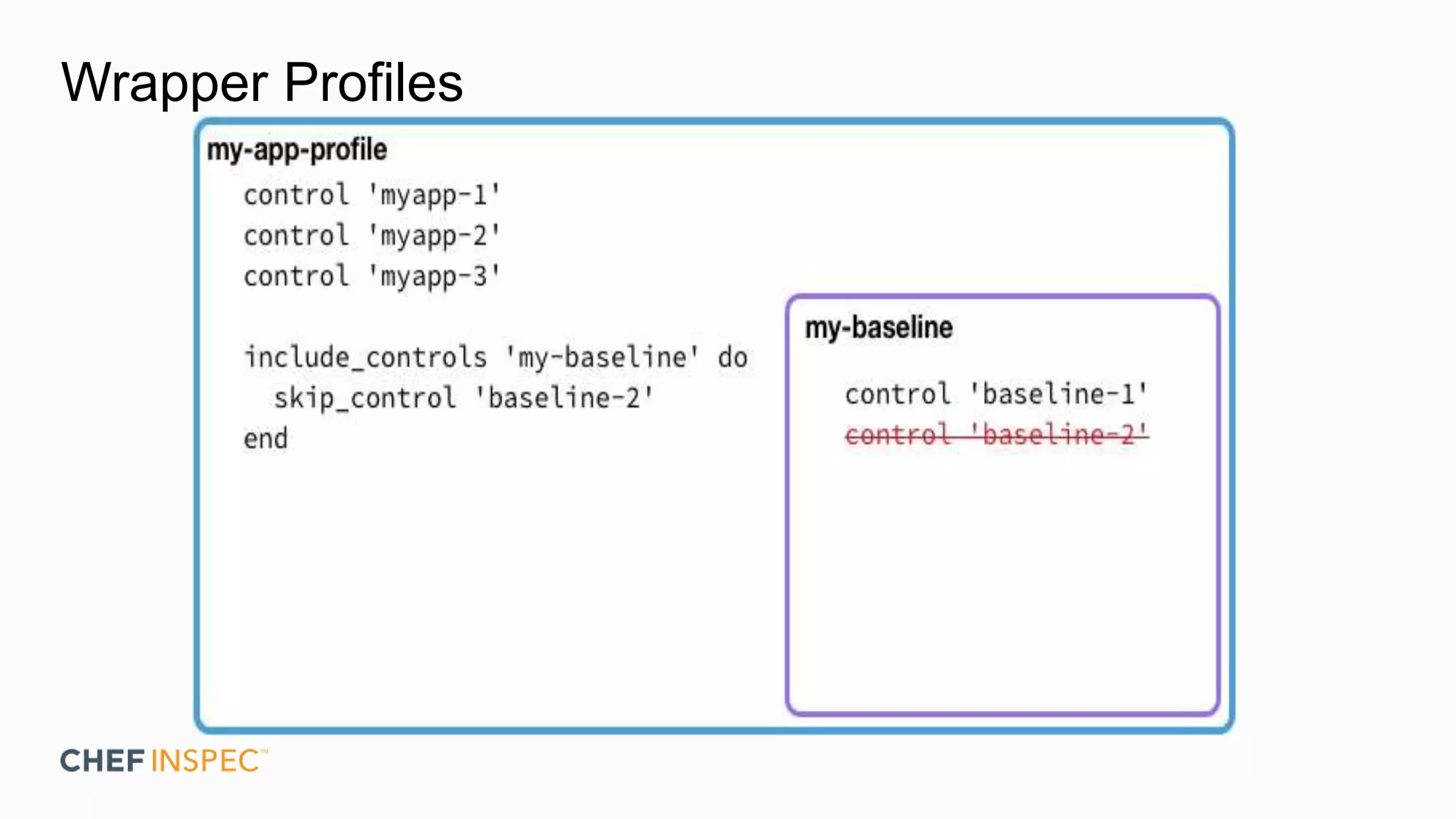

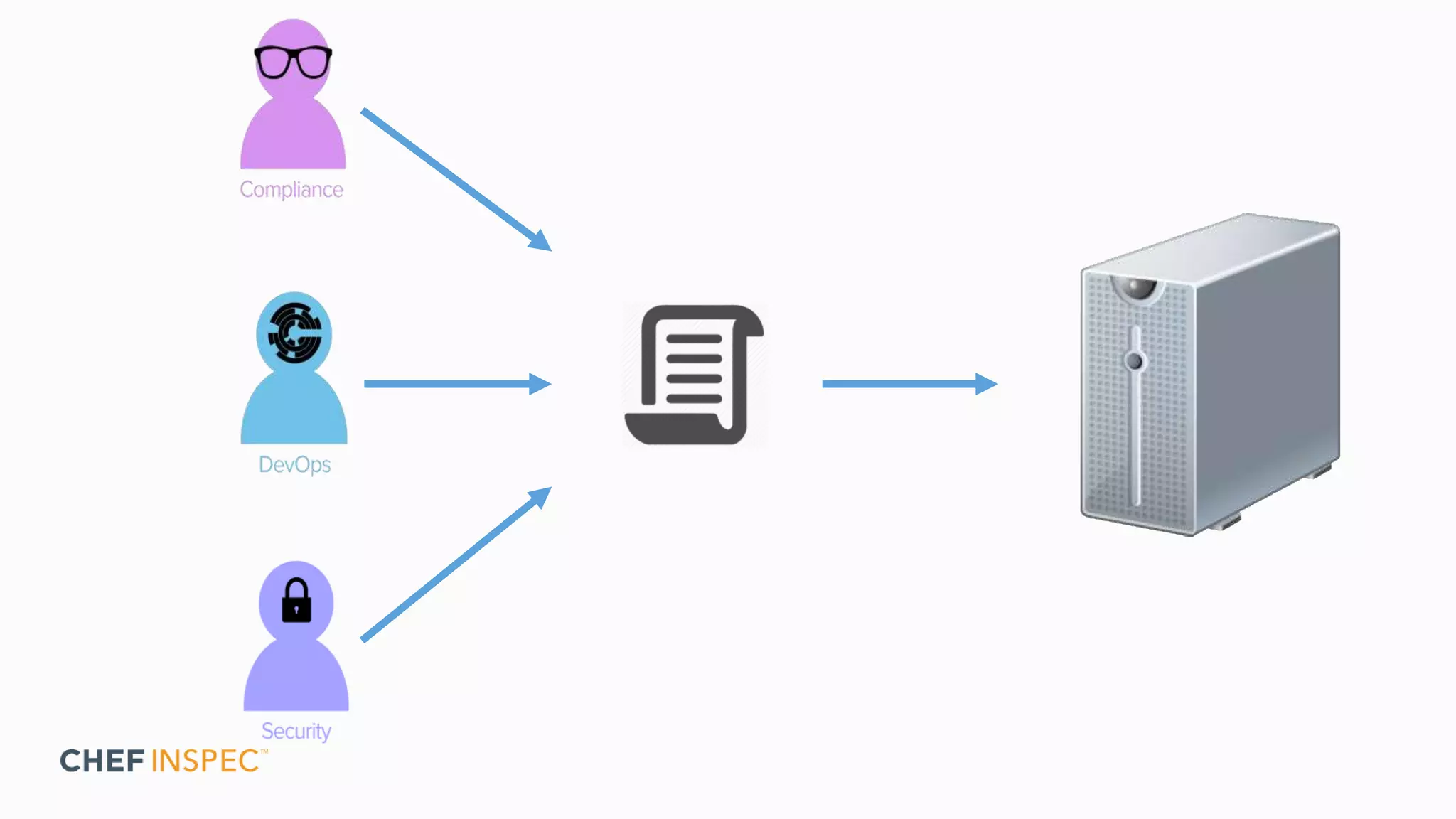

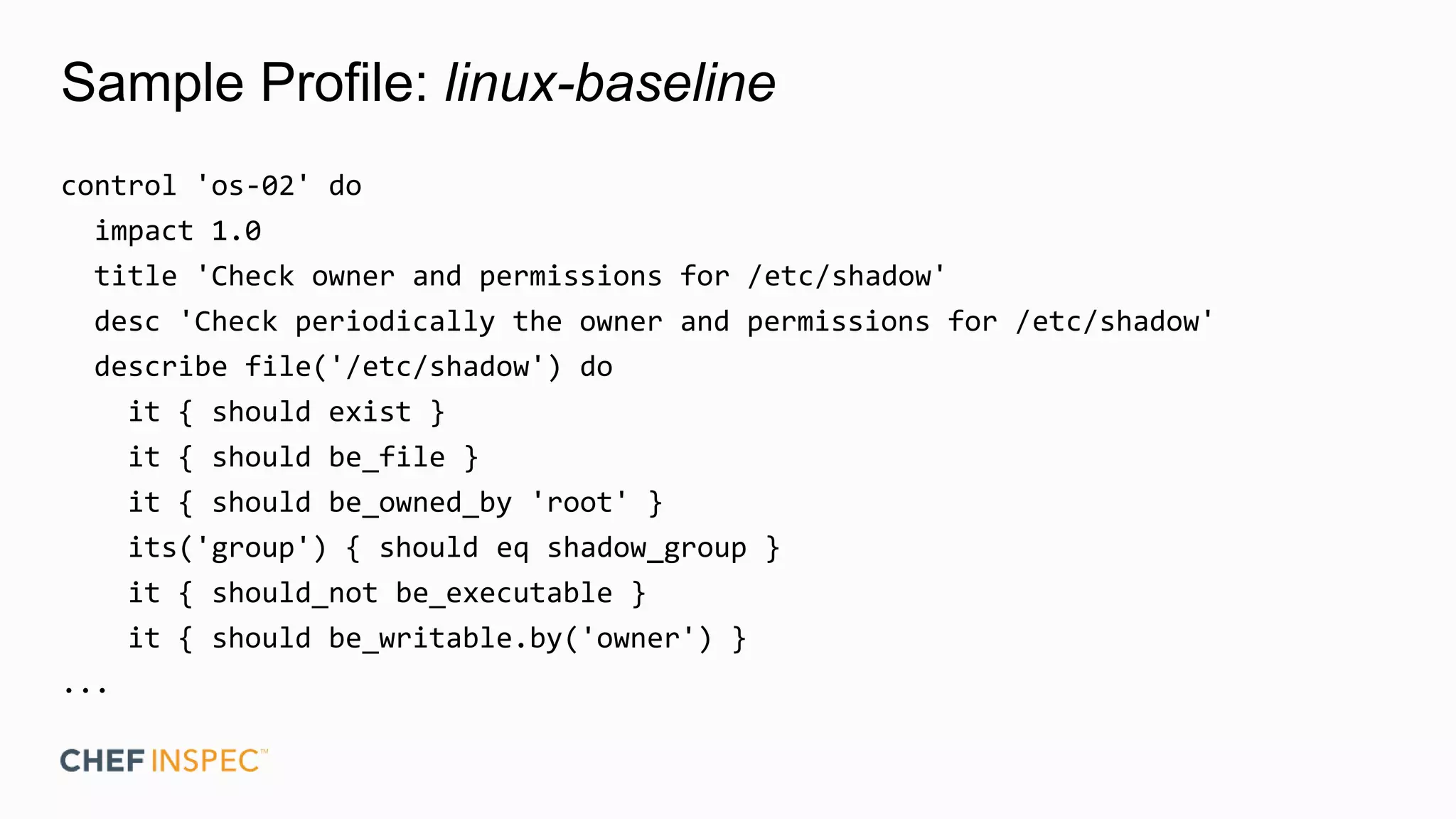

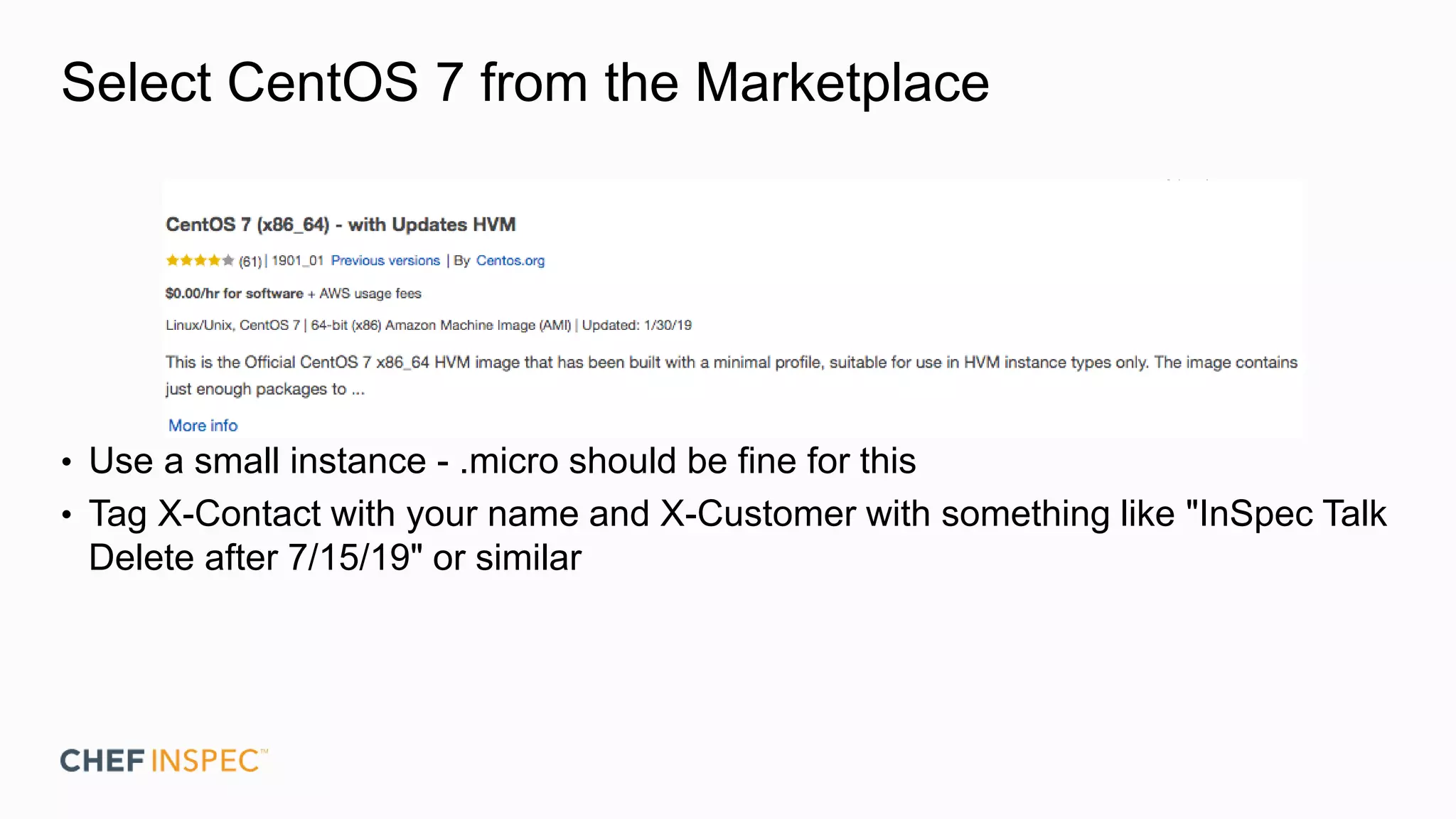

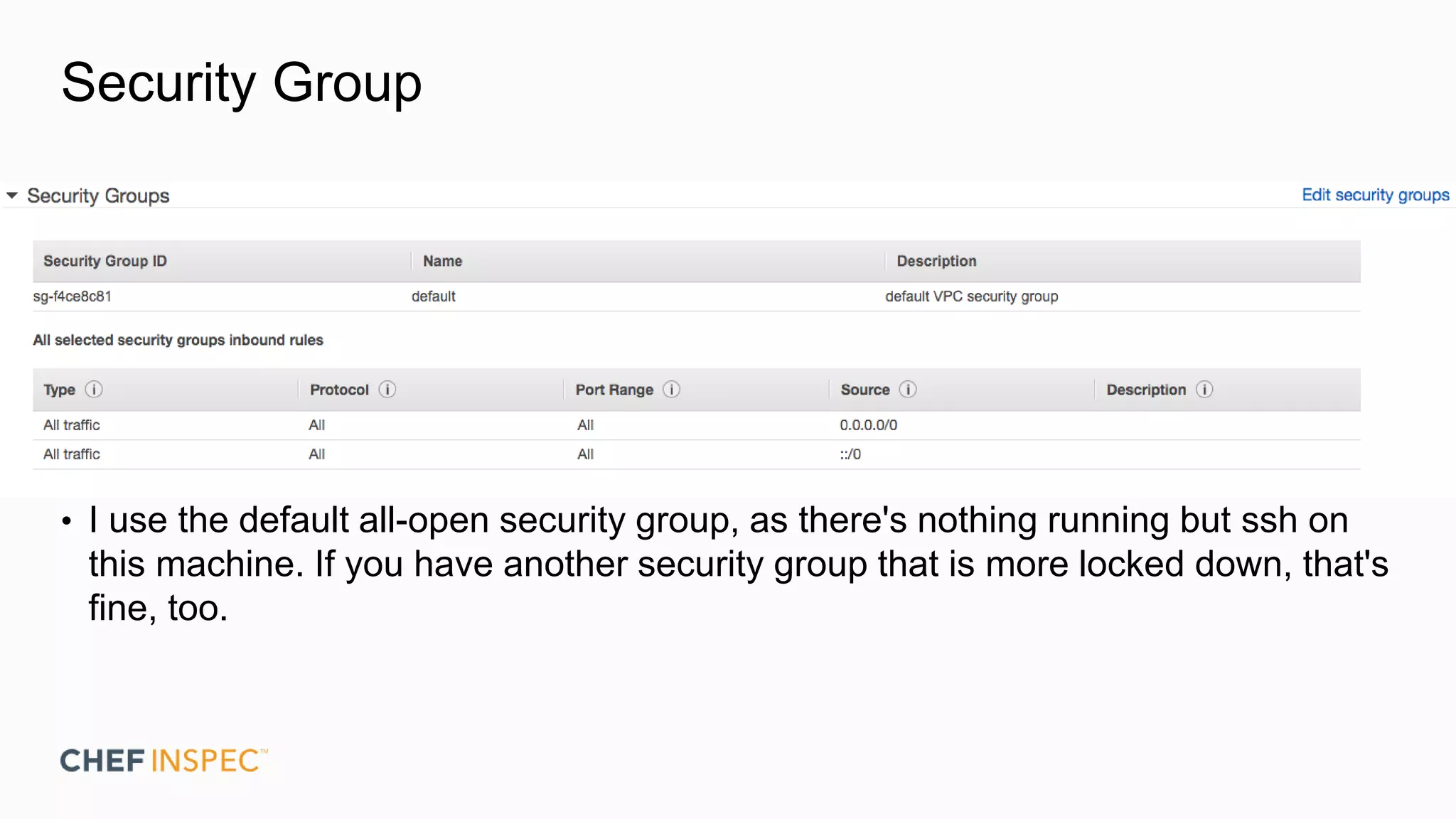

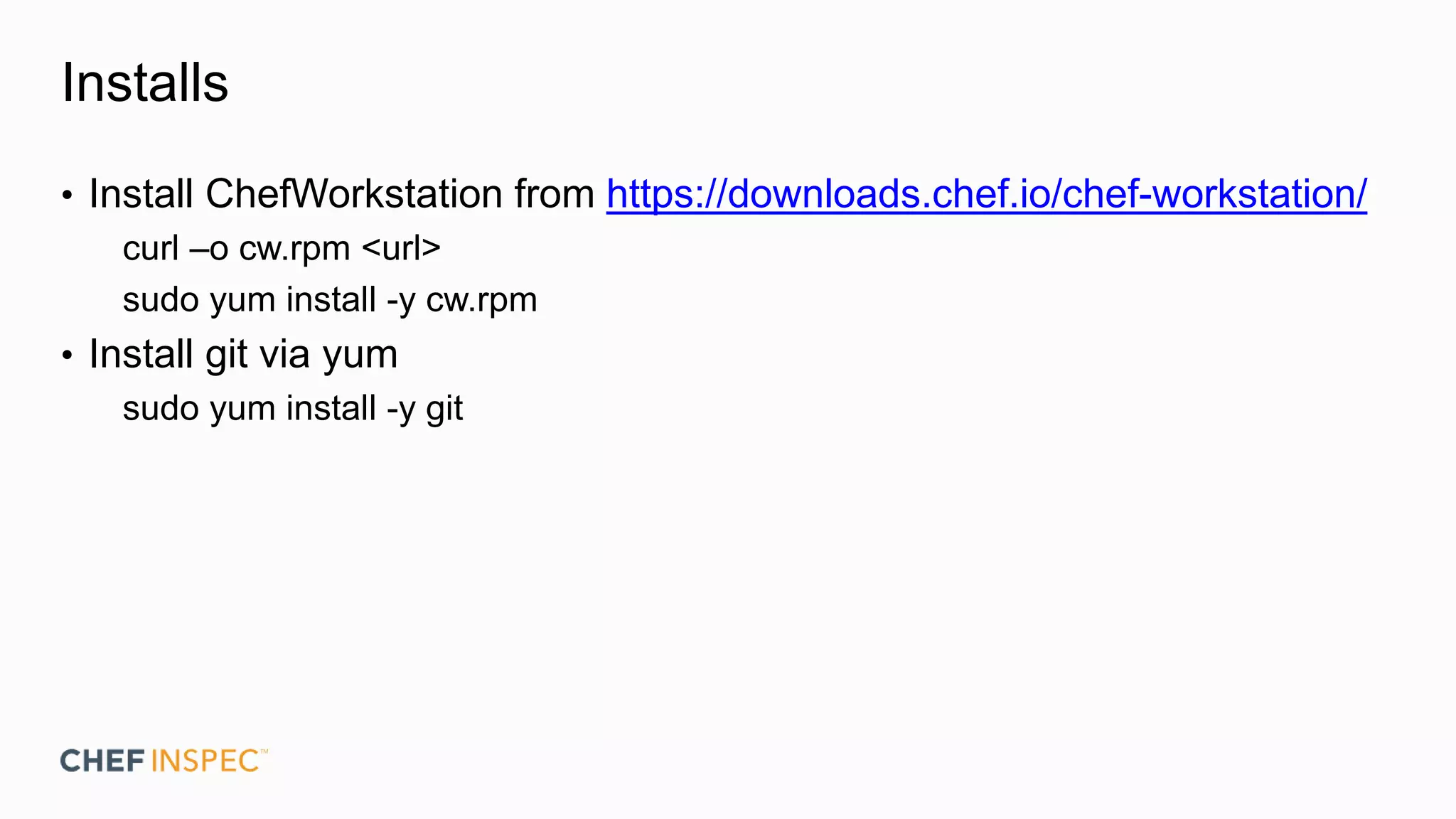

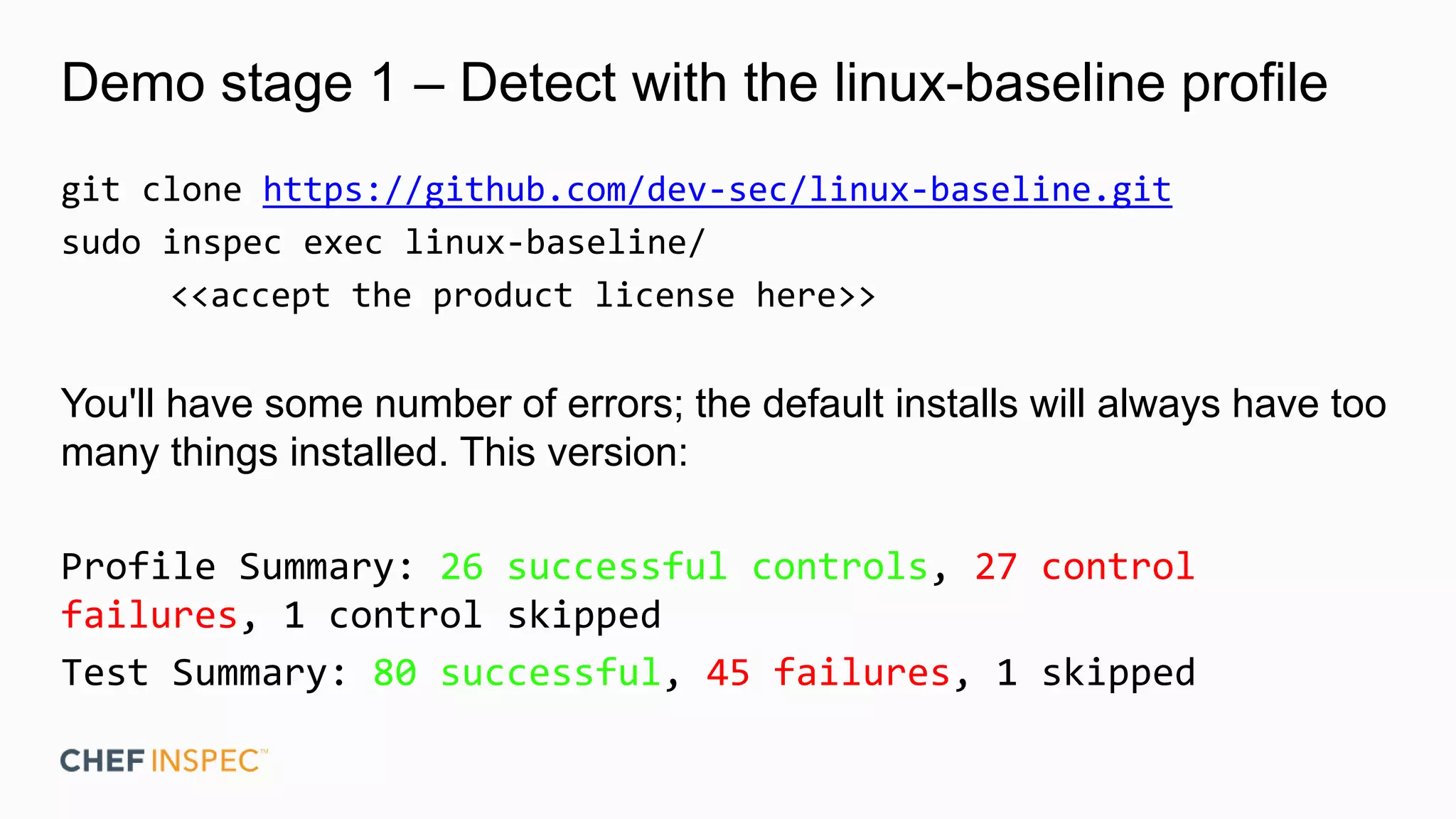

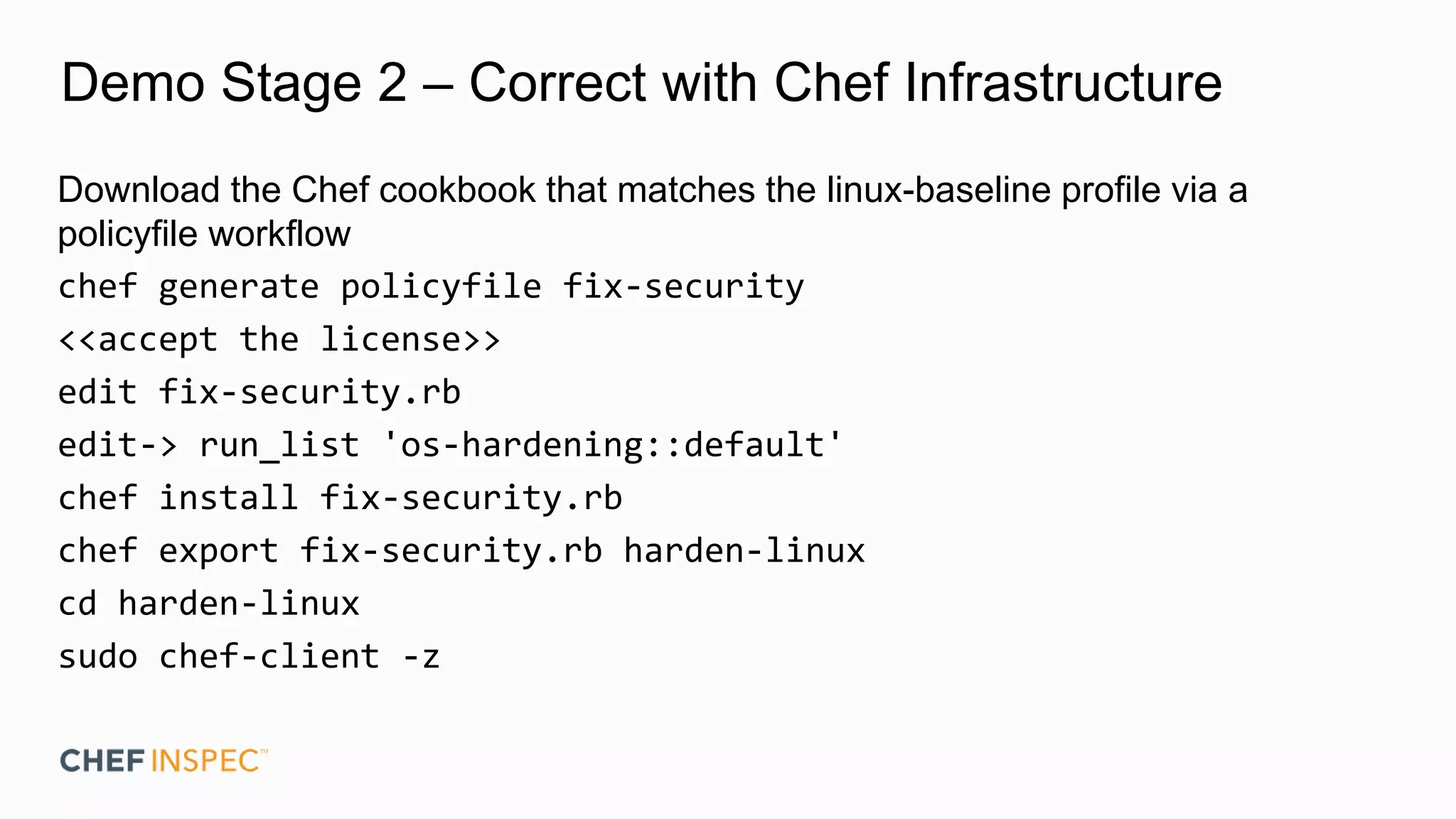

Chef InSpec tests systems for security and compliance through profiles, which are collections of InSpec tests. Profiles let complex compliance requirements be tested consistently across teams and environments. The document demonstrates running the open-source linux-baseline profile against a CentOS system with InSpec, remediating the failures with the corresponding Chef cookbook, and then wrapping the linux-baseline profile in a custom profile to skip a specific test.
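The wrapping step can be sketched as a single control file in the custom profile. This is a minimal sketch, not the presenter's actual profile: the file path, the dependency entry shown in the comments, and the skipped control ID `os-10` are illustrative assumptions. It relies on InSpec's `include_controls` and `skip_control` profile DSL, so it runs under `inspec exec` rather than as a standalone Ruby script.

```ruby
# controls/linux_baseline_wrapper.rb (hypothetical file name)
#
# Assumes the wrapper profile's inspec.yml declares the dependency, e.g.:
#
#   depends:
#     - name: linux-baseline
#       git: https://github.com/dev-sec/linux-baseline
#
# Pull in every control from linux-baseline, minus the ones skipped below.
include_controls 'linux-baseline' do
  # 'os-10' is an illustrative control ID; substitute the control
  # that does not apply to your environment.
  skip_control 'os-10'
end
```

Skipping in a wrapper leaves the upstream profile unmodified, so it can still be updated independently while the exception stays visible in the team's own profile.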

![Correct with Chef (cont'd)](https://image.slidesharecdn.com/walls-allthingsopen2019-inspec-191015154756/75/Prescriptive-Security-with-InSpec-All-Things-Open-2019-35-2048.jpg)

Correct with Chef (cont'd)

    ...things happening...
    Recipe: os-hardening::auditd
      * yum_package[audit] action install (up to date)
    Running handlers:
    Running handlers complete
    Chef Infra Client finished, 141/206 resources updated in 07 seconds