
![Mathematical analysis

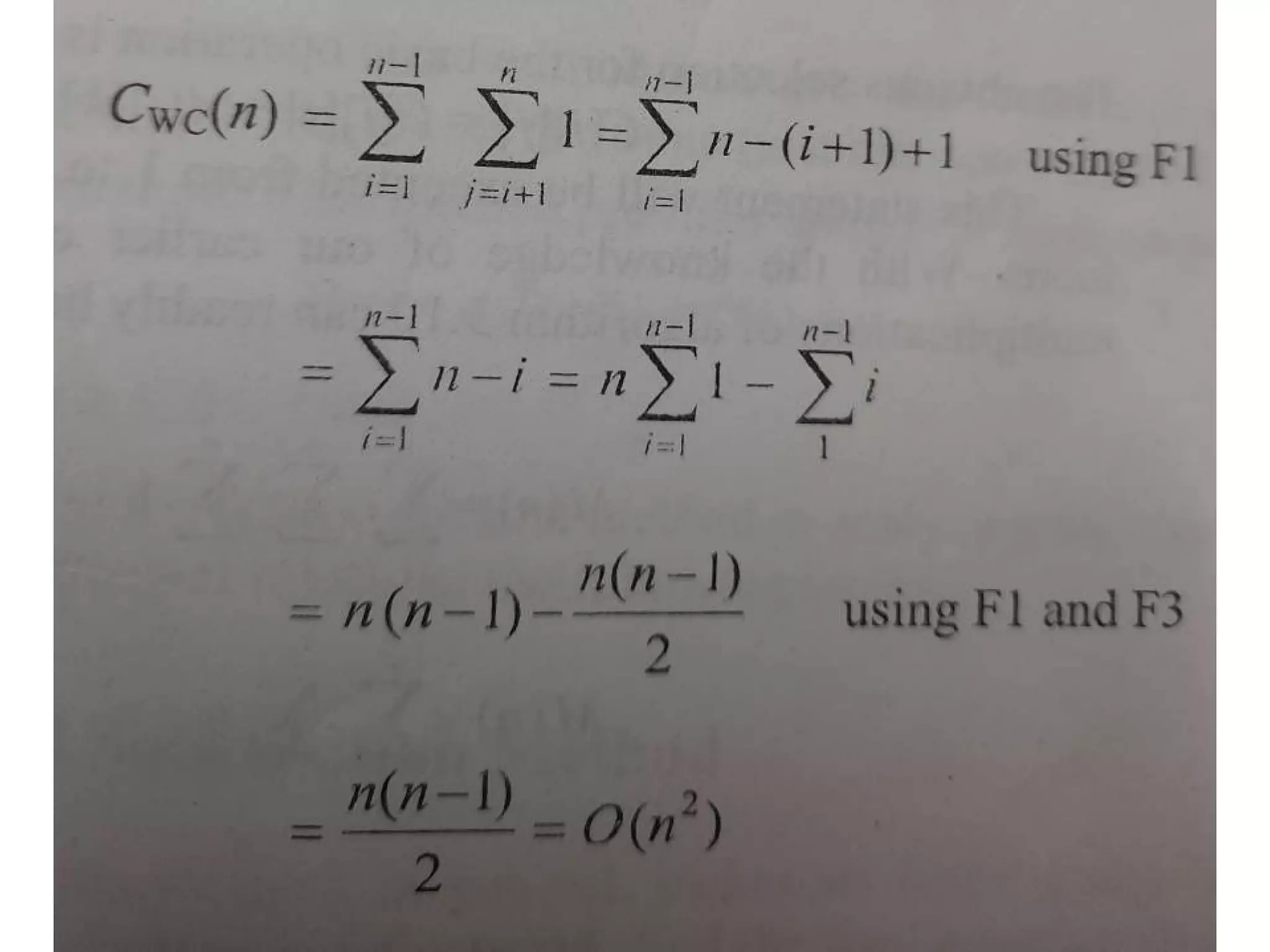

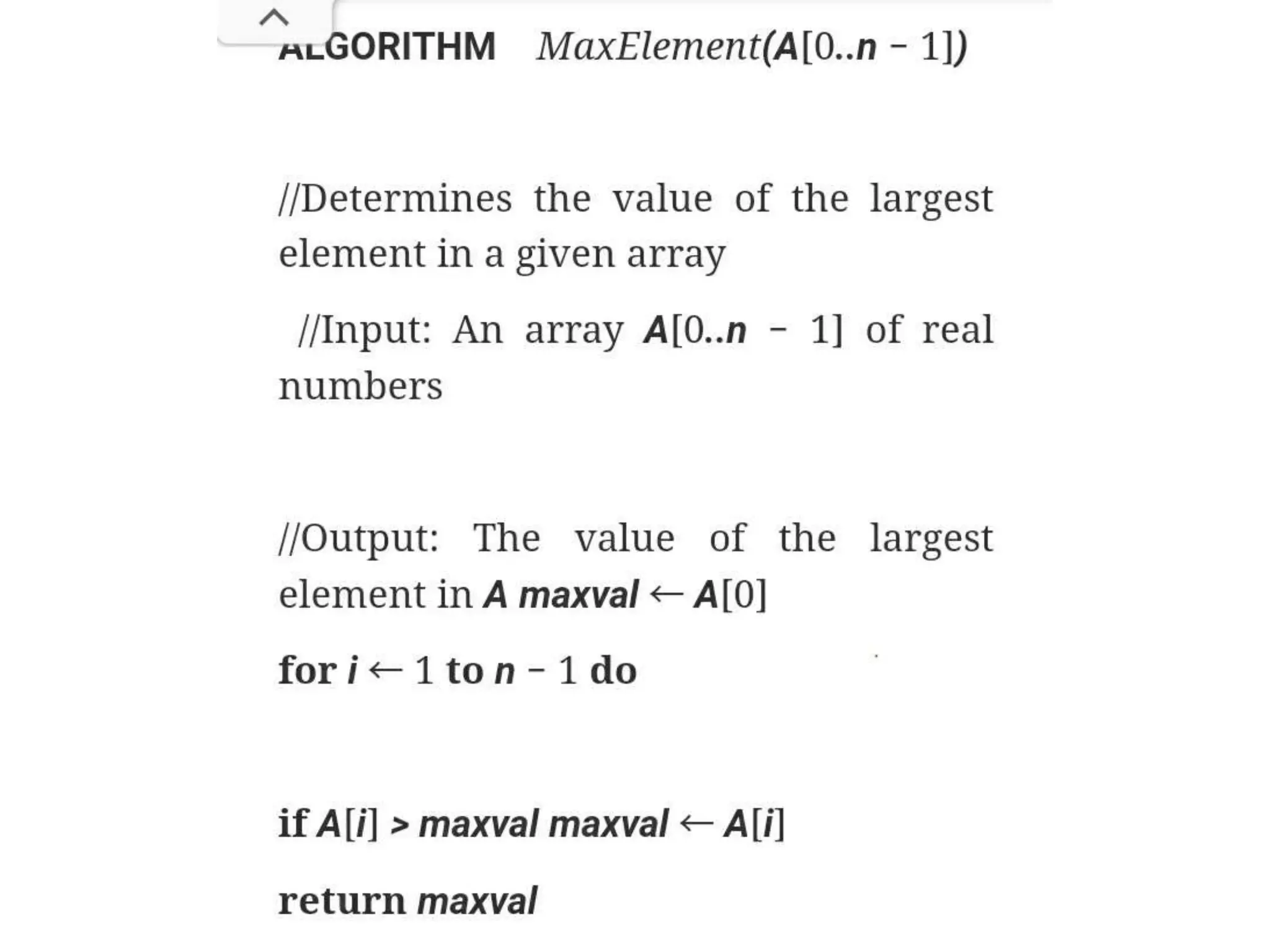

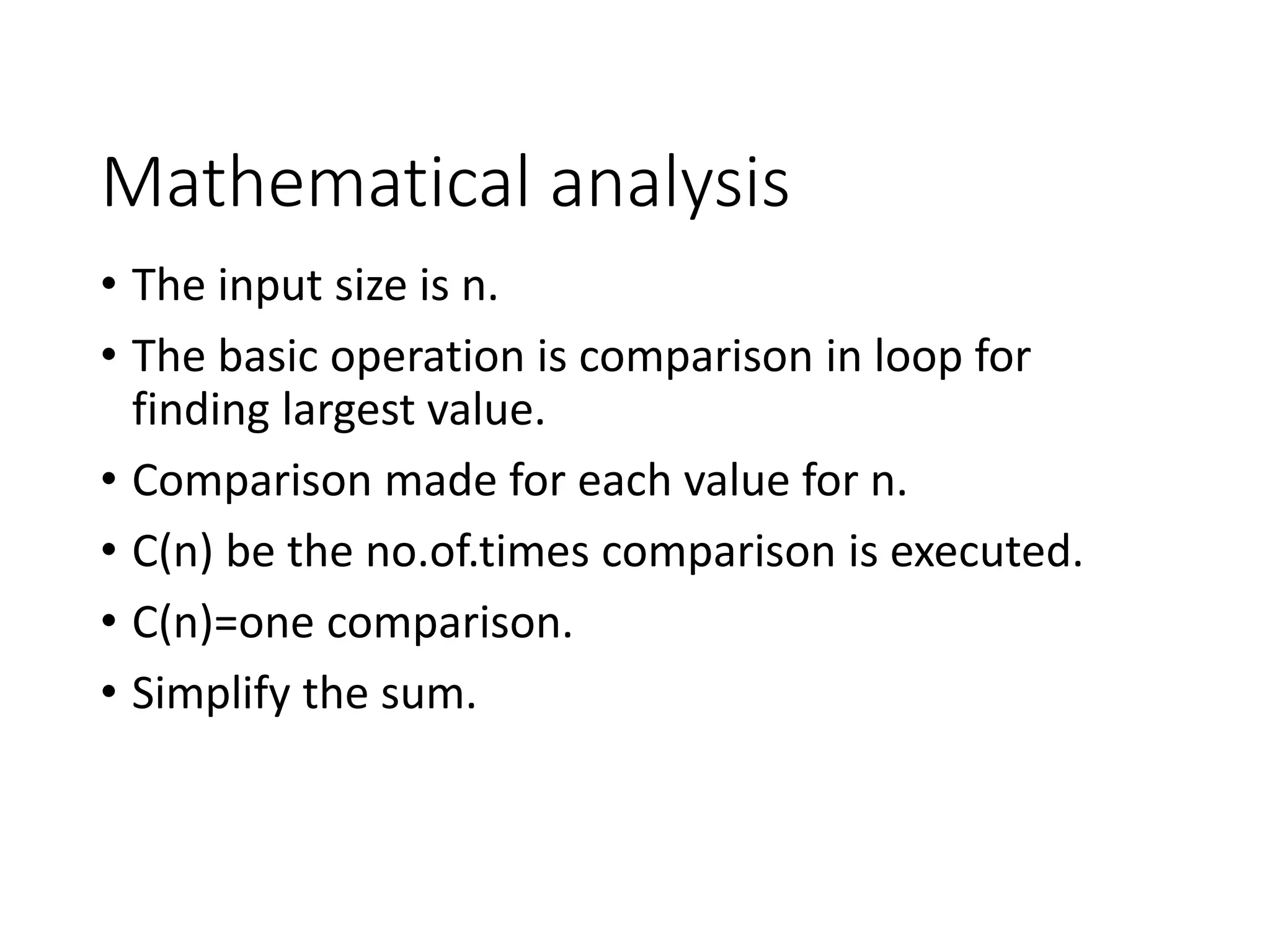

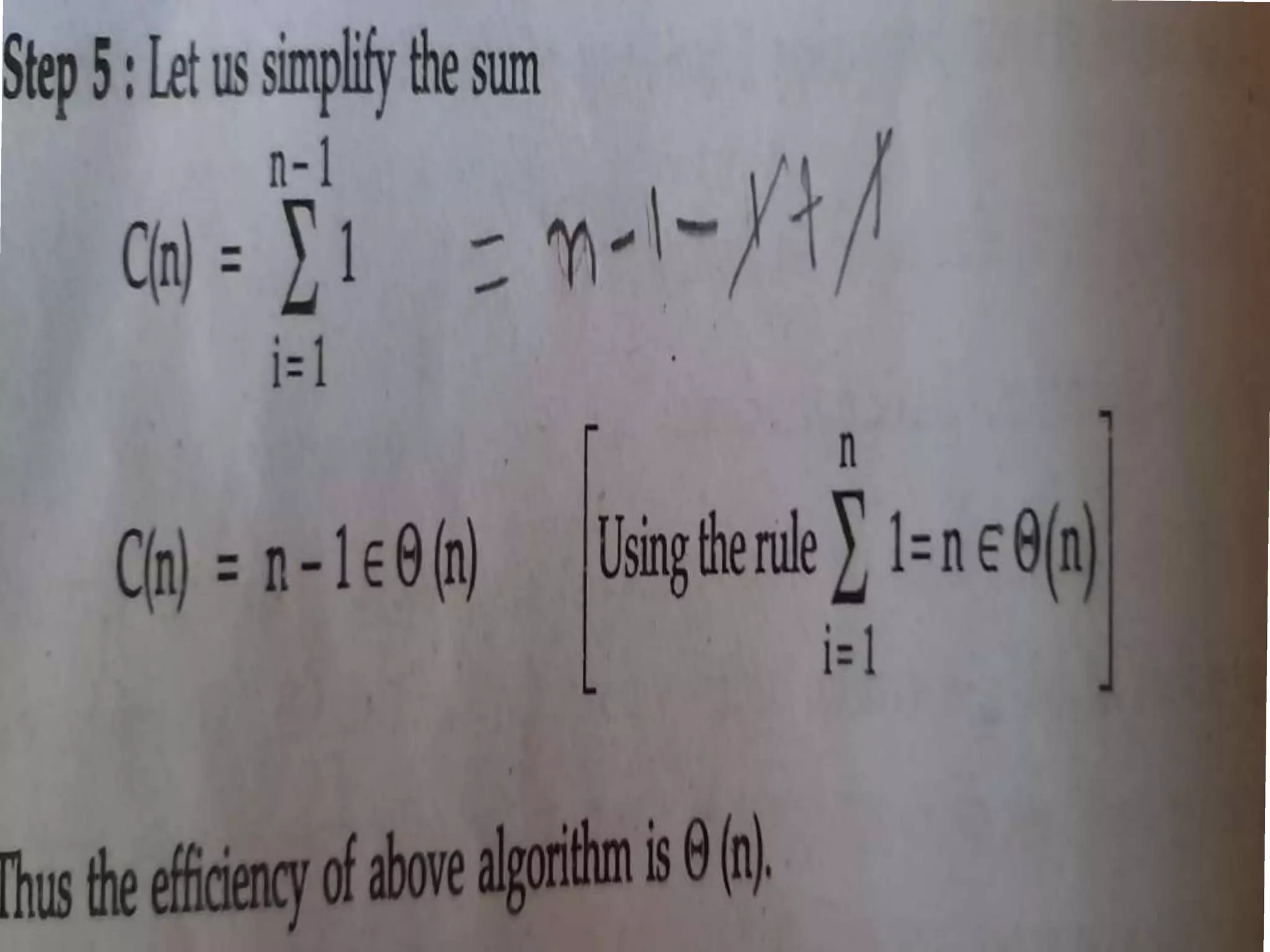

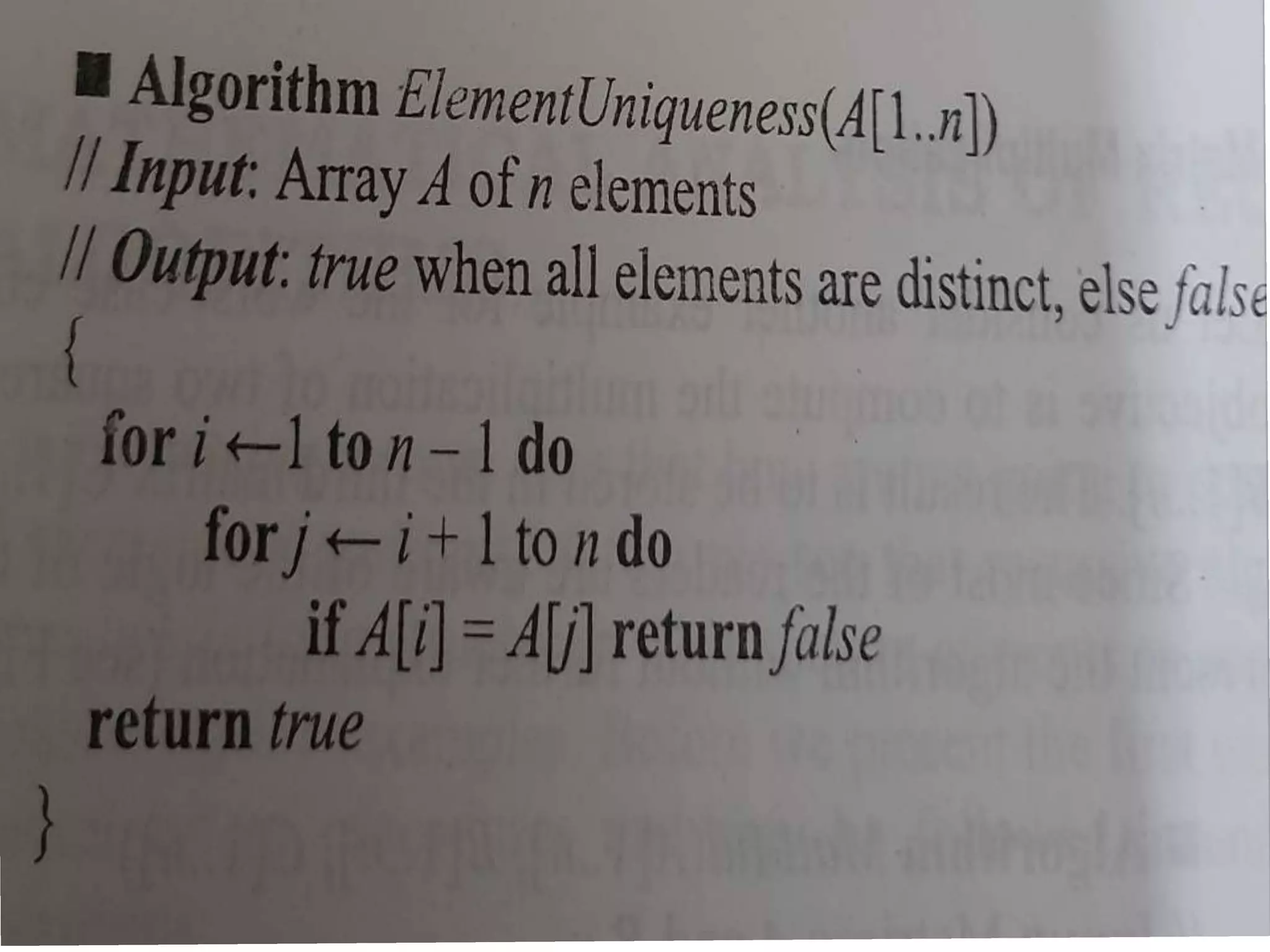

• The input size of the problem is n.

• The basic operation is the comparison if A[i] == A[j].

• The outer loop runs from the first element to the last-but-one element, and the inner loop runs over array indices 2 to n.

• C(n) is the number of times the comparison statement is executed in the worst case.](https://image.slidesharecdn.com/mathanonrecur-210418115317/75/Mathematical-Analysis-of-Non-Recursive-Algorithm-7-2048.jpg)
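The loop structure described on this slide can be sketched in Python. This is a minimal illustration, not the slide's own code: it assumes the algorithm is the usual element-uniqueness check (stop as soon as two equal elements are found), it uses 0-based indices rather than the slide's 1-based ones, and the function name `unique_elements` and the `comparisons` counter are hypothetical names introduced here to make C(n) observable.

```python
def unique_elements(a):
    """Return (all_distinct, comparisons) for the list a.

    Hypothetical helper: `comparisons` counts how many times the
    basic operation a[i] == a[j] is executed.
    """
    n = len(a)
    comparisons = 0
    for i in range(n - 1):           # first element to last-but-one element
        for j in range(i + 1, n):    # every element after position i
            comparisons += 1         # one execution of the basic operation
            if a[i] == a[j]:
                return False, comparisons
    return True, comparisons


if __name__ == "__main__":
    # Worst case: no two elements are equal, so no early exit occurs.
    print(unique_elements([4, 1, 3, 2]))
    # Early exit: the first comparison already finds a duplicate.
    print(unique_elements([7, 7, 3, 2]))
```

For a four-element list with distinct values the counter reaches 3 + 2 + 1 = 6, because the inner loop runs three times, then twice, then once. A list whose only duplicate is the last pair gives the same count, which is why both situations are treated as the worst case under this assumption.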

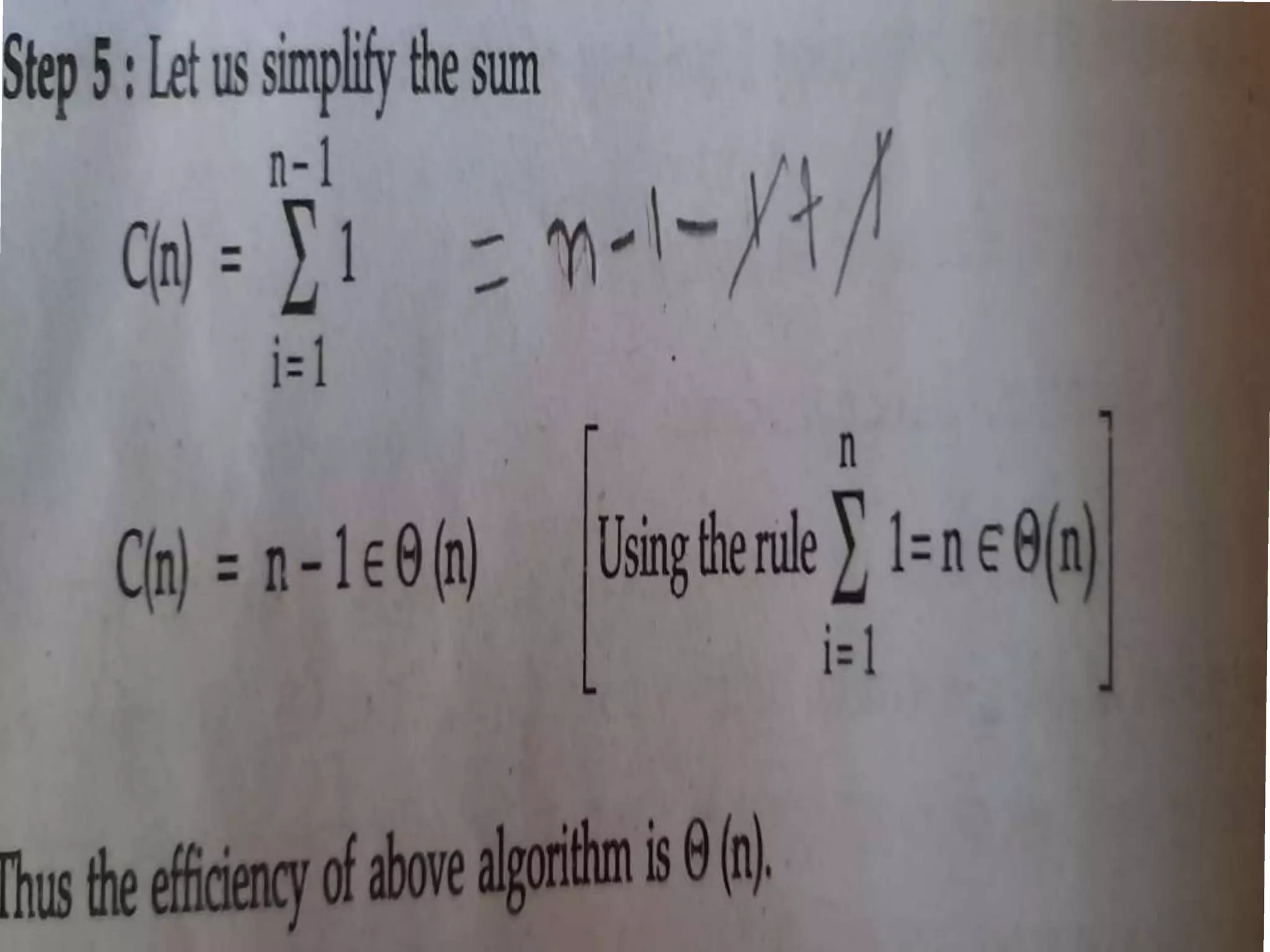

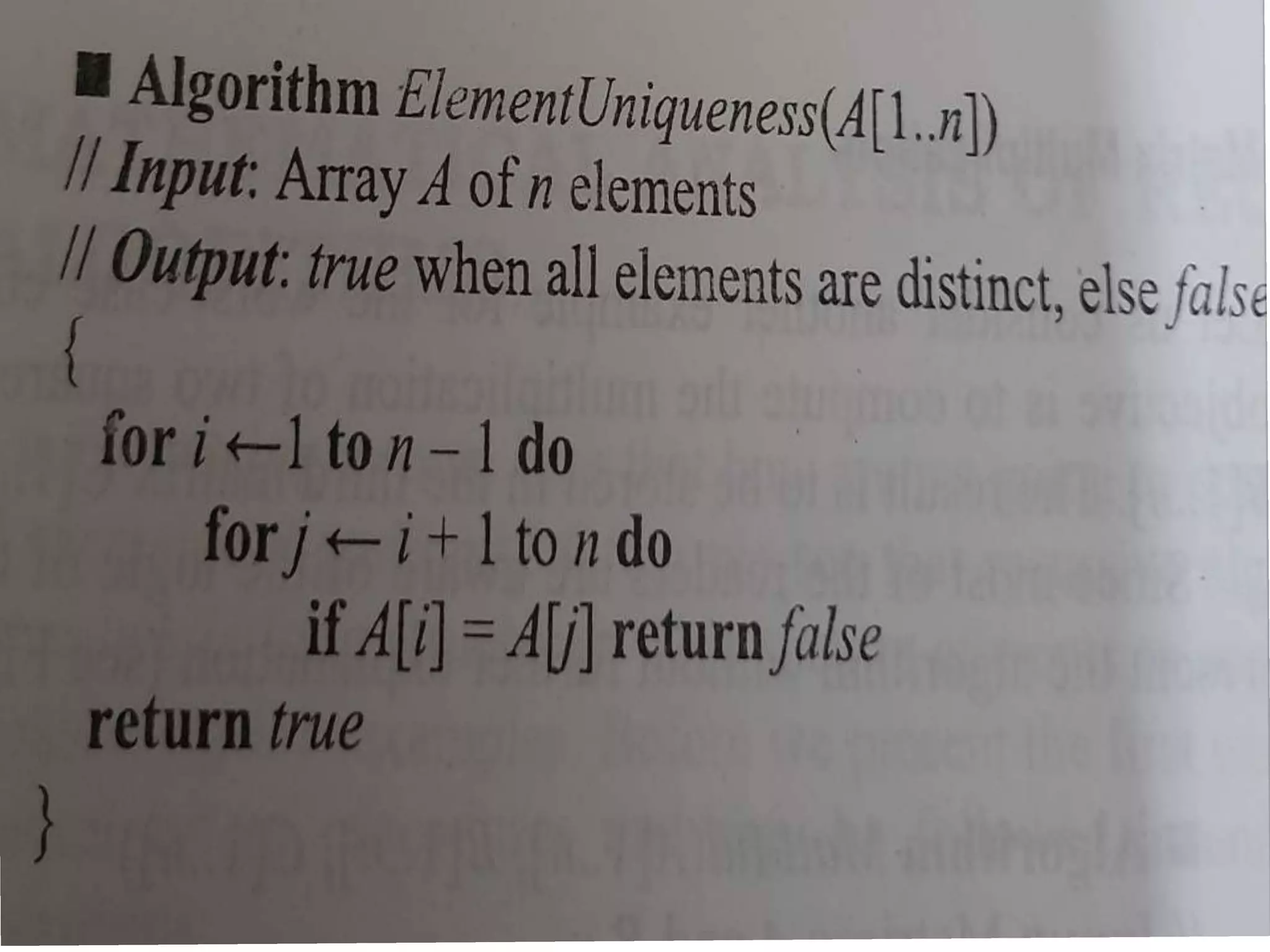

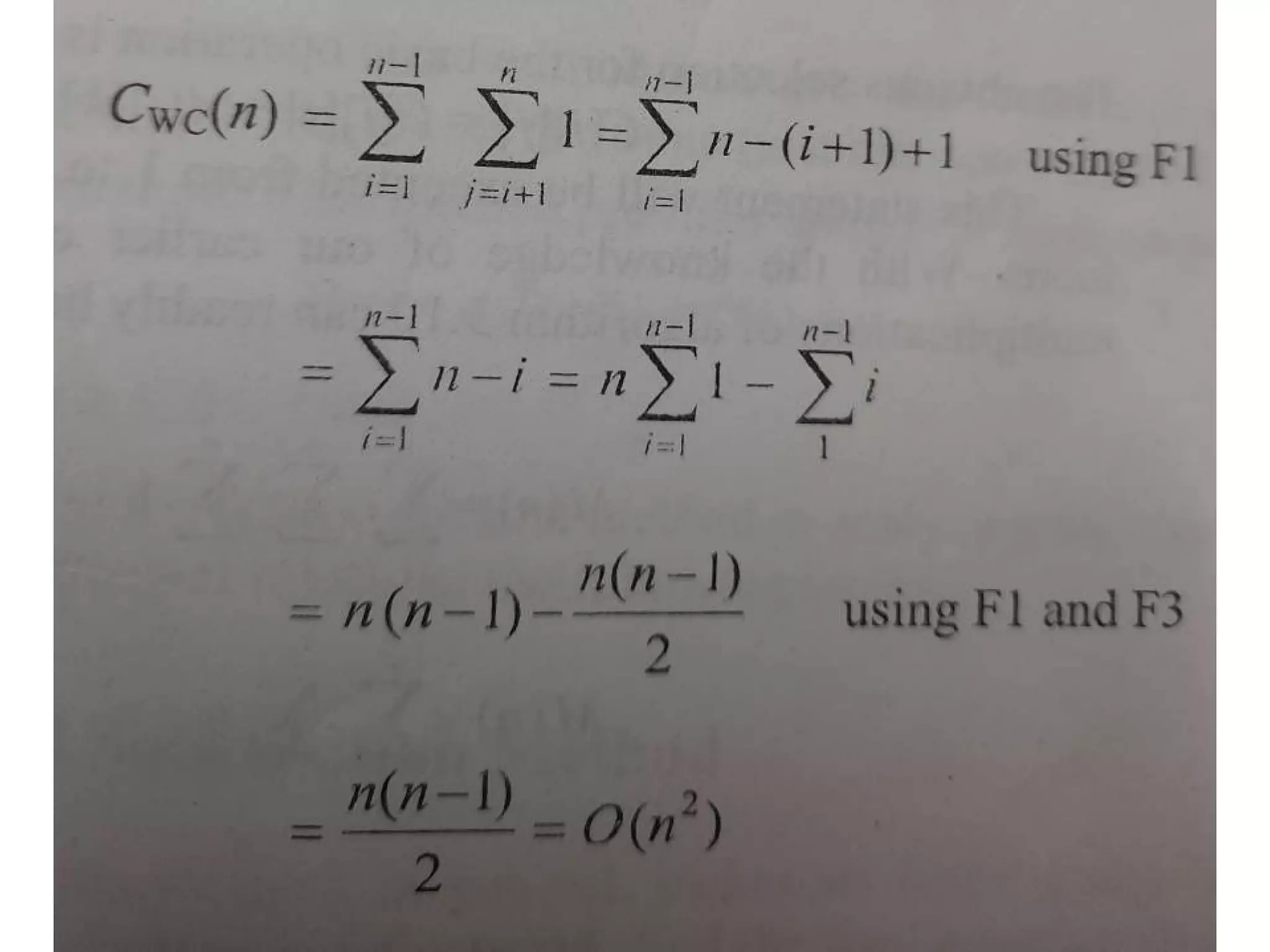

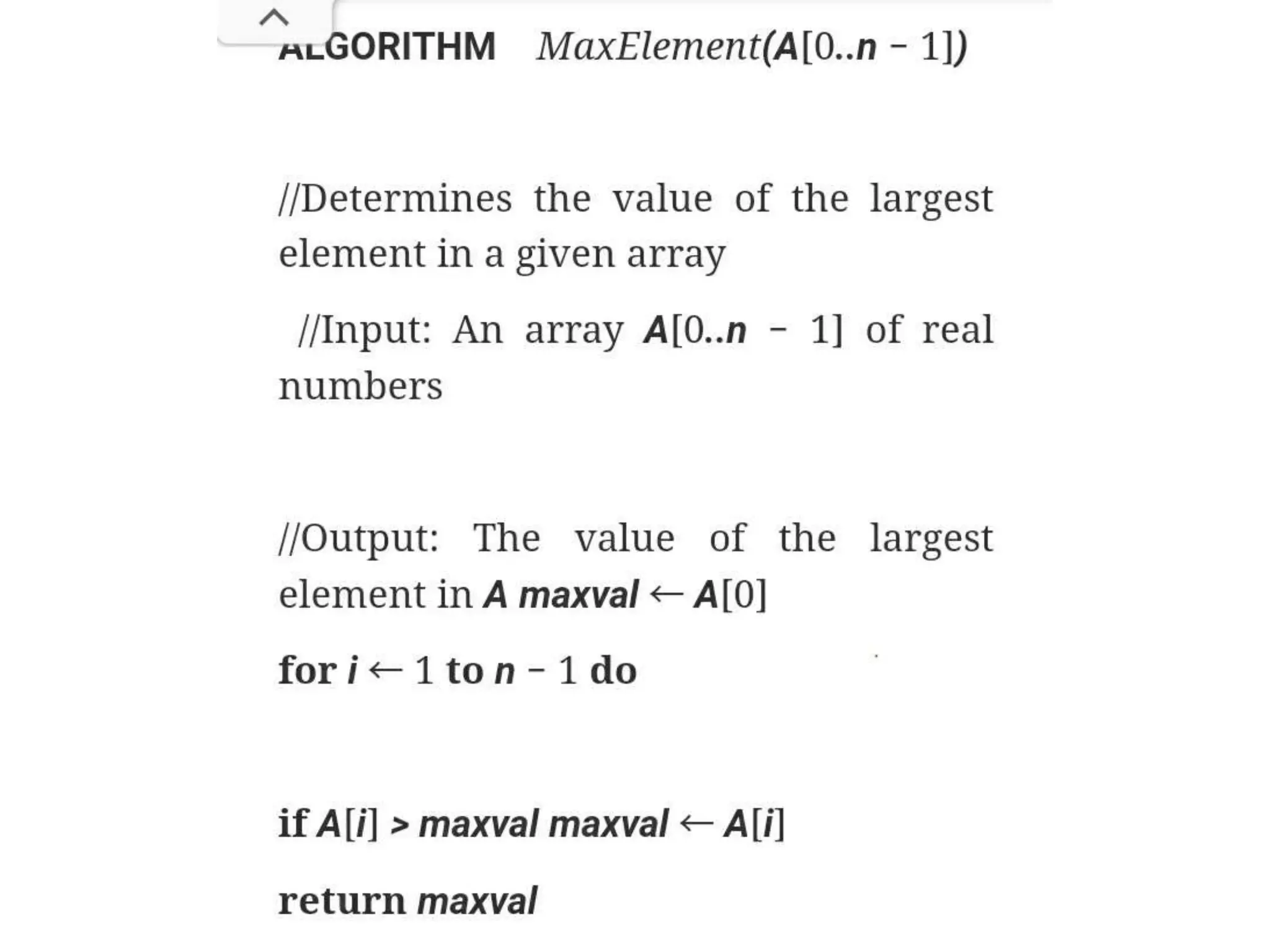

The document outlines a mathematical analysis of a non-recursive algorithm, focusing on the input size and the basic operation. It emphasizes counting the comparisons executed within nested loops to determine the largest value, analyzing the best, worst, and average cases separately. The analysis derives a simplified formula for the total number of comparisons as a function of the input size n.
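The formula itself is not reproduced in the text above, so the following is a hedged reconstruction. It assumes the nested loops compare every pair of elements once, with 0-based indices where the outer index i runs from 0 to n-2 and the inner index j runs from i+1 to n-1; the subscript "worst" is a label introduced here. Under those assumptions the worst-case count simplifies as:

$$
C_{\text{worst}}(n) \;=\; \sum_{i=0}^{n-2} \sum_{j=i+1}^{n-1} 1 \;=\; \sum_{i=0}^{n-2} (n-1-i) \;=\; \frac{n(n-1)}{2} \;\in\; \Theta(n^2)
$$

The inner sum counts the n-1-i elements that follow position i, and the outer sum adds (n-1) + (n-2) + ... + 1. For example, n = 4 gives 3 + 2 + 1 = 6 comparisons.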
