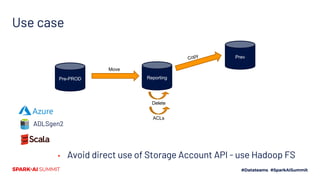

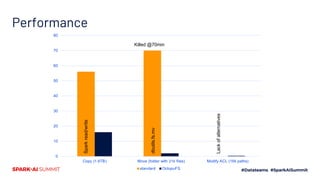

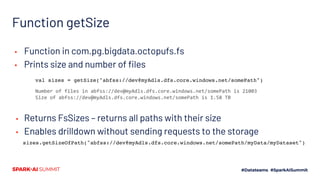

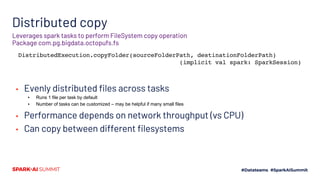

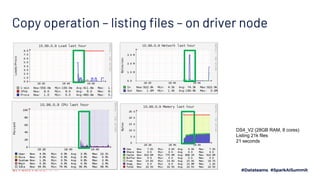

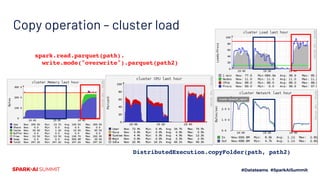

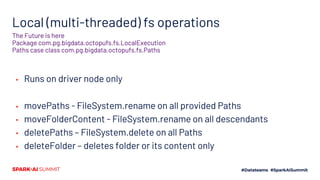

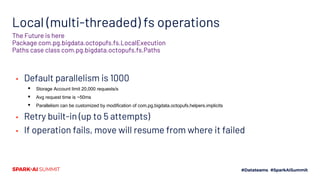

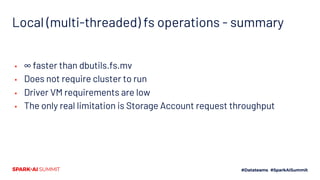

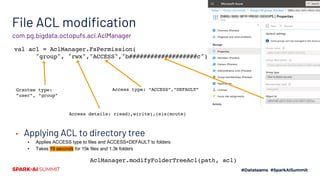

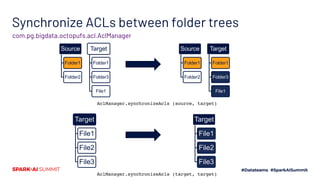

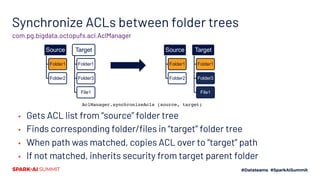

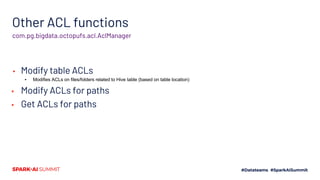

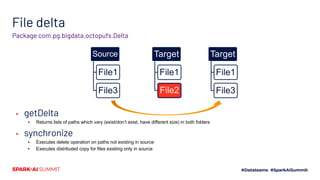

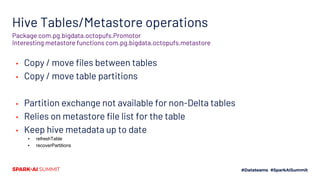

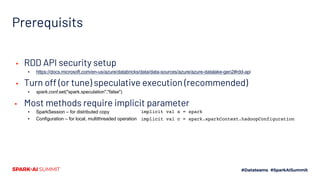

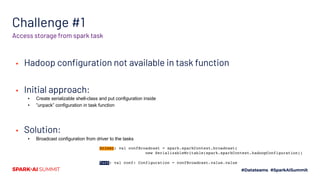

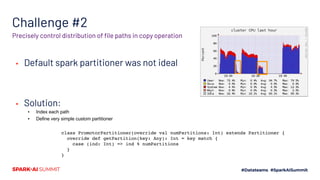

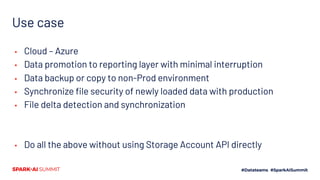

The document presents 'Octopufs', a management toolkit developed by Procter & Gamble for efficient data operations in Azure, including file copy, ACL management, and delta operations. It outlines use cases, design approaches, setup requirements, and key functionality such as distributed file handling and multithreaded operations. The toolkit is open source and available on GitHub, and it emphasizes optimal performance and minimal disruption during data management tasks.
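
To make the idea of a multithreaded delta copy concrete, here is a minimal sketch. It is not the Octopufs API: it uses only the Python standard library against a local directory tree as a stand-in for Azure storage. The function names (`files_to_copy`, `copy_one`, `delta_copy`) and the "missing or different size" delta rule are assumptions chosen for illustration.

```python
import shutil
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def files_to_copy(src: Path, dst: Path) -> list[Path]:
    """Return relative paths of files that are missing in dst or differ in size.

    Assumption: size comparison is a simple stand-in for a real delta check.
    """
    pending = []
    for path in sorted(src.rglob("*")):
        if not path.is_file():
            continue
        rel = path.relative_to(src)
        target = dst / rel
        if not target.exists() or target.stat().st_size != path.stat().st_size:
            pending.append(rel)
    return pending


def copy_one(src: Path, dst: Path, rel: Path) -> Path:
    """Copy a single file, creating parent directories as needed."""
    target = dst / rel
    target.parent.mkdir(parents=True, exist_ok=True)
    shutil.copy2(src / rel, target)
    return rel


def delta_copy(src: Path, dst: Path, workers: int = 8) -> list[Path]:
    """Copy only the changed files, spreading the copies over a thread pool."""
    pending = files_to_copy(src, dst)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so the result lists the copied files
        # in the same sorted order as `pending`.
        return list(pool.map(lambda rel: copy_one(src, dst, rel), pending))
```

Calling `delta_copy(Path("source"), Path("target"))` a second time without changing the source should copy nothing, which is the property a delta operation is meant to provide. Threads suit this workload because file copies are I/O-bound, so a larger `workers` value mainly helps when many small files are involved.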